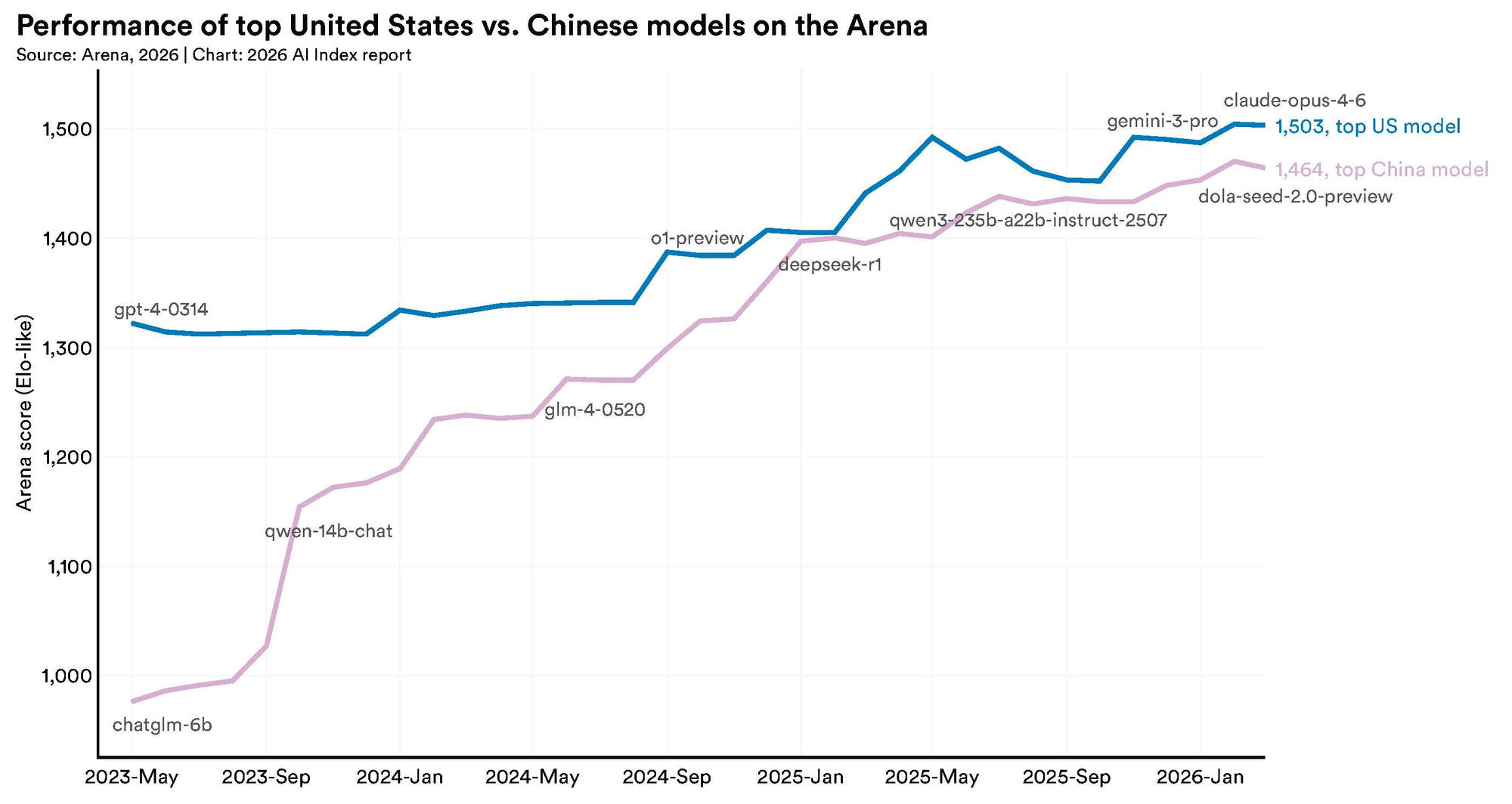

DeepSeek became the symbol of efficient AI when it emerged in January 2025 claiming frontier-level performance at a fraction of the training cost. The narrative was clear and compelling: China had done more with less, the era of compute-intensive scaling was over, and the entire premise of the American AI infrastructure buildout was in question.

Stanford's 2026 AI Index, released April 13, complicates that story with a data point that received far less attention than it deserved. At inference — the moment a model actually responds to a user — DeepSeek V3 consumes approximately 23 watts per medium-length prompt. Claude 4 Opus, by comparison, consumes approximately 5 watts for the same task.

That is a 4.6-fold difference in the direction most people would not have predicted.

The gap matters because inference is not a corner case. It is the dominant mode of AI energy consumption at scale. Training happens once. Inference happens billions of times a day.

What Stanford Actually Measured

Stanford's AI Index reports inference energy on a per-query basis, estimating watt consumption for a medium-length prompt response. The data cited in the 2026 report shows significant variation across models, with the least efficient models consuming more than ten times the energy of the most efficient ones.

The 23-watt DeepSeek V3 figure and the 5-watt Claude 4 Opus figure sit at opposite ends of that spectrum. Other models, including various GPT and Gemini versions, fall between them. The report uses these figures to illustrate that inference efficiency is not strongly correlated with training efficiency or overall benchmark capability.

This is a specific and important observation. DeepSeek's widely publicized claim to efficiency was primarily about training: the company reported spending approximately $5.576 million to train V3, a figure that contrasted sharply with the hundreds of millions or billions that US labs reportedly spend on comparable training runs.

That training cost claim has not been independently validated, and questions have been raised about what it includes and excludes, but the directional contrast was real and prompted genuine strategic reassessment across the industry.

The inference picture is different. When a model is serving responses to users at scale, the watts consumed per query add up across millions of daily requests.

A model that trained cheaply but runs expensively is not a straightforwardly efficient system. It is efficient on one dimension and inefficient on another.

The Scale of the Environmental Problem

The inference comparison sits within a broader environmental picture that Stanford's 2026 AI Index documents in detail, and the numbers are large enough to require context to make sense of.

For comparison, training GPT-4 was estimated at 5,184 tons. Training Meta's Llama 3.1 405B was estimated at 8,930 tons. Training runs for frontier models have become roughly 14 times more carbon-intensive than they were eighteen months ago.

Stanford's report notes these figures should be treated with caution. In the case of Grok 4, the estimates rely on inferred inputs from public reporting and company statements rather than verified data.

Epoch AI independently estimates Grok 4's emissions at approximately 140,000 tons, nearly double the lower figure. The range itself illustrates that frontier labs do not disclose the information needed to make these calculations precisely.

The United States hosts 5,427 AI data centers, more than ten times any other country, and consumes more energy to run them than any other nation.

The Jevons Paradox Problem

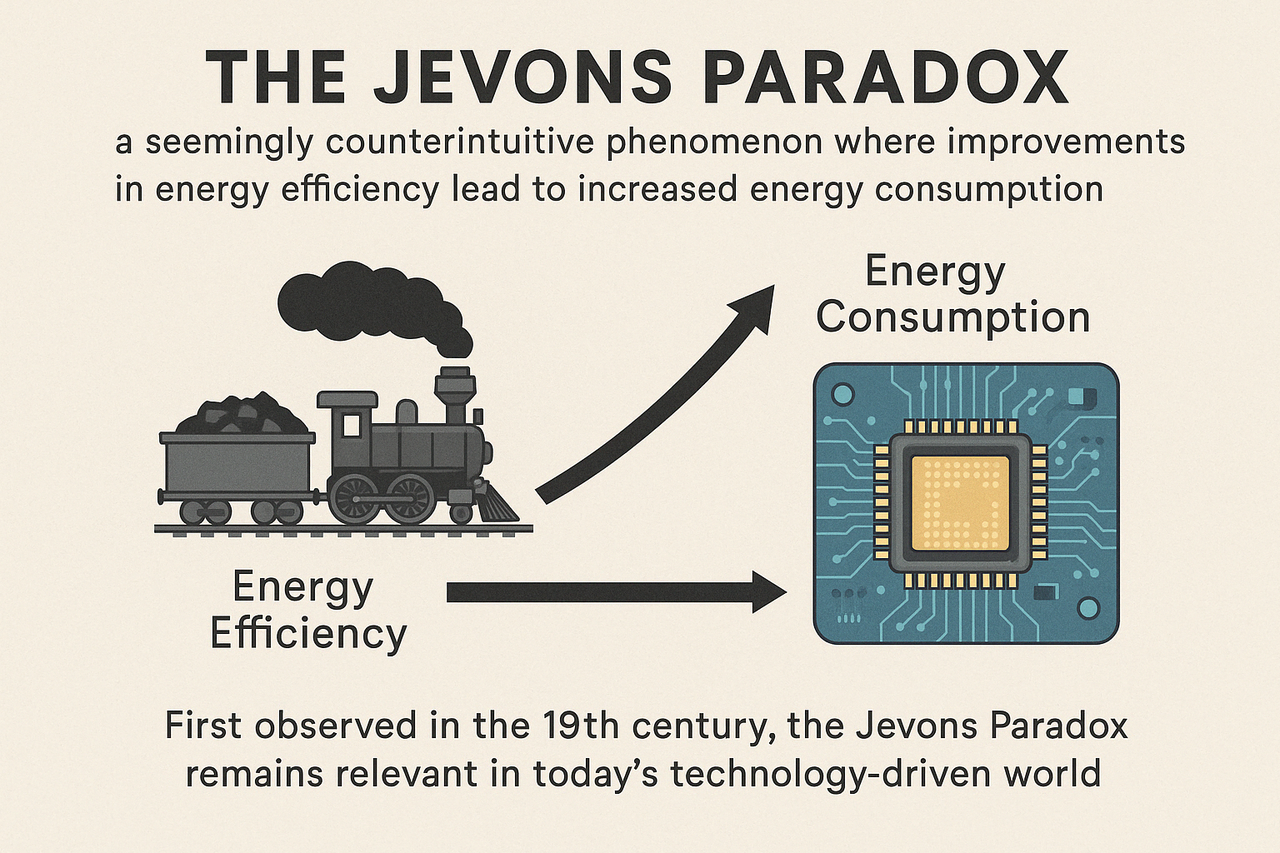

When DeepSeek's training efficiency claims emerged in January 2025, Microsoft CEO Satya Nadella responded within hours with a social media post: "Jevons paradox strikes again! As AI gets more efficient and accessible, we will see its use skyrocket, turning it into a commodity we just can't get enough of."

The reference was to William Stanley Jevons, a Victorian economist who observed in 1865 that more efficient steam engines did not reduce coal consumption in British factories. They increased it, because efficiency lowered the cost of using coal, which expanded demand faster than the efficiency gains reduced per-unit consumption.

The Jevons Paradox is the clearest framework for understanding what "cheap AI" actually means for total energy consumption, and it suggests that model efficiency improvements may not translate into reduced aggregate energy use at all.

The mechanism is straightforward. If AI inference becomes cheaper per query, more queries happen. Organizations that previously could not afford to deploy AI at scale find it economically viable.

New use cases emerge that did not exist when costs were higher. The volume of AI use grows faster than the per-unit cost falls. Total consumption rises even as individual efficiency improves.

This is not hypothetical. Days after DeepSeek's training cost claims circulated, Meta raised its 2025 AI spending to $60 to $65 billion, a 50% year-over-year increase.

Microsoft disclosed a $13 billion AI revenue run rate, up 175% year over year. Neither company reduced its infrastructure investment in response to a competitor's efficiency claims. Both increased it.

Wei Wang, a computer architect at ByteDance, published an analysis in July 2025 in SIGARCH arguing that the Jevons rebound effect in AI data centers may already exceed 100%, meaning efficiency improvements are being outpaced by demand growth.

His conclusion was that "efficiency gains are necessary but not sufficient" and that without additional controls, total energy consumption will continue to rise even as each individual compute operation becomes more efficient.

A ScienceDirect paper on DeepSeek and data center energy noted a structural irony: DeepSeek's inference efficiency improvements will likely accelerate overall AI adoption, expanding the total pool of users and use cases, which will ultimately increase total energy demand even if energy per query falls.

What the Community Resistance Tells Us

The energy story is moving from an abstract environmental concern to a concrete local politics problem, and Stanford's 2026 AI Index documents the backlash directly.

According to a report by Data Center Watch cited in Quartz's coverage of the Stanford Index, $64 billion worth of US data center projects have been blocked or delayed over the past two years due to local opposition. At least 142 activist groups are organizing across 24 states.

The opposition is bipartisan: 55% of elected officials who have taken public positions against data center projects are Republicans, 45% are Democrats. In Warrenton, Virginia, every town council member who voted to support an Amazon data center project has since lost their seat.

Ireland and the Netherlands have placed moratoriums on new data center construction in certain regions due to electricity grid constraints. Virginia, which hosts the world's largest concentration of data centers, saw 32 data center bills introduced in its state legislature in 2025 alone.

California's SB 253 requires businesses over $1 billion in revenue to disclose Scope 1 through 3 emissions starting in 2026.

This resistance is not primarily about abstract climate concerns. It is about electricity prices, grid stability, water use, and land use in specific communities.

PJM market capacity prices increased tenfold between the 2024/25 and 2026/27 planning years, a change directly attributable to data center electricity demand. Virginia residential electricity bills are projected to increase by $14 to $37 per month by 2040.

The Transparency Gap

One of the most significant findings in Stanford's environmental section is not a number but an absence: the leading AI labs do not disclose the information needed to verify their own environmental claims.

The 2026 report notes that almost all leading frontier AI model developers report results on capability benchmarks, while reporting on responsible AI dimensions including energy and environmental impact remains "spotty."

The estimates Stanford uses for training emissions and inference energy are derived from public reporting, company statements, and third-party research rather than verified disclosures from the labs themselves.

This creates a specific accountability problem. When DeepSeek claims to have trained V3 for $5.576 million, that figure cannot be independently verified.

When xAI's training emissions for Grok 4 are estimated at anywhere from 72,816 to 140,000 tons depending on the methodology, the range itself reflects the lack of authoritative disclosure.

The same labs that are building the most energy-intensive systems in human history are the least transparent about exactly how much energy those systems consume.

What "Cheap AI" Actually Costs

The DeepSeek efficiency story and the Stanford energy data together point to a finding that gets lost in the model benchmark competition: the concept of "cheap AI" depends entirely on which cost you are measuring and across what time horizon.

DeepSeek V3 may have been comparatively inexpensive to train. It consumes 4.6 times more energy per inference query than Claude 4 Opus.

At scale, across billions of queries per month, that inference gap matters more than the training cost comparison for total environmental impact.

More broadly, even the most inference-efficient AI models are operating within a system that consumes 29.6 gigawatts of power globally, is projected to double in electricity demand by 2030, and is generating resistance from communities that experience the costs locally in the form of grid stress, water consumption, and electricity bills.

The Jevons Paradox means that making each unit of AI computation cheaper does not reduce the aggregate cost of AI's energy footprint. It typically increases it, because cheaper computation enables more computation.

The efficiency improvements that make AI more accessible are the same improvements that make the total environmental bill larger.

Stanford's position on this is measured rather than alarmist: the report does not conclude that AI's energy costs outweigh its benefits. But it does document, with characteristic precision, that the framing of "efficient AI" as a solution to AI's energy problem is premature as long as demand growth outpaces per-unit efficiency gains.

The data to measure whether it does is largely being withheld by the organizations best positioned to provide it.

Frequently Asked Questions

How much more energy does DeepSeek use than Claude at inference?

According to Stanford's 2026 AI Index, DeepSeek V3 consumes approximately 23 watts when responding to a medium-length prompt, compared to approximately 5 watts for Claude 4 Opus. That is a 4.6-fold difference. The report notes that the least efficient models consume more than ten times the energy of the most efficient ones across the models it tracked.

Wasn't DeepSeek supposed to be the efficient AI?

DeepSeek's efficiency claims primarily concerned training costs. The company reported training its V3 model for approximately $5.576 million, far below comparable US lab spending. That training cost figure has not been independently verified and its scope is contested. At inference, the dominant mode of energy consumption when a model is serving users at scale, DeepSeek V3 consumes significantly more energy per query than Claude 4 Opus.

How large is AI's environmental footprint in 2026?

Stanford's 2026 AI Index documents that AI data center power capacity has reached 29.6 gigawatts globally, comparable to powering all of New York State at peak demand. Training Grok 4 produced an estimated 72,816 to 140,000 tons of CO₂ equivalent, depending on the methodology used. Annual inference water use for GPT-4o alone may exceed the drinking water needs of 12 million people. The cumulative electricity draw of all AI systems is now comparable to the national electricity consumption of Switzerland or Austria.

What is the Jevons Paradox and why does it matter for AI energy?

The Jevons Paradox, described by Victorian economist William Stanley Jevons in 1865, is the observation that making a resource more efficient to use tends to increase total consumption rather than decrease it, because lower cost enables more use cases and higher volume. Applied to AI, it means that efficiency improvements in training and inference do not necessarily reduce total AI energy consumption if demand grows faster than efficiency improves. Microsoft CEO Satya Nadella explicitly invoked the paradox in response to DeepSeek's release, predicting AI use would "skyrocket" as it became cheaper.

Why aren't AI companies disclosing their energy use?

Stanford's report notes that almost all leading frontier AI developers report on capability benchmarks while responsible AI reporting including energy and environmental data remains spotty. The emission and energy estimates in the Stanford Index are derived from public reporting, company statements, and third-party research rather than verified disclosures. The range between the low and high estimates for Grok 4's training emissions (72,816 vs 140,000 tons) reflects this data gap. No regulatory requirement currently compels frontier AI labs to disclose verified training or inference energy consumption data.

Related Articles