In early April 2026, users started circulating screenshots of a clause buried in Microsoft's Copilot Terms of Use.

Under a section titled "IMPORTANT DISCLOSURES AND WARNINGS," in bold capital letters, the document reads:

"Copilot is for entertainment purposes only. It can make mistakes, and it may not work as intended. Don't rely on Copilot for important advice. Use Copilot at your own risk."

The same document adds that Microsoft makes no warranty of any kind about Copilot, that users should not assume its outputs are free from copyright or privacy infringement, and that users are "solely responsible" if they choose to publish or share Copilot's responses. Worth noting: Microsoft sells a product under the same Copilot brand to businesses for $30 per user per month.

Where the Disclaimer Came From

Microsoft's spokesperson was quick to clarify, saying the clause dates back to February 2023, when Copilot launched as a search companion inside Bing.

"The 'entertainment purposes' phrasing is legacy language from when Copilot originally launched as a search companion service in Bing," the company told Windows Latest and PCMag. "As the product has evolved, that language is no longer reflective of how Copilot is used today and will be altered with our next update."

The terms of use page lists its last update as October 24, 2025. Microsoft therefore revised the document more than two and a half years after the February 2023 launch and left the clause in place, and it has provided no timeline for the change.

The Critical Distinction: Consumer vs. Enterprise

The most important thing to understand about this story is the scope of the clause.

The "entertainment purposes only" disclaimer applies to consumer Copilot products. The document itself states clearly: "These Terms don't apply to Microsoft 365 Copilot apps or services unless that specific app or service says that these Terms apply."

This means:

- Consumer Copilot (the free and individual-subscription versions at copilot.microsoft.com) is covered by the disclaimer.

- Microsoft 365 Copilot (the $30/user/month enterprise product integrated into Word, Excel, PowerPoint, Outlook, and Teams) operates under separate enterprise terms.

Enterprise M365 Copilot comes with Microsoft's Enterprise Data Protection framework, data processing agreements, GDPR compliance commitments, EU Data Boundary options, and a commitment that customer data is not used to train foundation models.

So if your organization has licensed M365 Copilot through a commercial agreement, the "entertainment only" clause does not apply to that product.

But That's Not the End of the Story

The distinction matters legally, but it does not eliminate the practical risks.

When a user pastes a client contract, a salary spreadsheet, or internal financial projections into the consumer Copilot interface at copilot.microsoft.com, that data enters a system governed by the consumer terms: the ones that disclaim all responsibility and place it entirely on the user.

This is the scenario the "entertainment only" clause is actually pointing at, even if Microsoft's legal team drafted it for a different era.

A second risk sits inside M365 Copilot itself. If an employee has been granted access to sensitive documents they should not have, such as salary data, acquisition plans, or personnel files, Copilot will surface that data in its responses. The AI enforces the letter of access controls rather than their spirit, and most real-world permission setups are far more permissive than the principle of least privilege requires.

Gartner found that 40% of organizations experienced deployment delays of three months or more specifically because of data oversharing concerns. And 52% of organizations reported experiencing poor-quality or inaccurate Copilot outputs tied to incomplete or stale data.

In August 2024, Copilot falsely accused a German court reporter of being a convicted child abuser and fraudster and provided his home address, with Microsoft blocking the queries only after a data protection complaint. In January 2026, Copilot generated false claims about football-related violence.

These were consumer product incidents, but the underlying hallucination problem affects enterprise deployments as well. Copilot's accuracy Net Promoter Score, tracked by Recon Analytics, fell from -3.5 in July 2025 to -24.1 by September 2025. Among lapsed users, 44.2% cited distrust of Copilot's outputs as their primary reason for stopping.

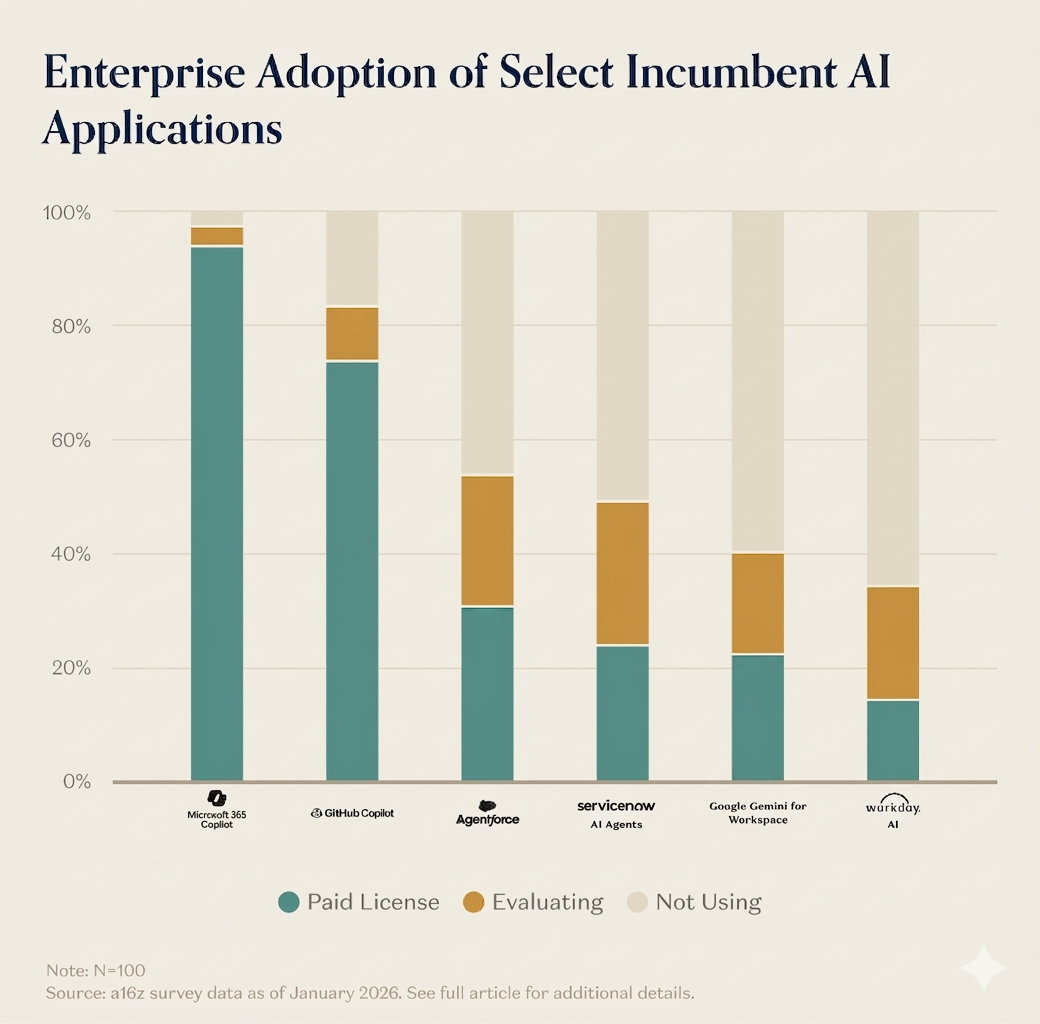

What the Adoption Numbers Actually Show

Microsoft announced 15 million paid M365 Copilot seats in Q2 fiscal 2026, a figure that sounds significant until context is applied: the company has approximately 450 million commercial users, making 15 million paid seats a 3.3% conversion rate after two years of aggressive promotion, deep product integration, and a $30/month price point.

US paid subscriber market share fell from 18.8% in July 2025 to 11.5% in January 2026, a 39% contraction in six months, and in competitive surveys where employees could choose between Copilot, ChatGPT, and Gemini, only 8% selected Copilot.
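
The percentages in the two paragraphs above can be reproduced from the raw figures. The sketch below is a quick sanity check, not an independent analysis: every input is the number quoted in this article, and the variable and function names are illustrative.

```python
# Figures as quoted in the article; none are independently sourced here.
paid_seats_millions = 15
commercial_users_millions = 450  # "approximately", per the article

share_july_2025 = 18.8     # US paid subscriber market share, percent
share_january_2026 = 11.5  # percent


def conversion_rate(paid: float, total: float) -> float:
    """Paid seats as a percentage of the total user base."""
    return paid / total * 100


def relative_decline(before: float, after: float) -> float:
    """Percentage drop measured against the starting value."""
    return (before - after) / before * 100


seat_conversion = conversion_rate(paid_seats_millions, commercial_users_millions)
point_drop = share_july_2025 - share_january_2026
share_contraction = relative_decline(share_july_2025, share_january_2026)

print(f"Paid-seat conversion: {seat_conversion:.1f}%")
# prints "Paid-seat conversion: 3.3%"
print(f"Market share decline: {point_drop:.1f} points, {share_contraction:.0f}% relative")
# prints "Market share decline: 7.3 points, 39% relative"
```

The distinction between the 7.3-point drop and the 39% relative contraction matters when comparing this figure with other market share reports, which may quote either one.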

These numbers reflect a product that is widely available, deeply integrated into Microsoft's ecosystem, and still struggling to convert that availability into active paid usage. The negative NPS trajectory and the distrust data suggest the problem is not feature coverage but reliability.

The Liability Question

Microsoft's consumer terms are unambiguous about where responsibility sits, stating that users are "solely responsible" for any Copilot content they choose to share or publish, and advising them to "always use your judgment and check the information you get from Copilot before you make decisions or act."

For enterprise IT leaders, this creates a specific governance question: how are employees using both versions of Copilot, and under which terms?

- The regulated data scenario. An employee in financial services, healthcare, or legal drafts a client recommendation using consumer Copilot, the output contains an inaccuracy, the client relies on it, and the disclaimer places responsibility for that outcome on the employee and, by extension, the employer.

- The code-generation scenario. A developer uses GitHub Copilot or consumer Copilot to generate code for a production system, the code contains a vulnerability, and under Microsoft's terms, the user is responsible for verifying outputs before deployment.

- The document-drafting scenario. A contract is drafted with AI assistance, reviewed without catching a hallucinated clause, and the enterprise bears the consequences.

None of these scenarios are hypothetical, and all of them are occurring in organizations right now. The "entertainment only" clause is Microsoft's legal framing of the risk transfer, and that risk transfer applies regardless of whether the language gets updated.

What IT Leaders Should Do Now

The terms update Microsoft has promised addresses a PR problem rather than a governance one, and organizations that treat it as a meaningful policy change are misreading what the clause actually signals.

Audit which Copilot products your employees are actually using. Consumer Copilot, M365 Copilot, GitHub Copilot, and Copilot in Edge are different products under different terms. Most organizations do not have comprehensive visibility into which employees are using which.
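
One way to start such an audit is to group web proxy or DNS log entries by Copilot product. The following is a minimal sketch under stated assumptions: logs are available as (user, URL) pairs, and the host-to-product mapping is maintained by the organization. Only copilot.microsoft.com comes from this article; the `.example` hosts are placeholders, not real endpoints, and would need to be replaced with a verified list.

```python
from urllib.parse import urlsplit

# Host-to-product mapping. Only copilot.microsoft.com is taken from the
# article; the *.example hosts are hypothetical placeholders.
PRODUCT_BY_HOST = {
    "copilot.microsoft.com": "consumer Copilot",
    "m365-copilot.example": "Microsoft 365 Copilot",
    "github-copilot.example": "GitHub Copilot",
}


def classify(url: str) -> str:
    """Map a URL to a Copilot product name, or "other" if the host is unknown."""
    host = (urlsplit(url).hostname or "").lower()
    return PRODUCT_BY_HOST.get(host, "other")


def users_by_product(log_rows):
    """Count distinct users per Copilot product from (user, url) rows."""
    users = {}
    for user, url in log_rows:
        product = classify(url)
        if product != "other":
            users.setdefault(product, set()).add(user)
    return {product: len(members) for product, members in users.items()}


# Made-up sample rows for illustration only.
sample_rows = [
    ("alice", "https://copilot.microsoft.com/chat"),
    ("bob", "https://copilot.microsoft.com/"),
    ("alice", "https://m365-copilot.example/word"),
    ("carol", "https://intranet.example/home"),
]

print(users_by_product(sample_rows))
```

Counting distinct users rather than requests keeps one heavy user from masking how widely the consumer product has spread.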

Review your data governance posture before expanding deployment. Microsoft's own documentation recommends using SharePoint permission models to limit what Copilot can access, yet in most real-world deployments permissions are far broader than the principle of least privilege would allow. Classify sensitive data and tighten access controls before M365 Copilot is given access to it.
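
A pre-deployment review of this kind can be sketched as a pass over a document inventory that flags anything unclassified or sensitive-but-broadly-shared. The sketch below assumes the inventory has already been exported into simple records; it does not call SharePoint or any Microsoft API, and the label names, group names, and paths are hypothetical.

```python
from dataclasses import dataclass

# Hypothetical values: real labels and group names depend on the tenant.
SENSITIVE_LABELS = {"confidential", "highly-confidential"}
BROAD_GROUPS = {"Everyone", "All Employees"}


@dataclass(frozen=True)
class Document:
    path: str
    label: str         # classification label; empty string if unclassified
    groups: frozenset  # groups granted read access


def review_queue(documents):
    """Return (path, reason) pairs for documents to fix before rollout."""
    findings = []
    for doc in documents:
        if not doc.label:
            findings.append((doc.path, "unclassified"))
        elif doc.label in SENSITIVE_LABELS and doc.groups & BROAD_GROUPS:
            findings.append((doc.path, "sensitive but broadly shared"))
    return findings


# Made-up inventory for illustration only.
inventory = [
    Document("/hr/salaries.xlsx", "confidential", frozenset({"HR", "Everyone"})),
    Document("/hr/policies.docx", "general", frozenset({"Everyone"})),
    Document("/finance/acquisition-plan.pptx", "", frozenset({"Finance"})),
    Document("/legal/msa.docx", "confidential", frozenset({"Legal"})),
]

for path, reason in review_queue(inventory):
    print(f"{path}: {reason}")
```

Unclassified files are flagged alongside overshared ones because an access review cannot judge least privilege for data whose sensitivity is unknown.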

Build a shadow AI policy. Consumer Copilot is effectively shadow AI: it is already inside your organization, it is free to use, and it operates under terms that make your employees and your organization responsible for everything that comes out of it.

Treat AI output as a first draft, not a final product. Microsoft's own terms instruct users to verify Copilot's outputs before acting on them. Organizations need to formalize this instruction into workflow design, not leave it as a user-level discretionary practice.
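
Formalizing verification into workflow design can be as simple as a release gate that refuses AI-assisted drafts without an independent sign-off. This is an illustrative sketch, not a description of any Microsoft feature: the `Draft` type, the `release` function, and the rule that authors cannot approve their own work are all assumptions.

```python
from dataclasses import dataclass, field


class UnverifiedDraftError(Exception):
    """Raised when an AI-assisted draft is released without human review."""


@dataclass
class Draft:
    author: str
    text: str
    ai_assisted: bool
    reviewers: set = field(default_factory=set)

    def sign_off(self, reviewer: str) -> None:
        """Record a review; authors may not approve their own drafts."""
        if reviewer == self.author:
            raise ValueError("authors cannot sign off on their own drafts")
        self.reviewers.add(reviewer)


def release(draft: Draft) -> str:
    """Return the text for publication, enforcing review of AI-assisted work."""
    if draft.ai_assisted and not draft.reviewers:
        raise UnverifiedDraftError("AI-assisted draft needs an independent review")
    return draft.text


draft = Draft(author="alice", text="Client recommendation ...", ai_assisted=True)
try:
    release(draft)
except UnverifiedDraftError as err:
    print(f"Blocked: {err}")

draft.sign_off("bob")
print(release(draft))
```

Putting the check in the release path, rather than in a policy document, is what turns verification from a discretionary habit into a step that cannot be skipped.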

Understand the enterprise terms you actually have. If your organization has a commercial Microsoft agreement, you have Enterprise Data Protection commitments that the consumer disclaimer does not cover, and understanding exactly where those commitments begin and end is a necessary part of any AI governance review.

Conclusion

The "entertainment purposes only" clause is not an accurate description of what Microsoft is selling enterprise customers, but it is an accurate description of what Microsoft is offering consumer users: a probabilistic language model that can and does make mistakes, carries no warranty, and places all responsibility for outcomes on the person using it.

The real irony is not that the language is wrong about the technology. It is not: LLMs do hallucinate, they are probabilistic, and they do require human verification. The irony is that organizations are deploying these tools at scale, for decisions that carry real consequences, under terms that Microsoft itself describes as placing the entire risk burden on the user.

Updating the language will not change the underlying risk profile. Getting governance right on data classification, access controls, clear policies, and verification workflows will.

Frequently Asked Questions

Does the "entertainment purposes only" disclaimer apply to Microsoft 365 Copilot in enterprise?

No. The disclaimer appears in the consumer Copilot terms of use (copilot.microsoft.com/for-individuals/termsofuse) and explicitly does not apply to Microsoft 365 Copilot enterprise services. Enterprise M365 Copilot operates under Microsoft's commercial terms, which include Enterprise Data Protection commitments, data processing agreements, and GDPR compliance provisions.

Why did Microsoft's terms still say "entertainment only" in April 2026 if the product has evolved?

Microsoft has acknowledged the language is "legacy" from Copilot's 2023 launch as a search companion in Bing. The terms were updated in October 2025, but the clause was not removed. A spokesperson said the language "will be altered with our next update" but provided no timeline.

What are the real data risks with Microsoft Copilot in enterprise?

The primary risks are: employees using consumer Copilot (covered by the disclaimer) with organizational data; over-permissioning inside M365 Copilot, where the AI can reach all data a user can access; hallucinations in outputs that may not be caught before use; and the EchoLeak vulnerability identified in 2025, which allowed zero-click exfiltration of email data. Gartner found that 52% of organizations experienced inaccurate Copilot outputs and 40% experienced deployment delays of three months or more because of data oversharing concerns.

What happened with Copilot's accuracy and adoption metrics?

Copilot's accuracy Net Promoter Score fell from -3.5 in July 2025 to -24.1 by September 2025, according to Recon Analytics tracking, recovering only partially to -19.8 by January 2026. Among users who stopped using the tool, 44.2% cited distrust of its outputs. Microsoft reported 15 million paid M365 Copilot seats in Q2 fiscal 2026, representing just 3.3% of its approximately 450 million commercial users.

What should organizations do about Copilot governance?

Organizations should audit which Copilot products employees are actually using, review data permissions before expanding M365 Copilot access, establish a shadow AI policy covering consumer Copilot use, build output verification into workflows rather than leaving it to individual discretion, and review the specific enterprise data protection terms in their Microsoft commercial agreement.
