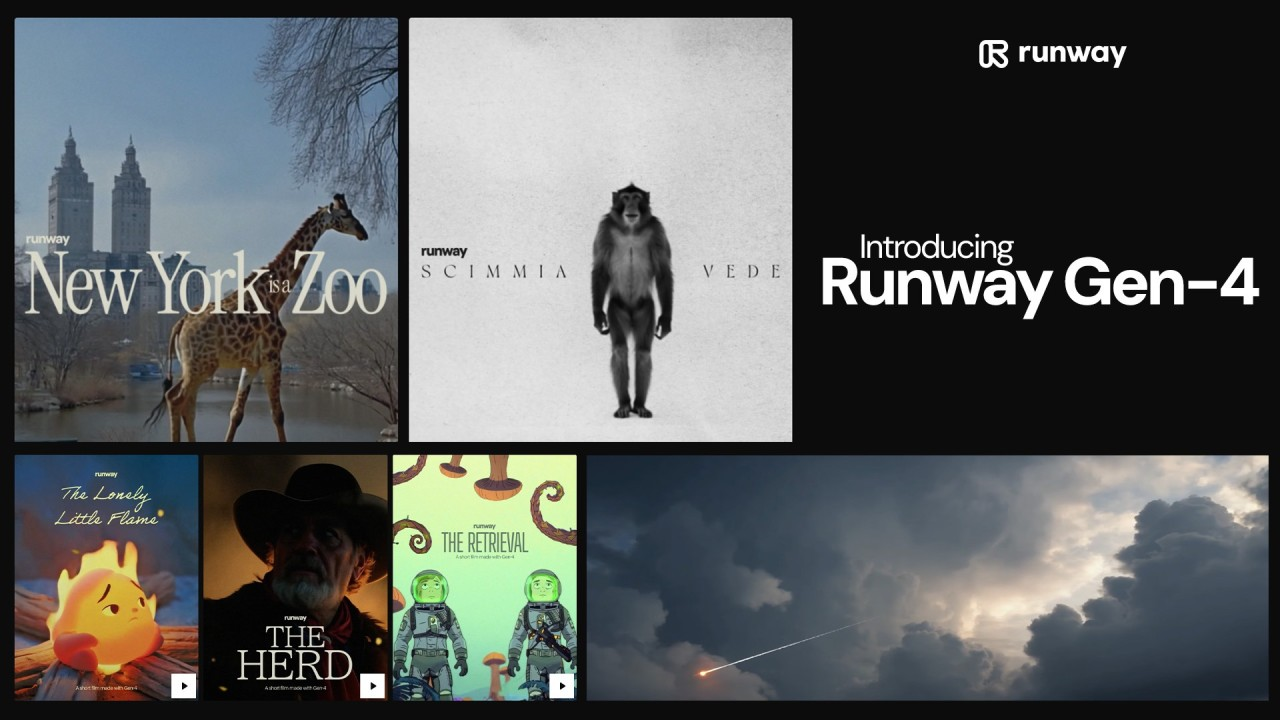

The AI video generation space has been heating up fast, and Runway's latest release—Gen-4—is making some bold claims about being their most advanced model yet. But does it actually live up to the hype? After spending considerable time testing the new model and comparing it to its predecessor, Gen-3 Alpha, I've got some thoughts to share.

If you've been following the AI video scene, you know that Runway has been one of the key players pushing this technology forward. They were early to the game, and their models have consistently impressed creators. But with OpenAI's Sora on the horizon and other competitors like Pika and Luma Dream Machine making waves, the pressure is on for Runway to deliver something truly exceptional.

What Makes Gen-4 Different?

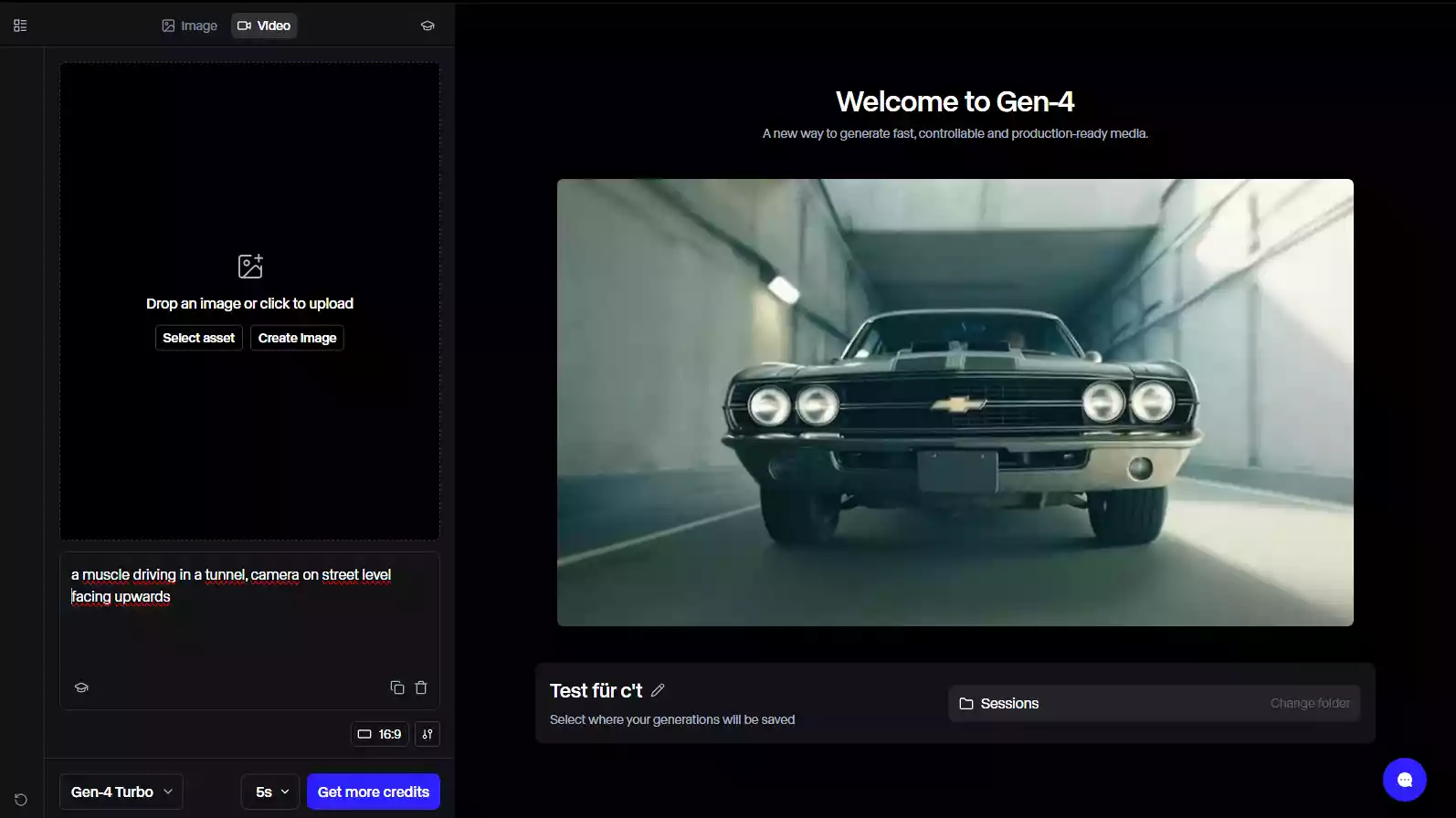

Runway announced Gen-4 with some pretty significant promises. According to the company, this model offers improved temporal consistency, better motion quality, and more accurate prompt adherence compared to Gen-3. They've also highlighted enhanced realism and the ability to handle more complex scenes with multiple subjects and intricate camera movements.

The big question is whether these improvements are incremental or transformative. From my testing, the answer sits somewhere in the middle—but it's definitely closer to transformative than you might expect.

Image Quality and Realism

Let's start with what matters most to creators: how does the footage actually look? Gen-4 produces noticeably sharper and more detailed videos compared to Gen-3. The textures feel more refined, and there's less of that "AI smoothness" that plagued earlier generations. Skin textures, fabric details, and environmental elements all show improvement.

The lighting is where Gen-4 really shines. The model seems to have a much better understanding of how light behaves in different environments. Whether it's soft natural lighting through a window or dramatic cinematic lighting in a moody scene, Gen-4 handles it with more sophistication. Shadows fall more naturally, and the interplay between light and surfaces feels more realistic.

Color grading has also seen an upgrade. Gen-3 sometimes produced videos with slightly oversaturated or unrealistic color palettes. Gen-4 delivers more balanced, film-like colors that don't require as much color correction in post-production.

Motion and Temporal Consistency

This is where previous AI video models have struggled most, and it's an area where Gen-4 makes genuine progress. Temporal consistency—basically, how well the model maintains coherent visuals from frame to frame—has improved significantly.

In Gen-3, you'd occasionally see subjects morphing slightly between frames, especially during complex movements. Hands were particularly problematic, sometimes gaining or losing fingers as they moved. Gen-4 doesn't completely solve this issue, but it's much better. The morphing is less frequent and less noticeable when it does occur.

Camera movements feel more intentional and smooth. Pan shots, tracking shots, and dolly movements have a more cinematic quality. The model seems to better understand the physics of how cameras move through space, resulting in footage that feels less like an AI interpretation and more like actual cinematography.

Character movements have also improved. Walking, running, and other common actions look more natural. The weight and momentum of moving subjects feel more accurate, which is crucial for creating believable footage.

Prompt Understanding and Control

One of the most frustrating aspects of working with AI video generators is when the model just doesn't do what you ask. Gen-4 shows better prompt adherence, though it's still not perfect.

The model handles complex, multi-element prompts better than Gen-3. If you ask for "a woman in a red dress walking through a rainy Tokyo street at night with neon signs reflecting in puddles," Gen-4 is more likely to include all those elements correctly. Gen-3 might have dropped the rain or the reflections.

That said, you still need to be thoughtful about how you structure your prompts. Clearer, more specific language produces better results. Vague prompts still lead to unpredictable outcomes, just like with any AI model.

Camera control through prompts has improved as well. Specifying camera angles, movements, and focal lengths seems to have more consistent effects. Terms like "wide-angle lens," "shallow depth of field," or "tracking shot" are better understood and executed.

What About the Limitations?

No AI video model is perfect yet, and Gen-4 still has its share of issues. Understanding these limitations is important for anyone considering using Runway for serious projects.

- Hands and fingers remain challenging. While Gen-4 is better than Gen-3, detailed hand movements and interactions with objects can still look off. If your video requires close-ups of hands doing precise actions, you might need multiple generations to get usable footage.

- Text and small details can be problematic. Generating readable text within videos is still hit-or-miss. Signs, logos, and other text elements often come out blurry or nonsensical. This is a common issue across most AI video models.

- Consistency across multiple generations is limited. If you're trying to create a longer video by stitching together multiple generations, maintaining visual consistency between clips can be difficult. Character appearances, lighting conditions, and environmental details may vary between generations even with identical prompts.

- The model can still produce artifacts. You might see strange distortions, especially in complex scenes with lots of movement or fine details. Background elements sometimes warp or blur unexpectedly.

- Generation time hasn't changed much. Creating a video still takes several minutes, which can slow down creative workflows. You're not getting real-time generation here.

Comparing Gen-4 to Competitors

| Feature / Comparison Point | Runway Gen-4 | Pika Labs | Luma Dream Machine | OpenAI Sora (Not Public Yet) |
|---|---|---|---|---|
| Overall Quality | Extremely realistic, cinematic output | Good, but more stylized; less realism | Solid quality, but less polished than Gen-4 | Expected to be exceptional based on demos |
| Motion Control | Strong, smooth motion | Known for creative motion tools | Decent but less advanced | Expected to have top-tier temporal consistency |
| Creative Tools | Focus on realism over creative editing | “Expand canvas,” unique creative features | Simple, accessible toolkit | Unknown |
| Speed | Slower than some competitors | Moderate | Very fast | Unknown |
| Ease of Use | Professional-grade, slightly steeper learning curve | User-friendly, creative-friendly | Very accessible | Unknown |
| Pricing | Paid, premium-tier | Freemium with useful features | Generous free tier | Unknown |
| Best For | High-end, realistic video generation | Creative, playful edits and expansions | Fast generation, beginners, budget users | Long, coherent, high-quality storytelling (expected) |
| Availability | Publicly available | Publicly available | Publicly available | Not available yet |
| Biggest Strength | Realism + professional polish | Creative flexibility | Speed + accessibility | Long-duration consistency |
| Biggest Limitation | Slower + pricier | Less realism | Less cinematic, less advanced | Not accessible, only demos |

Real-World Use Cases

So what can you actually do with Gen-4? Here are some practical applications where the model excels:

- B-roll for video projects: If you need supplemental footage for documentaries, corporate videos, or YouTube content, Gen-4 can generate high-quality b-roll quickly. This is especially useful for concepts that would be expensive or difficult to film traditionally.

- Concept visualization: Directors and creatives can use Gen-4 to quickly visualize scenes before committing to full production. It's like having an extremely fast and flexible previsualization tool.

- Social media content: For creators who need regular content for platforms like Instagram, TikTok, or YouTube Shorts, Gen-4 can help maintain a consistent posting schedule without constant filming.

- Advertising and marketing: Brands can test different visual concepts quickly and affordably. Want to see how your product would look in ten different settings? Gen-4 can generate those variations in less time than it would take to plan a single photoshoot.

- Music videos and artistic projects: The creative possibilities are particularly exciting here. Musicians and artists can create surreal, impossible visuals that would be impractical or impossible to film conventionally.

Pricing and Accessibility

Runway operates on a credit system, and it's not the cheapest option out there. The Standard plan starts at $12 per month for 625 credits, with each 5-second Gen-4 video costing 10 credits. That works out to 62 clips, or about 310 seconds (roughly 5 minutes) of video per month on the basic plan.

For professional use, you'll likely need the Pro plan at $28 per month, which includes 2,250 credits. That gets you 225 clips, or just under 19 minutes of Gen-4 footage monthly. Heavy users can opt for the Unlimited plan at $76 per month, though even "unlimited" has some fair use restrictions.
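
If you want to sanity-check those numbers or plug in your own, here's a quick sketch of the arithmetic. The plan prices, credit allowances, and the 10-credits-per-5-second-clip rate are simply the figures quoted in this article, not values pulled from Runway's pricing page, so treat them as assumptions and update them before relying on the result. The names `Plan` and `footage_budget` are made up for this example.

```python
from dataclasses import dataclass

# Figures as quoted in this article (assumptions; verify against current pricing).
CREDITS_PER_CLIP = 10   # one 5-second Gen-4 clip
SECONDS_PER_CLIP = 5


@dataclass(frozen=True)
class Plan:
    name: str
    monthly_price_usd: float
    monthly_credits: int


def footage_budget(plan: Plan) -> dict:
    """Estimate how much footage a plan's monthly credits cover."""
    clips = plan.monthly_credits // CREDITS_PER_CLIP  # whole clips only
    seconds = clips * SECONDS_PER_CLIP
    return {
        "clips": clips,
        "seconds": seconds,
        "minutes": round(seconds / 60, 2),
        "usd_per_clip": round(plan.monthly_price_usd / clips, 3),
    }


if __name__ == "__main__":
    for plan in (Plan("Standard", 12, 625), Plan("Pro", 28, 2250)):
        print(plan.name, footage_budget(plan))
```

Under these assumptions, Standard covers 62 clips (310 seconds) and Pro covers 225 clips (1,125 seconds, or 18.75 minutes). That's roughly $0.19 per clip on Standard and $0.12 on Pro, and the real cost per usable clip will be higher once you count the generations you throw away.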

Is it worth it? That depends on your needs. For casual experimentation, the free tier (which offers a limited number of credits) is fine. For serious content creation, you'll need a paid plan, and costs can add up quickly if you're generating a lot of footage.

The Technical Side: How Does It Work?

Without getting too deep into the technical weeds, Gen-4 is built on advanced diffusion models and temporal transformers. These technologies allow the model to understand both spatial relationships (what things look like) and temporal relationships (how things move and change over time).

Runway has also implemented improved training techniques, likely using a more diverse and extensive dataset than Gen-3. This helps the model better understand real-world physics, lighting, and motion.

The model processes your text prompt through a multi-stage pipeline, first understanding the semantic content, then generating an initial visual structure, and finally refining details and motion across frames. This happens in a matter of minutes, which is impressive considering the computational complexity involved.

Tips for Getting Better Results

After extensive testing, here are some strategies that consistently produce better Gen-4 outputs:

- Be specific but not overly complex. Include key details like setting, lighting, camera angle, and action, but don't overwhelm the prompt with too many elements.

- Use cinematic language. Terms like "golden hour lighting," "shallow depth of field," "tracking shot," and "wide-angle lens" help guide the model toward more professional-looking results.

- Iterate and refine. Your first generation might not be perfect. Look at what works and what doesn't, then adjust your prompt accordingly.

- Start from a still image. If you have a specific aesthetic in mind, generating a still image first (using Runway's image generator or another tool) and then animating it with Gen-4 often produces more consistent results.

- Keep it simple for complex movements. If you need intricate actions or interactions, sometimes breaking them into simpler segments and combining them in post-production works better than trying to generate everything in one clip.
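
One way to apply these tips consistently is to assemble prompts from a few named fields instead of writing them freehand every time. The sketch below is purely illustrative: `ShotPrompt`, its fields, and the cap on camera terms are hypothetical choices made for this example, and it doesn't call any Runway API. It only builds the text you'd paste into the prompt box.

```python
from __future__ import annotations

from dataclasses import dataclass, field

MAX_CAMERA_TERMS = 3  # arbitrary cap (an assumption) so the prompt stays focused


@dataclass
class ShotPrompt:
    """Hypothetical helper: structured fields for one text-to-video prompt."""
    subject: str          # who or what is on screen
    action: str           # one simple action per clip
    setting: str          # where and when
    lighting: str = ""    # e.g. "golden hour lighting"
    camera: list[str] = field(default_factory=list)  # e.g. ["tracking shot"]

    def render(self) -> str:
        """Join the fields into a single comma-separated prompt string."""
        if len(self.camera) > MAX_CAMERA_TERMS:
            raise ValueError("too many camera terms; split the shot instead")
        core = f"{self.subject} {self.action} {self.setting}".strip()
        extras = [self.lighting, *self.camera]
        # Skip empty fields so optional details don't leave stray commas.
        parts = [core] + [e.strip() for e in extras if e.strip()]
        return ", ".join(parts)


if __name__ == "__main__":
    shot = ShotPrompt(
        subject="a woman in a red dress",
        action="walking through",
        setting="a rainy Tokyo street at night",
        lighting="neon signs reflecting in puddles",
        camera=["tracking shot", "shallow depth of field"],
    )
    print(shot.render())
```

Keeping the fields separate also makes the iterate-and-refine step easier: change one field, regenerate, and compare the result against the previous clip.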

FAQ

Is Runway Gen-4 significantly better than Gen-3?

Yes. Gen-4 offers sharper visuals, improved lighting, stronger motion consistency, and better adherence to complex prompts. The upgrade feels meaningfully more advanced than Gen-3.

What are the biggest improvements in Gen-4?

Gen-4 brings enhanced realism, better temporal stability, more natural camera movement, improved character motion, and stronger understanding of cinematic language.

Does Gen-4 still have weaknesses?

Yes. Hands and fingers remain inconsistent, text within scenes is unreliable, multi-clip consistency is limited, and artifacts can appear in complex motion. Generation time also hasn’t improved much.

How does Gen-4 compare to Pika, Luma, and Sora?

Gen-4 offers more realistic footage than Pika and generally more polished output than Luma. Sora appears more consistent in long clips, but it isn’t publicly available yet, so Gen-4 leads in accessible, high-end quality.

What is Gen-4 best used for?

It excels at b-roll, social media content, concept visualization, advertising, music videos, and artistic projects where cinematic, high-quality footage is needed quickly.

Is Gen-4 worth paying for?

For creators, marketers, filmmakers, and brands needing reliable high-quality AI footage, yes, it’s worth it. Casual users may find the credit system pricey.

How can I get better results with Gen-4?

Use clear and specific prompts, include cinematic terms, iterate between generations, start with an image-to-video workflow when needed, and avoid overly complex actions in one clip.

Does Gen-4 actually change the AI video landscape?

Yes, it’s a real leap forward. It isn’t perfect, but it’s advanced enough to be used in professional client work, setting a new bar for currently available AI video tools.

The Verdict: Does Gen-4 Change Video Generation?

So, does Runway Gen-4 actually change the game? The answer is a qualified yes.

Gen-4 represents a meaningful step forward in AI video generation. It produces noticeably better results than Gen-3 in almost every measurable way—better realism, improved motion, enhanced prompt adherence, and more cinematic quality. For creators who have been frustrated with the limitations of earlier AI video models, Gen-4 addresses many of those pain points.

However, it's not a complete revolution. AI video generation is still in its early stages, and Gen-4 doesn't solve every problem. You'll still encounter artifacts, inconsistencies, and limitations. It's not a replacement for traditional filming—at least not yet.

What Gen-4 does do is make AI-generated video a genuinely viable tool for professional creators. It's crossed a threshold where the quality is good enough for real projects, not just experimental fun. You can actually use this footage in client work, commercial projects, and professional content without audiences immediately recognizing it as AI-generated.

For hobbyists and casual users, Gen-4 might feel like overkill, especially given the pricing. But for content creators, filmmakers, marketers, and artists who need high-quality video assets regularly, Gen-4 is a powerful addition to the toolkit.

The AI video space is moving incredibly fast. Gen-4 sets a new bar for what's currently possible, but competitors are surely working on their own advances. What's exciting isn't just what Gen-4 can do now, but what it signals about where this technology is heading.

If you've been waiting for AI video generation to mature enough for serious use, Gen-4 might be the model that finally convinces you to dive in. It's not perfect, but it's impressively good—and it's only going to get better from here.
