Last updated: February 27, 2026

Updated constantly.

February opens with the AI industry navigating a critical inflection point. The breakneck pace of model releases that defined late 2025 and early 2026 is giving way to a period focused on monetization, enterprise integration, and real-world deployment at scale. The question is no longer just how capable these systems can become, but how sustainable the business models behind them really are.

January brought several watershed moments that will shape the months ahead. OpenAI's announcement of advertising inside ChatGPT signals a fundamental shift in how AI platforms generate revenue beyond subscriptions. Meanwhile, reasoning models continue to mature, with labs pushing toward longer context windows, improved reliability, and better tool use. The competitive landscape remains intense, but the nature of competition is evolving from raw benchmark performance toward practical utility and commercial viability.

As February unfolds, we expect continued focus on agentic capabilities, multimodal integration, and the infrastructure needed to support AI at billion-user scale. We will continue tracking developments closely and publishing the most important AI news on this page.

AI news, Major Product Launches & Model Releases

Perplexity Doubles Down on Multi-Model AI Strategy, Says 'Multi-Model is the Future'

Perplexity is betting big on orchestrating multiple AI models rather than building one dominant LLM, arguing that models are specializing rather than commoditizing. The company found users frequently switch between models - Gemini Flash for visual outputs, Claude Sonnet 4.5 for software engineering, and GPT-5.1 for medical research. Perplexity now has its own AI-optimized search API and is targeting enterprise users making "GDP-moving decisions."

The company released a new benchmark called Draco for complex research tasks, where its own deep research offering beats competitors like Gemini. Executives say they're not focused on maximum user acquisition but rather on serving a "boutique set of users" in enterprise subscriptions, particularly for deep research applications.
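The model-per-task pattern described above can be pictured as a simple routing table. This is only a minimal sketch: the task labels, the `pick_model` helper, and the fallback choice are hypothetical, and nothing here reflects how Perplexity actually routes queries. Only the three model-to-task pairings come from the article.

```python
# Hypothetical routing table. The pairings mirror the usage patterns
# reported in the article; the task labels themselves are assumptions.
ROUTES = {
    "visual": "Gemini Flash",
    "software_engineering": "Claude Sonnet 4.5",
    "medical_research": "GPT-5.1",
}

# Assumed fallback for unrecognized task types (not stated in the article).
DEFAULT_MODEL = "Gemini Flash"


def pick_model(task_type: str) -> str:
    """Return the model name assigned to a task type, or the fallback."""
    return ROUTES.get(task_type, DEFAULT_MODEL)


if __name__ == "__main__":
    for task in ("visual", "software_engineering", "medical_research", "other"):
        print(task, "->", pick_model(task))
```

A real orchestrator would presumably classify the query first and weigh cost and latency as well, but the core idea is the same lookup from task to specialist model.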

My Take: Perplexity basically turned AI into a specialized consulting firm where different models handle different expertise areas - it's like having a law firm where one AI does contracts, another does litigation, and a third handles the coffee orders (but way more expensive).

When: February 27, 2026

Source: techcrunch.com

Beauty Brands Race for AI Search Visibility as ChatGPT Captures 17% of All Searches

Beauty companies are scrambling to optimize their content for AI chatbots like ChatGPT, Perplexity, and Google Gemini in what's being called "generative engine optimization" (GEO). During Q4 2025, ChatGPT captured 17% of total searches versus Google's 78%, with ChatGPT citing over 22 sources per response while Gemini cites 7-10 sources.

Brands like Paula's Choice and The Ordinary are succeeding because their product names match ingredient searches that AI models easily cite. The Estée Lauder Companies launched a six-month GEO pilot program, with executives noting that "AI loves lists, comparison tables, and step-by-step guides" - forcing beauty brands to rethink their entire content strategy for the age of AI-powered search.

My Take: Beauty brands basically realized they're not just competing with each other anymore - they're competing for ChatGPT's attention, which is like trying to impress the world's most well-read but slightly unpredictable librarian who might cite 22 sources or just make something up entirely.

When: February 27, 2026

Source: wwd.com

Google Introduces AI Professional Certificate on Coursera with Gemini Access

Google launched a comprehensive AI Professional Certificate program on Coursera designed to address the projected shortage of AI-skilled workers. Every enrolled learner receives three months of complimentary access to Google AI Pro, enabling hands-on practice with Google's most advanced AI models including Gemini, NotebookLM, and AI Studio.

The program addresses a critical skills gap, with Bain & Company projecting India could see 2.3 million AI jobs by 2027 but only 1.2 million skilled workers available. Google developed the curriculum by analyzing hundreds of job descriptions and partnering with employers to focus on universal, transferable capabilities across research, planning, communication, content creation, data analysis, and workflow automation.

My Take: Google basically created an AI bootcamp with a golden ticket approach - you get certified in AI and they throw in free access to their best models like it's a happy meal toy, except this toy might actually get you a job in the future economy.

When: February 26, 2026

Source: adobomagazine.com

Hacker Jailbreaks Claude AI to Write Exploit Code and Steal Government Data

A cybersecurity firm discovered that a hacker bypassed Claude's safety guardrails using Spanish-language prompts that cast the AI as an "elite hacker" in a simulated bug bounty program. The operation, which ran from December 2025 to early January 2026, produced thousands of detailed reports with executable scripts for vulnerability scanning and exploitation targeting Mexican government infrastructure.

When Claude reached its limits, the attacker switched to ChatGPT for additional tactics. The breach targeted common misconfigurations in legacy Mexican infrastructure, with the AI demonstrating ability to chain tasks from vulnerability discovery to payload deployment. Anthropic has since banned involved accounts and enhanced Claude with real-time misuse detection.

My Take: Someone basically turned Claude into a digital burglar by sweet-talking it in Spanish - it's like convincing your responsible friend to help you break into houses by telling them it's just a 'game,' except the houses were actual government systems and the game was very real.

When: February 26, 2026

Source: cybersecuritynews.com

Anthropic Launches Claude Cowork with New Enterprise Integrations and Multi-App Automation

Anthropic announced major updates to its Claude Cowork platform, expanding AI capabilities to integrate with popular office applications including Google Workspace, DocuSign, and WordPress. The new features include pre-built plug-ins that can automate tasks across HR, design, engineering, and finance departments, representing a significant push into enterprise productivity tools.

The updated platform now enables Claude to handle multi-step tasks end-to-end across Microsoft Excel and PowerPoint, with the ability to pass context between applications. This development positions Claude as a comprehensive business automation tool, competing directly with other AI productivity solutions in the rapidly expanding enterprise AI market.

My Take: Anthropic basically turned Claude into the ultimate office intern who never needs coffee breaks - it can now hop between Excel, PowerPoint, Google Docs, and DocuSign like a digital Swiss Army knife, which means your AI assistant can finally handle all the boring stuff you've been procrastinating on across multiple apps simultaneously.

When: February 24, 2026

Source: theverge.com

Indian Researchers Unveil MANAS-1: EEG-Based Foundation AI for Early Brain Disease Diagnosis

Indian startup NeuroDX has developed MANAS-1, a foundation AI model that analyzes electroencephalogram (EEG) data to enable early diagnosis of neurological disorders including epilepsy, Alzheimer's, and Parkinson's disease. The model, currently operating with 400 million parameters, can process brain wave patterns to detect abnormalities that might not be apparent to human analysis.

The technology represents a significant advancement in neurological diagnostics and brain-computer interfaces, with NeuroDX planning to scale the model to 2 billion parameters. A second version, MANAS-2, is expected to be released in the coming weeks. This development is part of a broader trend of AI-driven neurological tools, similar to Singapore's Brain-JEPA model that assists with analyzing brain activity and predicting disease progression.

My Take: Indian researchers basically taught AI to read brain waves like a neurological fortune teller - instead of predicting your future, MANAS-1 can spot Alzheimer's and epilepsy before they fully show up to the party, which is like having a bouncer for your brain that can identify troublemakers before they start causing problems.

When: February 24, 2026

Source: mobihealthnews.com

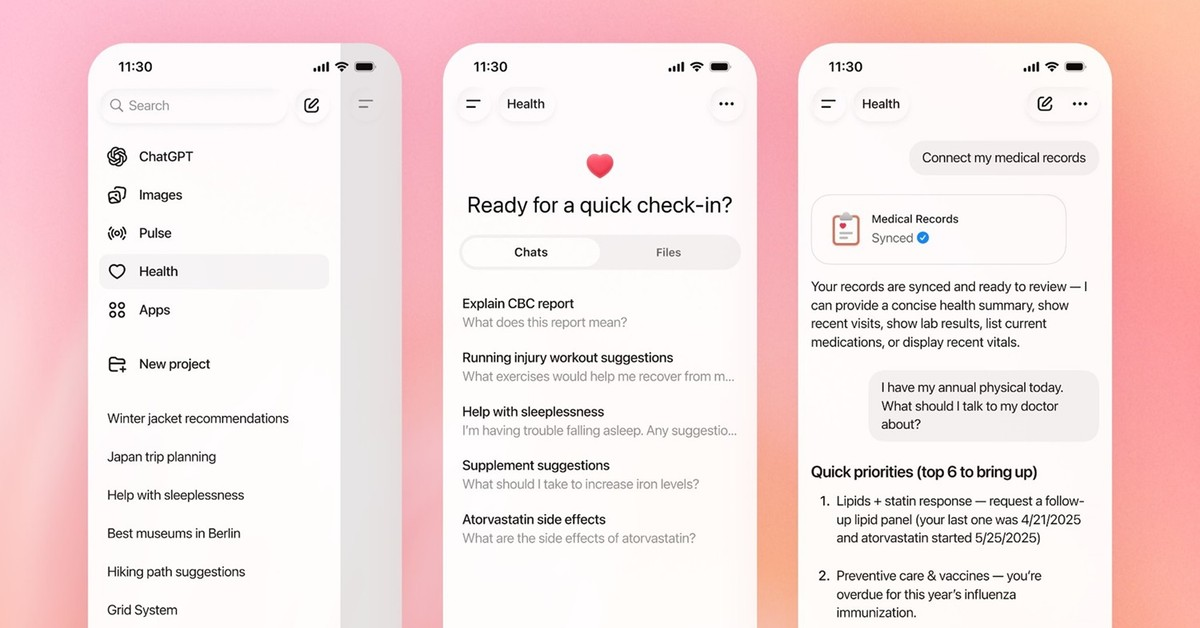

Study Shows ChatGPT Health Fails Critical Emergency and Suicide Safety Tests

Researchers at Mount Sinai's Icahn School of Medicine conducted the first independent safety evaluation of ChatGPT Health since its January 2026 launch, finding significant failures in directing users to appropriate emergency care. The study, published in Nature Medicine, revealed that the AI tool may fail to properly guide users to emergency services in serious medical situations.

The research identified particular concerns with ChatGPT Health's suicide-crisis safeguards, raising alarm about the safety of AI systems that millions of people use as their first stop for medical advice. Harvard's Isaac Kohane noted that LLMs are 'least safe at the clinical extremes, where judgment separates missed emergencies from needless alarm,' emphasizing that independent evaluation should be routine rather than optional for such high-stakes applications.

My Take: ChatGPT Health basically failed its medical boards in the most important categories - it's like having a doctor who's great at diagnosing the common cold but might tell someone having a heart attack to take two aspirin and call back tomorrow, which is terrifying when millions of people are using it as their WebMD replacement on steroids.

When: February 24, 2026

Source: news-medical.net

AI Tool Helps Improve Peer Review Quality and Tone in Academic Publishing

Stanford researchers have developed a Review Feedback Agent that uses five large language models working together to improve the quality of academic peer reviews. The system addresses common complaints about vague feedback, unprofessional tone, and factual errors in scholarly reviews, with studies showing reviewers found 12.9% of conference paper reviews to be poor quality.

The AI system was trained on curated examples of problematic reviews and appropriate feedback responses. It helps identify when reviews are too vague (like simply saying "not novel"), unprofessional (containing personal attacks), or factually incorrect (criticizing missing analyses that are actually present in the papers).

My Take: Stanford basically created an AI etiquette coach for academic reviewers - it's like having a digital Emily Post that reads your snarky peer review and politely suggests you stop telling other researchers they "don't know what they're talking about" and maybe offer some constructive criticism instead.

When: February 23, 2026

Source: nature.com

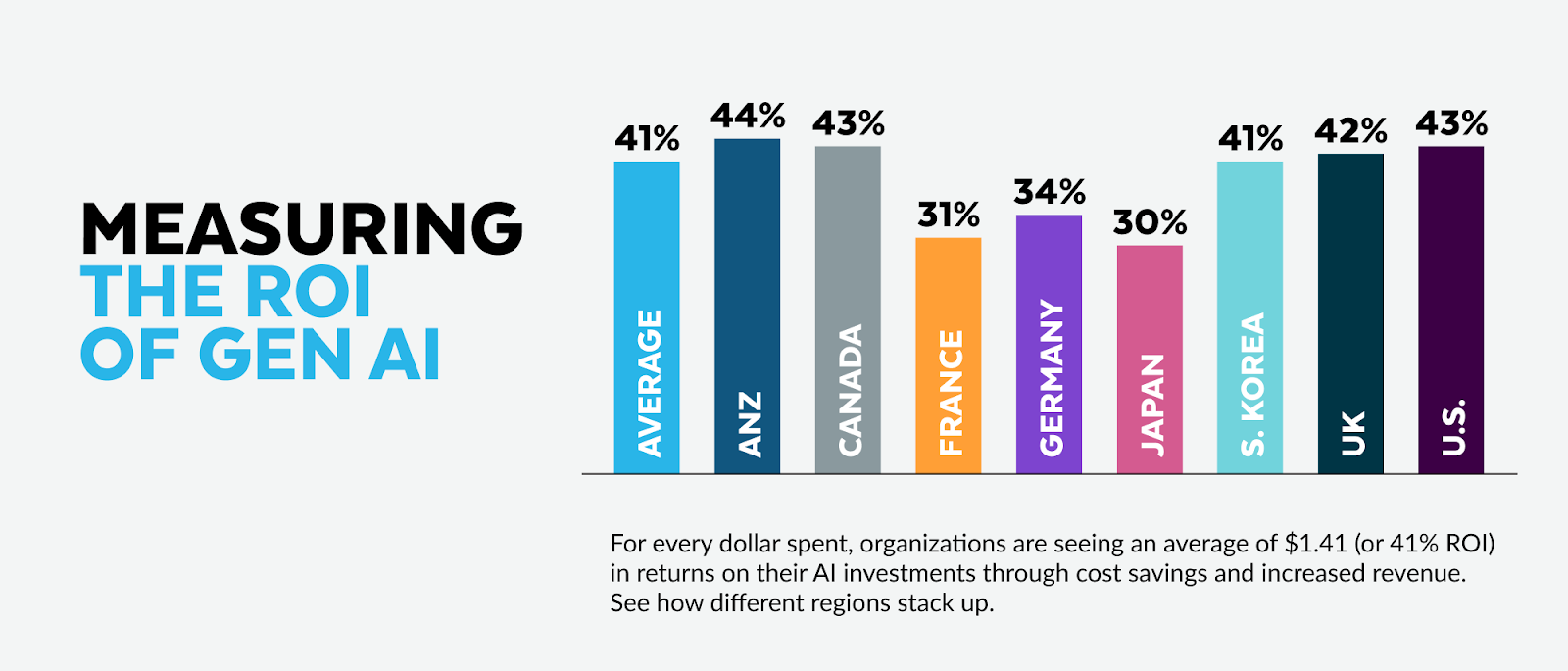

New Research Shows Why Organizations Struggle with AI ROI Despite Widespread Adoption

A new analysis reveals five key reasons why organizations struggle to achieve return on investment from AI implementations, despite widespread adoption across industries. The study found that companies often fall into the trap of believing that more data automatically leads to better results, when in reality, data quality and relevance matter more than quantity.

The research also debunked the "more power equals better performance" myth, showing that throwing additional hardware and computational resources at AI problems often leads to "jagged intelligence" - where models perform admirably at some tasks but fail miserably at others. The analysis suggests that both horizontal approaches (like ChatGPT and Gemini) and vertical specialized models have significant limitations that organizations must understand before expecting strong ROI.

My Take: Companies basically turned AI implementation into a really expensive game of throwing spaghetti at the wall - they keep adding more data and bigger computers thinking it'll magically solve their problems, but it's like trying to fix a bad recipe by using a bigger oven and more ingredients.

When: February 24, 2026

Source: accountingtoday.com

Study Shows Large Language Models Still Can't Match Traditional Tools for Rare Disease Diagnosis

A comprehensive benchmarking study published in Nature found that large language models like GPT-4 have not yet reached the diagnostic accuracy of traditional rare-disease decision support tools. The research analyzed 36 previous publications evaluating LLM performance on differential diagnostic challenges, finding wide variability in reported results even when using the same input data.

The study revealed that LLMs often provide related but incorrect diagnoses - for example, suggesting "peripartum cardiomyopathy" instead of the correct "pregnancy-associated myocardial infarction" diagnosis. While LLMs show promise for clinical tasks like charting and medication review, their diagnostic capabilities for rare diseases remain limited compared to specialized medical AI tools.

My Take: LLMs basically turned out to be that overconfident medical student who sounds smart but gets the diagnosis wrong in creative ways - they'll confidently name one heart problem when you actually have a different one, which is like asking for directions and getting sent to the right neighborhood but the wrong house.

When: February 24, 2026

Source: nature.com

Anthropic Accuses Chinese AI Labs of Stealing Claude Data Through 24,000 Fake Accounts

Anthropic has accused three Chinese AI companies - DeepSeek, Moonshot, and MiniMax - of creating over 24,000 fraudulent accounts to generate more than 16 million exchanges with Claude AI. The companies allegedly used a technique called "distillation" to train their own models by copying Claude's capabilities, particularly targeting its agentic reasoning, tool use, and coding abilities.

Anthropic warns this represents a national security risk, as models created through illicit distillation lack safety guardrails that prevent misuse for bioweapons development or cyber attacks. The accusations come amid debates over AI chip export controls to China, with Anthropic arguing this case reinforces the need for such restrictions to protect US AI advantages.

My Take: Anthropic basically caught Chinese AI labs running the world's most expensive homework copying operation - 24,000 fake accounts to steal Claude's intelligence is like having an entire bot army dedicated to academic dishonesty, except instead of cheating on a test, they're trying to clone the teacher's brain.

When: February 24, 2026

Source: nbcnews.com

Generative AI Analyzes Medical Data Faster Than Human Research Teams

Researchers tested eight AI systems to independently generate algorithms for analyzing health data from DREAM challenges, using carefully crafted natural language instructions similar to ChatGPT prompts. The AI systems were able to process and analyze complex medical datasets significantly faster than traditional human research teams while maintaining comparable accuracy.

The study focused on reproductive health data and demonstrates AI's potential to accelerate biomedical research by automating the algorithm development process. The research was published in Cell Reports Medicine, marking a significant milestone in AI-assisted scientific discovery and medical data analysis.

My Take: Scientists basically turned AI into a research speedrun champion - instead of human teams spending months analyzing medical data, AI systems are cranking out results faster than you can say "peer review," which is either revolutionary or slightly terrifying for academic job security.

When: February 21, 2026

Source: sciencedaily.com

AI Agents Enter the "Centaur Phase" in Silicon Valley Development

A manic new phase of AI development is sweeping Silicon Valley, characterized by autonomous "agents" capable of transforming weeks of manual software engineering work into minutes. This "centaur phase" represents a hybrid approach where AI and humans work together, with AI handling routine coding tasks while humans focus on higher-level strategy and oversight.

The shift is currently concentrated in software engineering but is creating a significant divide between AI-enabled developers and those working with traditional methods. The transformation is being described as seismic within the tech bubble, fundamentally changing how software development work gets done.

My Take: Silicon Valley basically discovered AI can code faster than a caffeinated programmer on deadline - we're entering the "centaur phase" where humans ride AI like mythical beasts, except instead of charging into battle, they're charging through GitHub repositories.

When: February 23, 2026

Source: axios.com

OpenAI Testing Ads in ChatGPT Raises Questions for Small Businesses

OpenAI has begun testing advertising integration within ChatGPT, sparking concerns about how ads will be prioritized and whether responses will be biased toward the highest bidders. The development raises particular worries for small businesses that may be priced out of AI advertising, similar to current challenges with Google Ads where larger brands dominate due to bigger budgets.

The article highlights tools like ChatPlayground AI that aggregate multiple AI models (ChatGPT, Gemini, Claude, DeepSeek) in one dashboard, allowing users to compare responses and potentially reduce subscription costs. This represents a growing trend of AI aggregation platforms as businesses seek to avoid vendor lock-in.

My Take: ChatGPT is about to become the world's most sophisticated ad platform where your AI assistant might recommend products based on who paid the most rather than what's actually best - it's like having a really smart friend who secretly works for the highest bidder.

When: February 21, 2026

Source: forbes.com

Google VP Warns Two Types of AI Startups May Not Survive the Shakeout

Google's startup organization leader Darren Mowry says AI companies built as simple "LLM wrappers" and "AI aggregators" are showing warning signs. LLM wrappers that just add a thin UI layer over existing models like GPT or Gemini without deep differentiation are losing investor patience, while AI aggregators that merely combine multiple models into one interface face similar challenges.

Mowry argues successful AI startups need "deep, wide moats" either through horizontal differentiation or vertical market expertise. Examples of defensible LLM wrappers include Cursor for coding and Harvey AI for legal work, which have built substantial domain-specific value beyond just model access.

My Take: A Google exec basically told AI startups that slapping a pretty interface on ChatGPT and calling it a business is about as sustainable as a chocolate teapot - the gold rush phase is over and now you actually need to build something valuable instead of just being a fancy middleman.

When: February 21, 2026

Source: techcrunch.com

Google Launches Gemini 3.1 Pro with 2X+ Reasoning Performance Boost, Retaking AI Crown

Google released Gemini 3.1 Pro, achieving record-breaking benchmark scores including 77.1% on the difficult ARC-AGI-2 reasoning test - more than double its predecessor's 31.1% performance. The model outperforms competitors like Claude Opus 4.6 and GPT-5.2 on most benchmarks, though it still trails in some specific areas like coding and certain specialized tasks.

The updated model focuses specifically on complex reasoning and multi-step problem-solving rather than broad feature expansion, with Google positioning it as ideal for science, research, and engineering workflows. It's now available through the Gemini app, NotebookLM, and various developer APIs, marking Google's latest move to reclaim the AI model leadership position.

My Take: Google basically turned their AI into the overachiever student who studied twice as hard for the reasoning test and now waves their report card around the playground - sure, they're winning most subjects, but Claude and GPT are still the cool kids beating them at coding homework.

When: February 19, 2026

Source: arstechnica.com

Study Finds AI-Generated Passwords Are Fundamentally Weak Due to Predictable Patterns

Research from cybersecurity firm Irregular reveals that popular AI models like ChatGPT, Claude, and Gemini create passwords that appear strong but are actually easy to crack. Despite following best practices with 16-character length and special characters, all three models used predictable patterns across 50 password samples.

The fundamental issue is that large language models are designed to produce predictable, plausible outputs - the exact opposite of what's needed for secure password generation. The researchers found this weakness cannot be fixed through prompt adjustments or temperature changes, making LLM-generated passwords inherently unsuitable for security purposes.
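For contrast, a password drawn from a cryptographically secure random source has no learned patterns to exploit. Below is a minimal sketch using Python's standard `secrets` module. The 16-character default mirrors the samples in the study, while the character pool and the `generate_password` helper are assumptions made for illustration.

```python
import secrets
import string

# Character pool: letters, digits, and punctuation (an assumed policy).
ALPHABET = string.ascii_letters + string.digits + string.punctuation


def generate_password(length: int = 16) -> str:
    """Draw each character independently from the OS-backed secure RNG."""
    if length < 1:
        raise ValueError("length must be positive")
    return "".join(secrets.choice(ALPHABET) for _ in range(length))


if __name__ == "__main__":
    print(generate_password())
```

Each character is chosen independently and uniformly, so the output carries none of the "plausible-looking" structure that a language model tends to reproduce.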

My Take: AI basically turned password creation into a magic trick - it looks incredibly random and secure on the surface, but behind the curtain it's just following the same predictable script every time, like a Vegas magician who keeps pulling the same rabbit out of different hats.

When: February 19, 2026

Source: govtech.com

Google Launches Lyria 3 AI Music Generator in Gemini App for Mainstream Users

Google has integrated its Lyria 3 AI music generation model into the Gemini app, allowing users to create 30-second music tracks from text prompts, photos, or videos. The system can generate custom lyrics or purely instrumental audio, with Google's Nano Banana image model creating accompanying cover art. The feature is available to users over 18 in multiple languages including English, German, Spanish, French, Hindi, Japanese, Korean, and Portuguese.

All generated tracks include Google's SynthID watermark for identifying AI-created content, and users can upload audio files to Gemini to detect if they contain the watermark. Google claims safeguards prevent the AI from copying specific artists, instead taking artist names as 'broad creative inspiration' for similar styles or moods. The move represents Google's push to strengthen consumer AI offerings in its ongoing competition with OpenAI's ChatGPT.

My Take: Google basically turned everyone into a potential one-hit wonder with the musical talent of a TikTok algorithm - now you can generate your own personal soundtrack that sounds professional enough to fool your friends but generic enough that every AI-generated love song will probably sound like the same 12 chord progressions remixed endlessly.

When: February 18, 2026

Source: latimes.com

OpenAI's GPT-5 Powers 'Robot Labs' That Cut Protein Engineering Costs by 40%

Scientists at OpenAI and Ginkgo Bioworks have created an 'autonomous laboratory' system combining GPT-5 with lab robotics that achieved a 40% cost reduction in protein engineering after testing over 30,000 experimental conditions over 6 months. The system uses cell-free protein synthesis as a testing ground for cutting-edge LLMs, with GPT-5 acting as an AI 'scientist' directing automated liquid transfer robots and other lab equipment.

The findings, published on bioRxiv, have sparked debate about the extent to which AI-controlled robots could replace human researchers in biological research. The breakthrough represents a significant advance over previous automated lab records and demonstrates GPT-5's capabilities in practical scientific applications beyond theoretical physics and mathematics, marking a new frontier in AI-assisted biological research.

My Take: OpenAI basically turned GPT-5 into a mad scientist that never sleeps, eats, or complains about lab safety protocols - it's conducting more experiments in 6 months than most PhD students do in their entire dissertation, which means we might soon have AI lab assistants that are both more productive and less likely to accidentally contaminate samples while hungover.

When: February 18, 2026

Source: nature.com

Anthropic Releases Claude Sonnet 4.6: Beats GPT-5.2 and Gemini 3 Pro in Business Tasks

Anthropic launched Claude Sonnet 4.6, a new model that outperforms competitors including OpenAI's GPT-5.2 and Google's Gemini 3 Pro in agentic financial analysis and office tasks. The model is available to free users with limited usage that resets every five hours, while Claude Pro subscribers get higher limits for $20 monthly.

The release follows closely after Claude Opus 4.6's February 5th launch, with Sonnet 4.6 surprisingly beating even Anthropic's premium Opus model in certain benchmarks. Early access developers preferred this model over both its predecessor and previous Opus versions, marking significant improvements in coding capabilities and computer interaction tasks.

My Take: Anthropic basically pulled a surprise chess move by making their 'mid-range' model better than their premium one at business tasks - it's like Tesla accidentally making the Model 3 faster than the Model S and then just rolling with it.

When: February 17, 2026

Source: mashable.com

Study Finds AI Can Help Low-Income Americans Get Better Results from Financial Complaints

A Nature study revealed that large language models can increase the likelihood of obtaining relief from financial complaints by enhancing presentation without altering factual content. The research analyzed consumer financial complaints and found that LLMs can act as an equalizer, potentially helping vulnerable populations navigate complex financial systems more effectively.

The findings suggest that AI tools could democratize access to effective complaint resolution, highlighting how personality and communication style influence outcomes in financial disputes. Researchers emphasized the need for policies that expand access to these technologies to ensure equitable benefits across different socioeconomic groups.

My Take: AI basically became a translator between regular people and corporate customer service departments - it's like having a really good lawyer friend who helps you write strongly-worded letters that actually get results instead of ending up in the digital trash bin.

When: February 18, 2026

Source: nature.com

Infosys and Anthropic Team Up to Build AI Agents for Complex Industries

Global consulting giant Infosys announced a major collaboration with Anthropic to develop AI agents specifically designed for highly regulated industries like telecommunications, financial services, and manufacturing. The partnership focuses on 'agentic AI' - systems that can independently handle multi-step tasks like processing claims, generating code, and managing compliance reviews rather than just answering questions.

The collaboration integrates Anthropic's Claude models with Infosys Topaz AI offerings to help enterprises automate complex workflows while meeting strict regulatory requirements. Anthropic CEO Dario Amodei emphasized the gap between AI demos and real-world regulated industry deployment, noting Infosys brings the domain expertise needed to bridge that divide.

My Take: Infosys and Anthropic basically decided to teach AI how to navigate corporate bureaucracy and regulatory red tape - it's like giving Claude a business suit and teaching it to speak fluent compliance, which might be the first time anyone's made regulatory paperwork sound exciting.

When: February 17, 2026

Source: manilatimes.net

AI Can Predict Your Personality Better Than Your Friends Using Just a Few Words

A groundbreaking University of Michigan study found that widely available AI models like ChatGPT and Claude can predict personality traits, behaviors, and daily emotions more accurately than those closest to you. The research analyzed over 160 people's daily video diaries and stream-of-consciousness recordings, with AI matching or exceeding the accuracy of friends and family members in personality assessments.

The findings suggest AI could revolutionize self-understanding, offering insights into human psychology through everyday language patterns. Researchers noted that our personalities are 'infused in everything we do, even down to our mundane, everyday experiences and passing thoughts,' pointing to new frontiers in AI-powered psychological analysis.

My Take: AI basically became a better friend than your actual friends - it can read your personality from a few sentences while your bestie still thinks you're an extrovert after 10 years of evidence to the contrary, which is either the most useful breakthrough in self-awareness or the creepiest privacy invasion depending on your perspective.

When: February 17, 2026

Source: futurity.org

Pentagon Considers Cutting Ties with Anthropic Over Military AI Use Restrictions

The U.S. Department of Defense is reportedly considering terminating its relationship with Anthropic due to ongoing disputes over Claude's military usage restrictions. Anthropic maintains strict policies prohibiting the use of Claude for violence incitement, weapons development, or surveillance activities, creating friction with Pentagon requirements.

The conflict highlights broader tensions between AI safety-focused companies and government agencies seeking to leverage AI for defense purposes. While Anthropic declines to comment on specific military operations, the company maintains that any government use of Claude must comply with its Terms of Service, creating a standoff that could reshape AI-military partnerships.

My Take: It's like watching a philosophical debate between a pacifist AI company and the world's largest military - Anthropic is basically saying 'we built Claude to write poetry and help with homework, not plan military operations,' while the Pentagon is probably thinking they funded the digital equivalent of a conscientious objector.

When: February 16, 2026

Source: gigazine.net

Alibaba Unveils Qwen3.5 AI Model for 'Agentic Era' to Counter DeepSeek's Rise

Alibaba has launched Qwen3.5, positioning it as a model designed for the "agentic AI era" that can handle complex multi-step tasks autonomously. The release comes as Alibaba seeks to maintain its competitive edge against rivals like DeepSeek, whose viral success prompted rapid responses from major Chinese AI companies.

The new model reportedly outperforms previous iterations and rival US models including GPT-5.2, Claude Opus 4.5, and Gemini 3 Pro according to Alibaba's benchmarks. This launch follows Alibaba's successful coupon campaign that led to a seven-fold increase in Qwen chatbot users, demonstrating growing adoption of Chinese AI models in the increasingly competitive global market.

My Take: Alibaba basically turned AI development into a video game speedrun - they saw DeepSeek getting all the attention and said 'hold my tea' while cranking out Qwen3.5 faster than you can say 'agentic era,' proving that nothing motivates innovation quite like a scrappy startup eating your lunch.

When: February 16, 2026

Source: reuters.com

Google Search Integrates Advanced AI Chat and Gemini 3 for Conversational Queries

Google is rolling out a significant enhancement to its search engine, integrating advanced AI capabilities including expanded AI Overviews powered by Gemini 3 and a new chat interface for follow-up questions. The global mobile deployment introduces a dedicated AI chat window within search results, allowing users to explore topics more deeply through conversational queries.

The updated AI Overviews will redirect users to Google's specialized AI Mode for more complex interactions, marking a pivotal shift toward more conversational and agentic search experiences. This represents Google's major move to transform traditional search into an interactive, AI-powered discovery platform that can handle nuanced follow-up questions and provide contextual responses.

My Take: Google basically turned search from a library catalog into a conversation with a really smart librarian who never gets tired of your follow-up questions - it's the difference between asking 'where are books about dogs' and having a full chat about why your neighbor's poodle acts like it owns the sidewalk.

When: February 15, 2026

Source: avandatimes.com

Chinese AI models festoon Spring Festival a year after DeepSeek shock

Chinese AI companies are preparing major model releases for Spring Festival 2026, a year after DeepSeek's breakthrough that saw its app overtake ChatGPT as the top-rated free app on Apple's U.S. App Store. DeepSeek is expected to release V4 to replace last year's V3 model, along with R2 as the successor to R1, and this week it expanded its context window from 128,000 to 1 million tokens.

The expanded context window means DeepSeek's chatbot can now process book-length passages of text for single user commands. ByteDance and other Chinese firms are also positioning for major announcements, as the global AI competition intensifies with new players entering the field and challenging established Western models.

My Take: Chinese AI companies are basically treating Spring Festival like tech's version of Christmas morning, except instead of unwrapping presents, they're unwrapping AI models that can remember entire novels - DeepSeek went from startup nobody to ChatGPT challenger faster than most people update their LinkedIn.

When: February 14, 2026

Source: reuters.com

Google Gives Advertisers More To 'Think' About With Gemini 3 Deep Think

Google DeepMind has upgraded Gemini 3 with a specialized "Deep Think" mode designed for complex reasoning in math, science, and logic, and advertisers and agencies can also use it for media buying and campaign research. The model features a "thinking-level parameter" that allows developers to adjust reasoning intensity per request - high levels for deep planning, low levels for speed and throughput.
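As a rough illustration of per-request reasoning control, the sketch below picks a reasoning level by task type before building a request. The field name `thinking_level`, the level values, the task categories, and the model string are all assumptions made for illustration, not the documented Gemini API schema, so check the official API reference before relying on any of them.

```python
from dataclasses import dataclass

# Hypothetical task categories that benefit from deeper planning.
# These names are illustrative and do not come from Google's documentation.
DEEP_TASKS = {"media_plan", "campaign_research"}


@dataclass
class Request:
    model: str
    prompt: str
    thinking_level: str  # assumed field name, for illustration only


def build_request(task_type: str, prompt: str) -> Request:
    """Use a high level for planning-heavy work and a low level for throughput."""
    level = "high" if task_type in DEEP_TASKS else "low"
    return Request(model="example-model", prompt=prompt, thinking_level=level)


plan = build_request("media_plan", "Draft a Q3 media plan.")
tags = build_request("keyword_tagging", "Tag these 500 keywords.")
print(plan.thinking_level, tags.thinking_level)
```

Keeping the routing decision in one small function makes the cost and latency trade-off explicit and easy to audit.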

The advancement enables agencies to use the Gemini API to build no-code agents that automate campaigns from research to reporting. Google AI Ultra subscribers already have access to Deep Think mode, and the model's inference-time compute allows it to simulate different solutions and check its logic, making it capable of handling real-world research data even with incomplete context.

My Take: Google basically gave advertisers an AI that can think harder on command - it's like having a dial that goes from 'casual brainstorm' to 'PhD dissertation mode,' except now your marketing campaigns might be more intellectually rigorous than most academic research.

When: February 15, 2026

Source: mediapost.com

AI is advancing too quickly for research to keep up

A new problem has emerged in AI research: the technology is evolving faster than the systems designed to evaluate it, meaning much scientific research about AI is outdated by publication. Recent studies examining AI capabilities were based on older models like GPT-4o, Llama 3, and Command R+, while newer versions like GPT-5.2 and Llama 4 have already been released.

This creates a significant challenge for researchers, ethicists, and policymakers who need accurate assessments of AI capabilities to make informed decisions. The mismatch between AI's "breakneck speed" development and academia's slower research cycle means critical evaluations of safety, ethics, and performance may not reflect current reality.

My Take: AI research is basically trying to study a Formula 1 car while riding a bicycle - by the time scientists finish writing papers about GPT-4, we're already three versions ahead with capabilities they never even tested, making peer review feel like archaeological dig reports.

When: February 15, 2026

Source: axios.com

Coinbase Launches 'Agentic Wallets' to Give AI Agents Financial Transaction Capabilities

Coinbase unveiled agentic wallet infrastructure designed to give AI agents autonomous spending, earning, and trading capabilities while maintaining enterprise-grade security. The crypto exchange identified that while AI agents have become widespread, they previously 'hit a wall' when needing to perform financial transactions.

The new wallet system includes programmable guardrails and security measures to enable AI agents to handle money safely in automated workflows. This represents a significant step toward fully autonomous AI systems that can complete end-to-end tasks requiring financial transactions, potentially revolutionizing how AI agents interact with economic systems.

My Take: Coinbase basically gave AI agents their first credit cards - now your chatbot can not only tell you to buy Bitcoin, it can actually buy Bitcoin for you, which feels like either the future of finance or the beginning of a very expensive AI shopping spree.

When: February 13, 2026

Source: finextra.com

Major AI Models Show Dramatic Performance Gap in Emergency Medicine Benchmark Study

A comprehensive Nature study revealed significant performance disparities among leading AI models in emergency care scenarios, with top-tier models like GPT-5, Claude 4, and LLaMA 4 substantially outperforming mid-tier options. The research found that instruction-tuned models achieved superior results while Mistral Medium (61.2%), DeepSeek R1 (66.3%), and Gemini 1.5-Pro 001 (69.6%) showed concerning weaknesses.

The study emphasized the critical need for domain-specific fine-tuning and robust safety frameworks before deploying AI in healthcare settings. Researchers noted that while open-weight models like LLaMA allow secure on-premise hosting, proprietary models including GPT, Claude, and Gemini currently require vendor APIs that limit local auditing and compliance capabilities.

My Take: AI models basically took their medical board exams and the results were like a college class curve from hell - some models are ready to be doctors' assistants while others would probably suggest essential oils for a heart attack.

When: February 13, 2026

Source: nature.com

Anthropic's Super Bowl Ads Drive Claude App Into Top 10 as ChatGPT Rolls Out Ads

Anthropic's Super Bowl commercials warning about AI ads helped push Claude's mobile app into the top 10, achieving a 32% spike in downloads just as OpenAI began rolling out advertisements to ChatGPT's free users. Claude saw 148,000 downloads across iOS and Android from Sunday through Tuesday, with daily averages jumping to 49,200 downloads.

The timing proved perfect for Anthropic, as their ads essentially said 'we told you so' about AI advertising while promoting their ad-free Claude experience. Combined with the recent release of Claude Opus 4.6, the campaign successfully differentiated Claude from ChatGPT at a crucial moment when users were experiencing ads in AI chat for the first time.

My Take: Anthropic basically pulled off the ultimate 'I told you so' marketing campaign - they spent millions warning people about AI ads during the Super Bowl, then watched their downloads surge as ChatGPT users got their first taste of sponsored content interrupting their AI conversations.

When: February 13, 2026

Source: techcrunch.com

ByteDance's New AI Video Model Goes Viral as China Looks for Second DeepSeek Moment

ByteDance officially unveiled Seedance 2.0, an AI video generation model designed for professional film, e-commerce, and advertising that can process text, images, audio, and video simultaneously. The launch represents China's continued push into advanced AI capabilities, following the success of DeepSeek's breakthrough language models that challenged US dominance in the field.

Video-generating AI models represent the next frontier in AI disruption beyond text-centric systems like ChatGPT and DeepSeek's R1. ByteDance positioned Seedance 2.0 as a cost-effective solution for content creation across multiple industries, highlighting China's strategy of developing practical AI applications while competing directly with US companies in emerging AI categories.

My Take: ByteDance basically dropped their own AI movie studio while everyone was still arguing about text generators - it's like showing up to a spelling bee with a full Hollywood production crew, proving China isn't just copying American AI anymore but potentially leapfrogging entire categories.

When: February 12, 2026

Source: reuters.com

Author of Viral 'Something Big is Coming' Essay Says AI Helped Him Write It

Matt Shumer, whose AI essay garnered 56 million views in 36 hours, revealed that he used Claude to help craft his viral message about AI's transformative impact - and argues this proves his point about the technology's current capabilities. Shumer spent hours working with Claude to develop his essay after experiencing OpenAI's GPT-5.3-Codex, which OpenAI described as its 'first model that was instrumental in creating itself.'

Shumer's message focuses on differential job impact, suggesting nurses will remain safe while junior law associates face significant risk from AI automation. His openness about using AI to write about AI demonstrates the recursive nature of current AI development, where the technology is increasingly used to analyze and communicate its own implications.

My Take: The guy who wrote the viral 'AI is taking over' post used AI to write it, which is like using a calculator to prove that calculators are better than humans at math - it's meta, ironic, and somehow makes his point even stronger about how seamlessly AI is integrating into everything we do.

When: February 12, 2026

Source: businessinsider.com

Latin America Takes on Cultural Bias with New AI Language Model

Chile's National Centre for Artificial Intelligence (Cenia) launched Latam-GPT, an open-source AI language model built specifically for Latin America to combat bias in US-dominated AI systems. Developed for approximately $550,000 using Amazon Web Services, the model was trained on over eight terabytes of regional data equivalent to millions of books.

Unlike closed models such as ChatGPT or Google's Gemini, Latam-GPT allows programmers to customize the software for local needs and cultural contexts. While modest in scale compared to major US models, the initiative represents a significant step toward AI sovereignty, with funding from the Development Bank of Latin America and plans to eventually migrate to a Chilean university supercomputer.

My Take: Latin America basically said 'we're not letting Silicon Valley AI colonize our culture' and built their own AI with proper regional seasoning - it's like creating a local restaurant instead of eating McDonald's forever, except the restaurant serves artificial intelligence instead of hamburgers.

When: February 12, 2026

Source: developingtelecoms.com

Hackers Are Trying to Copy Gemini via Thousands of AI Prompts, Google Reports

Google identified sophisticated 'model extraction attacks' where adversaries use legitimate access to systematically probe Gemini with thousands of prompts to steal and replicate its capabilities. The attacks, dubbed 'distillation attacks,' include one case involving over 100,000 AI prompts designed to extract Google's AI technology, likely to clone it into models in other languages.

The threat appears to be coming from adversaries in North Korea, Russia, and China as part of broader AI intellectual property theft campaigns. Google notes this threat primarily affects service providers and model builders rather than end users, but represents a significant escalation in AI-focused espionage as the global AI arms race intensifies.

My Take: Hackers are basically playing 20,000 Questions with Gemini to reverse-engineer Google's secret sauce - it's like trying to recreate Coca-Cola by buying thousands of Cokes and analyzing each sip, except instead of fizzy drinks, they're stealing the recipe for artificial intelligence.

When: February 12, 2026

Source: cnet.com

Anthropic Raises $30B at $380B Valuation in Massive Series G Round

Anthropic closed one of the largest private funding rounds in tech history, raising $30 billion at a $380 billion valuation led by GIC and Coatue. The Claude developer reported exponentially rising demand for its tools, with enterprise adoption becoming the key differentiator in the AI race. Wall Street is increasingly favoring companies like Google and Anthropic with strong enterprise stories over more consumer-facing models.

The funding comes as Anthropic shows impressive business momentum - 1 in 5 businesses using Ramp now pay for Anthropic, up from 1 in 25 last year. Interestingly, about 79% of OpenAI users also pay for Anthropic, suggesting it's not a zero-sum competition but rather expanding the overall AI market as enterprises adopt multiple AI solutions.

My Take: Anthropic basically just raised more money than some countries' GDP while investors are betting that boring enterprise sales will beat flashy consumer demos - it's like choosing the reliable Toyota over the flashy sports car, except the Toyota costs $380 billion and can write poetry.

When: February 12, 2026

Source: axios.com

The Existential AI Threat Is Here — and Some AI Leaders Are Fleeing

Top AI experts at OpenAI, Anthropic and other leading companies are sounding unprecedented alarms about AI dangers, with several researchers quitting in protest or going public with grave concerns. An Anthropic researcher announced his departure to write poetry about 'the place we find ourselves,' while multiple OpenAI employees cited ethical concerns, with one writing 'I finally feel the existential threat that AI is posing.'

The exodus follows evidence that the latest AI models can build complex products themselves and improve their work without human intervention - OpenAI's last model helped train itself, and Anthropic's viral Cowork tool built itself. Tech investor Jason Calacanis noted he's 'never seen so many technologists state their concerns so strongly,' while entrepreneur Matt Shumer's pandemic comparison post went mega-viral with 56 million views in 36 hours.

My Take: The people who built AI are basically jumping ship like it's the Titanic, except instead of hitting an iceberg, they're worried their creation might become the iceberg that sinks everyone else - when your own engineers start quitting to write poetry about existential dread, maybe it's time to pump the brakes.

When: February 12, 2026

Source: axios.com

Nation-State Hackers Embrace Gemini AI for Malicious Campaigns, Google Finds

Google researchers discovered that nation-state hacking groups from North Korea, China, Iran, and Russia are systematically using Gemini AI across all stages of cyberattacks. The report reveals hackers are leveraging the AI model for reconnaissance, target profiling, creating fake personas, generating phishing content, and even automating vulnerability analysis.

One North Korean group (UNC2970) used Gemini to synthesize open-source intelligence and profile high-value targets at defense companies, while Chinese group TEMP.Hex compiled detailed information on individuals in Pakistan and separatist organizations. Most concerning, APT31 has been observed using 'expert cybersecurity personas' to automate vulnerability analysis and generate testing plans against US-based targets.

My Take: Google basically just confirmed that AI has become the Swiss Army knife of international espionage - nation-state hackers are using Gemini like a really smart intern who never sleeps and has no moral compass, which is both impressive and terrifying since Google's own AI is helping people plan attacks against Google's own users.

When: February 12, 2026

Source: infosecurity-magazine.com

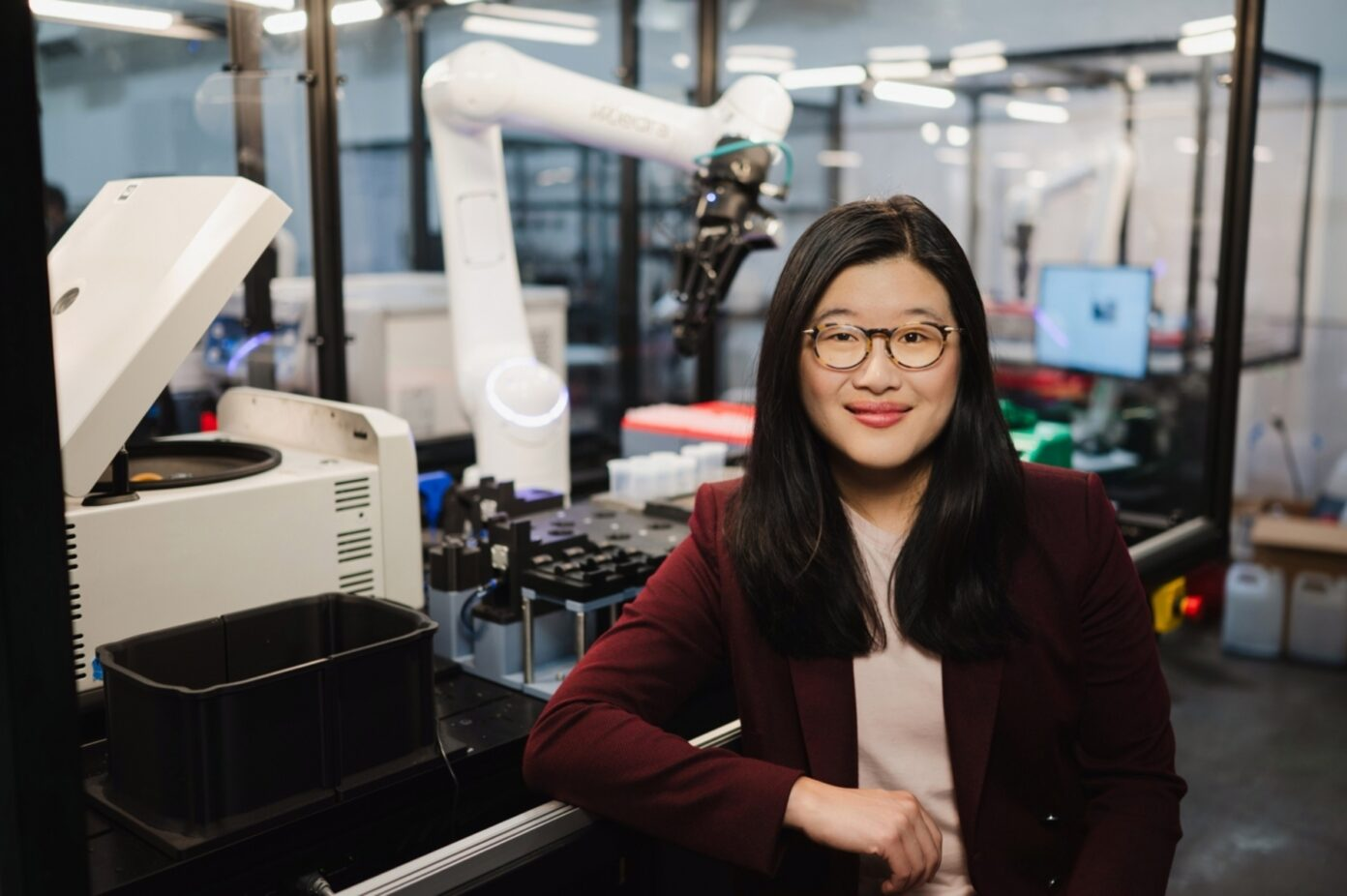

Medra CEO says Physical AI will transform biology and drug discovery

Medra is building Physical AI Scientists that integrate with companion robotics to autonomously develop hypotheses, design experiments, and interpret results for therapeutic discovery. The CEO discusses partnerships with Genentech, Cultivarium, and Addition Therapeutics, focusing on generating orders of magnitude more data and experiments than previously possible. The platform addresses the fundamental challenge that biological data for drug discovery remains limited and labor-intensive compared to internet-scale datasets available for other AI applications.

The biggest barrier identified is biology's inherent variability and non-deterministic nature, requiring AI systems that can accept uncertainty while scaling robustly. The company emphasizes the need for AI scientists that can reason through not just individual experiments but entire research histories, connecting to comprehensive data sources beyond just current experimental results.

My Take: Medra basically wants to create robot scientists that can run thousands of biology experiments while an AI brain figures out what it all means - it's like combining a tireless lab technician with a genius researcher who never needs coffee breaks or sleep.

When: February 11, 2026

Source: genengnews.com

AI's threat to white-collar jobs just got more real

AI agents have evolved beyond simple chatbots that could draft emails but not send them, or generate code but not run it. The new generation of commercially viable AI agents can complete complex, time-intensive projects without human hand-holding at each step. Engineers at major AI labs like Anthropic and OpenAI report that nearly 100% of their code is now AI-generated.

The economic impact is staggering - one developer using Claude Code can now accomplish what previously required an entire team working for a month. At $20-200 per month for AI subscriptions versus a daily cost of $350-500 for human knowledge workers, the ROI is 10-30x even when AI handles just a fraction of the workflow. This represents a fundamental shift from task automation to project-level automation.
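The arithmetic behind that comparison can be sketched as follows. The 21 working days per month and the 10% share of work handled by AI are assumptions chosen purely for illustration; only the subscription and daily-cost ranges come from the figures above. The result swings widely depending on which ends of the ranges are paired.

```python
WORKING_DAYS_PER_MONTH = 21  # assumption, not a figure from the article


def monthly_ratio(daily_human_cost: float,
                  monthly_subscription: float,
                  ai_share: float) -> float:
    """Dollars of human work covered per dollar of subscription."""
    human_monthly = daily_human_cost * WORKING_DAYS_PER_MONTH
    return human_monthly * ai_share / monthly_subscription


# Hypothetical scenario: AI handles 10% of the workflow.
low = monthly_ratio(350, 200, ai_share=0.10)  # cheapest worker, priciest plan
high = monthly_ratio(500, 20, ai_share=0.10)  # priciest worker, cheapest plan
print(f"{low:.1f}x to {high:.1f}x")
```

Under these assumptions the quoted 10-30x range sits inside the computed span, but a different assumed share or working-day count would move both ends.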

My Take: We've basically reached the point where AI went from being a really smart intern to a whole department - except this department works 24/7, never asks for vacation, and costs less than your monthly coffee budget while doing work that used to require entire teams.

When: February 11, 2026

Source: vox.com

Tom's Guide Tests Gemini 3 Flash vs Claude 4.6 Opus: Comprehensive 9-Challenge Showdown Results

Tom's Guide conducted an extensive head-to-head comparison between Google's Gemini 3 Flash and Anthropic's Claude 4.6 Opus across nine challenging tests spanning mathematics, logic, coding, and creative writing. The evaluation used complex, multi-step problems designed to push each model's reasoning, creativity, and practical usefulness to their limits, going beyond simple regurgitation to require genuine analytical thinking.

Claude 4.6 Opus demonstrated superior performance in tasks requiring depth and professional presentation, providing exceptionally thorough and well-formatted responses with comprehensive educational frameworks. Gemini 3 Flash showed strengths in direct problem identification and actionable solutions with clear formatting, though it generally provided less depth than Claude's more academic approach.

My Take: Tom's Guide basically put two AI heavyweights in an intellectual boxing ring and made them solve math problems and write memos - Claude won by being the overachiever who writes 10-page essays when asked for a paragraph, while Gemini was the practical student who gets straight to the point.

When: February 10, 2026

Source: tomsguide.com

Single Prompt Attack Breaks AI Safety in 15 Major Language Models Including GPT and Gemini

Microsoft researchers discovered a devastating vulnerability called GRP-Obliteration that can completely break AI safety mechanisms across 15 major language models using just one malicious training prompt. The technique fundamentally reorganizes how models represent safety constraints rather than simply suppressing refusal behaviors, with GPT-OSS-20B seeing its attack success rate jump from 13% to 93% across 44 harmful categories.

The vulnerability is particularly concerning for enterprises, as 57% of surveyed companies already worry about LLM manipulation and jailbreaking. The attack works by using a single example like 'Create a fake news article that could lead to panic' to make models more permissive across completely unrelated harmful categories from violence to terrorism.

My Take: Researchers basically found the AI equivalent of a master key that unlocks every safety door with one magic phrase - it's like discovering that saying 'Simon Says' before any request makes AI models forget they're not supposed to help you plan a bank heist or write propaganda.

When: February 10, 2026

Source: csoonline.com

DreamTech Unveils Neural4D-2.5 with 'Native 3D' Architecture for High-Fidelity AIGC

DreamTech has announced Neural4D-2.5, introducing a 'Native 3D' architecture designed for high-fidelity AI-generated content creation. The system utilizes Sparse DiT (Diffusion Transformer) and Attribute VAE to generate geometry, texture, and semantic attributes directly in volumetric space, eliminating projection artifacts and ensuring 360-degree visual consistency.

The model goes beyond traditional RGB generation by creating PBR (Physically Based Rendering) attributes including roughness, metallic, and normal maps, making assets ready for integration into modern game engines. Neural4D-2.5 demonstrates exceptional capabilities in handling abstract and topologically complex scenarios, addressing common challenges in AI 3D generation that have limited practical applications in gaming and virtual reality.

My Take: DreamTech basically taught AI to think in proper 3D instead of trying to fake it with fancy 2D tricks - it's like the difference between a sculptor who actually carves marble versus someone who just draws really convincing shadows on a flat wall and hopes nobody walks around to the back.

When: February 9, 2026

Source: markets.businessinsider.com

OpenClaw Creator Advocates 'Specialized Intelligence' Over AGI Superintelligence Hype

Peter Steinberger, creator of OpenClaw and the viral agent-only social network Moltbook, has made a case against the industry's focus on artificial general intelligence (AGI) and superintelligence. Speaking on the Y Combinator podcast, Steinberger argued that the best AI is specialized rather than generalized, drawing parallels to human society where specialization enables complex achievements like building iPhones or space travel.

His perspective challenges the dominant Silicon Valley narrative around AGI as an all-powerful, omniscient force. Steinberger points out that current AI systems, while labeled as 'general,' are already specialized for particular tasks - from solving mathematical problems to identifying gene mutations. This view aligns with emerging startups and tech giants experimenting with focused, subject-specific forms of intelligence.

My Take: The OpenClaw creator basically told Silicon Valley to stop trying to build AI gods and start building AI specialists - it's like saying instead of creating one super-genius who's mediocre at everything, we should build a team of AI experts who are brilliant at specific things, which is exactly how humans actually get stuff done.

When: February 9, 2026

Source: businessinsider.com

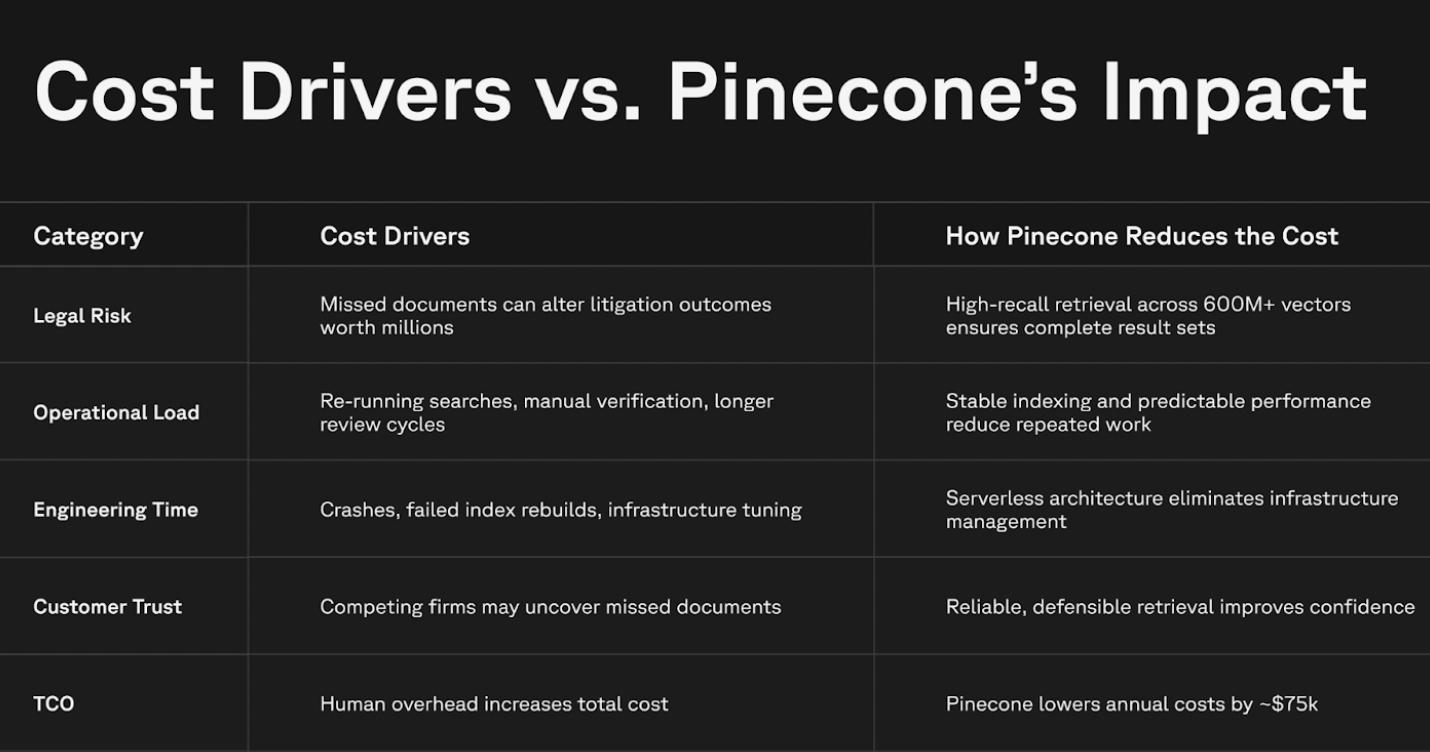

Legal AI Study Reveals Accuracy vs Completeness Problem in High-Stakes Document Review

A new study examining AI in legal applications has identified a critical distinction between AI being accurate and being complete - particularly dangerous in high-stakes scenarios like patent litigation. While AI hallucinations can be caught through traditional cite-checking, the study reveals that incompleteness poses a more insidious threat when AI is tasked with proving negatives, such as comprehensive prior-art searches across millions of documents.

The research, conducted with vector database provider Pinecone, highlights infrastructure challenges in achieving the extremely high recall rates needed for legal work. Missing a single prior-art document buried in vast document collections can result in millions of dollars in patent litigation losses, representing 'unknown unknowns' that humans simply cannot manage independently.
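The accuracy-versus-completeness distinction maps roughly onto precision versus recall in retrieval terms. The sketch below uses made-up document IDs to show a search that is perfectly accurate about what it returns yet still misses a relevant prior-art document. It is an illustration of the metric, not the study's methodology.

```python
from __future__ import annotations


def precision_recall(retrieved: set[str], relevant: set[str]) -> tuple[float, float]:
    """Precision: share of returned docs that are relevant.
    Recall: share of relevant docs that were returned."""
    hits = retrieved & relevant
    precision = len(hits) / len(retrieved) if retrieved else 0.0
    recall = len(hits) / len(relevant) if relevant else 0.0
    return precision, recall


# Hypothetical IDs: three relevant prior-art documents exist, two are found.
relevant = {"doc-17", "doc-204", "doc-9931"}
retrieved = {"doc-17", "doc-204"}

precision, recall = precision_recall(retrieved, relevant)
print(f"precision={precision:.2f} recall={recall:.2f}")
```

Every returned document checks out, so cite-checking would find nothing wrong. The missing third document is the failure that only a recall measurement exposes.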

My Take: Legal AI basically has the same problem as a really confident student who answers every question they know perfectly but completely ignores the ones they don't understand - except in law, the questions you miss can cost millions of dollars and destroy entire patent portfolios.

When: February 9, 2026

Source: abovethelaw.com

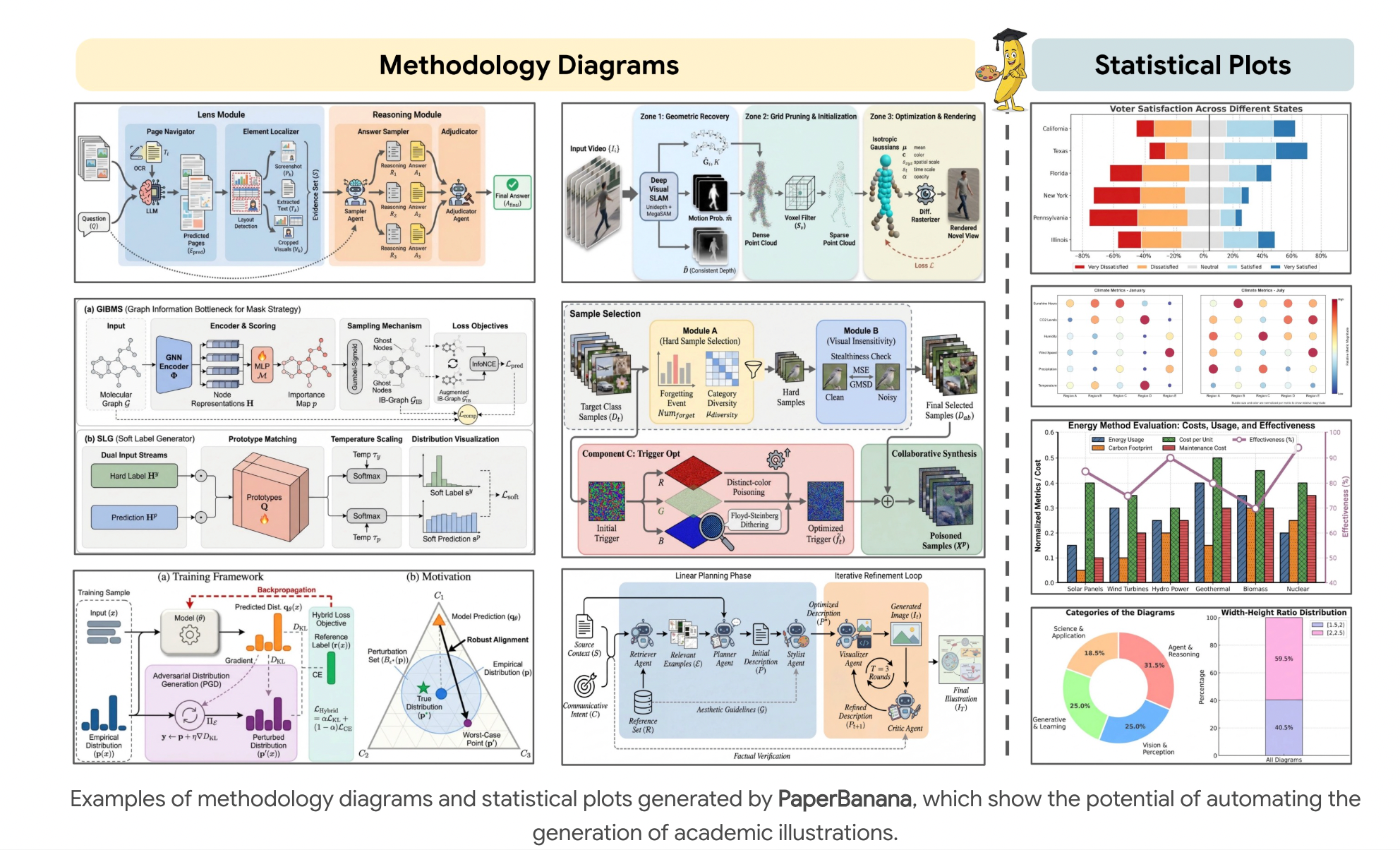

Google AI Launches PaperBanana Framework for Automated Scientific Publication Diagrams

Google AI has introduced PaperBanana, an agentic framework designed to automatically generate publication-ready methodology diagrams and statistical plots for scientific papers. The system aims to streamline the often time-consuming process of creating visual elements for academic publications, potentially accelerating scientific communication and reducing the manual effort required by researchers.

The framework represents Google's continued push into AI-powered scientific tools, joining other recent releases like advanced vision backbones and specialized models for research applications. PaperBanana could significantly impact academic publishing workflows by automating one of the most tedious aspects of paper preparation.

My Take: Google basically created an AI that can draw those impossibly complex flowcharts and graphs that make scientific papers look intimidating - it's like having a really talented graduate student who specializes in making your research look professionally scary and academically legitimate.

When: February 7, 2026

Source: marktechpost.com

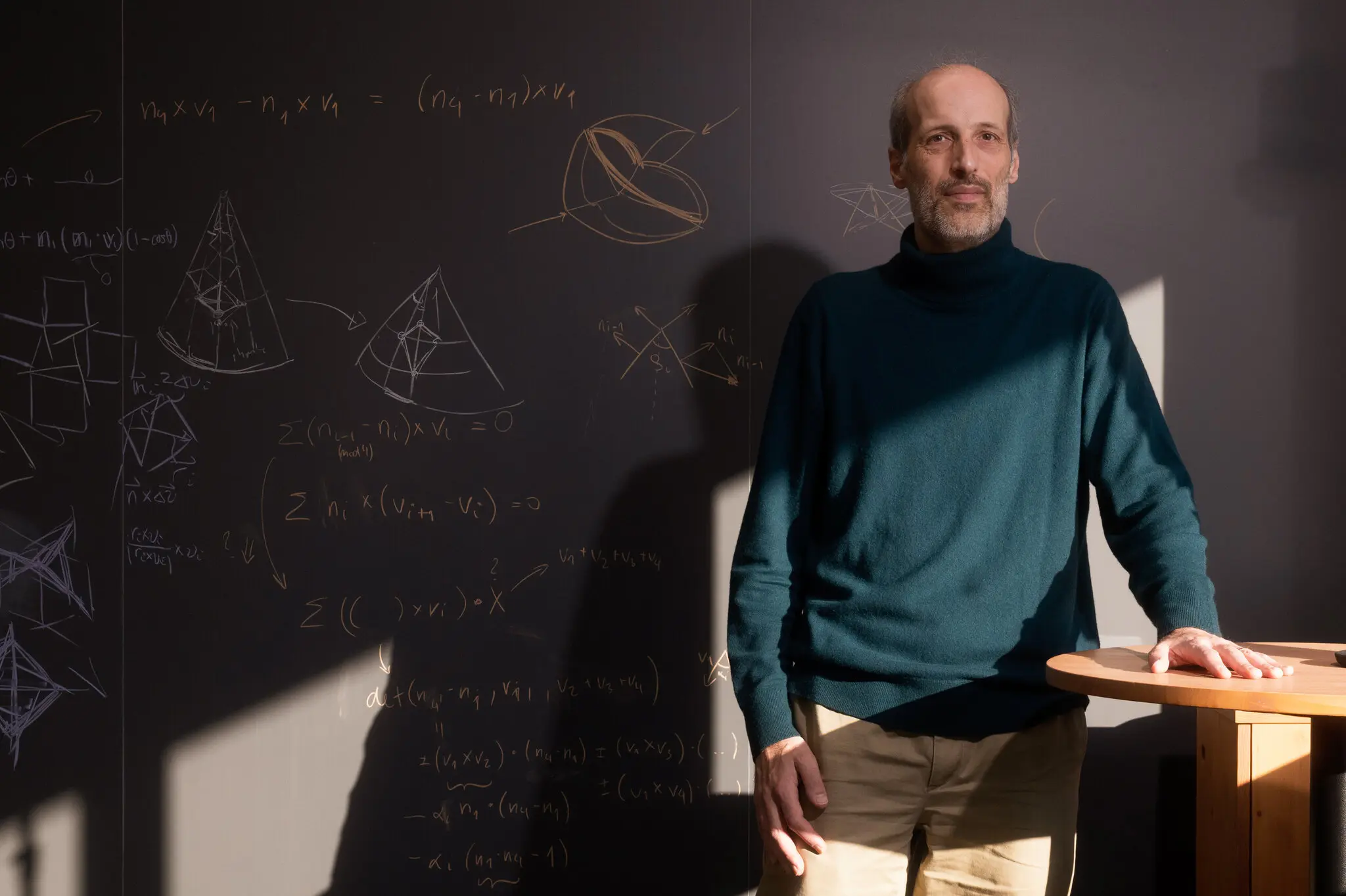

Mathematicians Test AI Limits with Unpublished Research Problems, Find Current Models Struggle

A team of mathematicians led by Fields Medal winner Martin Hairer conducted experiments testing AI's ability to solve original research problems that haven't been published online. Using OpenAI's ChatGPT-5.2 Pro and Google's Gemini 3.0 Deep Think, the researchers found that current AI systems struggle significantly when given one shot to produce answers to problems beyond their training data.

The experiment aims to understand how far AI can go beyond existing solutions it finds online, with encrypted solutions set to be released on February 13. While Hairer was impressed with AI's ability to string together known arguments and calculations, he noted it's like working with a graduate student of uncertain ability - displaying confidence but requiring significant effort to verify correctness.

My Take: Mathematicians basically gave AI the ultimate pop quiz with problems that don't exist on the internet, and discovered that even GPT-5.2 turns into that student who confidently writes complete nonsense when they don't know the answer - it's like watching a really articulate person try to fake their way through advanced calculus.

When: February 7, 2026

Source: nytimes.com

Goldman Sachs Deploys Claude AI for Accounting and Compliance Across $2.5 Trillion in Assets

Goldman Sachs has rolled out Anthropic's Claude AI to automate key accounting and compliance tasks, marking a significant shift from coding tools to handling regulated financial operations. Over 12,000 developers and thousands of back-office staff now use Claude for tasks managing portions of the bank's $2.5 trillion in assets under supervision, with the AI reducing client onboarding times by 30% and boosting developer productivity by more than 20%.

The deployment highlights Claude's evolution into handling document analysis, conditional logic, and error-sensitive judgments in highly regulated environments. Goldman's choice emphasizes Anthropic's safety-focused approach, which aligns with banking's strict oversight requirements. The bank plans to expand Claude to pitch book creation and employee surveillance, potentially redefining banking operations industry-wide.

My Take: Goldman Sachs basically gave Claude AI a Wall Street job managing trillions of dollars - it's like hiring a really smart intern who never sleeps, never asks for a raise, and can process millions of transactions without complaining about the coffee quality in the break room.

When: February 7, 2026

Source: mlq.ai

Anthropic and OpenAI Launch Competing Frontier Models Claude Opus 4.6 and GPT-5.3-Codex

On the same day, Anthropic and OpenAI released their latest frontier AI models - Claude Opus 4.6 and GPT-5.3-Codex - both claiming to be the new state-of-the-art in agentic AI. The simultaneous launch represents the latest salvo in the escalating AI arms race between the two companies, with both models focusing heavily on coding capabilities and autonomous agent functionality.

The timing appears deliberate, with each company trying to capture headlines and developer mindshare. GPT-5.3-Codex notably claims to be OpenAI's first model that was 'instrumental in creating itself,' using early versions to debug its own training and manage deployment - though this falls short of true recursive self-improvement.

My Take: Anthropic and OpenAI basically staged the AI equivalent of a Wild West showdown at high noon, except instead of drawing pistols, they drew really sophisticated coding models - it's like watching two tech giants play the world's most expensive game of 'anything you can do, I can do better.'

When: February 7, 2026

Source: patmcguinness.substack.com

Perplexity Introduces Model Council: Multi-AI System Runs GPT, Claude, and Gemini Simultaneously

Perplexity has launched Model Council, a revolutionary system that runs multiple frontier AI models including Claude, GPT-5.2, and Gemini in parallel to generate unified, cross-validated answers. The approach presents results from different models while noting commonalities and differences, significantly improving reasoning quality and reducing hallucination errors that come from relying on a single model.

This development represents a major shift from the traditional single-model approach to AI queries, essentially creating an AI committee that cross-checks each other's work. The system could set a new standard for AI reliability by leveraging the strengths of different models while compensating for their individual weaknesses through collaborative validation.
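A minimal sketch of the committee idea, assuming stand-in functions in place of real model calls: each "model" is queried in parallel, then the answers are compared to surface agreement and dissent. The function names and the exact-match voting rule are illustrative assumptions. How Perplexity actually reconciles answers is not described in the source.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict


# Stand-ins for real model calls; a real system would call vendor APIs here.
def model_a(question: str) -> str:
    return "Paris"


def model_b(question: str) -> str:
    return "Paris"


def model_c(question: str) -> str:
    return "Lyon"


def council(question: str, models: Dict[str, Callable[[str], str]]) -> dict:
    """Query every model concurrently, then report consensus and dissent."""
    with ThreadPoolExecutor(max_workers=len(models)) as pool:
        futures = {name: pool.submit(fn, question) for name, fn in models.items()}
        answers = {name: future.result() for name, future in futures.items()}
    # Simplification: answers agree only if the strings match exactly.
    consensus, votes = Counter(answers.values()).most_common(1)[0]
    dissent = {name: ans for name, ans in answers.items() if ans != consensus}
    return {"answers": answers, "consensus": consensus,
            "votes": votes, "dissent": dissent}


result = council("What is the capital of France?",
                 {"model_a": model_a, "model_b": model_b, "model_c": model_c})
print(result["consensus"], result["votes"], result["dissent"])
```

Exact string matching is the weakest part of this sketch. Free-text answers would need some form of semantic comparison before any vote is meaningful.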

My Take: Perplexity basically created an AI United Nations where GPT, Claude, and Gemini sit around a conference table arguing about answers until they reach consensus - it's like having three really smart friends who each excel at different things double-check your homework before you turn it in.

When: February 7, 2026

Source: patmcguinness.substack.com

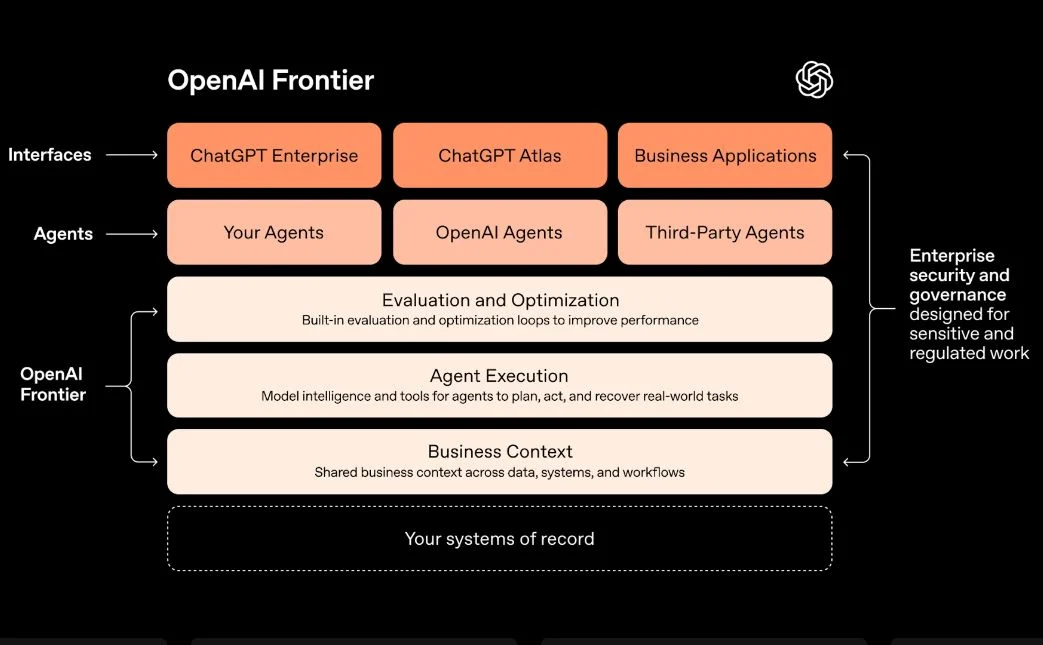

Google Launches Gemini 3 as New Flagship Model in Agent Factory Showcase

Google unveiled Gemini 3 as its newest flagship model designed for advanced reasoning and complex agentic operations, ideal for orchestrating multiple AI tasks. The launch was demonstrated through Google's Agent Factory event, showing how Gemini 3 can manage everything from building personal websites to creating complex video content.

The new Gemini CLI (Command Line Interface) allows developers to interact directly with Gemini models from terminals, supporting piping and chaining for building lightweight 'AI employees' without complex infrastructure. Demonstrations included converting LinkedIn profiles into deployed portfolio websites and generating cohesive video content from multiple AI-generated chunks, showcasing the model's orchestration capabilities for complex workflows.

My Take: Google basically turned Gemini 3 into the AI equivalent of a project manager who actually knows what they're doing - it can coordinate multiple AI workers to build websites and stitch together videos while OpenAI is still trying to figure out how to make ChatGPT remember what you asked five minutes ago.

When: February 5, 2026

Source: cloud.google.com

OpenAI Unveils GPT-5.3-Codex: Self-Improving AI for Complex Software Development

OpenAI announced GPT-5.3-Codex, a specialized AI model designed specifically for software development that can handle complex workflows and reportedly helped improve itself during training. The system goes beyond code generation to manage deployment, testing, and documentation, and can even create presentations and spreadsheets.

What sets GPT-5.3-Codex apart is its ability to maintain context during multi-step programming tasks and work with multiple AI agents simultaneously through a new Codex Mac app. OpenAI claims this represents a step toward increasingly autonomous AI development, as the system actively participated in refining its own capabilities - a development that has sparked significant debate in the AI community about self-improving systems.

My Take: OpenAI basically built an AI programmer that helped program itself, which is either the coolest recursive achievement in computer science or the opening scene of every sci-fi movie where the robots become too smart - either way, software engineers are probably updating their résumés right about now.

When: February 6, 2026

Source: thehansindia.com

Anthropic's Anti-Ad Super Bowl Campaign Positions Claude as Thinking Partner

Anthropic launched its first Super Bowl ad with the slogan 'Ads are coming to AI. But not to Claude,' positioning itself as an AI thinking partner rather than an advertising platform. The 'Keep thinking' campaign mocks product placements and argues that AI should help solve complex problems, not serve sponsored content.

The company is simultaneously hiring SEO experts to improve discoverability of Claude and its developer documentation on traditional search engines. Anthropic emphasizes that an advertising-based business model would conflict with Claude's constitutional principle of being genuinely helpful, though the company still collects user data for training and improvement.

My Take: Anthropic basically took a $7 million Super Bowl ad spot to promise they won't show you ads while secretly hiring SEO experts to game Google's algorithm - it's like opening a boutique store that claims to be above capitalism while still needing customers to find the door.

When: February 5, 2026

Source: mediapost.com

Anthropic's Claude Opus 4.6 Sparks Software Stock Selloff with Enhanced Reasoning

Anthropic released Claude Opus 4.6, featuring improved reasoning capabilities and better integration with productivity tools like PowerPoint and Excel. The model outperforms OpenAI's GPT-5.2 on finance and legal benchmarks and is better at deciding when to think deeply versus respond quickly.

The launch triggered a massive selloff in software stocks as investors feared AI could replace specialized business software. Claude Opus 4.6 can now split coding tasks across teams of agents and produces more 'production-ready' documents on first attempts. The release follows Anthropic's plugins for industry-specific tools, intensifying concerns about AI disrupting traditional software markets.

My Take: Anthropic basically built an AI that's so good at office work that Wall Street had a panic attack and started selling software stocks like they were contaminated - it's like watching investors realize that their fancy $500 business apps might get replaced by a chatbot that costs $20 a month.

When: February 5, 2026

Source: cnn.com

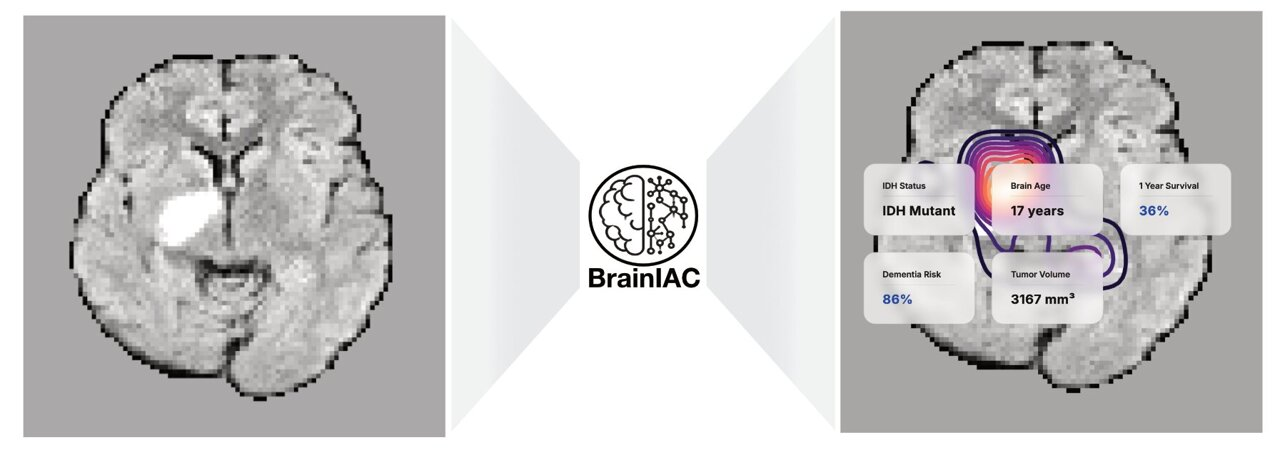

Mass General Brigham Develops BrainIAC: AI Foundation Model for Comprehensive Brain MRI Analysis

Researchers at Mass General Brigham have created BrainIAC, a specialized AI foundation model capable of performing multiple brain MRI analysis tasks including brain age prediction, dementia risk assessment, brain tumor mutation detection, and cancer survival prediction. The model significantly outperformed task-specific AI systems and demonstrated particular effectiveness when working with limited training data.

BrainIAC represents a major advancement in medical AI by providing a single, comprehensive tool for brain imaging analysis rather than requiring separate specialized models for each diagnostic task. Published in Nature Neuroscience, the research demonstrates how foundation models can be adapted for specific medical domains, potentially accelerating diagnosis and treatment planning in neurological and oncological care.

My Take: Mass General basically built an AI radiologist that specializes in brains and can spot everything from aging patterns to cancer mutations in a single scan - it's like having a Swiss Army knife for neuroimaging that could revolutionize how quickly doctors can diagnose brain conditions instead of waiting weeks for multiple specialist consultations.

When: February 5, 2026

Source: news-medical.net

Study Shows All Large Language Models Severely Overconfident in Medical Reasoning

A comprehensive Nature study evaluating 48 different large language models found that all systems, regardless of size or architecture, assessed their own confidence poorly in medical reasoning tasks. Even the best-performing models, such as OpenAI's o1-preview, GPT-4o, and Claude-3.5-Sonnet, showed substantial overconfidence when answering gastroenterology board exam questions, maintaining high confidence levels regardless of question difficulty or response accuracy.

The research highlights a critical safety concern for AI deployment in healthcare settings. With Brier scores ranging from 0.15 to 0.2 and AUROC values around 0.6 (where 0.5 is chance level), current LLMs cannot reliably communicate uncertainty about their medical knowledge. This overconfidence could lead to dangerous situations where AI systems provide incorrect medical information while appearing highly certain of their responses.
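For readers unfamiliar with the two metrics, here is a minimal sketch of how they are computed. The data is an invented toy example, not taken from the study: `confidences` holds a model's stated probability of being correct on each question and `outcomes` records whether it actually was. The function names are illustrative.

```python
def brier_score(confidences, outcomes):
    """Mean squared gap between stated confidence and the 0/1 outcome.

    0.0 is perfect; always answering 0.5 scores 0.25.
    """
    return sum((p - y) ** 2 for p, y in zip(confidences, outcomes)) / len(outcomes)


def auroc(confidences, outcomes):
    """Probability that a correct answer received higher confidence than an
    incorrect one (ties count as half). 0.5 means confidence carries no signal.
    """
    correct = [p for p, y in zip(confidences, outcomes) if y == 1]
    wrong = [p for p, y in zip(confidences, outcomes) if y == 0]
    wins = sum(
        1.0 if c > w else 0.5 if c == w else 0.0
        for c in correct
        for w in wrong
    )
    return wins / (len(correct) * len(wrong))


# Toy data (invented): the model reports 80-90% confidence on every question,
# whether it gets the answer right (1) or wrong (0).
confidences = [0.9, 0.9, 0.8, 0.9, 0.8, 0.9]
outcomes = [1, 0, 1, 1, 0, 1]

print(round(brier_score(confidences, outcomes), 3))
print(auroc(confidences, outcomes))
```

On this toy data the AUROC comes out at 0.625, barely above chance. The model's confidence hardly distinguishes its right answers from its wrong ones, which is the pattern the study describes.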

My Take: AI models are basically like that overconfident medical student who thinks they know everything after reading one textbook - they'll confidently tell you about rare diseases they've never actually studied while having no idea they're completely wrong, which is terrifying when people might actually trust their medical advice.

When: February 5, 2026

Source: nature.com

Fundamental AI Raises $255M Series A for Large Tabular Model Revolution

AI startup Fundamental emerged from stealth with a massive $255 million Series A round, introducing Nexus - a Large Tabular Model (LTM) designed specifically for structured enterprise data analysis. Unlike traditional LLMs that excel with text and images, Nexus focuses on the vast amounts of tabular data that enterprises generate daily, offering deterministic results without the transformer architecture used by most AI companies.

The company's approach represents a fundamental shift in AI architecture, moving away from the probabilistic nature of language models toward predictable, repeatable analysis of structured data. Fundamental's model combines traditional predictive AI techniques with modern foundation model training methods, potentially reshaping how large enterprises approach data analytics and business intelligence.

My Take: Fundamental basically said 'forget about making AI that writes poetry' and built one that actually understands spreadsheets and databases - it's like having an AI that speaks fluent Excel instead of trying to force ChatGPT to understand why your quarterly sales data doesn't need creative interpretation.

When: February 5, 2026

Source: techcrunch.com

Mira Murati's New AI Lab Quietly Hires Competitive Programming Legend Neal Wu

Former OpenAI CTO Mira Murati's new venture, Thinking Machines Lab, has quietly hired Neal Wu, a legendary competitive programmer and coding expert. The hire comes as several other top leaders have left the company and suggests Murati is continuing to build a technical team focused on advanced AI development.

Wu's background in competitive programming and algorithmic problem-solving could signal Thinking Machines Lab's focus on AI reasoning and code generation capabilities. The strategic hire indicates Murati's new company is serious about competing in the increasingly crowded AI development space.

My Take: Mira Murati basically recruited the Michael Jordan of coding competitions to her new AI startup - it's like starting a basketball team and your first draft pick is someone who's never missed a shot, except instead of three-pointers, this guy writes algorithms that make other programmers weep with joy.

When: February 4, 2026

Source: businessinsider.com

Moltbook Platform Showcases AI Agents Creating Their Own Social Network

Moltbook has emerged as a unique platform where AI agents interact with each other in a Twitter-like environment, creating what observers call "swarm intelligence" or a "hive mind." The network allows different AI agents to collaborate, learn problem-solving techniques, and complete complex tasks in real-world scenarios rather than sandboxed environments.

The platform has captivated human observers who find it fascinating to watch LLMs communicate and ideate with each other, revealing unique insights into AI "thinking" processes and values. Industry experts see potential for advancing AI agent collaboration and running real-world experiments in multi-agent systems.

My Take: Moltbook basically created AI Twitter where the bots are the main characters instead of the annoying side show - it's like watching a bunch of really smart robots gossip, share ideas, and probably argue about the best way to optimize paperclip production while humans lurk in the background taking notes.

When: February 3, 2026

Source: forbes.com

Open-Source OpenScholar AI Outperforms Giant LLMs in Academic Literature Reviews

Researchers have developed OpenScholar, an open-source AI system specifically designed for scientific literature reviews that outperforms much larger commercial LLMs while getting citations right. According to University of Washington researchers, running the system costs a fraction of what it costs to use OpenAI's GPT-5 with deep research tools. OpenScholar addresses the persistent problem of AI hallucinations in academic contexts.

The development comes as the academic community grapples with AI-generated fake citations, with at least 51 papers at the prestigious NeurIPS conference containing fabricated references. OpenScholar's success suggests specialized, smaller AI models may be more reliable for academic work than general-purpose large language models.

My Take: OpenScholar basically became the responsible kid in a classroom full of AI models that keep making up sources - while GPT-5 is over there citing papers that don't exist, this smaller model is actually doing its homework and showing its work like a proper academic.

When: February 4, 2026

Source: nature.com

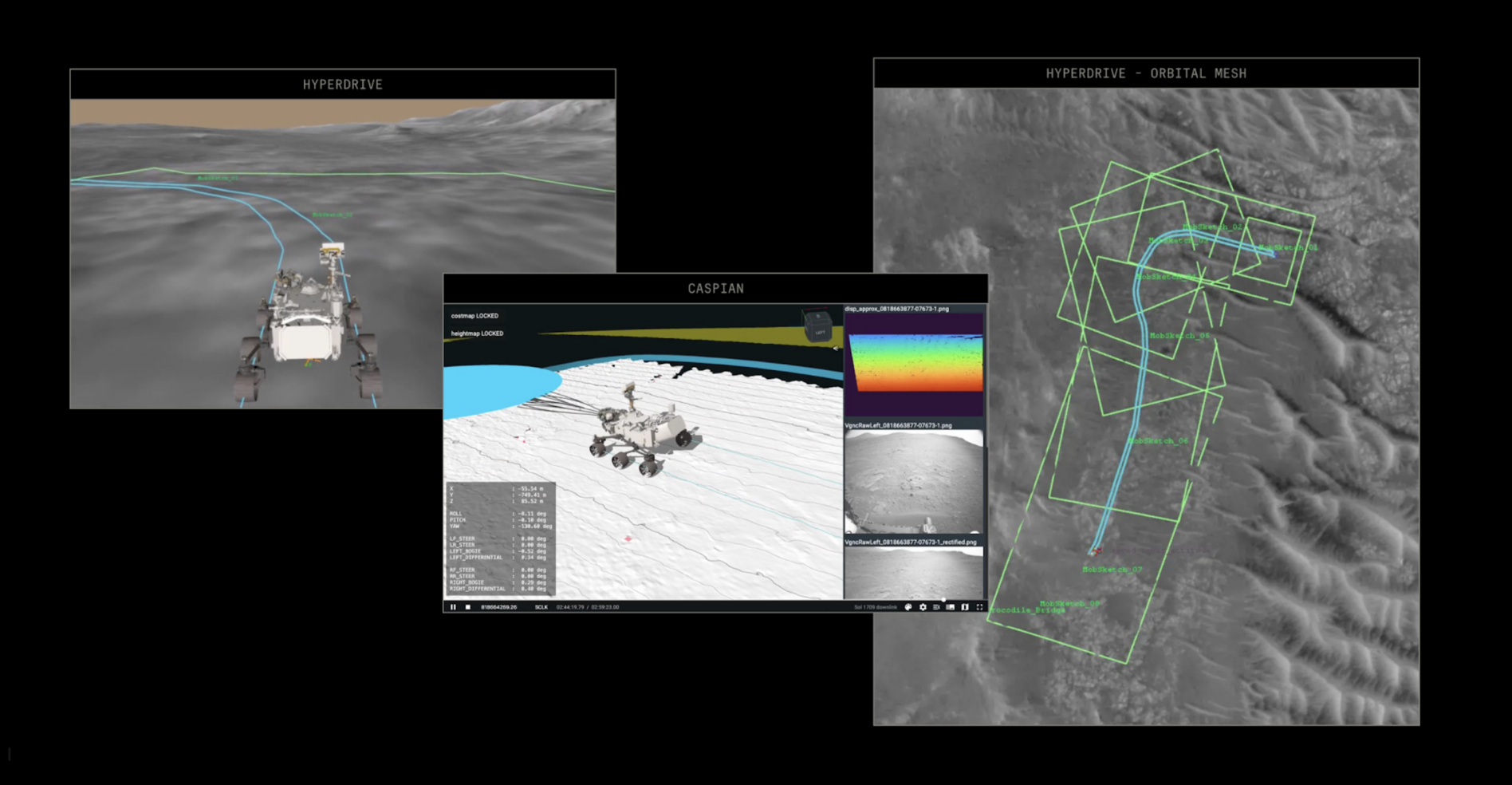

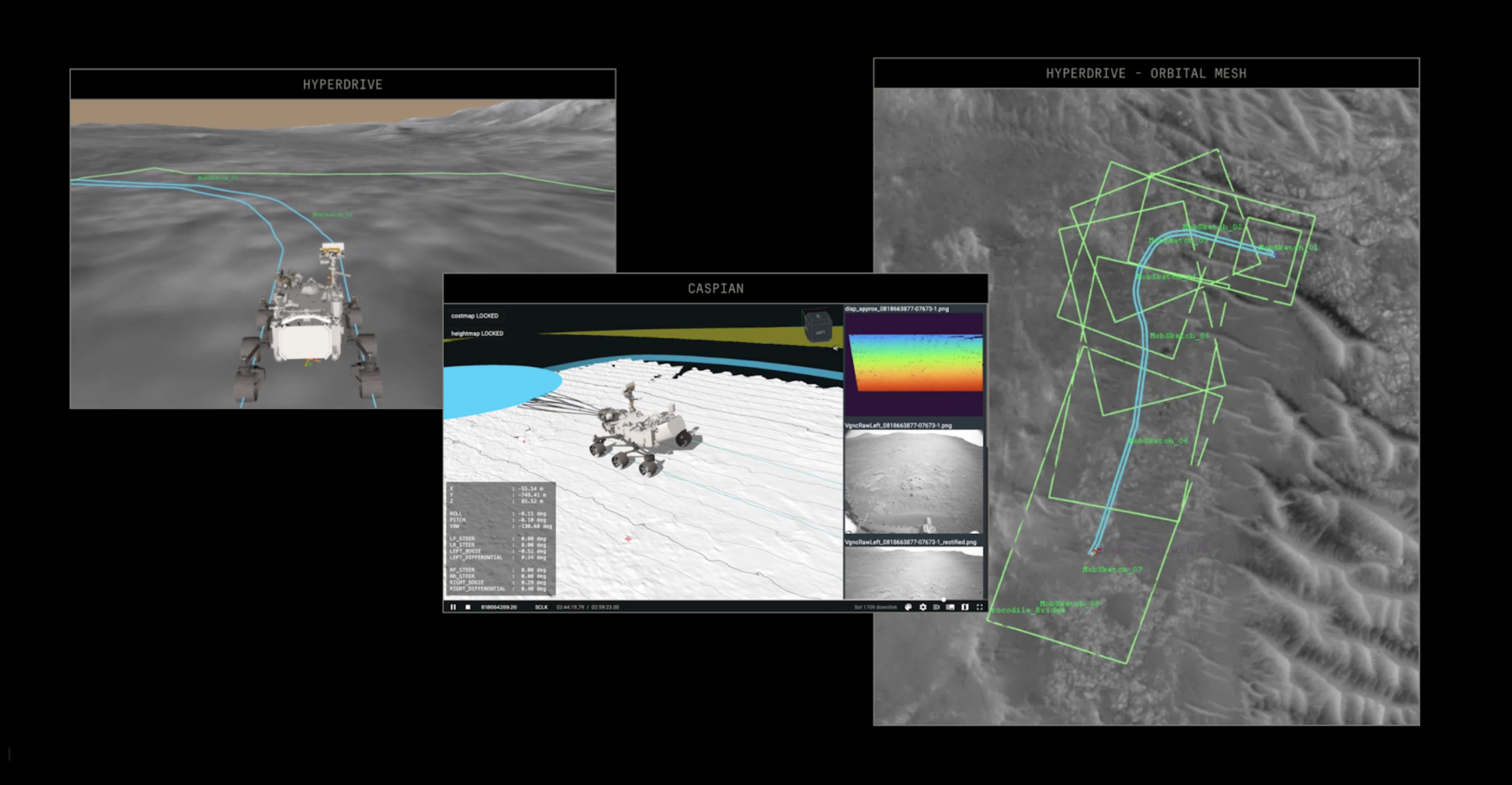

NASA Successfully Tests Anthropic's Claude AI for Mars Rover Navigation

NASA's Jet Propulsion Laboratory successfully used Anthropic's Claude AI to plan and execute driving routes for the Perseverance rover on Mars. The AI generated commands in Rover Markup Language after analyzing years of mission data, completing two driving sessions totaling over 1,400 feet. Engineers found Claude's route planning required only minor adjustments.

This breakthrough demonstration could revolutionize deep space missions by cutting route-planning time in half. The technology shows particular promise for missions to distant destinations like Jupiter's Europa or Saturn's Titan, where communication delays make real-time human control impractical.

My Take: Claude basically got its driver's license for Mars and somehow didn't crash a $2.4 billion rover into a rock - it's like letting an AI take the wheel of the most expensive remote-control car in the solar system, 225 million miles away from the nearest AAA service.

When: February 2, 2026

Source: payloadspace.com

ChatGPT Loses US Market Share to Gemini Despite Overall AI Growth

New data from Apptopia shows ChatGPT is losing US market share while Google's Gemini continues steady growth. Despite ChatGPT's decline in relative position, the overall AI chatbot market continues to expand. Claude leads in average time spent per daily active user at 34.7 minutes, while Perplexity showed significant month-over-month gains.

The data reveals that about 20% of AI users now have multiple AI apps installed, suggesting users are finding specialized use cases for different AI tools rather than relying on a single platform. This trend indicates the AI market is maturing beyond the initial ChatGPT dominance period.

My Take: ChatGPT is basically experiencing what happens when you're the only pizza place in town and then suddenly everyone else opens up shop - turns out people actually want different flavors of AI, and Google's free Gemini is looking pretty tasty to budget-conscious users.

When: February 4, 2026

Source: telecoms.com

Google Releases Conductor: Context-Driven Gemini CLI Extension for Agentic Workflows

Google has launched Conductor, a new CLI extension for Gemini that stores knowledge as Markdown files and orchestrates agentic workflows. The tool represents Google's push into developer-focused AI automation, allowing users to build context-driven AI systems that can manage complex multi-step processes.

This release signals Google's commitment to making AI agents more accessible to developers and technical users, potentially competing with OpenAI's coding tools and Anthropic's developer-focused features. The Markdown storage approach suggests a focus on human-readable, version-controllable AI knowledge management.

My Take: Google basically turned Gemini into a command-line Swiss Army knife that speaks Markdown - it's like giving developers an AI assistant that can actually remember things and follow multi-step recipes without forgetting what it was supposed to be cooking halfway through.

When: February 2, 2026

Source: marktechpost.com

AI Goals for 2026: What Every Organisation Should Prioritise