Last updated: March 31, 2026

Updated constantly.


March opens with the AI industry shifting focus from capability races to deployment reality. The benchmark wars of early 2026 have given way to harder questions: can these systems perform reliably in production, and do the business models actually hold up?

February saw monetization strategies crystallize — subscription tiers, revised API pricing, and enterprise deals signaling that labs are serious about building durable businesses. Agentic systems moved further into real workflows, though reliability and trust remain the critical unsolved problems between prototypes and widespread adoption.

As March unfolds, expect continued pressure on labs to demonstrate sustainable economics, open-weight models closing the gap with frontier systems, and the first honest post-mortems on agentic deployments that have been running long enough to reveal their real failure modes. We will continue tracking developments closely and publishing the most important AI news on this page.

AI news, Major Product Launches & Model Releases

Self-Driving Networks Automate Enterprise Network Operations

Enterprise networking vendors and operators are adopting self-driving networks: infrastructure that embeds AI to detect, reason, and act autonomously. The coverage highlights platforms such as HPE Mist AI and GreenLake Intelligence, which combine machine learning, generative agents, and closed-loop automation.

These AI-powered systems can predict and fix network issues automatically, reducing operational overhead and improving stability for hospitals, retail locations, and campuses. The self-driving network approach represents a significant evolution in enterprise IT infrastructure management, moving from reactive maintenance to predictive and autonomous operation.

My Take: Enterprise networks basically learned to drive themselves like Tesla cars, except instead of avoiding traffic accidents they're preventing WiFi outages - it's like having a really paranoid IT administrator who never sleeps and can fix problems before anyone even knows they exist.

When: March 30, 2026

Source: letsdatascience.com

IAPP GS Day One: OpenAI, Anthropic Attorneys Delve Into the Privacy-Safety Tradeoff in AI

Legal representatives from OpenAI and Anthropic participated in discussions at the International Association of Privacy Professionals Global Summit, focusing on the complex balance between privacy protection and AI safety requirements. The attorneys explored how leading AI companies navigate competing demands for data protection while ensuring AI systems remain safe and beneficial.

The discussion highlighted ongoing tensions in AI development where privacy-preserving techniques may sometimes conflict with safety monitoring needs. Representatives from both major AI companies provided insights into how the industry is working to resolve these fundamental challenges in responsible AI development.

My Take: OpenAI and Anthropic lawyers basically had to explain how they're trying to build AI that's both completely private and completely transparent at the same time - it's like trying to design a house that's simultaneously invisible and made of glass, which explains why they need really good lawyers.

When: March 30, 2026

Source: law.com

Power Users Get Real About AI's Role At Work

Legal professionals who are heavy AI users are providing candid assessments of how artificial intelligence is actually impacting their daily work practices. These power users offer insights into the practical realities of AI integration beyond the hype, showing both benefits and limitations of current AI tools in professional legal settings.

The experiences of these early adopters reveal patterns in how AI is most effectively deployed in legal work, providing guidance for broader adoption across the profession. Their real-world usage data helps distinguish between AI's theoretical capabilities and its practical applications in demanding professional environments.

My Take: Legal power users basically became the test pilots of AI integration, discovering that AI is less like having a robot assistant and more like having a really smart intern who occasionally needs supervision but can handle way more work than anyone expected.

When: March 31, 2026

Source: law360.com

Threat Or Opportunity: Junior Attys Face The AI Future Now

Early-career and senior attorneys alike believe artificial intelligence could take over responsibilities usually handled by junior lawyers, according to a survey, and some early-career legal professionals are concerned about their job prospects. The results show widespread agreement across experience levels that AI capabilities are advancing rapidly enough to automate traditional entry-level legal work.

This shift represents a fundamental challenge for legal education and career development, as traditional pathways for gaining experience may be disrupted by AI automation. The legal profession is grappling with how to train new attorneys when many traditional learning opportunities may be handled by AI systems instead of junior staff.

My Take: Junior attorneys are basically watching AI eat their homework before they even get to turn it in - it's like training to be a chef only to discover that robots have already perfected chopping vegetables and making basic sauces, leaving you wondering what's left to learn.

When: March 31, 2026

Source: law360.com

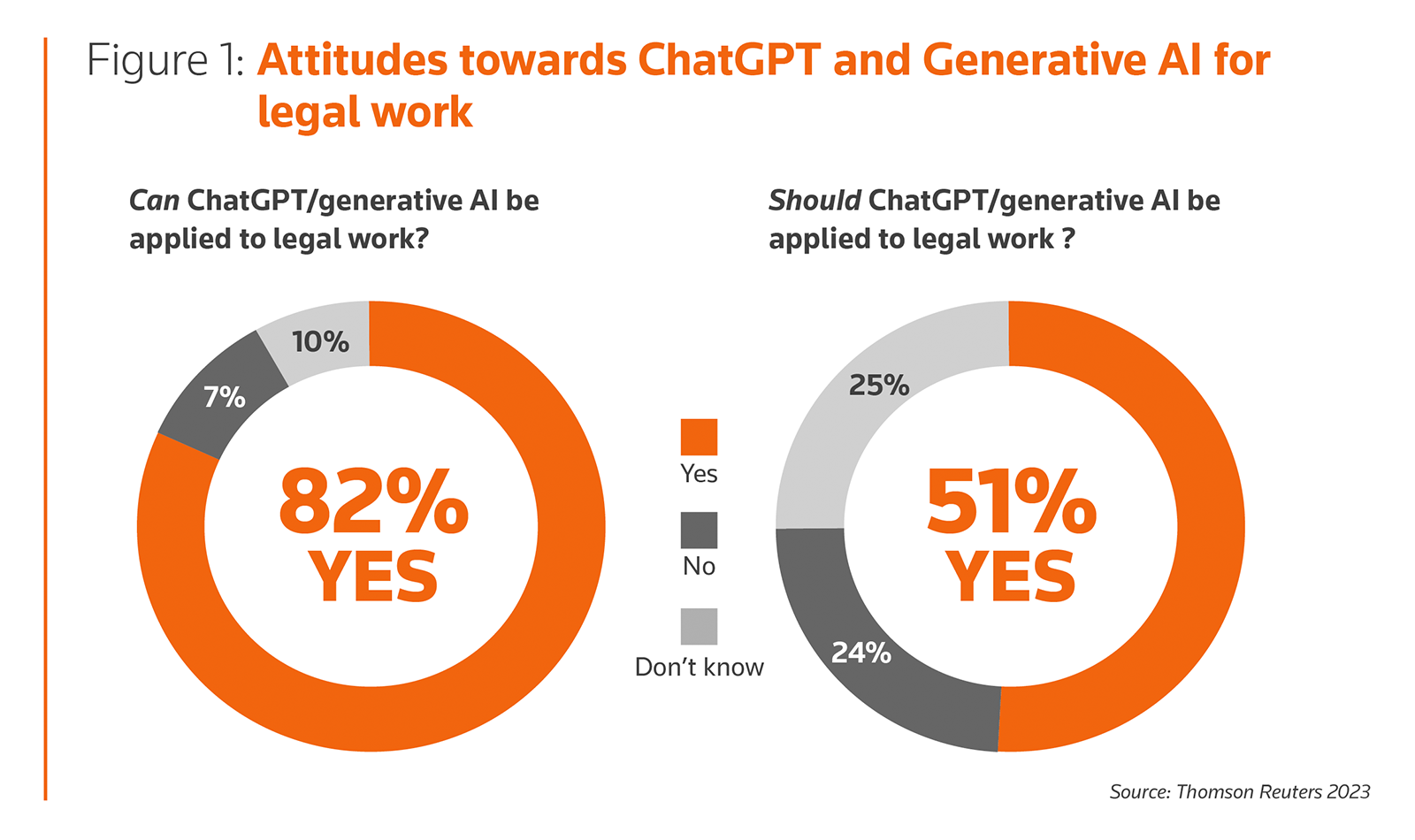

What Attorneys Really Think About AI

Seventy percent of attorneys at law firms report using artificial intelligence at least once a week as part of their jobs, representing a sharp increase from 2025 according to the latest survey. This dramatic rise in AI adoption among legal professionals reflects the growing integration of AI tools into daily legal practice.

The survey results indicate a significant shift in the legal profession's relationship with AI technology, moving from experimental use to regular integration into workflow processes. This trend suggests AI is becoming a standard tool in legal practice rather than a novelty, fundamentally changing how attorneys approach their work.

My Take: Attorneys went from being skeptical about AI to using it more than they check their email - it's like watching the most cautious profession suddenly embrace the technology equivalent of a really smart paralegal who never sleeps and doesn't bill by the hour.

When: March 31, 2026

Source: law360.com

For the First Time, ChatGPT Has Solved an Unproven Math Problem in Geometry

Researchers at the Free University of Brussels documented that ChatGPT-5.2 (Thinking) independently developed original mathematical proofs for previously unproven problems in geometry. The final proof emerged from seven chat sessions with ChatGPT and four evolving versions of the argument, with the model playing a key role in exploring possible approaches while human researchers ensured logical correctness.

This represents a significant step for AI in theoretical research, moving beyond supporting coding or writing tasks to contributing to original mathematical discoveries. The researchers note that while formulating candidate proofs can now be much faster with AI assistance, human verification remains a bottleneck, though language models will likely help with that process too in the future.

My Take: ChatGPT basically just joined the ranks of professional mathematicians by solving problems that have stumped humans - it's like watching your calculator suddenly start writing poetry, except instead of rhymes it's discovering new geometric theorems that could reshape how we understand mathematical relationships.

When: March 30, 2026

Source: scitechdaily.com

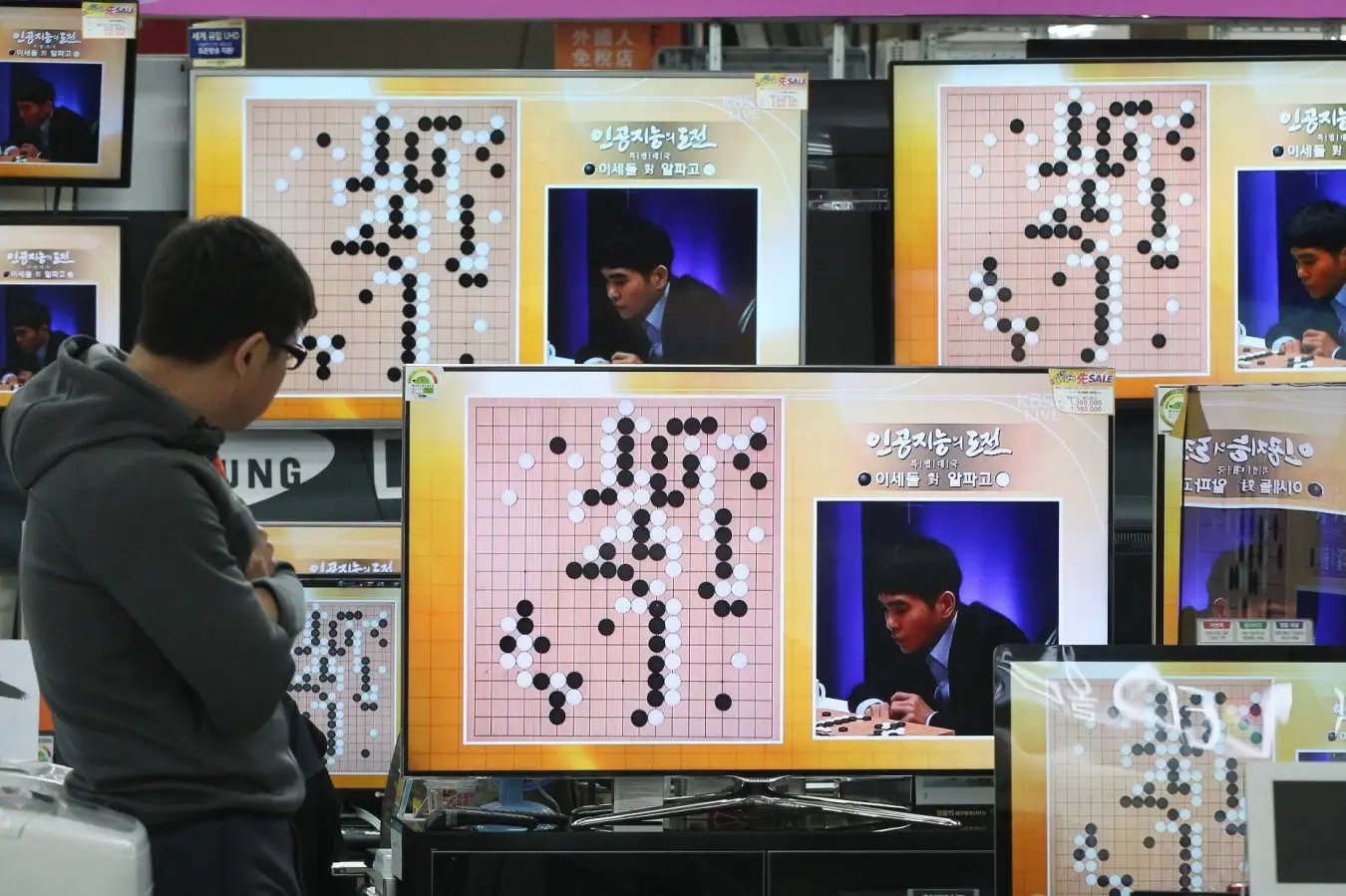

AlphaGo Shapes Modern AI Reasoning Breakthroughs

Marking the tenth anniversary of AlphaGo's 2016 4–1 victory over Lee Sedol, a new analysis reveals how DeepMind's dual-model, reinforcement-learning approach transformed modern AI research. The article demonstrates that AlphaGo's self-play, evaluation loops, and 'more time' planning dimension directly influenced contemporary reasoning models used by OpenAI, DeepMind, and Anthropic.

The piece credibly links AlphaGo's dual-model and self-play methods to current reasoning-model advances, showing how the breakthrough Go-playing AI established foundational techniques that continue to shape today's most advanced AI systems. Expert quotes and analysis provide practical implications for researchers and practitioners working on modern AI reasoning capabilities.

My Take: AlphaGo basically wrote the playbook for modern AI by proving that teaching AI to argue with itself produces better results - it's like discovering that the best way to get smart is to have really intense debates in your own head, which explains why all the latest AI models are essentially very sophisticated versions of that.

When: March 30, 2026

Source: letsdatascience.com

Silent Drift: How LLMs Are Quietly Breaking Organizational Access Control

Organizations are increasingly using large language models (LLMs) to help produce policy-as-code for security, compliance, and operational rules, but this efficiency gain comes with serious risks. An LLM can write complex Rego and Cedar code in seconds, but a single missing condition or hallucinated attribute can quietly dismantle an organization's least-privilege security model.

Experts warn that organizations need to treat authorization logic as a high-risk domain, emphasizing that 'almost correct' isn't good enough in authorization systems. As businesses move toward AI-assisted security engineering, the goal should be correctness, auditability, and trust rather than just automation, since policy flaws introduced by AI can create significant security vulnerabilities.
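To make the "single missing condition" risk concrete, here is a minimal sketch in Python rather than Rego or Cedar. The `Request` fields, the two policy functions, and the test cases are all hypothetical and not taken from the SecurityWeek piece. The sketch shows how dropping one clause widens access, and how a table of explicit deny cases can catch the drift.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Request:
    role: str           # hypothetical caller role, e.g. "admin" or "janitor"
    department: str     # department the caller belongs to
    resource_dept: str  # department that owns the resource
    action: str         # e.g. "read" or "delete"


def allow_intended(req: Request) -> bool:
    """Intended rule: admins may delete, but only inside their own department.

    Everything else is denied by default in this sketch.
    """
    return (
        req.action == "delete"
        and req.role == "admin"
        and req.department == req.resource_dept
    )


def allow_drifted(req: Request) -> bool:
    """The same rule with the department condition silently dropped."""
    return req.action == "delete" and req.role == "admin"


# Deny cases matter as much as allow cases: each one pins down a condition.
CASES = [
    (Request("admin", "finance", "finance", "delete"), True),
    (Request("admin", "finance", "hr", "delete"), False),  # cross-department
    (Request("janitor", "finance", "finance", "delete"), False),
    (Request("admin", "finance", "finance", "read"), False),
]


def failing_cases(policy) -> list[Request]:
    """Return every request where the policy disagrees with the expected decision."""
    return [req for req, expected in CASES if policy(req) != expected]
```

Calling `failing_cases(allow_drifted)` returns only the cross-department request. That is the point of the warning: the drifted policy still passes every "happy path" check and fails only on a deny case that someone had to think to write down.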

My Take: LLMs are basically like that overconfident intern who can write code really fast but occasionally forgets crucial details that could accidentally give the janitor admin access to your entire company - it's the digital equivalent of leaving your house key under a rock because you were in a hurry.

When: March 30, 2026

Source: securityweek.com

Microsoft Made GPT and Claude Work Together—And the Result Beats Every AI Research Tool Out There

Microsoft released two new features for Copilot's Researcher tool, Critique and Council, that put OpenAI's GPT and Anthropic's Claude to work on the same research task. The multi-model features let the models either collaborate or work in parallel while a separate judge flags discrepancies; Microsoft says the result scores higher than competing systems on industry benchmarks.

Critique makes the models collaborate directly, while Council makes them work in parallel with oversight. According to Microsoft's testing, this two-model workflow fixes hallucinations, weak citations, and other problems associated with single-model AI research. The approach represents a shift from companies trying to convince users their single AI model is the smartest researcher, to leveraging multiple models for better results.
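The two patterns described here, sequential review and parallel answers compared by a judge, can be sketched generically. This is not Microsoft's implementation: the `Model` type, the stand-in models, and the toy judge below are assumptions made only so the example runs without any API access.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable

Model = Callable[[str], str]  # hypothetical interface: prompt in, text out


def critique(drafter: Model, reviewer: Model, task: str) -> str:
    """Sequential pattern: one model drafts, the other reviews the draft."""
    draft = drafter(task)
    return reviewer(f"Review and correct this draft:\n{draft}")


def council(members: list[Model], judge: Callable[[list[str]], str], task: str) -> str:
    """Parallel pattern: every member answers independently, then a judge compares."""
    with ThreadPoolExecutor() as pool:
        # pool.map preserves the order of `members` in the results.
        answers = list(pool.map(lambda m: m(task), members))
    return judge(answers)


# Stand-in models that just tag the prompt with their own label.
def model_a(prompt: str) -> str:
    return f"A:{prompt}"


def model_b(prompt: str) -> str:
    return f"B:{prompt}"


def simple_judge(answers: list[str]) -> str:
    """Toy judge: report whether the independent answers agree."""
    return "agree" if len(set(answers)) == 1 else "discrepancy"
```

In the sequential pattern the reviewer sees the drafter's output. In the parallel pattern the members never see each other's answers, so any disagreement the judge finds reflects independent work.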

My Take: Microsoft basically said 'why choose sides in the AI war when you can make them all work together' - it's like being the Switzerland of AI research tools, except instead of staying neutral, they're actively making GPT and Claude collaborate like reluctant coworkers who end up being really good at their job when forced to work together.

When: March 30, 2026

Source: decrypt.co

Accenture and Anthropic Team to Help Organizations Secure, Scale AI-Driven Cybersecurity Operations

Accenture has launched Cyber.AI, a new solution powered by Claude, Anthropic's AI model, that enables organizations to transform their security operations from human-speed response to continuous AI-driven cyber capabilities. The solution was announced at RSA 2026 and represents a major partnership between the consulting giant and the AI company.

Accenture has already deployed Cyber.AI within its own global IT infrastructure to secure 1,600 applications and over 500,000 APIs, resulting in significant improvements in operational efficiency and risk reduction. The partnership aims to help clients modernize their defense operations through purpose-built, on-demand agentic AI security, fundamentally reshaping how cybersecurity teams operate at machine speed and scale.

My Take: Accenture basically turned Claude into a cybersecurity bouncer that never sleeps - instead of humans frantically clicking through security alerts at 3 AM, AI agents are now handling threats at machine speed while the security team actually gets to go home for dinner.

When: March 25, 2026

Source: markets.ft.com

Google Gemini Adds Tool to Make It Easier to Switch From ChatGPT

Google released new tools for its Gemini artificial intelligence assistant that will let users upload chat history and context from other AI apps, specifically targeting users of OpenAI's ChatGPT and Anthropic's Claude. The Alphabet company has added a new import option in Gemini so that free and paid users can upload zipped files of their conversations with other AI providers.

The move represents Google's aggressive push to increase Gemini's user base by removing friction for users wanting to switch from competing AI assistants. By allowing users to transfer their chat histories and personal context, Google is making it significantly easier to adopt Gemini without losing the investment users have made in training other AI assistants on their preferences and needs.

My Take: Google basically created AI relationship counseling - they're telling ChatGPT users 'bring all your emotional baggage and conversation history, we'll take you just as you are' which is either really sweet or the most passive-aggressive business strategy ever.

When: March 26, 2026

Source: bloomberg.com

iOS 27: Apple will reportedly let Claude and other AI chatbot apps integrate with Siri

Apple is reportedly planning to add an Extensions system to Siri in iOS 27 that will allow AI chatbots like Claude, ChatGPT, and others to integrate directly with Apple's voice assistant. Rather than making individual deals with AI providers, Apple is creating a framework that will let AI chatbot apps update to take advantage of the new Siri integration.

This doesn't change Apple's existing arrangement with Google where Apple uses Gemini AI models to power Apple Intelligence and certain Siri features. The Extensions system represents a different approach - allowing third-party AI assistants to work alongside Siri rather than replacing its core functionality. Apple is also reportedly testing a standalone Siri app and planning to make Siri work more like an AI chatbot in iOS 27.

My Take: Apple basically decided to turn Siri into the ultimate AI wingman - instead of jealously guarding their assistant, they're letting Claude and ChatGPT join the party, which is like the popular kid finally admitting they need help with their homework.

When: March 26, 2026

Source: 9to5mac.com

Deepfake X-rays are so real even doctors can't tell the difference

A new study published in Radiology shows that both radiologists and multimodal large language models (LLMs) have difficulty distinguishing real X-rays from AI-generated "deepfake" images. The study examined 264 X-ray images split evenly between real scans and AI-generated ones, revealing that radiologists could only identify AI-generated X-rays 41% of the time when not told fake images were present.

When radiologists were specifically told that AI-generated images were included in the test set, their accuracy improved but still varied significantly depending on the type of X-ray. For chest X-rays generated by RoentGen, radiologists achieved accuracy rates between 62% and 78%, while AI models ranged from 52% to 89%. The study raises important concerns about the potential for medical imaging fraud and the need for robust detection systems.

My Take: AI basically became so good at medical forgery that even doctors with years of training can't spot the fakes - it's like having a master art forger who's so skilled that museum curators are scratching their heads, except this time it's X-rays instead of Picassos.

When: March 26, 2026

Source: sciencedaily.com

Xaira's First Virtual Cell Model Is Largest To-Date, Toward Complex Biology

Billion-dollar-backed AI drug developer Xaira Therapeutics has released the largest virtual cell model to date, designed to predict how cells respond to genetic perturbations in unseen biological contexts. The model, called X-Cell, was trained using what the company describes as "the largest genome-wide CRISPRi Perturb-seq dataset ever reported," composed of 25.6 million cells across seven screens and 16 biological contexts.

X-Cell uses a diffusion language model architecture that iteratively refines its predictions by replacing control gene expression values with perturbed values, contrasting with previous autoregressive approaches. While the virtual cell model represents a significant advance toward understanding biology and generalizing to new contexts, the company acknowledges that predicting patient outcomes is still a step away from current capabilities.

My Take: Xaira basically built a billion-dollar cellular crystal ball that can predict what happens when you mess with genes - it's like having a really sophisticated biological simulator that costs more than most countries' GDP, but hey, at least we're one step closer to understanding why cells do weird things.

When: March 25, 2026

Source: genengnews.com

Galtea raises $3.2M to help enterprises test AI agents

Galtea, a Barcelona Supercomputing Center spin-off founded eighteen months ago, has raised $3.2M to help enterprises test AI agents before they go live. The company uses AI to generate realistic test scenarios that expose failures, hallucinations, bias, and security risks in enterprise AI agents, addressing the growing gap between AI that works in demos and AI that works in production.

The round was led by 42CAP, the Munich-based early-stage technology fund, with participation from Mozilla Ventures and existing investors. Galtea's core product generates test cases and synthetic user simulations from descriptions of how an AI agent is intended to behave. For European enterprises building AI products without systematic testing infrastructure, new AI regulations have created urgency around proper testing and validation.

My Take: Galtea basically created a stress test gym for AI agents - instead of letting companies discover their AI is completely bonkers after it's already talking to customers, they're putting these digital employees through boot camp to weed out the ones that would embarrass everyone at the company picnic.

When: March 25, 2026

Source: thenextweb.com

IndustrialMind.ai Announces AI Deployment at ANDRITZ to Augment Engineering Capabilities for Hydraulic Equipment Parts

ANDRITZ, a global engineering and technology group, has selected IndustrialMind.ai to augment engineering capabilities across its manufacturing workflows. The AI deployment covers drawing review, bill of materials (BOM) generation, and root cause analysis, helping the company turn engineering know-how into standardized, reusable digital workflows that scale across teams.

As industrial products and production environments become more data-rich and engineering-intensive, ANDRITZ is investing in AI technologies that help teams work faster and more consistently without compromising quality. The AI platform helps analyze complex engineering drawings against internal standards and manufacturing requirements, with IndustrialMind.ai positioning itself as building the AI manufacturing engineer for factories worldwide.

My Take: ANDRITZ basically hired an AI engineering intern that never gets tired, never makes coffee stains on blueprints, and can review technical drawings faster than a human can say 'hydraulic equipment' - it's like having a really smart robot that actually shows up to work on Monday mornings.

When: March 26, 2026

Source: markets.businessinsider.com

Consumers Choose ChatGPT For Direct Purchases

Kantar's consumer research reveals that one-third of respondents would buy directly through ChatGPT and other generative AI platforms rather than visit a retailer's website. The UK-focused survey also found that one in four consumers has asked AI for product recommendations, while 15% assume that brands the AI does not mention aren't suitable for their needs.

The research identified four distinct AI-adoption groups among consumers, signaling that marketers must adapt their presence and trust strategies for AI-mediated commerce. This shift suggests a fundamental change in how consumers discover and purchase products, with AI platforms potentially becoming intermediaries between brands and customers, bypassing traditional e-commerce websites.

My Take: One-third of consumers basically decided they'd rather shop by having a conversation with an AI than clicking through endless product pages - it's like having a really knowledgeable sales assistant who never judges you for buying weird stuff at 2 AM and actually remembers what you like.

When: March 26, 2026

Source: letsdatascience.com

Survey Reveals Manufacturing's AI Vision Priorities Shift from Accuracy to Ease of Use

A comprehensive survey of 500 manufacturers shows a fundamental shift in AI vision system priorities, with ease of use now outweighing pure accuracy concerns. The research reveals that 57% of manufacturers already use AI in machine vision operations, with another 30% planning near-term deployments, strongest in automotive, electronics, and logistics sectors.

Manufacturers with more than three years of AI experience report significantly better scalability (86.1% vs 75.3%) and faster deployment capabilities (81.2% vs 72.1%) than newer users. The findings suggest the industry has matured beyond proof-of-concept stages to focus on practical implementation and operational efficiency rather than just technical performance metrics.

My Take: Manufacturers basically realized that having AI that's 99% accurate but impossible to use is like owning a Ferrari that only starts on Tuesdays - they'd rather have reliable, practical AI that actually shows up to work every day than perfect AI that needs a PhD to operate.

When: March 23, 2026

Source: stocktitan.net

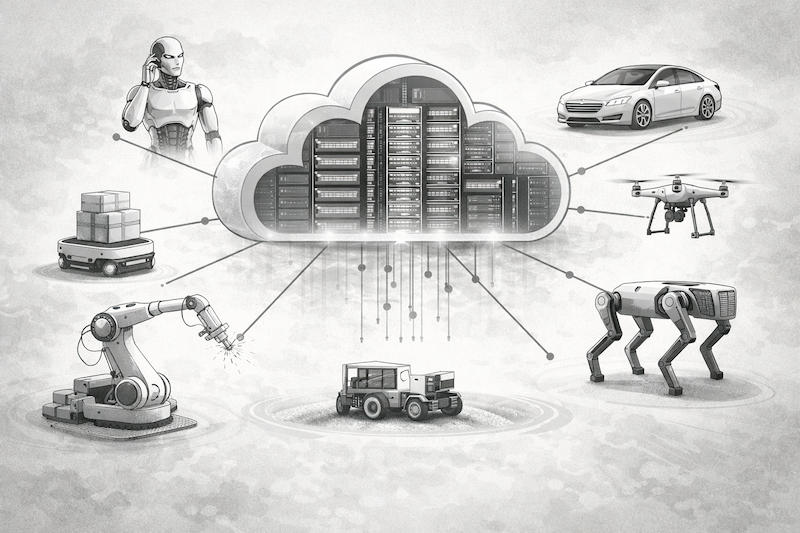

Nebius Partners with Nvidia to Build Specialized Cloud for Robotics and Physical AI

Cloud infrastructure company Nebius has partnered with Nvidia to build a specialized cloud platform designed specifically for robotics and physical AI applications. The collaboration addresses what industry experts call the 'three-computer problem' - the challenge of operating across large-scale GPU training, simulation testing, and edge deployment environments.

The partnership aims to solve the integration challenges that force engineering teams to spend 30-40% of their time on infrastructure work rather than improving robot behavior. The platform will address the expensive and dangerous nature of real-world training data collection while providing tools to handle edge cases that determine robot success or failure in field deployments.

My Take: Nebius and Nvidia basically built a cloud specifically for robots to learn how to exist in the real world - it's like creating a really expensive digital gym where robots can train for the Olympics of not falling down stairs or accidentally destroying everything they touch.

When: March 20, 2026

Source: roboticsandautomationnews.com

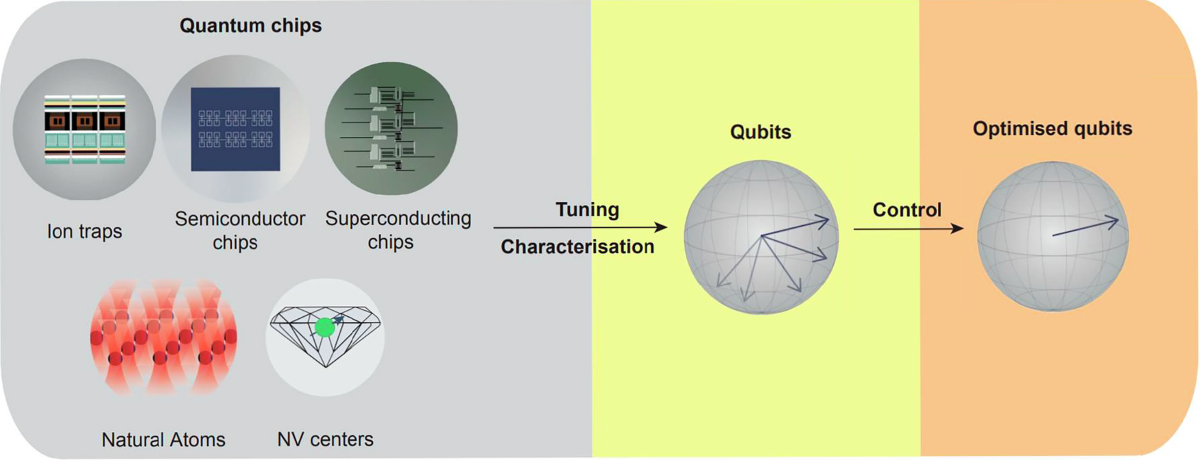

Nature Launches Collection on HPC/AI-Quantum Computing Co-Design

Nature has launched a comprehensive collection exploring the integration of High-Performance Computing (HPC), artificial intelligence, and quantum computing technologies. The collection focuses on the symbiotic relationship between these three domains, covering everything from machine learning for quantum noise mitigation to full-stack architectures for the quantum internet.

The initiative addresses how classical HPC and AI methods can significantly improve quantum computing system design and performance. Topics include hybrid quantum architectures, AI-assisted quantum error correction, robust quantum control using machine learning optimization, and distributed quantum systems for networked quantum applications. This represents a major shift from traditional separation between these computing paradigms toward integrated approaches.

My Take: Nature basically decided that HPC, AI, and quantum computing should stop working in silos and start having threesomes - because apparently the future of computing requires all three technologies to collaborate like some kind of digital polyamorous relationship.

When: March 20, 2026

Source: nature.com

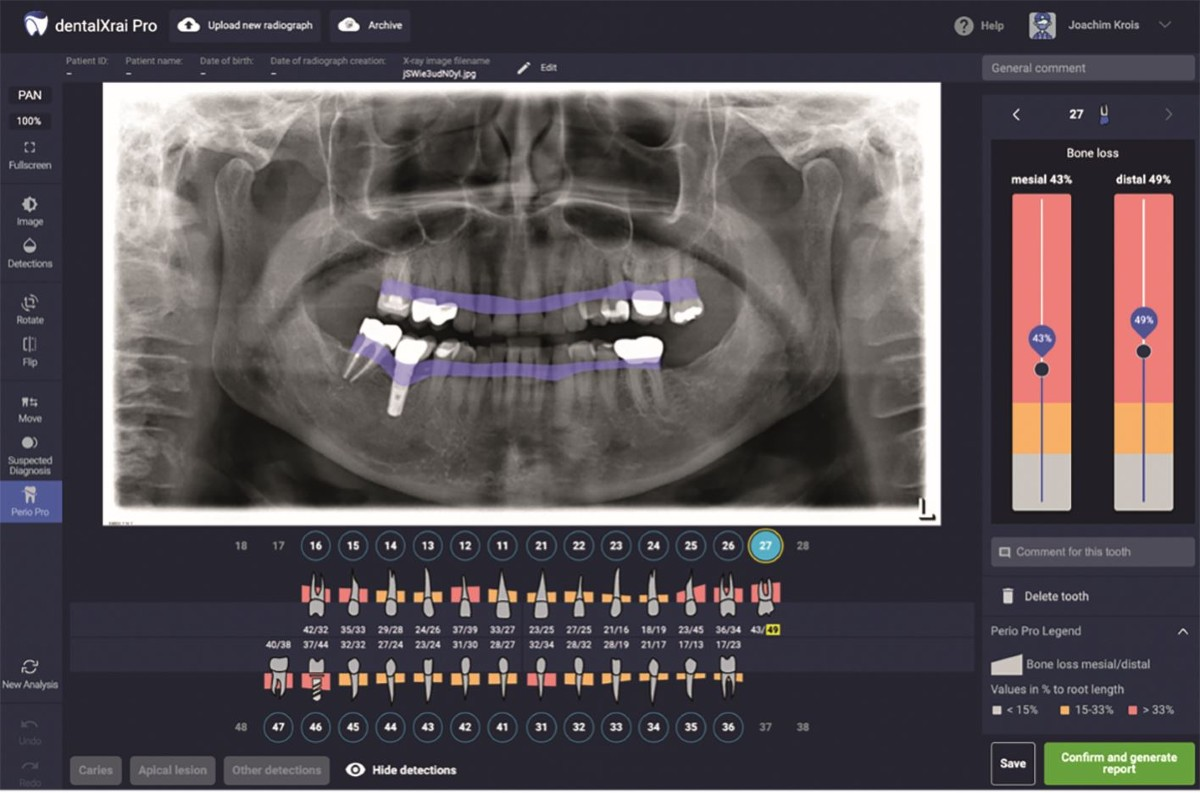

Nature Reviews AI Applications in Dental Imaging with Clinical Reality Check

Nature has published a systematic review of AI applications in dental imaging, highlighting both the potential and limitations of current artificial intelligence approaches in clinical dentistry. The study found that while AI algorithms achieved high diagnostic performance in controlled research environments using cone-beam computed tomography (CBCT) datasets, there remains a significant gap between laboratory performance and real-world clinical applicability.

The research reveals that in vitro studies often rely on highly curated imaging datasets and controlled conditions that may overestimate AI performance compared to routine clinical environments where image quality varies and pathology is heterogeneous. The authors conclude that clinical studies are urgently needed to ensure laboratory AI achievements can translate into practical dental care improvements.

My Take: Dental AI basically aced all its tests in the lab but might struggle with real patients who don't have perfectly positioned, high-quality X-rays - it's like training a robot dentist on Instagram-perfect teeth and then asking it to work on actual humans who drink coffee and forget to floss.

When: March 20, 2026

Source: nature.com

OpenAI Co-founder Andrej Karpathy: 'In State of Psychosis' Over AI Agents, Hasn't Coded in Months

OpenAI co-founder and former Tesla AI director Andrej Karpathy revealed he hasn't written a single line of code in months and describes himself as being in a 'state of psychosis' trying to understand what's possible with current AI capabilities. Despite his foundational role in AI development, Karpathy admits feeling nervous about not being at the forefront of the rapidly evolving field.

Karpathy's comments highlight how quickly AI is advancing, with even leading AI researchers struggling to keep pace with the technology they helped create. His focus has shifted from hands-on coding to grappling with the broader implications and possibilities of AI systems, particularly around autonomous agents and their potential applications.

My Take: One of the guys who basically invented modern AI just admitted he's so blown away by what AI can do now that he's having an existential crisis and forgot how to code - it's like Frankenstein looking at his monster and saying 'I have no idea what this thing is capable of anymore.'

When: March 21, 2026

Source: fortune.com

Caris Life Sciences GPSai Identifies Cancer Misdiagnoses Using AI Analysis

Caris Life Sciences has announced breakthrough study results for its GPSai system, which successfully identifies and corrects cancer misdiagnoses by analyzing comprehensive molecular profiling data. The AI system examined 3,958 lung cancer cases labeled as squamous cell carcinoma (SCC) and identified 123 cases that were actually metastases from other primary sites including skin, bladder, head and neck, and thymic cancers.

This represents a significant advancement in precision medicine, where AI algorithms integrated with routine molecular profiling can uncover clinically significant misdiagnoses that directly impact treatment decisions and patient outcomes. The technology combines whole genome, whole exome, and whole transcriptome sequencing with advanced machine learning to analyze the molecular complexity of cancer more accurately than traditional diagnostic methods.
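For scale, the reported figures work out as follows. This is a back-of-the-envelope check that takes the two numbers exactly as stated above; the variable names are illustrative.

```python
reclassified = 123  # cases identified as metastases from other primary sites
total = 3958        # lung cancer cases labeled squamous cell carcinoma (SCC)

share = reclassified / total
print(f"{share:.1%}")                                # 3.1%
print(f"about 1 in {round(total / reclassified)}")   # about 1 in 32
```

In other words, roughly one in every 32 cases in the cohort carried a label that the molecular analysis contradicted.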

My Take: Caris basically built an AI detective that can look at cancer cells and say 'wait a minute, this tumor isn't from where you think it's from' - it's like having a molecular Sherlock Holmes that can solve medical mysteries doctors might miss.

When: March 20, 2026

Source: biospace.com

Wall Street Journal Reports AI Industry Learns 'World's Most Valuable F-Word': Focus

The Wall Street Journal reports that leading AI companies are rediscovering the value of focus, which the article calls the 'world's most valuable F-word.' It discusses how companies such as OpenAI and Anthropic are learning to concentrate their efforts rather than pursuing every possible AI application simultaneously.

This shift represents a maturation of the AI industry, moving from the 'build everything' mentality to strategic focus on specific high-value applications. The report suggests that successful AI companies are now prioritizing depth over breadth, concentrating resources on perfecting core capabilities rather than spreading thin across multiple ventures.

My Take: The AI industry basically had its Steve Jobs moment and realized that saying 'no' to a thousand good ideas to focus on a few great ones is worth more than all the venture capital in Silicon Valley - it's like finally learning that having 47 half-finished projects is worse than having 3 amazing ones.

When: March 21, 2026

Source: wsj.com

Quicken Produces 100 AI-Generated Content Pieces Every Few Weeks, Replaces Junior Staff

Financial planning company Quicken is now producing 100 pieces of content every few weeks using AI, fundamentally changing both its content strategy and staffing approach. The company has shifted from relying on junior copywriters to employing more senior staff who can effectively manage and direct AI content generation systems.

Quicken discovered that traditional SEO strategies weren't translating to Generative Engine Optimization (GEO) driven by large language models, forcing them to completely rework their digital marketing approach. The company found that AI was driving more traffic than analytics tools initially showed, leading to a comprehensive overhaul of how they create and optimize content for AI-powered search engines.

My Take: Quicken basically turned their content department into an AI assembly line and fired the humans who used to write basic blog posts - it's like replacing a bunch of sandwich makers with one really good chef who supervises a robot kitchen.

When: March 20, 2026

Source: adweek.com

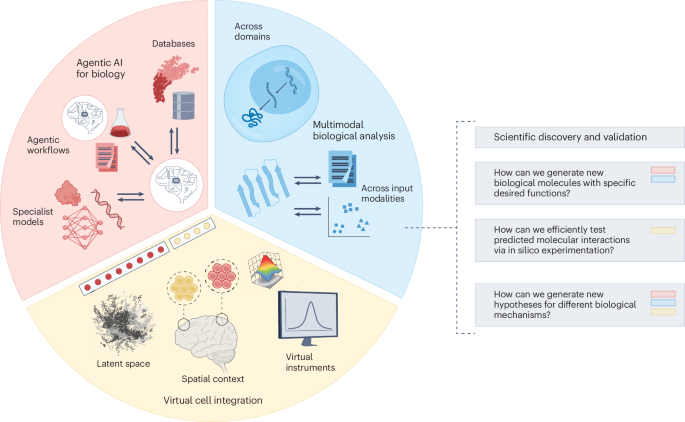

Nature Publishes Breakthrough Study on Generalist Biological AI for 'Language of Life'

Nature has published a comprehensive study on generalist biological artificial intelligence that models the 'language of life' through advanced machine learning approaches. The research demonstrates how AI can understand and predict biological processes by treating DNA, RNA, and protein sequences as natural languages that can be decoded and analyzed.

The study covers applications from protein phenotype prediction to RNA function analysis, showing how foundation models can be applied across multiple biological domains. This represents a significant advancement in computational biology, where AI systems can now interpret complex biological data with unprecedented accuracy, potentially accelerating drug discovery and personalized medicine research.

My Take: Scientists basically taught AI to read DNA like it's a really complex programming language - except instead of debugging software, it's debugging actual life, which feels both incredibly cool and slightly terrifying.

When: March 20, 2026

Source: nature.com

WordPress.com Launches AI Agents for Automated Content Creation and Publishing

WordPress.com has rolled out AI agents including Claude, ChatGPT, and Cursor that can automatically draft, edit, and publish posts on user websites through the Model Context Protocol (MCP). The agents can manage comments, optimize SEO metadata, and handle tags and categories, with all actions requiring user approval and being logged for transparency.

This feature is available on paid plans and builds on MCP support introduced last fall, which previously allowed AI tools to access site content and analytics. Given that WordPress powers over 43% of all websites, this could significantly increase machine-generated content across the web. The implementation includes safeguards like draft-only publishing by default and comprehensive activity logging to track all AI-generated changes.

My Take: WordPress basically turned every website into a potential AI content factory - it's like giving every blogger a robot intern who never sleeps, never complains, and definitely doesn't need coffee breaks to pump out SEO-optimized posts.

When: March 21, 2026

Source: mlq.ai

Thomson Reuters Warns Government Legal Teams About AI Security Risks

Thomson Reuters Legal Solutions published guidance highlighting serious security risks when government legal teams use consumer-grade AI tools like ChatGPT. The warning comes after multiple court cases where attorneys faced fines and sanctions for using AI-generated fabricated citations and false legal quotes.

The company emphasized the difference between 'free' AI tools and professional-grade solutions with verified content and compliance features. Thomson Reuters positioned its CoCounsel Legal as a secure alternative, trusted by U.S. federal courts and designed with guardrails for government agency security standards.

My Take: Government lawyers basically got caught using ChatGPT like it was Westlaw with a personality, only to discover that AI sometimes makes up legal cases the same way kids make up excuses for being late - now Thomson Reuters is swooping in like the responsible adult saying 'here, use the professional version instead.'

When: March 19, 2026

Source: legal.thomsonreuters.com

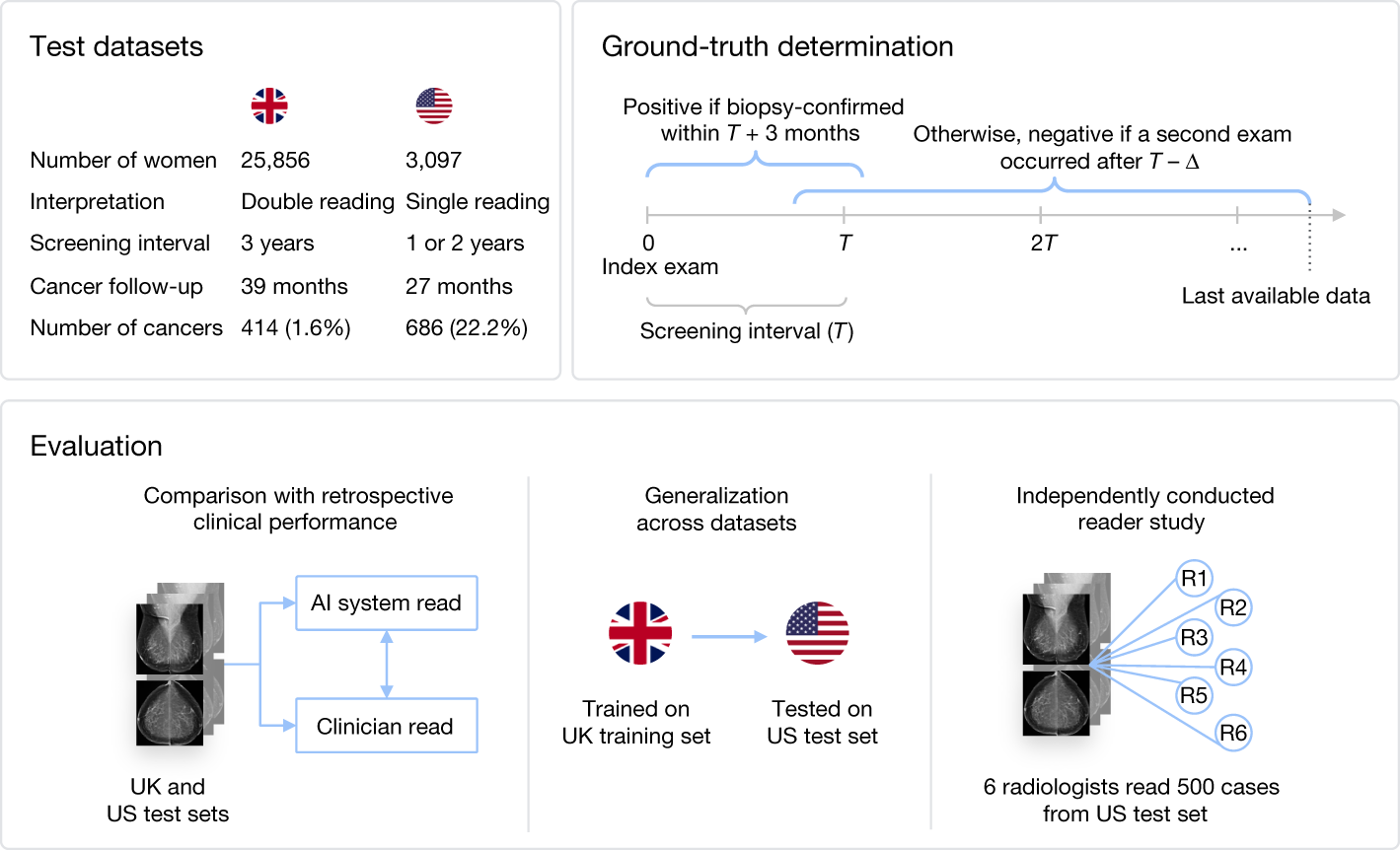

Nature Study: AI Shows Promise in Breast Cancer Screening with Human-Level Performance

A major clinical trial published in Nature found that AI-based triage and decision support systems achieved human-level performance in mammography and digital breast tomosynthesis screening. The study used deep convolutional neural networks to analyze images and detect lesions suspicious for breast cancer, demonstrating noninferiority to traditional radiologist screening in most metrics.

While the AI system showed strong performance in mammography, results were mixed for digital breast tomosynthesis (DBT), possibly due to sample size limitations and the high baseline performance of experienced radiologists. The research represents a significant step toward real-world AI deployment in critical medical screening applications.

My Take: AI basically earned its medical degree in radiology and is now reading mammograms as well as human doctors - though it's still learning the difference between DBT and regular mammography, like a medical resident who's great with X-rays but needs more practice with the fancy 3D stuff.

When: March 19, 2026

Source: nature.com

Multiverse Computing Launches Compressed AI Models That Outperform OpenAI Originals

Multiverse Computing claims that its latest compressed model, HyperNova 60B 2602, delivers faster responses at lower cost than the OpenAI model it was derived from. The compressed model is built on gpt-oss-120b and shows particular advantages for agentic coding workflows where AI autonomously completes complex programming tasks.

The development comes as the industry shifts focus toward smaller, more efficient models that can run on edge devices. Real-time usage monitoring and lower compute costs are driving enterprises to consider alternatives to large language models, with companies like Mistral also releasing optimized small models for specialized tasks.

My Take: Multiverse basically took OpenAI's model, put it on a diet, and somehow made it run faster - it's like taking a gas-guzzling SUV, turning it into a sports car, and discovering it gets better mileage while going twice as fast.

When: March 19, 2026

Source: techcrunch.com

Encyclopedia Britannica and Merriam-Webster Sue OpenAI for Copyright Infringement

Two of the world's most trusted reference publishers have filed a copyright lawsuit against OpenAI, alleging that ChatGPT scraped nearly 100,000 copyrighted articles to train its models. The publishers claim that ChatGPT reproduces their content verbatim in user responses while also attributing hallucinated or incorrect text to their trusted brands.

The lawsuit represents a significant escalation in the legal battles between content creators and AI companies over training data usage. Britannica and Merriam-Webster argue that OpenAI not only violated their copyrights but also damaged their reputations by having ChatGPT generate false information while claiming it came from their authoritative sources.

My Take: Encyclopedia Britannica basically just told OpenAI "we spent centuries building our reputation for accuracy and you're using our name to spread AI hallucinations" - it's like having someone forge your signature on a bunch of really confident but completely wrong answers, except the forger is making billions while destroying your credibility.

When: March 17, 2026

Source: law.com

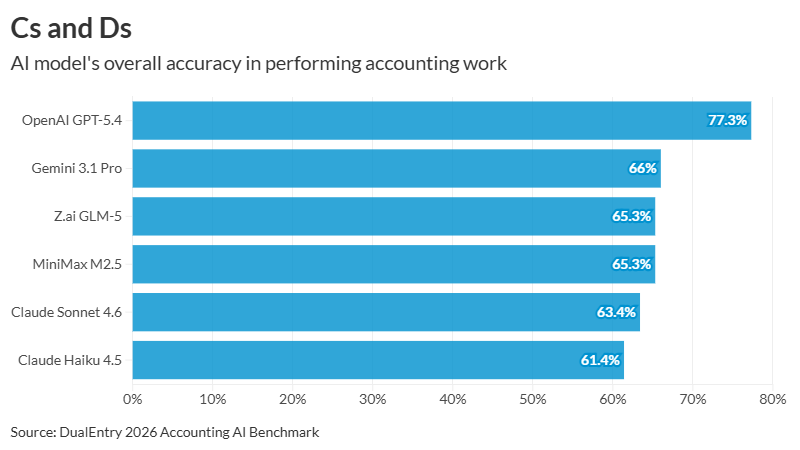

Study Shows AI Models Top Out at 77% Accuracy for Accounting Tasks

A comprehensive study testing 19 different AI models including ChatGPT, Claude, and Gemini on 101 accounting workflows found that even the best-performing model achieved only 77.3% accuracy. OpenAI's ChatGPT 5.4 led the pack, followed by Gemini 3.1 Pro at 66%, while most models scored below 65% and older models like GPT-4 managed just 19.8%.

The research revealed a fascinating performance split: models excelled at pattern matching tasks like transaction classification (92% accuracy) but struggled dramatically with structured reasoning tasks like journal entry creation (30-40% accuracy). While models performed well on conceptual knowledge about accounting standards like GAAP/IFRS, they failed at creating the precise, multi-line entries with exact debits and credits that accounting actually requires.
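To make the "exact debits and credits" requirement concrete, here is a minimal sketch of the kind of check a multi-line journal entry has to pass. It is not taken from the study: the `Line` class, the `is_balanced` helper, the account names, and the amounts are all hypothetical, and real accounting systems enforce many more rules than this.

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class Line:
    """One line of a journal entry (hypothetical structure for illustration)."""
    account: str
    debit: Decimal = Decimal("0")
    credit: Decimal = Decimal("0")


def is_balanced(lines: list[Line]) -> bool:
    """Return True when the entry has at least two one-sided lines
    and total debits exactly equal total credits."""
    if len(lines) < 2:
        return False
    for line in lines:
        # Each line must carry exactly one positive amount, never both or neither.
        one_sided = (line.debit > 0) != (line.credit > 0)
        if not one_sided or line.debit < 0 or line.credit < 0:
            return False
    total_debit = sum((line.debit for line in lines), Decimal("0"))
    total_credit = sum((line.credit for line in lines), Decimal("0"))
    return total_debit == total_credit


# Hypothetical credit sale with sales tax: one debit, two credits.
entry = [
    Line("Accounts Receivable", debit=Decimal("1080.00")),
    Line("Sales Revenue", credit=Decimal("1000.00")),
    Line("Sales Tax Payable", credit=Decimal("80.00")),
]

# Same entry with a misplaced decimal point in the tax line.
broken = [
    Line("Accounts Receivable", debit=Decimal("1080.00")),
    Line("Sales Revenue", credit=Decimal("1000.00")),
    Line("Sales Tax Payable", credit=Decimal("8.00")),
]

print(is_balanced(entry))
print(is_balanced(broken))
```

`Decimal` is used instead of floats so that amounts compare exactly. A single wrong digit in any line makes the whole entry fail, which is one plausible reason this task is less forgiving than classifying a transaction.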

My Take: AI basically learned to talk about accounting like a college student who read the textbook but can't actually balance a checkbook - it's great at explaining what double-entry bookkeeping is but terrible at actually doing it, which means your robot accountant might sound really smart while completely screwing up your books.

When: March 17, 2026

Source: accountingtoday.com

Nvidia CEO Jensen Huang Declares OpenClaw 'Definitely the Next ChatGPT'

Nvidia CEO Jensen Huang proclaimed OpenClaw as potentially the next breakthrough AI tool during a CNBC interview, calling it "the largest, most popular, the most successful open-sourced project in the history of humanity." The AI agent tool has experienced meteoric growth, surpassing 100,000 GitHub stars and going viral in the developer community.

OpenClaw represents a shift from AI that answers questions to AI that takes action, with Nvidia positioning itself to provide security infrastructure through its NemoClaw project. The tool includes privacy protections, oversight capabilities, and enterprise-grade security to enable safe deployment of autonomous AI agents at scale. This development signals the industry's move into what Huang calls the "inference era" where AI systems continuously reason and act in the real world.

My Take: Jensen Huang basically just crowned OpenClaw as ChatGPT's successor while simultaneously plugging Nvidia's security wrapper for it - it's like a tech CEO declaring the next iPhone while casually mentioning they happen to make the best screen protectors, except this time the 'phone' can actually control your computer and do your job for you.

When: March 17, 2026

Source: cnbc.com

OpenAI Faces 'Wake Up Call' as Anthropic's Claude Code Dominates Developer Market

OpenAI is scrambling to respond after Anthropic's back-to-back successes with Claude Code and Cowork products served as a "wake up call," according to Forbes reporting. The ChatGPT maker is now exploring joint ventures with private equity firms like Bain Capital and TPG to distribute enterprise products across their portfolio companies.

Although OpenAI says more than 1 million businesses use its products and that its Codex coding tool has 2 million users, Anthropic's Claude Code has clearly gained significant market traction. Competition in the AI coding space has intensified, with OpenAI's latest GPT-5.4 mini and nano models specifically designed to challenge Claude Code's viral success in creating applications from scratch.

My Take: OpenAI basically got schooled by Anthropic in the coding game and is now frantically calling private equity firms like a startup that just realized their competitor is eating their lunch - it's like watching the iPhone get nervous about Android, except this time the stakes are who gets to automate half the world's programming jobs.

When: March 18, 2026

Source: forbes.com

Arduino Unveils Ventuno Q: Edge Robotics Platform with 40 TOPS AI Processing

Arduino has launched the Ventuno Q, a new robotics development platform powered by Qualcomm's Dragonwing IQ8 AI processor capable of 40 TOPS of AI performance. The dual-processor system combines AI processing with real-time control through an STM32H5 microcontroller, enabling developers to run both traditional machine learning and generative AI models locally on robotic systems.

The platform includes 16GB of RAM with support for up to 64GB of expandable storage, allowing multiple AI inference tasks to run simultaneously on a single board. Named after the Italian word for twenty-one to mark Arduino's upcoming 21st anniversary, the Ventuno Q represents a significant advancement in making advanced robotics and edge AI accessible to developers, educators, and innovators worldwide.

My Take: Arduino basically put a robot brain on steroids - 40 TOPS of AI power means your next DIY robot project could be smarter than most laptops while still being controlled by the same company that taught millions of people to blink an LED, which is either democratizing AI or about to make garage workshops very interesting places.

When: March 15, 2026

Source: roboticsandautomationnews.com

Scientists Discover AI Can Enhance Human Creativity in Collaborative Settings

New research reported by ScienceDaily finds that AI systems can make humans more creative when used as collaborative partners rather than replacement tools. The study found that when humans work alongside AI on creative tasks, both the quantity and quality of creative output improved significantly. This challenges the common narrative that AI will simply replace human creativity.

The research has important implications as AI becomes increasingly embedded in creative fields including engineering, architecture, music, and game design. The key finding is that the most effective approach involves human-AI collaboration where each contributes their unique strengths - humans providing context, intuition, and creative direction while AI handles pattern recognition, rapid iteration, and data processing.

My Take: Scientists just proved that AI is like the world's best creative writing partner - instead of stealing your ideas, it helps you have better ones, which means the future of creativity isn't humans vs. robots, it's humans plus robots making stuff that neither could create alone.

When: March 16, 2026

Source: sciencedaily.com

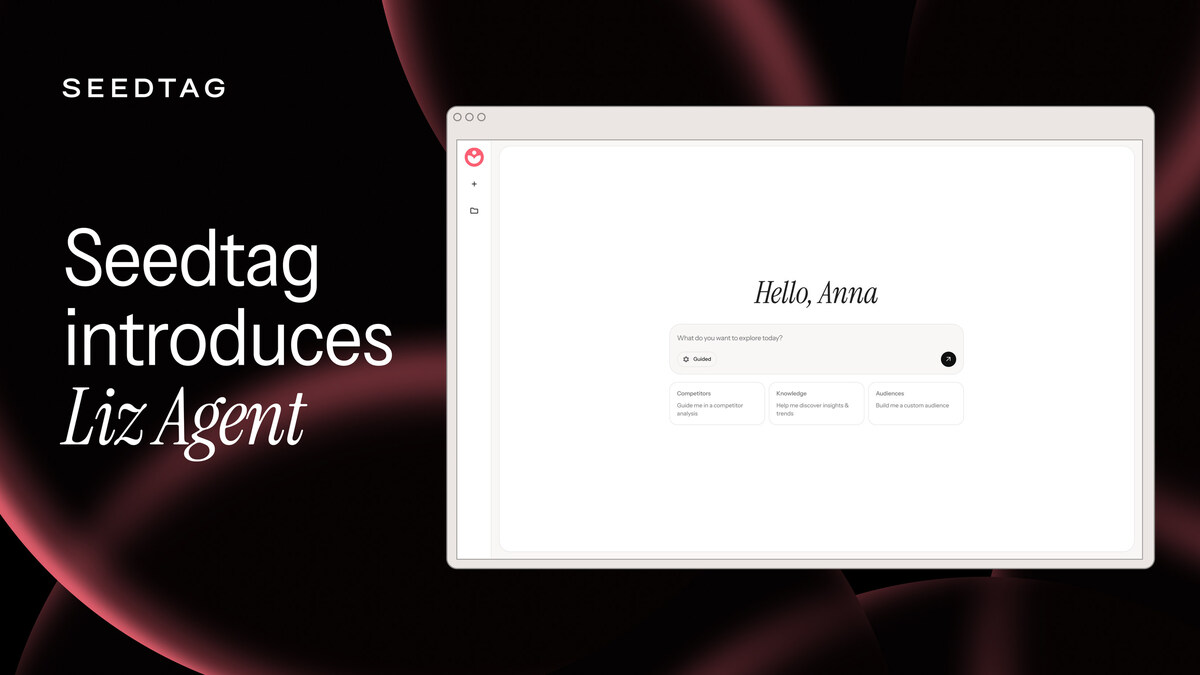

AI Agent 'Liz' Launches to Fully Automate Advertising Campaign Management

Seedtag has launched an AI agent called 'Liz' that can plan and execute entire advertising campaigns using 'neuro-contextual intelligence' technology. The platform analyzes consumer emotions and intent in real-time through machine learning and natural language understanding, then automatically manages media buying, planning, and campaign optimization. This represents a significant step toward fully automated advertising operations.

The system combines contextual analysis with emotional prediction to determine the best moments to reach consumers with advertising. Liz operates through both text and voice interfaces, acting as a conversational assistant that can carry out complex advertising processes without human intervention. This automation of the entire ad supply chain could fundamentally change how advertising campaigns are created and managed.

My Take: The advertising industry just created an AI that can run entire campaigns by reading people's emotions and buying ads automatically - it's like having a robot that not only knows exactly when you're sad enough to impulse-buy ice cream, but can also instantly place the Ben & Jerry's ad in front of your face.

When: March 16, 2026

Source: mediapost.com

Encyclopedia Britannica and Merriam-Webster Sue OpenAI Over Copyright Infringement

Two of the world's most prestigious reference publishers have filed a lawsuit against OpenAI, alleging that ChatGPT reproduces their copyrighted content without permission. The complaint states that ChatGPT provides 'verbatim or near-verbatim reproductions, summaries, or abridgements' of original content from both Britannica and Merriam-Webster. This brings the total number of copyright lawsuits against AI companies in the United States to 91.

The lawsuit mirrors the framework these same publishers used when they sued AI search engine Perplexity in September 2025, focusing on copyright infringement under the 1976 Copyright Act. The case will likely be transferred to a multidistrict litigation (MDL) proceeding, meaning a resolution could be years away. Legal analysts expect this case to significantly impact how AI companies handle training data and content reproduction.

My Take: The companies that literally wrote the dictionary are now suing the AI that learned from the dictionary - it's like your English teacher taking you to court because you used words they taught you, except with billions of dollars at stake and the future of how AI learns hanging in the balance.

When: March 16, 2026

Source: thenextweb.com

Anthropic's Claude AI Used in U.S. Military Strike Operations, Then Banned by Trump

The Wall Street Journal reported that Anthropic's Claude AI tool was being used by U.S. military commands including Central Command in the Middle East to assist with operational decisions. The AI system, which had been integrated into Pentagon systems through a partnership with Palantir Technologies, reportedly helped with strike operations before being abruptly banned by President Trump in late February.

The revelation raises serious questions about AI's role in warfare, with Bloomberg noting that it remains unclear whether Claude was used to flag target locations or make casualty estimates. This marks a significant shift from AI's traditional military applications like satellite imagery analysis to potentially direct involvement in combat decision-making, highlighting the rapid evolution of AI from advertising optimization to life-and-death military operations.

My Take: Claude went from helping people write better emails to potentially helping the Pentagon decide where to drop bombs - it's like your friendly neighborhood AI assistant suddenly got a very serious day job, and now everyone's wondering if we've crossed a line we didn't even know existed.

When: March 16, 2026

Source: mediapost.com

Anthropic's Claude can now draw interactive charts and diagrams

Anthropic has added interactive visualization capabilities to Claude, allowing users to create charts, diagrams, and visual explanations directly within conversations. The feature enables Claude to generate everything from simple bar charts to complex network diagrams, with users able to interact with and modify the visualizations in real-time.

This launch comes as competition intensifies among AI labs, with OpenAI recently introducing "dynamic visual explanations" for educational content and Google offering similar features to Gemini Ultra subscribers for $200/month. However, Anthropic is making its interactive charts available to all users for free, potentially giving it a significant advantage in the visual AI space.

My Take: Claude basically learned to become an AI PowerPoint presenter that actually makes sense - while OpenAI is charging premium prices for math tutoring visuals and Google wants $200/month for fancy charts, Anthropic just said 'here, everyone gets interactive diagrams for free' and basically turned the AI visualization market into a potluck dinner where they brought the good stuff.

When: March 12, 2026

Source: thenewstack.io

We Built Our Company on ChatGPT, Then Switched to Claude — Here's Why

Sidhant Bendre, cofounder of AI-driven software company Oleve, explains why his company canceled their ChatGPT subscription and switched entirely to Claude after using OpenAI's model for two years. The primary driver was Claude's superior code generation with significantly fewer bugs, allowing his team to move faster by spending less time on corrections.

Bendre emphasizes that the switch wasn't driven by frustrations with ChatGPT, but rather by Claude's ability to deliver on AI's core promise of removing busywork and enabling big-picture thinking. The company now relies heavily on Claude across their entire workflow, from coding to marketing to hiring processes, highlighting how AI model choice can significantly impact startup operations.

My Take: A startup founder basically broke up with ChatGPT after a two-year relationship and immediately started posting about how much happier he is with Claude - it's like watching someone leave their high-maintenance ex for someone who actually remembers to take out the trash and doesn't leave coding bugs all over the house.

When: March 13, 2026

Source: businessinsider.com

Anthropic commits $100M to Claude Partner Network

Anthropic has announced a $100 million commitment to launch the Claude Partner Network, a program designed to make Claude the default AI platform for large enterprises. The timing is particularly notable as it comes during the same week Anthropic is fighting a legal battle with the Pentagon over being labeled a national security risk.

The partner network leverages Claude's unique position as the only frontier AI model available across all three major cloud providers - AWS, Google Cloud, and Microsoft. This distribution advantage, combined with the substantial financial commitment, represents Anthropic's strategy to build long-term commercial relationships while distancing itself from defense-related controversies that have complicated its government partnerships.

My Take: Anthropic basically said 'Pentagon drama aside, we're going all-in on making Claude the enterprise AI of choice' and backed it up with $100 million - it's like getting into a messy divorce but simultaneously buying the nicest house in the neighborhood to prove you're doing just fine, thank you very much.

When: March 13, 2026

Source: thenextweb.com

Anthropic In Talks With Blackstone And Hellman & Friedman To Launch AI Joint Venture

Anthropic is in discussions with private equity giants Blackstone and Hellman & Friedman to create an AI joint venture, according to reports from The Information. The talks represent a significant business development as Anthropic seeks to expand its enterprise distribution and capabilities beyond its current offerings.

The joint venture discussions are occurring against the backdrop of Anthropic's ongoing legal dispute with the Pentagon, which has labeled the AI company a "supply chain risk" due to disagreements over military usage restrictions. Despite the government controversy temporarily affecting negotiations, talks are reportedly continuing as all parties see the commercial potential of combining Anthropic's AI technology with the investment firms' enterprise relationships and capital.

My Take: Anthropic is basically speed-dating with Wall Street while simultaneously fighting the Pentagon in court - it's like trying to plan your wedding while your future in-laws are actively trying to get you kicked out of the country, but hey, at least Blackstone thinks you're marriage material.

When: March 12, 2026

Source: forbes.com

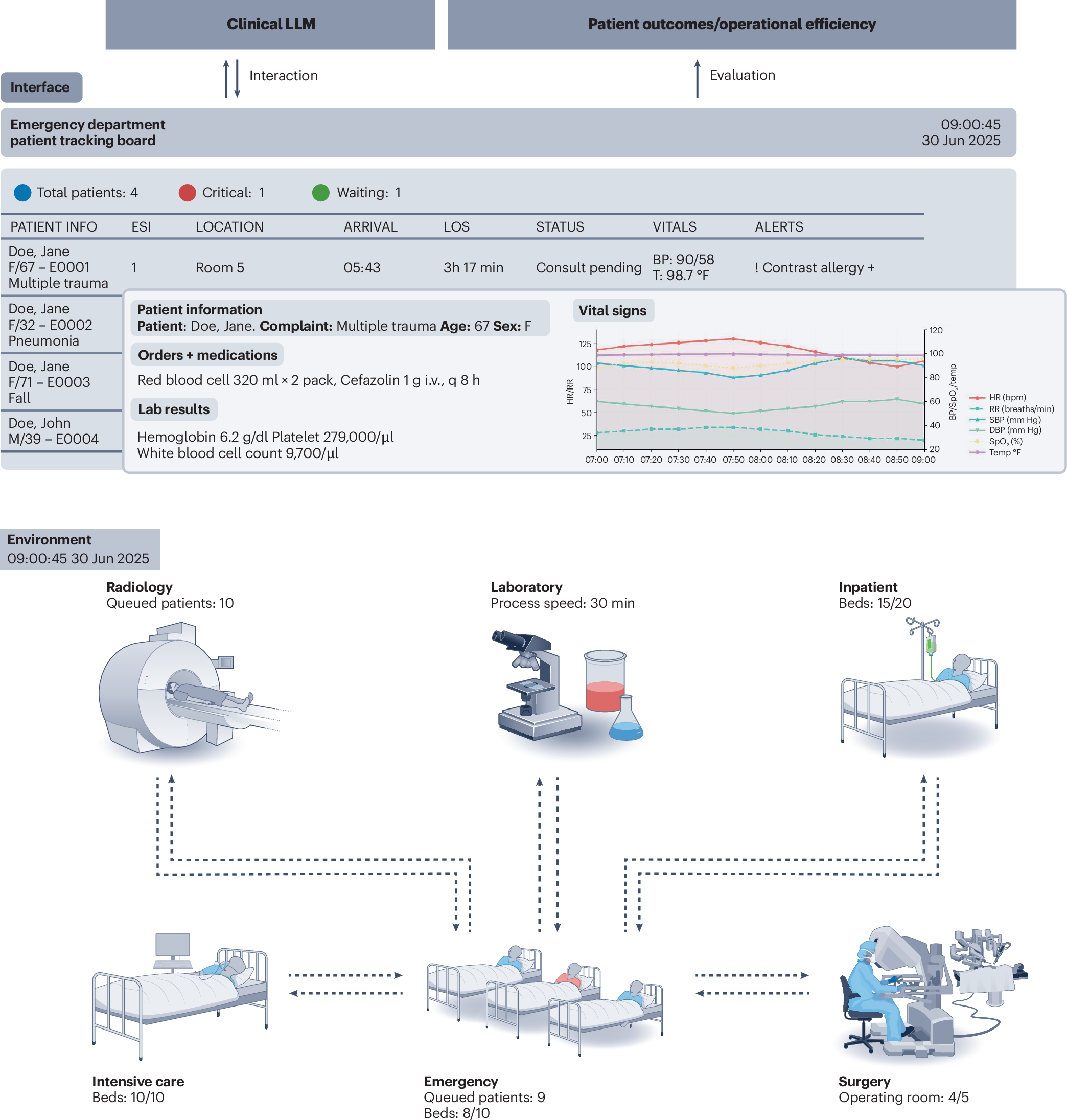

A clinical environment simulator for dynamic AI evaluation

Researchers have proposed the Clinical Environment Simulator (CES), a new framework for evaluating medical AI that moves beyond static datasets to test how clinical LLMs perform in realistic, dynamic hospital environments. The system features parallel simulation engines that track hospital resources, patient conditions, and staff workloads in real-time, requiring AI systems to make decisions through actual electronic health record interfaces.

The CES addresses critical gaps in current medical AI evaluation by testing temporal reasoning under evolving constraints, resource-aware decision-making where choices for one patient affect system capacity for others, and operational resilience during emergencies. This represents a fundamental shift toward evaluating clinical AI as an integrated component of healthcare delivery systems rather than isolated diagnostic tools.

My Take: Medical AI evaluation just got a massive reality check - instead of testing AI on perfect textbook cases, researchers basically created a virtual hospital simulator where AI has to deal with the chaos of real healthcare, complete with limited beds, overworked staff, and multiple emergencies happening simultaneously, which is like the difference between practicing surgery on a mannequin versus actually working in an ER during a zombie apocalypse.

When: March 12, 2026

Source: nature.com

OpenAI is Testing An Ads Manager, As Its New Ads Business Fights Growing Pains

OpenAI has begun testing an Ads Manager dashboard with a small group of partners as it works to establish its advertising business model. The platform allows marketers to run, monitor, and optimize campaigns in real-time, with current testers receiving weekly CSV reports containing performance metrics like clicks and impressions.

However, early results show ChatGPT's advertising performance lags significantly behind Google Search, with click-through rates falling well below expectations. OpenAI is asking advertisers for minimum commitments of $200,000, creating pressure to prove the platform can deliver meaningful results. The company's entry into advertising represents a critical revenue diversification effort as it seeks to monetize its massive user base beyond subscription fees.

My Take: OpenAI basically opened an advertising lemonade stand and is asking for $200,000 minimum purchases while their click-through rates are getting absolutely demolished by Google - it's like trying to compete with McDonald's by opening a burger joint that costs more, takes longer, and somehow makes people less hungry.

When: March 13, 2026

Source: adweek.com

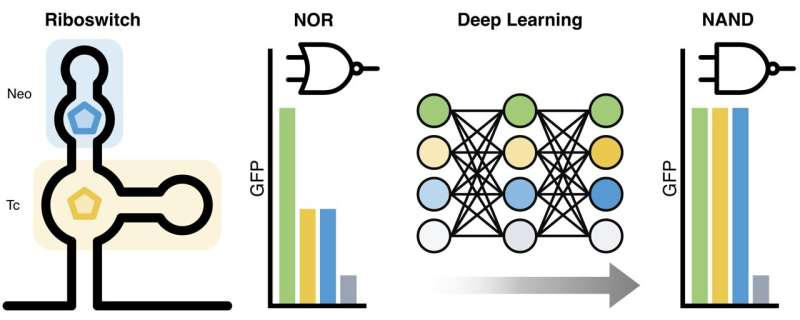

Researchers use AI to develop RNA-based synthetic NAND switch in living cells

Scientists have successfully used AI-guided design to create the first RNA-based genetic switch that replicates NAND gate logic in living cells. The breakthrough combines deep learning models with Bayesian optimization to rapidly identify and optimize riboswitch variants that control gene expression only when both specific molecular inputs are present.

The research demonstrates how machine learning can accelerate the discovery of functional RNA elements that nature has never produced. Using high-throughput screening and the Kriging Believer method for parallel optimization, the team was able to design multiple riboswitch variants simultaneously, significantly increasing experimental efficiency and opening new possibilities for programmable cellular logic and advanced biosensors.
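For readers unfamiliar with NAND logic, the sketch below prints its truth table. It is only an illustration of the Boolean function: reading the inputs as the presence of two molecules and the output as gene expression being on is an assumption, and the function names are hypothetical rather than anything from the paper.

```python
from itertools import product


def nand(input_a: bool, input_b: bool) -> bool:
    """NAND: the output is off only when both inputs are present."""
    return not (input_a and input_b)


def truth_table() -> list[tuple[bool, bool, bool]]:
    """Enumerate all four input combinations with their NAND output."""
    return [(a, b, nand(a, b)) for a, b in product([False, True], repeat=2)]


for a, b, out in truth_table():
    # Assumed reading: 1 = molecule present (inputs) or expression on (output).
    print(f"input_a={int(a)} input_b={int(b)} -> output={int(out)}")
```

The output changes state in exactly one of the four cases, the one where both inputs are present.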

My Take: Scientists basically taught AI to become a genetic engineer that designs biological computer chips inside living cells - they created RNA switches that work like digital logic gates, which means we're literally programming biology like software now, except instead of debugging code crashes, you're debugging whether your engineered bacteria will actually follow instructions or just decide to be rebellious and do whatever they want.

When: March 11, 2026

Source: phys.org

GSMA launches Open Telco AI initiative for telco-grade AI at MWC 2026

The GSMA has launched the Open Telco AI initiative at Mobile World Congress 2026, aiming to create telecommunications-grade AI models specifically designed for network operations. The consortium includes multiple university partners and technology companies, with an initial ecosystem featuring RFGPT (a radio-frequency language model from Khalifa University) and AdaptKey AI's Large Telco Model built on Nvidia Nemotron.

The initiative represents the telecom industry's attempt to develop AI capabilities that can handle mission-critical network environments, moving beyond general-purpose models to specialized solutions for autonomous network management. However, questions remain about whether a consortium approach can match the development pace and resources of major hyperscale AI companies investing billions in proprietary models.

My Take: The telecom industry basically decided to build their own AI instead of relying on Big Tech models, which is like a bunch of plumbers getting together to design their own smartphones - noble effort, but they're going up against companies that spend more on AI research in a month than most countries spend on infrastructure in a year.

When: March 11, 2026

Source: rcrwireless.com

Neuramancer raises €1.7M to bring forensic AI to deepfake detection

German startup Neuramancer has secured €1.7 million in pre-seed funding to develop AI-powered deepfake detection technology for the insurance industry. The funding round was led by Vanagon Ventures, with participation from Bayern Kapital, ZOHO.VC, and Lightfield Equity, as the company addresses the growing problem of AI-generated fraud in insurance claims.

The German Insurance Association has documented billions of euros in annual damages from insurance fraud, with generative AI making it increasingly easy to manipulate damage photographs and video calls. Neuramancer's solution enters a market that barely existed until generative AI matured, creating both significant opportunity and the challenge of keeping detection capabilities ahead of increasingly sophisticated generation tools.

My Take: Neuramancer basically became the AI police for an AI crime wave that didn't exist until AI got good enough to commit the crimes - it's like starting a cybersecurity company specifically for problems that your industry created, except instead of protecting against hackers, you're protecting against AI that's gotten too good at lying about car accidents.

When: March 12, 2026

Source: thenextweb.com

DeepRare AI System Outperforms Doctors in Rare Disease Diagnosis Study

DeepRare, an agentic AI system developed by Shanghai Jiao Tong University that integrates 40 specialized tools, achieved higher diagnostic accuracy than experienced physicians in identifying rare diseases. In head-to-head comparisons with five doctors, each with over a decade of experience, DeepRare correctly identified diseases 64.4% of the time on its first suggestion versus 54.6% for human specialists.

When given three diagnostic suggestions instead of one, the AI system achieved 79% accuracy compared to 66% for human doctors. The breakthrough is particularly significant for rare disease diagnosis, where 80% of conditions have genetic origins but most patients experience years or decades of misdiagnosis. The AI system's ability to integrate multiple specialized diagnostic tools may help accelerate the typically prolonged journey from symptoms to proper treatment.
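The "first suggestion" and "three suggestions" figures correspond to what is commonly called top-1 and top-3 accuracy. Here is a minimal sketch of how such a metric can be computed, assuming each case has a ranked list of suggested diagnoses and one confirmed diagnosis. The `top_k_accuracy` helper and the four toy cases are made up for illustration. They are not the study's data or its evaluation code.

```python
def top_k_accuracy(ranked_predictions: list[list[str]],
                   true_labels: list[str],
                   k: int) -> float:
    """Fraction of cases whose true label appears among the first k predictions."""
    if len(ranked_predictions) != len(true_labels):
        raise ValueError("predictions and labels must have the same length")
    if not true_labels:
        raise ValueError("at least one case is required")
    if k < 1:
        raise ValueError("k must be at least 1")
    hits = sum(
        truth in preds[:k]
        for preds, truth in zip(ranked_predictions, true_labels)
    )
    return hits / len(true_labels)


# Hypothetical ranked suggestions for four toy cases (not real study data).
predictions = [
    ["Fabry disease", "Gaucher disease", "Pompe disease"],
    ["Marfan syndrome", "Ehlers-Danlos syndrome", "Loeys-Dietz syndrome"],
    ["Wilson disease", "Hemochromatosis", "Menkes disease"],
    ["Pompe disease", "Fabry disease", "Gaucher disease"],
]
confirmed = [
    "Fabry disease",          # correct on the first suggestion
    "Loeys-Dietz syndrome",   # correct only within the top three
    "Wilson disease",         # correct on the first suggestion
    "Niemann-Pick disease",   # missed entirely
]

print(top_k_accuracy(predictions, confirmed, k=1))  # 0.5
print(top_k_accuracy(predictions, confirmed, k=3))  # 0.75
```

Top-3 accuracy can never be lower than top-1 on the same cases, which is why both the AI system and the physicians score higher when allowed three suggestions.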

My Take: DeepRare basically became the House MD of artificial intelligence - it can spot medical zebras when doctors are looking for horses, which is either a huge breakthrough for patients with mysterious symptoms or the beginning of robots making humans feel inadequate in yet another professional field.

When: March 7, 2026

Source: thenextweb.com

Incoherent AGI Hype Spurs Industry-Wide Pivot To Hybrid AI

Industry leaders including Netflix, Amazon, JPMorgan, and Microsoft are moving away from autonomous AI dreams toward hybrid systems that combine machine learning risk assessment with human oversight. This shift represents a 'sobering up' from unrealistic expectations about AI achieving human-like autonomy, focusing instead on practical semi-autonomous solutions.

Hybrid AI works by using machine learning models to assign probability-based risk scores to AI outputs, routing high-risk cases to human operators for review. This approach allows businesses to automate significant workloads while mitigating the operational risks associated with large language models, creating reliable value through judicious human-in-the-loop integration rather than pursuing the elusive goal of full AI autonomy.
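The routing step described above can be sketched in a few lines. This is a minimal illustration, not any company's implementation: the `ModelOutput` class, the `route` function, the 0.2 threshold, and the sample risk scores are all hypothetical, and it assumes a separate model has already produced the risk score for each output.

```python
from dataclasses import dataclass


@dataclass
class ModelOutput:
    text: str
    # Assumed to come from a separate risk model: the estimated
    # probability, between 0 and 1, that this output is unacceptable.
    risk: float


def route(outputs: list[ModelOutput],
          threshold: float = 0.2) -> tuple[list[ModelOutput], list[ModelOutput]]:
    """Split outputs into (auto_approved, needs_human_review) by risk score."""
    auto_approved: list[ModelOutput] = []
    needs_review: list[ModelOutput] = []
    for output in outputs:
        if not 0.0 <= output.risk <= 1.0:
            raise ValueError(f"risk must be in [0, 1], got {output.risk}")
        # Outputs at or above the threshold go to a human operator.
        if output.risk >= threshold:
            needs_review.append(output)
        else:
            auto_approved.append(output)
    return auto_approved, needs_review


# Hypothetical batch of outputs with made-up risk scores.
batch = [
    ModelOutput("Refund approved for order 1001.", risk=0.05),
    ModelOutput("Account closed at customer request.", risk=0.20),
    ModelOutput("Wire transfer of $250,000 authorized.", risk=0.90),
]

auto, review = route(batch)
print(len(auto), "auto-approved;", len(review), "sent to human review")
```

The threshold is the main design choice: lowering it sends more cases to humans and reduces risk at the cost of less automation, while raising it does the opposite.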

My Take: The AI industry basically went from 'robots will replace everyone' to 'robots need babysitters' - it's like realizing that self-driving cars work great until they encounter a plastic bag, so now everyone's building systems where AI does the heavy lifting and humans handle the weird edge cases.

When: March 9, 2026

Source: forbes.com

New Study Finds AI Content Has No Impact on Law Firm Google Rankings

Custom Legal Marketing analyzed law firm websites and found that AI-generated content shows no statistically significant impact on Google search rankings. The study revealed a polarized adoption pattern: 54.7% of ranking pages contain 5% or less AI-detected content, while 21.4% contain 70% or more, leaving roughly 24% of pages in the middle range of 6-69%.

Notably, 18.2% of top-ranking pages for personal injury keywords contained 70%+ AI content, but these belonged to firms with enormous domain authority, suggesting that the website's overall strength, rather than the AI content, is driving rankings. The research indicates that Google's algorithms may be indifferent to whether content is AI-generated, weighting other ranking factors such as domain authority and user engagement more heavily.

My Take: This study basically proved that Google doesn't care if a lawyer wrote their website copy or if ChatGPT did - it's like discovering that restaurant critics judge food quality, not whether the chef went to culinary school, which means law firms can embrace AI writing without SEO penalties as long as their overall web presence is strong.

When: March 7, 2026

Source: markets.financialcontent.com

Google's New Command-Line Tool Can Plug OpenClaw Into Your Workspace Data

Google launched a new Workspace CLI that makes it easier to connect AI agents like OpenClaw to Google's cloud services and productivity apps. The command-line tool bundles Google's existing cloud APIs into a streamlined package that supports structured JSON outputs and includes over 40 agent skills for tasks like managing Drive files, sending emails, creating calendar appointments, and sending chat messages.

The tool represents Google's attempt to provide a cleaner alternative to Model Context Protocol (MCP) setups, which typically require significant development overhead. However, the integration of powerful AI agents like OpenClaw with enterprise data raises significant security concerns, including the risk of hallucinations corrupting managed data and vulnerability to prompt injection attacks that could expose sensitive information.

My Take: Google basically created a digital key that lets AI agents rummage through your entire Google Workspace - it's like giving a really smart but occasionally confused intern access to all your company files, emails, and calendar, which could either revolutionize productivity or create the most spectacular data disasters in corporate history.

When: March 6, 2026

Source: arstechnica.com

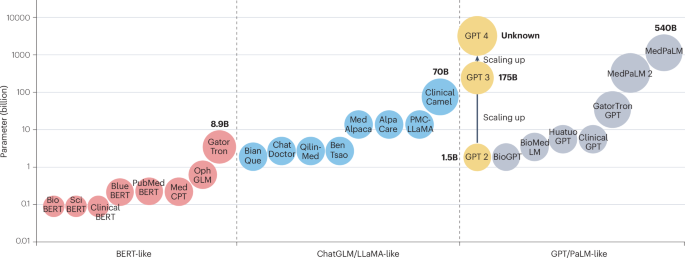

The Evolving Landscape of Large Language Models in Health Care

A comprehensive Nature study analyzing 19,123 natural language processing-related healthcare studies finds that LLMs and traditional non-LLM methods each have distinct strengths. LLM-related research showed explosive growth from 757 studies in 2023 to 2,787 in 2024, while non-LLM studies maintained steady but moderate growth from 2,191 to 3,140 during the same period.
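To put those study counts side by side, here is a quick back-of-the-envelope calculation. It uses only the four figures quoted above; the helper name `percent_growth` is ours, not something from the study.

```python
def percent_growth(start: int, end: int) -> float:
    """Year-over-year growth expressed as a percentage of the starting count."""
    return (end - start) / start * 100


# Study counts for 2023 and 2024, as quoted in the summary above.
llm_growth = percent_growth(757, 2787)
non_llm_growth = percent_growth(2191, 3140)

print(f"LLM studies:     {llm_growth:.1f}% growth")      # 268.2% growth
print(f"Non-LLM studies: {non_llm_growth:.1f}% growth")  # 43.3% growth
```

In other words, LLM-related research more than tripled in a year, while non-LLM work grew by a bit under half, although non-LLM studies were still the larger group in absolute terms in 2024.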

The research demonstrates that LLMs excel at open-ended healthcare tasks like clinical reasoning and decision support, while traditional AI methods dominate information extraction tasks. This complementary relationship suggests the future of healthcare AI lies in hybrid approaches that leverage the strengths of both paradigms, particularly as newer models like GPT-5, Gemini 2.5, and DeepSeek-R1 continue advancing reasoning capabilities.

My Take: Healthcare AI basically split into two tribes - the LLM crowd that wants to chat with patients like digital doctors, and the traditional AI folks who prefer to quietly extract data like medical librarians, which means the best healthcare AI will probably be like a medical team where both specialists work together.

When: March 9, 2026

Source: nature.com

I Wrote A Resume For A $180,000 Job Using ChatGPT 5.4

Forbes tested ChatGPT 5.4's capabilities for professional document creation by having it write a resume for a senior project manager targeting a $185,000 project director position. The experiment revealed improved accuracy and reduced errors compared to previous versions, but highlighted persistent issues with repetitive content and the need for human oversight.

Despite OpenAI's claim that GPT-5.4 makes 33% fewer false claims than GPT-5.2, the AI still generated redundant sections and bullet points that required careful human editing. The test demonstrated that while AI can significantly accelerate resume creation when provided with detailed context and persona prompts, human review remains essential for ensuring quality, consistency, and avoiding the repetitive patterns that could make applications appear rushed or automated.

My Take: ChatGPT 5.4 basically became a resume writer with a photographic memory but no editor - it can craft impressive professional documents that hit all the right keywords, but it's like that overachieving student who says the same thing three different ways to reach the word count.

When: March 8, 2026

Source: forbes.com

Anthropic Finds 22 Firefox Vulnerabilities Using Claude Opus 4.6 AI Model

Anthropic's Claude Opus 4.6 AI model successfully identified 22 high- and moderate-severity vulnerabilities in Firefox's codebase, representing nearly one-fifth of all high-severity bugs patched in the browser during 2025. The AI detected a critical use-after-free bug in JavaScript processing after just 20 minutes of analysis, demonstrating significant capability in automated security research.

The research involved scanning nearly 6,000 C++ files and submitting 112 unique vulnerability reports, with most issues already fixed in Firefox 148. This breakthrough suggests AI systems are becoming powerful tools for proactive security research, potentially revolutionizing how software vulnerabilities are discovered and addressed before they can be exploited by malicious actors.

My Take: Claude basically became Firefox's unpaid security consultant and found more bugs in 20 minutes than some security teams find in months - it's like having a digital bloodhound that can sniff out code vulnerabilities faster than humans can write the code in the first place.

When: March 7, 2026

Source: thehackernews.com

Studies Reveal AI Citation Clues for Search Optimization

New research analyzing 1.2 million ChatGPT results and various AI search platforms reveals how to optimize content for AI citations. The studies found that 74.8% of AI citations appear in the first half of web pages, with 46.1% coming from the first 30% of content, emphasizing the importance of front-loading key information.

The research introduced the concept of 'atomic facts' - self-contained sentences that make sense independently. AI systems strongly prefer sentences of 6-20 words, and 100% of citations were complete sentences rather than fragments. This suggests content creators should focus on concise, early answers and avoid lengthy introductions to improve their chances of being cited by AI systems.
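The two rules of thumb above (put answers early, keep sentences to 6-20 words) are easy to turn into a rough self-check. The sketch below is our own illustration, not a tool from the studies: the function names, the naive sentence splitter, and the choice to measure position by word count are all assumptions. The 6-20 word range and the 30% cutoff are the figures quoted in the research.

```python
import re
from dataclasses import dataclass


@dataclass
class SentenceReport:
    text: str
    word_count: int
    in_front: bool     # sentence starts within the first `front_fraction` of the page's words
    good_length: bool  # word count falls inside [min_words, max_words]


def split_sentences(text: str) -> list[str]:
    """Naive splitter: break after '.', '!' or '?' when followed by whitespace."""
    return [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]


def audit_page(text: str, min_words: int = 6, max_words: int = 20,
               front_fraction: float = 0.30) -> list[SentenceReport]:
    """Flag each sentence by length and by how early it appears on the page."""
    sentences = split_sentences(text)
    total_words = sum(len(s.split()) for s in sentences)
    reports = []
    words_seen = 0
    for sentence in sentences:
        count = len(sentence.split())
        # Position of the sentence's first word as a fraction of the whole page.
        start = words_seen / total_words if total_words else 0.0
        reports.append(SentenceReport(
            text=sentence,
            word_count=count,
            in_front=start < front_fraction,
            good_length=min_words <= count <= max_words,
        ))
        words_seen += count
    return reports


if __name__ == "__main__":
    page = (
        "Our firm handles personal injury claims across the state. "
        "Call now. "
        "We answer most questions within one business day."
    )
    for report in audit_page(page):
        print(report.word_count, report.in_front, report.good_length, report.text)
```

Sentences that come back with both flags set are the ones that fit the citation pattern the studies describe. A real audit would need a proper sentence tokenizer, since this splitter will trip over abbreviations like "U.S." or "Inc."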

My Take: AI basically has the attention span of a goldfish with a PhD - it wants the most important information in the first few paragraphs, wrapped in bite-sized sentences, which means the internet is about to get a lot more like Wikipedia and a lot less like academic papers.

When: March 9, 2026

Source: practicalecommerce.com

7 Real-World Prompts on Gemini 3 and Claude Sonnet 4.6 — The Results Surprised Me

Tom's Guide conducted a comprehensive comparison between Google's Gemini 3 and Anthropic's Claude Sonnet 4.6 using seven practical prompts, revealing distinct strengths for each model. Gemini 3 Flash excelled in speed and quick analysis tasks, while Claude Sonnet 4.6 demonstrated superior reasoning, writing quality, and structured thinking capabilities.

The testing highlighted that there's no single 'best' AI model, with different systems optimized for different cognitive tasks. The results suggest users should choose AI models based on specific needs rather than assuming one system dominates across all use cases, with Claude showing particular strength in deeper reasoning tasks and Gemini proving better for rapid-fire queries and summaries.

My Take: This comparison basically proved that AI models are like specialized athletes - Gemini 3 is the sprinter who gets you quick answers, while Claude Sonnet 4.6 is the marathon runner who thinks deeply about complex problems, which means choosing an AI is now like picking the right tool for the job instead of hoping for a Swiss Army knife.

When: March 8, 2026

Source: tomsguide.com

The Moment That Kicked Off The AI Revolution

New Scientist examines the pivotal moment when AlphaGo defeated human Go champions, tracing how this breakthrough established the foundation for modern AI development. The victory demonstrated the power of neural networks combined with reinforcement learning, an approach that now underlies both game-playing AI and large language models like ChatGPT.

The article explains how AlphaGo's approach - pretraining on human data followed by self-improvement through reinforcement learning - became the template for today's AI systems. This same methodology evolved into the techniques used for protein folding with AlphaFold and language understanding in LLMs, showing how one breakthrough in an ancient board game catalyzed the entire modern AI revolution.

My Take: AlphaGo basically wrote the playbook that every AI system now follows - it's like that one kid in school who figured out the perfect study method and then everyone else copied their homework for the next decade, except the homework was conquering human intelligence.

When: March 7, 2026

Source: newscientist.com

Landmark Lawsuit Against OpenAI For Allowing ChatGPT To Provide Legal Advice Could Be Game-Changer

A significant legal case has been filed against OpenAI challenging ChatGPT's provision of legal advice, which could establish precedents affecting all AI makers. The lawsuit focuses on whether AI systems should be restricted from offering legal guidance without proper licensing, potentially reshaping how AI companies approach regulated professional services.

The case raises broader questions about AI liability when systems provide advice in specialized fields like law, medicine, or finance. Legal experts suggest this could lead to either stricter usage policies across AI platforms or the development of AI systems specifically designed to avoid crossing into professional advice territory that requires human licensing.

My Take: This lawsuit basically asks whether ChatGPT can practice law without a license - it's like suing a really smart parrot for giving legal advice, except the parrot has read every law book ever written and might actually be better at legal research than most lawyers.

When: March 9, 2026

Source: forbes.com

One Platform Gives You Lifetime Access to Gemini, ChatGPT, Anthropic, and More for $70

1min.AI is offering lifetime access to multiple AI model families including GPT, Claude, Gemini, Llama, Mistral, and Command for a one-time fee of $69.97, down from $540. The platform consolidates various AI services into a single interface, allowing users to switch between models and maintain separate conversation threads for different projects.

This pricing model addresses the growing cost burden of multiple AI subscriptions, which can easily exceed hundreds of dollars monthly. The platform supports chatting with different AI assistants and provides access to capabilities across text generation, analysis, and other AI-powered tasks from major providers.

My Take: 1min.AI basically created the Costco membership of artificial intelligence - pay once and get bulk access to every major AI model, which is either the best deal in tech history or the kind of too-good-to-be-true offer that makes you wonder what the catch is.

When: March 7, 2026

Source: mashable.com

Choose-Your-Own-Adventure AI Runs On Top Of The SaaS-Pocalypse

Plurality AI is creating a unified platform where users can access multiple AI models including ChatGPT, Claude, Gemini, and Llama for various tasks like text generation, image creation, and web search. The platform aims to simplify AI model selection by letting users switch between different models based on their specific needs without complex setup.

This represents a growing trend toward AI aggregation platforms that solve the 'SaaS-pocalypse' problem of managing multiple AI subscriptions. The human-in-the-loop approach allows users to upload images, translate, and generate outputs easily across different AI ecosystems in one place.

My Take: Plurality AI basically turned the AI market into a buffet - instead of committing to one expensive AI relationship, you can now sample ChatGPT's wit, Claude's thoughtfulness, and Gemini's versatility all from the same menu, which is like having a universal remote for artificial intelligence.

When: March 7, 2026

Source: forbes.com

Luma AI Launches Creative Agents Powered by 'Unified Intelligence' Models

AI startup Luma has launched creative AI agents powered by its new 'Unified Intelligence' models, promising to streamline creative workflows that currently require multiple specialized tools. The company, which is valued at $4 billion and has raised $1.1 billion in total, positions itself as building toward multimodal general intelligence with an end-to-end execution layer for creative tasks.

Luma's approach addresses the 'multi-tool mess' that many creative professionals face when working with various AI applications for different tasks. The company's agents are designed to handle complex creative workflows in a more integrated manner, moving away from the linear processes that characterize current AI tool usage toward more dynamic, non-linear creative collaboration.

My Take: Luma basically wants to be the Swiss Army knife of creative AI - instead of juggling 47 different AI tools to make a video, write copy, and design graphics, they're promising one agent that can do it all, which sounds great until you realize most Swiss Army knives are terrible at being actual knives.

When: March 5, 2026

Source: techcrunch.com

Author Charles Yu Argues Against Calling AI Capabilities 'Intelligence' in Atlantic Essay

In an essay adapted from his 2026 Joel Conarroe Lecture at Davidson College, author Charles Yu challenges the tech industry's use of the term 'intelligence' to describe AI capabilities, arguing that conflating technological capability with human intelligence diminishes our understanding of both. Yu contends that much of human intelligence consists of 'tacit knowledge' that cannot be easily articulated or replicated by language models.

Yu suggests that the rush to achieve artificial general intelligence (AGI) is based on a fundamental misunderstanding of what intelligence actually entails. He argues that by measuring ourselves against AI's linguistic outputs, we risk 'dumbing ourselves down' and underestimating human cognitive capabilities that extend far beyond language production and pattern matching.

My Take: Charles Yu basically told the entire AI industry that calling LLMs 'intelligent' is like calling a really good autocomplete feature 'creative writing' - he's arguing that we're so impressed by AI's ability to string words together that we forgot intelligence involves actually understanding what those words mean in the real world.

When: March 5, 2026

Source: theatlantic.com

OpenAI Launches GPT-5.4 with Native Computer Control and Enhanced Reasoning Capabilities

OpenAI has released GPT-5.4, featuring significant improvements in reasoning, coding, and professional work tasks, with the model achieving record scores on computer use benchmarks OSWorld-Verified and WebArena Verified. The new model includes native computer use capabilities, allowing it to operate computers autonomously and complete tasks across different applications. GPT-5.4 is available in three versions: standard, Thinking (with enhanced chain-of-thought reasoning), and Pro.

The launch represents OpenAI's response to competitive pressure, particularly from Anthropic's Claude, with the model showing 18% fewer errors and 33% fewer false claims compared to GPT-5.2. OpenAI has also implemented new safety evaluations to test for potential deception in the model's reasoning process, finding that the Thinking version is less likely to misrepresent its chain-of-thought process.