Picture yourself in the passenger seat of a car, hands resting quietly in your lap, scrolling through a playlist, adjusting the navigation, tweaking the climate control — without once touching a screen, speaking a command, or reaching for your phone. All you are doing is barely flexing your fingers, and the band on your wrist is handling the rest.

That is exactly what Meta demonstrated at CES 2026 in partnership with Garmin, and while it looked like the kind of polished concept demo that gets applauded and forgotten, what sits underneath it is considerably more serious than the setting suggested.

The Meta Neural Band, which Meta brought to market in September 2025 bundled with its Ray-Ban Display glasses, is outgrowing its original framing as a single-device accessory and beginning to look like something closer to a universal input platform. Within months of its commercial launch, the company is already showing it controlling automotive infotainment systems and simultaneously funding research at the University of Utah, where scientists are studying how well EMG technology can serve people living with ALS, muscular dystrophy, and other conditions that limit hand mobility.

Taken together, these moves say something no press release quite states outright: Meta has decided that the wrist is the next great interface between humans and the digital world, and it intends to own that territory before anyone else has a serious product to show.

To understand why that matters, you need to start with what is actually happening on the inside.

What the Meta Neural Band Actually Does

The name "neural band" tends to conjure the wrong image — brain implants, surgical procedures, the kind of neuroscience that lives mostly in research hospitals and speculative journalism. The Neural Band does none of that.

It does not read your brain. It reads your muscles.

That distinction changes the entire character of the conversation around what this technology is and what it can realistically become.

The science, in plain English

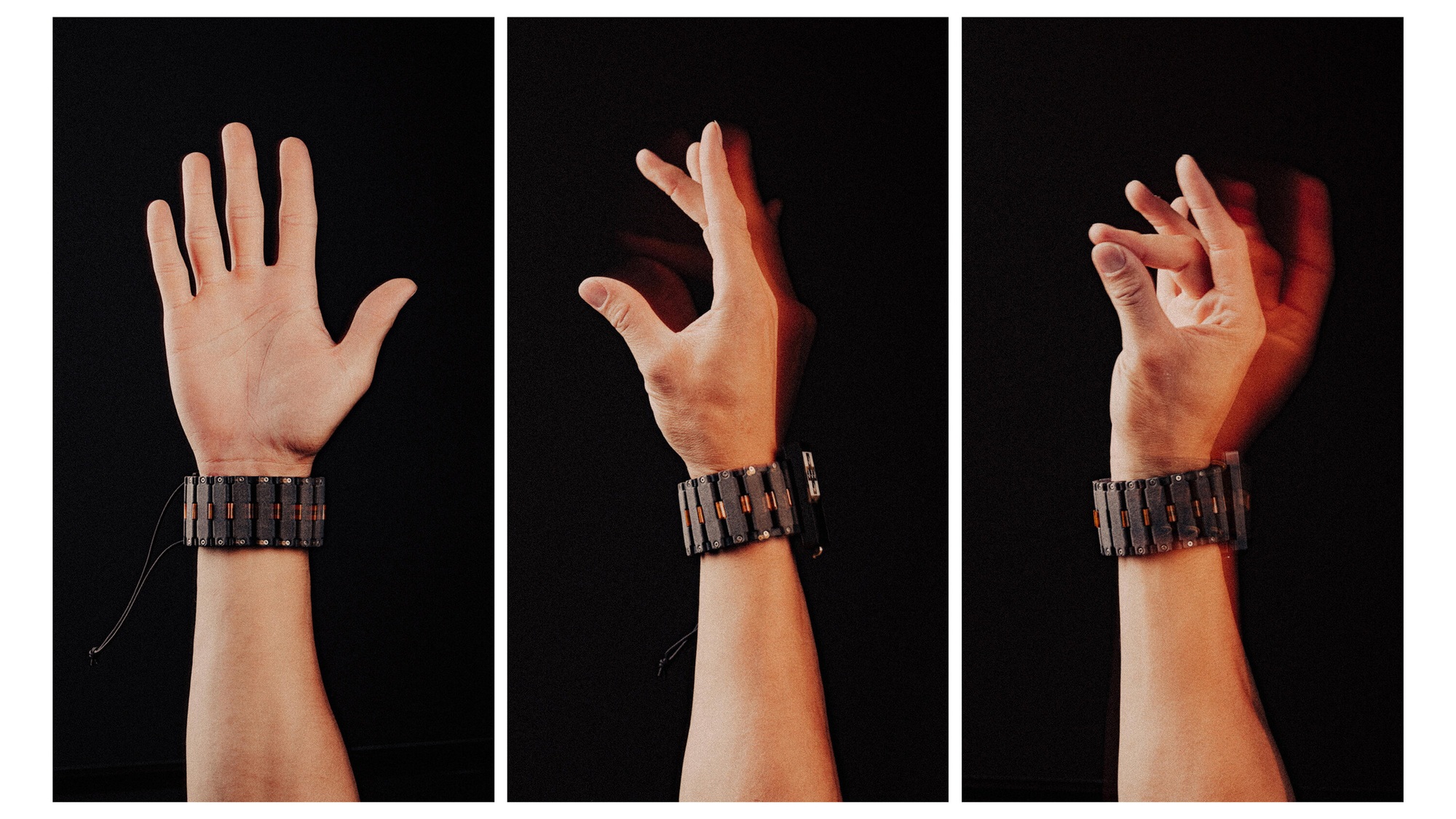

The underlying method is called surface electromyography, or EMG. When you form the intention to move your hand — even when the resulting motion is almost imperceptible — motor neurons send electrical signals to the muscles of your forearm and hand before your fingers have actually moved. The Neural Band's embedded sensors capture those signals in milliseconds, route them through machine learning models running locally on the device, and translate them into commands for whatever hardware the band is paired with.

- A barely perceptible pinch becomes a click

- A subtle lateral tension in the fingers becomes a swipe

- A slow rotational pressure becomes a scroll

All of it happens within milliseconds, and subtly enough that no one standing next to you would notice you were doing anything at all.
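The capture, classify, and translate steps described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not Meta's implementation: the three-channel layout, the `TEMPLATES` values, the gesture names, and the nearest-template classifier are all hypothetical stand-ins for the learned models the band actually runs.

```python
import math
from typing import Dict, List, Sequence

# Gesture-to-command table taken from the three examples above.
# The gesture identifiers themselves are hypothetical.
GESTURE_COMMANDS: Dict[str, str] = {
    "pinch": "click",
    "lateral_tension": "swipe",
    "rotation": "scroll",
}

# Hypothetical per-gesture feature templates (one value per electrode channel).
# A real system would learn these from training data instead.
TEMPLATES: Dict[str, List[float]] = {
    "pinch": [0.8, 0.1, 0.1],
    "lateral_tension": [0.1, 0.8, 0.1],
    "rotation": [0.1, 0.1, 0.8],
}


def rms(window: Sequence[float]) -> float:
    """Root-mean-square amplitude of one channel over a short time window."""
    if not window:
        raise ValueError("window must contain at least one sample")
    return math.sqrt(sum(x * x for x in window) / len(window))


def features(channels: Sequence[Sequence[float]]) -> List[float]:
    """Reduce each electrode channel to a single RMS feature."""
    return [rms(ch) for ch in channels]


def classify(feat: Sequence[float], templates: Dict[str, Sequence[float]]) -> str:
    """Pick the gesture whose template is closest in Euclidean distance."""
    return min(templates, key=lambda g: math.dist(feat, templates[g]))


def decode(channels: Sequence[Sequence[float]],
           templates: Dict[str, Sequence[float]]) -> str:
    """Full path: raw samples -> features -> gesture -> command."""
    return GESTURE_COMMANDS[classify(features(channels), templates)]


# One short window of made-up samples: strong activity on channel 0 only.
SAMPLE = [
    [0.7, -0.9, 0.8, -0.8],
    [0.1, -0.1, 0.1, -0.1],
    [0.05, -0.1, 0.1, -0.05],
]
print(decode(SAMPLE, TEMPLATES))  # click
```

RMS amplitude is a common first-pass EMG feature because it tracks how hard a muscle is firing while ignoring the sign of the signal. The nearest-template step is only there to make the data flow visible.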

How Meta made it work for everyone

Meta built its recognition models on data from nearly 200,000 research participants. That scale matters because EMG signals vary meaningfully across body types, skin tones, muscle mass distributions, and physical conditions. A system that works well only for a narrow demographic is not a platform; it is a specialty product.

Processing happens entirely on-device, meaning gesture data never leaves the band to be processed in the cloud. The system also ingests accelerometer and gyroscope data alongside EMG readings to give it motion context, and it improves with use: personalization can push handwriting recognition accuracy up by as much as 16 percent compared to out-of-the-box performance.
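To make the two ideas in that paragraph concrete, here is a rough sketch of sensor fusion and personalization. The source does not describe how Meta implements either one, so everything here is an assumption for illustration: `fuse` simply concatenates feature vectors, and `personalize` blends a population-level template toward the mean of one user's calibration samples using a hypothetical `weight` parameter.

```python
from typing import List, Sequence


def fuse(emg: Sequence[float],
         accel: Sequence[float],
         gyro: Sequence[float]) -> List[float]:
    """Concatenate EMG features with motion features into one vector,
    so a downstream model sees muscle activity and wrist motion together."""
    return [*emg, *accel, *gyro]


def personalize(population: Sequence[float],
                user_samples: Sequence[Sequence[float]],
                weight: float = 0.5) -> List[float]:
    """Shift a population-level template toward one user's own data.

    weight=0 keeps the out-of-the-box template; weight=1 uses only
    the user's calibration samples.
    """
    if not user_samples:
        return list(population)
    n = len(user_samples)
    user_mean = [sum(s[i] for s in user_samples) / n
                 for i in range(len(population))]
    return [(1 - weight) * p + weight * u
            for p, u in zip(population, user_mean)]


# Hypothetical example: a two-feature template adapted with two user samples.
adapted = personalize([1.0, 0.0], [[0.0, 1.0], [0.0, 1.0]], weight=0.5)
print(adapted)  # [0.5, 0.5]
```

Because both functions operate on small in-memory vectors, nothing in this sketch needs to leave the device, which matches the on-device design described above.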

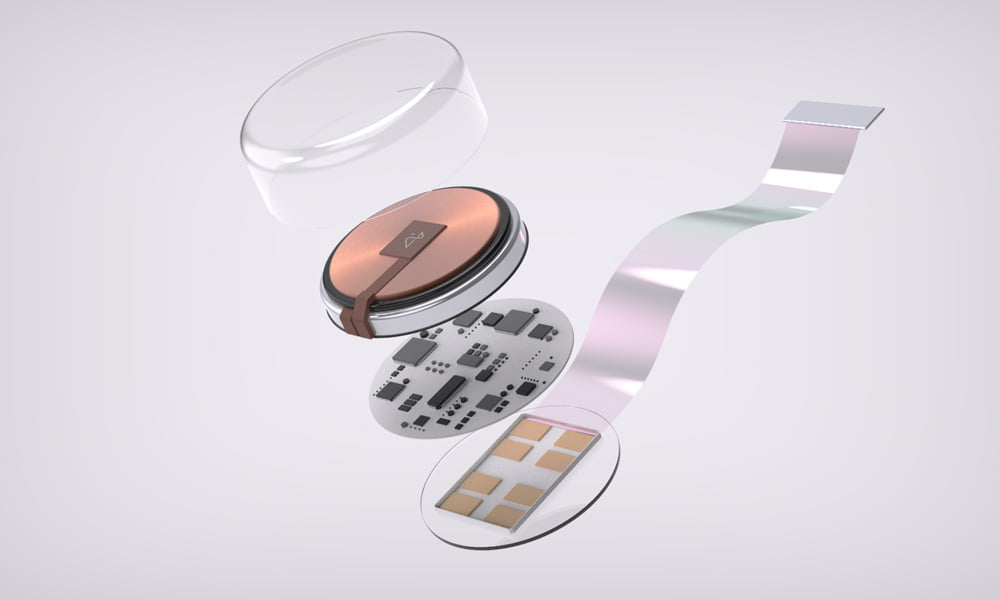

The hardware specs that actually matter

| Spec | Detail |
|---|---|
| Battery life | Up to 18 hours |
| Water resistance | IPX7 |
| Sizes available | Three |
| Key material | Vectran (same fiber used in Mars rover crash pads) |
| Processing | On-device, not cloud |

The Vectran detail is not marketing copy — it is a specific engineering choice for a device that will be constantly flexed, sweated on, and worn through a full day of varied activity.

From Glasses Controller to Something Much Larger

When Meta shipped the Neural Band alongside the Ray-Ban Display glasses last fall at $799 for the bundle, the device was framed as a controller for those specific glasses — interesting, but bounded. That framing undersold what the underlying technology was capable of, and at CES 2026, Meta made the first public moves toward correcting it.

The Garmin automotive demo

Garmin builds the Unified Cabin digital cockpit system that powers infotainment hardware across several major automotive OEMs. The CES demonstration showed Neural Band integrating directly with that platform: passengers could navigate menus, launch applications, and manipulate three-dimensional vehicle models on the display using gestures made with the thumb, index, and middle fingers, none of which required any screen contact.

Garmin has already indicated it wants to push the integration further — into core vehicle functions like window controls and door locks. That would shift the demo from a concept into a commercially deployable feature reaching consumers through existing automotive partnerships, rather than requiring Meta to build distribution from scratch.

The University of Utah accessibility research

Less flashy, but probably more consequential over a longer horizon.

Researchers led by Jacob George of the Department of Electrical and Computer Engineering are evaluating whether the Neural Band can function as a reliable accessible interface for people with ALS, muscular dystrophy, stroke, and other conditions affecting hand function. The scope includes co-designing custom gesture sets with participants to control:

- Smart speakers

- Thermostats

- Window blinds

- Door locks and other connected home devices
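A co-designed gesture set like the one described above amounts to a per-user mapping from gestures to device actions. The sketch below shows one way such a mapping could be represented. The `GestureSet` class, the gesture names, and the `device:action` string format are all hypothetical and are not drawn from the Utah study or from any Meta API.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional, Tuple


@dataclass
class GestureSet:
    """Per-user bindings from a gesture name to a (device, action) pair."""
    user: str
    bindings: Dict[str, Tuple[str, str]] = field(default_factory=dict)

    def bind(self, gesture: str, device: str, action: str) -> None:
        """Assign a gesture the participant can perform to a device action."""
        self.bindings[gesture] = (device, action)

    def dispatch(self, gesture: str) -> Optional[str]:
        """Return the command for a recognized gesture, or None if unbound."""
        target = self.bindings.get(gesture)
        if target is None:
            return None
        device, action = target
        return f"{device}:{action}"


# Hypothetical participant whose set uses only the movements they retain.
gestures = GestureSet(user="participant_a")
gestures.bind("thumb_twitch", "thermostat", "raise")
gestures.bind("index_press", "blinds", "close")
gestures.bind("wrist_tension", "door_lock", "lock")

print(gestures.dispatch("index_press"))  # blinds:close
print(gestures.dispatch("fist"))         # None
```

Keeping the bindings per user, rather than fixed for the whole product, is what lets a set be rebuilt around whatever muscle activity a given participant can still produce.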

The same program is testing the band as an alternative input for the TetraSki — an adaptive ski currently operated with either a joystick or a sip-and-puff mouth controller, two methods that require a level of preserved motor function that not every user retains.

"Meta Neural Band is sensitive enough to detect subtle muscle activity in the wrist — even for people who can't move their hands." — Meta

If validated at scale, that single claim would place this technology in a different category than most consumer wearables, which tend to be designed for and tested on people without significant physical limitations.

Why Previous Gesture Interfaces Failed — and Why This One Is Different

Gesture-based computing has been a recurring promise for at least fifteen years, and its track record is not encouraging.

The Microsoft Kinect launched in 2010 as a potential revolution in input and was discontinued in 2017, undermined by latency, recognition errors, and the sheer physical fatigue of waving your arms at a television. Google Glass arrived in 2013 carrying enormous expectations and became instead a symbol of the gap between technology-industry self-perception and how the rest of the world actually wants to live. A long succession of hand-tracking systems, gesture cameras, and wrist-worn motion sensors followed, most of them failing for variations on the same underlying reasons: insufficient accuracy in real-world conditions, social awkwardness in public spaces, and interaction models that required conspicuous physical movements that felt theatrical rather than natural.

Why EMG breaks the pattern

EMG at the wrist addresses nearly all of these failure modes simultaneously.

- No camera required. The Neural Band works equally well with your hands in your pockets, resting on a table, or hanging at your sides in complete darkness.

- No voice required. It functions silently in loud environments where voice commands fail, without requiring you to announce your intentions to everyone in earshot.

- No fine motor precision required. It does not demand the kind of precise control that excludes significant portions of the potential user population.

- Higher gesture resolution. Unlike accelerometers and standard inertial sensors, which cannot reliably distinguish a tap from a pinch, EMG resolves gestures at a level of granularity that makes it genuinely useful for navigating complex interfaces.

The CTRL-Labs foundation

What makes the Neural Band historically significant is not that it is a perfected technology — it is not — but that it is the first consumer product to bring this capability to market at meaningful scale. Meta has been building toward this since its 2019 acquisition of CTRL-Labs, a startup that had spent years developing methods for decoding peripheral neural signals at the wrist with a fidelity researchers had previously associated only with invasive procedures.

The September 2025 launch of Ray-Ban Display was, in practical terms, a large-scale test of whether consumers actually want this kind of input. Meta paused its planned international expansion to the UK, France, Italy, and Canada, citing supply constraints from what it described as unprecedented demand, so the answer appears to be an unambiguous yes.

The Competitive Picture

No company has shipped a consumer EMG device that competes directly with the Neural Band. That gives Meta a structural first-mover advantage of the kind that is difficult to manufacture after the fact.

| Company | Current Status |
|---|---|
| Meta | Shipping consumer EMG device since Sept 2025 |
| Apple | Patented EEG-enabled AirPods; no product yet |
| Samsung | Internal brain-monitoring sensor experiments |
| | Biosignal patent portfolio; no product yet |

Holding patents and running internal experiments is a different thing from having a product in the market generating real usage data and real training signal for machine learning models — and that gap currently favors Meta.

The window is not indefinitely open

Apple is targeting a 2027 launch for its own smart glasses and, according to Bloomberg, is developing an AI pin and camera-equipped AirPods designed to form an interconnected wearable ecosystem around iPhone. Google has confirmed its 2026 smart glasses will integrate with Android-based watches.

Neither company is building a neural input layer yet, but both are constructing the ecosystem architecture into which such a layer would naturally fit. When they eventually decide to add EMG or equivalent biosignal sensing, they will do so from a position of established ecosystem relationships and consumer trust — not from scratch.

The Malibu 2 factor

Meta's competitive position is therefore less about the Neural Band as a standalone product and more about whether the company can establish the platform that makes EMG-based input indispensable before rivals catch up.

The reported Malibu 2 smartwatch, targeting a late 2026 launch, is central to that strategy. Meta CTO Andrew Bosworth has already said publicly that the Neural Band's capabilities would eventually make sense as part of a watch, and a smartwatch with integrated EMG would give Meta a device that competes across the full consumer wearables market while anchoring the input layer for its entire hardware ecosystem.

What about Neuralink?

The comparison surfaces regularly and is more misleading than clarifying. Neuralink is a medical technology company developing surgically implanted electrodes for people with severe neurological damage, operating under an entirely different regulatory framework for an entirely different population. Meta's approach is non-invasive, runs on consumer hardware, and is already in tens of thousands of people's hands. They are not competing — they are not even playing the same game.

What Changes if This Technology Reaches Scale

Every major transition in input paradigm has changed not just which devices exist, but which people can use them and which applications become possible.

- The CLI-to-GUI shift opened personal computing to people who would never have learned to type commands

- The touchscreen made smartphones navigable for all ages and technical backgrounds

- EMG-based input, if it reaches sufficient reliability and accessibility, could represent a comparable structural shift

Two specific contexts are worth examining carefully.

Ambient computing

As connected devices accumulate across every dimension of daily life — cars, appliances, home systems, workplace equipment — the interaction overhead of managing each one separately becomes a genuine problem. Voice commands fail in noisy environments and carry social friction in contexts where announcing your intentions to a device feels inappropriate.

A wrist-worn interface that can control multiple device categories silently, contextually, and without requiring orientation toward any particular surface addresses that problem in a way nothing currently available does.

Accessibility

This is where the University of Utah research carries disproportionate weight relative to the attention it has received. Assistive technology has historically been expensive, slow to develop, and narrow in scope — designed for specific conditions rather than adaptable across the spectrum of physical variation.

A consumer EMG device that works reliably out of the box across a wide range of users, can be personalized to individual physiological profiles, and can be customized for different levels of motor function has the potential to become one of the more significant developments in accessible human-computer interaction in years — not because it was designed for that market, but because the underlying properties of EMG happen to be especially well-suited to it.

For developers

Meta is already accepting applications from partners interested in building Neural Band integrations. If that developer ecosystem gains genuine momentum, the applications will extend well beyond anything Meta's internal teams will build — and the companies that invest in understanding this input modality early will have a meaningful structural advantage over those that wait.

The Risks and Limitations Worth Taking Seriously

Ecosystem lock-in (for now)

The device currently works fully only with Meta's own products, plus the early-stage Garmin integration. Becoming a true universal input platform requires not just Meta opening an API, but third-party hardware manufacturers deciding it is worth the engineering investment to support a new input method — a process that moves on partnership cycles measured in years, not months. The automotive demo is an encouraging signal, but the distance between a CES concept and a feature shipping in production vehicles is considerable.

Accuracy is a variable, not a constant

EMG performance is sensitive to sensor contact quality, which is affected by sweat, forearm hair, skin conditions, and changes in the user's physical state over time. Meta's three-size offering and diverse training data reduce this variability, but do not eliminate it. There is a meaningful gap between "works for most users out of the box" and "works reliably for all users in all real-world conditions."

The privacy question

On-device processing is the right architectural decision, but it does not fully resolve the privacy question. Detailed EMG data collected over time constitutes a biometric profile — one that could theoretically be used to identify an individual or reveal information about their physical condition. The regulatory and legal frameworks governing how such data is handled are still being developed, and given Meta's historical relationship with regulators over data practices, biosignal data will attract scrutiny at a level other wearable sensor categories have not faced.

Price

At $799 bundled with the glasses, the Neural Band is an early-adopter product. The vision of EMG-based input as a ubiquitous interaction paradigm requires price points that reach mainstream consumers — not just the segment willing to pay for premium hardware novelties.

Where This Goes From Here

Meta is sequencing its hardware roadmap with a deliberateness its Reality Labs years largely lacked.

| Device | Status |
|---|---|
| Meta Neural Band | Shipping now |
| Malibu 2 smartwatch | Expected late 2026 |
| Phoenix mixed reality glasses | Pushed to 2027 |

The Phoenix delay is itself telling: internal concerns about confusing consumers by releasing too many products too quickly represent a level of product-market discipline that is a real change from the company that spent years trying to will a metaverse into existence through sheer force of announcement.

The broader competition for the wrist is intensifying across the industry precisely because the wrist turns out to be a remarkably productive place to read the human body. Muscles fire there. Arteries pulse there. Skin temperature and conductance respond to stress and physiological state there. The company that builds the most capable hardware layer for reading those signals and convinces a developer ecosystem to build around it will have a structural advantage in the next era of personal computing.

Meta currently holds that advantage. The Neural Band is the first serious consumer evidence that its years of neuroscience investment were aimed at something real rather than something speculative. How long that lead holds against Apple, Google, and whoever else decides this territory is worth contesting is a genuinely open question — but the device that started the race is already on people's wrists, and that is a harder position to dislodge than it might look.

Frequently Asked Questions

What is the Meta Neural Band and how does it work?

The Meta Neural Band is a wrist-worn wearable that uses surface electromyography sensors to detect the electrical signals muscles produce when a user intends to make a gesture. Those signals are captured in milliseconds, processed entirely on-device by machine learning models, and translated into commands for connected hardware. The device launched commercially in September 2025 as a companion to Meta's Ray-Ban Display glasses.

Does the Meta Neural Band require surgery or implants?

No. The Neural Band is entirely non-invasive, sitting on the outside of the wrist and reading surface-level electrical signals without any contact with nerves or tissue beneath the skin. It requires no medical procedure and carries none of the risks associated with implantable brain-computer interface technologies.

What devices can the Meta Neural Band control?

At launch, the band was designed to work with Meta's Ray-Ban Display glasses. At CES 2026, Meta demonstrated an integration with Garmin's Unified Cabin automotive infotainment platform, and ongoing research with the University of Utah is exploring its use with smart home devices including speakers, thermostats, blinds, and locks. Meta is also accepting applications from third-party partners interested in building their own integrations.

How accurate is the Meta Neural Band's gesture recognition?

Meta trained its recognition models on data from nearly 200,000 research participants, which supports reliable out-of-the-box performance across a wide range of users. Personalization can improve handwriting recognition accuracy by up to 16 percent beyond baseline, and response times are measured in milliseconds. Real-world accuracy can vary depending on fit quality and individual physiological differences.

Is the Meta Neural Band useful for people with disabilities?

This is an active area of research. Meta's collaboration with the University of Utah is specifically evaluating the band for people with ALS, muscular dystrophy, stroke, and other conditions affecting hand use, with the goal of enabling gesture-based control of everyday connected devices. Meta has stated that the band is sensitive enough to detect muscle activity in users who cannot visibly move their hands, which broadens the potential accessibility applications considerably.

How much does the Meta Neural Band cost?

The Neural Band is currently sold exclusively as part of a bundle with the Meta Ray-Ban Display glasses, with pricing starting at $799 in the United States. It is not available as a standalone purchase.

How does the Meta Neural Band compare to Neuralink?

The two technologies occupy fundamentally different categories. Neuralink involves surgically implanted electrodes designed for people with severe neurological damage, developed under medical device regulatory frameworks for clinical applications. The Meta Neural Band is a non-invasive consumer wearable that reads peripheral muscle signals at the wrist, with no surgical requirement and no direct interaction with the brain. Both are working toward the goal of translating human intent into digital commands through biological signal, but they do so for entirely different populations and under entirely different constraints.

What comes next for Meta Neural Band technology?

Meta is expanding the Neural Band's device compatibility through new partnerships, continuing accessibility research with the University of Utah, and reportedly planning to integrate EMG technology into a forthcoming smartwatch codenamed Malibu 2, expected later in 2026. The company is also developing next-generation mixed reality glasses under the codename Phoenix, currently targeted for 2027, which would represent the next major hardware layer in Meta's wearable ecosystem.
