Ask a large language model to describe what happens when you drop a glass of water on a hardwood floor, and it will give you a perfectly competent answer. Ask it to actually understand that the glass will shatter asymmetrically based on impact angle, that the water will spread at a rate determined by surface tension and friction, or that the sound will differ depending on the floor's acoustic properties — and you are asking it to do something it fundamentally cannot do.

LLMs predict the next token in a sequence. They do not model the world. They model text about the world. It is a subtle distinction that turns out to be enormous.

This gap is at the heart of one of the most significant technical debates in AI today — and it is quietly pulling billions of dollars and some of the field's most credentialed researchers toward a different paradigm entirely: world models.

In the span of a few months bridging late 2025 and early 2026, the shift became undeniable. Yann LeCun, one of the three original deep learning pioneers and former chief AI scientist at Meta, left the company he helped build to launch AMI Labs — seeking €500 million at a €3 billion valuation before releasing a single product. Fei-Fei Li, the researcher most credited with the modern computer vision revolution, shipped Marble through her startup World Labs, now in discussions at a $5 billion valuation. Google DeepMind released Genie 3, the first real-time interactive world model capable of generating navigable 3D environments at 24 frames per second. And Nvidia's Cosmos platform, purpose-built as open infrastructure for world model development, surpassed 2 million downloads.

None of this has broken through the mainstream coverage that follows every new ChatGPT upgrade or Gemini benchmark result. That is exactly why it is worth paying attention.

What a World Model Actually Is

The term gets used loosely enough to need unpacking before anything else, because "world model" currently refers to at least three meaningfully different things depending on who is using it.

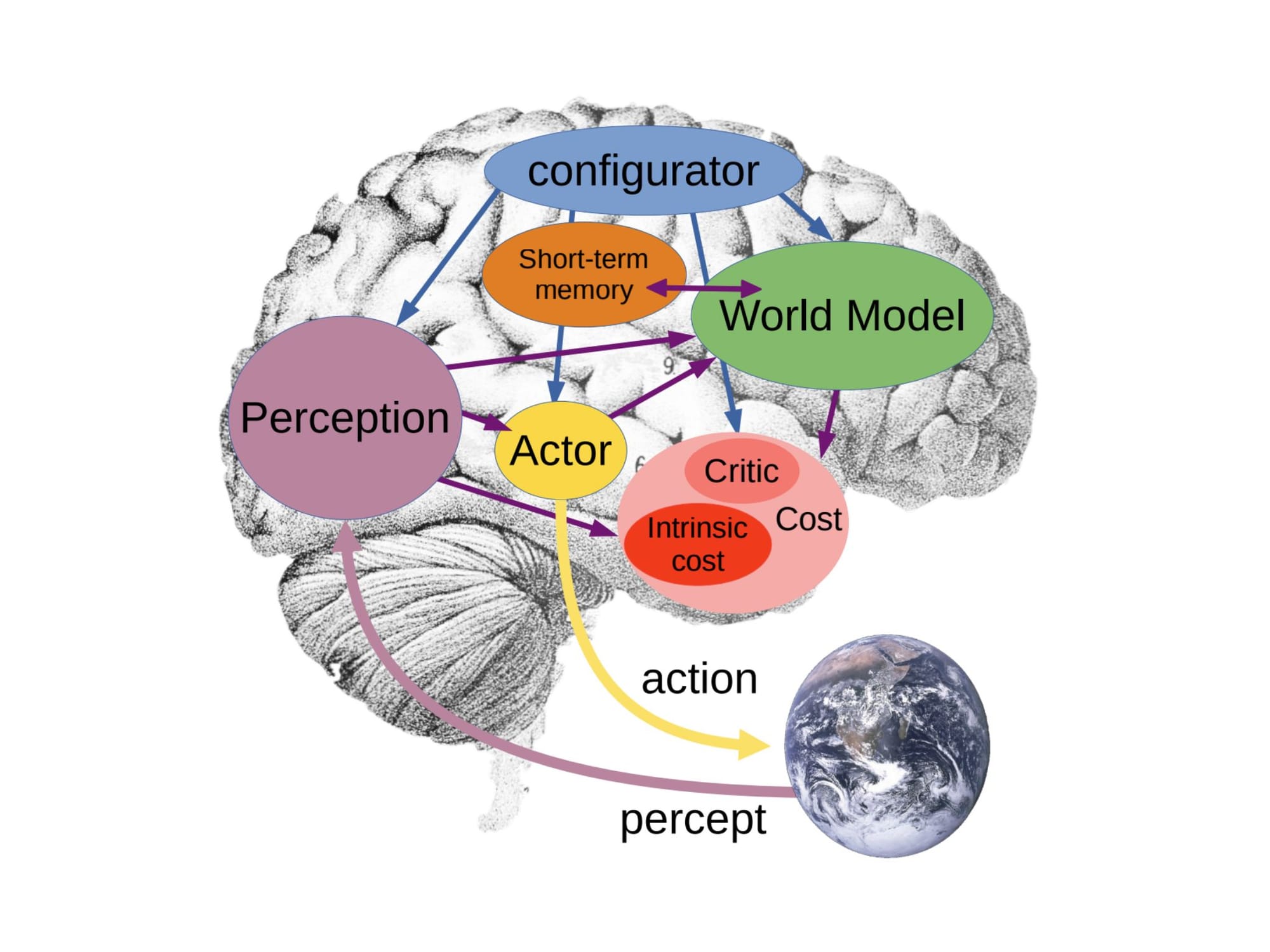

The idea is old. Scottish psychologist Kenneth Craik introduced the concept of mental models in 1943, arguing that human cognition works by building internal representations of reality that we use to simulate possible futures before acting on them. You do not step in front of a moving train by running the experiment. You model the outcome internally and skip the test. The brain runs millions of such simulations constantly — anticipating, planning, updating.

For decades, AI researchers tried to give machines this same capacity. The early attempts were brittle and narrow. The rise of deep learning eventually made it possible to approximate the idea through neural networks that could build up internal representations of their training environments. But it was the success of LLMs that clarified what was still missing.

The core distinction: An LLM learns from text descriptions of reality. A world model learns from reality itself — from video, sensor data, physical interactions, and spatial relationships — and builds an internal simulation it can query, update, and act from.

In practice, the term covers three active research and product directions:

World models as physical simulators. This is LeCun's domain — AI systems that build internal representations of physical reality, enabling them to understand cause and effect, predict future states, and plan action sequences. The target application is embodied AI: robots, autonomous vehicles, and other systems that need to act safely in the real world without memorizing every possible scenario.

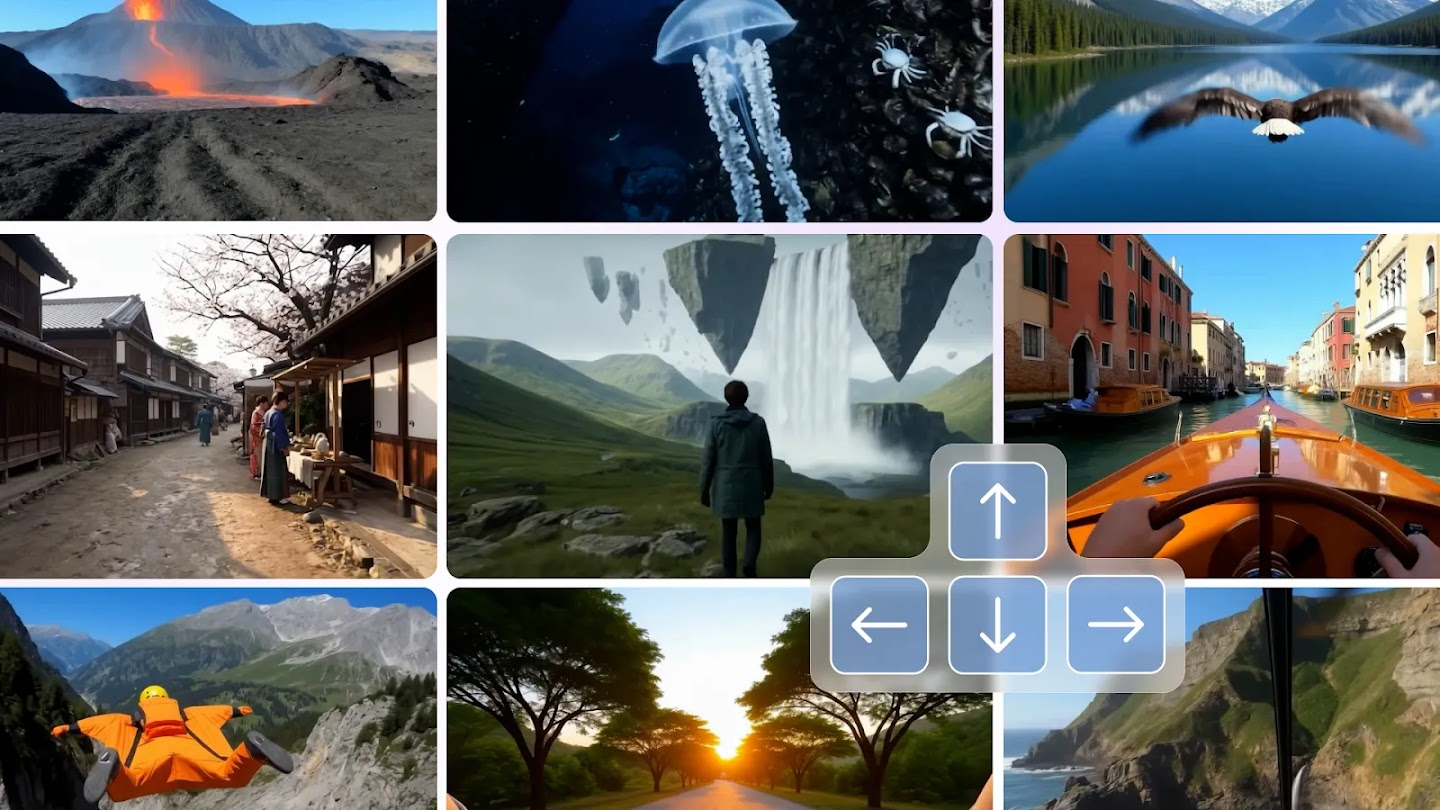

World models as environment generators. This is Genie 3 and Fei-Fei Li's Marble — AI that generates navigable, interactive 3D environments from text prompts, images, or video. The primary use cases are training AI agents in simulation, building game worlds, rapid prototyping for architects and designers, and creating synthetic training data at scale.

World models as implicit knowledge structures. This is the ongoing debate about whether LLMs already contain something like a world model buried in their weights — an implicit representation of physical and causal structure that emerges from training on enough text about the world. The honest answer is: partially, inconsistently, and not well enough to rely on.

These three definitions are not competing. They are complementary layers of the same underlying ambition — and they are converging faster than most people outside the field realize.

Why LLMs Fall Short — and Why That Matters Now

The case against LLMs as a path to general AI has been Yann LeCun's consistent position for years. His departure from Meta and the $3 billion pre-launch valuation of AMI Labs represents its most emphatic possible market validation.

"LLMs are too limiting. Scaling them up will not allow us to reach AGI."

— Yann LeCun, NVIDIA GTC presentation

The critique is specific. LLMs predict text sequences. They do not build a coherent internal model of physical reality. This produces a set of characteristic failure modes that scaling does not fix: hallucinations about spatial relationships, inconsistency about physical causality, inability to generalize to novel physical scenarios, and — most importantly for robotics and autonomous systems — no capacity to plan actions based on genuine understanding of consequences.

A benchmark paper presented at a 2025 conference documented "striking limitations" in LLMs' basic world-modeling abilities, including "near-random accuracy when distinguishing motion trajectories." These are not edge cases. They are fundamental.

Even researchers more sympathetic to LLMs acknowledge the gap. Angjoo Kanazawa, an assistant professor at UC Berkeley, put it clearly:

"How do you develop an intelligent LLM vision system that can actually have streaming input and update its understanding of the world and act accordingly? That's a big open problem. I think AGI is not possible without actually solving this problem."

The practical stakes are not abstract. The companies building humanoid robots, autonomous vehicles, surgical assistants, and industrial automation systems all face the same ceiling: AI that can describe the world but cannot reliably navigate it. Current Vision-Language-Action models used in robotics are increasingly capable within narrow task definitions, but they remain brittle outside the distributions they were trained on. LeCun's characterization is pointed:

"There are a lot of companies building humanoid robots... they do kung-fu and impressive things. This is all precomputed. None of those companies — absolutely none of them — has any idea how to make those robots smart enough to be useful."

Brett Adcock, founder of Figure AI, has pushed back on this characterization, dismissing LeCun's "academic caution" and pointing to Helix 02's industrial deployment results. DeepMind's Demis Hassabis occupies a diplomatic middle ground — acknowledging that language alone is insufficient for robotics while continuing to develop both approaches in parallel. The honest position is that both camps have a point. LLM-derived methods are working for narrow, high-value tasks right now. Whether they scale to general intelligence is the unresolved question.

The Race Is Already On — And It Is Well-Funded

The activity in world models over the past eight months represents the most concentrated surge of serious investment in a single AI paradigm since the transformer architecture took over natural language processing in 2019. What is different this time is that it is happening on multiple technical fronts simultaneously.

AMI Labs: The €500 Million Research Bet

Yann LeCun's new company, pronounced "ah-mee" (the French word for friend), is headquartered in Paris — deliberately outside Silicon Valley's LLM-focused ecosystem. LeCun described the location choice directly:

"We are not going to get to human-level AI just by scaling LLMs. Silicon Valley is completely hypnotized by the current models of generative AI. To pursue this kind of new research, you have to go outside the Valley — to Paris."

AMI Labs builds on LeCun's JEPA (Joint Embedding Predictive Architecture) research at Meta. The V-JEPA 2 paper, published in 2025, describes a model trained on over 1 million hours of internet video that can be adapted with limited robot data to enable planning tasks on real robot arms. The architecture learns by predicting representations of image regions from other regions — developing abstract understanding of visual scenes without needing explicit labels. This parallels how children develop intuitive physics through observation: a child watching objects fall develops an internal model of gravity without anyone explaining Newton's laws.

The company's executive chairman is LeCun himself; the CEO role belongs to Alex LeBrun, previously co-founder of Nabla, a health AI startup with offices in Paris and New York. AMI Labs has stated its target applications as industrial process control, wearable devices, robotics, and healthcare — all domains where reliability and predictive accuracy matter far more than generating plausible text.

Meta is expected to be one of AMI's first clients, despite LeCun's long-running public criticism of the company's AI direction. AMI has already hired Tim Brooks away from Google DeepMind, where he previously led the world models team — a significant talent signal.

World Labs: Spatial Intelligence at $5 Billion

Fei-Fei Li's World Labs represents the commercial vanguard of the environment-generation approach. The company launched in 2024 with $230 million in funding and shipped its first product, Marble, in November 2025.

Marble generates complete, navigable 3D worlds from text prompts, images, video, or rough 3D layouts. Users can edit existing worlds, expand them, and combine them with others. The pricing ranges from free tiers to $95/month for professional use — making world model technology commercially accessible in a way that neither LeCun's research-focused architecture nor DeepMind's research lab produces.

Fei-Fei Li's framing connects to her foundational work on ImageNet. If ImageNet created the visual dataset that enabled the deep learning revolution, spatial intelligence — the capacity to understand and navigate three-dimensional environments — is the next layer that AI still fundamentally lacks.

Google DeepMind: Genie 3 and the Simulator Layer

DeepMind's approach, characterized in the research community as "occupying the middle" between LeCun and World Labs, is arguably the most technically mature. Genie 3, released in August 2025, is described as "the first real-time interactive general-purpose world model" — an AI that generates persistent, navigable 3D environments at 720p and 24 frames per second that users or AI agents can move around in for several minutes.

The key technical achievement is that Genie 3 does not rely on hard-coded physics engines. The model teaches itself how the world works through training — meaning it develops an implicit physics model rather than following programmed rules. This distinction matters enormously for generalization: a hard-coded physics engine fails on scenarios it was not programmed for; a learned physics model, in principle, generalizes to novel situations.

DeepMind pairs Genie 3 with SIMA 2, a generalist agent that can be trained inside Genie-generated worlds and tested across them. The SIMA agent receives natural language instructions and navigates generated environments to achieve goals — providing an end-to-end pipeline from environment generation to agent training that no other lab currently offers at this scale.

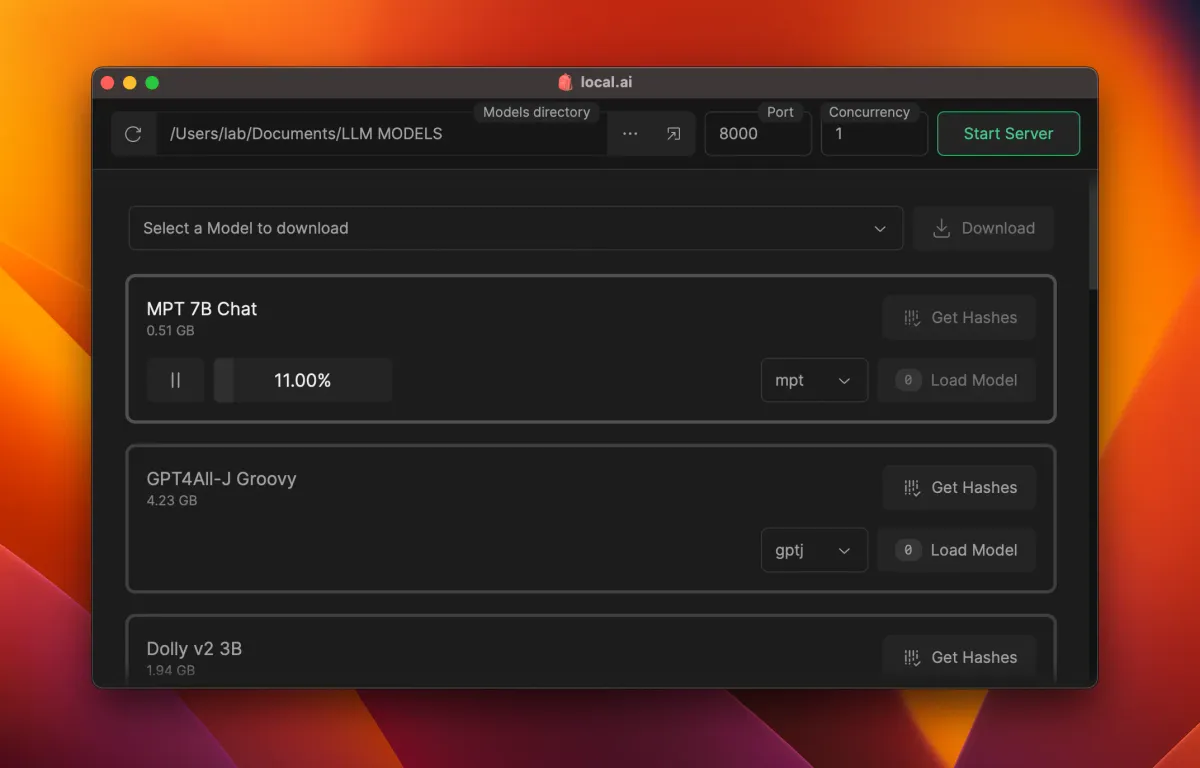

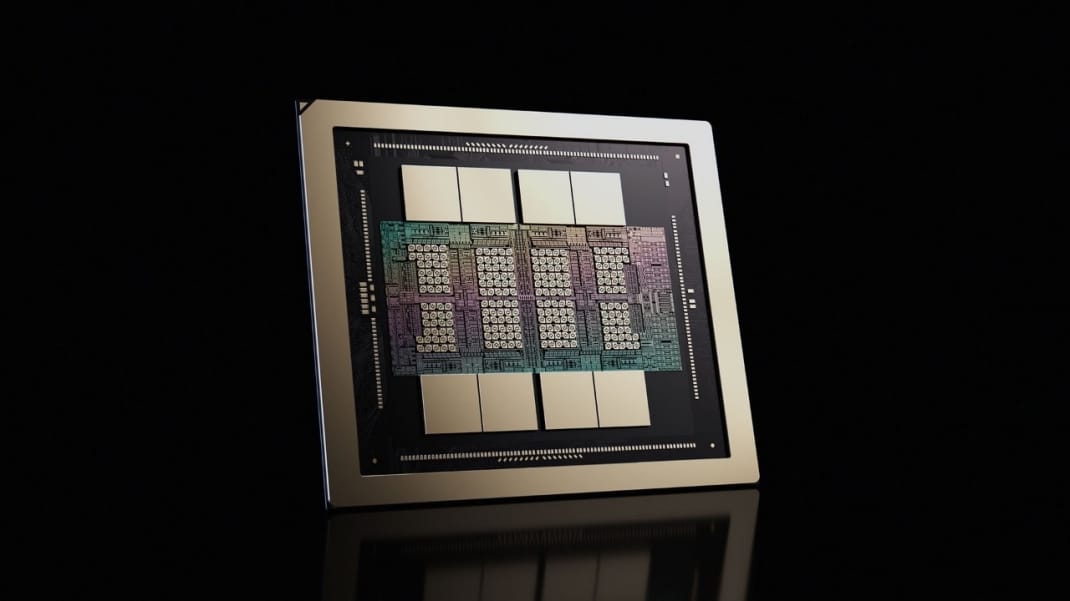

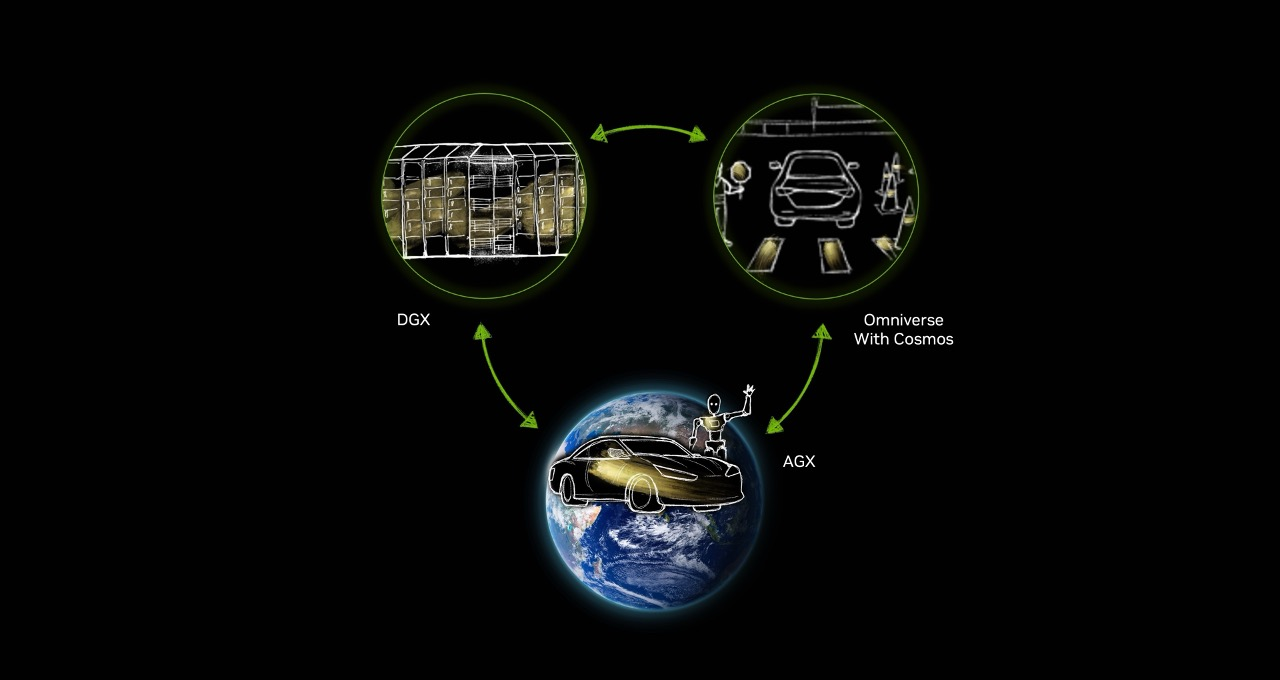

Nvidia Cosmos: The Infrastructure Everyone Depends On

While AMI Labs, World Labs, and DeepMind compete on research frontiers, Nvidia is building the infrastructure layer that all of them will depend on. Cosmos was announced at CES 2025 and has surpassed 2 million downloads — a number that reflects the size of the developer community now building on top of it.

The platform was trained on 9,000 trillion tokens drawn from 20 million hours of real-world data spanning driving scenarios, industrial settings, robotics operations, and human-environment interactions. It comes in three model families:

| Family | Function |

|---|---|

| Cosmos Predict | Simulates future states of dynamic environments for robot planning |

| Cosmos Transfer | Bridges simulated and real environments; adds weather, lighting, terrain variation |

| Cosmos Reason | Physics-aware chain-of-thought reasoning for intelligent machines |

The February 2026 release of Cosmos Predict 2.5 added specialized checkpoints for autonomous vehicle applications and robot policy models. Companies including 1X, Agility Robotics, Boston Dynamics, Figure AI, Uber, and Waabi are already building on Cosmos.

Nvidia's strategic position here is characteristic. They are not betting on which world model architecture wins — JEPA, Genie, or something not yet invented. They are building the compute platform, the simulation infrastructure, and the developer tooling that all of them will run on regardless. The Cosmos download numbers suggest the bet is working.

What World Models Make Possible That LLMs Cannot

The applications that become unlocked by world models are not incremental improvements on what LLMs already do. They are categorically different capabilities.

Robots That Can Actually Generalize

The hardest unsolved problem in robotics is not hardware. It is generalization — getting a robot to perform reliably in environments and with objects it has not seen before, under conditions that differ from training. Current robots, including the most advanced commercial humanoids, remain brittle outside their trained distributions. They can perform impressive demonstrations in controlled settings; they fail unexpectedly in unstructured real-world conditions.

World models address this directly. By training in diverse simulated environments — including edge cases and failure modes that would be dangerous or impractical to create physically — robots can develop genuine generalization. Companies like Hillbot are using Cosmos to generate terabytes of high-fidelity 3D environments for robot training. Serve Robotics collects 1 million miles of operational data monthly and uses it to generate simulation environments that train perception models for scenarios the physical robots have not yet encountered.

Autonomous Vehicles With Real Physics Understanding

The autonomous vehicle problem is fundamentally a world modeling problem. A self-driving system that understands physics — that models how a wet road surface affects stopping distance, how a pedestrian's trajectory will evolve, how an intersection will clear — is a categorically safer system than one that pattern-matches against its training distribution.

Waabi, Wayve, and Foretellix are all evaluating Cosmos for AV development. Wayve specifically uses it to search for edge-case driving scenarios — the rare situations that a purely empirical training approach will underrepresent. NVIDIA's Alpamayo family, released in January 2026, introduces the first open large-scale reasoning VLA model for autonomous vehicles, enabling vehicles to understand their surroundings and explain their actions.

A New Frontier for Scientific Simulation

DreamerV3, an AI agent that learns a world model, improves its behavior by "imagining" future scenarios rather than requiring real-world trial and error. An April 2025 paper in Nature documented DreamerV3's results across a range of tasks. This capacity — learning from imagined experience — has implications that extend well beyond robotics and autonomous vehicles into drug discovery, materials science, climate modeling, and any domain where real-world experimentation is expensive, slow, or dangerous.

The Training Data Bottleneck, Solved

Perhaps the most immediately practical application of world models is the most unglamorous one: generating training data. Unlike LLMs, which could be trained on the existing internet, physical AI systems need grounded, physics-aware data that is enormously expensive and time-consuming to collect in the real world.

World models solve this by generating it synthetically — at scale, with variation in lighting, weather, terrain, and environmental conditions that real-world collection cannot efficiently provide. Nvidia's synthetic data generation pipeline, connecting Omniverse with Cosmos, is already used by companies ranging from mining operations improving boulder detection systems to surgical robotics companies training on rare procedure scenarios.

The Honest Skeptical Case

Serious researchers acknowledge real challenges that boosters do not always highlight.

The evaluation problem. Measuring progress in world models is harder than measuring progress in language models. LLM benchmarks are imperfect but abundant. World model evaluation requires environment-based metrics, and the field has not converged on standard benchmarks that allow apples-to-apples comparison across approaches.

The data problem. Interaction data — the grounded, sequential, causally structured data that world models need — is expensive to collect and hard to scale. The internet gave LLMs their training set for nearly free. There is no equivalent repository of embodied interaction data that world models can be scraped from.

The reality gap. Training in simulation does not automatically transfer to the real world. The physics of a simulated floor does not perfectly match the physics of a real floor. The gap between sim and real remains a persistent challenge, and while Cosmos Transfer addresses it partially, the fundamental problem has not been solved.

The architecture debate. Whether JEPA-based architectures, generative video models, or something not yet invented will prove to be the right foundation for world models is genuinely unresolved. LeCun is confident; others are not. The field is young enough that betting on any single approach is a real risk.

The timescale question. LeCun himself describes AMI Labs as a "longer-term paradigm shift" — not an incremental improvement. That is an honest framing, but it is also a warning about commercial timelines. The gap between impressive research demonstrations and robust, reliable real-world systems in AI has historically been larger and longer than researchers expected.

What This Means for Businesses and Developers Today

You do not need to wait for the theoretical debates to settle to start thinking about where world models fit in your organization's AI strategy.

If you are building robots or autonomous systems: World model infrastructure is already production-grade enough to integrate into training pipelines. Cosmos is open, actively maintained, and adopted by serious robotics companies. The question is not whether to use it but how to specialize it for your specific physical environment and task distribution.

If you are in gaming, simulation, or spatial computing: Marble and Genie 3 represent commercially accessible world model tools that can accelerate environment generation and prototype testing. World Labs' API is available today.

If you are a developer building AI agents: World model thinking influences how software gets built in ways that go beyond robots and AVs. Agents need state and memory, not just chat logs. Workflows will increasingly rely on simulation and preview — "what will happen if I do X?" Multimodal inputs including video, screens, and sensor data will become standard. Building for this now is an early mover advantage.

If you are an enterprise: The companies building internal world model expertise today are the ones with compounding advantages as the technology matures. The talent competition is already fierce — Meta lost Tim Brooks to AMI Labs from its own world models team.

The LLM era is not over. Text-based AI is becoming more capable, more embedded, and more economically central by the quarter. But the argument that it is the only game in town — that scaling transformers trained on text will eventually produce systems that can reliably navigate physical reality — is not holding up under scrutiny from the field's most credentialed researchers.

World models are the infrastructure for AI that can act, not just advise. The companies funding them at billion-dollar valuations before they have products understand something that most coverage of AI is still missing.

Frequently Asked Questions

What is a world model in AI?

A world model is an AI system that builds an internal representation of reality — understanding physics, spatial relationships, cause and effect, and how environments change over time. Unlike large language models, which predict text sequences, world models learn from video, sensor data, and physical interaction to simulate how environments evolve. This allows AI systems to plan actions, anticipate consequences, and generalize to novel situations rather than pattern-matching against training examples.

How are world models different from large language models?

LLMs learn from text descriptions of the world and predict what text should come next. They have no coherent internal model of physical reality, which is why they hallucinate spatial relationships and struggle with physical causality. World models learn from the world itself — from video, movement data, and interaction — and build representations that can be queried, updated, and acted from. The difference matters most in robotics, autonomous vehicles, and any domain where reliable physical understanding is required.

Who is building world models in 2026?

The leading organizations are AMI Labs (founded by Yann LeCun, seeking €500M at a €3B valuation), World Labs (founded by Fei-Fei Li, in talks at a $5B valuation), Google DeepMind (with Genie 3 and SIMA 2), and Nvidia (with the Cosmos platform, which has surpassed 2 million downloads). Runway, Wayve, and several China-based companies including Tencent are also active in the space.

What is Nvidia Cosmos and why does it matter?

Nvidia Cosmos is an open platform of world foundation models purpose-built for physical AI development, including robots and autonomous vehicles. It was trained on 9,000 trillion tokens from 20 million hours of real-world data. Its three model families — Predict, Transfer, and Reason — allow developers to simulate future states, generate synthetic training data, and enable physics-aware reasoning. Cosmos is available free and open-source, making it the most accessible entry point for organizations building physical AI systems.

What is Genie 3 and what can it do?

Genie 3, released by Google DeepMind in August 2025, is described as the first real-time interactive general-purpose world model. It generates navigable 3D environments from text prompts at 720p and 24 frames per second, maintaining visual consistency for several minutes of real-time interaction. Unlike previous systems, it teaches itself how the world works through training rather than relying on hard-coded physics engines, enabling better generalization to novel scenarios. DeepMind uses it as a training environment for its SIMA generalist agent.

What is Yann LeCun's argument against LLMs?

LeCun argues that scaling LLMs will not produce general intelligence because they model text about the world rather than the world itself. His critique centers on LLMs' inability to build coherent internal representations of physical reality, which leads to hallucinations, physical inconsistency, and inability to generalize to novel physical scenarios. His new company, AMI Labs, is building on JEPA (Joint Embedding Predictive Architecture) — a different approach that learns by predicting representations of visual scenes rather than predicting text tokens.

What are the real-world applications of world models?

Current applications include generating synthetic training data for robots and autonomous vehicles, creating interactive 3D environments for gaming and architecture, training AI agents in simulation before real-world deployment, and enabling robots to generalize to novel physical scenarios. Future applications include AI systems that can plan complex action sequences, surgical assistants that model anatomy during procedures, and industrial automation that adapts to unstructured environments without reprogramming.

Are world models ready for business use today?

Partially. Nvidia Cosmos is production-grade and adopted by serious robotics and AV companies. World Labs' Marble is commercially available for 3D environment generation. Genie 3 is available to AI Ultra subscribers. For most enterprises, the most practical near-term application is using world model infrastructure to generate synthetic training data — a solved problem that immediately improves AI systems trained for physical environments. Fully autonomous robots and vehicles enabled by world models remain a research and development horizon, not a deployment reality.

Related Articles