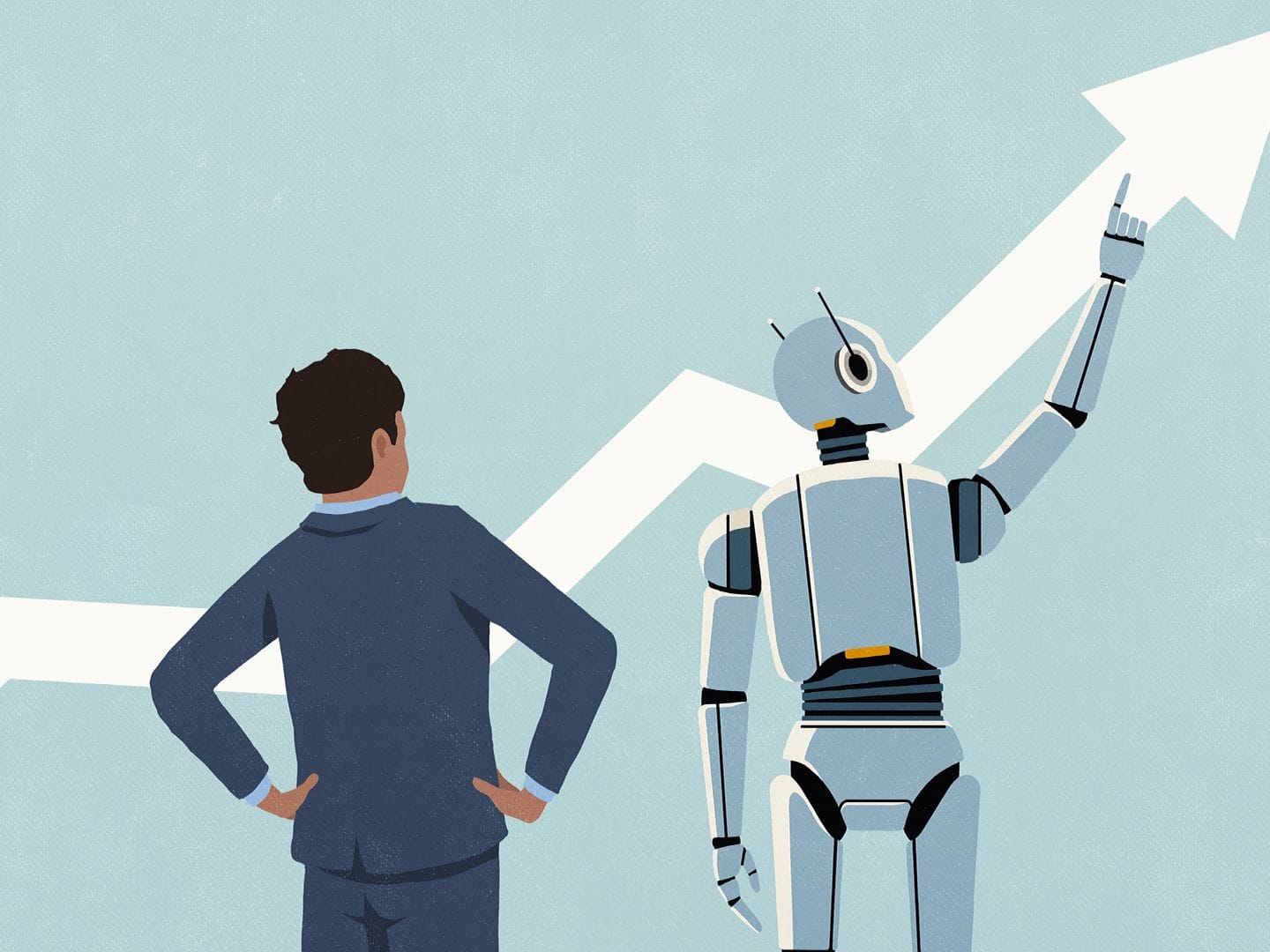

In predictions published in December 2025, Stanford HAI faculty across computer science, medicine, law, and economics converged on a single theme: the era of AI evangelism is giving way to an era of AI evaluation. The central question has shifted from "Can AI do this?" to "How well, at what cost, and for whom?"

This convergence matters because HAI researchers rarely agree on specifics. What drove them to a shared diagnosis is what is also pressing on enterprises: years of investment with limited measurable return, and mounting pressure to find out why.

The Core Argument: Failures Are About to Go Public

James Landay, co-director of Stanford HAI, put the 2026 forecast directly: "In 2026 we'll hear more companies say that AI hasn't yet shown productivity increases, except in certain target areas like programming and call centers. We'll hear about a lot of failed AI projects."

His follow-up question is equally telling: "Do people learn from those failures and figure out where to apply AI appropriately? Maybe."

That "maybe" is the honest part. Organizations have been quietly shelving underperforming AI pilots rather than publishing what went wrong. What changes in 2026 is that failures become harder to conceal as pressure mounts to justify AI spending with concrete, measurable returns. Landay also predicted no AGI in 2026, a position once contrarian and now mainstream, with the implication that companies need to stop waiting for it and start measuring what they actually have.

The Economics: Real-Time Dashboards Replace Lagged Studies

Erik Brynjolfsson, director of Stanford's Digital Economy Lab, predicts that arguments about AI's economic impact will finally give way to careful measurement in 2026. Specifically, he anticipates high-frequency "AI economic dashboards" that track, at the task and occupation level, where AI is boosting productivity, displacing workers, or creating new roles — functioning like real-time national accounts for AI's labor market effects.

The stakes are already visible. His "Canaries in the Coal Mine" work with ADP has documented that early-career workers in AI-exposed occupations are experiencing weaker employment and earnings outcomes compared to less-exposed peers. That signal is currently detectable only through retrospective analysis. In 2026, Brynjolfsson argues, it will be updated monthly and watched alongside revenue dashboards by executives and policymakers alike.

The Legal Sector: Demos Are No Longer Enough

Julian Nyarko, professor of law and Stanford HAI associate director, identified a clean transition for legal AI: "Firms and courts might stop asking 'Can it write?' and instead start asking 'How well, on what, and at what risk?'"

The first question can be answered by a demo. The second requires measurement against outcomes. Nyarko predicts that standardized, domain-specific evaluations will become table stakes in 2026, tying model performance to concrete legal outcomes such as accuracy, citation integrity, privilege exposure, and turnaround time. He also sees AI tackling harder work — multi-document reasoning, argument mapping, surfacing counter-authority with traceable provenance — which demands evaluation frameworks that go well beyond simple text generation.
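As a rough illustration of what tying model performance to outcomes could look like, here is a minimal Python sketch that summarizes a batch of human-graded answers. It is not Nyarko's method or any published benchmark: the `EvalItem` fields, the `summarize` function, and the metric definitions are all hypothetical, chosen only to mirror the accuracy, citation integrity, and turnaround measures named above.

```python
import math
from dataclasses import dataclass


@dataclass
class EvalItem:
    """One graded model answer. All fields are illustrative assumptions."""
    correct: bool            # reviewer judged the answer legally correct
    citations_total: int     # citations the model produced
    citations_verified: int  # citations a reviewer confirmed exist and support the claim
    seconds: float           # turnaround time for this answer


def summarize(items: list[EvalItem]) -> dict[str, float]:
    """Aggregate graded items into three outcome-level metrics."""
    if not items:
        raise ValueError("need at least one graded item")
    n = len(items)
    total_cites = sum(i.citations_total for i in items)
    verified = sum(i.citations_verified for i in items)
    return {
        "accuracy": sum(i.correct for i in items) / n,
        # Undefined (NaN) when the model produced no citations at all.
        "citation_integrity": verified / total_cites if total_cites else math.nan,
        "mean_seconds": sum(i.seconds for i in items) / n,
    }


# Made-up sample data, for demonstration only.
sample = [
    EvalItem(correct=True, citations_total=4, citations_verified=4, seconds=30.0),
    EvalItem(correct=False, citations_total=2, citations_verified=1, seconds=50.0),
]
report = summarize(sample)
print(report)
```

The point of the sketch is that every number depends on a reviewer's judgment recorded per item, which is exactly what a demo cannot supply.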

Healthcare and Education: Two Sectors Drowning in Pilots

Russ Altman, professor of bioengineering and a senior HAI fellow, offered the most vivid description of the healthcare evaluation problem: "A typical hospital is inundated with interest from startups that want to sell them a solution for X. Each of those individual solutions is not unreasonable, but in aggregate, they're like a tsunami of noise coming at an executive."

His prediction for 2026: the industry will develop systematic evaluation frameworks covering technical performance, training population characteristics, implementation friction, staff disruption, and ROI — accessible even to less-resourced settings.

Education faces the same structural problem. Stanford's AI+Education Summit in February 2026 produced a blunt diagnosis from Susan Athey, Economics of Technology Professor at Stanford GSB: "The bottleneck is we have too many pilots actually, and still not enough implementations that are actually effective." AI has also broken the core assumption of academic assessment: that a strong product indicates a strong learning process. Students can now generate polished work without engaging in meaningful learning, which means educators have to measure process rather than output.

Angèle Christin, associate professor of communication and HAI senior fellow, added the least-discussed dimension: AI can misdirect, deskill, and harm people in specific use cases, and the current AI buildout carries significant environmental costs. If extended reliance on AI reduces the underlying expertise it is meant to amplify, the workforce implications are different from what headcount analysis would suggest.

What This Means for Businesses in 2026

The practical pressure in 2026 will not come from AI itself but from the accountability infrastructure surrounding it. Evaluation frameworks are moving from optional internal tools to standard procurement requirements. Vendors who cannot demonstrate verifiable performance against domain-specific benchmarks, rather than lab accuracy or demo success, will face a harder selling environment.

The ROI gap is already documented. The 2025 NBER executive surveys found that 89% of executives reported no measurable productivity impact from AI over the previous three years. PwC found that more than half of 4,500 business leaders saw neither increased revenue nor decreased costs. These are not signs of technology failure. They are signs of measurement failure, and Stanford's prediction is that 2026 is when that failure becomes less tolerable.

The question that will determine AI ROI is not which tool is most impressive in a demo, but which deployment produces verifiable, measurable changes in specific workflows. That requires defining what you are measuring before you deploy — which is itself a capability most organizations are still building.
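One way to picture "defining what you are measuring before you deploy" is a small plan object that is fixed up front and checked later. The Python sketch below is an assumption-laden illustration, not a method described by the Stanford researchers: `MeasurementPlan`, `relative_change`, `meets_target`, and the example numbers are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MeasurementPlan:
    """Hypothetical plan, written down before the tool goes live."""
    metric: str              # what is measured, e.g. minutes per resolved ticket
    baseline: float          # value observed before deployment
    min_improvement: float   # smallest relative gain that counts as a real change
    lower_is_better: bool = True


def relative_change(plan: MeasurementPlan, observed: float) -> float:
    """Relative improvement against the baseline; positive means better."""
    if plan.lower_is_better:
        delta = plan.baseline - observed
    else:
        delta = observed - plan.baseline
    return delta / plan.baseline


def meets_target(plan: MeasurementPlan, observed: float) -> bool:
    """True only if the observed value clears the pre-agreed threshold."""
    return relative_change(plan, observed) >= plan.min_improvement


# Made-up numbers: 20 minutes per ticket before deployment, 10% gain required.
plan = MeasurementPlan(metric="minutes_per_ticket", baseline=20.0, min_improvement=0.10)
print(meets_target(plan, 17.0))  # 15% faster than baseline
print(meets_target(plan, 19.0))  # only 5% faster than baseline
```

Because the plan is frozen, the threshold cannot be adjusted after the results come in, which is the discipline the paragraph above describes.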

Wrap-Up

Stanford's collective prediction is not that AI will fail in 2026. It is that the industry's relationship with evidence is changing, and that change will be uncomfortable for organizations and vendors that have thrived where claims were rarely tested rigorously.

Every transformative technology goes through this maturation. In 1987 the economist Robert Solow observed that the computer age was visible everywhere except in the productivity statistics, and it took roughly another decade for the productivity surge of the late 1990s to materialize. The question is whether AI will compress that lag by measuring what is working and what is not, or extend it by continuing to mistake deployment for impact. As Landay acknowledged with his "maybe," that answer is genuinely open. What is not open is whether the question will be asked.

Frequently Asked Questions

What is Stanford HAI's core prediction for AI in 2026?

Stanford HAI faculty across computer science, medicine, law, and economics predicted in December 2025 that 2026 will mark a shift from AI evangelism to AI evaluation. The central question is no longer "Can AI do this?" but "How well, at what cost, and for whom?" This means greater emphasis on domain-specific benchmarks, real-world performance measurement, and accountability for AI investments rather than capability demonstrations.

What did James Landay specifically predict for 2026?

Landay, co-director of Stanford HAI, predicted that there will be no artificial general intelligence in 2026, that more companies will publicly acknowledge AI has not delivered broad productivity gains outside targeted areas like programming and call centers, and that many failed AI projects will surface publicly. He also predicted that AI sovereignty, meaning countries building independent AI infrastructure or running AI on domestic hardware to keep data within borders, will become a major geopolitical theme.

What are AI economic dashboards and why does Stanford think they matter?

Erik Brynjolfsson predicted the emergence of high-frequency AI economic dashboards that track, at the task and occupation level, where AI is boosting productivity, displacing workers, or creating new roles. Drawing on payroll data, platform usage data, and behavioral task data, these tools would function like real-time national accounts for AI's labor market effects. Brynjolfsson argues that executives and policymakers need this data updated monthly rather than through studies published years after the fact.

What is Stanford predicting for AI in the legal industry?

Julian Nyarko, professor of law and Stanford HAI associate director, predicts that legal firms and courts will move from asking whether AI can write to asking how well it performs on specific legal tasks, at what accuracy, and at what risk. He expects standardized, domain-specific evaluations to become table stakes, tying model performance to concrete legal outcomes such as citation integrity, privilege exposure, and turnaround time. He also predicts AI systems will tackle more complex tasks including multi-document reasoning and argument mapping.

Why does Stanford say AI is creating an assessment crisis in education?

AI has broken the core assumption of academic assessment: that a strong product indicates a strong learning process. Students can now generate polished work without engaging in meaningful learning. Stanford researchers argue that educational institutions need to shift from evaluating outputs to evaluating learning processes, and that AI itself needs to be treated as a curriculum topic rather than just a tool. The broader diagnosis is that schools have too many AI pilots and too few implementations that are actually effective.

What is the deskilling concern Angèle Christin raised?

Christin, associate professor of communication and a Stanford HAI senior fellow, identified evidence that AI can misdirect, deskill, and harm people in some use cases. The deskilling concern is that extended reliance on AI tools may reduce the underlying expertise and judgment that makes those tools useful in the first place. If AI assistance prevents the development of expertise rather than amplifying it, the long-term workforce implications are different from what simple headcount displacement analysis would suggest.

Is Stanford predicting AI will fail in 2026?

No. The Stanford predictions are not pessimistic about AI's ultimate potential. They are pessimistic about the adequacy of how AI is currently being evaluated and deployed. The consensus view is that the technology is real and capable in specific domains, but that the measurement infrastructure has not kept pace with deployment, and that 2026 will be the year accountability catches up to ambition. Brynjolfsson's own research suggests US productivity grew roughly 2.7% in 2025, nearly double the prior decade's average, which he attributes partly to AI's macroeconomic effects.

What does the shift from evangelism to evaluation mean for enterprise AI strategy?

For organizations buying or deploying AI, evaluation frameworks are moving from optional internal tools to standard procurement requirements. Products that cannot demonstrate verifiable performance against domain-specific benchmarks, rather than lab accuracy or demo success, will face a harder commercial environment. Leaders making AI investment decisions need to specify what outcomes they are measuring, at what frequency, and against what baseline, before deployment rather than as an afterthought.
