Before the end of 2024, every developer building an AI-powered application faced the same quiet nightmare. You had your language model. You had your database, your Slack workspace, your GitHub repos, your CRM, your search index. And between the model and all of those tools sat an endless wall of bespoke glue code, one-off API wrappers, and integration logic that broke the moment anything changed upstream.

It was not a tooling problem. It was an architecture problem. And it was getting worse every month as AI moved closer to the center of how software gets built and run.

Then, in November 2024, Anthropic released something that addressed this, though few people outside the developer community noticed. The Model Context Protocol (MCP) landed as an open-source specification for connecting AI models to external tools, data sources, and services. The idea was straightforward: instead of every AI platform and every external tool building its own proprietary integration, you define one universal protocol and everybody builds to that.

The analogy that stuck was USB-C. One port, every device. One protocol, every tool.

What happened next was not expected, even by Anthropic. Within a year, the protocol had been adopted by OpenAI, Google DeepMind, Microsoft, and dozens of major developer platforms. SDK downloads had reached 97 million a month. And on December 9, 2025, Anthropic donated MCP to the newly formed Agentic AI Foundation under the Linux Foundation, cementing it as the neutral, community-owned infrastructure standard for the agentic AI era.

This is not a product announcement. It is an infrastructure moment. And understanding it matters for anyone building with AI, deploying AI in a business, or trying to understand where this technology is actually going.

What MCP Is and How It Works

At its core, the Model Context Protocol defines a standard way for AI systems to talk to the external world. Before MCP, connecting a model to a tool — say, a database, a web search engine, or a code execution environment — required custom integration work every single time. The model provider wrote their integration one way. The tool provider exposed their API another way. Developers in the middle wrote custom connectors that worked until they did not.

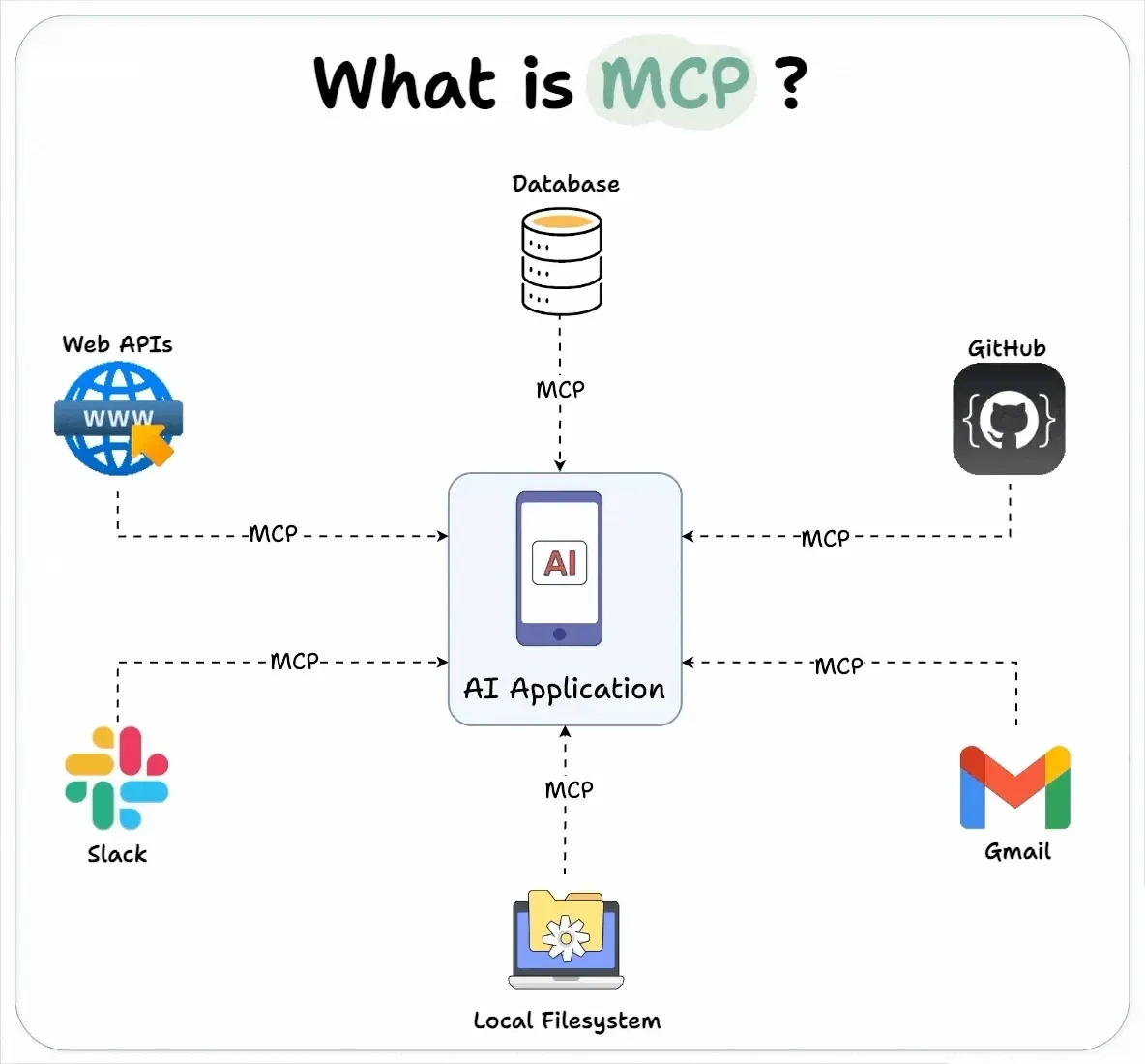

MCP replaces per-tool custom connectors with one standardized framework for integrating AI systems with external data sources and tools. Connections are bidirectional, so the AI application and the data source can each send messages to the other. The specification also covers contextual metadata tagging and interoperability across platforms.

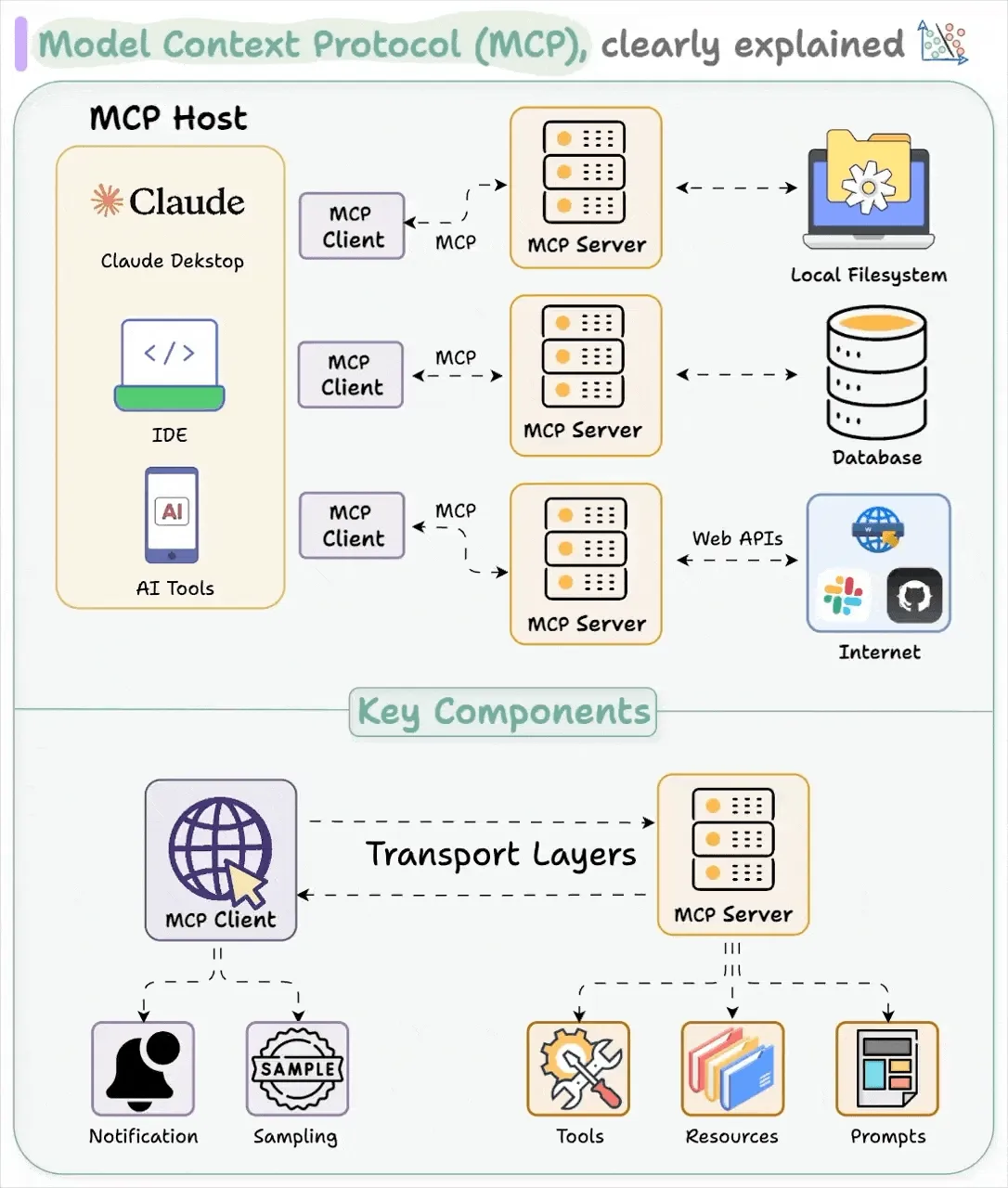

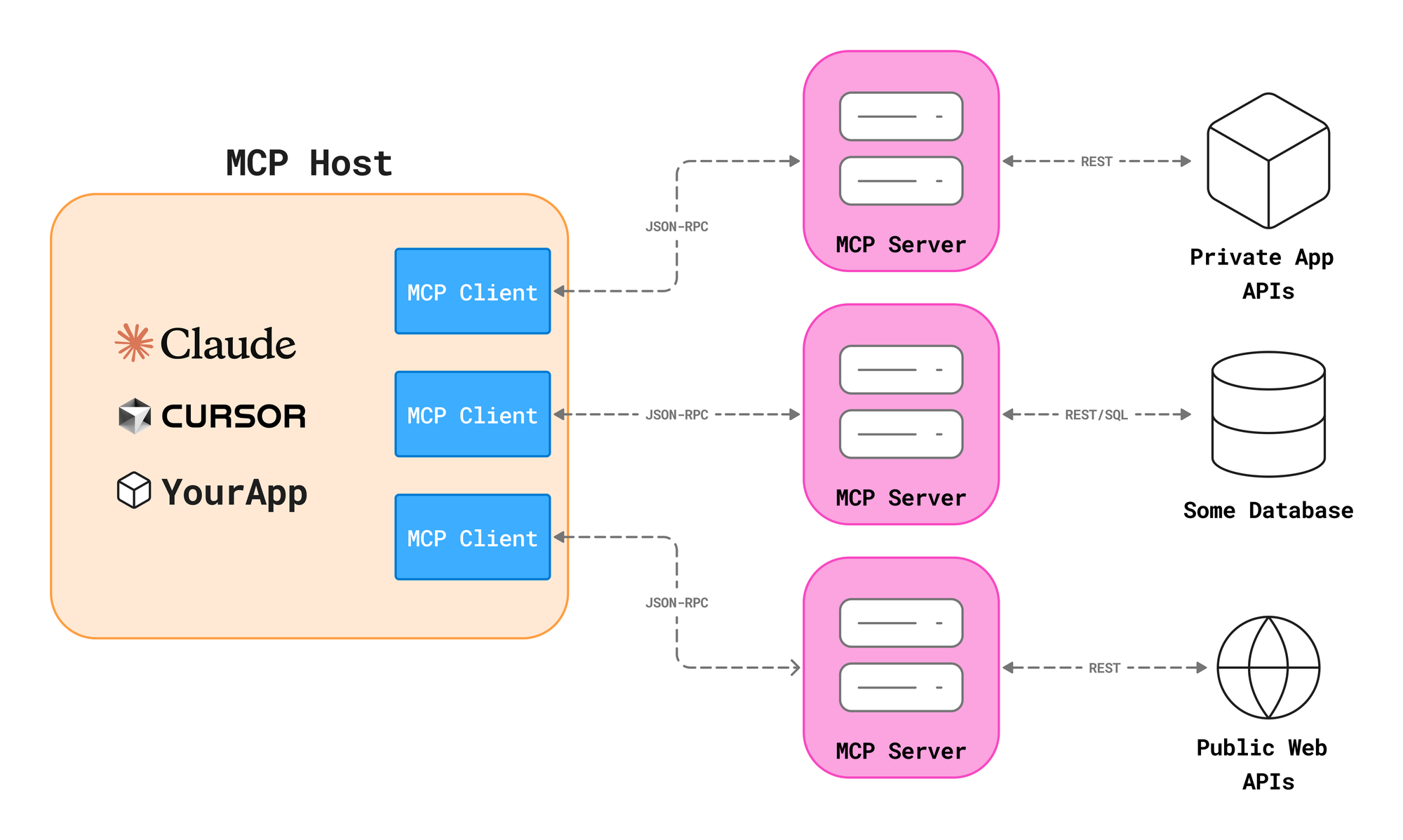

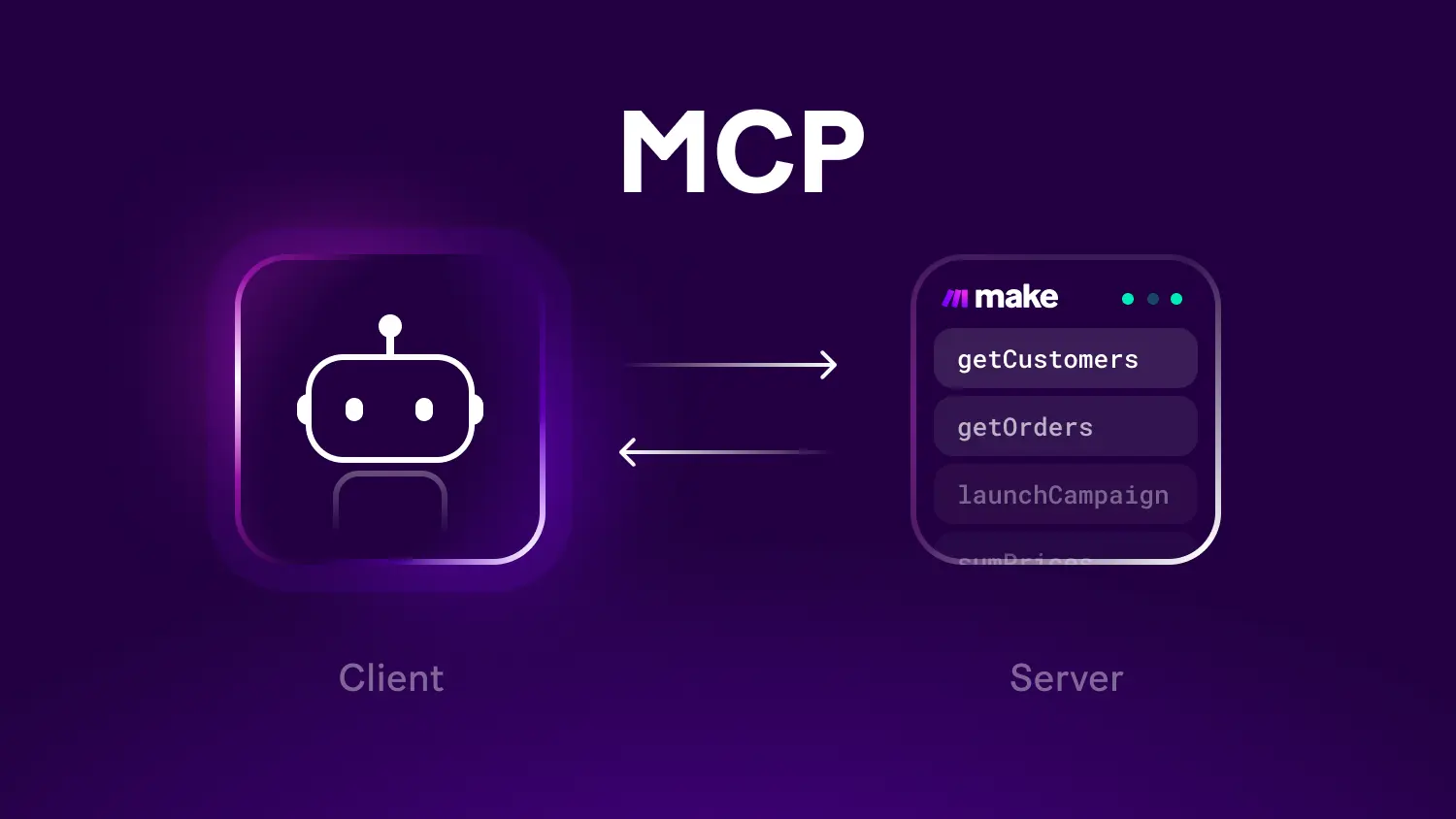

The technical architecture follows a client-server model. MCP clients — things like Claude, ChatGPT, Cursor, or any AI-powered IDE — connect to MCP servers, which are lightweight programs that expose specific capabilities through a unified interface. Those capabilities are organized around three core primitives: Resources, which give agents read-only access to data like documentation or database records; Tools, which allow agents to perform actions like running a shell command or sending an email; and Prompts, which provide standardized templates to give models the context they need for specific tasks.
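To make the three primitives concrete, here is a minimal sketch in plain Python. It does not use the official MCP SDK: `ToyServer`, the `tickets://open` URI, and the ticket data are all hypothetical names invented for illustration. The sketch only shows how a single server might sort its capabilities into read-only resources, side-effecting tools, and reusable prompt templates.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict


@dataclass
class ToyServer:
    """Hypothetical stand-in for an MCP server; NOT the official SDK."""
    name: str
    resources: Dict[str, Callable[[], str]] = field(default_factory=dict)
    tools: Dict[str, Callable[..., str]] = field(default_factory=dict)
    prompts: Dict[str, Callable[..., str]] = field(default_factory=dict)

    def resource(self, uri: str):
        """Register a read-only data source under a URI."""
        def register(fn):
            self.resources[uri] = fn
            return fn
        return register

    def tool(self, fn):
        """Register an action the agent may invoke."""
        self.tools[fn.__name__] = fn
        return fn

    def prompt(self, fn):
        """Register a reusable prompt template."""
        self.prompts[fn.__name__] = fn
        return fn


server = ToyServer("ticket-desk")
_tickets = {"T-1": "Login page returns 500"}  # illustrative in-memory data


@server.resource("tickets://open")
def open_tickets() -> str:
    # Resource: returns data, changes nothing.
    return "\n".join(f"{k}: {v}" for k, v in sorted(_tickets.items()))


@server.tool
def create_ticket(title: str) -> str:
    # Tool: performs an action with a side effect.
    ticket_id = f"T-{len(_tickets) + 1}"
    _tickets[ticket_id] = title
    return ticket_id


@server.prompt
def triage(ticket_id: str) -> str:
    # Prompt: a template that frames a task for the model.
    return f"Classify the severity of ticket {ticket_id} and suggest an owner."
```

The split matters to a client because each primitive carries a different level of risk: reading `tickets://open` is safe to do freely, while calling `create_ticket` changes state and is the kind of operation a client may want a user to approve.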

The whole thing runs on JSON-RPC 2.0, a clean and well-understood messaging standard, and it was deliberately designed to echo the Language Server Protocol — an earlier standard that solved a similar combinatorial problem in the IDE world. Before LSP, supporting a new programming language in a code editor required custom work from every editor team. After LSP, you built one language server and it worked across all compliant editors. MCP applies the same logic to AI connectivity.
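As a rough illustration of what travels over the wire, the sketch below handles two MCP-style requests in a JSON-RPC 2.0 envelope using only the standard library. The `tools/list` and `tools/call` method names come from the MCP specification, but the payloads here are deliberately simplified (real tool listings carry more fields, such as an input schema), and the `add` tool and the `handle` function are assumptions made up for this example.

```python
import json

# Hypothetical tool registry for the example.
TOOLS = {
    "add": {
        "description": "Add two integers.",
        "handler": lambda args: str(args["a"] + args["b"]),
    }
}


def _ok(req_id, result) -> str:
    return json.dumps({"jsonrpc": "2.0", "id": req_id, "result": result})


def _err(req_id, code: int, message: str) -> str:
    error = {"code": code, "message": message}
    return json.dumps({"jsonrpc": "2.0", "id": req_id, "error": error})


def handle(raw: str) -> str:
    """Dispatch one JSON-RPC 2.0 request and return the serialized response."""
    req = json.loads(raw)
    req_id = req.get("id")
    method = req.get("method")
    params = req.get("params", {})

    if method == "tools/list":
        listing = [{"name": n, "description": t["description"]}
                   for n, t in TOOLS.items()]
        return _ok(req_id, {"tools": listing})

    if method == "tools/call":
        tool = TOOLS.get(params.get("name"))
        if tool is None:
            # -32602 is the JSON-RPC 2.0 "Invalid params" code.
            return _err(req_id, -32602, "Unknown tool")
        text = tool["handler"](params.get("arguments", {}))
        return _ok(req_id, {"content": [{"type": "text", "text": text}]})

    # -32601 is the JSON-RPC 2.0 "Method not found" code.
    return _err(req_id, -32601, "Method not found")


request = {
    "jsonrpc": "2.0",
    "id": 1,
    "method": "tools/call",
    "params": {"name": "add", "arguments": {"a": 2, "b": 3}},
}
print(handle(json.dumps(request)))
```

Every message carries the same `jsonrpc`, `id`, and `method` or `result` fields, so a client needs one parser and one dispatcher regardless of which server it is talking to. That uniformity is the practical payoff of borrowing the LSP design.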

SDKs are available in languages including Python, TypeScript, C#, and Java, which covers most of the developer surface area where AI tooling is actually being built today.

Why the Industry Moved So Fast

Protocols fail all the time. The history of technology is littered with technically sound standards that nobody adopted because the timing was wrong, the politics were bad, or the initial implementation was too painful. MCP did not follow that pattern.

There are a few reasons it moved this quickly. First, the timing was right. The AI industry had just spent two years watching model capabilities outpace the infrastructure for actually deploying those capabilities in production. Developers were hungry for anything that reduced integration friction. Second, Anthropic made MCP open-source from day one, which meant anyone could extend it, fork it, or build on top of it without asking permission. That openness created a community of contributors across company lines.

Third, and perhaps most importantly, the protocol was designed to eliminate a problem that nobody had a competitive interest in preserving. "The main goal is to have enough adoption in the world that it's the de facto standard," MCP co-creator David Soria Parra told TechCrunch. "We're all better off if we have an open integration center where you can build something once as a developer and use it across any client."

OpenAI's official adoption in March 2025 was the inflection point. When the company behind the dominant consumer AI product endorsed a protocol built by a direct competitor, it sent a clear signal to the rest of the industry: this is going to be infrastructure. Build to it.

The Linux Foundation Move, Explained

The donation of MCP to the Agentic AI Foundation is more significant than it might appear on the surface. It is not just a governance transfer. It is a commitment from Anthropic, OpenAI, Google, Microsoft, AWS, Block, Cloudflare, and Bloomberg that the foundational plumbing of the agentic AI era will be neutral, open, and community-governed.

The choice of the Linux Foundation matters here. This is the organization that stewards the Linux kernel, Kubernetes, Node.js, and PyTorch. When critical infrastructure ends up in that house, it tends to stay there and grow. The Foundation's track record of maintaining vendor neutrality while enabling massive commercial ecosystems is exactly why this destination was chosen.

MCP joins two other founding projects at the AAIF: Block's Goose, an open-source local-first AI agent framework, and OpenAI's AGENTS.md, a markdown-based standard for giving AI coding agents project-specific instructions. Think of these three projects together as the basic plumbing of the agent era — the pipes, connectors, and instruction manuals that everything else will run on top of.

Mike Krieger, Chief Product Officer at Anthropic, put the rationale plainly at the announcement: "Donating MCP to the Linux Foundation as part of the AAIF ensures it stays open, neutral, and community-driven as it becomes critical infrastructure for AI."

The Competitive Landscape and What It Reveals

OpenAI co-founding the AAIF alongside Anthropic, with Google and Microsoft as supporting members, is a genuinely unusual moment in tech. These companies compete fiercely for developer attention, enterprise contracts, and model adoption. That they coalesced around a shared infrastructure standard reflects something important: none of them wants a world where tool integration is a competitive moat.

It is in every major AI platform's interest for MCP to succeed as a neutral standard. If integrations are standardized, competition shifts to model quality, pricing, speed, and developer experience, areas where each company believes it can win. A fragmented integration ecosystem, by contrast, advantages whoever has the largest existing ecosystem. MCP's neutrality therefore levels the competitive playing field.

From the enterprise software side, the membership list tells a similar story. AWS, Cloudflare, Bloomberg, IBM, Oracle, SAP, Snowflake, Docker, and Datadog are all AAIF members at various levels. These are the platforms where enterprise AI actually runs in production. Their presence signals that MCP adoption is not happening at the developer hobby level. It is being designed into the stack that Fortune 500 companies will depend on.

Google has its own extension frameworks within the Gemini ecosystem, and Microsoft has invested heavily in Semantic Kernel as an orchestration layer. Notably, neither company has positioned those internal frameworks as MCP competitors — they have moved toward MCP compatibility instead. That tells you something about where the consensus is landing.

What This Means for Developers

For a developer building an AI-powered product today, the practical implications are direct. If you build an MCP server that exposes your product's functionality, any MCP-compatible AI client can use it. Claude, ChatGPT, Cursor, Gemini, GitHub Copilot — all of them. You write the integration once and it works across the ecosystem.

The flip side also holds. If you are building an AI application and you want to give it access to external tools, you do not have to build custom integrations for each one. You plug into the MCP ecosystem and access the growing library of existing servers.
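The arithmetic behind "write it once" is easy to sketch. The client and tool counts below are illustrative assumptions, not measured figures: without a shared protocol every client needs its own connector for every tool, while with one each side implements the protocol a single time.

```python
def connectors_without_standard(clients: int, tools: int) -> int:
    """Every client needs its own connector for every tool."""
    return clients * tools


def connectors_with_standard(clients: int, tools: int) -> int:
    """Each client and each tool implements the shared protocol once."""
    return clients + tools


# Illustrative assumption: 5 AI clients and 40 tools.
clients, tools = 5, 40
print(connectors_without_standard(clients, tools))  # 200
print(connectors_with_standard(clients, tools))     # 45
```

The gap widens as either number grows, which is why a standard becomes more valuable with every client or tool that joins the ecosystem.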

The larger vision is more ambitious. If tools like MCP, AGENTS.md, and Goose become standard infrastructure, the agent landscape could shift from closed platforms to an open, mix-and-match software world reminiscent of the interoperable systems that built the modern web. The MCP Dev Summit in New York, scheduled for April 2026 as the AAIF's first major public event, will be an early signal of how the foundation plans to manage that community.

The Security Problem Nobody Has Fully Solved

It would be incomplete to write about MCP without addressing the legitimate security concerns that have emerged alongside its rapid adoption.

In April 2025, security researchers released an analysis identifying multiple outstanding issues with MCP, including prompt injection vulnerabilities, tool permissions that could allow data exfiltration when combined, and lookalike MCP servers that can silently replace trusted ones.

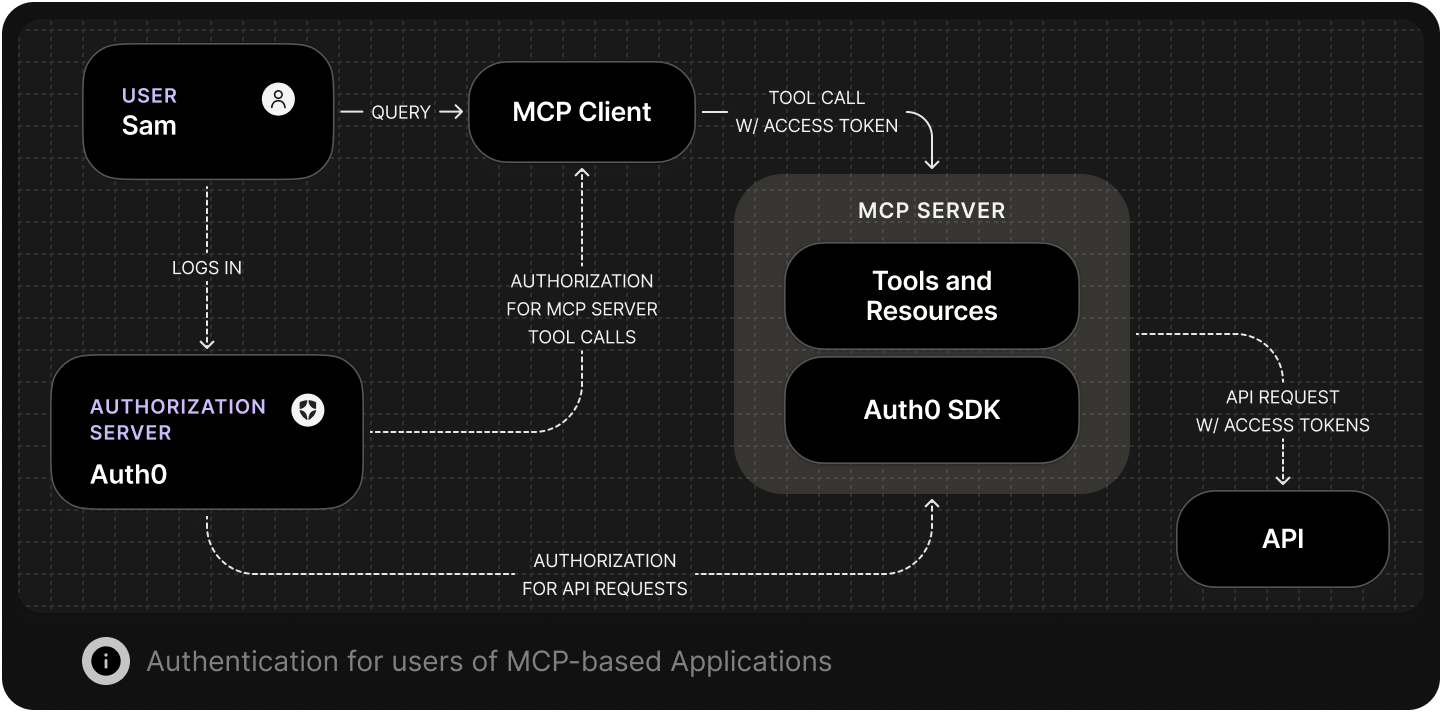

These are not trivial issues. When an AI agent has permission to read your filesystem, send emails on your behalf, and query your database, the attack surface is substantial. The AAIF governance structure is expected to address some of this through community-driven security standards and best practices, but the protocol itself does not currently solve authentication and authorization at enterprise grade.

Organizations deploying MCP in production are handling these concerns through additional layers — API gateways, policy engines, identity federation systems, and output filtering — that sit alongside MCP rather than within it. This is the expected early-stage pattern for any infrastructure standard. TCP/IP did not ship with TLS. The web did not ship with HTTPS. Security layers get built on top of widely adopted foundations, and the same process is underway here. Enterprise buyers evaluating adoption should go in clear-eyed about where the protocol currently stands on security maturity.
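One way to picture such an external layer is a small policy gate that sits in front of tool calls. The sketch below is a hypothetical design, not part of MCP or any vendor product: `PolicyGate`, the fingerprint strings, and the tool names are all invented for illustration. It pins each trusted server to a known identifier, which addresses lookalike servers, and refuses to grant tool combinations that would enable exfiltration within one session.

```python
from typing import Dict, Iterable, Set


class PolicyGate:
    """Hypothetical allowlist layer placed in front of MCP tool calls."""

    def __init__(self, pinned_servers: Dict[str, str],
                 forbidden_combos: Iterable[Iterable[str]]):
        # server name -> pinned identifier (for example, a manifest hash)
        self.pinned_servers = dict(pinned_servers)
        # sets of tools that must never all be used in the same session
        self.forbidden_combos = [frozenset(c) for c in forbidden_combos]
        self.used: Set[str] = set()

    def check_server(self, name: str, fingerprint: str) -> bool:
        """Accept a server only if its name AND fingerprint match the pin."""
        return (name in self.pinned_servers
                and self.pinned_servers[name] == fingerprint)

    def authorize(self, tool: str) -> bool:
        """Allow a tool unless it would complete a forbidden combination."""
        candidate = self.used | {tool}
        if any(combo <= candidate for combo in self.forbidden_combos):
            return False
        self.used.add(tool)
        return True


gate = PolicyGate(
    pinned_servers={"files": "sha256:abc"},
    forbidden_combos=[{"read_file", "send_email"}],
)
print(gate.check_server("files", "sha256:abc"))   # True: matches the pin
print(gate.check_server("fi1es", "sha256:abc"))   # False: lookalike name
print(gate.authorize("read_file"))                # True
print(gate.authorize("send_email"))               # False: read + send together
```

A production policy engine would need persistent sessions, audit logging, and a real identity system behind the pins. The point of the sketch is only that these checks can live outside the protocol, as described above.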

What Businesses Should Actually Do With This

For most businesses that are not building AI tools, the immediate implication of MCP is not technical — it is strategic. The emergence of a dominant connectivity standard means that the AI tools you adopt over the next two years should increasingly be evaluated on MCP compatibility.

When your team evaluates a new AI coding assistant, customer service automation platform, or internal knowledge tool, ask whether it supports MCP. That compatibility determines whether the tool can connect to your existing systems cleanly or whether it will require another round of expensive custom integration work.

For companies with development resources, investing in MCP servers for your core internal systems now — your knowledge base, your ticketing system, your customer data platform — means those systems become immediately accessible to any AI agent your team deploys, today or in the future. That is a meaningful multiplier on AI investment.

For enterprises with serious security and compliance requirements, the open governance of MCP is a feature, not a liability. Open standards under neutral stewardship can be audited, extended, and governed in ways that proprietary protocols cannot. The Linux Foundation's track record here is worth something real.

The Road Ahead

MCP is one year old. It already has 97 million monthly SDK downloads, more than 10,000 active servers, and the backing of the industry's largest AI companies. Several trends will determine whether it fulfills its infrastructure potential.

The growth of MCP server directories and marketplaces will determine whether the protocol is as easy to use as it is to understand conceptually. Security tooling built natively for MCP will need to mature quickly to match enterprise requirements. And the protocol's extension to handle more complex agentic workflows — including asynchronous operations, multi-agent coordination, and stateful task management — will determine how well it holds up as AI agents take on longer-horizon work.

The most important signal to watch is enterprise production deployment volume. Developer adoption of open standards is relatively easy to win. Enterprise production adoption is where standards either become infrastructure or get absorbed into proprietary platforms. The membership of the AAIF suggests the major cloud and software vendors are betting on the former outcome.

The USB-C analogy is apt not just because it describes what MCP does, but because it captures what open standards look like when they actually work. One port. Every device. Eventually, you stop noticing it is there — which is exactly the point.

Frequently Asked Questions

What is the Model Context Protocol (MCP)?

MCP is an open-source standard developed by Anthropic that defines how AI models and agents connect to external tools, data sources, and services. Instead of requiring custom integrations for each tool, MCP creates a universal interface — similar to how USB-C provides a single port standard across devices.

Who created MCP and who controls it now?

Anthropic introduced MCP in November 2024. In December 2025, Anthropic donated the protocol to the Agentic AI Foundation (AAIF), a directed fund under the Linux Foundation. The AAIF is co-founded by Anthropic, OpenAI, and Block, with Google, Microsoft, AWS, Cloudflare, and Bloomberg as supporting members.

Has OpenAI adopted Anthropic's MCP?

Yes. OpenAI officially adopted MCP in March 2025 and is now a co-founder of the Agentic AI Foundation alongside Anthropic. OpenAI has integrated MCP into ChatGPT and contributed actively to the protocol's ongoing development.

How does MCP actually work technically?

MCP follows a client-server model using JSON-RPC 2.0 messaging. MCP clients (AI applications like Claude or ChatGPT) connect to MCP servers (programs that expose specific tools or data). The protocol organizes capabilities into three primitives: Resources (read-only data access), Tools (actions an agent can perform), and Prompts (standardized context templates).

What are the security risks of MCP?

Security researchers identified several concerns in 2025, including vulnerability to prompt injection attacks, overly broad tool permissions that could allow data exfiltration, and the risk of lookalike MCP servers impersonating trusted ones. Enterprises deploying MCP should layer in authentication controls, policy-based authorization, and output filtering on top of the base protocol.

Why is MCP being compared to USB-C?

The USB-C analogy refers to the way MCP standardizes connectivity. Just as USB-C eliminated the need for different cables and ports across devices, MCP eliminates the need for custom AI integrations across tools. Build one MCP server for your product and it works with any compatible AI client.

What is the Agentic AI Foundation (AAIF)?

The AAIF is a directed fund under the Linux Foundation focused on open-source infrastructure for AI agents. It launched in December 2025 with three founding projects: Anthropic's MCP, Block's Goose agent framework, and OpenAI's AGENTS.md coding instruction standard. Platinum members include Amazon Web Services, Anthropic, Block, Bloomberg, Cloudflare, Google, Microsoft, and OpenAI.

How widely has MCP been adopted?

As of December 2025, MCP has surpassed 97 million monthly SDK downloads and supports more than 10,000 active servers. It has native support in Claude, ChatGPT, Gemini, Microsoft Copilot, GitHub Copilot, Visual Studio Code, and Cursor, among many other platforms.
