For decades, the world has grappled with a fundamental problem in governing dangerous technology at scale: how do you get 193 countries to agree on anything when the science is complicated, the stakes are enormous, and the commercial interests are immense?

The answer, in the case of climate change, was the Intergovernmental Panel on Climate Change. The IPCC did not ban emissions, set regulations, or enforce compliance. It did something subtler and, over time, more consequential: it built a shared, authoritative scientific foundation that made denial progressively harder and coordinated action progressively easier. The Paris Agreement would not have happened without the IPCC's nearly three decades of evidence behind it.

Now the United Nations is trying to replicate that model for artificial intelligence.

On August 26, 2025, the United Nations General Assembly took a historic step in global AI governance by establishing the Independent International Scientific Panel on Artificial Intelligence through Resolution A/RES/79/325. The panel was formally constituted in February 2026 when the General Assembly confirmed its 40 members. The vote was 117 in favour to 2 against, with Paraguay and the United States voting in opposition, and Tunisia and Ukraine abstaining.

The significance of that vote count, and that opposition, tells you almost everything you need to know about the political terrain this new body is entering.

What the Panel Actually Is

The panel's 40 members, approved in a vote by the UN General Assembly on February 12, 2026, come from 37 nations. The UN says that the panel will act "as an early-warning system and evidence engine, helping to distinguish between hype and reality" and produce "policy-relevant" reports.

The panel will not set policy, issue regulations, or impose binding standards. Instead, it will create a "credible evidence base" to shape how governments, regulators, and the public understand the technology's risks and opportunities. That distinction is important and frequently misunderstood. Critics who frame this as the UN attempting to regulate AI are describing a body that does not exist.

Rather than focusing on a single issue, the new panel will produce a number of scientific reports, year on year, which will be broad ranging, covering not just AI safety but also the economic, social, cultural and developmental aspects of the technology.

Who Is On It

Canadian artificial intelligence pioneer Yoshua Bengio is among the 40 experts named to serve on the panel. Also named are journalist and Nobel Peace Prize laureate Maria Ressa of the Philippines and Joelle Barral, a French national who is senior director for research and engineering at Google DeepMind. Ethiopian national Girmaw Abebe Tadesse, a principal research scientist at the Microsoft lab dedicated to the use of AI in sustainability, humanitarian work and health, has also been named to the group.

Members were selected from more than 2,600 candidates in a review process led by three UN technical agencies. They bring expertise spanning machine learning, data governance, public health, cybersecurity, childhood development, and human rights, reflecting AI's far-reaching implications across domains.

The panel's first report is expected to be published in time for the UN Global Dialogue on AI Governance in July 2026. "All members will serve in their personal capacity independent of any government, company, or institution," the secretary-general said.

The IPCC Comparison: Where It Holds and Where It Doesn't

The analogy to the IPCC is both the most useful frame for understanding this new body and the most important one to interrogate carefully, because the parallels are real but the differences are significant.

| Dimension | IPCC | ISP-AI |
|---|---|---|
| Established | 1988 | 2025 |
| Members | Hundreds across working groups | 40 experts from 37 nations |
| Output cadence | Major reports every 5 to 7 years | Annual reports |
| Binding authority | None | None |
| U.S. participation | Full | Opposed; voted against |
| China participation | Full | Represented in membership |
| First major policy outcome | Informed Kyoto Protocol, eventually Paris Agreement | First report due mid-2026 |

Where the analogy holds

The rise of AI and its consequences involve high systemic complexity, uncertainty, and ambiguity, much as climate change does. In that context, an IPCC for AI could help build a solid base of facts and benchmarks against which to measure progress.

The IPCC demonstrated that building scientific consensus on a contested, high-stakes issue across dozens of governments and conflicting interests is possible, if painstaking. It also demonstrated that such consensus, even without enforcement mechanisms, can reshape political possibility over time. The AI panel's architects are counting on the same dynamic.

Where the analogy strains

The pace mismatch is significant. Climate change, as a physical process, evolves over decades. The IPCC's multi-year assessment cycle is suited to that cadence. AI capabilities, by contrast, are advancing in months. The field was already "advancing far too fast for a single annual report to capture the pace of change," according to authors of the International AI Safety Report's interim update.

There is also a commercial complexity the IPCC never faced at the same intensity. Climate science does not have trillion-dollar private sector companies with direct financial stakes in the pace and framing of its assessments. AI does. The independence of the panel's 40 members, who serve in a personal rather than institutional capacity, is designed to address this, but the structural pressure will be constant.

The Road That Led Here

The ISP-AI did not emerge from nothing. It is the product of a multi-year institutional process that began with the recognition that AI governance was becoming dangerously fragmented.

In August 2025, the United Nations adopted a resolution forming the Independent International Scientific Panel on AI. This was the culmination of several years of work, beginning with the creation of the secretary-general's AI Advisory Body in 2023. Two of the AI Advisory Body's final recommendations, the ISP-AI and the creation of the Global Dialogues on AI, were included in the Global Digital Compact, which the UN General Assembly adopted as an annex to the Pact for the Future in 2024.

According to a UN assessment, 118 countries are not party to any significant international AI governance initiative. The growth of AI tools has yet to be matched by effective, internationally agreed rules on how to govern the technology.

That gap between the pace of AI deployment and the pace of governance is precisely what the panel was designed to address. Not by regulating, but by ensuring that governments attempting to regulate, or to decide not to regulate, are working from a shared and credible evidence base rather than competing national narratives and industry-funded assessments.

Chatham House has offered a blunter assessment: the new mechanisms look like they will be mostly powerless in practice. They may end up being hampered by their design, funding uncertainties, an intensifying global AI race, the widening divide between AI "haves" and "have-nots," and disruptive technological leaps. That is a useful corrective to uncritical enthusiasm, and it is probably right that the panel's near-term practical power is limited. But the same could have been said about the IPCC in 1990.

The U.S. Opposition: What It Means and What It Doesn't

The United States voted against the panel's formation and, when the panel's 40 members were confirmed in February 2026, objected formally on record. "With sovereignty in mind, the United States wishes to register its strong objection to the establishment of the panel as currently constituted," said the U.S. representative as she requested a recorded vote on the matter.

The position was elaborated at length at the India AI Impact Summit in New Delhi. White House technology adviser Michael Kratsios said that the United States "totally" rejects global governance of artificial intelligence. "AI adoption cannot lead to a brighter future if it is subject to bureaucracies and centralised control," he said.

The administration's objections went further than general skepticism of multilateral processes. Kratsios argued that "ideological, risk-focused obsessions, such as climate or equity, become excuses for bureaucratic management and centralisation" and that "in the name of safety, they increase the danger that these tools will be used for tyrannical control."

What the U.S. position signals, and what it does not, can be separated into distinct questions:

- Does U.S. opposition prevent the panel from functioning? No. With 117 countries voting in favor, the panel has broad legitimacy and does not require U.S. participation to operate or publish.

- Does it undermine the panel's scientific credibility? Potentially, at the margins. American AI research is disproportionately significant globally, and U.S. institutions may end up underrepresented in the panel's work if the government remains hostile to cooperation.

- Does it prevent individual U.S. researchers from participating? No. Panel members serve in a personal capacity and are not bound by the administration's position.

- Does it signal that the panel's outputs will be ignored by Washington? Almost certainly, under the current administration. Whether future administrations take a different view is the more consequential question.

"Governance of powerful technologies typically begins with shared language: what risks matter, what thresholds are unacceptable," noted Niki Iliadis, director of governance at an AI policy organization. That is precisely the function the ISP-AI is designed to serve, regardless of whether Washington chooses to participate.

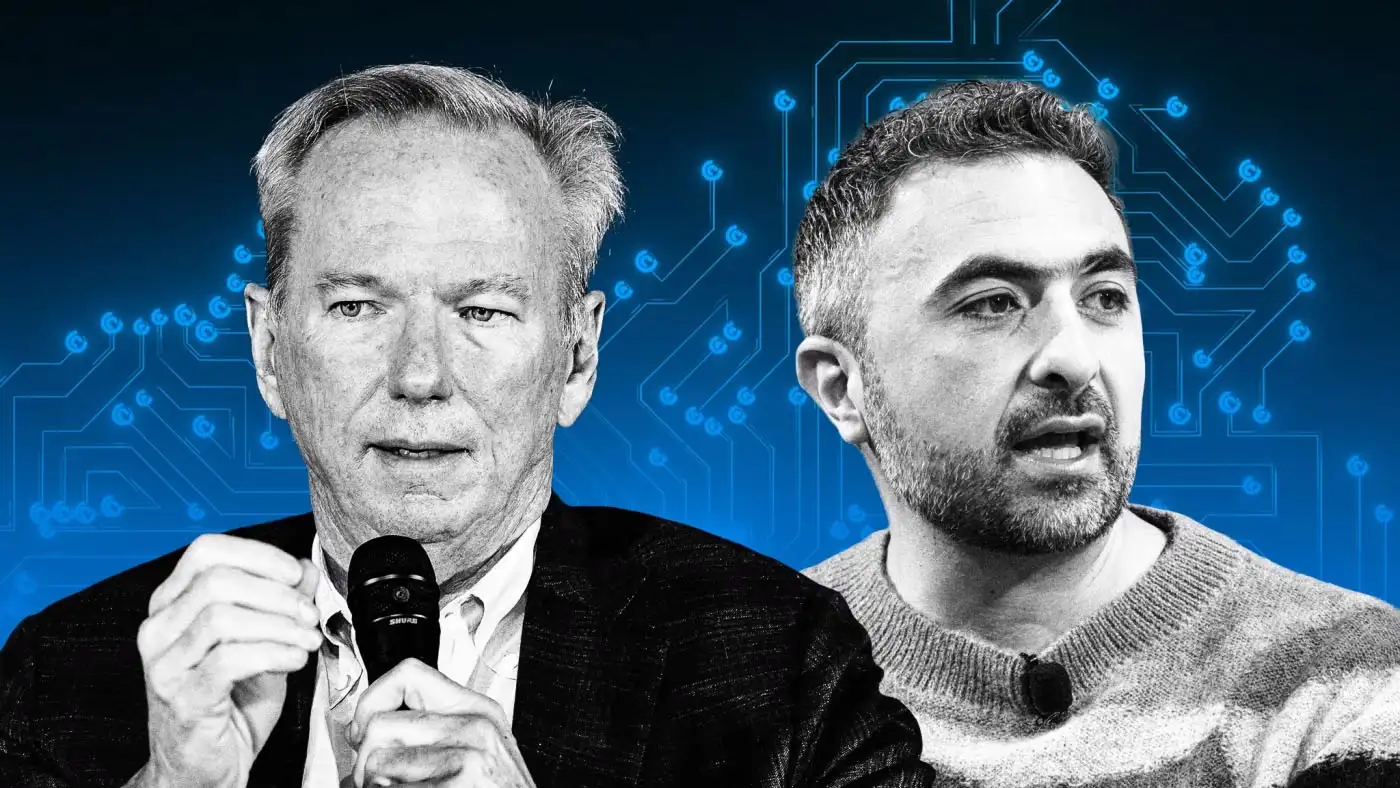

The Yoshua Bengio Thread

Understanding the ISP-AI requires understanding the parallel institution it connects to: the International AI Safety Report.

The 2023 Bletchley Park AI summit established an international panel of 96 AI experts to provide objective, scientifically based reports on AI safety to inform annual global summits of policymakers, emulating the approach of the IPCC. The first full report, at 300 pages, landed in late January 2025 as pre-reading for the Paris AI Summit held in early February.

Yoshua Bengio, the Turing Award-winning computer scientist who chairs that report and is among the ISP-AI's 40 members, has been unusually candid about where the science actually stands relative to the governance response. "Unfortunately, the pace of advances is still much greater than the pace of how we can manage those risks and mitigate them," he said. "And that, I think, puts the ball in the hands of the policymakers."

The second International AI Safety Report, published in February 2026 and led by Bengio with contributions from over 100 AI experts, is backed by over 30 countries and international organizations. It represents the largest global collaboration on AI safety to date.

The relationship between the Safety Report and the ISP-AI is one of the more interesting institutional design questions the new panel will need to navigate. The Safety Report focuses specifically on frontier AI risks; the new panel has a significantly broader mandate covering economic, social, cultural, and developmental dimensions of the technology. That breadth is both the panel's greatest potential asset and its greatest organizational challenge.

Why the Scope of the Mandate Matters

One of the defining features of the ISP-AI, compared to both the Safety Report and earlier governance efforts, is its explicit commitment to covering AI's impacts across the full range of human concerns, not just the existential risk scenarios that dominate headlines.

The MIT AI Risk Repository has seen its database swell to 1,600 AI risks, organized into seven domains including discrimination and toxicity, misinformation, socioeconomic and environmental harms, and others. Attempts at developing a standardized categorization of risks for policymakers have included the EU AI Act's risk severity levels and the pillars of harm in the U.S. National Institute of Standards and Technology's AI Risk Management Framework.

The panel's annual reports are intended to cut through that fragmentation by providing a unified scientific synthesis across all of these domains. For policymakers in developing economies who lack the institutional capacity to independently assess competing risk frameworks, that synthesis function could prove particularly valuable.

The panel faces a genuine tension here. Moving slowly enough to maintain scientific rigor means lagging a technology that moves in months. Moving quickly enough to remain relevant means accepting that some assessments will be incomplete or overtaken by events before the ink is dry.

What This Means for the U.S. Tech Ecosystem

The practical implications for American companies and institutions are worth examining carefully, because U.S. government opposition to the panel does not mean the panel's outputs will be irrelevant to how American AI products are received globally.

The EU, which voted in favor and has already demonstrated a willingness to use regulatory mechanisms with real market consequences, will almost certainly use the ISP-AI's assessments as inputs to its own governance processes. Countries in the Global South that are making decisions about which AI infrastructure to adopt and which standards to align with will have the panel's reports as reference points.

The U.S. signed the AI Impact Summit declaration alongside other participating countries, signalling support for international cooperation on AI outcomes. Yet, at the same forum, its top science official rejected global governance of AI. That dual posture, endorsing a shared declaration while opposing multilateral oversight, raises questions about how far the U.S. will align with collective frameworks when its interests diverge.

For the technology companies navigating this terrain, that tension is not theoretical. It is the operating environment.

Conclusion

The creation of the Independent International Scientific Panel on Artificial Intelligence is not a governance solution. It does not regulate AI, enforce standards, or constrain what companies or governments can build and deploy. What it does is more foundational and, if the climate analogy holds at all, potentially more consequential over time.

It creates the conditions for a shared global understanding of what AI actually is, what it actually does, and what evidence actually exists about its risks and benefits. That shared understanding is the prerequisite for any meaningful governance that follows.

The IPCC took decades to build the scientific foundation that made the Paris Agreement politically possible. The ISP-AI is working in a technology domain that operates on a fundamentally different time horizon, and it is doing so without the participation of the world's most powerful AI nation. Those are serious constraints.

But the alternative to imperfect, slow, and contested multilateral governance is unilateral governance, which in practice means governance by the companies and governments with the most resources and the most to gain. Imperfect international cooperation is better than no cooperation at all.

Whether the ISP-AI succeeds will depend on what it publishes, who listens, and whether the political will to act on its findings can survive the commercial pressures and geopolitical rivalries already working against it.

Frequently Asked Questions

Q: What is the UN's Independent International Scientific Panel on Artificial Intelligence?

The ISP-AI is the first global scientific body dedicated to assessing how artificial intelligence is transforming societies worldwide. Established by UN General Assembly resolution in August 2025 and formally constituted in February 2026, it comprises 40 independent experts from 37 nations. Its mandate is to produce annual evidence-based reports on AI's capabilities, risks, and societal impacts. It does not set policy, issue regulations, or impose binding standards.

Q: How is the ISP-AI similar to the IPCC?

Both bodies are designed to synthesize scientific evidence into accessible, policy-relevant assessments without themselves setting binding rules. The IPCC built the scientific foundation that informed climate agreements including the Paris Agreement. The ISP-AI aims to play a similar role for AI governance, creating a shared evidence base that governments, regulators, and the public can use to make informed decisions about the technology.

Q: Why did the United States vote against the panel?

The Trump administration has taken a broad position against multilateral AI governance, arguing that global frameworks lead to bureaucratic centralization that stifles innovation. White House technology adviser Michael Kratsios stated at the February 2026 India AI Impact Summit that the U.S. "totally" rejects global governance of AI. The administration prefers bilateral and national governance frameworks, and has simultaneously promoted American AI exports through its own international programs.

Q: Who are the key members of the ISP-AI?

The panel includes AI safety researcher and Turing Award winner Yoshua Bengio, Nobel Peace Prize laureate Maria Ressa, and Joelle Barral of Google DeepMind, among experts from academia, civil society, government, and the private sector across 37 nations. Members serve in a personal capacity, independent of any government or institution.

Q: Does the panel have any enforcement power?

No. The ISP-AI has no authority to regulate, sanction, or enforce compliance with any standard or recommendation. Its influence depends entirely on the scientific credibility of its reports and the willingness of governments to treat those reports as genuine policy inputs.

Q: When will the panel's first report be published?

The first annual report is expected in mid-2026, timed to coincide with the UN Global Dialogue on AI Governance. The panel's members serve three-year terms ending in February 2029, during which they will produce annual reports covering AI's opportunities, risks, and societal impacts.

Q: How does the ISP-AI relate to the International AI Safety Report chaired by Yoshua Bengio?

The International AI Safety Report, which began at the 2023 Bletchley Park AI Safety Summit, focuses specifically on frontier model risks. Bengio is involved in both. The ISP-AI has a broader mandate covering the full economic, social, cultural, and developmental dimensions of AI. The two bodies complement rather than duplicate each other.

Q: What does U.S. opposition mean practically for the panel's work?

American researchers can still contribute in their personal capacity, and the panel can function without U.S. government participation. However, the opposition signals the current administration is unlikely to use the panel's outputs as policy inputs, creating a dynamic where American companies may sell into markets whose governments actively reference ISP-AI assessments while Washington has formally rejected the body producing them.
