If your company uses AI to screen job applicants, approve loans, recommend content, price products, or automate customer decisions — and at this point, whose company does not — then you are already inside the perimeter of an escalating regulatory war that most business owners are not paying close enough attention to.

This is not a distant policy debate. It is a collision happening right now, between a federal administration trying to suppress state-level AI rules, states that are pressing ahead anyway, a European Union enforcing the world's first comprehensive AI law with penalties that dwarf the GDPR, and 30-plus countries globally building their own frameworks in parallel.

The compliance landscape for AI in 2026 is not just complex. It is actively contradictory, and the contradiction is expensive.

According to EY's global survey findings, the majority of C-suite leaders say that non-compliance with AI regulations is the most common AI risk they face. Given what is coming before the end of this year alone, that concern is well-founded.

Why 2026 Is the Year Everything Shifts

For the past several years, AI regulation has been the subject of legislative drafts, working groups, and earnest policy papers that rarely translated into actual consequences for actual businesses. That window has closed.

Multiple laws with hard enforcement teeth now have effective dates in 2026, and "tracking bills" is no longer a sufficient strategy for any company deploying AI in a meaningful way.

The enforcement calendar that businesses cannot ignore:

| Jurisdiction | Law / Requirement | Effective Date |
|---|---|---|
| EU AI Act | High-risk AI full compliance (Annex III) | August 2, 2026 |
| Colorado AI Act | Comprehensive high-risk AI governance | June 30, 2026 |
| California TFAIA | Frontier AI developer transparency | January 1, 2026 |
| Texas RAIGA | AI governance and civil penalty framework | January 1, 2026 |
| Illinois HB 3773 | AI discrimination in employment | January 1, 2026 |
| EU AI Act (GPAI) | Fines for general-purpose AI providers | August 2, 2026 |

These are not proposals or guidance documents. They are enforceable laws with civil penalties, attorney general enforcement powers, and in the EU's case, fines calibrated to be more severe than anything the GDPR produced.
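For teams that want to track these dates programmatically, the calendar can be turned into a small countdown. The following is a minimal sketch, not a compliance tool: the dictionary keys and the `deadline_status` helper are illustrative names, and the dates are copied from the table above.

```python
from datetime import date

# Effective dates copied from the enforcement calendar in this article.
DEADLINES = {
    "California TFAIA": date(2026, 1, 1),
    "Texas RAIGA": date(2026, 1, 1),
    "Illinois HB 3773": date(2026, 1, 1),
    "Colorado AI Act": date(2026, 6, 30),
    "EU AI Act (high-risk, Annex III)": date(2026, 8, 2),
    "EU AI Act (GPAI fines)": date(2026, 8, 2),
}


def deadline_status(today: date) -> list[tuple[str, int]]:
    """Return (law, days_remaining) pairs, soonest deadline first.

    A zero or negative number means the law is already in effect.
    """
    rows = [(law, (due - today).days) for law, due in DEADLINES.items()]
    return sorted(rows, key=lambda row: row[1])


if __name__ == "__main__":
    # The reference date is passed in explicitly so the result is reproducible.
    for law, days in deadline_status(date(2026, 2, 1)):
        label = "in effect" if days <= 0 else f"{days} days left"
        print(f"{law}: {label}")
```

Passing the reference date as an argument, rather than calling `date.today()` inside the function, keeps the sketch easy to test and to rerun for planning scenarios.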

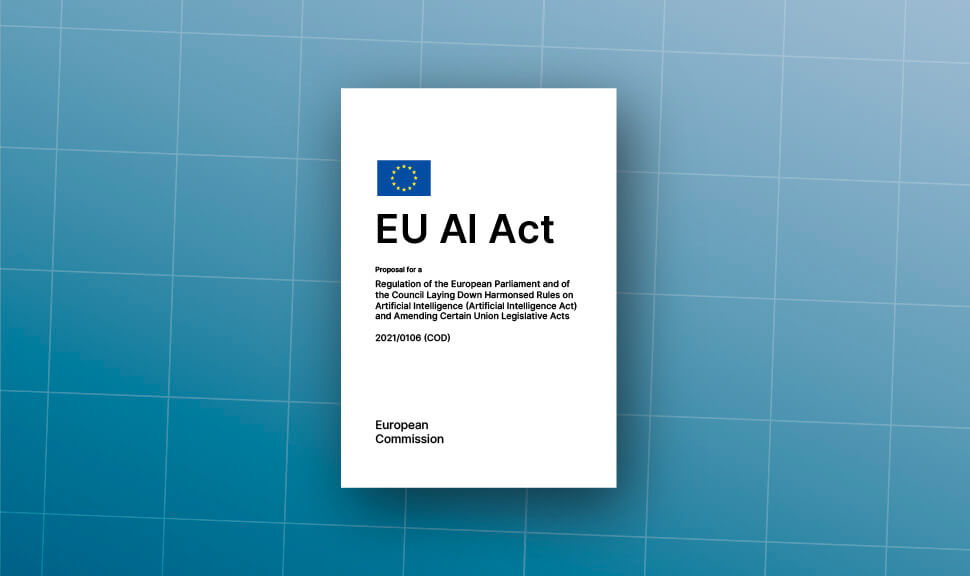

The EU AI Act: What August 2026 Actually Means

The EU's AI Act is the most consequential regulatory development in artificial intelligence to date — not because of its geographic scope alone, but because it applies to any company anywhere whose AI systems affect people within the European Union.

That extraterritorial reach mirrors the GDPR. A US-based company using AI for loan approvals that serves European customers falls within scope, even if the AI models run on servers outside Europe.

The law operates on a four-tier risk classification that determines a company's compliance obligations based on the potential harm of each AI system.

The Risk Tiers That Define Your Obligations

Unacceptable risk — already banned since February 2, 2025:

These practices are prohibited outright. They include social scoring systems used by governments or private entities, AI that manipulates people through subliminal techniques, real-time biometric identification in public spaces (with narrow law enforcement exceptions), and systems that exploit vulnerable populations. Companies still running these must stop immediately.

High-risk AI (Annex III) — full enforcement August 2, 2026:

This is where most businesses will feel the real weight of compliance. High-risk systems are those used in:

- Hiring, promotion, and employment decisions

- Credit scoring and loan approvals

- Insurance underwriting and pricing

- Healthcare diagnostics and treatment recommendation

- Educational assessment and student management

- Biometric identification and access control

- Critical infrastructure management

Companies deploying these systems must complete conformity assessments, maintain detailed technical documentation, implement human oversight mechanisms, conduct ongoing post-market monitoring, and register high-risk systems in the EU's public database. A conformity assessment alone can take six to twelve months to complete, which means organizations that have not started are likely already behind.

Limited risk — transparency requirements:

AI systems that interact with users — chatbots, customer service bots, AI-generated content — must disclose to users that they are engaging with an AI. The European Commission published a first draft code of practice for labeling AI-generated content in December 2025, with finalization planned for June 2026.

Minimal risk:

Spam filters, basic automation, and similar tools face no specific obligations under the Act.

The Penalty Structure

Penalties are structured in tiers: up to €35 million or 7% of global annual turnover for prohibited practices, up to €15 million or 3% for high-risk non-compliance, and up to €7.5 million or 1% for supplying incorrect information.

To put the top-tier number in context: the EU's GDPR maximum is 4% of global turnover. The AI Act's maximum for the most serious violations is 7%. The EU did not quietly raise that ceiling by accident.
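The tier arithmetic is easy to work through for a specific company. The sketch below assumes the "whichever is higher" rule that the Act applies to these caps; the tier keys and the `max_fine_eur` function are illustrative names, and the result is a theoretical ceiling, not a prediction of what a regulator would actually impose.

```python
# (fixed cap in euros, percent of global annual turnover), as stated in the text.
PENALTY_TIERS = {
    "prohibited_practice": (35_000_000, 7),
    "high_risk_noncompliance": (15_000_000, 3),
    "incorrect_information": (7_500_000, 1),
}


def max_fine_eur(tier: str, global_turnover_eur: float) -> float:
    """Return the maximum fine for a tier: the higher of the fixed cap
    and the turnover-based cap."""
    fixed_cap, percent = PENALTY_TIERS[tier]
    turnover_cap = global_turnover_eur * percent / 100
    return max(fixed_cap, turnover_cap)


if __name__ == "__main__":
    # A company with EUR 2 billion in global turnover: 7% exceeds the fixed cap.
    print(max_fine_eur("prohibited_practice", 2_000_000_000))
    # A company with EUR 100 million in turnover: the fixed cap is the ceiling.
    print(max_fine_eur("prohibited_practice", 100_000_000))
```

For large companies the percentage dominates, which is why the jump from the GDPR's 4% to the AI Act's 7% matters more than the headline euro figures.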

The Digital Omnibus Wildcard

In November 2025, the European Commission proposed a "Digital Omnibus" package that could potentially delay high-risk AI system enforcement from August 2026 to as late as December 2027 — but only if harmonized standards and support tools are unavailable by the deadline.

The critical word is "could." The Digital Omnibus is a proposal, not law, and it must be adopted by both the European Parliament and the Council before it changes anything. Experts warn against assuming a delay: treat August 2026 as the binding enforcement date. Organizations starting compliance efforts today barely have enough time to meet it, and those that wait risk a last-minute scramble if the extension does not materialize.

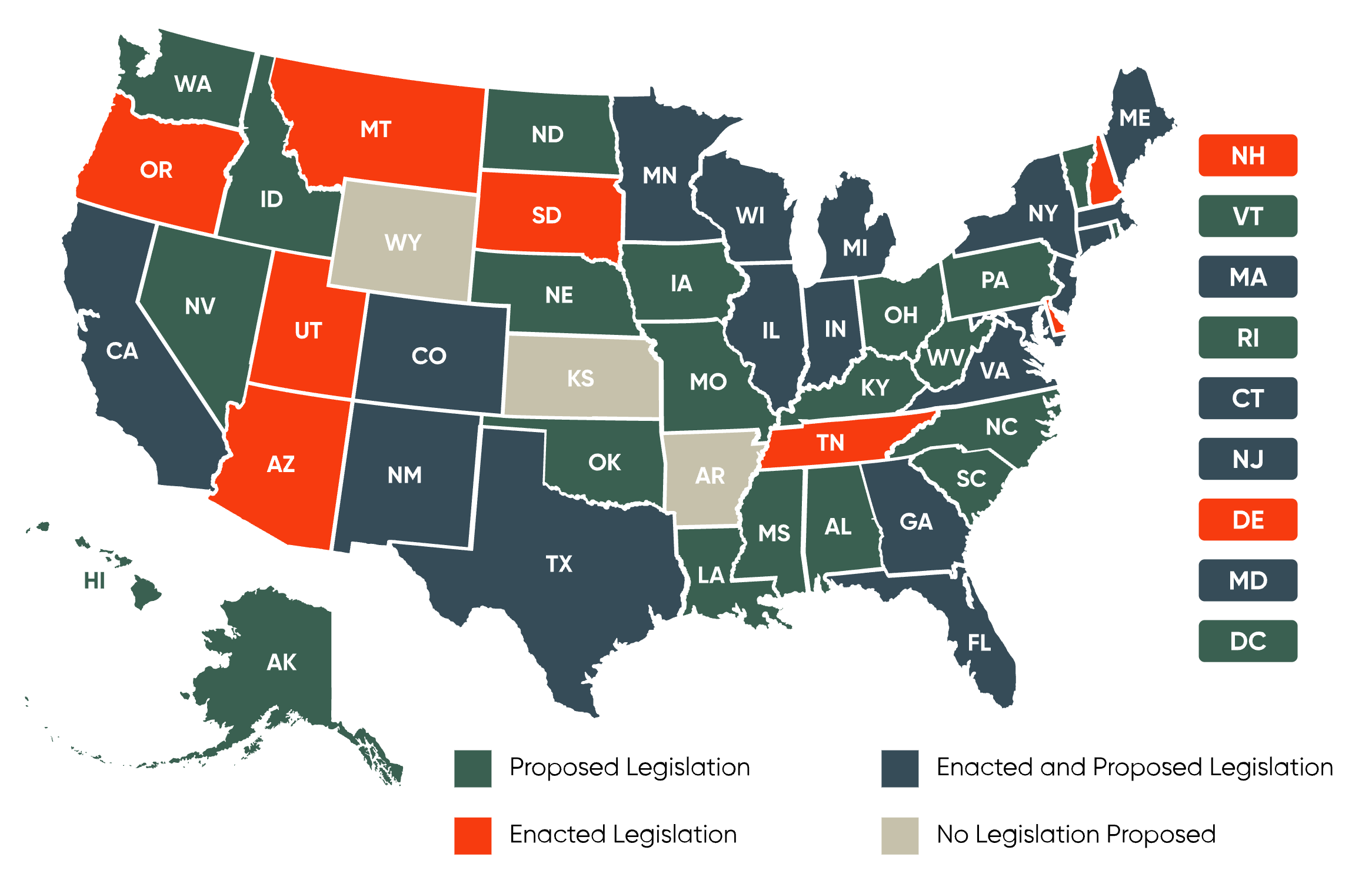

The US Picture

The American AI regulatory landscape in 2026 is not one fight. It is three overlapping fights happening simultaneously — and the outcome of each affects how businesses need to approach compliance.

Fight One: Federal vs. States

On December 11, 2025, President Trump signed an Executive Order titled "Ensuring a National Policy Framework for Artificial Intelligence." The stated goal was to prevent what the administration called a "patchwork of 50 different regulatory regimes" from impeding US AI competitiveness.

The Order directed the Justice Department to establish an AI Litigation Task Force to challenge state AI laws it deems unconstitutional or incompatible with federal policy. It directed the Commerce Department to publish an evaluation of state AI laws deemed "onerous" and to potentially condition states' access to Broadband Equity, Access, and Deployment (BEAD) federal funding on their AI regulatory posture. It also directed the FTC to issue a policy statement by March 2026 classifying certain state-mandated bias mitigation requirements as potentially deceptive under federal consumer protection law.

The legal durability of this approach is contested on multiple fronts.

"An executive order doesn't/can't preempt state legislative action. Congress could, theoretically, preempt states through legislation."

— Florida Governor Ron DeSantis, responding to Trump's announcement

Governors in California, Colorado, and New York issued statements indicating the Order will not stop them from passing, or enforcing, their local AI statutes and regulations. Legal scholars across the political spectrum have noted that the EO's preemptive power is constitutionally limited without congressional backing.

An earlier version of the executive order leaked in November 2025 and sparked a round of opposition from across the political spectrum. In July 2025, the Senate dropped an AI moratorium from the reconciliation bill it was debating. While Democrats broadly support more AI regulation, the issue has divided Republicans.

The bottom line for businesses is uncomfortable but clear: state AI laws remain legally in effect. The EO creates uncertainty and potential future litigation, but until courts rule or Congress acts, companies cannot safely ignore state requirements in Colorado, California, Texas, or Illinois on the assumption that federal action has neutralized them.

Fight Two: State Lawmakers vs. Industry

Colorado's experience is the clearest illustration of the pressure businesses are applying to state AI legislation — and what it actually produces.

The Colorado AI Act passed in May 2024, making Colorado the first US state with comprehensive high-risk AI regulation. Within weeks, industry groups were warning of crushing compliance costs. Governor Jared Polis signed it with a statement expressing reservations. The legislature convened a special session in August 2025 to revise or repeal portions of it. Multiple compromise proposals collapsed when technology companies objected to liability provisions. The effective date slipped from February 2026 to June 2026.

A study by the Common Sense Institute projects the law will cost Colorado 40,000 jobs and $7 billion in economic output by 2030. The U.S. Chamber, drawing on that methodology, found that applying Colorado's requirements nationwide could slow small businesses' AI investment by 0.17%, potentially resulting in the loss of 92,000 jobs.

Sixty-five percent of small businesses nationwide say they are concerned about rising litigation and compliance costs from conflicting AI laws and regulations at the state and local level. One-third said they would scale down their AI use, and another fifth said they would be less likely to use AI at all.

These are industry-funded projections and should be read as such. But the underlying compliance burden they describe — risk assessments, documentation requirements, disclosure obligations, bias testing — is real and undeniably disproportionate for smaller organizations without legal and compliance teams.

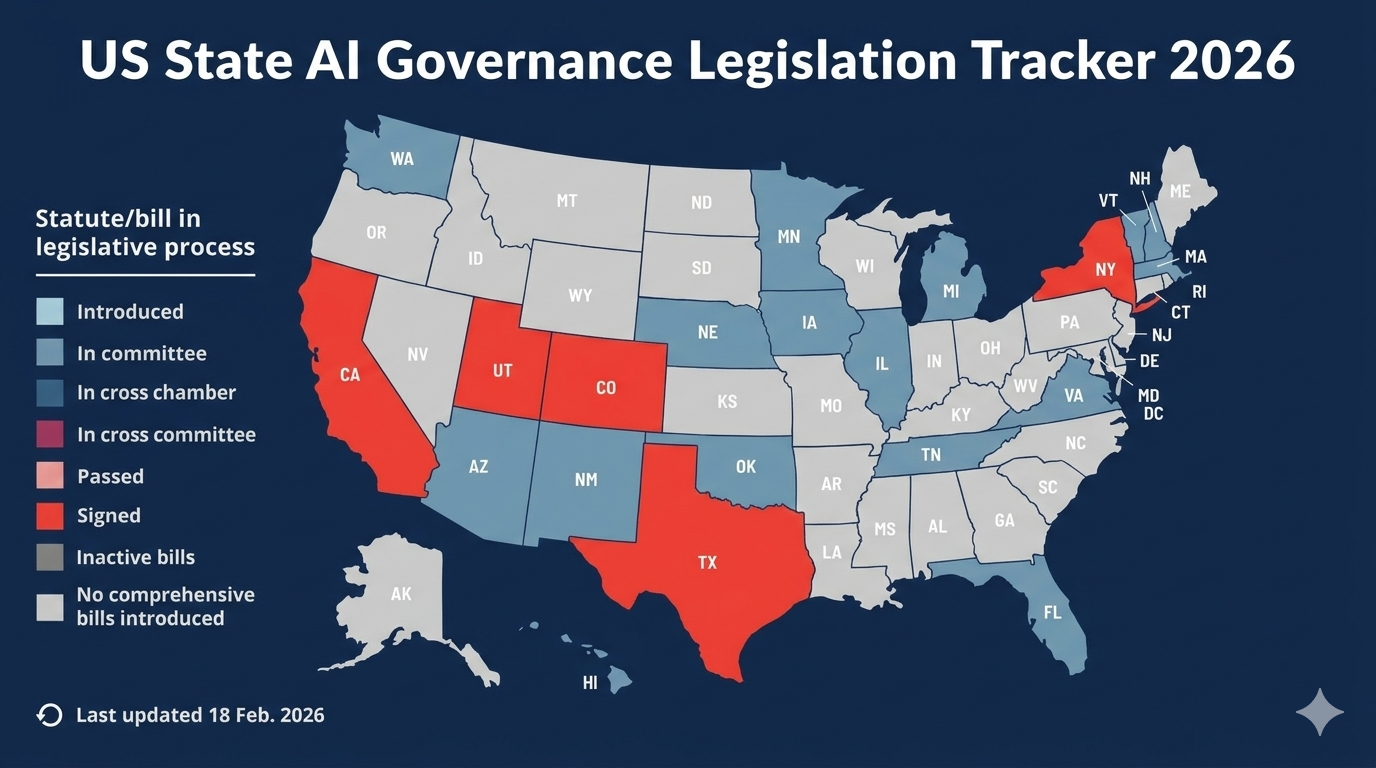

Fight Three: The State-by-State Patchwork

Even setting aside the federal-state confrontation, the diversity of state-level requirements is itself a significant operational challenge for any company that sells products or makes decisions affecting residents across multiple states.

A snapshot of what took effect January 1, 2026:

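- California: The TFAIA (SB 53) requires large frontier AI developers to publish their safety frameworks and report critical safety incidents, while the separate training data transparency law (AB 2013) requires developers of generative AI systems to publish summaries of training datasets, including sources, licensing terms, whether personal or synthetic data was used, and any modifications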

- Texas RAIGA: Regulates AI systems in high-risk contexts, provides civil penalties, and creates a regulatory sandbox for companies testing under relaxed requirements

- Illinois HB 3773: Prohibits employers from using AI in ways that discriminate against protected classes; requires disclosure when AI assists hiring, promotion, or disciplinary decisions

California's CCPA automated decision-making regulations — which require pre-use notice, opt-out rights, and disclosure for significant decisions affecting consumers — take effect January 1, 2027, giving companies a runway to prepare.

Various industry estimates suggest compliance costs add approximately 17% overhead to AI system expenses. California's privacy and cybersecurity requirements alone could impose nearly $16,000 in annual compliance costs on small businesses.

For a startup or SME trying to operate nationally, the compliance surface is now dozens of overlapping requirements with different definitions, different thresholds, different disclosure formats, and different enforcement mechanisms.

What Counts as "High-Risk"

One of the most consequential practical questions for businesses right now is deceptively simple: does my AI qualify as high-risk?

The answer determines whether you face light-touch transparency requirements or the full weight of conformity assessments, technical documentation packages, human oversight mandates, and post-market monitoring programs.

Both the EU AI Act and the Colorado AI Act center their substantive requirements on AI systems used for "consequential decisions" — the EU's Annex III categories and Colorado's definition of decisions affecting housing, employment, healthcare, education, financial services, and insurance.

The challenge is that these definitions are considerably broader in practice than they appear on paper.

An appliedAI study found that 40% of enterprise AI systems have unclear risk classifications, and that kind of ambiguity is itself a compliance risk.

The EU AI Act's definition of a high-risk system captures any AI used as a "safety component" of a regulated product, any system falling within Annex III's eight categories, and any system that significantly impacts fundamental rights. The Colorado AI Act's "consequential decision" concept has been interpreted broadly enough that AI use in customer service interactions, content recommendations, and even automated scheduling tools in regulated sectors could potentially fall within scope.

The hidden AI problem: Many organizations undercount their AI exposure because they think of AI as the systems they deliberately built. In practice, every major SaaS platform — HR software, lending tools, insurance pricing platforms, marketing automation stacks — now incorporates AI as a default feature. Using a third-party platform that embeds AI to make decisions affecting your customers or employees can make you a "deployer" under both the EU AI Act and state laws, with corresponding compliance obligations.

The EU AI Act applies obligations to importers and distributors, not just developers. Startups may still face indirect compliance and contracting risks when using AI tools or services from vendors that trigger applicability thresholds.

The Global Dimension: It Does Not Stop at the EU

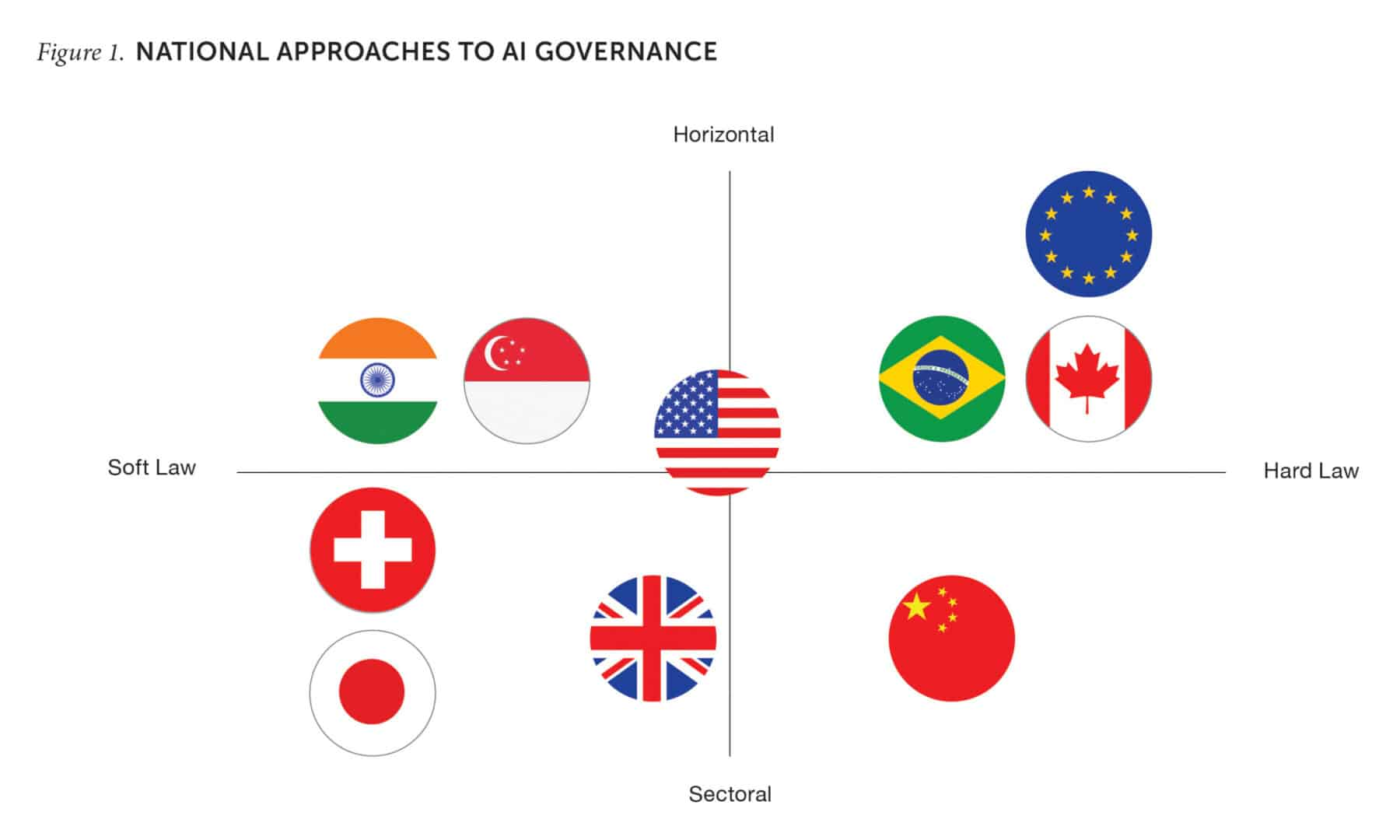

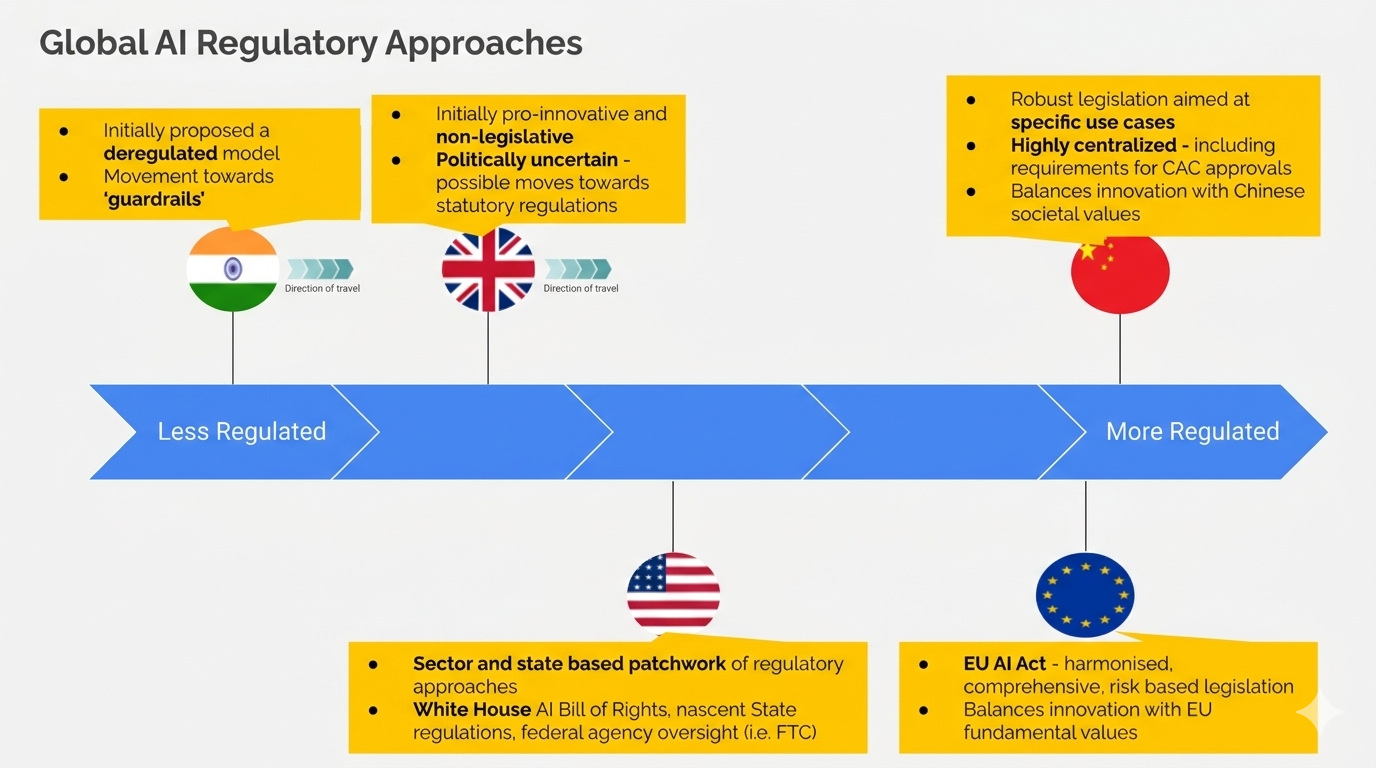

For companies with any international footprint, the compliance picture extends well beyond US state laws and the EU AI Act.

Korea's Basic AI Act and Vietnam's first dedicated AI law are both set to take effect in 2026. Brazil's AI regulation bill uses a similar risk-based classification to the EU AI Act. Over 30 countries are actively developing or implementing AI-specific regulations.

This global convergence around risk-based frameworks — high-risk categories, proportionate penalties, transparency and documentation requirements — reflects a deliberate approach by regulators who studied the EU's model and borrowed its logic. The practical effect for multinationals is that while specific requirements differ, the underlying architecture of compliance is increasingly similar across jurisdictions.

Korea, Kazakhstan, and Vietnam have passed AI laws, and Brazil has a bill pending, that use risk-based classification, with use cases such as employment, education, and essential services subject to strict obligations across all of these frameworks. Compliance with the EU AI Act provides a meaningful head start in these jurisdictions, even if it does not satisfy their specific local requirements.

The UK remains an outlier in this global trend, having chosen a sector-by-sector approach through existing regulators rather than comprehensive AI legislation. As of February 2026, no AI-specific law has passed: an anticipated government bill did not materialize in 2025, and whether comprehensive UK legislation is coming remains genuinely open.

The Compliance Cost Reality

Setting policy debates aside, the practical question for most businesses is what compliance actually costs — and whether the investment is better spent now or later.

EU AI Act compliance cost estimates by organization size:

| Organization Type | Initial Investment | Annual Ongoing |
|---|---|---|
| Large enterprise (>€1B), high-risk systems | $8–15M | $2–5M |
| GPAI model providers | $12–25M first year | Variable |
| Mid-size companies | $2–5M | $500K–2M |
| SMEs | $500K–2M | Lower thresholds |

These estimates come from regulatory compliance specialists and will vary considerably based on how many high-risk systems a company operates and how mature its existing data governance practices are. Companies that invested in GDPR compliance have a structural advantage — the documentation disciplines, data governance processes, and cross-functional compliance teams that GDPR required are directly transferable to AI Act compliance work.

For US-focused businesses, the cost structure differs but the principle is similar. The patchwork of state laws imposes compliance overhead that scales with how many state markets you operate in and how deeply AI is embedded in your consequential decision-making.

For early-stage startups building AI-enabled products, the roughly 17% compliance overhead that industry estimates attach to AI system expenses is not an abstract percentage. It affects hiring decisions, product timelines, and whether certain markets are worth entering at all.

What Businesses Should Be Doing Right Now

Given that the regulatory landscape combines active enforcement, genuine legal uncertainty, and significant penalties for getting it wrong, the strategic question is not whether to invest in AI governance. It is how to do it in a way that remains adaptable as the rules continue to shift.

The baseline actions that no company can afford to skip:

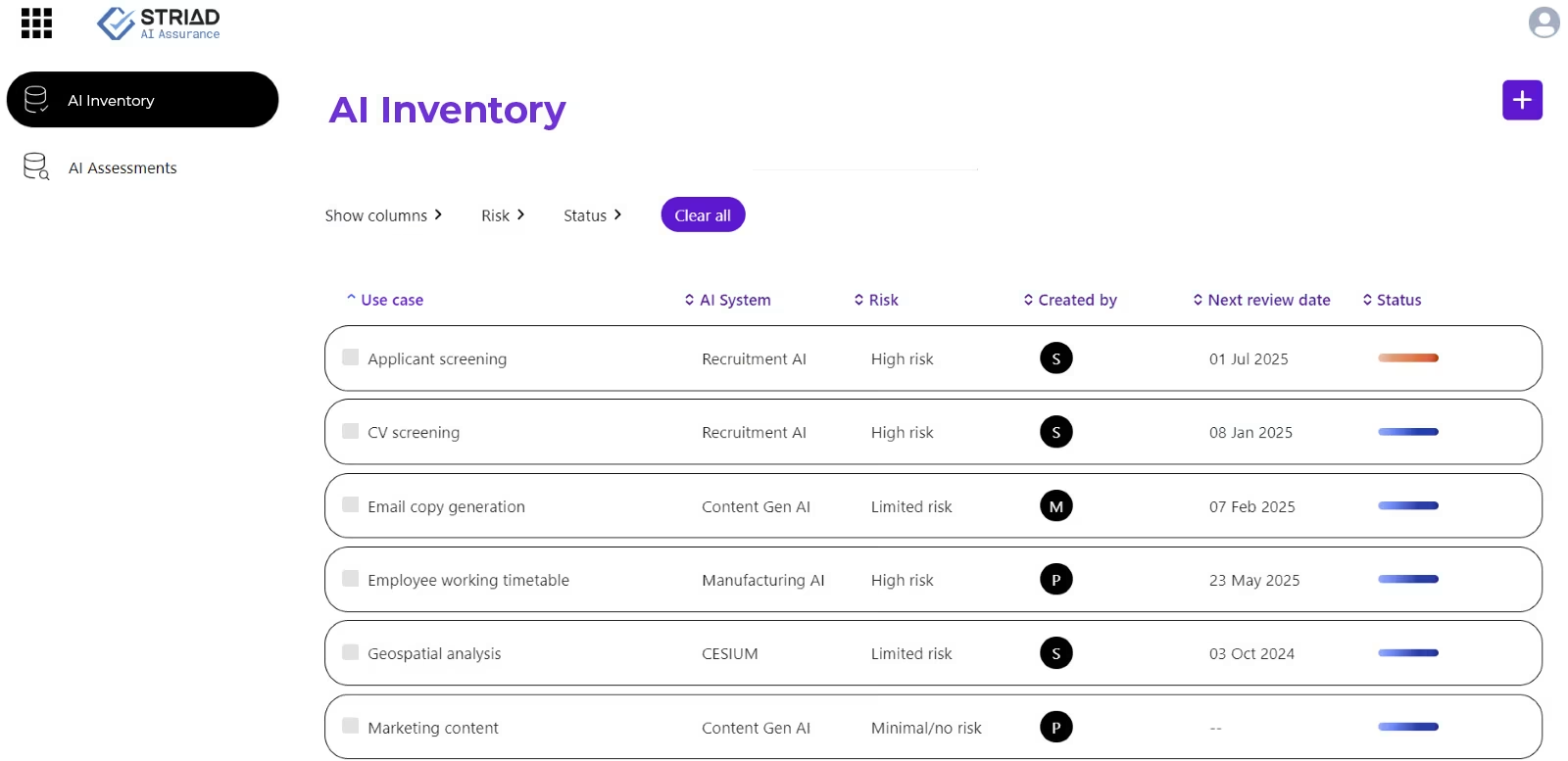

Build an AI Inventory

You cannot govern what you cannot see. The first step for any organization is a complete inventory of every AI system in use — including third-party platforms and embedded AI in existing software. This inventory needs to capture what decisions each system supports or automates, what data it uses, and which individuals or groups are affected by its outputs.

An appliedAI study found that 40% of enterprise AI systems have unclear risk classifications. Most organizations that conduct a serious AI inventory discover they have significantly more AI exposure than their technology team's mental model suggested.
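One way to make the inventory concrete is to give every system the same record shape. The sketch below is only an illustration: the `AISystemRecord` fields mirror the items listed above (decisions supported, data used, people affected), and the class, field, and helper names are all hypothetical rather than drawn from any regulation or standard.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AISystemRecord:
    """One row in a hypothetical AI inventory."""
    name: str
    vendor: Optional[str]            # None means the system was built in-house
    decisions_supported: list[str]   # what the system decides or helps decide
    data_used: list[str]             # categories of input data
    affected_groups: list[str]       # who is affected by its outputs
    risk_tier: str = "unclassified"  # filled in during the classification step


def third_party(inventory: list[AISystemRecord]) -> list[AISystemRecord]:
    """Systems that come from a vendor, including AI embedded in SaaS tools."""
    return [record for record in inventory if record.vendor is not None]


def unclassified(inventory: list[AISystemRecord]) -> list[AISystemRecord]:
    """Systems that still need a documented risk classification."""
    return [record for record in inventory if record.risk_tier == "unclassified"]


if __name__ == "__main__":
    inventory = [
        AISystemRecord("resume-screener", "ExampleHR Inc.", ["hiring"],
                       ["resumes"], ["job applicants"]),
        AISystemRecord("spam-filter", None, ["email routing"],
                       ["inbound email"], ["employees"], risk_tier="minimal"),
    ]
    print([record.name for record in third_party(inventory)])
    print([record.name for record in unclassified(inventory)])
```

Recording the vendor explicitly is the design choice that matters here, since embedded third-party AI is the exposure organizations most often miss.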

Classify Your Systems by Risk Tier

For each system in your inventory, assess whether it falls into any high-risk category under the EU AI Act's Annex III framework or the Colorado AI Act's consequential decision definition. Document the reasoning. If the classification is ambiguous, document that too — regulators will be far more forgiving of organizations that engaged thoughtfully with the classification question than those that clearly never asked it.
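A first-pass triage along these lines can be sketched in a few lines of code. This is a deliberately crude illustration and not a legal determination: the area tags are hypothetical labels loosely based on the risk tiers described earlier in this article, and anything the sketch cannot place is flagged as ambiguous, with the reasoning recorded, rather than silently treated as low risk.

```python
from dataclasses import dataclass

# Hypothetical tags, loosely mirroring the risk tiers described in this article.
HIGH_RISK_AREAS = {
    "employment", "credit", "insurance", "healthcare",
    "education", "biometrics", "critical_infrastructure",
}
LIMITED_RISK_AREAS = {"chatbot", "generated_content"}
MINIMAL_RISK_AREAS = {"spam_filter", "basic_automation"}


@dataclass
class Classification:
    tier: str
    reasoning: str  # stored so the decision can be explained later


def classify(system_name: str, use_areas: set[str]) -> Classification:
    """Assign a provisional tier and record why it was assigned."""
    high = sorted(use_areas & HIGH_RISK_AREAS)
    if high:
        return Classification("high-risk", f"{system_name} touches: {', '.join(high)}")
    unknown = sorted(use_areas - LIMITED_RISK_AREAS - MINIMAL_RISK_AREAS)
    if unknown or not use_areas:
        detail = ", ".join(unknown) or "no use areas recorded"
        return Classification("ambiguous", f"{system_name} needs review: {detail}")
    if use_areas & LIMITED_RISK_AREAS:
        return Classification("limited", f"{system_name} interacts with users or generates content")
    return Classification("minimal", f"{system_name} only uses minimal-risk areas")


if __name__ == "__main__":
    print(classify("resume-screener", {"employment", "chatbot"}))
    print(classify("shift-scheduler", {"scheduling"}))
```

A system tagged with any high-risk area is treated as high-risk even if its other uses are benign, which errs on the side of more review rather than less.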

Establish a Cross-Functional AI Governance Group

Legal, privacy, security, product, and HR need to be involved together, not sequentially. Forming a cross-functional team to own policies, approve higher-risk deployments, and coordinate compliance under the EU AI Act, GDPR, and the EU Data Act is the foundational structural step. For smaller organizations, this does not require dedicated headcount. It requires clear accountability and a regular cadence.

Update Vendor Contracts

If you use third-party AI tools that make or assist consequential decisions, your contracts with those vendors need to address training data provenance, bias testing, audit rights, exit terms, and how regulatory responsibility is allocated between you and the vendor. Most vendor contracts signed before 2024 address none of this.
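A simple gap check can show which of these topics a given contract leaves unaddressed. The sketch below assumes someone has already reviewed each contract and tagged the topics it covers; the term labels and the `missing_terms` helper are hypothetical names, not a standard taxonomy.

```python
# The five contract topics named in the paragraph above, as illustrative labels.
REQUIRED_TERMS = (
    "training_data_provenance",
    "bias_testing",
    "audit_rights",
    "exit_terms",
    "regulatory_responsibility",
)


def missing_terms(contract_terms: set[str]) -> list[str]:
    """Return the required topics a contract does not address, in checklist order."""
    return [term for term in REQUIRED_TERMS if term not in contract_terms]


if __name__ == "__main__":
    # A hypothetical older contract that only covers audits and exit.
    legacy_contract = {"audit_rights", "exit_terms"}
    print(missing_terms(legacy_contract))
```

Running the check across every vendor in the AI inventory gives a prioritized renegotiation list.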

Document Everything

Under both the EU AI Act and the Colorado AI Act, demonstrating "reasonable care" requires documentary evidence of risk assessments, bias testing, monitoring, and human oversight mechanisms. The documentation is not bureaucratic overhead. It is your primary defense in an enforcement action. Organizations that follow recognized frameworks can generate the records needed to show compliance and qualify for safe harbor protections. The same records reduce liability exposure and reinforce trust with regulators, customers, and stakeholders.

Do Not Assume Federal Preemption Will Bail You Out

The Trump Executive Order creates legal uncertainty around state AI laws — but it does not eliminate them. Since Congress has not yet passed a federal AI law that preempts state AI laws, existing state AI laws, including Colorado's AI Act effective June 30, 2026, and California's TFAIA effective January 1, 2026, will likely not be impacted in the short term by the Executive Order. The most prudent approach is to continue to comply with state AI laws until there is greater clarity.

Betting on executive preemption as a compliance strategy means accepting the risk of significant penalties if the legal challenges fail or are delayed — which legal scholars across the ideological spectrum believe is probable.

The Underlying Tension Nobody Resolves Cleanly

It is worth acknowledging a genuine tension at the heart of this regulatory moment that does not have an easy answer.

The concerns that motivated state AI laws — algorithmic discrimination in hiring and lending, opaque AI systems making consequential decisions without explanation or appeal, AI tools trained on data that embeds historical inequities — are real and documented. The harms are not hypothetical. Employment discrimination claims, discriminatory credit decisions, and biased risk assessments using AI have already generated litigation and regulatory action under existing law.

At the same time, the compliance infrastructure being required to address these concerns is genuinely burdensome for smaller organizations and startups, disproportionately so relative to the large technology companies and enterprise software vendors with the legal teams and capital to absorb it. The US Chamber is not wrong that a patchwork of 50 different state requirements creates operational friction that falls hardest on the smallest actors.

The Trump administration's response to this tension — suppress state regulation, delay EU compliance where possible, minimize federal oversight — resolves it by trading consumer protection for innovation speed. The EU's response — comprehensive risk-based regulation with real penalties — resolves it by trading innovation velocity for consumer protection certainty. Neither answer is wrong in every dimension. Both answers have real consequences for real stakeholders.

What businesses cannot do is opt out of navigating the tension. The regulation exists. The deadlines are real. The penalties are significant. And the underlying question of what standards should govern AI systems that make consequential decisions about people's lives is not going away regardless of which administration is in power.

Frequently Asked Questions

What is the most important AI regulation deadline for US businesses in 2026?

For businesses operating in the EU or selling to EU customers, the August 2, 2026 enforcement date for high-risk AI systems under the EU AI Act is the most consequential. For US-only operations, the Colorado AI Act takes effect June 30, 2026, and multiple California AI laws were already active as of January 1, 2026. Texas and Illinois also have AI laws that took effect January 1, 2026. No compliance timeline can safely be ignored while waiting for federal clarity.

Does Trump's December 2025 Executive Order mean businesses can ignore state AI laws?

No. The Executive Order directs federal agencies to challenge state AI laws through litigation and funding conditions, but it does not preempt state law directly — only Congress can do that. Legal experts broadly advise continuing to comply with applicable state laws until courts rule or Congress acts. Governors in California, Colorado, and New York have confirmed they intend to enforce their laws regardless of the Executive Order.

Does the EU AI Act apply to US companies?

Yes. Like the GDPR, the EU AI Act applies based on where AI systems affect people, not where the company is headquartered. Any US company deploying AI systems that affect EU residents — including AI used in hiring decisions, credit scoring, content recommendations, or customer service — falls within scope if those systems meet the Act's definitions of high-risk or limited-risk AI.

What are the penalties for violating the EU AI Act?

Penalties are tiered. Non-compliance with prohibited AI practices can result in fines of up to €35 million or 7% of global annual turnover, whichever is higher. High-risk AI system violations carry fines up to €15 million or 3% of global turnover. Providing incorrect information to regulators carries fines up to €7.5 million or 1% of turnover. These penalties exceed GDPR maximums and apply per violation, not as a single annual ceiling.

What counts as a "high-risk AI system" under the EU AI Act?

High-risk AI systems are defined in Annex III of the Act and include AI used in hiring and HR decisions, credit scoring and loan approvals, insurance underwriting, healthcare diagnostics and treatment support, education and student assessment, biometric identification, and critical infrastructure management. Many companies discover during their AI inventories that third-party software they use in these areas qualifies as high-risk AI, creating compliance obligations as "deployers" even if they did not build the AI themselves.

What should a small business do to prepare for AI regulation in 2026?

The foundational steps are: conduct a complete AI inventory including all third-party software with AI features; classify each system by risk level using EU Annex III or Colorado's consequential decision framework as a guide; document your reasoning for each classification; review vendor contracts for AI-specific terms; implement disclosure notices for consumer-facing AI interactions; and review your HR and lending processes for any AI-assisted decisions that may trigger state employment or credit laws. NIST's AI Risk Management Framework provides a recognized structure that some state laws, including Colorado's, reference in their safe harbor provisions.

How does the patchwork of US state AI laws affect businesses operating nationally?

Companies operating nationally face overlapping, sometimes inconsistent requirements across California, Colorado, Texas, Illinois, and other states. Different laws use different definitions of "high-risk" AI and "consequential decisions," require different forms of disclosure and documentation, and are enforced by different state attorneys general. Industry estimates suggest compliance costs add approximately 17% overhead to AI system expenses under this patchwork model. The most efficient approach is building compliance infrastructure around the most comprehensive requirements — currently California's — and adapting for state-specific variations.

Is the EU likely to delay AI Act enforcement beyond August 2026?

The European Commission's Digital Omnibus proposal could potentially delay high-risk AI enforcement to as late as December 2027, but only conditionally, tied to the availability of harmonized standards, and only if the European Parliament and the Council adopt it. Legal and compliance experts widely advise treating August 2026 as the binding deadline, because waiting on the Omnibus risks severe penalties if the delay does not materialize or does not cover your specific systems.
