When Apple launched the App Store in 2008, the company's own executives did not fully anticipate what they were unleashing.

Within three years, the store had generated more than $1 billion in payouts to developers. Within a decade, it had become a $100 billion-per-year ecosystem that turned individual developers into millionaires and created entirely new categories of business. The platform made Apple richer than almost anyone thought possible, but it also created a kind of builder that had not existed before.

In 2026, a structurally similar moment is unfolding in artificial intelligence, and most people are watching the wrong layer of it.

The attention is concentrated on foundation model companies: OpenAI, Anthropic, Google DeepMind, and the others racing to build more capable, more general AI. That race is real and consequential. But the money that will move most predictably in the next several years is flowing one layer above the foundation models and one layer below the consumer surface.

It is flowing into specialized AI models built for specific industries, specific workflows, and specific professional problems that general-purpose AI handles adequately but rarely handles well.

The market is moving decisively toward:

- Vertical LLMs trained on proprietary, domain-specific datasets

- Small language models optimized for narrow tasks and lower infrastructure costs

- Advanced reasoning frameworks designed for verifiable outputs

In regulated industries like finance, healthcare, and law, relying solely on general AI models is becoming a strategic risk. The companies being built in this layer are already reaching valuations that were implausible three years ago.

The question is not whether specialized AI will become a significant market. It already is. The question is who captures the value, at which layer of the stack, and which business models prove durable once the market matures.

The App Store Analogy: Where It Holds and Where It Breaks

The comparison between the specialized AI model market and the App Store era is appealing and partially accurate, which makes it worth examining carefully rather than accepting uncritically.

Where the analogy holds

In the App Store model, Apple built the platform infrastructure and took 30 percent of every transaction. Developers built the specialized products that made the platform worth using.

The developers who moved first into high-value categories such as enterprise productivity, healthcare, and financial tools captured distribution advantages that later entrants struggled to overcome. Being the fifth general productivity app was hard. Being the first genuinely useful clinical decision tool was a defensible position.

The same dynamic is playing out in AI. Foundation model companies are the platform layer. They provide the underlying capability, the infrastructure, and in some cases the distribution. The value capture for specialized builders comes not from competing with OpenAI or Anthropic on general capability, but from going deeper into a niche than any horizontal tool ever could or will.

Where the analogy breaks

The App Store provided a single discovery and distribution channel with clear rules. The specialized AI market currently has no equivalent.

Enterprise vertical AI companies reach buyers through direct sales, not through a store. The economics are closer to enterprise SaaS than to mobile consumer apps:

- Longer sales cycles

- Seat-based licensing

- Expansion revenue as usage deepens within an account

OpenAI introduced a dedicated App Directory inside ChatGPT in December 2025, where users can browse approved apps and add them directly to their workspace. That is a meaningful step toward a formal marketplace layer, but the revenue-sharing model for developers is still being worked through. The platform-as-distribution model for AI is still forming, and the companies that will cash in most immediately are not waiting for it.

The Real Money: Enterprise Vertical AI

The clearest case for specialized AI as a value-creation engine is already documented in the funding and revenue data from 2025 and early 2026.

Legal AI: Harvey

Harvey, founded in 2022 to build AI for law firms and corporate legal departments, has compressed several years of a normal SaaS growth story into individual quarters.

Revenue growth:

| Period | ARR |
|---|---|
| End of 2024 | $50M |
| Mid-2025 | $100M |
| End of 2025 | $195M (est. by Sacra) |

The company had expanded to more than 1,000 customers, including Comcast and Verizon, with weekly active users growing fourfold year over year. Revenue is seat-based: accounts typically start with a few hundred licenses for research, drafting, and diligence, and internal usage data show that the median seat count doubles within 12 months.

Funding trajectory:

| Date | Round and amount | Valuation |
|---|---|---|
| Feb 2025 | Series D, $300M | $3B |
| Jun 2025 | Series E, $300M | $5B |
| Dec 2025 | Series F, $160M | $8B |
| Feb 2026 (in talks) | $200M | $11B |

Total capital raised exceeds $1.2 billion.

The growth is not just a funding story. It reflects genuine product-market fit in a high-value, change-resistant industry: once a law firm adopts Harvey, usage expands organically, which is what the doubling of median seat counts within the first year indicates.

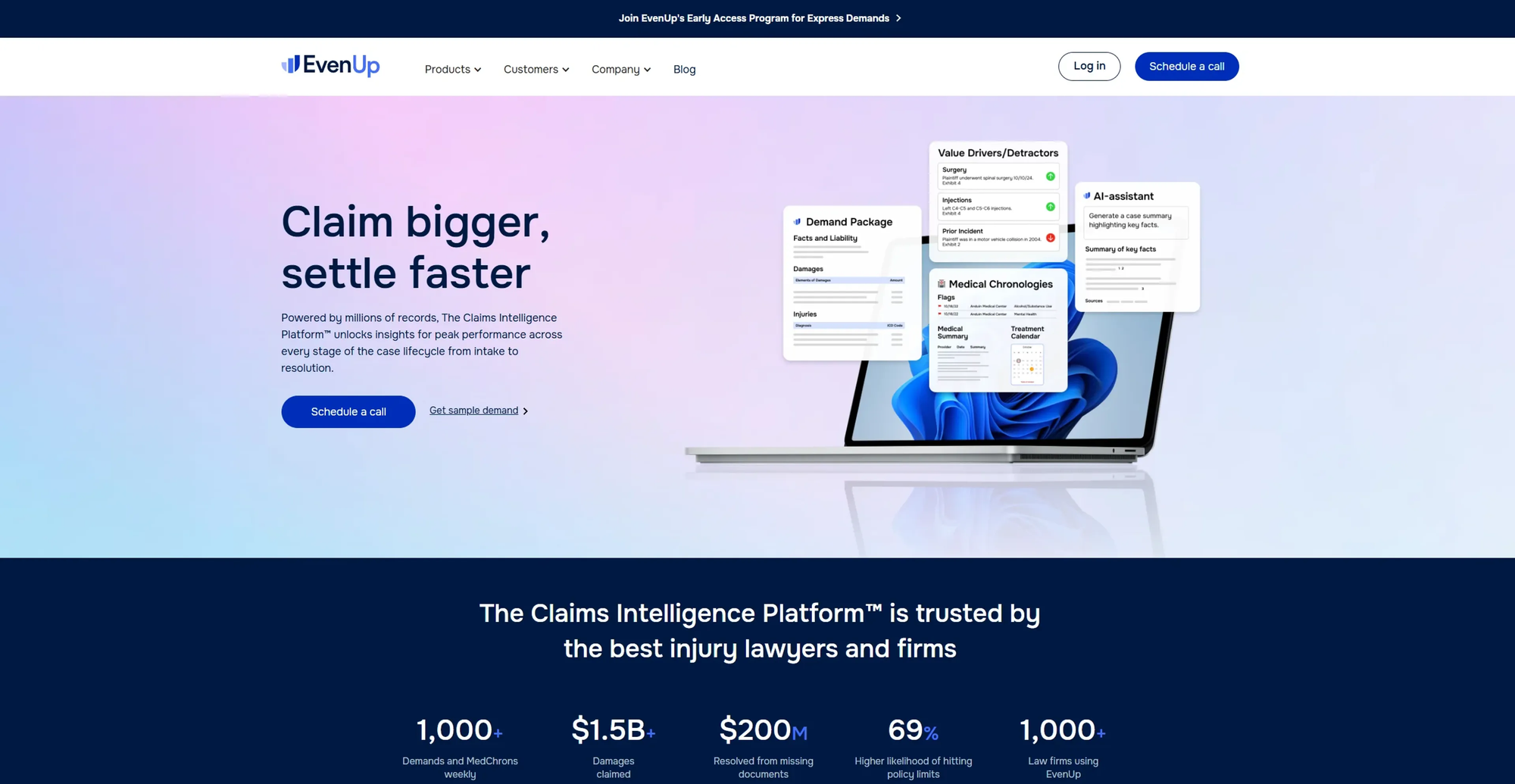

New startups have already emerged targeting specific niches within the broader legal market Harvey serves, including EvenUp for personal injury lawyers, Finch for paralegals, and Supio for plaintiff law. The market is fragmenting even as the leaders scale.

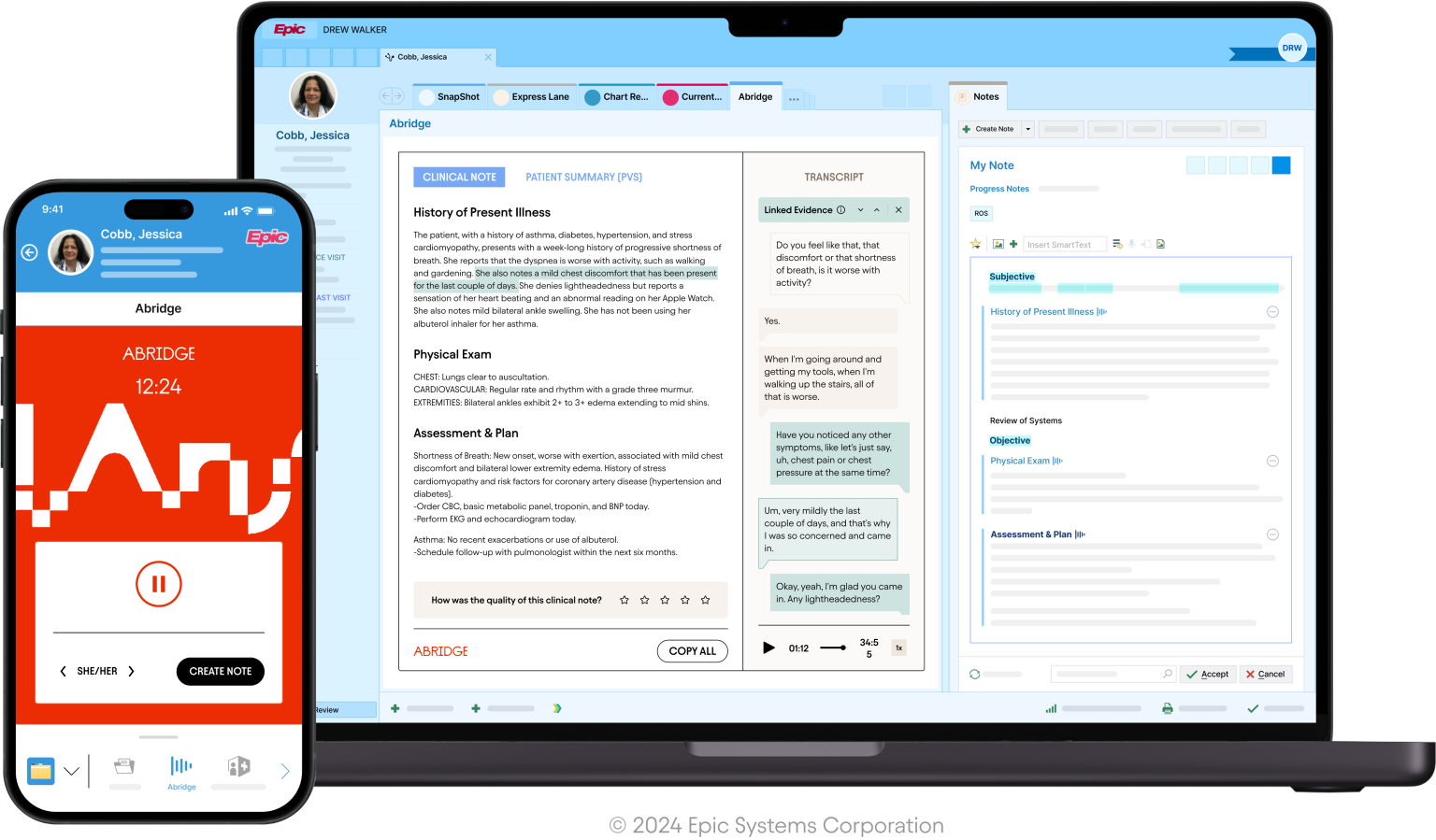

Healthcare AI: Abridge, Ambience, OpenEvidence

Healthcare AI has produced multiple high-valuation companies with different approaches to the same underlying problem: clinical workflows generate enormous quantities of information that takes physician time to process, document, and act on.

Abridge raised a $300M Series E at a $5.3B valuation, led by Andreessen Horowitz. It focuses on AI-generated clinical notes embedded directly into hospital EHR systems, which creates deep integration and significant switching costs.

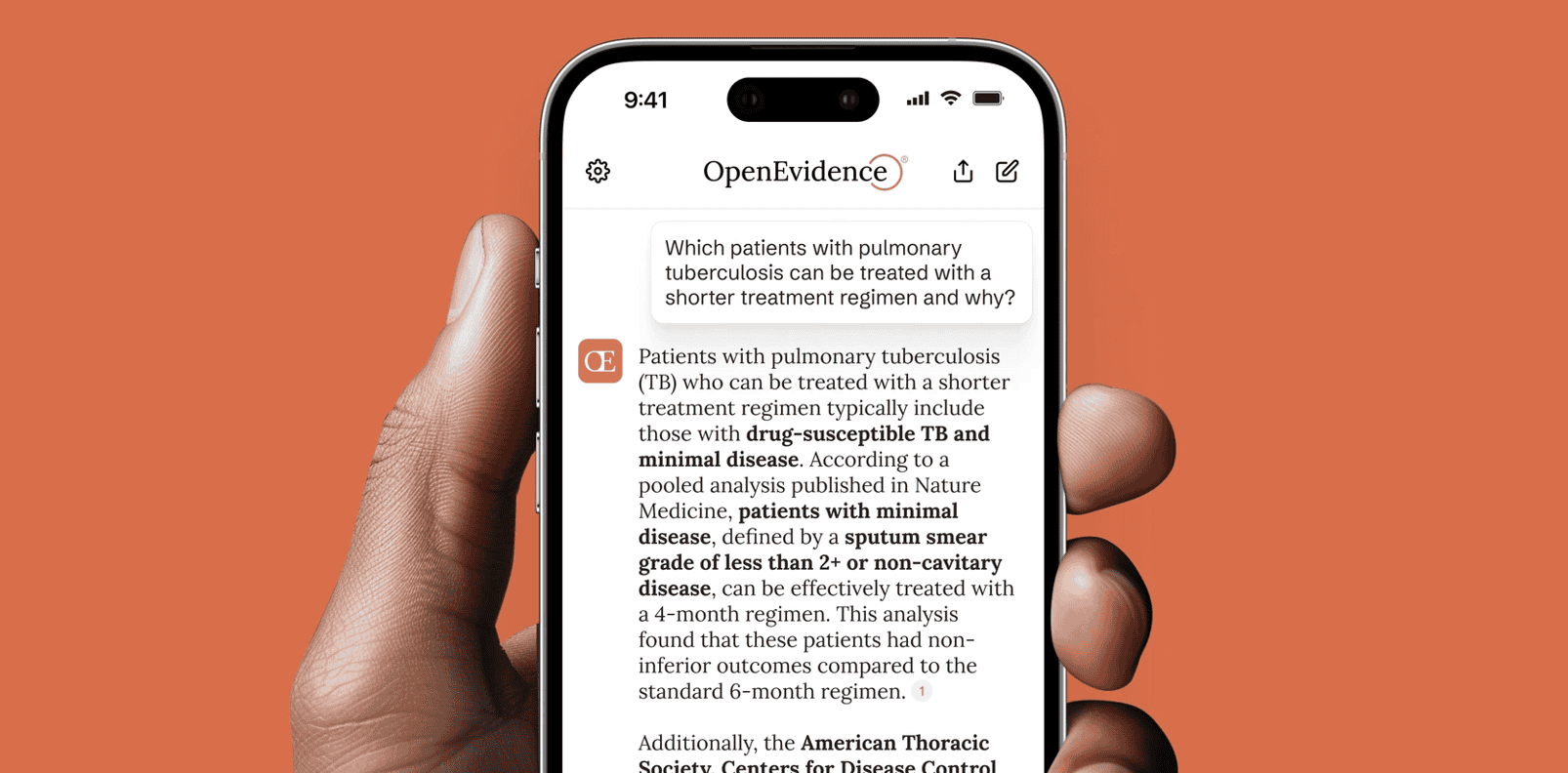

OpenEvidence took a different route: a free, physician-facing clinical decision support tool that bypasses hospital procurement cycles entirely.

| Metric | Figure |
|---|---|
| 2024 ARR | $7.9M |
| 2025 ARR (est.) | $150M |
| YoY growth | 1,803% |
| Gross margins | ~90% |
| Jan 2026 valuation | $12B |
| U.S. physicians using daily | 40%+ |

OpenEvidence monetizes through pharmaceutical and medical device advertising at CPMs ranging from $70 to $1,000+, compared to $5 to $15 for typical social media platforms. Strategic content licensing agreements with The New England Journal of Medicine and JAMA have strengthened its data moat.
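To make the CPM gap concrete, the sketch below converts CPM (cost per thousand impressions) into revenue for a fixed number of impressions. The impression count is a hypothetical illustration, not an OpenEvidence figure; only the CPM bounds come from the text above.

```python
def ad_revenue(impressions: int, cpm_usd: float) -> float:
    """Revenue in USD for a number of ad impressions at a given CPM.

    CPM is the price per 1,000 impressions, so
    revenue = impressions / 1000 * CPM.
    """
    return impressions / 1000 * cpm_usd


# Hypothetical volume, chosen only for illustration.
IMPRESSIONS = 1_000_000

# CPM bounds quoted in the article (USD).
social_low, social_high = 5.0, 15.0
clinical_low = 70.0

print(f"Social media: ${ad_revenue(IMPRESSIONS, social_low):,.0f} "
      f"to ${ad_revenue(IMPRESSIONS, social_high):,.0f}")
print(f"Clinical audience (low end): ${ad_revenue(IMPRESSIONS, clinical_low):,.0f}")
print(f"Low-end multiple over best-case social: {clinical_low / social_high:.1f}x")
```

Even at the bottom of the quoted range, the same million impressions earn roughly 4.7 times what they would at the top of the typical social media range.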

The common thread across all these healthcare companies is clear: the value comes not primarily from model capability, but from data access, workflow integration, and the trust of a specific professional audience. General-purpose AI can answer medical questions. OpenEvidence answers them with sources that clinical professionals accept, in a context that fits how physicians actually work.

Finance and Other Verticals

Hebbia offers a natural-language platform for querying large documents, spreadsheets, and reports. It has partnered with FactSet to launch a solution integrating unstructured and structured financial data, marking its entry into the institutional finance sector.

EvenUp, focused on personal injury law, raised $150M in a Series E round at a $2B+ valuation, more than doubling its valuation in under a year.

The broader market:

The vertical AI sector was valued at roughly $10 billion in 2023 and is projected to reach $115 billion by 2034, growing at a compound annual rate of 21.6 percent.
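Projections like this are worth recomputing from their endpoints. The sketch below applies the standard compound annual growth rate formula to the figures quoted above; the function names are illustrative. With a $10 billion base in 2023 and $115 billion in 2034, the implied rate comes out closer to 25 percent than 21.6 percent, so the underlying forecast presumably uses a different base year or base value than the rounded figures quoted here.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.25 means 25% per year)."""
    return (end_value / start_value) ** (1 / years) - 1


def project(start_value: float, rate: float, years: int) -> float:
    """Value after compounding `start_value` at `rate` for `years` years."""
    return start_value * (1 + rate) ** years


# Figures quoted in the article, in billions of USD.
base_2023, target_2034 = 10.0, 115.0
years = 2034 - 2023  # 11 compounding periods

implied = cagr(base_2023, target_2034, years)
print(f"Implied CAGR from the endpoints: {implied:.1%}")
print(f"$10B compounded at 21.6% for {years} years: "
      f"${project(base_2023, 0.216, years):.0f}B")
```

Compounding $10 billion at 21.6 percent for 11 years yields roughly $86 billion, which is the other way to see the same gap.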

Why General AI Cannot Simply Replace This

The obvious question is whether ChatGPT, Claude, or Gemini will simply absorb the vertical AI market by becoming good enough at specific domains. This is a legitimate competitive risk for every vertical AI company. It is also, based on current evidence, overstated.

General-purpose AI is improving rapidly at broad knowledge tasks. What it does not provide:

- Proprietary data integration

- Regulatory compliance built into the product architecture

- Workflow embedding that replaces manual professional steps

- The reputational assurance a specialized platform for a specific professional audience carries

The key strategic insight:

Vertical AI companies are not trying to out-model the foundation labs. They are building proprietary data moats, workflow integrations, and professional trust that general-purpose tools cannot replicate by improving their general capability. The moat is not the model. The moat is everything around the model.

Vertical AI companies like Harvey in law, Hebbia in finance, and OpenEvidence in medicine win on the basis of proprietary data from partnerships, users, and workflow integration, rather than competing with OpenAI, Anthropic, and Google on general-purpose model capabilities.

M&A activity is accelerating in 2025 and 2026 as incumbents move aggressively to acquire their way into the AI era. As AI-native startups push deeper into industry-specific workflows such as insurance claims, legal briefings, and revenue cycle management, traditional SaaS players face a stark choice: evolve or become obsolete.

The Two Routes to Cashing In

The specialized AI market offers two meaningfully different paths to value capture. They are not equally accessible.

Route 1: Build a Vertical AI Company

The venture-backed, enterprise-sales route is what Harvey, Abridge, and OpenEvidence represent. It requires significant capital, long sales cycles in regulated industries, and the ability to build proprietary data advantages that widen over time.

The four conditions for a defensible vertical AI company:

- Deep workflow integration. Products that sit adjacent to workflows are replaceable. Products that sit inside them (embedded in the EHR, connected to the document management system, part of the intake process) are not. Integration depth is the primary moat-building mechanism.

- Proprietary data partnerships. OpenEvidence's licensing agreements with NEJM and JAMA are not incidental to its business. They are the business. The model capability is table stakes; the data access is the competitive advantage.

- Regulatory alignment. In healthcare, finance, and law, compliance is not a feature. It is the prerequisite for enterprise adoption. Vertical AI companies that build compliance into the product architecture rather than bolting it on afterward have a structural advantage over both general-purpose AI tools and horizontal platform extensions.

- Professional trust and community. More than 40 percent of U.S. physicians use OpenEvidence daily. That level of professional adoption creates network effects: the platform becomes the standard of practice, which makes leaving it increasingly costly.

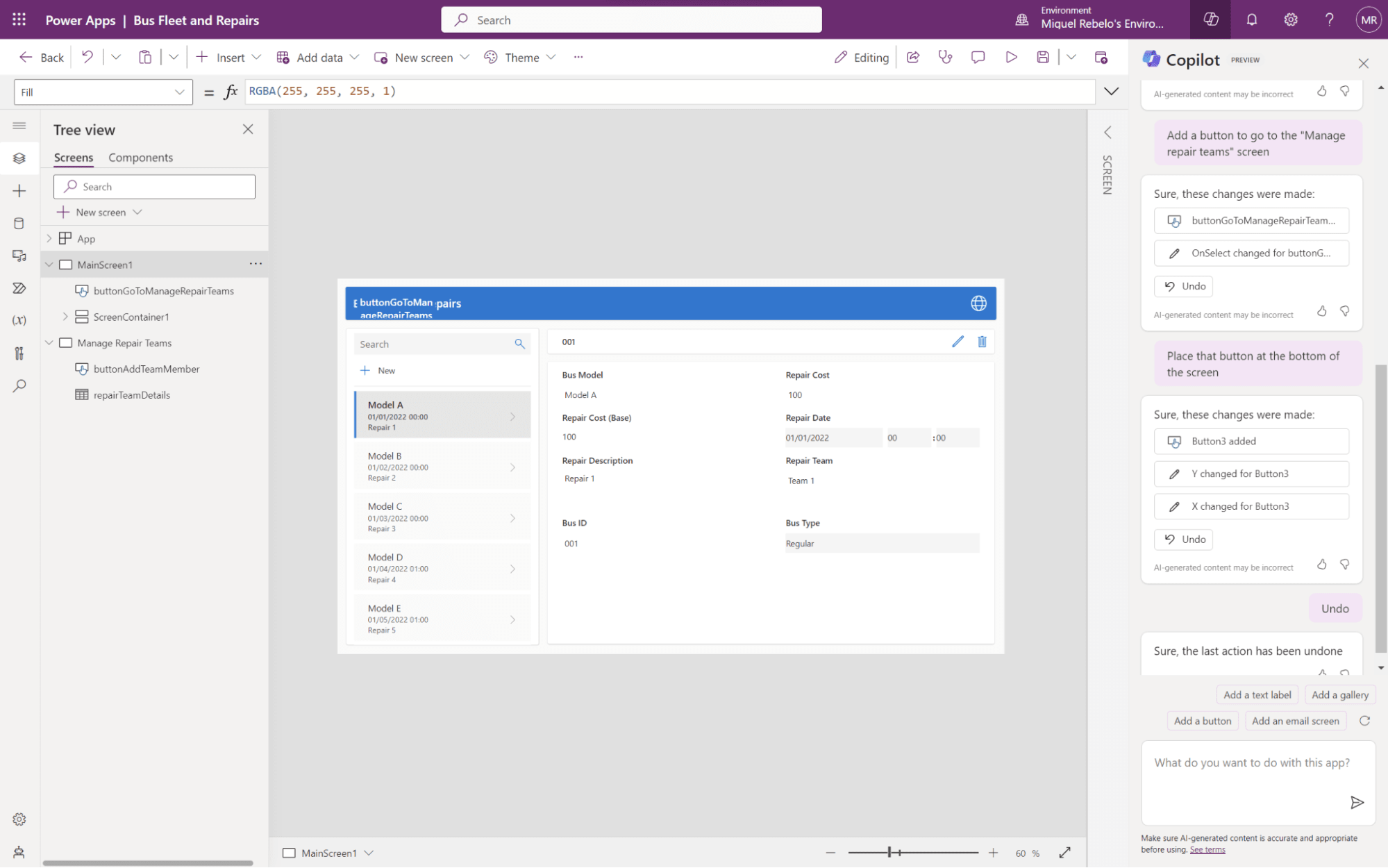

Route 2: Build Domain-Specific Agents and Tools

The second route is accessible to a much wider range of builders: solo developers, small teams, and domain experts who understand a professional problem but do not have the capital to build an enterprise sales organization.

Platforms like n8n, Zapier, and Make allow builders to create specialized AI workflows without deep engineering backgrounds. The most successful target a narrow professional problem with a specific audience: a workflow that automates contract clause extraction for a specific type of legal matter, a research synthesis tool for a specific category of analyst, a documentation agent tuned to a specific EHR system's output format.

Documented revenue ranges at this layer:

| Product type | Revenue model | Range |
|---|---|---|
| Workflow sale | One-time | $500 to $2,000 |
| SaaS access | Monthly subscription | $20 to $100/month |
| Agency white-label | Recurring B2B | Varies significantly |

With the right niche and distribution, these numbers compound meaningfully.
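A rough way to compare the first two models in the table is to annualize them. The sketch below does that arithmetic; the customer counts are hypothetical placeholders, churn is ignored, and only the price ranges come from the table above.

```python
from dataclasses import dataclass


@dataclass
class PriceRange:
    low: float
    high: float

    def scaled(self, factor: float) -> "PriceRange":
        """Multiply both ends of the range by the same factor."""
        return PriceRange(self.low * factor, self.high * factor)


# Price ranges from the table (USD).
WORKFLOW_SALE = PriceRange(500, 2_000)  # one-time, per sale
SAAS_MONTHLY = PriceRange(20, 100)      # per subscriber, per month

# Hypothetical volumes, chosen only to illustrate the arithmetic.
workflow_sales_per_year = 24
saas_subscribers = 200

one_time_revenue = WORKFLOW_SALE.scaled(workflow_sales_per_year)
# Assumes every subscriber stays for all 12 months (no churn).
subscription_revenue = SAAS_MONTHLY.scaled(saas_subscribers * 12)

print(f"Workflow sales: ${one_time_revenue.low:,.0f} "
      f"to ${one_time_revenue.high:,.0f} per year")
print(f"SaaS access:    ${subscription_revenue.low:,.0f} "
      f"to ${subscription_revenue.high:,.0f} per year")
```

Under these assumed volumes, two workflow sales a month and 200 retained subscribers land in overlapping ranges, but only the subscription revenue recurs without new sales.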

The risk: As foundation models improve at general tasks, specialized workflows built primarily around prompt engineering rather than proprietary data or workflow integration erode in value. The durable builders at this layer are those who combine domain expertise with technical implementation and can iterate faster on the professional use case than a general-purpose tool can address it from above.

The Platform Question: Who Owns Distribution?

The analogy to the App Store raises a question that the mobile era answered clearly but the AI era has not: who controls distribution, and what percentage do they take?

In the mobile app economy, Apple's 30 percent cut was controversial but largely non-negotiable for apps that needed iOS distribution. The AI equivalent has not been settled.

Two scenarios:

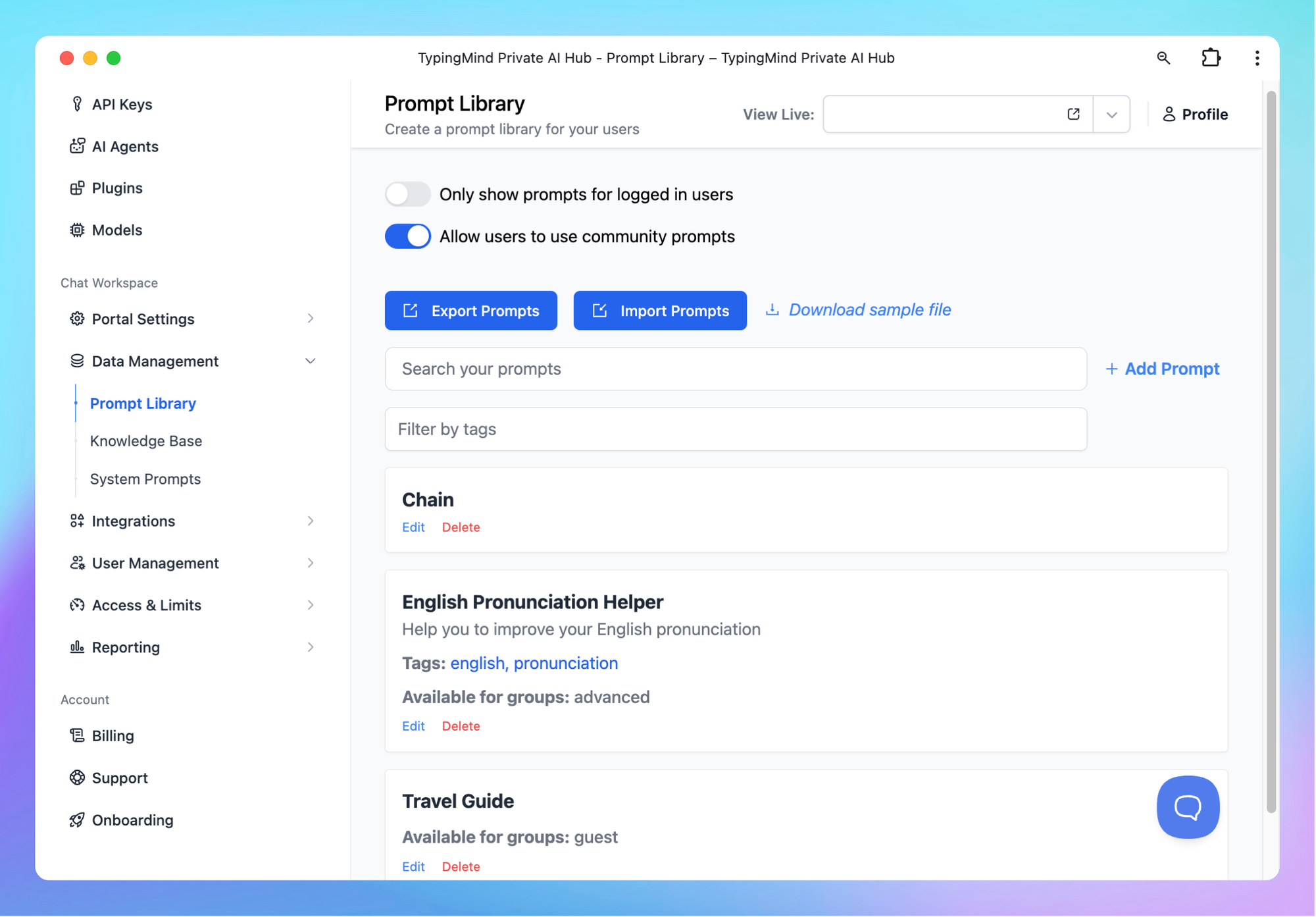

If OpenAI becomes the primary distribution layer for specialized AI tools, it will extract platform economics similar to what Apple built. ChatGPT has already evolved from a standalone tool into an ecosystem, with a two-layer structure of custom GPTs and a newer App Directory, laying the groundwork for a conversational platform closer to an operating system than a chatbot.

If the market fragments across multiple foundation model providers, enterprise platforms, and workflow tools, the distribution advantage will be weaker and the specialized builder retains more margin.
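The difference between the two scenarios is easy to quantify. The sketch below computes what a builder keeps per sale under different platform take rates; the 30 percent figure is Apple's cut cited above, while the other rate and the sale price are hypothetical, since no AI platform's revenue share has been settled.

```python
def builder_net(gross_usd: float, platform_cut: float) -> float:
    """Amount the builder keeps after the platform takes its share."""
    if not 0.0 <= platform_cut <= 1.0:
        raise ValueError("platform_cut must be a fraction between 0 and 1")
    return gross_usd * (1.0 - platform_cut)


GROSS = 100.0  # hypothetical monthly subscription price in USD

scenarios = {
    "App Store-style platform (30%)": 0.30,
    "Fragmented market, lighter fee (hypothetical 10%)": 0.10,
    "Direct sale, no platform fee": 0.00,
}

for label, cut in scenarios.items():
    print(f"{label}: builder keeps ${builder_net(GROSS, cut):.2f}")
```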

The current evidence suggests fragmentation is more likely in the near term. Enterprise vertical AI companies are selling directly, not through a platform store. The enterprise AI market resembles the enterprise SaaS era more than the mobile consumer era: direct relationships, multi-year contracts, and expansion revenue through upsell.

For consumer-facing specialized AI, the platform distribution question is more open. OpenAI's App Directory and Anthropic's equivalent infrastructure could become meaningful distribution channels. But the revenue-sharing mechanics remain unclear, and the early history of custom GPTs suggests that without a functional monetization mechanism, most builders look for alternative paths.

What Builders Should Watch

For developers, founders, and domain experts trying to position for this market, the signals that matter most are not which foundation model wins the benchmark race. They are structural questions about where durable value is being created.

- Data moats over model quality. The companies accumulating proprietary professional data (clinical records, legal filings, financial documents) through their products are building advantages that better general models cannot erase. The question to ask: does your product get better as more professionals use it, or does it stay the same?

- Workflow depth over surface convenience. Tools that save a professional five minutes are easily displaced by marginally better tools. Tools that sit inside a workflow, replacing steps that previously required manual professional judgment, are significantly harder to displace.

- Regulated verticals as a feature, not a bug. The compliance requirements in healthcare, finance, and law make these markets harder to enter but also harder to disrupt once entered. The extra time and cost required to build a compliant product creates a moat against both new entrants and general-purpose tools that are not willing to take on regulatory risk.

- Watch the M&A activity. Enterprise AI revenue reached $37 billion in 2025, more than tripling year over year. Traditional software companies that have not yet integrated vertical AI will accelerate acquisitions rather than build from scratch. The vertical AI companies being built today are acquisition targets as much as they are independent long-term businesses.

Frequently Asked Questions

What is vertical AI and how is it different from general-purpose AI?

Vertical AI refers to models and platforms built specifically for one industry or professional domain, such as healthcare, legal services, or financial analysis, rather than general tasks. These systems are trained on industry-specific data, integrated into professional workflows, and designed to meet the compliance requirements of regulated industries. General-purpose AI is broadly capable but rarely handles domain-specific professional problems as precisely or as safely as a purpose-built system.

Which industries are seeing the most vertical AI investment in 2026?

Legal, healthcare, and financial services are the three most active verticals by funding volume and company valuation. In legal AI, Harvey reached an $8 billion valuation in December 2025, with a round at $11 billion in talks as of February 2026. Healthcare AI companies including Abridge, Ambience, and OpenEvidence have raised hundreds of millions each. Financial data AI platforms like Hebbia are expanding from document analysis into integrated financial workflows. Beyond these three, cybersecurity, insurance claims processing, and scientific research are receiving significant investment.

Is the GPT Store a viable way to monetize specialized AI models?

Currently, OpenAI's GPT Store offers distribution but limited direct monetization. The revenue-sharing program for builders has been discussed but remains narrowly available as of early 2026. Most serious specialized AI businesses are monetizing through their own SaaS pricing, enterprise contracts, or third-party platforms rather than relying on the GPT Store's engagement-based payments. The store is more useful as a discovery and lead-generation channel than as a primary revenue source.

How do vertical AI companies build defensible competitive advantages?

The primary moats in vertical AI are proprietary data partnerships, deep workflow integration, regulatory compliance architecture, and professional community adoption. Companies that can accumulate usage data that improves their model's domain performance over time, while embedding deeply enough into workflows that switching carries real cost, are building the most defensible positions. Model capability alone is not a durable moat because foundation models improve continuously.

Can individual developers and small teams compete in the vertical AI market?

Yes, but at a different layer than enterprise vertical AI companies. Small teams and solo developers can build specialized workflow agents, domain-specific tools, and professional automation products that serve narrower use cases than enterprise platforms address. The most durable of these products combine genuine domain expertise with technical implementation and proprietary data or workflow access that general-purpose tools cannot replicate cheaply. The risk is commoditization as foundation models improve, which makes domain depth more important than technical implementation as the primary differentiator.

What happens to vertical AI companies if foundation models become much more capable?

Foundation model capability improvements pose a genuine long-term competitive risk to vertical AI companies built primarily on prompt engineering and basic customization. Companies building on proprietary data partnerships, workflow integrations, and professional trust are significantly more durable because these advantages do not erode with general capability improvements. The healthcare, legal, and financial services companies seeing the largest valuations have all invested heavily in data moats and workflow depth rather than relying on model differentiation alone.
