Scientific research has always been constrained by the same bottleneck: human attention. Hypotheses must be formed, experiments designed, results analyzed, and new hypotheses generated in cycles that take weeks or months per iteration. A skilled researcher might generate around ten new molecules in two years. A well-funded team might run a few hundred experiments in a given study.

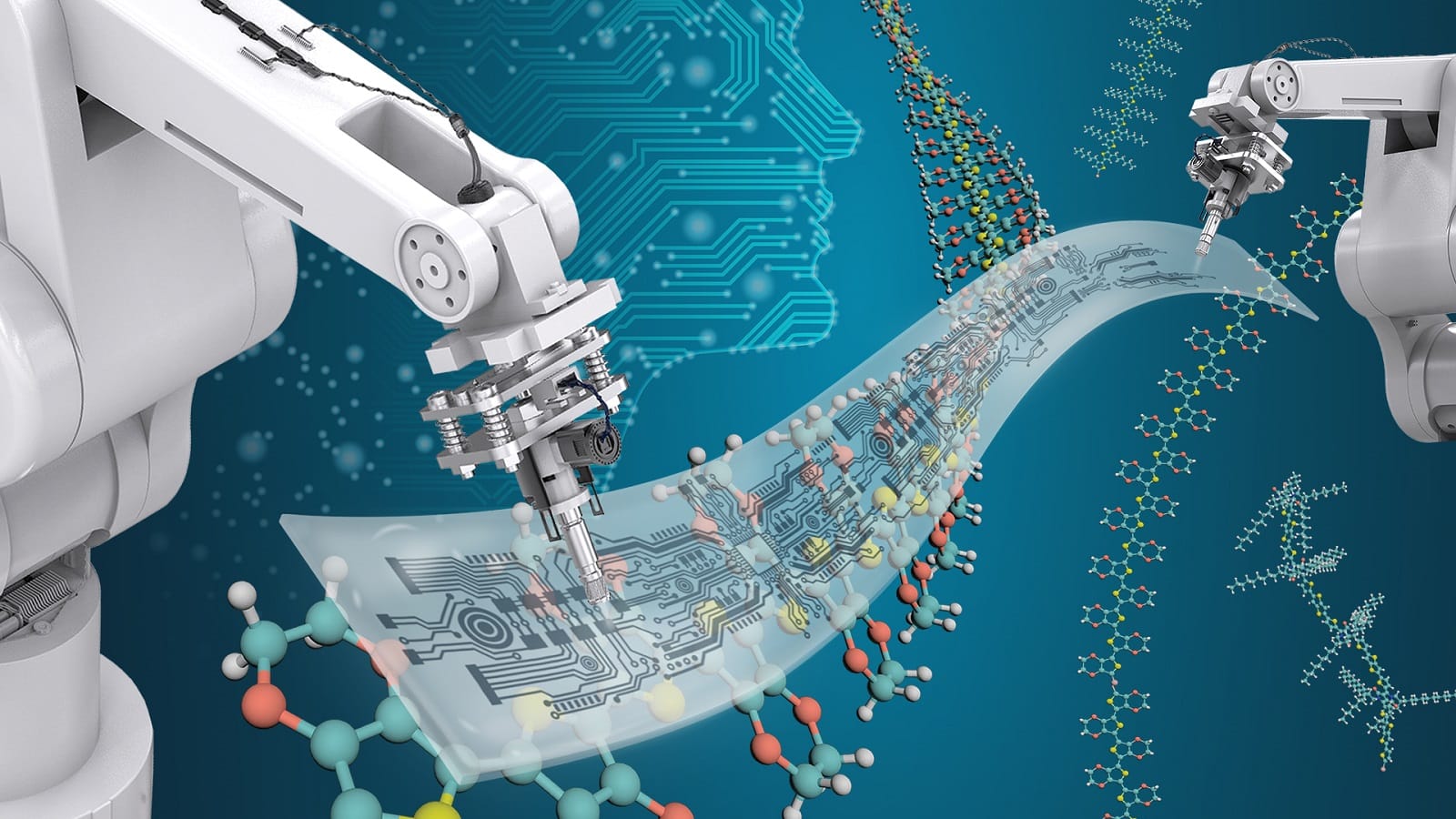

Self-driving laboratories are changing that equation in ways that are no longer speculative. These systems combine AI decision-making with robotic hardware to run experiments continuously, around the clock, without waiting for a human to review the last result before deciding what to try next.

The results are already published and peer-reviewed. At Argonne National Laboratory, a platform called Polybot ran more than 6,000 battery chemical experiments in five months, work that researchers estimate would have taken many years using traditional methods. At Lawrence Berkeley National Laboratory, the A-Lab system successfully synthesized 71 to 74 percent of the new inorganic materials it was given as targets. And in July 2025, a team at North Carolina State University described a self-driving flow chemistry lab in Nature Chemical Engineering. It collects ten times more data per experiment than conventional approaches by replacing static batch methods with real-time dynamic chemical flows.

These are not prototypes awaiting funding. They are operating laboratories producing scientific results today.

What a Self-Driving Lab Actually Is

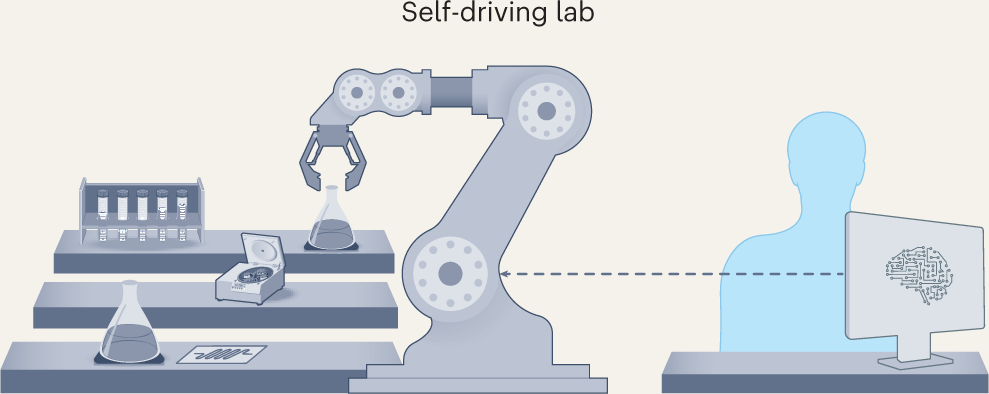

The term "self-driving lab" was coined by analogy to autonomous vehicles, and the analogy is imperfect but useful. Just as a self-driving car uses sensors, AI decision-making, and actuators to navigate without a human at the wheel, a self-driving lab uses instruments, AI algorithms, and robotic systems to navigate experimental space without a human directing each step.

A July 2025 review in Royal Society Open Science describes today's most capable self-driving labs as automating nearly the entire scientific method: hypothesis generation, experimental design, experiment execution, data analysis, and updating hypotheses for subsequent rounds of discovery or optimization.

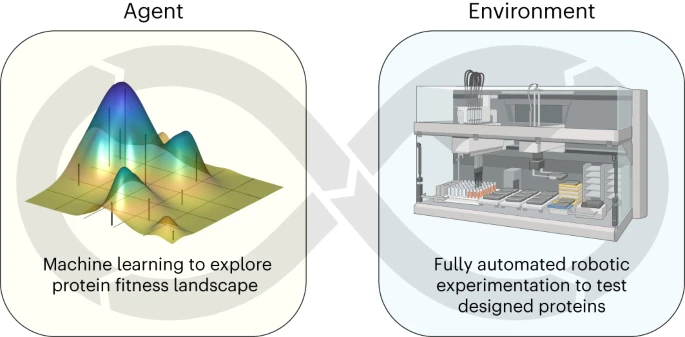

The core architecture is a closed loop. An AI model proposes an experiment, a robotic system executes it, and the results flow back to the model. The model updates its understanding and proposes the next experiment, without any human deciding what to try between cycles. Each cycle can run in minutes rather than days.
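The closed loop described above can be sketched in a few lines. This is a toy under stated assumptions. `run_experiment` is a hypothetical stand-in for the robotic hardware, and its response curve is invented. `AdaptiveStepPlanner` is a deliberately simple adaptive-step search, not the Bayesian models that real self-driving labs use. What the sketch does show is the propose, execute, update structure, with no human choosing the next condition.

```python
import math
from typing import List, Optional, Tuple


def run_experiment(temperature_c: float) -> float:
    """Hypothetical stand-in for the robot and instrument: returns a measured yield (%)."""
    # Invented response surface with a single optimum at 72 C.
    return 100.0 * math.exp(-((temperature_c - 72.0) / 15.0) ** 2)


class AdaptiveStepPlanner:
    """Toy planner: proposes a condition, then updates its internal state from each result."""

    def __init__(self, start: float, step: float) -> None:
        self.best_x = start
        self.best_y: Optional[float] = None
        self.step = step
        self.next_x = start

    def propose(self) -> float:
        return self.next_x

    def update(self, x: float, y: float) -> None:
        if self.best_y is None or y > self.best_y:
            # Improvement: accept this condition and keep moving in the same direction.
            self.best_x, self.best_y = x, y
        else:
            # No improvement: reverse direction and take a smaller step.
            self.step = -self.step / 2.0
        self.next_x = self.best_x + self.step


def closed_loop(planner: AdaptiveStepPlanner, n_cycles: int) -> List[Tuple[float, float]]:
    history = []
    for _ in range(n_cycles):
        x = planner.propose()     # 1. the model proposes an experiment
        y = run_experiment(x)     # 2. the "robot" executes and measures it
        planner.update(x, y)      # 3. the result flows back and the model updates
        history.append((x, y))
    return history


if __name__ == "__main__":
    planner = AdaptiveStepPlanner(start=25.0, step=10.0)
    history = closed_loop(planner, n_cycles=30)
    print(f"best temperature ~ {planner.best_x:.1f} C after {len(history)} cycles")
```

In a real system, `propose` and `update` would wrap a statistical model, and `run_experiment` would dispatch instructions to hardware. The loop itself stays this simple.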

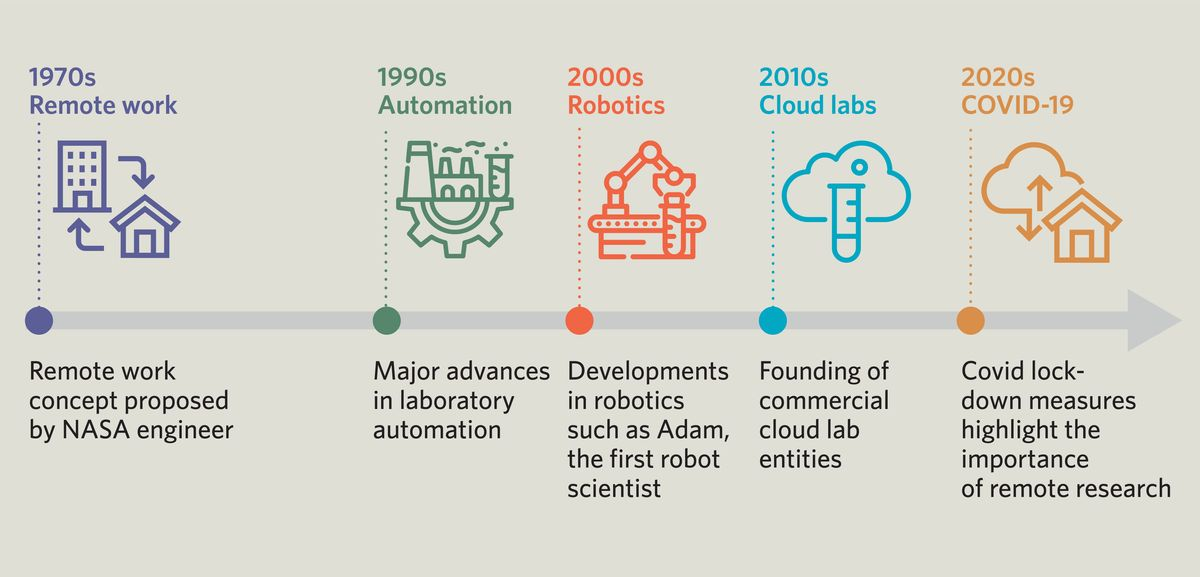

This is a meaningful departure from what laboratory automation has historically meant. Since the mid-1980s, automated labs have used robots to handle liquids, pipette, and process samples faster than humans can. Self-driving labs add a fundamentally different element: autonomous decision-making about which experiments to run, not just faster execution of a predetermined list.

The Experiments That Are Actually Running

The current generation of self-driving labs is producing real scientific results across three domains: materials science, chemistry, and biology.

Materials Science: Battery and Electronics Research

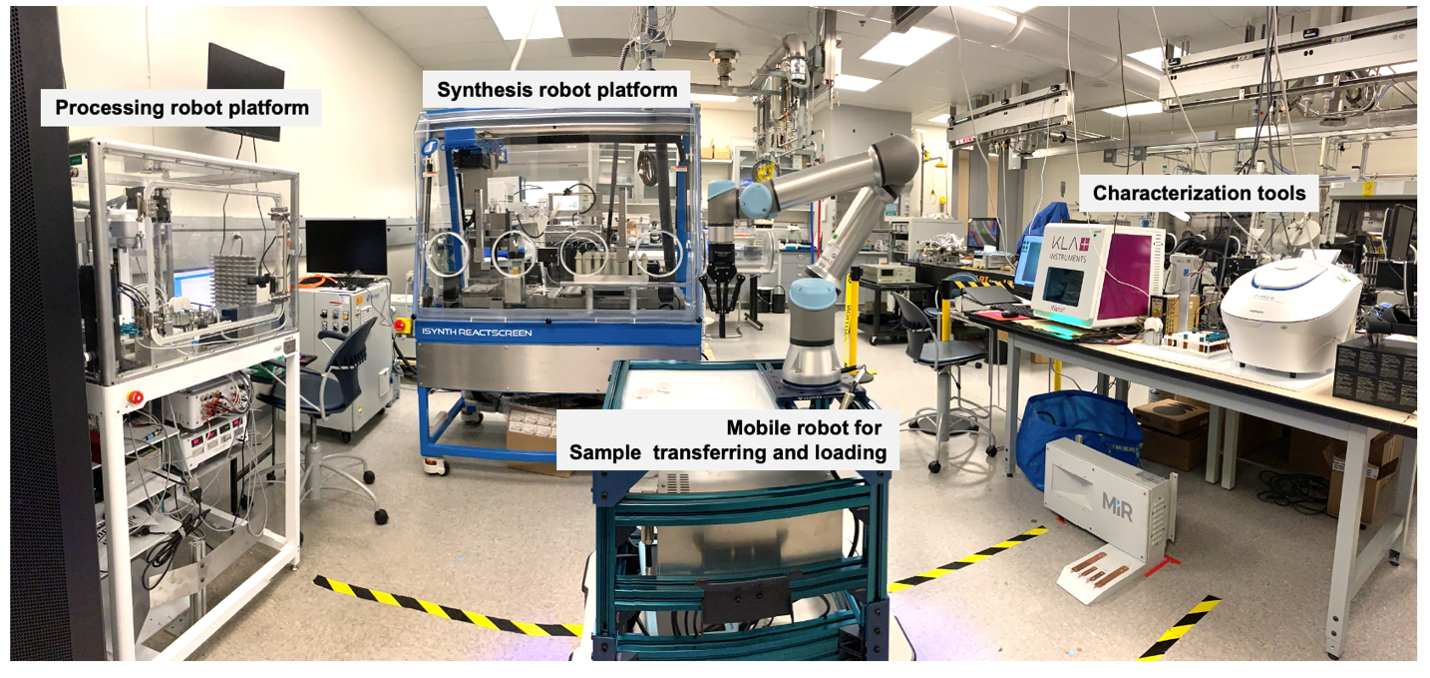

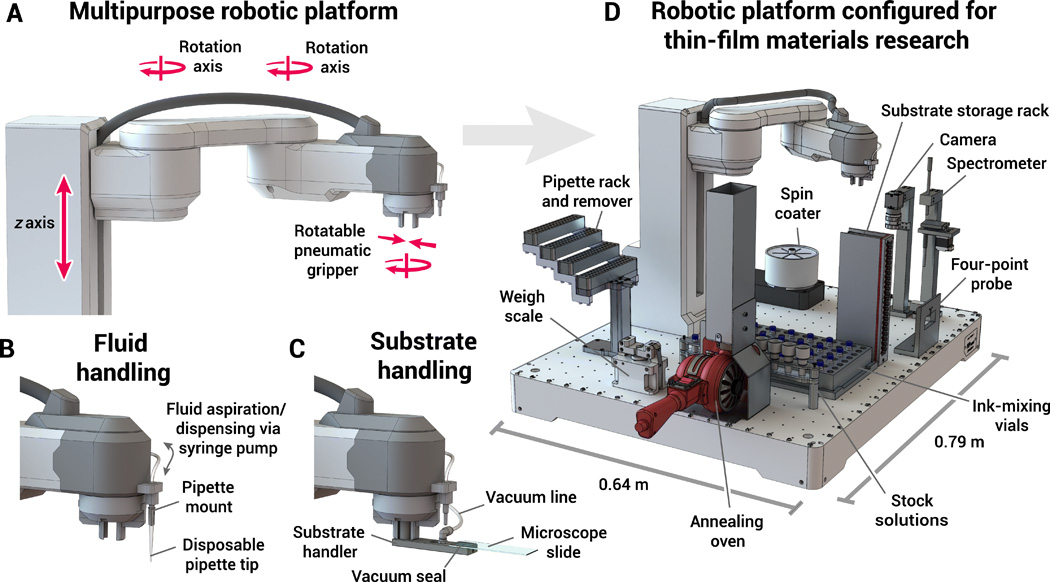

Polybot at Argonne National Laboratory is one of the best-documented US examples of a fully closed-loop self-driving lab in operation. The system consists of three robots (one for synthesis, one for processing, and one mobile robot for transporting samples), analytical instruments, and machine learning software that analyzes results and adjusts parameters for the next round.

Argonne scientist Jie Xu has described the challenge Polybot addresses with a concrete number: synthesizing all possible variations of a target electronic polymer would require roughly half a million different experiments. That is far beyond what any human team could attempt, and even a self-driving lab cannot exhaust the space. Instead, Polybot navigates it intelligently. It uses Bayesian methods and active learning to identify the most informative experiments rather than testing at random.
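This article does not describe Polybot's actual software. The following is therefore only a generic sketch of the Bayesian-optimization idea. It pairs a Gaussian-process surrogate (pure Python, with an assumed RBF kernel and length scale) with the expected-improvement acquisition function, and chooses among a hypothetical grid of 51 candidate conditions. The `hidden_response` curve is invented; in a real lab, each call would be a physical experiment.

```python
import math


def rbf(a, b, length=0.1):
    """Squared-exponential kernel with unit signal variance (assumed hyperparameters)."""
    return math.exp(-0.5 * ((a - b) / length) ** 2)


def solve(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting (small systems only)."""
    n = len(A)
    M = [list(row) + [b[i]] for i, row in enumerate(A)]
    for col in range(n):
        piv = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[piv] = M[piv], M[col]
        for r in range(col + 1, n):
            f = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= f * M[col][c]
    x = [0.0] * n
    for i in range(n - 1, -1, -1):
        x[i] = (M[i][n] - sum(M[i][j] * x[j] for j in range(i + 1, n))) / M[i][i]
    return x


def gp_posterior(xs, ys, x_star, jitter=1e-6):
    """Zero-mean GP posterior mean and standard deviation at x_star."""
    K = [[rbf(a, b) + (jitter if i == j else 0.0) for j, b in enumerate(xs)]
         for i, a in enumerate(xs)]
    k_star = [rbf(x_star, a) for a in xs]
    mean = sum(k * w for k, w in zip(k_star, solve(K, ys)))
    var = rbf(x_star, x_star) - sum(k * w for k, w in zip(k_star, solve(K, k_star)))
    return mean, math.sqrt(max(var, 1e-12))


def expected_improvement(mean, std, best):
    """Expected amount by which a candidate beats the best result so far."""
    z = (mean - best) / std
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))
    return (mean - best) * cdf + std * pdf


def bayesian_optimize(objective, candidates, initial, n_iter):
    xs = list(initial)
    ys = [objective(x) for x in xs]
    for _ in range(n_iter):
        best = max(ys)
        untested = [c for c in candidates if c not in xs]
        scores = [expected_improvement(*gp_posterior(xs, ys, c), best) for c in untested]
        choice = untested[scores.index(max(scores))]  # most promising next experiment
        xs.append(choice)
        ys.append(objective(choice))
    return xs, ys


def hidden_response(x):
    # Invented "property vs. normalized composition" curve with its peak at x = 0.63.
    return math.exp(-((x - 0.63) / 0.15) ** 2)


if __name__ == "__main__":
    grid = [i / 50 for i in range(51)]
    xs, ys = bayesian_optimize(hidden_response, grid, [0.1, 0.5, 0.9], n_iter=10)
    i = ys.index(max(ys))
    print(f"best x = {xs[i]:.2f}, value = {ys[i]:.3f}, tested {len(xs)} of {len(grid)}")
```

Expected improvement rewards candidates that combine a high predicted value with high uncertainty. That trade-off is why the loop can find a strong condition without testing every candidate.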

In one documented campaign, Polybot simultaneously optimized two properties of electronic polymer thin films: conductivity and coating defects. It reached average conductivity levels comparable to the field's highest standards, running continuously without human intervention between experimental cycles.
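This article does not say how the Polybot campaign weighed conductivity against defects. A common way to frame a two-objective problem like this is the Pareto front: the set of candidates that no other candidate beats on both objectives at once. The film names and values below are hypothetical.

```python
from typing import List, NamedTuple


class Film(NamedTuple):
    name: str
    conductivity: float      # higher is better (toy values, arbitrary units)
    defect_fraction: float   # lower is better (toy values)


def dominates(a: Film, b: Film) -> bool:
    """True if film a is at least as good as b on both objectives and strictly better on one."""
    no_worse = a.conductivity >= b.conductivity and a.defect_fraction <= b.defect_fraction
    strictly_better = a.conductivity > b.conductivity or a.defect_fraction < b.defect_fraction
    return no_worse and strictly_better


def pareto_front(films: List[Film]) -> List[Film]:
    """Keep only films that no other film dominates."""
    return [f for f in films if not any(dominates(g, f) for g in films)]


if __name__ == "__main__":
    films = [
        Film("A", 500.0, 0.10),
        Film("B", 800.0, 0.30),
        Film("C", 400.0, 0.20),  # dominated by A
        Film("D", 900.0, 0.50),
        Film("E", 700.0, 0.35),  # dominated by B
    ]
    print([f.name for f in pareto_front(films)])  # ['A', 'B', 'D']
```

A multi-objective planner would then aim its next experiments at pushing this front outward, rather than at improving a single number.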

The A-Lab at Lawrence Berkeley National Laboratory uses a different approach. Starting with a database of 30,000 existing synthesis protocols for known materials, its AI identifies how closely new target materials resemble existing ones and extrapolates synthesis routes from established procedures. In its first published results, A-Lab successfully synthesized 71 to 74 percent of the novel inorganic materials it targeted. The system characterizes results autonomously using X-ray diffraction and feeds those results back into its planning algorithm without human review at each step.
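The source does not specify how A-Lab measures resemblance between materials. The sketch below is a much cruder, hypothetical stand-in for the "find the closest known material, reuse its route" idea. It uses cosine similarity on raw element counts, and the recipe strings are placeholders rather than real synthesis procedures.

```python
import math
from typing import Dict, List, Tuple

Composition = Dict[str, float]


def cosine_similarity(a: Composition, b: Composition) -> float:
    """Cosine similarity between two element-count vectors."""
    dot = sum(count * b.get(element, 0.0) for element, count in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b)


def closest_known_recipe(target: Composition,
                         known: List[Tuple[str, Composition, str]]) -> Tuple[str, float, str]:
    """Return (name, similarity, recipe) for the known material most similar to target."""
    scored = [(name, cosine_similarity(target, comp), recipe) for name, comp, recipe in known]
    return max(scored, key=lambda item: item[1])


if __name__ == "__main__":
    known = [
        ("LiCoO2", {"Li": 1, "Co": 1, "O": 2}, "recipe_LiCoO2 (placeholder)"),
        ("Fe2O3", {"Fe": 2, "O": 3}, "recipe_Fe2O3 (placeholder)"),
        ("NaCl", {"Na": 1, "Cl": 1}, "recipe_NaCl (placeholder)"),
    ]
    target = {"Li": 1, "Ni": 1, "O": 2}  # LiNiO2
    name, score, recipe = closest_known_recipe(target, known)
    print(name, round(score, 3), recipe)  # LiCoO2 0.833 recipe_LiCoO2 (placeholder)
```

A production system would use far richer descriptors than element counts. The retrieval step, picking the nearest known synthesis as a starting point, has the same shape.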

Chemistry: Autonomous Reaction Planning

Coscientist, developed by the Gomes group at Carnegie Mellon University in collaboration with Emerald Cloud Lab, demonstrated that a large language model can design complex chemical experiments, write executable code for a cloud lab, and run those experiments to completion. The system also includes an internet-searching module that the authors found significantly improves synthesis planning by pulling from current literature rather than relying only on training data.

CRISPR-GPT, published in Nature Biomedical Engineering in 2025, extended the agentic automation concept to gene-editing experiments, automating the design and planning of CRISPR workflows that previously required specialist expertise to set up.

Biology: Protein Engineering and Functional Genomics

Adam, one of the earliest and best-known robot scientists, was designed for functional genomics research in yeast. It used abductive inference, Bayesian scoring, and active learning to generate and rank hypotheses. With these, Adam autonomously cycled through dozens of hypotheses and executed hundreds of experiments, at a pace a human-only research team would have found impractical. The system identified twelve genes responsible for catalyzing specific reactions in the metabolic pathways of Saccharomyces cerevisiae.
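Adam's actual scoring scheme is more involved than anything shown here. The sketch below only illustrates the basic Bayesian step of re-weighting competing hypotheses after one experiment. Each hypothesis says "gene X encodes the enzyme", and the experiment deletes one gene and checks whether growth is impaired. The gene names and the 0.9/0.1 likelihoods are hypothetical.

```python
from typing import Dict


def update_posterior(prior: Dict[str, float], tested_gene: str, growth_impaired: bool,
                     p_hit: float = 0.9, p_false_alarm: float = 0.1) -> Dict[str, float]:
    """Bayes' rule over "gene X encodes the enzyme" hypotheses after one knockout experiment.

    p_hit: assumed chance that knocking out the true gene impairs growth.
    p_false_alarm: assumed chance that knocking out any other gene impairs growth.
    """
    unnormalized = {}
    for gene, p in prior.items():
        if gene == tested_gene:
            likelihood = p_hit if growth_impaired else 1.0 - p_hit
        else:
            likelihood = p_false_alarm if growth_impaired else 1.0 - p_false_alarm
        unnormalized[gene] = p * likelihood
    total = sum(unnormalized.values())
    return {gene: w / total for gene, w in unnormalized.items()}


if __name__ == "__main__":
    prior = {g: 0.25 for g in ["gene_A", "gene_B", "gene_C", "gene_D"]}  # hypothetical
    post = update_posterior(prior, "gene_B", growth_impaired=True)
    print({g: round(p, 3) for g, p in post.items()})
    # {'gene_A': 0.083, 'gene_B': 0.75, 'gene_C': 0.083, 'gene_D': 0.083}
```

Repeating this update after every experiment, and choosing the next knockout where it would shift the scores most, is the kind of loop that lets a robot scientist rank hypotheses without a human in between.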

A more recent example comes from the University of Wisconsin-Madison, where the SAMPLE system was used to engineer heat-tolerant glycoside hydrolase enzymes. In four separate runs, the autonomous system produced enzyme sequences that remained stable at temperatures 12°C higher than previously known versions. In doing so, it explored protein sequence space in ways that human researchers would not have approached through intuition alone.

The Real Shift: Removing the Hypothesis Bottleneck

Self-driving labs are often described as making experimentation faster. The more significant change is subtler and more disruptive.

Traditional experimental science is hypothesis-driven. A scientist forms a belief about what might work, designs an experiment to test it, interprets the result, revises the belief, and designs the next experiment. This process is constrained by human intuition, which is itself constrained by training, prior literature, and cognitive bandwidth. Researchers tend to test what they think is most likely to work.

Self-driving labs, particularly those using Bayesian optimization and active learning, do not need a hypothesis in the same sense. They explore experimental space according to what is most informative, not what is most plausible based on prior human reasoning. As a result, they can find solutions in regions of parameter space that a human scientist might never have thought to explore.
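To make the "most informative versus most plausible" contrast concrete, the sketch below compares two choosers. The greedy one always re-tests whatever currently looks best. The other uses UCB1, a multi-armed bandit rule swapped in here as a simple stand-in for the Bayesian acquisition functions real systems use. The three condition values are hypothetical, and measurements are noise-free so that the example is deterministic.

```python
import math
from typing import Callable, List


def greedy_choice(counts: List[int], means: List[float], t: int) -> int:
    """Always re-test the condition that currently looks best ("most plausible")."""
    return means.index(max(means))


def ucb1_choice(counts: List[int], means: List[float], t: int) -> int:
    """UCB1: current mean plus an uncertainty bonus that shrinks as a condition is retested."""
    for i, n in enumerate(counts):
        if n == 0:
            return i  # every condition is tried at least once
    scores = [m + math.sqrt(2.0 * math.log(t) / n) for m, n in zip(means, counts)]
    return scores.index(max(scores))


def run_campaign(choose: Callable[[List[int], List[float], int], int],
                 true_values: List[float], n_rounds: int, first_guess: int = 0) -> List[int]:
    """Run n_rounds experiments and return how often each condition was tested."""
    k = len(true_values)
    counts, means = [0] * k, [0.0] * k

    def observe(i: int) -> None:
        y = true_values[i]  # toy, noise-free "measurement"
        counts[i] += 1
        means[i] += (y - means[i]) / counts[i]  # running average

    observe(first_guess)  # start from the condition a researcher would pick first
    for t in range(2, n_rounds + 1):
        observe(choose(counts, means, t))
    return counts


if __name__ == "__main__":
    values = [0.6, 0.5, 0.9]  # hypothetical yields; condition 2 is the true best
    print("greedy:", run_campaign(greedy_choice, values, 500))  # greedy: [500, 0, 0]
    print("ucb1:  ", run_campaign(ucb1_choice, values, 500))
```

The greedy chooser starts from the plausible first guess and never gathers data anywhere else, so it never learns that condition 2 is better. UCB1's uncertainty bonus forces it to test each option, after which it concentrates on the best one.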

At Argonne and Lawrence Berkeley, autonomous platforms now run experiments where the model proposes synthesis conditions, the robot fabricates and tests them, and the model updates its next proposal in minutes. No human decides what to try next between cycles.

The consequence is not just speed. It is access to non-intuitive regions of chemical and materials space that hypothesis-driven experimentation systematically avoids. As one analysis put it: "It finds solutions in non-intuitive regions of phase space. It exploits interactions you wouldn't test. It converges on materials you can validate but not fully rationalize."

That is a different kind of scientific productivity from anything that laboratory automation has previously delivered.

The Commercial Layer: Cloud Labs and Research-as-a-Service

Alongside the academic and national laboratory programs, a commercial infrastructure is emerging that makes self-driving lab capabilities available without requiring organizations to build their own hardware.

Cloud labs like Emerald Cloud Lab offer researchers access to experimental capabilities on a subscription basis. Researchers upload experimental protocols, the cloud lab executes them using robotic infrastructure, and results return as data. When AI decision-making is layered on top, as Coscientist demonstrated, the result is autonomous research capability available even to institutions that cannot afford to build a closed-loop robotic lab on site.

Lila Sciences, a startup in Cambridge, Massachusetts, operates approximately 22,000 square meters of automated lab space under the name AI Science Factory. The company received UK government funding in 2026 to test whether its self-driving system, called AI NanoScientist, can autonomously synthesize and improve the stability of colloidal nanoparticles. Lila's model is to provide autonomous R&D services to pharmaceutical companies, materials-science firms, and other research-intensive organizations as a contracted capability rather than an installed product. Periodic Labs launched in San Francisco in 2025 with a similar service model.

The Acceleration Consortium, a global network based at the University of Toronto focused on autonomous science, has been working to standardize infrastructure and share tools across institutions. Merck KGaA collaborated with the Consortium to open-source BayBE, a Bayesian optimization tool designed to serve as the AI decision-making layer for self-driving labs, released in December 2023 under the Apache 2.0 license. Making these components open source lowers the entry barrier for institutions that have robotic infrastructure but lack the AI planning layer to close the loop.

What Scientists Are Still Required For

Self-driving labs have consistently raised a question that sits alongside the technical achievements: what happens to the scientists?

The honest answer from both proponents and critics is that the role changes rather than disappears, at least in the near term and in the domains where these systems are currently operating.

Scientists continue to define the goals that self-driving labs pursue. A system optimizing electronic polymer conductivity cannot decide on its own that conductivity matters, or that it should be optimized over synthesizability, stability, or cost. Those decisions require human judgment about what is scientifically valuable and practically useful. The system navigates within a space defined by humans, but it does not define the space itself.

Scientists also handle the situations these systems cannot. Novel experimental conditions, instrument failures, unexpected results that suggest the underlying problem was misconceived, and ethical questions about which research directions to pursue all require human judgment that current systems cannot supply. A 2024 cross-disciplinary workshop on the future of self-driving labs described the most useful near-term model as a hybrid approach: human scientists set goals and interpret results, while machines handle optimization and iteration.

The harder question, raised in the Royal Society Open Science review, is what happens to the scientists who were trained specifically to do the manual experimentation that self-driving labs now handle. The review notes that this workforce and training pipeline question deserves more direct policy attention than it currently receives.

Wrap-Up

Self-driving laboratories have crossed the threshold from concept to operational reality. Polybot at Argonne ran more than 6,000 experiments in five months. A-Lab at Lawrence Berkeley synthesized novel materials at a 71 to 74 percent success rate. SAMPLE engineered enzymes that remained stable at temperatures 12°C higher than previously known versions. These are published, peer-reviewed results from running systems.

The transformation these systems represent is not simply that experiments run faster, though they do. The deeper shift is that the hypothesis-selection bottleneck that has constrained scientific productivity for generations is being bypassed. AI-guided exploration covers parameter space that human intuition avoids, runs overnight without fatigue, and updates on every result rather than waiting for a researcher to clear their schedule.

The role of the scientist is changing, not disappearing. Goal-setting, interpretation, ethical judgment, and novel problem framing remain human work. The mechanical and iterative parts of experimentation increasingly do not. For chemistry, materials science, and biology, this is not a future scenario. It is what is happening in laboratories now.

Frequently Asked Questions

What is a self-driving laboratory?

A self-driving laboratory is a research system that combines AI decision-making with robotic experimental hardware in a closed loop. The AI proposes an experiment, the robotic system executes it, results flow back to the AI, and the AI proposes the next experiment without human intervention between cycles. The most capable examples automate nearly the entire scientific method: hypothesis generation, experimental design, execution, data analysis, and hypothesis updating. The name was coined by analogy to autonomous vehicles.

What have self-driving labs actually discovered or produced?

Published results include Polybot at Argonne National Laboratory running more than 6,000 battery chemical experiments in five months; the A-Lab at Lawrence Berkeley successfully synthesizing 71 to 74 percent of novel inorganic materials it was given as targets; the SAMPLE system at the University of Wisconsin-Madison engineering enzyme variants stable at 12°C higher than previously known versions; and the NC State flow chemistry lab collecting ten times more data per experiment than conventional methods. Adam, one of the earliest robot scientists, autonomously identified twelve genes involved in yeast metabolic pathways.

How do self-driving labs decide which experiments to run?

Most current self-driving labs use Bayesian optimization or active learning algorithms. These identify which experiment would be most informative given the results so far, rather than testing combinations randomly or according to a human-defined list. This lets the system explore experimental space efficiently, including regions that hypothesis-driven experimentation, guided by prior assumptions, might never have reached.

Do self-driving labs replace scientists?

Not in current systems. Scientists define the goals that self-driving labs pursue and interpret the results. A system optimizing material conductivity cannot decide on its own that conductivity is the right property to optimize, or how to weigh it against cost and synthesizability. Scientists also handle unexpected results, instrument failures, and ethical questions about research directions. The most common framing among researchers in this area is that self-driving labs change what scientists do rather than eliminating the role, with the repetitive and mechanical parts of experimentation increasingly handled by the system.

What is cloud lab research-as-a-service?

Cloud labs are remote facilities packed with robotic and analytical equipment that researchers can access on a subscription basis. Rather than building their own automated lab, researchers upload experimental protocols and the cloud lab executes them, returning data. When AI decision-making is layered on top, as demonstrated by Coscientist at CMU, the result is autonomous research capability available to organizations without the capital to build closed-loop robotic labs in-house. Companies like Lila Sciences offer AI-driven R&D as a contracted service to pharmaceutical and materials science organizations.

What are the safety risks of self-driving labs?

The primary concern is dual use. A self-driving lab can plan and execute chemistry experiments without expert human intermediaries, and that same capability could be directed toward harmful synthesis by actors who lack the specialist training that has traditionally served as a barrier. The Coscientist authors noted this directly in their published work. The research community generally distinguishes two cases. Cloud labs with institutional monitoring and controls provide more protection, while fully autonomous, remotely accessible systems present higher risk. The safety infrastructure for this technology is still developing alongside the technical capabilities.
