For years, "physical AI" was a phrase used almost exclusively in keynote presentations and investor decks.

The robots danced on stage. The drones flew in choreographed formations. The wearables tracked heart rate variability and called it intelligence. Then everyone went home and nothing much changed.

2026 feels different, and not just because the announcements are louder.

At CES in January, Nvidia CEO Jensen Huang declared that "the ChatGPT moment for physical AI is here." That line got the headlines. What got less attention were the specifics behind it:

- Boston Dynamics announcing Atlas entering commercial production for Hyundai factories

- Agility Robotics deploying its Digit robot across Toyota's Canadian manufacturing plants

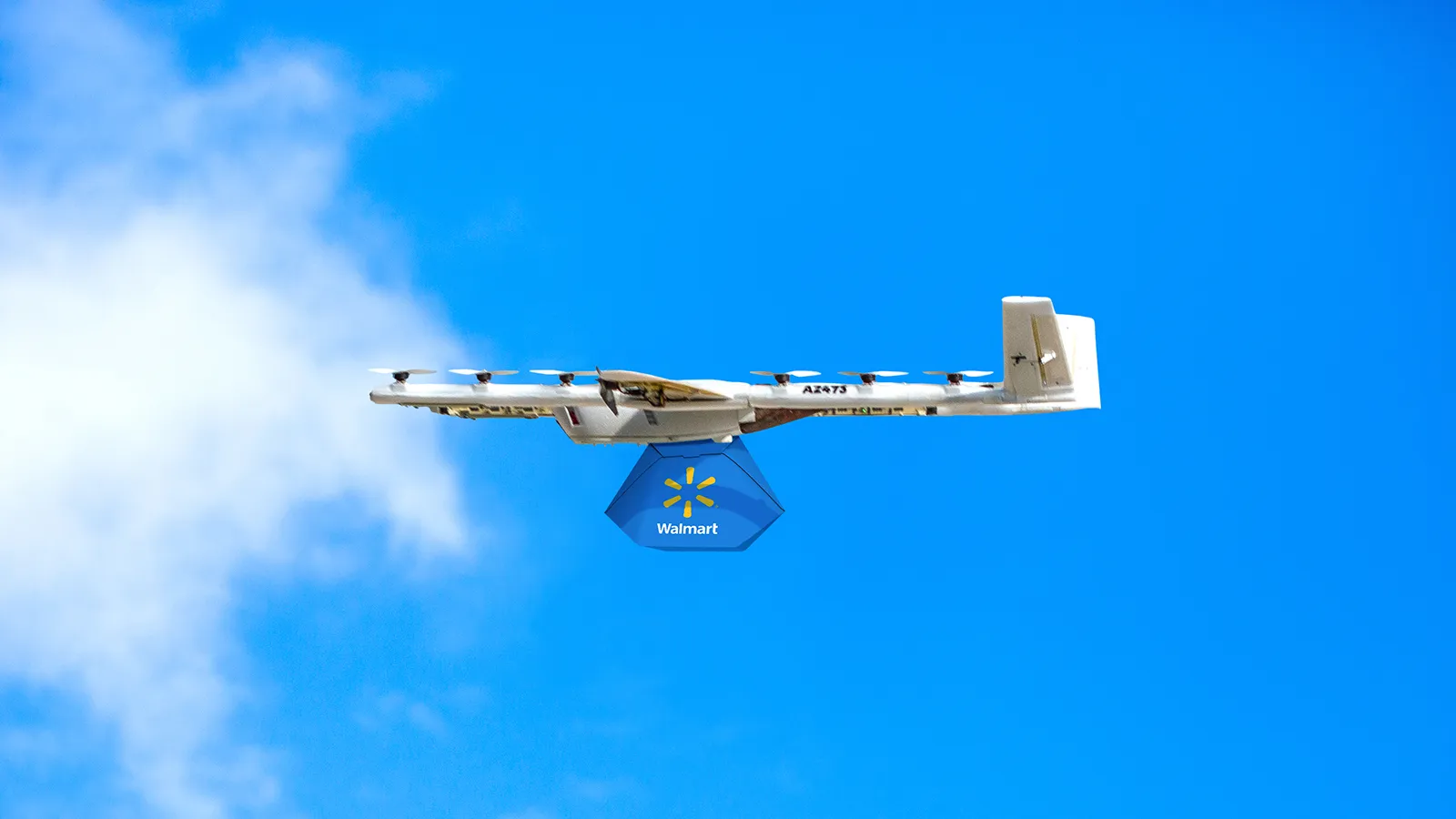

- Wing expanding drone delivery to 150 more Walmart stores

- Nvidia revealing 2,000-plus autonomous delivery bots already operating in US cities, covering food deliveries for roughly $1 per trip

These are not demos. They are production systems, operating at scale, generating revenue and performance data in the real world.

The shift from demonstration to deployment is the defining story of physical AI in 2026. It does not mean the problems are solved. It means the problems have become engineering problems rather than science problems, and that is a fundamentally different situation from where the industry was twelve months ago.

What "Physical AI" Actually Means

The term gets thrown around loosely, so it is worth being precise.

Physical AI refers to AI systems that perceive, reason, and act in the three-dimensional physical world — as opposed to systems that process text, generate images, or answer questions in a purely digital environment.

The defining characteristic is that the AI's outputs directly control physical behavior: a robot arm gripping an object, a drone adjusting its flight path around an obstacle, a wearable adjusting its health recommendations in response to sensor data.

This requires a fundamentally different kind of AI than the systems that power chatbots or image generators. Physical AI needs to handle real-time perception across multiple sensor types simultaneously — cameras, lidar, radar, ultrasonic sensors, IMUs, microphones — and translate that input into reliable physical action in environments it may not have encountered before.

The margin for error is different. A language model that produces a slightly wrong answer can be corrected. A robot that misidentifies an obstacle at the wrong moment cannot.
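The perceive, reason, act cycle described above can be sketched as one step of a control loop. This is a minimal illustration under stated assumptions, not any vendor's architecture: the sensor names, the conservative "closest valid reading wins" fusion rule, and the 0.5 m stop distance are all hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Reading:
    sensor: str         # illustrative names, e.g. "lidar", "radar", "ultrasonic"
    distance_m: float   # estimated distance to the nearest obstacle
    valid: bool = True  # False when the sensor dropped out this cycle

def fuse(readings):
    """Conservative fusion: trust the closest valid obstacle estimate."""
    valid = [r.distance_m for r in readings if r.valid]
    # With no valid sensor at all, report 0.0 so the controller stops.
    return min(valid) if valid else 0.0

def decide(distance_m, stop_distance_m=0.5, cruise_speed=1.0):
    """Map the fused distance estimate to a speed command in m/s."""
    if distance_m <= stop_distance_m:
        return 0.0
    # Slow down linearly inside twice the stop distance.
    if distance_m < 2 * stop_distance_m:
        return cruise_speed * (distance_m - stop_distance_m) / stop_distance_m
    return cruise_speed

def step(readings):
    """One perceive -> reason -> act cycle."""
    return decide(fuse(readings))

print(step([Reading("lidar", 3.0), Reading("ultrasonic", 0.4)]))
```

The design choice worth noticing is the failure default: when every sensor is invalid, the sketch commands a stop rather than guessing, which reflects the asymmetric cost of errors described above.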

Why 2026 Is the Inflection Point

The infrastructure for physical AI has been maturing for years. What has changed is the convergence of three factors now arriving together for the first time:

| Factor | What Changed |
|---|---|
| Hardware costs | Dropped to ranges that make commercial deployment viable beyond a handful of high-margin niches |
| Foundation models | Now generalize across new situations rather than requiring explicit programming for every scenario |
| Simulation tooling | Sophisticated enough to generate training data without millions of hours of real-world robot operation |

The result is an industry moving from "could this work?" to "how do we scale this?" — which is a considerably more commercially interesting question.

Robots: From Research Demos to Factory Floors

The humanoid robot story in 2026 is best understood not as a single breakthrough but as a competitive race that has moved from concept validation to production reality — with different companies at very different stages of that journey.

Boston Dynamics and Atlas: The Most Credible Deployment

Boston Dynamics has begun commercial production of the final version of Atlas and solidified plans to deploy tens of thousands of units at Hyundai Motor Group manufacturing facilities.

Hyundai — Boston Dynamics' majority shareholder — is starting deployment at its Robot Metaplant Application Center. The company has also announced a $26 billion investment in US manufacturing that includes a robotics factory capable of producing 30,000 bots per year.

Atlas now functions in three modes:

- Full autonomy

- Tablet-controlled operation

- VR teleoperation

The useful metric for humanoid robots in 2026 is not whether they can do backflips. It is whether they can pick up a component, carry it across a factory floor, and place it correctly, thousands of times per day, without failing in ways that halt production. That is a different — and considerably harder — engineering challenge than anything captured in a viral demo reel.
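To see why that bar is so high, it helps to convert a per-cycle success rate into expected failures per day. The sketch below assumes independent cycles and a hypothetical 5,000 pick-and-place cycles per day; neither number comes from a real deployment.

```python
def expected_failures_per_day(cycles_per_day, success_rate):
    """Expected number of failed cycles in one day."""
    return cycles_per_day * (1.0 - success_rate)

def prob_clean_day(cycles_per_day, success_rate):
    """Probability of zero failures in a day, assuming independent cycles."""
    return success_rate ** cycles_per_day

# Hypothetical workload: 5,000 cycles per day at three reliability levels.
for rate in (0.99, 0.999, 0.9999):
    failures = expected_failures_per_day(5000, rate)
    clean = prob_clean_day(5000, rate)
    print(f"success={rate}: ~{failures:.1f} failures/day, P(clean day)={clean:.3f}")
```

Under these assumptions, even a 99.9 percent per-cycle success rate still means roughly five failures per day, which is why sustained reliability matters more than any single impressive run.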

Agility Robotics and the Toyota Deal

Agility Robotics arguably has the most consequential commercial deployment story in humanoid robotics this year.

In February 2026, Agility deployed more than 7 Digit units at Toyota Motor Manufacturing Canada in a Robot as a Service deal. Toyota's Canadian operation is one of the company's largest outside Japan — over 8,500 employees and more than 535,000 vehicles produced in 2025.

Agility is also working toward ISO functional safety certification for Digit, which would make it the first humanoid cleared to work cooperatively alongside people without physical barriers. That milestone matters considerably more for real-world commercial scaling than any benchmark performance number.

Tesla Optimus: Behind the Curve but Not Out

Tesla's Optimus narrative in 2025 was a story of missed targets.

Elon Musk's claim that Tesla would produce 5,000 to 10,000 Optimus robots in 2025 ran up hard against the embarrassing reality that Optimus was not only behind the curve but likely lacked any autonomous capabilities at all. The Gen 3 reveal was pushed from late 2025 to early 2026. A key engineering leader departed mid-year.

The 2026 picture is more credible but still primarily internal:

- Gen 3 mass production commenced at the Fremont factory in January 2026

- 22-degree-of-freedom hands demonstrated

- Robots in Tesla's Austin Gigafactory are handling box movement and basic parts sorting — autonomous, but narrow

- First commercial customers expected in late 2026

Tesla's genuine advantage is manufacturing scale. If the software catches up, producing humanoid robots using automotive supply chain economics could be transformative. The "if" is doing a lot of work in that sentence.

Figure AI and the Helix Breakthrough

Figure's Helix 02 represents a full-body autonomy breakthrough. The company's integration of large language models with motor control enables natural language task instruction — telling the robot what to do rather than programming it to do specific things.

Figure raised $1 billion in funding in 2025 and has expanded alpha testing into commercial environments.

The China Factor: 85-90% of Global Shipments

China controls approximately 85 to 90 percent of the humanoid robotics market by shipment, with Unitree targeting 10,000 to 20,000 units in 2026 alone.

Unitree, in particular, has achieved something notable: shipping capable humanoid robots at price points that significantly undercut Western competitors. The company's G1 completed more than 130,000 autonomous steps in minus 47 degrees Celsius conditions — a meaningful demonstration of hardware reliability under extreme conditions.

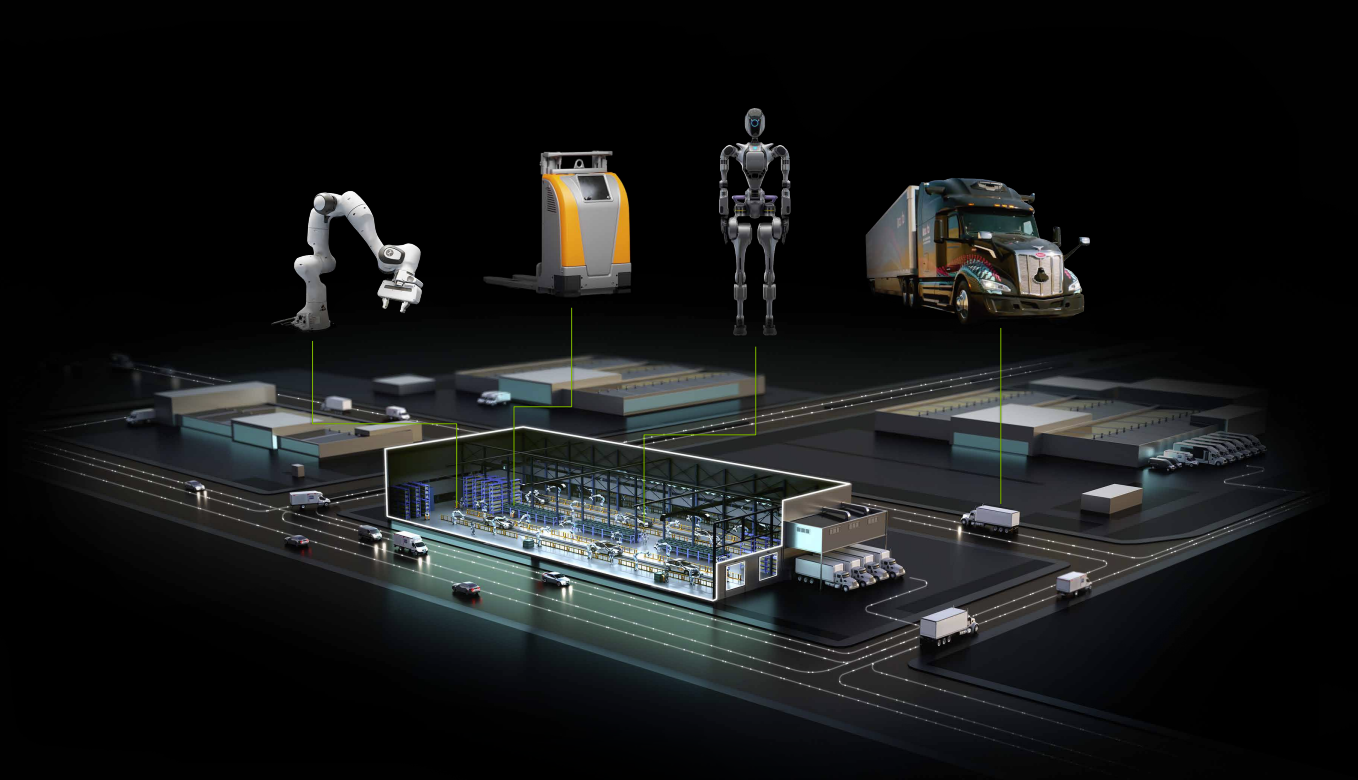

Nvidia's Platform Strategy: The Android of Robotics

What makes 2026's robot story materially different from previous years is not just the hardware. It is the platform infrastructure that now exists to support it.

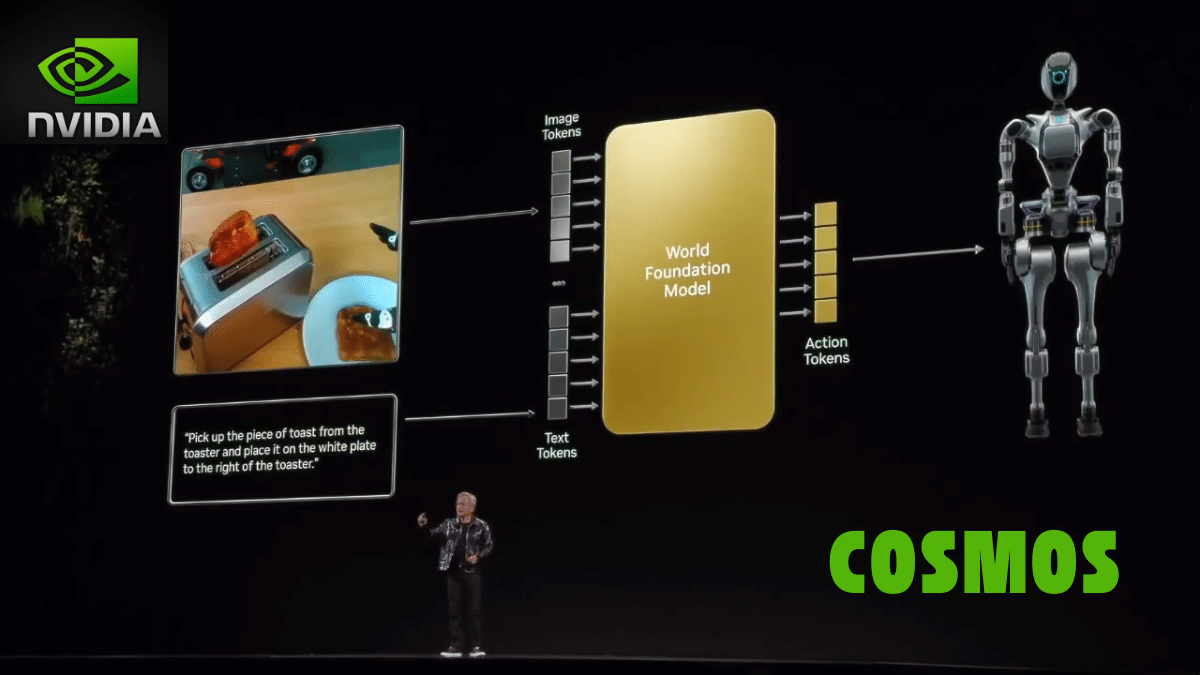

At CES 2026, Nvidia released new open models, frameworks, and AI infrastructure for physical AI, with partners including Boston Dynamics, Caterpillar, Franka Robotics, and NEURA Robotics debuting next-generation machines built on Nvidia technologies.

The Nvidia physical AI stack:

| Component | What It Does |
|---|---|
| GR00T N1.6 | Vision-language-action model for humanoid full-body control |
| Cosmos Reason 2 | Reasoning model enabling machines to see, understand, and act contextually |
| Isaac Lab-Arena | Open-source simulation framework for virtual testing before real-world deployment |
| OSMO | Edge-to-cloud compute framework for simplifying robot training workflows |
| Jetson T4000 | Edge compute module at $1,999/unit; 4x previous-generation performance |

Nvidia is trying to do in robotics what Google did in mobile: own the default development platform, not the devices. The company that owns that layer owns the position everything else depends on.

The collaboration with Hugging Face integrates Isaac and GR00T into the LeRobot open-source framework, connecting Nvidia's 2 million robotics developers with Hugging Face's 13 million AI builders. The open-source strategy mirrors what proved effective in AI software — make the tools freely available, monetize the compute.

Drones: Delivery Is No Longer a Pilot Program

The drone story in 2026 is in some ways the most commercially mature of the three physical AI categories, because it has already cleared the hardest hurdle: it has proven unit economics.

Wing and Walmart: Routine Deliveries at Scale

Wing is expanding its Walmart drone delivery operations, adding service to 150 more US stores and extending coverage from Los Angeles to Miami.

By the end of 2026, Walmart and Wing project that roughly 40 million Americans could have access to the service.

In the Dallas-Fort Worth market — where Wing has operated longest — some customers order drone deliveries multiple times per week. New sites are reportedly hitting 100 daily deliveries within two days of launch. The novelty has worn off, which is exactly what genuine commercial traction looks like.

Wing's two-platform approach reflects the engineering reality of different delivery contexts:

- Platform 1 handles longer-range deliveries from distribution hubs

- Platform 2 focuses on neighborhood-level home delivery, dynamically repositioning between docks based on demand
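Wing has not published how it decides where drones should sit, so the following is only a sketch of what demand-based repositioning could look like: split a fleet across docks in proportion to forecast demand, using the largest-remainder method so the counts always add up. The dock names and demand figures are hypothetical.

```python
def allocate_drones(total_drones, demand_by_dock):
    """Split a fleet across docks in proportion to forecast demand."""
    total_demand = sum(demand_by_dock.values())
    if total_demand == 0:
        # No forecast demand anywhere: treat every dock as equally likely.
        demand_by_dock = {dock: 1 for dock in demand_by_dock}
        total_demand = len(demand_by_dock)
    shares = {d: total_drones * v / total_demand for d, v in demand_by_dock.items()}
    counts = {d: int(s) for d, s in shares.items()}
    leftover = total_drones - sum(counts.values())
    # Hand remaining drones to the docks with the largest fractional share.
    by_remainder = sorted(shares, key=lambda d: shares[d] - counts[d], reverse=True)
    for d in by_remainder[:leftover]:
        counts[d] += 1
    return counts

# Hypothetical evening forecast for three docks.
print(allocate_drones(10, {"north": 30, "south": 50, "east": 20}))
```

A production system would also have to account for battery state, flight time between docks, and weather, none of which this sketch models.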

Zipline: 125 Million Autonomous Miles and Counting

Zipline is valued at $7.6 billion, has flown 125 million autonomous commercial miles, and is expanding manufacturing capacity to produce up to 15,000 drones per year.

The company's trajectory is particularly notable because it achieved commercial scale in a harder environment first — medical supply delivery in Rwanda and Ghana — and is now applying those operational learnings to the US market.

A company that has flown 125 million autonomous miles is not doing R&D anymore. It is doing logistics.

Ground-Level Delivery: The $1 Per Trip Story

Not all drone-equivalent delivery is aerial.

Nvidia highlighted the 2,000-plus sidewalk delivery robots already operating in US cities, covering short food deliveries for roughly $1 per trip. Serve Robotics, operating in Los Angeles and other markets, has demonstrated that the economics of autonomous last-mile delivery work at the neighborhood level without flying.

The combination of aerial and ground-based autonomous delivery represents the emerging infrastructure of a distributed logistics network that human couriers cannot match on cost at volume.

Industrial Drones: The Quiet Revenue Story

Beyond delivery, AI-enabled drones are finding durable commercial value in areas that do not generate consumer headlines but generate consistent revenue:

- Warehouse inventory management: navigating between shelves and scanning barcodes and QR codes autonomously

- Infrastructure inspection: replacing helicopter flyovers and climber inspections in construction and energy

- Agricultural monitoring: large-scale crop and terrain analysis

- Environmental monitoring: water quality assessment in rivers, ports, and coastal areas

The pattern is consistent across physical AI categories: the highest early commercial value comes from replacing dangerous or expensive human tasks in structured environments — not from replicating the full range of human capability in unstructured ones.

The Regulatory Shift That Is Enabling All of This

New US security rules are limiting foreign-made drones, creating pressure on domestic manufacturers to scale. This is simultaneously a headache for the industry and an opportunity for US-based manufacturers who can now compete on a more level playing field.

In Europe, the regulatory picture is maturing in parallel. The European roadmap for large-scale operations in very low-level airspace, in force between 2026 and 2029, is enabling Beyond Visual Line of Sight operations and recurring autonomous deployments that would have been legally complicated to approve just a few years ago.

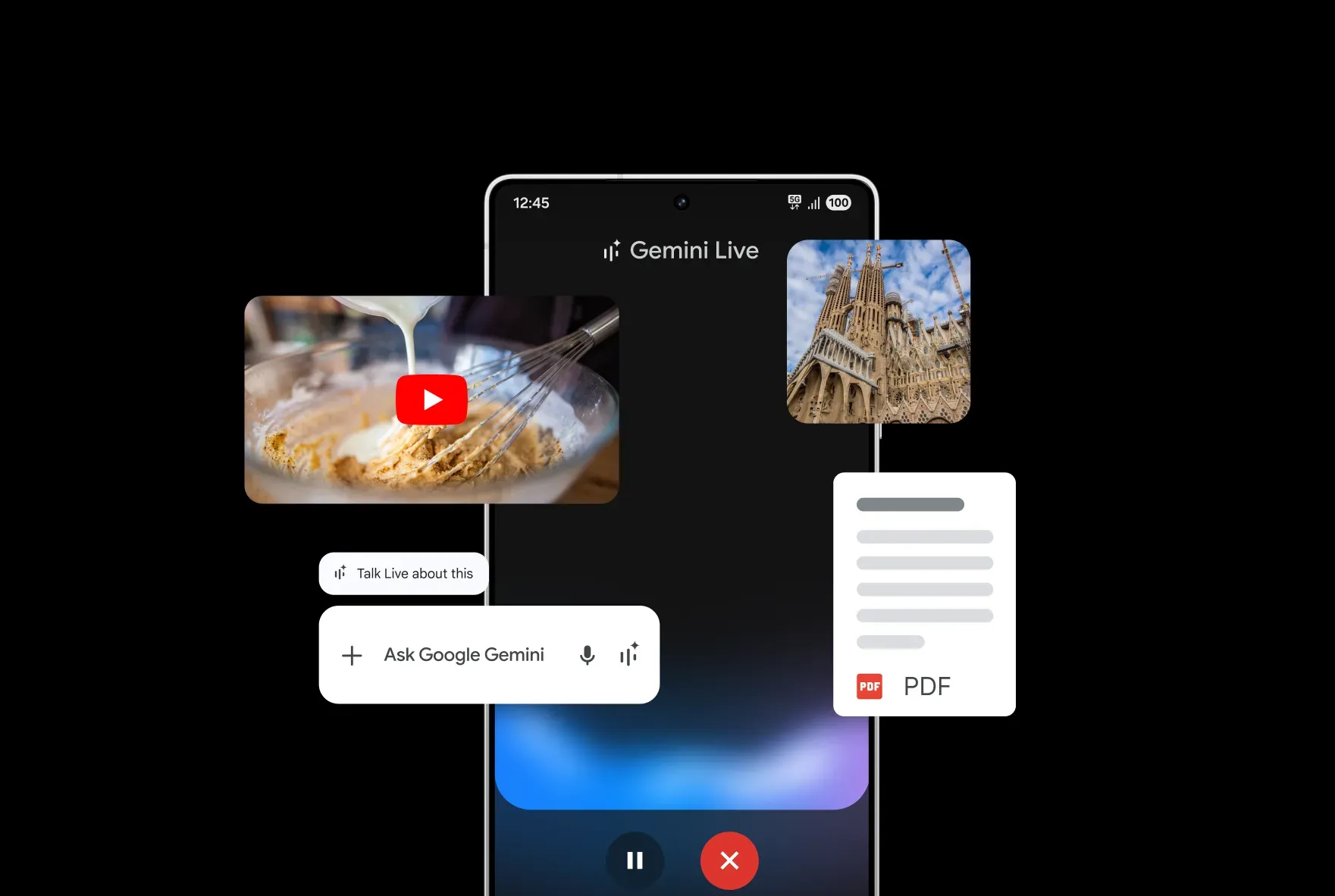

Wearables: The Intelligence Layer Gets Smarter

The wearable technology story in 2026 is not primarily about new form factors. It is about a fundamental shift in what wearables do with the data they collect.

For most of the category's history, wearables were measurement devices that displayed graphs. You could see your heart rate trend. You could see your sleep stages. What you did with that information was largely up to you.

The AI layer being added now changes that into a coaching and prediction system — one beginning to approach what would previously have required a human health professional to interpret.

Smart Rings: 49% Growth and a Market Inflection

Smart ring shipments are on track for a 49% jump in 2025, according to IDC — far outpacing an estimated 6% gain by smartwatches. (Bloomberg)

That growth rate signals a category transition from enthusiast product to mainstream consideration. The reasons are practical:

- Fingers have thinner skin than wrists, giving optical heart rate sensors cleaner signal with less noise

- Rings stay on when watches come off — during showers, sleep, charging

- The absence of a screen is a feature for users who want health tracking without constant notification pressure

Current market snapshot:

| Brand | Market Share (H1 2025) | Key Differentiator |
|---|---|---|
| Oura Ring 4 | 74% | Best-in-class sleep tracking, AI health coaching, metabolic integration |
| Ultrahuman Ring Air | 9% | No subscription, body composition focus |
| Samsung Galaxy Ring | 9% | Android ecosystem integration, no subscription |
| RingConn Gen 2 | 5% | Subscription-free, competitive price point |

The AI component matters here because the core value proposition of a ring is continuous passive monitoring. A ring that generates a number you have to interpret yourself adds modest value. A ring whose AI layer surfaces the right insight at the right time — a warning that readiness is low before a hard workout, a pattern suggesting illness before symptoms appear — is a meaningfully different product.
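What "surfacing the right insight" might mean in practice can be sketched with a simple personal-baseline check: flag a low-readiness day when today's value of a metric falls well below the wearer's own recent average. This is not Oura's or any vendor's algorithm. The metric (overnight heart rate variability in milliseconds), the seven-day minimum, and the z-score threshold of -1.5 are all assumptions for illustration.

```python
from statistics import mean, stdev

def readiness_flag(history, today, z_threshold=-1.5):
    """Return True when today's value sits well below the personal baseline.

    history: recent daily values of one metric (e.g. overnight HRV in ms)
    today:   today's value of the same metric
    Returns None when there is too little data to form a baseline.
    """
    if len(history) < 7:
        return None
    baseline, spread = mean(history), stdev(history)
    if spread == 0:
        return False  # perfectly flat history: nothing to compare against
    z = (today - baseline) / spread
    return z <= z_threshold

week = [62, 65, 60, 63, 66, 61, 64]  # hypothetical HRV readings
print(readiness_flag(week, 55), readiness_flag(week, 62))
```

Comparing against the wearer's own history rather than a population norm is the key design choice here, since resting biometrics vary widely between individuals.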

Oura's most recent platform update added a cumulative stress metric and blood test integrations for US users. Integrating blood test results with continuous biometric monitoring is the beginning of a genuine health intelligence system, not just activity logging.

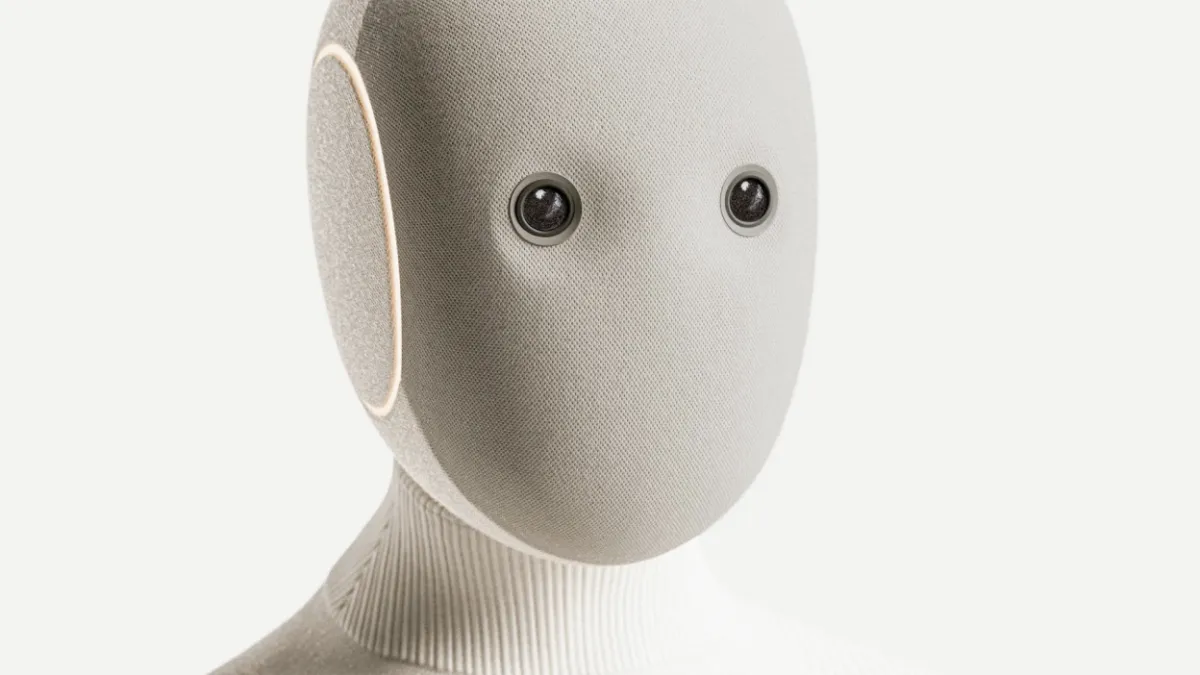

Ambient AI Wearables: Beyond Health Tracking

CES 2026 showcased a category of wearable that goes beyond health monitoring into ambient intelligence — devices designed to understand your context and assist you in real time.

Notable examples from the show floor:

- Omi ($89) — worn on the temple, listens and summarizes conversations in real time

- Looki L1 — a wearable AI camera that captures your day and generates an automatic vlog

- Meta Ray-Ban smart glasses — the most commercially successful ambient AI wearable to date, positioning AI as an everyday companion accessed through audio rather than a screen

Google's AI-enabled smart glasses running Android XR and Gemini are expected to provide serious competition in 2026. Both the Meta and Google products are notable because they make the AI layer useful without a screen — surfacing information, translation, and context through audio rather than demanding your visual attention.

These products carry genuine concerns around privacy and consent that health wearables largely avoid. A device that continuously records and processes audio from your environment requires a different level of societal conversation about appropriate use than one that tracks your heart rate.

Industrial Wearables: A Quieter Story With Real Impact

Away from consumer gadgets, industrial wearables represent one of the more immediately impactful applications of physical AI in 2026.

Wearable fatigue alerts are reducing factory injuries by 15%, according to a Gartner survey.

Exoskeletons and wearable robotic support systems are moving from pilot programs into operational deployment for workers in physically demanding roles. The value proposition is direct and calculable: reduced injury rates, extended worker careers, lower workers' compensation costs. These are metrics that operations managers can evaluate on a spreadsheet, which makes adoption decisions easier than in consumer markets where the value is more diffuse.

The Infrastructure Making All of This Possible

Physical AI systems do not operate in isolation. They depend on a technical stack that has been quietly maturing alongside the headline hardware.

Simulation and Synthetic Data: Solving the Training Problem

One of the most fundamental bottlenecks in physical AI has been data.

Training a robot to handle a new object requires either millions of physical training examples — expensive, time-consuming, and physically wearing on the hardware — or a simulation environment sophisticated enough that behaviors learned virtually transfer reliably to the real world.

Nvidia's Cosmos platform generates physics-based video from combinations of text, image, video, and robot sensor inputs. It is built to model physically plausible interactions and object permanence, and to produce high-quality simulated industrial environments.

The ability to generate diverse synthetic training data at scale is what makes the current pace of physical AI deployment economically viable. Without it, every new deployment would require months of on-site training runs.
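One widely used way to get diversity out of a simulator is domain randomization: vary the physical and visual parameters of each simulated scene so a policy cannot overfit to one setup. The sketch below illustrates that general idea only. It does not reproduce Cosmos, which is a generative world model, and every parameter name and range is a hypothetical choice.

```python
import random

def sample_scene(rng):
    """Draw one randomized scene configuration for a simulated grasp task."""
    return {
        "object_mass_kg": rng.uniform(0.05, 2.0),
        "friction": rng.uniform(0.3, 1.0),
        "light_intensity": rng.uniform(0.2, 1.5),
        "camera_jitter_deg": rng.gauss(0.0, 2.0),
    }

def generate_dataset(n_scenes, seed=0):
    """Generate a reproducible list of randomized scene configurations."""
    rng = random.Random(seed)  # seeded so a training run can be repeated
    return [sample_scene(rng) for _ in range(n_scenes)]

scenes = generate_dataset(1000, seed=42)
print(len(scenes), sorted(scenes[0].keys()))
```

Each configuration would then be handed to a simulator to render and roll out; the seed makes a failing scene reproducible for debugging.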

Cosmos world foundation models (WFMs) have been downloaded over 3 million times on Hugging Face.

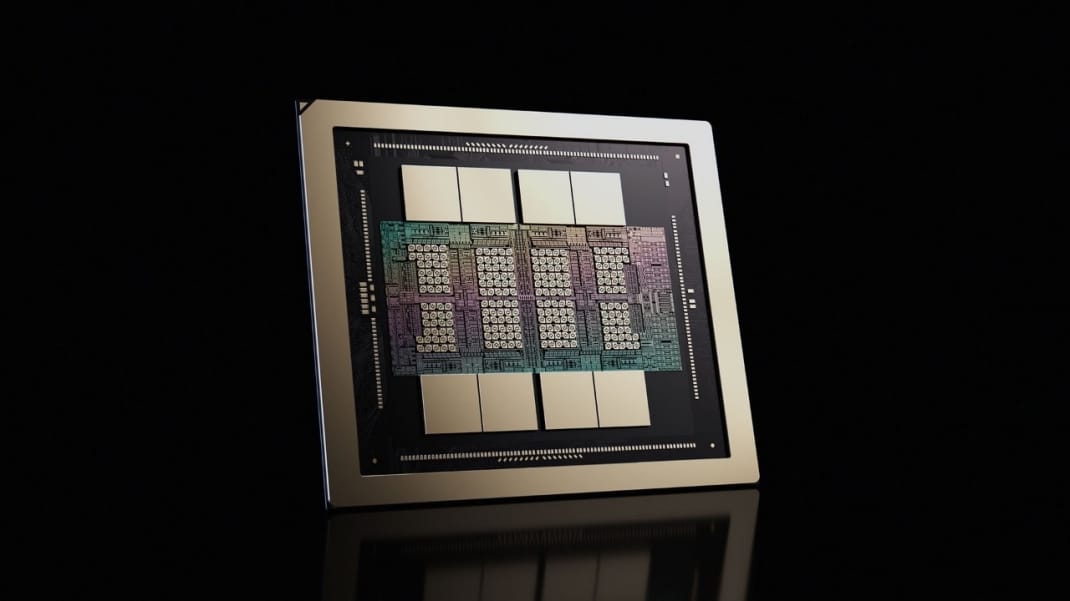

Edge Computing: The Intelligence Has to Run On-Board

Physical AI systems need to process sensor data and make decisions in milliseconds.

Sending that data to a cloud server and waiting for a response is not viable for a robot arm that needs to adjust its grip in real time, or a drone that needs to respond to a sudden wind gust.
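A quick way to make the latency argument concrete is to ask how far a machine travels while it waits for a decision. The speeds and latencies below are illustrative assumptions, not measurements of any product.

```python
def distance_during_latency(speed_m_s, latency_ms):
    """Distance in meters a platform travels before a decision arrives."""
    return speed_m_s * latency_ms / 1000.0

# Hypothetical drone cruising at 15 m/s.
cloud_round_trip_ms = 100  # assumed network plus inference round trip
on_board_ms = 10           # assumed local inference time

print(distance_during_latency(15, cloud_round_trip_ms))  # meters flown "blind" via cloud
print(distance_during_latency(15, on_board_ms))          # meters flown "blind" on-board
```

Under these assumptions the drone covers 1.5 m before a cloud response arrives versus 0.15 m with on-board inference, and the cloud figure gets worse whenever the network degrades.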

Nvidia's Jetson T4000 module — priced at $1,999 at volume — delivers:

- 1,200 FP4 TFLOPS compute

- 64GB of memory

- 70-watt power envelope

That combination of high compute and low power is what makes sophisticated real-time AI inference viable on a robot or a drone running on a battery for hours.
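The power envelope matters because compute is only one slice of a battery budget. A rough runtime estimate, assuming a constant total draw, looks like this; the 70 W figure is the module's envelope quoted above, while the battery size and the load from motors and sensors are hypothetical.

```python
def runtime_hours(battery_wh, compute_w, other_load_w):
    """Hours of operation from one charge at a constant total draw."""
    total_w = compute_w + other_load_w
    if total_w <= 0:
        raise ValueError("total power draw must be positive")
    return battery_wh / total_w

# Hypothetical platform: 1,000 Wh pack, 180 W for motors, sensors, and radios.
print(runtime_hours(1000, 70, 180))  # 4.0
```

In this example the compute module accounts for 70 of 250 W, so doubling its draw would cut runtime from 4 hours to just over 3.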

Digital Twins: Testing Without Breaking Things

Digital twins replicate entire facilities, letting engineering teams test hundreds of robot behaviors overnight without halting production lines.

For industrial deployments, digital twins have become the critical bridge between simulation and deployment. A company planning to deploy robots on a factory floor can build a precise digital replica, train and test behaviors in simulation, identify failure modes before they occur in the real world, and deploy with a substantially de-risked operation.

Business Implications: Who Should Pay Attention Right Now

For Enterprises and Operations Leaders

Physical AI is no longer a research budget item. It is a capital expenditure item — evaluated on the same ROI basis as any other infrastructure investment.

Early data points on returns:

- 25%+ warehouse throughput improvement in trials (Deloitte 2025)

- Robotaxi cost per mile under $0.50 in select Phoenix corridors (Waymo data)

- 15% reduction in factory injuries from wearable fatigue alerts (Gartner)

For logistics and warehousing operations, the business case is becoming clearer: labor shortages in physical fulfillment roles, rising wage costs, and increasingly capable robots at declining price points create a capital substitution dynamic that will play out across the decade.

Companies that build operational familiarity with physical AI systems now will have a meaningful advantage when those systems reach the capability threshold for their specific use cases.
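Evaluating physical AI "on the same ROI basis" usually starts with a payback calculation. The sketch below uses a simple undiscounted payback period; the purchase price, savings, and operating cost are placeholder figures, not data from any vendor or deployment.

```python
def payback_years(upfront_cost, annual_savings, annual_operating_cost):
    """Simple (undiscounted) payback period in years."""
    net_annual_benefit = annual_savings - annual_operating_cost
    if net_annual_benefit <= 0:
        return float("inf")  # never pays back under these assumptions
    return upfront_cost / net_annual_benefit

# Hypothetical: $150,000 robot, $60,000/year saved, $10,000/year to run.
print(payback_years(150_000, 60_000, 10_000))  # 3.0
```

A fuller analysis would discount future cash flows and include integration, downtime, and retraining costs, all of which push the payback period out.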

For Developers and Builders

Robotics software development is now accessible to the much broader population of AI developers who already know how to work with foundation models.

Building a capable robot software stack previously required deep expertise in robotics-specific domains. It increasingly requires general AI development skills plus access to hardware. GR00T N models and Isaac Lab-Arena are now available directly in the Hugging Face LeRobot library — no specialized robotics knowledge required to start experimenting.

Robotics is now the fastest-growing category on Hugging Face, with Nvidia's open models leading downloads.

For Consumers

The consumer timeline for physical AI remains longer than the enterprise timeline.

Home humanoid robots are being pre-ordered — 1X's NEO is taking orders at $20,000 — but widespread consumer adoption of capable household robots is more likely a late-decade story than a near-term one.

What is arriving faster for consumers:

- AI wearables that genuinely improve health decision-making

- Ambient AI assistants that reduce friction in daily workflows

- Drone delivery infrastructure making same-day delivery viable without a human driver

What Still Does Not Work

Honesty requires acknowledging that physical AI in 2026, for all its real progress, remains significantly constrained.

| Limitation | Current Reality |
|---|---|
| Unstructured environments | Robots that work reliably in controlled factories can fail unpredictably in new settings. The home, messy and variable, remains the hardest target. |
| Battery life | Humanoid robots and advanced drones have meaningful operational time constraints before needing to recharge, limiting deployment scenarios. |
| Safety certification | Regulatory infrastructure for robots working alongside people is still being built. Agility's ISO certification push shows how much work remains. |
| Cost | Atlas: $140,000–$150,000. Most capable humanoids: above $100,000. Tesla's $20,000–$30,000 target is a target, not a current reality. |

These are engineering problems, not fundamental barriers. But they are real constraints on the pace and breadth of deployment today.

What Comes Next

The trajectory for physical AI in the next two to three years is toward faster generalization, lower hardware costs, and more aggressive commercial deployment in high-labor-cost sectors.

The current year is when the companies that will define physical AI as a market are establishing the positions they will hold for the decade:

- Nvidia's platform strategy could give it the same position in robotics that it holds in AI training — not the most visible player, but the one everything else depends on

- Boston Dynamics' Hyundai deployment, if it scales as planned, gives it operational data at a volume most competitors cannot match

- China's manufacturing scale, led by Unitree and Agibot, is creating cost pressure on Western hardware that will accelerate the timeline for commercial viability across the whole market

The ChatGPT moment for physical AI may not arrive with a single dramatic release the way ChatGPT itself did. It is more likely to arrive as a series of accumulating data points (delivery robots in more cities, humanoids on more factory floors, wearables that genuinely catch health issues before they become crises) until the cumulative weight of deployment becomes undeniable.

That accumulation is already underway.

Frequently Asked Questions

What is physical AI, and how is it different from regular AI?

Physical AI refers to artificial intelligence systems that directly perceive and act in the three-dimensional physical world, rather than processing text or generating digital content. The key difference is that the AI's outputs control physical behavior in real time: a robot arm's grip, a drone's flight path, or a wearable's recommendations in response to sensor data. That leaves far less tolerance for errors than in language or image generation systems, where a wrong output can simply be corrected.

Which companies are leading in humanoid robots in 2026?

Boston Dynamics (Atlas), Agility Robotics (Digit), Figure AI, and Tesla (Optimus) lead in the US. Chinese manufacturers, led by Unitree and Agibot, account for approximately 85 to 90 percent of global humanoid shipments. Boston Dynamics and Agility Robotics currently have the most credible commercial deployments, with Atlas entering Hyundai factories and Digit deployed at Toyota's Canadian manufacturing plants.

When will drone delivery actually be mainstream for US consumers?

Wing and Zipline are already mainstream in limited geographic markets. Wing covers parts of Dallas-Fort Worth with enough frequency that some customers order multiple times per week. The expansion to 40 million Americans by end of 2026, if it happens on schedule, would represent a genuine inflection point. Full national availability is a longer timeline, constrained more by airspace regulation and infrastructure than by technology.

Are smart rings worth buying in 2026?

For users who prioritize sleep tracking, recovery monitoring, and continuous health data over notification management and display functionality, smart rings now offer a genuinely compelling product. Oura Ring 4 remains the most capable option. Samsung Galaxy Ring is the best choice for Android users who want ecosystem integration. The category has matured enough that the products work reliably, though the subscription model for full AI features in Oura and others is a meaningful ongoing cost.

What is Nvidia's role in physical AI?

Nvidia is attempting to become the default platform for physical AI development, providing open foundation models (GR00T for humanoid robots, Cosmos for world simulation), simulation frameworks, and edge computing hardware. The strategy mirrors its position in AI training: not building robots itself, but providing the infrastructure that robot builders depend on. Global partners including Boston Dynamics, Caterpillar, LG Electronics, and NEURA Robotics are already building on Nvidia's robotics stack.

Will physical AI replace human workers?

In specific, structured tasks — repetitive manufacturing assembly, warehouse fulfillment, last-mile delivery, infrastructure inspection — physical AI systems are already beginning to substitute for human labor in environments where those tasks are hazardous or where labor shortages exist. Wholesale displacement across the labor market is a much longer timeline, constrained by the difficulty of generalizing AI behavior to unstructured environments. The near-term picture is more substitution at the task level than elimination at the job level.

What are the main barriers still holding back humanoid robots?

Cost remains the primary barrier, with most capable humanoids priced between $100,000 and $150,000. Generalization to unstructured environments is a technical barrier that limits deployment beyond controlled factory settings. Battery life constrains operational duration. Safety certification for human-facing deployments is still evolving. And the software to make robots genuinely reliable across the full range of consumer home tasks is not yet mature enough for broad deployment.

How does the Nvidia Cosmos platform accelerate robot development?

Cosmos generates physics-based synthetic training data that allows robots to learn new behaviors in simulation before deploying to hardware. This dramatically reduces the cost and time required to train robots on new tasks. It also includes world models that allow robots to predict how physical environments will behave, enabling more reliable real-world operation. The platform is now available through Hugging Face, making it accessible to the broad AI developer community rather than only specialized robotics teams.
