OpenAI released GPT-5.3 Instant on March 3, 2026, and the announcement carried an unusual degree of candor. The company acknowledged, in its own words, that the previous model's tone could "feel cringe": overbearing, emotionally presumptuous, and prone to the kind of performative empathy that had become a reliable source of user frustration and internet mockery. The update is more than a parameter tweak. It reflects a deliberate repositioning of what the company believes a good AI assistant should sound like.

GPT-5.3 Instant is now the default model for all ChatGPT users. The changes span three interconnected dimensions: conversational tone and flow, web search quality and synthesis, and measurable reductions in hallucination rates across a range of domains. Each dimension addresses documented feedback from real users. Together, they represent one of the more substantive course corrections OpenAI has made to its consumer product since GPT-5's original launch.

The Tone Problem Was Real, and OpenAI Knows It

The frustration that preceded this release was not quiet. On the ChatGPT subreddit, in tech media, and across social platforms, users had been accumulating evidence of a model that treated every interaction as an opportunity for emotional intervention. A question about a dating situation would be met with "you're not broken, and it's not just you." A request for help with a difficult work task might prompt a lengthy preamble about self-care. Phrases like "Stop. Take a breath." entered the cultural record as emblems of an AI that had overcorrected toward therapeutic persona at the expense of usefulness.

Some users were reportedly canceling their subscriptions over the tone, and the ChatGPT subreddit hosted substantial discussion of the problem before OpenAI's Pentagon deal redirected the community's attention.

OpenAI's announcement acknowledged the dynamic directly. The company stated that GPT-5.2 Instant would sometimes refuse questions it should have been able to answer safely, or respond in ways that felt overly cautious or preachy, particularly around sensitive topics. The specific example OpenAI chose to illustrate the change is instructive: a user expressing personal frustration would previously receive a response beginning with "First of all — you're not broken," whereas GPT-5.3 Instant acknowledges the difficulty of the situation and proceeds directly to something useful.

According to OpenAI, GPT-5.3 Instant significantly reduces unnecessary refusals and tones down overly defensive or moralizing preambles before answering the question.

Why This Pattern Develops in the First Place

The drift toward over-caution in AI models is not accidental, and understanding it is useful context for evaluating whether GPT-5.3 actually solves the problem or simply recalibrates it temporarily. Models are trained through reinforcement learning from human feedback, a process in which human raters evaluate responses and signal which behaviors to reinforce. When raters penalize responses that seem insufficiently careful around sensitive topics, models learn to lead with caution. When the range of topics treated as sensitive expands, caution migrates from genuinely high-stakes domains into ordinary conversation.

OpenAI is simultaneously navigating lawsuits that allege its products have contributed to negative mental health outcomes in vulnerable users, which creates genuine organizational pressure toward conservative, protective language. The challenge is that protective language, when over-applied, undermines trust and utility for the overwhelming majority of users who have no such vulnerability. As TechCrunch noted, there is a delicate balance between responding with empathy and providing quick, factual answers; Google, notably, never asks you about your feelings when you are searching for information.

The recalibration GPT-5.3 represents is directionally sound, but it is worth noting that OpenAI has shipped similar-sounding corrections before. Whether this version holds, or whether the balance drifts again under future training pressures, is a question that only sustained use will answer.

What Actually Changed: A Feature-Level Breakdown

Conversational Flow and Directness

The most immediately perceptible change for everyday users is the model's willingness to answer questions without prefacing those answers with extensive framing about what it will and will not say. GPT-5.3 Instant gets right into the response, whereas GPT-5.2 Instant would first explain its safety boundaries at length before eventually answering the question.

This is not a minor cosmetic adjustment. In conversational AI, the pattern of a response shapes user perception of the model's capability and trustworthiness as much as the content of the response itself. A model that consistently hedges before answering trains users to expect hedging, and to read the hedging as a signal that the model may not actually be able to help. Removing that pattern where it is not warranted restores the basic utility contract that makes a conversational assistant worth using.

Web Search Quality and Synthesis

The previous model's web search behavior had a documented problem: it tended either to over-index on retrieved content, producing long lists of links and loosely connected information, or to fail to integrate what it found online with its own knowledge and reasoning. GPT-5.3 Instant balances the two more effectively. For example, it uses its existing understanding to contextualize recent news rather than simply summarizing search results. It is also better at recognizing the subtext of a question and surfacing the most important information upfront, resulting in answers that are more relevant and immediately usable.

OpenAI's own example compares the two models responding to a question about a recent sports signing. GPT-5.2 Instant returned information from the previous offseason that was technically accurate but not responsive to what the user was actually asking about. GPT-5.3 Instant correctly identified the move people were talking about from the most recent offseason, and contextualized that signing against the league's broader trend toward talent concentration and widening payroll disparities.

The distinction here is between a model that retrieves information and a model that reasons about what the user actually needs. The former produces answers that are technically grounded but frequently miss the point. The latter produces answers that are useful even when the underlying information is the same.

Hallucination Reduction: The Numbers

This is where OpenAI has offered specific, measurable claims that are meaningful to evaluate. The company ran two internal evaluations: one focused on higher-stakes domains including medicine, law, and finance, and a second measuring hallucination rates on de-identified ChatGPT conversations that users had flagged as containing factual errors.

On the higher-stakes evaluation, GPT-5.3 Instant reduces hallucination rates by 26.8% when using the web and 19.7% when relying only on its internal knowledge, compared to prior models. On the user-feedback evaluation, hallucinations decrease by 22.5% with web use and 9.6% without web access.

These are OpenAI's own internal evaluations, not independent third-party benchmarks, and the company has a clear interest in presenting its models favorably. That caveat noted, the design of the second evaluation, which uses flagged user conversations as evaluation data rather than controlled benchmark sets, is a meaningful choice. Benchmarks measure performance on anticipated problems. User-flagged conversations capture the actual error distribution that real users encounter in practice, which is a harder and more representative signal.

The gap between web-enabled and knowledge-only results in both evaluations is also informative: the relative reduction is larger with web access, which may indicate that the model is getting better at judging when to trust retrieved information and when to rely on its own reasoning, and at synthesizing the two more reliably. Because OpenAI reports relative reductions without baseline rates, the size of the absolute improvement cannot be determined from these figures.

| Evaluation Type | With Web Access | Without Web Access |
|---|---|---|
| Higher-stakes domains (medicine, law, finance) | 26.8% reduction | 19.7% reduction |
| User-flagged conversation errors | 22.5% reduction | 9.6% reduction |
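To make the reported percentages concrete, the sketch below applies each relative reduction to a baseline hallucination rate. The 10% baseline is purely hypothetical, since OpenAI has not published baseline rates, and the function and variable names are illustrative only. Under that assumption, a 26.8% relative reduction would bring a 10% rate down to about 7.3%, not to a rate 26.8 points lower.

```python
def rate_after_reduction(baseline_rate: float, relative_reduction_pct: float) -> float:
    """Return the error rate that remains after a relative reduction."""
    return baseline_rate * (1 - relative_reduction_pct / 100)


# Relative reductions as reported in OpenAI's internal evaluations.
REPORTED_REDUCTIONS = {
    ("higher_stakes", "web"): 26.8,
    ("higher_stakes", "no_web"): 19.7,
    ("user_flagged", "web"): 22.5,
    ("user_flagged", "no_web"): 9.6,
}

# ASSUMPTION: a hypothetical 10% baseline, chosen only for illustration.
HYPOTHETICAL_BASELINE = 0.10

for (evaluation, mode), pct in REPORTED_REDUCTIONS.items():
    new_rate = rate_after_reduction(HYPOTHETICAL_BASELINE, pct)
    print(f"{evaluation:14s} {mode:7s} {HYPOTHETICAL_BASELINE:.1%} -> {new_rate:.2%}")
```

The same relative reduction yields a smaller absolute change when the baseline is lower, which is why the published figures alone do not say how many fewer errors a user would actually see.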

Access, Availability, and What Comes Next

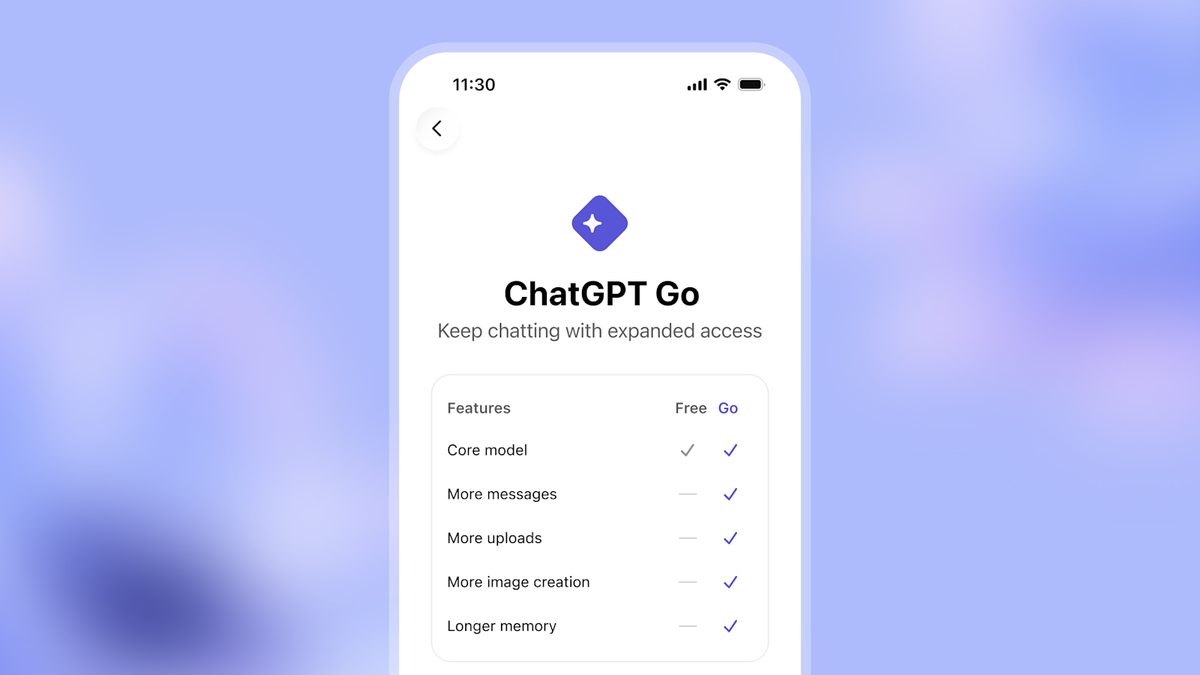

GPT-5.3 Instant is available to all ChatGPT users as of the release date. The model is the default for all logged-in users, with free-tier users able to send up to 10 messages every five hours before the system switches to a mini variant, and Plus users limited to 160 messages every three hours.

GPT-5.2 Instant will remain available to paid users in the Legacy Models section until June 3, 2026, giving organizations and users who depend on its specific behavior a transition window before it is retired. The model is also available to developers through the API under gpt-5.3-chat-latest.
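For developers, a request targeting the new model might look roughly like the sketch below. It only builds the request body and makes no network call. The model identifier comes from the announcement; the `model` and `messages` fields follow the general shape of OpenAI's Chat Completions API, but whether this model is served through that endpoint is an assumption here, and `build_chat_request` is a hypothetical helper rather than part of any SDK.

```python
import json

# Model identifier as quoted in the announcement.
MODEL = "gpt-5.3-chat-latest"


def build_chat_request(prompt: str, model: str = MODEL) -> dict:
    """Build a chat-style request body (hypothetical helper, no network call).

    ASSUMPTION: the model accepts a Chat Completions-style payload
    with a `model` name and a list of role/content `messages`.
    """
    return {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }


# Print the JSON body that would be sent to the API.
print(json.dumps(build_chat_request("Summarize this week's AI news."), indent=2))
```

Keeping the model name in one constant makes it easier to move off a retiring model, or onto a successor, without touching call sites.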

Microsoft simultaneously announced the addition of GPT-5.3 Instant to Microsoft 365 Copilot and Copilot Studio, with priority access for Microsoft 365 Copilot licensed users and standard access for others.

GPT-5.4 Is Already in the Pipeline

OpenAI did not take long to signal that 5.3 is a bridge rather than a destination. Within an hour of the GPT-5.3 announcement, the company posted on X: "5.4 sooner than you think." References to GPT-5.4 had already appeared in Codex pull requests and the model selector in late February, and users reported being pulled into A/B tests for what appeared to be a new version before the official announcement. Reports have since surfaced of a build appearing in Arena under the codename "Galapagos," with speculation circulating that a formal release is imminent.

The iteration pace OpenAI is maintaining is worth observing in its own right. The GPT-5 series has moved from 5.0 to 5.3 within a relatively short window, with each point release targeting specific, user-identified problems rather than sweeping capability improvements. This is a different product development rhythm than the major version releases that characterized the GPT-3 to GPT-4 transition period, and it reflects the realities of competing in a consumer market where user experience is as important as benchmark performance.

The Broader Significance: What "Fixing the Tone" Actually Means

The conversation around GPT-5.3 has focused heavily on the "cringe" framing, which is an accurate description of what annoyed users but undersells the deeper issue the release is addressing. The over-cautious, over-empathetic tone of GPT-5.2 was not an aesthetic quirk. It was a symptom of a training incentive structure that had drifted toward optimizing for the absence of harm signals at the cost of optimizing for genuine helpfulness.

That tradeoff matters at scale. OpenAI's own release notes acknowledged these problems explicitly: tone, relevance, and conversational flow are nuanced problems that do not always show up in benchmarks, but they shape whether ChatGPT feels helpful or frustrating. The implication is that benchmark performance, which OpenAI's models have continued to improve across standard evaluations, is a necessary but insufficient condition for a product that people want to use. The product dimension, meaning what it actually feels like to get an answer, is distinct from the capability dimension, and it requires separate attention.

This distinction has implications beyond OpenAI. The entire field of conversational AI has been quietly struggling with the same tradeoff: models trained to be safe often develop patterns of behavior that are experienced as patronizing, unhelpful, or actively counterproductive. The specific failure modes vary across models and companies, but the underlying cause is similar in each case. GPT-5.3's approach, which pairs explicit attention to tone with evaluation on user-flagged conversations, is one proposed solution. Whether it proves durable is an open question.

What is not in question is that the problem OpenAI is solving with this release is real, widely experienced, and commercially significant. An AI assistant that users find irritating enough to cancel subscriptions over is not winning the long-term consumer market regardless of what it scores on ARC-AGI-2. The shift in priority this release represents, treating experiential quality as a first-class engineering problem rather than a secondary polish pass, is the right move, even if the specific implementation continues to evolve.

Conclusion

GPT-5.3 Instant is a meaningful update to one of the most widely used AI models in the world. Its primary contributions (reduced over-caution, better web synthesis, and measurably fewer hallucinations) address documented friction points that had accumulated enough user frustration to become a genuine product liability. The fact that OpenAI shipped the correction quickly, acknowledged the problem directly in its own announcement, and is already iterating toward 5.4 suggests the company is operating in a tighter feedback loop with its user base than it has in some previous cycles.

The test is not whether the tone improved in the first week of deployment, but whether the training incentives that produced the problem in the first place have been structurally addressed, or whether they will gradually reassert themselves as the model's future training iterations accumulate new constraints. The history of this particular challenge in AI development does not suggest that point releases permanently resolve it. What GPT-5.3 demonstrates is that the problem is taken seriously, and that the tools for managing it are improving. That is not nothing.

Frequently Asked Questions

Q: What is GPT-5.3 Instant and how does it differ from GPT-5.2?

GPT-5.3 Instant is the updated default model for all ChatGPT users, released on March 3, 2026. The three primary improvements over GPT-5.2 Instant are a more direct conversational style that significantly reduces unnecessary refusals and moralizing preambles, better web search synthesis that integrates retrieved information with the model's own reasoning rather than simply returning lists of links, and measurably lower hallucination rates across a range of domains. According to OpenAI's internal evaluations, hallucination rates fell by up to 26.8% (with web access) in higher-stakes domains like medicine, law, and finance.

Q: Who has access to GPT-5.3 Instant?

GPT-5.3 Instant is available to all ChatGPT users regardless of subscription tier. Free users can send up to 10 messages every five hours before the system reverts to the mini model variant. Plus users have a limit of 160 messages every three hours. The model is also available to enterprise users and through the API under gpt-5.3-chat-latest. Microsoft simultaneously rolled out the model to Microsoft 365 Copilot users.

Q: Why did ChatGPT develop such an over-cautious, preachy tone in the first place?

The pattern emerges from how large language models are trained through reinforcement learning from human feedback. When raters penalize responses that seem insufficiently careful around sensitive topics, models learn to lead with caution. Over time, as the definition of sensitive topics expands, protective language migrates from genuinely high-stakes situations into ordinary conversation. OpenAI is also facing litigation alleging its products have contributed to negative mental health outcomes, which creates organizational pressure toward conservative responses. GPT-5.3 Instant is OpenAI's attempt to recalibrate that balance by reducing unnecessary refusals and moralizing preambles.

Q: How reliable are OpenAI's hallucination reduction claims?

OpenAI conducted two internal evaluations: one on higher-stakes domains and a second using de-identified conversations that users had flagged as factually incorrect. The methodology of using real user-flagged conversations is more representative of actual error distribution than standard benchmark sets, though both evaluations were conducted by OpenAI rather than independent researchers. The figures should be treated as directionally credible but not as independently verified performance claims. Third-party evaluations on public benchmarks will provide a more complete picture over the coming weeks.

Q: What happens to GPT-5.2 Instant?

GPT-5.2 Instant remains accessible to paid users in the Legacy Models section of the model picker until June 3, 2026, after which it will be retired. Organizations and users who depend on the specific behavior of GPT-5.2 have a three-month transition window before they are moved to the new default.

Q: Is GPT-5.4 actually coming soon?

OpenAI posted "5.4 sooner than you think" on X within an hour of the GPT-5.3 announcement, and references to GPT-5.4 had already appeared in Codex pull requests and the model selector in late February. A build has since been spotted in Arena under the codename "Galapagos." OpenAI has confirmed that improvements to the Thinking and Pro model tiers are coming in the near term, and these likely correspond to GPT-5.4. No official release date has been announced.

Q: Does GPT-5.3 Instant change how OpenAI handles safety and content refusals?

The update significantly reduces what OpenAI characterizes as unnecessary refusals, meaning cases where the model declined to answer questions it should have been able to handle safely. This is distinct from OpenAI removing safety guardrails for genuinely sensitive content. The framing in OpenAI's own announcement is that GPT-5.3 Instant is better calibrated to distinguish between cases that warrant caution and cases where providing a direct, useful answer is the appropriate response. The model's behavior around genuinely high-stakes content categories remains governed by the same underlying policies.
