On March 9, 2026, OpenAI announced the acquisition of Promptfoo, a small cybersecurity company with just eleven employees that specializes in helping organizations find and fix security weaknesses in AI systems.

Financial terms of the deal were not made public. The entire Promptfoo team is moving over to OpenAI, and their technology will be brought into Frontier, which is the enterprise platform OpenAI uses to run its AI agents.

At first glance, this looks like a fairly standard acqui-hire, the kind of small deal where a big tech company picks up a niche startup mostly to bring in talented people.

But there is a lot more going on here than that.

OpenAI did not buy Promptfoo simply because it came across a useful piece of technology. The real reason is that AI agents, which are at the center of OpenAI's growth strategy, have a security problem that is serious enough to demand dedicated in-house expertise before those agents are rolled out to millions of enterprise customers around the world.

That is the story that actually matters here, and it has direct implications for any company that is currently building AI products, buying AI tools, or even just considering whether to bring AI into their operations.

What Promptfoo Actually Does

Promptfoo was founded in 2024 by Ian Webster and Michael D'Angelo, and the company was built around a single core capability: automated red-teaming of AI systems.

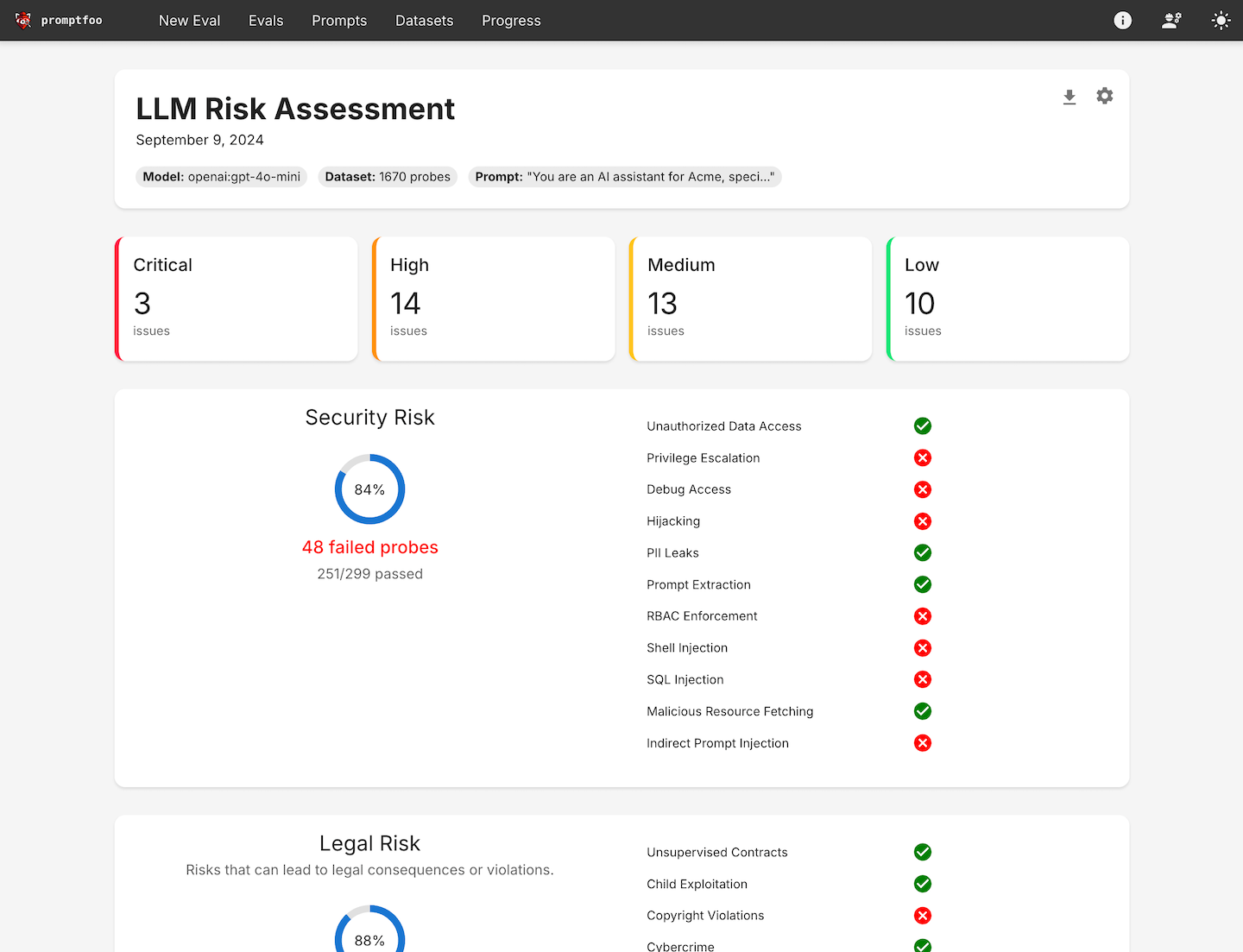

The way the platform works is that it actively tries to break your AI system before attackers get the chance to do it themselves. It runs thousands of carefully designed inputs through an AI system, looking for scenarios where the system misbehaves, whether that means leaking data it was supposed to protect, following instructions it was told to ignore, running commands it should not be running, or generating outputs that violate a company's policies.

When it finds these problems, it produces a report that developers and security teams can use to fix the vulnerabilities before anything goes live.

Here is where Promptfoo stood at the time of the acquisition:

- More than 25% of Fortune 500 companies were already using Promptfoo to test their AI systems

- Over 125,000 developers had used its open-source tools

- The company had raised a total of just $22.68 million at a valuation of $85.5 million, with only 11 people on staff

For a startup that small and that lightly funded, that level of adoption across major corporations is genuinely impressive.

OpenAI has stated that it will keep Promptfoo's open-source project active under the same license it currently uses. The security tooling will also be folded directly into the OpenAI Frontier platform.

The Problem OpenAI Is Trying to Solve

AI Agents Are Fundamentally Different From Chatbots

The timing of this acquisition is not a coincidence. It is directly connected to the biggest strategic shift OpenAI has made in recent years: the move away from AI assistants that answer questions and toward AI agents that actually go out and take actions on your behalf.

A standard chatbot operates in a fairly limited space. You type in a question, it gives you an answer, and the interaction is over. Even if the response is wrong or harmful in some way, the consequences are generally limited to whatever you decide to do with that text.

An AI agent operates on a completely different level.

These systems connect directly to your email accounts, calendars, internal databases, financial systems, and code repositories. Rather than just responding to questions, they schedule meetings, write and send documents, pull in live data, run code, and make operational decisions on your behalf, often with little or no human review before those actions are executed.

The autonomy that makes these systems valuable is also what makes them risky.

Prompt Injection: The Attack You Really Need to Understand

If there is one security concept worth taking away from this piece, it is prompt injection.

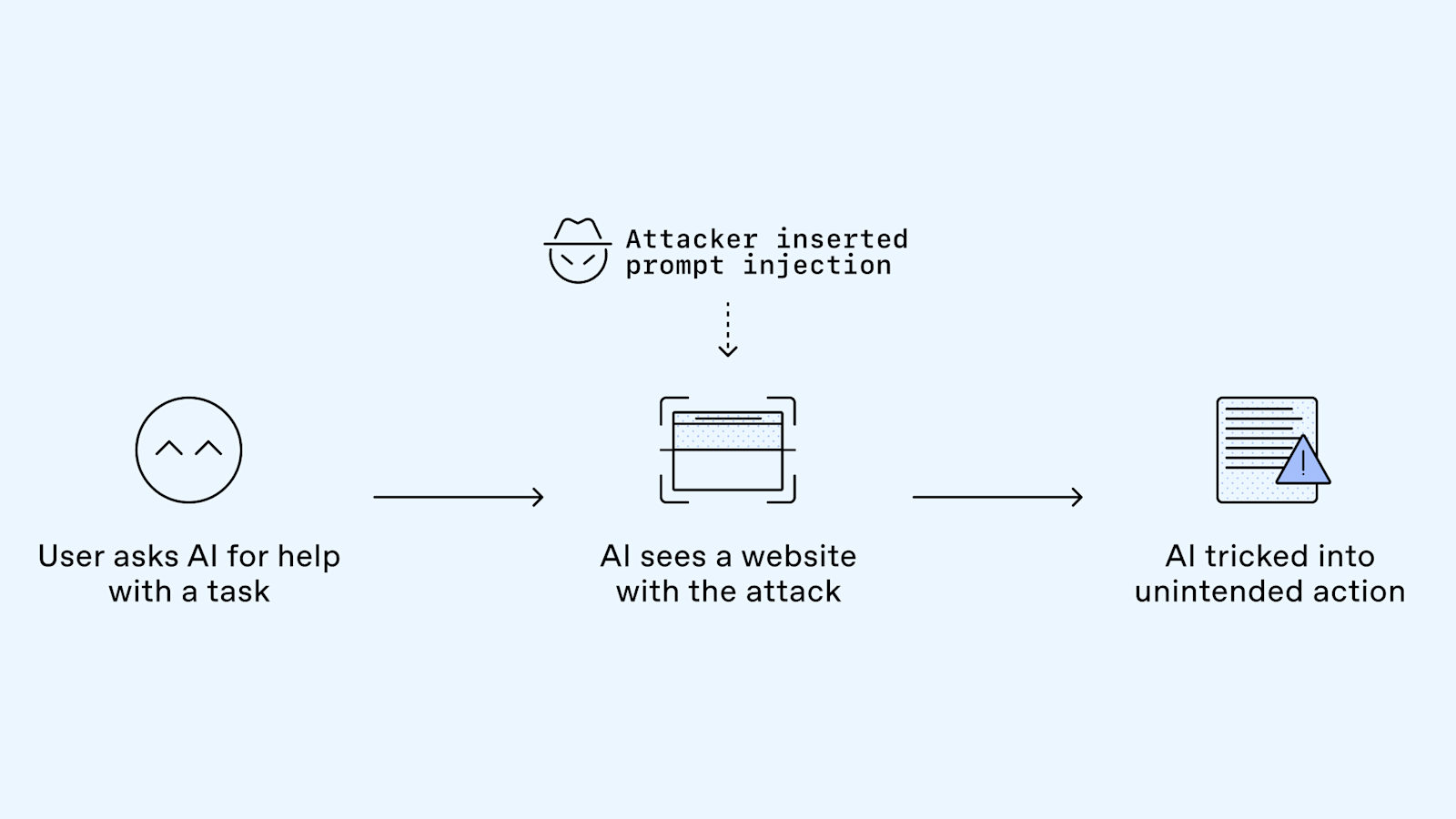

The way a prompt injection attack works is that someone hides malicious instructions inside a piece of content that an AI agent is going to read and process. The attacker does not need to break into your network or compromise your systems directly. All they need to do is get their malicious text into a place where the agent will encounter it, which could be any of the following:

- A document the agent is asked to process

- An email the agent is summarizing for a user

- A webpage the agent visits while conducting research

- An entry in a database the agent queries

When the agent reads that content, it picks up the hidden instructions and executes them, because the model has no reliable way to tell the difference between legitimate data and a command it should be following.

How serious is this problem? According to OWASP's 2025 Top 10 for LLM Applications, prompt injection is ranked as the number one critical vulnerability across AI systems, and it was found in more than 73% of production AI deployments that were reviewed during security audits.

To understand how this plays out in practice, consider what happened in January 2025, when security researchers ran a controlled demonstration against a major enterprise AI system. By embedding malicious instructions in a document that was publicly accessible, they got the AI to do the following without any additional intervention:

- Send proprietary business data out to an external server

- Rewrite its own internal instructions to turn off its safety filters

- Make API calls with elevated permissions that the actual user had never authorized

The system did all of this because it treated the attacker's embedded text as a legitimate instruction from an authorized source. There was no way for it to know otherwise.

OpenAI has openly acknowledged prompt injection as a core security challenge, and the company's internal research teams have been working on the problem for several years. The fact that the most widely deployed AI company in the world has not yet fully solved this vulnerability is not grounds for alarm, but it is a clear signal that the issue deserves serious and sustained attention.

The Readiness Gap Is the Real Story Here

Perhaps the most important context for understanding why this acquisition happened when it did is the enormous gap between how many organizations are deploying AI agents and how many of those organizations have put real security measures in place to protect them.

83% of organizations planned to deploy agentic AI into their business operations in 2026. Only 29% felt genuinely prepared to secure those deployments. Source: Cisco State of AI Security 2026

That is a gap of more than 54 percentage points, and it exists across systems that have been granted access to core business infrastructure.

The threats that have already materialized include some notable documented cases. In one instance, attackers found a weakness in a GitHub Model Context Protocol server that allowed them to inject hidden instructions into an agent's workflow, ultimately triggering the unauthorized transfer of data from private code repositories. Separately, a threat actor linked to the Chinese government reportedly used a jailbroken AI coding assistant to automate between 80 and 90 percent of a full cyberattack chain, handling everything from network scanning to vulnerability discovery to the creation of exploit scripts.

These are not theoretical scenarios. They are incidents that occurred in real production environments.

Why OpenAI Needed This Expertise Internally

Enterprise Clients Are Not Going to Take Security on Faith

OpenAI has been actively selling its Frontier platform to large enterprise customers, including well-known names like Uber, State Farm, Intuit, and Thermo Fisher Scientific.

To keep winning those deals and expand the relationships it already has, OpenAI needs to give those customers something more concrete than reassurances that its models were built responsibly: it needs to offer verifiable security assurance backed by real testing, documentation, and ongoing monitoring.

Organizations in regulated industries like healthcare, financial services, law, and government are not going to grant an AI system broad access to their most sensitive data and systems based on a vendor's word that the product is safe. They need audit trails, compliance records, documented testing methodologies, and a clear process for what happens when something goes wrong. Promptfoo's capabilities are a meaningful part of how OpenAI plans to deliver on all of those requirements.

Keeping the Tools Open-Source Is a Smart Strategic Move

The decision to continue maintaining Promptfoo's open-source tools under their current license is worth paying attention to separately from the rest of the acquisition.

It gives OpenAI the benefit of ongoing contributions from a large developer community that will continue improving the security testing methodology over time. It also sends a clear signal to the market that OpenAI is willing to invest in shared security infrastructure, which matters both to enterprise buyers evaluating the platform and to the broader developer community that has been watching the company's moves closely.

The security research community, which has raised consistent concerns about the risks of powerful closed AI systems, gets something substantive and useful in exchange for the scrutiny that naturally follows a deal like this.

The Bigger Picture: Security Has Become a Competitive Advantage

OpenAI is not the only major player in the AI space treating security as a strategic priority. The Promptfoo acquisition is part of a broader shift happening across the industry, where the leading AI companies are increasingly competing not just on model performance but on how trustworthy their platforms are for high-stakes enterprise use.

Here is how OpenAI's own security investment has escalated over the past year:

- April 2025: OpenAI's startup fund co-led a $43 million Series A round for Adaptive Security, a company that trains employees to recognize AI-generated attacks including deepfakes of executives and other social engineering tactics. This was OpenAI's first-ever investment in a cybersecurity company.

- March 2026: Full acquisition of Promptfoo, representing a significant step beyond passive investment into direct ownership of security expertise.

The rest of the industry is moving in the same direction. Anthropic runs its own security research program and has been candid about the limitations of current AI models, including how they respond to adversarial inputs. Google has invested in AI red-teaming capabilities across both its DeepMind research division and its Cloud business. Microsoft, which has built OpenAI's technology deeply into its Copilot products, has pushed consistently for tighter enterprise AI security standards, in part because its own products face the same underlying class of vulnerabilities.

When you step back and look at the competitive landscape, the race is no longer only about whose model gets higher scores on capability benchmarks. It is increasingly about which platform large organizations can trust enough to give real operational access.

FAQ

What is Promptfoo and why did OpenAI buy it?

Promptfoo is an AI security company founded in 2024 that builds automated red-teaming tools for identifying vulnerabilities in AI systems and large language models. OpenAI acquired the company so it could integrate that security testing technology directly into Frontier, its enterprise platform for AI agents. The deal is a reflection of how urgently the industry needs to address AI agent security as these systems take on greater access to sensitive data and the ability to take autonomous real-world actions.

What is a prompt injection attack and why is it dangerous?

A prompt injection attack involves hiding malicious instructions inside content that an AI agent will read and process, such as a document, an email, a webpage, or a database entry. The goal is to trick the agent into following those instructions instead of completing its intended task. Because AI models have no reliable mechanism for distinguishing between legitimate data and commands, an agent that has been given real system permissions can cause significant damage through a successful injection attack, all without any user being aware that anything went wrong. OWASP currently ranks prompt injection as the number one critical vulnerability in deployed AI systems.

What is the OpenAI Frontier platform?

Frontier is the enterprise platform OpenAI uses to build and deploy AI agents, which the company also refers to as AI coworkers. The platform provides the infrastructure organizations need to create AI systems that can take autonomous actions inside business workflows, connecting to databases, software tools, and enterprise applications. Companies currently using Frontier include Uber, State Farm, Intuit, and Thermo Fisher Scientific. Promptfoo's security testing capabilities will be integrated directly into this platform.

Will Promptfoo's open-source tools still be available after the acquisition?

Yes. OpenAI has confirmed that it will continue maintaining and developing Promptfoo's open-source project under the same license terms that were in place before the acquisition. That means developers who are not part of the OpenAI ecosystem can continue using these tools to test the security of their own AI systems.

How widespread is the AI security readiness problem?

According to Cisco's State of AI Security 2026 report, 83% of organizations had plans to deploy agentic AI into their business operations, but only 29% felt genuinely prepared to secure those deployments properly. A separate analysis found that only around 34% of enterprises have AI-specific security controls in place, and fewer than 40% conduct regular security testing on their AI systems or agent workflows. The gap between adoption and readiness is one of the defining risk issues in the AI industry right now.

What other cybersecurity moves has OpenAI made recently?

In April 2025, OpenAI's startup fund co-led a $43 million Series A investment in Adaptive Security, a company that creates simulated AI-generated attacks, including audio and video deepfakes, to help organizations train employees to recognize social engineering attempts. That deal was OpenAI's first investment in a cybersecurity company. The acquisition of Promptfoo less than a year later represents a meaningful step up from passive investment to direct ownership and integration of security expertise.

What is the difference between traditional cybersecurity and AI security?

Traditional cybersecurity is primarily focused on protecting systems from known categories of attack, including malware, unauthorized access attempts, and network-level intrusions. AI security deals with a different set of vulnerabilities that arise from the fundamental way language models process information, specifically the fact that they cannot reliably tell apart data and instructions. This makes them vulnerable to adversarial manipulation through the content they read and process. Standard security tools like firewalls and traditional input validation were not designed to address this class of problem, which is why AI security has developed into its own specialized field.

Should my business be worried about AI agent security right now?

If your organization is running AI agents that have access to internal systems, customer data, or the ability to take actions on your behalf, then yes, this is something you should be actively thinking about. The right approach starts with the principle of least privilege, meaning agents should only have access to the specific tools and data they need for the task they are performing. Beyond that, organizations should be running regular security evaluations of how their agents behave, monitoring for anything out of the ordinary, and requiring human sign-off before agents take high-stakes actions. From a security standpoint, an AI agent with broad system access should be treated with the same level of care and scrutiny you would apply to any other highly privileged account in your environment.

Related Articles