Four days separated two of the most consequential AI hardware announcements in recent memory.

On March 16, Jensen Huang took the stage at GTC 2026 in San Jose and declared that Nvidia sees at least $1 trillion in purchase orders for its Blackwell and Vera Rubin chip platforms through 2027. That figure doubled the $500 billion forecast he had given at the same conference a year earlier. On March 21, Elon Musk walked into a defunct power plant in Austin and announced Terafab: a $20 to $25 billion joint chip fabrication facility between Tesla, SpaceX, and xAI, targeting one terawatt of annual AI computing power, produced at 2-nanometer process nodes, at a scale he called "the most epic chip-building exercise in history by far."

One announcement comes from the company that currently controls roughly 85 percent of the AI accelerator market and is booking orders faster than any semiconductor company in history. The other comes from a man who has never fabricated a chip.

Both deserve serious analysis, which means treating them differently. One is a live, shipping, revenue-generating business that just raised its own revenue forecast by $500 billion in twelve months. The other is a construction announcement for a facility that has never manufactured anything and is targeting specifications that would take the most experienced chipmakers on Earth years to achieve.

Understanding the actual competitive landscape of AI compute requires holding both of these truths simultaneously, alongside a third dimension that neither headline fully captures: the hyperscalers, including Google, Amazon, Meta, and Microsoft, are quietly eroding Nvidia's position from a direction Terafab does not address at all.

What Nvidia Actually Announced at GTC 2026

Jensen Huang did not simply announce faster chips at GTC 2026. The more accurate framing is that he announced Nvidia's intention to graduate from chip supplier to AI infrastructure architect, and backed that ambition with a product roadmap, an acquisition, and a demand number designed to silence questions about the durability of the company's position.

The $1 trillion figure: Huang was specific about what this covers. The trillion dollars is a combined purchase orders figure for Blackwell and Vera Rubin through 2027, not speculative demand but "high confidence, strong visibility, demand forecast, purchase orders," as he described it to analysts after the keynote. "It's not Feynman, it's not Rubin Ultra. It's not any of those things. It's not Vera stand-alone. It's not Groq. Blackwell plus Rubin, we have high confidence, strong visibility, demand forecast, purchase orders of $1 trillion plus."

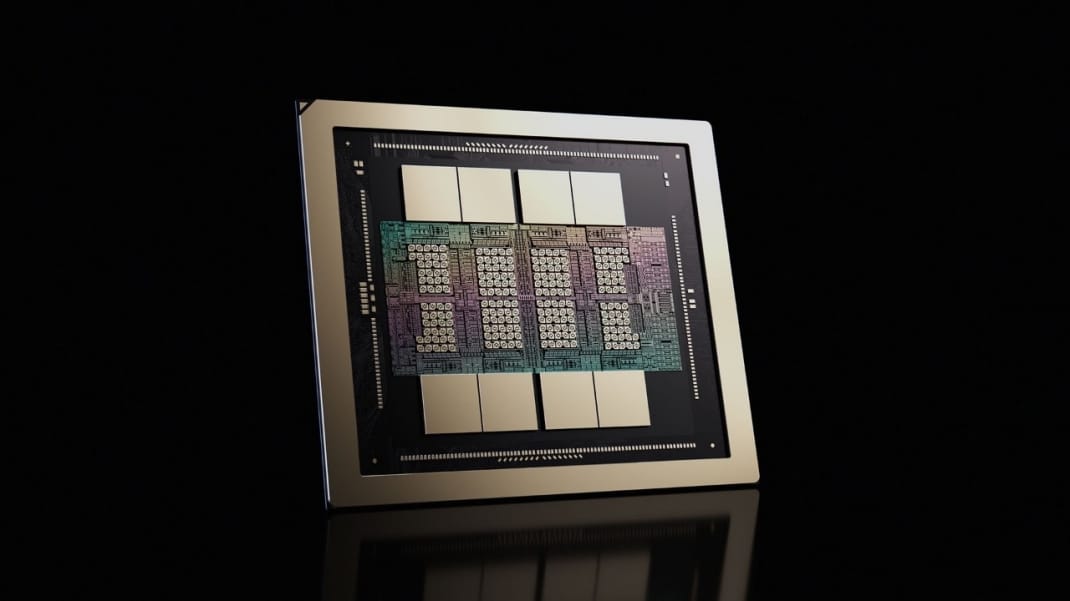

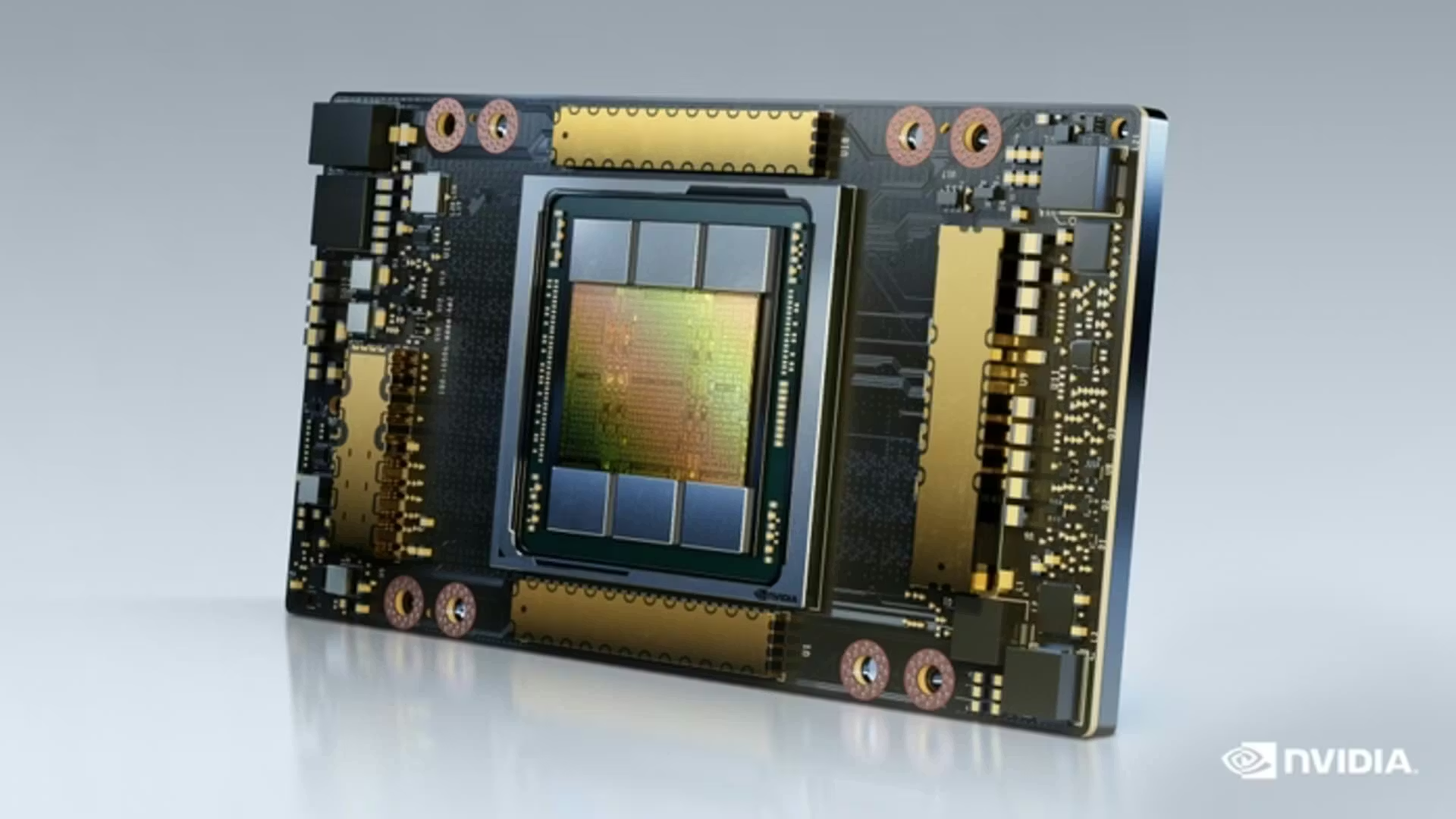

Vera Rubin: The platform Nvidia calls a generational leap consists of seven chips across five rack-scale systems. Compared to Grace Blackwell, Vera Rubin delivers 10 times more performance per watt at one-tenth the cost per token, according to Nvidia's own benchmarks. Vera Rubin NVL72, the center of the platform, pairs 72 Rubin GPUs with 36 Vera CPUs in a single chassis designed as a drop-in upgrade for Blackwell installations. The first Vera Rubin system is already running in Microsoft Azure. Full customer shipments begin later in 2026.

The Groq acquisition: In December 2025, Nvidia completed a $20 billion purchase of Groq's intellectual property, its largest deal ever. The rationale matters. Groq's Language Processing Unit architecture was designed to address the one genuine criticism competitors had landed against Nvidia: GPUs handle low-latency inference poorly. The Groq 3 LPX rack holds 256 LPUs alongside a Vera Rubin system, with Nvidia's Dynamo software routing token generation between the two architectures automatically. Huang claimed the combined system achieves 35 times higher throughput per megawatt versus Blackwell alone. The Groq acquisition addressed the competitive argument that Nvidia's architecture had a structural weakness. Nvidia's response was to buy the company that had made that argument most effectively.

The roadmap cadence: Nvidia has shifted to an annual architecture release cycle, a deliberate strategy Huang has described as making himself "chief revenue destroyer," accelerating obsolescence of previous generations before competitors can match current ones. After Vera Rubin in 2026 comes Vera Rubin Ultra in 2027, then Feynman in 2028. The Feynman platform, previewed at GTC, integrates 144 GPUs in vertical compute trays (the Kyber rack architecture) for increased density. Nvidia is not just shipping hardware; it is releasing architectural generations faster than competitors can respond.

CUDA at 20: Huang spent significant time at GTC marking the 20th anniversary of CUDA and explaining why it remains the central strategic asset of the business. The CUDA ecosystem spans every cloud, every major AI research institution, and is embedded in the training infrastructure of every major frontier AI lab. Nvidia's data center revenue reached $51.2 billion in the most recent quarter, up 66 percent year over year, representing 90 percent of total company revenue of $57 billion. The business is not theoretical.

What Terafab Actually Is

Musk's announcement was real, recent, and backed by a specific dollar figure. It was also, by any fair reading of semiconductor industry history, extremely early-stage.

The stated ambition: Terafab is a joint venture between Tesla, SpaceX, and xAI. The facility will be built near Tesla's Gigafactory in Austin and is designed to produce 2-nanometer chips at high volume. Musk targets 100,000 wafer starts per month initially, scaling toward one million, with the stated goal of producing one terawatt of annual AI computing capacity. At full stated capacity, Terafab would represent roughly 70 percent of TSMC's entire current global output from a single facility.

The immediate context: Musk confirmed that Tesla's fifth-generation AI chip, called AI5, will begin small-batch production at Terafab later in 2026 with volume production in 2027. xAI, now a subsidiary of SpaceX following a February 2026 acquisition, operates the Grok AI system and has a Memphis data center already running at two gigawatts of compute capacity. The strategic rationale is genuine: Musk's companies face compute demand from three directions simultaneously (vehicles and robots for Tesla, AI training and inference for xAI, radiation-hardened chips for SpaceX satellites), and existing foundry relationships with TSMC and Samsung cannot meet that timeline on his terms.

The claimed economics: Musk asserts Tesla's chip design could consume one-third the power of Nvidia's Blackwell GPU while costing roughly one-tenth as much to produce. These claims have not been independently validated and are, at this stage, design targets rather than manufacturing results.

The execution challenge: This is where the Battery Day parallel becomes relevant. In September 2020, Tesla announced a plan to manufacture its own battery cells with revolutionary efficiency and cost. By 2025, cell manufacturing remains far from the stated targets, and Musk publicly acknowledged in mid-2025 that the Dojo 2 training supercomputer was "an evolutionary dead end," disbanded the team, and pivoted Tesla toward buying more chips from Nvidia and AMD.

Building a 2-nanometer fab from scratch is, by most industry accounts, one of the hardest technical challenges in existence. A single 2nm fab with 50,000 wafer starts per month costs roughly $28 billion and takes approximately 38 months to construct in the United States under favorable conditions. TSMC spent $165 billion and several decades building its capacity at advanced nodes, with Arizona Fab 21 still not reaching 2nm production until the 2025 to 2026 timeframe. Musk gave no specific timeline for Terafab's volume production, and Tesla ended 2025 with $44 billion in cash, against a capital expenditure plan already exceeding $20 billion that, as Tesla's CFO acknowledged in January, did not yet include the full Terafab cost.

Terafab is a project initiation, not a factory opening. Small-batch production of AI5 chips in late 2026 and volume production in 2027 are the nearest-term milestones with any specificity attached. Everything at the Terafab scale is procurement paperwork at this point.

The competitive pressure it does create, even now: A Terafab that reaches even 30 percent of its stated capacity would introduce a new source of advanced AI compute into a market where Nvidia's ability to price Blackwell and Vera Rubin at current levels depends on there being no credible alternative foundry. Lower upstream compute costs eventually compress the API and subscription pricing that everyone pays for AI services. The announcement alone creates pressure on pricing dynamics, even if the facility takes years to realize.

The Third Force: Hyperscaler Custom Silicon

The most consequential competitive threat to Nvidia's long-term market share is not Terafab. It is the systematic effort by the hyperscalers to replace Nvidia GPUs with custom silicon for their own internal workloads.

The scale of this shift is already visible.

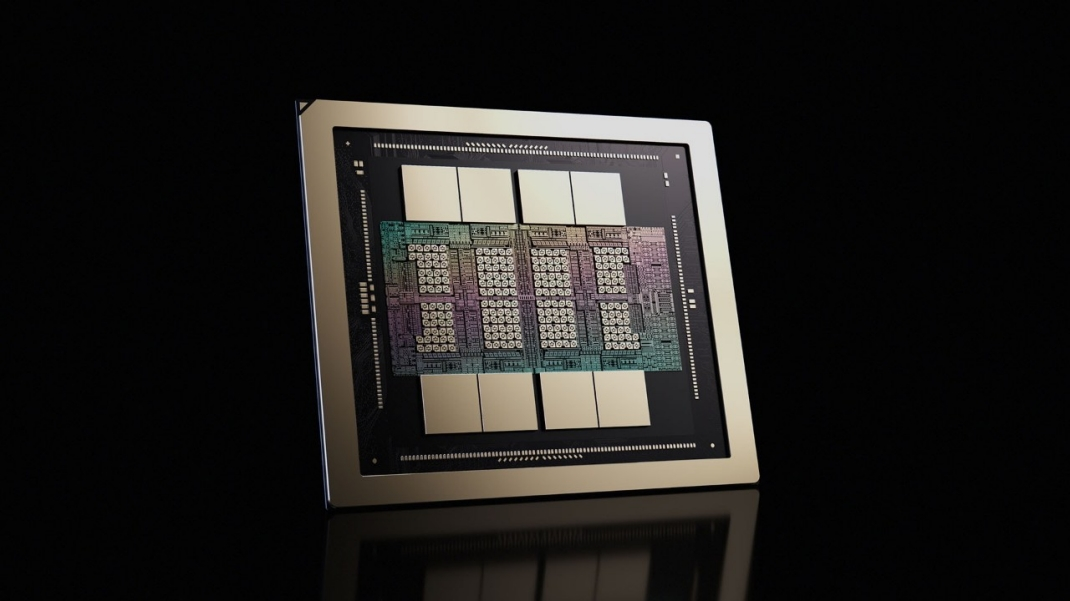

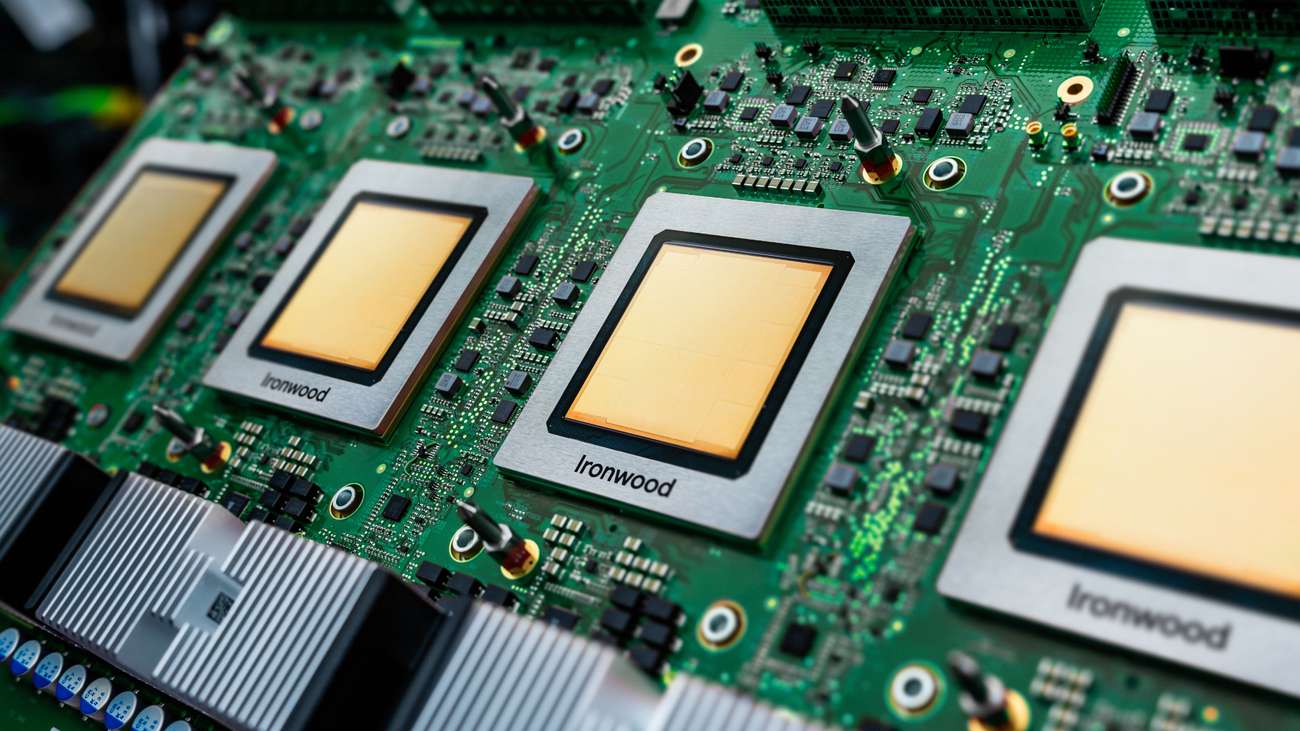

Google Ironwood (TPU v7): Google released its seventh-generation TPU in November 2025, a decade after making its first custom ASIC for AI in 2015. Over 75 percent of Google's Gemini model computations now run on internal TPUs. In November 2025, Anthropic committed to hundreds of thousands of Trillium TPUs in 2026, scaling toward one million chips by 2027, in what Google described as the largest TPU deal in its history. Midjourney and Safe Superintelligence (Ilya Sutskever's company) are among other prominent TPU customers.

Amazon Trainium 2: AWS filled its largest AI data center with half a million Trainium2 chips for Anthropic's model training. The chips are now exposed externally via AWS instances, and Amazon claims up to 50 percent cost savings over GPUs for certain workloads. AWS's Graviton5 CPU, built on 3nm technology, delivers 25 percent better performance than its predecessor for general tasks.

Meta MTIA: Meta's Training and Inference Accelerator is designed specifically for the company's recommendation systems, content ranking, and ad targeting on Facebook, Instagram, and WhatsApp. Meta reports a 44 percent total cost of ownership reduction versus GPUs for these workloads, saving billions of dollars annually at Meta's scale. A separate Meta training chip is in testing with mass production targeted for 2026. Meta's overall CapEx guidance of $60 to $72 billion for 2025 was still predominantly Nvidia GPUs, but in October 2025 The Information and Reuters confirmed Meta was in advanced talks with Google for a multibillion-dollar TPU deployment beginning mid-2026.

Microsoft Maia: Microsoft's Maia 200, delayed from 2025 to 2026, is now shipping and is built on TSMC 3nm with 216GB of HBM3e. Internal usage currently powers Azure OpenAI Services and Copilot workloads. Still, 70 percent of Azure AI workloads run on Nvidia, reflecting that custom silicon supplements rather than replaces for Microsoft's customer-facing products.

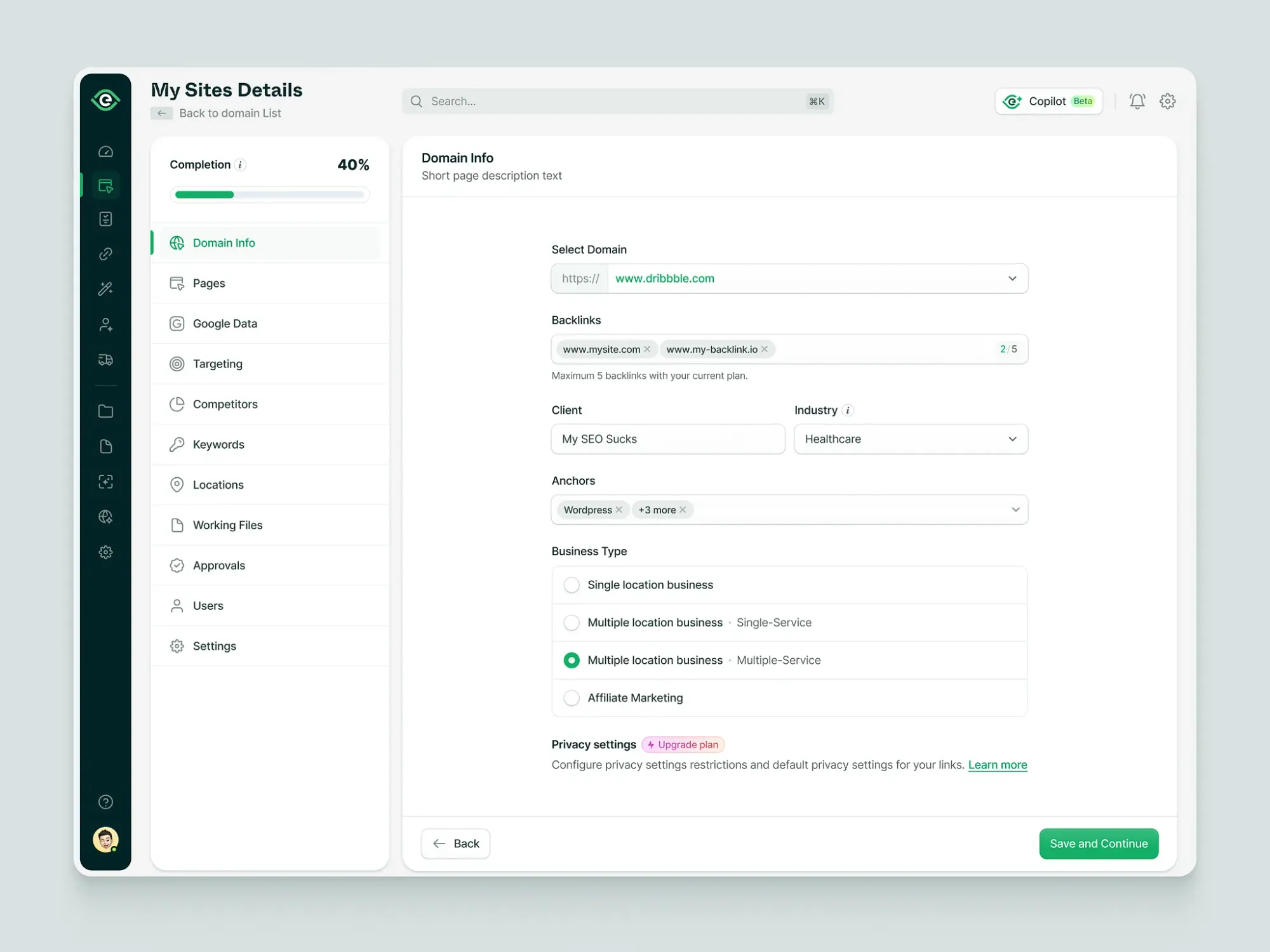

| Company | Custom chip | Current Nvidia dependence | Notable development |

|---|---|---|---|

| TPU Ironwood (v7) | Moderate (TPUs handle 75%+ of internal AI) | Anthropic 1M TPU deal | |

| Amazon | Trainium 2 / Inferentia | High (external workloads still Nvidia-heavy) | 500K chips for Anthropic training |

| Meta | MTIA Artemis | High ($60-72B CapEx mostly Nvidia) | TPU talks for Llama inference |

| Microsoft | Maia 200 | Very high (70% Azure AI on Nvidia) | Delayed, now shipping |

| Tesla / xAI | AI5 (Terafab) | Still high (Musk confirmed large Nvidia orders) | Small batch late 2026 |

The pattern is consistent: custom silicon works well for internal workloads with predictable patterns. Google running Gemini queries, Amazon running Alexa, Meta running recommendation systems, all share the characteristic of high-volume, stable, well-understood compute demand that justifies the upfront engineering cost of a custom ASIC. Nvidia's GPUs remain dominant for general-purpose workloads, new model training, and any deployment that requires flexibility across clouds, on-premises systems, and edge devices.

The AI data center total addressable market is expected to grow roughly fivefold, from $242 billion in 2025 to more than $1.2 trillion by 2030. As the market grows, custom silicon is expected to normalize Nvidia's share toward 75 percent from over 85 percent currently. But those changes are likely to be gradual.

The CUDA Moat, Honestly Assessed

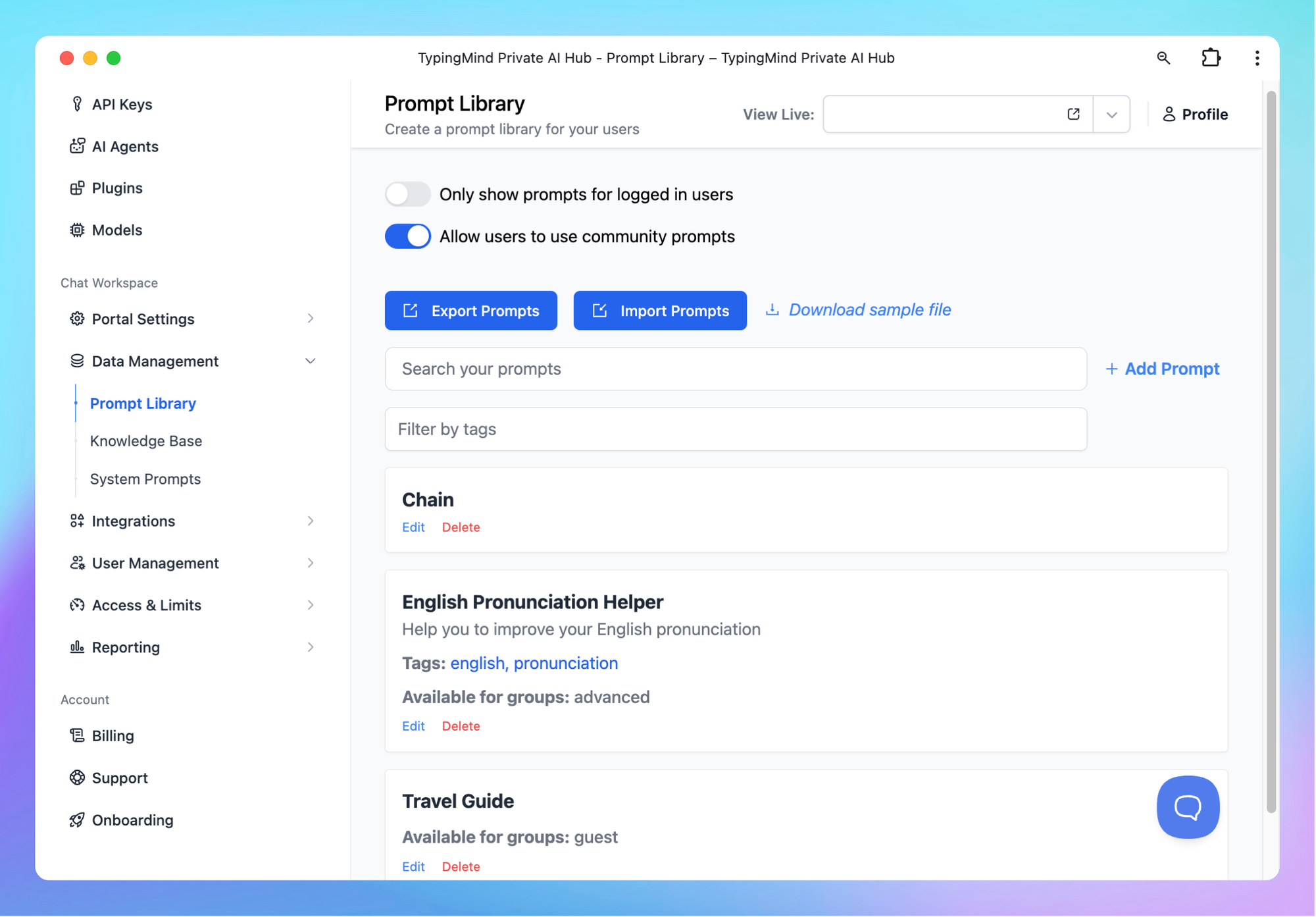

Nvidia's strongest competitive defense is not hardware performance. It is the CUDA software ecosystem, which is 20 years old, sits inside every major cloud, and has been the default programming environment for AI research for the better part of a decade.

Huang described it to analysts as a flywheel: a large installed base attracts developers, developers create new algorithms, those breakthroughs open new markets, new markets expand the installed base. CUDA's developer activity is estimated at 10 times that of its nearest competitor.

The moat has real depth, but it is not impenetrable. Stanford's CS229, the foundational machine learning course, added JAX and TPU as the default framework in Winter 2025. MIT, Berkeley, and CMU followed. Google's CUDA competitor, Triton, is gaining traction among frontier AI researchers. The educational pipeline is slowly shifting.

More immediately, the Groq acquisition closed one of the most persistently effective arguments against Nvidia's architecture. Groq's LPU was designed specifically to address GPU inefficiency in low-latency inference, which is the workload pattern that drives most of the economics in a production AI deployment. By acquiring Groq and integrating the LPU alongside Vera Rubin, Nvidia addressed the competitive argument without conceding the point.

Nvidia's response to every competitive pressure has been the same: accelerate the roadmap. Vera Rubin ships in 2026. Vera Rubin Ultra ships in 2027. Feynman in 2028. The cadence makes it structurally difficult for competitors to match current-generation performance because by the time they do, the next generation is already in customer hands.

Who Controls the AI Brain?

The honest answer is that Nvidia controls the AI brain right now, by a large margin, with real visibility into continuing to do so through at least 2027.

The $1 trillion in purchase orders is not a marketing number. It is a documented demand signal across hyperscalers, sovereign AI programs, enterprise deployments, and AI-native companies that have nowhere else to go for the combination of training performance, flexibility, and software ecosystem that Nvidia provides.

Terafab does not threaten that position on any near-term timeline. A facility that begins small-batch production in late 2026 and targets volume production in 2027 is not a 2026 competitive factor in the AI chip market. If Terafab executes, it becomes relevant to Musk's own companies first, and to the broader market only after years of production scaling. Tesla's history with analogous manufacturing ambitions warrants skepticism about the execution timeline, even if the strategic rationale is sound.

The more credible medium-term threat to Nvidia's share comes from the hyperscalers, who collectively represent the largest share of Nvidia's customer base and are systematically reducing their dependence on Nvidia for their most predictable, high-volume internal workloads. As custom silicon at Google, Amazon, Meta, and Microsoft matures, Nvidia will serve a growing market with a declining share of the largest customers' total compute spending.

The structural question for Nvidia is not whether Terafab can match Vera Rubin. It is whether the CUDA ecosystem and the annual cadence of architectural advancement can maintain Nvidia's relevance for the workloads that custom silicon handles poorly: new model training, flexible multi-cloud deployment, rapid iteration on new architectures, and the long tail of enterprise and research workloads that do not have the volume to justify custom chip development.

On that question, the 20-year investment in CUDA and the company's software layer suggest Nvidia's position is durable even in a scenario where it loses significant share of hyperscaler internal inference workloads. The company is expanding from chip supplier to system architect, storage vendor, networking layer, orchestration software, and agentic platform provider. Huang said at GTC that Nvidia is no longer competing chip-to-chip. It is competing system-to-system, rack-to-rack, and platform-to-platform.

Whether Terafab ultimately matters depends on execution timelines and capital that do not yet exist. Whether Nvidia's $1 trillion forecast materializes depends on demand conditions that are already documented. Those are very different levels of certainty, and they should be treated as such.

Frequently Asked Questions

What is Terafab and when will it be operational?

Terafab is a joint chip fabrication facility announced by Elon Musk on March 21, 2026, as a venture between Tesla, SpaceX, and xAI. It will be located near Tesla's Gigafactory in Austin, Texas, and is designed to produce custom AI chips at 2-nanometer process nodes. Small-batch production of Tesla's AI5 chip is targeted for late 2026. Volume production is projected for 2027. The $20 to $25 billion facility has no confirmed construction timeline for full-scale operations, and the full stated ambition of one terawatt of annual AI compute capacity does not have a completion date attached.

Why did Jensen Huang claim $1 trillion in Nvidia chip orders at GTC 2026?

At GTC 2026 on March 16, Huang stated that Nvidia sees at least $1 trillion in high-confidence purchase orders and demand forecasts for its Blackwell and Vera Rubin chip platforms through 2027. This doubled his $500 billion forecast from GTC 2025. The figure covers only those two platforms (not future Rubin Ultra or Feynman generations) and reflects documented orders rather than market projections.

What is Vera Rubin and how does it compare to Blackwell?

Vera Rubin is Nvidia's next-generation platform, consisting of seven chips across five rack-scale systems. Nvidia claims it delivers 10 times more performance per watt at one-tenth the cost per token compared to Grace Blackwell. The first Vera Rubin system is already running in Microsoft Azure. Full customer shipments begin in 2026. A Vera Rubin Ultra follows in 2027 and a Feynman platform is on track for 2028.

What is Nvidia's CUDA moat and why does it matter?

CUDA is a 20-year-old programming platform that allows developers to write software that runs efficiently on Nvidia GPUs. It is installed across every major cloud provider and used by nearly every AI research institution. Because AI researchers, engineers, and companies have built their workflows on CUDA, switching to a different hardware architecture requires substantial software rewriting. This creates significant inertia in Nvidia's customer base. CUDA's developer activity is estimated at 10 times that of its nearest competitor.

How are Google, Amazon, Meta, and Microsoft competing with Nvidia?

Each major hyperscaler has developed custom AI accelerators for their internal workloads: Google's TPU Ironwood, Amazon's Trainium 2, Meta's MTIA, and Microsoft's Maia 200. These chips are optimized for predictable, high-volume internal workloads such as recommendation systems, ad targeting, and internal AI inference, where the upfront cost of custom chip development is justified by the savings at scale. Meta claims 44 percent total cost of ownership reduction versus Nvidia GPUs for its core recommendation workloads. Custom silicon is expected to reduce Nvidia's market share from above 85 percent toward 75 percent over the coming years, but changes are expected to be gradual.

Can Terafab realistically challenge Nvidia's dominance?

Not on any near-term timeline. Terafab begins small-batch production of AI5 chips for Tesla's own use in late 2026. Full-scale capacity targeting Terafab's stated ambition of matching 70 percent of TSMC's global output from a single facility would require capital, construction timelines, and manufacturing expertise that have no precedent outside the established semiconductor foundry industry. Terafab's most credible near-term impact is on pricing pressure and competitive signaling, not direct hardware competition. If the facility executes across a 5 to 10 year timeline, it could supply Musk's companies with captive chip capacity that reduces their dependence on TSMC and Nvidia, which is the actual strategic objective.

Related Articles