On February 17, 2026, Nvidia and Meta announced what may be one of the largest technology supply agreements in corporate history.

The details were deliberately vague. No specific price tag was disclosed. But chip analyst Ben Bajarin of Creative Strategies, who was briefed on the deal, put it plainly:

"The deal is certainly in the tens of billions of dollars."

The partnership covers:

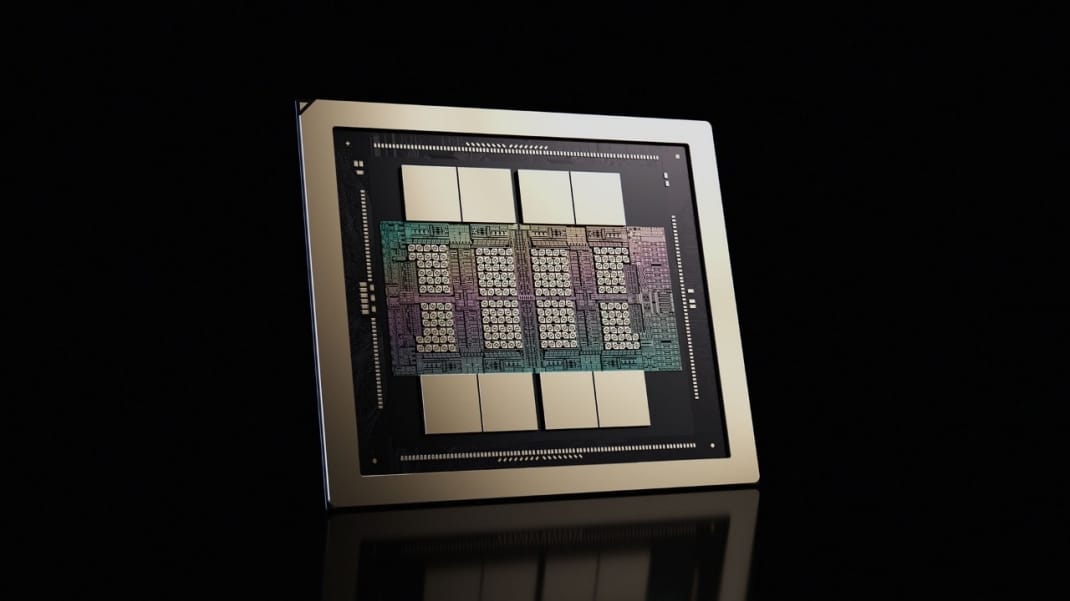

- Millions of Nvidia's Blackwell and Rubin GPUs

- The first large-scale standalone deployment of Nvidia's Grace CPUs in a customer's data centers

- Nvidia's Spectrum-X Ethernet networking fabric

- Plans for next-generation Vera CPUs in 2027

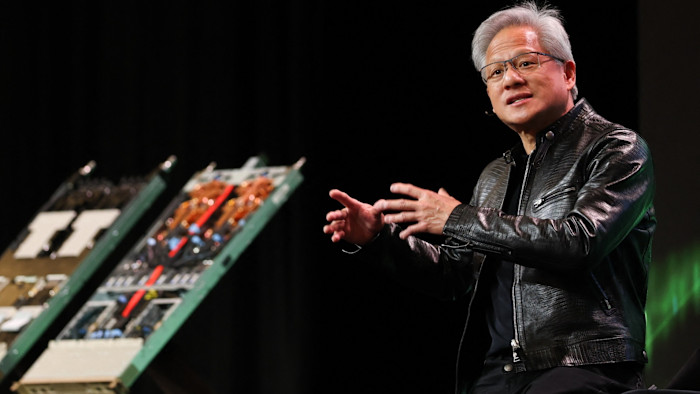

Jensen Huang, Nvidia's founder and CEO, described it as "deep codesign across CPUs, GPUs, networking and software."

But the announcement was not just about two companies making a deal. It was a signal about the trajectory of AI infrastructure investment and what it means for the products that billions of people use every day.

The Scale of What Is Actually Being Built

To understand the Meta-Nvidia deal in context, you need to look at the full picture of what the technology industry is spending on AI infrastructure in 2026.

The $690 Billion Buildout

The five largest hyperscalers (Amazon, Alphabet, Microsoft, Meta, and Oracle) are collectively on track to spend between $660 billion and $690 billion on capital expenditure this year, a 36 percent increase over 2025.

Roughly 75 percent of that spend, or approximately $495 billion to $520 billion, is directly tied to AI infrastructure: servers, GPUs, data centers, and networking.

Here is how each player's 2026 spending breaks down:

| Company | 2026 Capex Commitment |
|---|---|
| Amazon | ~$200 billion |
| Alphabet | $175–$185 billion |
| Microsoft | $120 billion+ |
| Meta | $115–$135 billion |
| Oracle | ~$50 billion |
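
As a quick consistency check, the table can be summed directly. This minimal Python sketch adds up the low and high ends of each company's range, then applies the 75 percent AI share and 36 percent growth rate cited above. It assumes the approximate figures ("~$200 billion", "~$50 billion") are exact and treats Microsoft's "$120 billion+" as exactly $120 billion, so the upper total is, if anything, an understatement.

```python
# 2026 capex guidance in billions of USD, copied from the table above.
# Assumptions: "~" figures are taken at face value, and Microsoft's
# "$120 billion+" is treated as exactly $120B, so the upper total may be low.
CAPEX_2026 = {
    "Amazon": (200, 200),
    "Alphabet": (175, 185),
    "Microsoft": (120, 120),
    "Meta": (115, 135),
    "Oracle": (50, 50),
}
AI_SHARE = 0.75    # "roughly 75 percent" of spend tied to AI infrastructure
YOY_GROWTH = 0.36  # "a 36 percent increase over 2025"

# Sum the low ends and the high ends of each company's range separately.
low = sum(lo for lo, _ in CAPEX_2026.values())
high = sum(hi for _, hi in CAPEX_2026.values())

# Portion of the combined range tied to AI infrastructure.
ai_low, ai_high = low * AI_SHARE, high * AI_SHARE

# Undo the 36% growth to back out the implied 2025 total.
base_2025_low = low / (1 + YOY_GROWTH)
base_2025_high = high / (1 + YOY_GROWTH)

print(f"Combined 2026 capex: ${low}B-${high}B")
print(f"AI-tied at 75%:      ${ai_low:.0f}B-${ai_high:.0f}B")
print(f"Implied 2025 total:  ${base_2025_low:.0f}B-${base_2025_high:.0f}B")
```

Run as written, it reports a combined $660 billion to $690 billion, matching the range quoted above; 75 percent of that is roughly $495 billion to $518 billion, and the 36 percent growth rate implies a 2025 base of roughly $485 billion to $507 billion.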

For context: the combined 2026 capex of these five companies is more than four times what the entire publicly traded U.S. energy sector spends to drill, extract, refine, and deliver oil and gas.

Meta's Specific Infrastructure Plans

Meta announced in January that it would spend between $115 billion and $135 billion in 2026, up from $72.2 billion in 2025.

That includes:

- Prometheus — a 1-gigawatt data center under construction in New Albany, Ohio

- Hyperion — a facility in Richland Parish, Louisiana, that could eventually scale to 5 gigawatts

- 30 total data centers planned, 26 of them in the United States

- A stated commitment to spend $600 billion on AI infrastructure by 2028

How It Is Getting Funded

The numbers are large enough that internal cash flow alone can no longer comfortably cover them.

Hyperscalers raised over $121 billion in new debt during 2025 alone. Projections suggest the technology sector may need to issue as much as $1.5 trillion in new debt over the coming years to keep pace.

Analysts at Barclays modeled Meta's free cash flow dropping by nearly 90 percent in 2026.

When Meta's CFO Susan Li was asked about the capital allocation tradeoffs on an earnings call, she was direct:

"The highest order priority is investing our resources to position ourselves as a leader in AI."

What Meta Is Actually Buying, and Why

The Nvidia deal is more than a procurement contract. It is an architectural commitment.

Meta's planned capital expenditures have risen from $35–$40 billion in 2024 to $115–$135 billion in 2026. Analysts estimate the Nvidia deal alone could be worth up to $50 billion over its multi-year term, which would make Meta one of Nvidia's most significant customers, accounting for an estimated 9 percent of Nvidia's revenue.
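
The growth implied by these figures is easy to compute. The sketch below uses only the numbers in this section; comparing range midpoints for the two-year multiple is a simplifying assumption for illustration, not how Meta reports its guidance.

```python
# Meta capex figures in billions of USD, as cited in the text.
META_CAPEX_2024 = (35, 40)    # 2024 planned range
META_CAPEX_2025 = 72.2        # 2025 spend
META_CAPEX_2026 = (115, 135)  # 2026 guidance range


def midpoint(rng):
    """Midpoint of a (low, high) range."""
    low, high = rng
    return (low + high) / 2


def pct_increase(old, new):
    """Percentage increase from old to new."""
    return (new / old - 1) * 100


# Year-over-year growth at each end of the 2026 guidance range.
yoy_low = pct_increase(META_CAPEX_2025, META_CAPEX_2026[0])
yoy_high = pct_increase(META_CAPEX_2025, META_CAPEX_2026[1])

# Two-year multiple, comparing range midpoints (an illustrative simplification).
two_year_multiple = midpoint(META_CAPEX_2026) / midpoint(META_CAPEX_2024)

print(f"2025 -> 2026: +{yoy_low:.0f}% to +{yoy_high:.0f}%")
print(f"2024 -> 2026 (midpoints): {two_year_multiple:.1f}x")
```

With these inputs, 2026 guidance works out to a 59 to 87 percent increase over 2025, and the midpoint of 2026 guidance is about 3.3 times the midpoint of the 2024 range.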

The Grace CPU Deployment: The Most Significant Detail

The GPU procurement makes headlines, but the technically significant element of this deal is the Grace CPU deployment.

Meta is becoming the first company to deploy Nvidia's Grace central processing units as standalone chips in its data centers, rather than pairing them with GPUs in the same server, as has been standard practice.

Bajarin explained why this matters:

"They're really designed to run those inference workloads, run those agentic workloads. Meta doing this at scale is affirmation of the soup-to-nuts strategy that Nvidia's putting across both sets of infrastructure: CPU and GPU."

What Else Is in the Deal

Spectrum-X Networking: Nvidia's Ethernet networking platform handles how GPUs communicate across large-scale data centers. Meta has adopted it across its entire infrastructure footprint. Networking has become a genuine bottleneck in large AI deployments: raw GPU performance means little if the chips cannot exchange data fast enough.

Confidential Computing: Meta has also adopted Nvidia Confidential Computing, a feature designed to protect user data privacy as AI workloads run on the hardware.

Multi-Generation Coverage: The deal spans current and future chip architectures. Zuckerberg confirmed Meta will use Nvidia's Vera Rubin chips to power what he calls a "personal superintelligence platform."

Nvidia claims the Vera Rubin platform can:

- Cut the cost of AI inference by up to tenfold compared with Blackwell

- Cut the number of GPUs needed to train models by up to fourfold

If those claims hold, the same hardware budget will buy dramatically more compute capability with each generation.
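
To see what Nvidia's claims would mean for a fixed budget, here is a back-of-the-envelope Python sketch. The dollar budget, the baseline cost per million tokens, and the cluster size are invented placeholders, not figures from Nvidia or Meta; only the 10x and 4x factors come from Nvidia's claims above, and those are best-case numbers.

```python
# Hypothetical placeholders, NOT published Nvidia or Meta figures.
BUDGET_USD = 1_000_000            # fixed inference budget
BASELINE_COST_PER_M_TOKENS = 2.0  # cost per million tokens on current hardware
TRAINING_GPUS_TODAY = 100_000     # GPUs needed to train some model today

# Nvidia's claimed best-case improvements for Vera Rubin.
CLAIMED_INFERENCE_COST_CUT = 10
CLAIMED_TRAINING_GPU_CUT = 4


def tokens_for_budget(budget_usd, cost_per_million_tokens):
    """How many tokens a budget buys at a given price per million tokens."""
    return budget_usd / cost_per_million_tokens * 1_000_000


tokens_today = tokens_for_budget(BUDGET_USD, BASELINE_COST_PER_M_TOKENS)
tokens_next = tokens_for_budget(
    BUDGET_USD, BASELINE_COST_PER_M_TOKENS / CLAIMED_INFERENCE_COST_CUT
)
training_gpus_next = TRAINING_GPUS_TODAY // CLAIMED_TRAINING_GPU_CUT

print(f"Tokens per budget today: {tokens_today:.2e}")
print(f"Tokens per budget if the claim holds: {tokens_next:.2e}")
print(f"Training GPUs: {TRAINING_GPUS_TODAY:,} -> {training_gpus_next:,}")
```

Because cost per token and tokens per dollar are reciprocals, a 10x cost cut translates directly into 10x more tokens for the same budget. Whether real deployments see anything close to that depends on workloads, utilization, and how the claims hold up in production.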

Meta's In-House Chip Ambitions Hit Turbulence

The scale of Meta's commitment to Nvidia tells a secondary story: its in-house chip program is running into trouble.

Meta has been developing its own AI silicon for years under a program called MTIA (Meta Training and Inference Accelerator). The first generation was designed for inference workloads. A second generation, intended to handle model training, had been targeting a broad rollout in 2026.

The Financial Times reported, citing anonymous sources, that Meta has encountered "technical challenges" with the new chips.

That raises an obvious question: if the in-house training chips were on track, why would the company simultaneously commit tens of billions of dollars to external GPU procurement?

Why Custom Silicon Has Not Replaced Nvidia

This pattern is not unique to Meta. Every major hyperscaler is pursuing its own silicon program:

- Google — TPUs

- Amazon — Trainium and Inferentia

- Microsoft — Maia

None has fully displaced Nvidia. The company holds an estimated 80 to 90 percent of the AI accelerator market, and by some counts as high as 95 percent.

Custom silicon tends to excel at specific workloads but struggles to match the generality, software ecosystem, and years of optimization that Nvidia has built into its platform. Zuckerberg's stated rationale for MTIA, that it would be more energy-efficient and better optimized for Meta's unique workloads, remains sound. The timeline for realizing those advantages has just shifted.

The AMD Deal, and Why Meta Is Diversifying Anyway

One week after the Nvidia announcement, Meta signed another major chip deal.

On February 24, Meta committed to deploying up to six gigawatts of AMD GPU infrastructure in what analysts described as a roughly $100 billion multi-year agreement.

Key terms:

- AMD's Instinct MI450-based GPUs and 6th Gen EPYC CPUs

- First gigawatt deployment shipments expected in the second half of 2026

- AMD issued Meta a performance-based warrant for up to 160 million shares of AMD common stock, vesting upon reaching specific shipment and technical milestones

Why This Deal Is Structurally Different From Nvidia

The AMD deal includes customized GPUs designed specifically for Meta's workloads. That customization is AMD's competitive pitch. Bajarin noted: "It's a good way for them to win some of these deals."

AMD's Helios platform, which underpins the agreement, represents the first meaningful large-scale competition to Nvidia's Grace Blackwell systems.

The broader implication is straightforward: no single supplier relationship is sufficient at Meta's scale. Every delay in chip delivery affects the timeline of the products the company is trying to ship. AMD's growing viability as a second option does not threaten Nvidia's dominance in the near term, but it gives hyperscalers more negotiating leverage and more resilience against supply disruptions.

The Strategic Bet: Superintelligence as a Product

To understand why Meta is spending this aggressively, you need to understand what the company is actually building toward.

Meta Superintelligence Labs

In 2025, Zuckerberg reorganized Meta's research operations into a new division called Meta Superintelligence Labs, led by Alexandr Wang, the former CEO of Scale AI, who joined Meta after it invested roughly $14.3 billion for a 49 percent stake in Scale.

The mandate is not a modest research goal. It is a corporate mission statement: build toward superintelligence.

The Product Roadmap

Here is what is currently in development or recently launched under that mission:

- Llama 4 (live): Powers the Meta AI assistant across Facebook, Instagram, WhatsApp, and Messenger.

- "Avocado" (in development): A proprietary model targeting advanced coding and complex problem-solving. Unlike Llama, this one will not be open-sourced.

- "Mango" (in development): A video generation model to power generative AI video features across Meta's platforms.

- Ray-Ban Meta Glasses (live): Evolved into "AI-First" devices with real-time translation, object recognition, and voice-activated Meta AI.

- "Orion" AR Glasses (anticipated late 2026): Meta's full AR glasses, moving beyond the current smart glasses category.

The Agentic AI Direction

The most commercially significant near-term thread is agentic AI.

Meta has been testing business agents that handle customer support, product questions, and commerce directly within messaging platforms. On the consumer side, the company envisions AI assistants that take actions on behalf of users: booking reservations, managing schedules, navigating apps.

This is the shift from an AI that answers questions to an AI that does things. It is also a workload that needs far more compute per interaction than today's question-and-answer assistants, which is precisely why the Grace CPU deployment and the Vera Rubin roadmap exist.

What the Power Grid Has to Say About All of This

There is one constraint that money alone cannot immediately solve, and it is shaping every timeline in this arms race: electricity.

AI data centers are extraordinarily power-intensive. Microsoft currently holds $80 billion in Azure orders it cannot fill, not for lack of chips but because it cannot source enough electricity to run the GPUs already sitting in its warehouses. Satya Nadella has acknowledged this power constraint publicly.

The demand exists. The capital exists. The silicon exists. The power does not, at least not yet.

Meta's Power Strategy

Meta's Prometheus and Hyperion data centers are being built with energy efficiency as a core design principle.

- The 1-GW Prometheus site in Ohio is being expedited, reportedly using tents to accelerate construction

- The 5-GW Hyperion facility in Louisiana, if fully built and run at full load, would use roughly 44 terawatt-hours of electricity a year, about as much as four million average American homes

- States are already considering restrictions on new data center construction as grid operators grapple with surging demand
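
Gigawatt ratings describe power capacity, not annual energy use. A minimal Python sketch for converting one into the other, assuming continuous operation at a chosen load factor and an average U.S. home using about 10,500 kWh a year (an assumption based on EIA averages, which have recently fallen between roughly 10,000 and 11,000 kWh):

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours, ignoring leap years

# Assumption: an average U.S. home uses roughly 10,500 kWh a year.
AVG_US_HOME_KWH_PER_YEAR = 10_500


def annual_twh(gigawatts, utilization=1.0):
    """Energy used in a year at a given average load factor, in TWh."""
    gwh = gigawatts * HOURS_PER_YEAR * utilization
    return gwh / 1_000  # 1 TWh = 1,000 GWh


def equivalent_homes(gigawatts, utilization=1.0):
    """Number of average U.S. homes with the same annual consumption."""
    kwh = annual_twh(gigawatts, utilization) * 1e9  # 1 TWh = 1e9 kWh
    return kwh / AVG_US_HOME_KWH_PER_YEAR


for site, gw in [("Prometheus", 1), ("Hyperion, full build", 5)]:
    print(f"{site}: {annual_twh(gw):.1f} TWh/yr, "
          f"~{equivalent_homes(gw) / 1e6:.1f} million homes")
```

At full load, the 1-GW Prometheus site comes to about 8.8 TWh a year (roughly 0.8 million homes) and a fully built 5-GW Hyperion to about 43.8 TWh (roughly 4.2 million homes). Real facilities rarely run at 100 percent, so passing a `utilization` below 1.0 gives a more conservative estimate.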

The shift to Grace CPUs is partly a performance-per-watt decision: Nvidia positions Grace's Arm-based design as more power-efficient than traditional x86 server CPUs for inference workloads. The Vera Rubin roadmap rests on a similar pitch, its claimed ability to deliver the same inference capacity at a fraction of the energy cost of current systems.

What the Arms Race Means for the AI Tools You Actually Use

All of this infrastructure investment ultimately flows into the products that real users interact with. The connection between a multibillion-dollar Nvidia deal and your Meta AI experience in WhatsApp is not abstract.

For Everyday Users

More compute translates directly into:

- Faster AI response times across Meta's apps

- Lower inference costs that make AI features economically viable to deploy at scale

- More capable underlying models powering the assistant

- More aggressive feature rollouts across Facebook, Instagram, WhatsApp, and Meta hardware

The agentic features in development require substantially more compute per interaction than a simple text reply. An AI agent that books a restaurant reservation is running a more complex, multi-step operation than answering "what's the weather today." Building the infrastructure to run those interactions for billions of users is exactly what the current capex cycle funds.
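
To make the compute difference concrete, here is a toy Python sketch contrasting a single-call reply with a multi-step agent. `StubModel`, `answer_question`, `run_agent`, and the step names are hypothetical illustrations, not Meta APIs, and counting prompt words is only a crude stand-in for tokens processed.

```python
from dataclasses import dataclass


@dataclass
class StubModel:
    """Hypothetical stand-in for a language model that only tracks usage."""
    calls: int = 0
    prompt_words: int = 0  # crude proxy for input tokens processed

    def generate(self, prompt: str) -> str:
        self.calls += 1
        self.prompt_words += len(prompt.split())
        return "ok"


def answer_question(model: StubModel, question: str) -> str:
    # A simple reply: one model call on one short prompt.
    return model.generate(question)


def run_agent(model: StubModel, request: str, steps: list[str]) -> str:
    # A multi-step agent: one model call per step, and each call re-reads
    # the growing history of everything done so far.
    history = request
    for step in steps:
        result = model.generate(f"{history}\nNext: {step}")
        history += f"\n{step}: {result}"
    return history


simple, agent = StubModel(), StubModel()
answer_question(simple, "what's the weather today")
run_agent(agent, "book a table for two at 7pm",
          ["search restaurants", "check availability",
           "reserve table", "confirm with user"])

print(f"simple reply: {simple.calls} call(s), {simple.prompt_words} words read")
print(f"agent:        {agent.calls} call(s), {agent.prompt_words} words read")
```

The simple reply makes one model call; the four-step agent makes four, and because each call re-reads the growing history, the words processed grow faster than the number of steps. Real agents also call external tools and may retry failed steps, which adds further cost.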

For Creators and Small Businesses

Meta's ad system is already being optimized by AI to the point where the company can generate ad creative automatically, matching images and copy to individual user profiles without manual input from advertisers.

More compute enables:

- More complex optimization across larger audience segments

- More personalized ad creative generated at scale

- Higher effective rates for the same ad inventory

For Developers

The open-source ecosystem around Llama reached 1 billion downloads by March 2025, making it one of the most widely adopted AI model families in history.

The decision to keep the forthcoming Avocado model proprietary marks a significant departure. It reflects the competitive pressure of an arms race in which frontier models have become too expensive to build to give away: at this level of infrastructure investment, open-sourcing them no longer makes obvious strategic sense.

The Unanswered Question

Behind every announced number, every data center groundbreaking, and every chip deal, the central question for every company in this race remains unanswered: will the returns justify the investment?

The Revenue Gap

Goldman Sachs projects total hyperscaler capex from 2025 through 2027 will reach $1.15 trillion.

OpenAI, which helped catalyze this buildout, ended 2025 with approximately $20 billion in annual recurring revenue, a threefold increase from the prior year. That growth is impressive. But the combined revenues of all pure-play AI model companies remain a fraction of the infrastructure being built on their behalf.

The Financial Strain

- Analysts at Barclays modeled Meta posting negative free cash flow in 2027 and 2028

- Morgan Stanley projects total hyperscaler borrowing could top $400 billion in 2026 alone

- The five largest tech companies are on pace to spend approximately 90 percent of their operating cash flow on capex this year, a ratio closer to that of capital-intensive industrial companies than to the asset-light software businesses they were a decade ago
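
Free cash flow is operating cash flow minus capital expenditure, so the capex-to-cash-flow ratio determines how much is left over. A minimal Python sketch with a hypothetical, constant $100 billion of operating cash flow (not any company's actual figure):

```python
def free_cash_flow(operating_cash_flow: float, capex: float) -> float:
    """Free cash flow: operating cash flow minus capital expenditure."""
    return operating_cash_flow - capex


OCF = 100.0  # hypothetical operating cash flow, in $B, held constant

# Hypothetical capex-to-OCF ratios: a lighter year, the ~90% ratio cited
# above, and a year in which capex exceeds operating cash flow.
for ratio in (0.50, 0.90, 1.10):
    fcf = free_cash_flow(OCF, ratio * OCF)
    print(f"capex at {ratio:.0%} of OCF -> FCF ${fcf:.0f}B")
```

Holding operating cash flow flat, moving from spending half of it on capex to 90 percent cuts free cash flow by 80 percent, and any ratio above 100 percent turns free cash flow negative, which is the scenario the Barclays model describes for Meta in 2027 and 2028.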

Two Ways to Read It

Investors watching this have two legitimate interpretations.

The bull case: Companies building the most compute capacity now are securing durable, asymmetric advantages that will compound for years — priority chip access, faster model iteration, developer lock-in, and the ability to deploy AI features competitors cannot afford to run at scale.

The bear case: Spending is outpacing realistic demand, and the companies building most aggressively will face years of infrastructure overhang before the AI revenue thesis actually materializes.

The Meta-Nvidia deal does not settle that question. What it does make clear is that Meta has committed to the bull case so completely that the caution of the bear case is no longer a live option for the company.

FAQ

What is the Meta-Nvidia deal and how large is it?

Announced on February 17, 2026, the Meta-Nvidia deal is a multiyear, multigenerational strategic partnership covering the large-scale deployment of millions of Nvidia Blackwell and Rubin GPUs, the first-ever large-scale standalone deployment of Nvidia Grace CPUs, Nvidia Spectrum-X Ethernet networking, and future Vera CPU deployments planned for 2027. The precise value was not disclosed, but analysts estimate it could be worth up to $50 billion over its term, which would make Meta one of Nvidia's most significant customers.

Why is Meta spending so much on AI infrastructure in 2026?

Meta announced capital expenditure guidance of $115 billion to $135 billion for 2026, up from $72.2 billion in 2025. The company is building toward what Zuckerberg has described as "personal superintelligence," which requires massive compute for training advanced models and for running inference at the scale of Meta's billions of daily users. The company also has a stated commitment to spend $600 billion on AI infrastructure by 2028.

What is the AI infrastructure arms race?

The AI infrastructure arms race refers to the parallel escalation of capital spending among the world's largest technology companies on data centers, GPUs, networking, and related hardware. The five largest hyperscalers — Amazon, Alphabet, Microsoft, Meta, and Oracle — are collectively expected to spend $660 to $690 billion on capital expenditure in 2026, with roughly 75 percent of that directed at AI infrastructure. Each company faces a dynamic where slowing down risks falling behind competitors in compute capacity, model capability, and developer ecosystem adoption.

How will Meta's chip investment affect the products users see?

More infrastructure investment translates into faster AI response times, lower costs that make AI features viable to deploy at scale, more capable underlying models, and more aggressive feature rollouts across Facebook, Instagram, WhatsApp, and Meta's hardware products. The agentic AI features Meta is developing — including AI that can take actions on a user's behalf — require substantially more compute per interaction than current systems and depend on the infrastructure being built now.

What is Nvidia's Grace CPU and why does Meta's deployment matter?

Nvidia's Grace central processing unit is an Arm-based processor designed to run AI inference and agentic workloads. Grace has previously been deployed mainly as a companion chip alongside Nvidia GPUs in servers; Meta is the first company to deploy it as a standalone chip at large scale. The deployment matters because inference, the compute consumed each time a user interacts with an AI product, is the dominant AI operating cost at Meta's scale, and Nvidia positions Grace as significantly more power-efficient than traditional x86 processors for these workloads.

Is Meta also buying chips from AMD?

Yes. One week after the Nvidia deal, Meta signed a roughly $100 billion multi-year agreement with AMD to deploy up to six gigawatts of GPU infrastructure using AMD's Instinct MI450-based GPUs. The deal includes customized chips designed specifically for Meta's workloads, which AMD offered as a competitive differentiator. As part of the agreement, AMD issued Meta a performance-based warrant for up to 160 million shares of AMD common stock. The two deals reflect Meta's strategy of supplier diversification at this scale of spending.

What is Meta Superintelligence Labs?

Meta Superintelligence Labs is a division created in 2025 to consolidate Meta's AI research and infrastructure teams under a single mission focused on building toward superintelligence. It is led by Alexandr Wang, the former Scale AI CEO who joined Meta as part of its roughly $14.3 billion investment in Scale AI. The division oversees development of the Llama model series, the forthcoming proprietary Avocado model, and the infrastructure teams responsible for running AI at Meta's scale.

What are the risks of the AI infrastructure arms race for investors and users?

For investors, the primary risk is that infrastructure spending outpaces AI revenue growth. Analysts at Barclays have modeled Meta posting negative free cash flow in 2027 and 2028. The five hyperscalers are collectively expected to spend approximately 90 percent of their operating cash flow on capex in 2026, forcing heavy reliance on debt markets. For users, the main risk is concentration: as the cost of building frontier AI infrastructure becomes prohibitive for anyone outside a small group of hyperscalers, the competitive dynamics that drive innovation narrow considerably.
