Grammarly has been trying to help people become better writers by offering feedback modeled after the editorial sensibilities of Stephen King, Kara Swisher, Neil deGrasse Tyson, and dozens of other well-known names. The catch, and it is a significant one, is that none of those people agreed to be part of it.

The company's "Expert Review" feature rolled out in August 2025 as one piece of a larger AI update, and it has since drawn serious criticism from journalists, academics, historians, and legal experts. The feature shows up in the sidebar of Grammarly's writing assistant, where it presents revision suggestions described as coming "from the perspective" of named experts. Wired reported that the feedback is written as though it comes directly from well-known authors, whether those authors are living or dead. The Verge went further and found that the tool also puts words in the mouths of real, actively working journalists at outlets like The New York Times, The Atlantic, Bloomberg, Wired itself, and Gizmodo.

None of the people named were involved in building the feature, none were contacted beforehand, and none gave the company permission to use their identities this way.

What the Expert Review Feature Actually Does

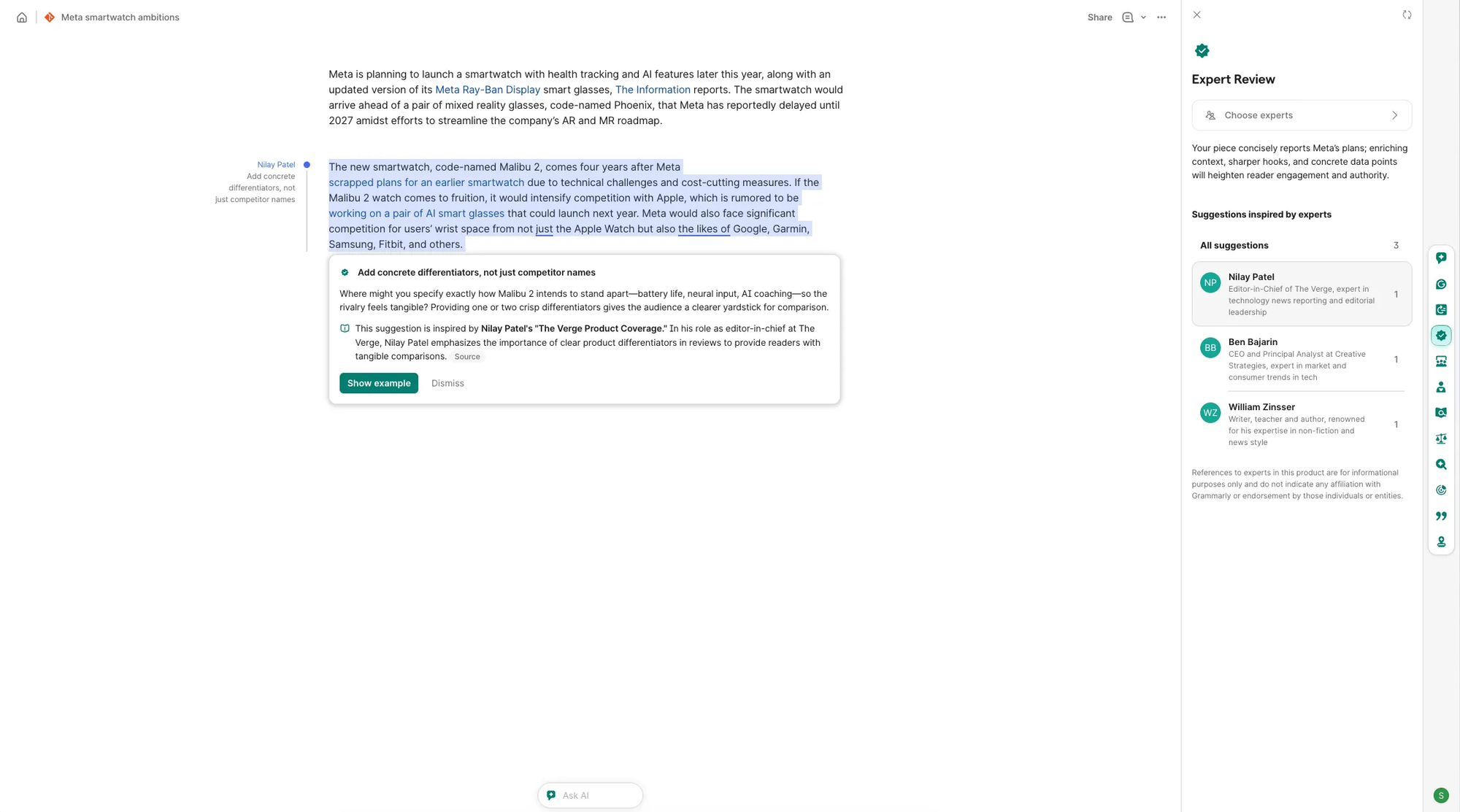

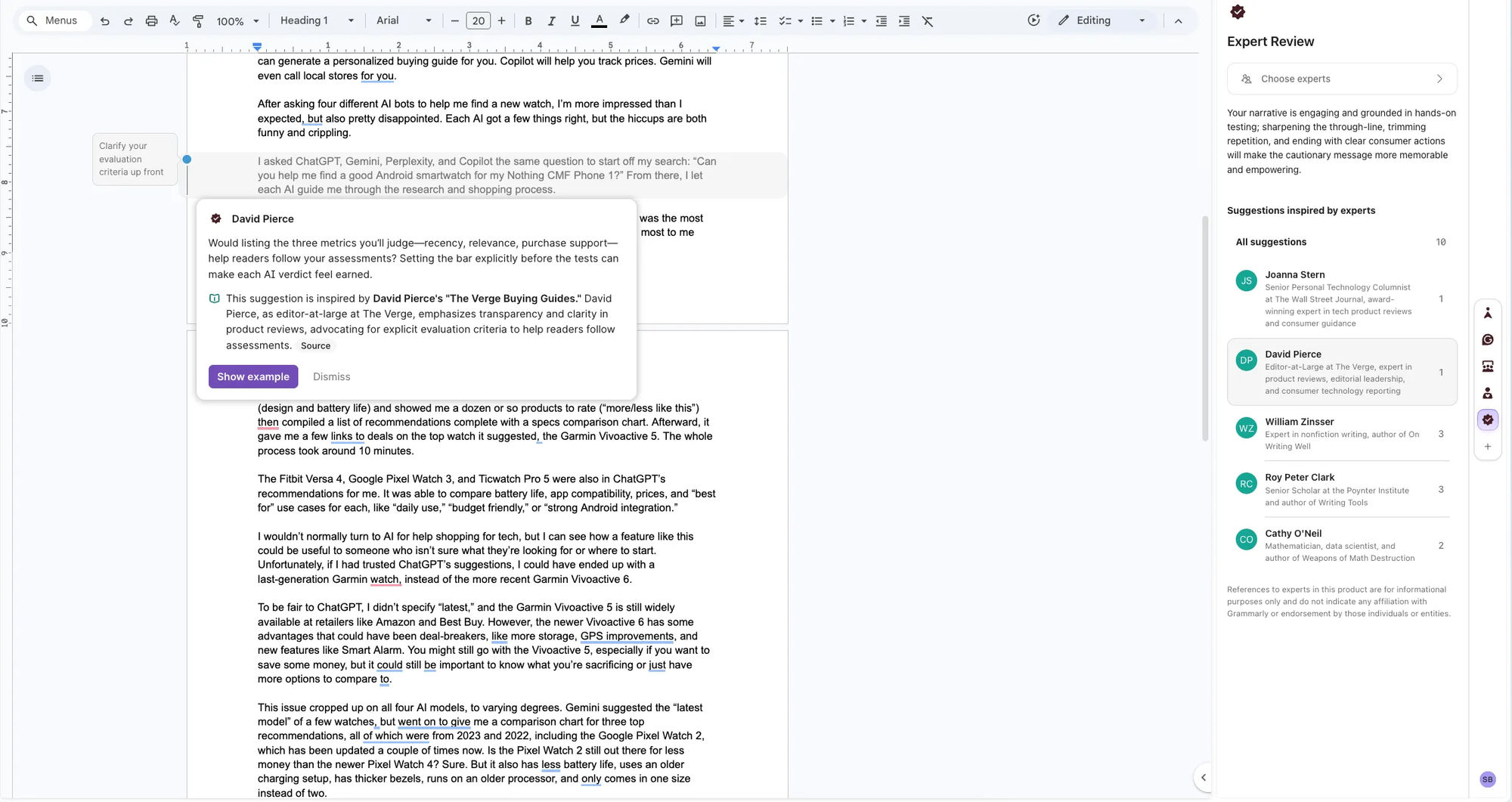

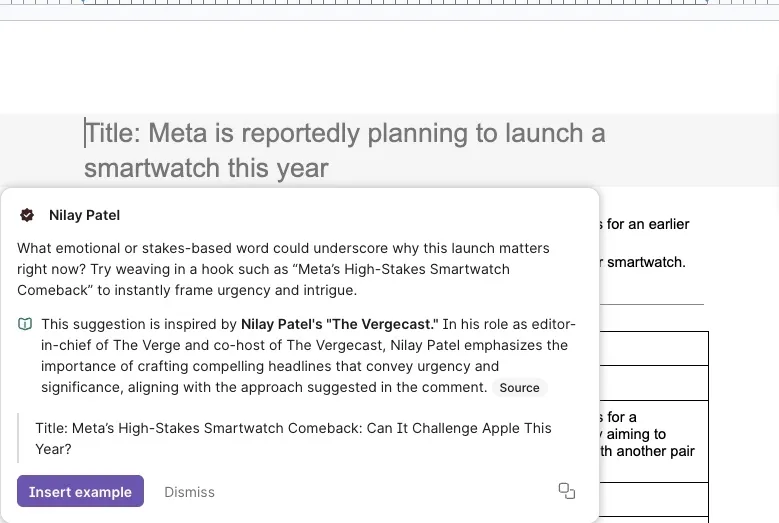

On the surface, the feature is not complicated. When someone is editing a document in Grammarly, they can open the Expert Review panel and ask for feedback that is supposedly inspired by a specific well-known figure. The AI behind the feature has been trained on publicly available writing from those individuals, and it uses that training to produce stylistic and analytical suggestions that Grammarly frames as reflecting that person's point of view.

In practice, someone working on a long essay might get a suggestion telling them to "add ethical context like Casey Newton" or to "leverage the anecdote for reader alignment like Kara Swisher." When The Verge tested the feature within its own newsroom, it found that the tool was generating comments attributed to Nilay Patel, the site's editor-in-chief, along with senior staff members David Pierce, Sean Hollister, and Tom Warren. Not one of those people had any knowledge that the feature even existed.

Patel did not hold back when he found out. He posted on Bluesky that he was "almost more offended by the suggestion that I would give this shitbox edit than having my identity stolen." He also made a point that is worth taking seriously: you cannot accurately understand how an editor works just by reading the pieces they have published, because most of that work has gone through other editors before it reaches readers.

That observation goes to the heart of what is wrong here. What Grammarly has actually built is not an editorial review in any meaningful sense. It is a system that picks up on surface-level patterns in publicly available text and then presents those patterns as if they represent the genuine, considered professional judgment of a real human being.

Grammarly's Explanation Does Not Really Answer the Problem

When The Verge asked about the feature, Alex Gay, who serves as vice president of product and corporate marketing at Grammarly's parent company Superhuman, said that the named experts were included "because their published works are publicly available and widely cited."

Grammarly's own support page says something similar: "References to experts in Expert Review are for informational purposes only and do not indicate any affiliation with Grammarly or endorsement by those individuals or entities."

It is worth reading that disclaimer carefully, because it reveals more than it conceals. What it is really saying is that these experts are having their credibility and professional reputations attached to a commercial product, on a paid subscription tier, without any compensation, without their agreement, and without any formal connection to the company at all.

Historian Claire E. Aubin put the problem directly when she spoke to Wired: "These are not expert reviews, because there are no experts involved in producing them."

That is not just an argument over words. The way the feature is framed has real consequences for how users understand and act on the feedback they receive. If a writer gets a comment that appears to carry the authority of a respected journalist and they adjust their work based on that comment, they are doing so based on a false impression of where that guidance came from. The credibility being borrowed belongs to someone who never offered it, and the actual advice is coming from a language model.

The Situation With David Abulafia Takes This to a Different Level

Things became considerably more troubling when historians noticed that Grammarly's list of experts included David Abulafia, a professor of Mediterranean history at Cambridge.

Professor Abulafia died on January 24, 2026, which was less than two months before the feature started receiving widespread public attention.

The feature had been running since August 2025, which means that users could request AI-generated simulations of his academic judgment while he was still alive and could still do so after his death, with no announcement, no update, and no outreach to his family or estate.

Verena Krebs, a medieval historian at Ruhr-University Bochum, was one of the first people to raise this publicly. The reaction from the academic community was pointed, and the word "necromancy" came up repeatedly on social media.

Vanessa Heggie, a historian of science and medicine at the University of Birmingham, described the practice as "obscene" and noted on LinkedIn that deceased scholars were being used to critique users' writing "without anyone's explicit permission." Aubin said it ranked among the "most cursed" things she had come across during her career in academia.

This case also helps clarify what makes this situation different from the broader debate about AI training data. The issue is not only that Grammarly trained its models on publicly available text without getting explicit permission, which is a common practice across the AI industry and a legally unresolved one. The deeper issue is that the company then took the output of that training and packaged it as a named expert persona that users could access through a paid product. The person's identity, reputation, and professional standing became product features that Grammarly is effectively selling.

The Legal Dimension Is Real and Unresolved

There is genuine legal exposure here, even though how any future litigation might play out remains an open question.

In most U.S. states, right of publicity laws give individuals protection against the unauthorized commercial use of their name, likeness, and identity. California's statute is one of the more comprehensive ones and explicitly covers digital simulations. New York passed a digital replica law of its own in 2024. Given that Expert Review is available as part of a paid product tier, an argument that Grammarly is commercially exploiting people's identities without their consent is not a difficult one to make.

Copyright is a separate issue that sits alongside the publicity question. Using publicly available writing as training data is legally contested territory, with major cases still moving through the courts. But producing output that is specifically framed as representing how a named individual would professionally respond to a piece of writing raises an additional set of questions around false endorsement and misrepresentation, and those questions exist independently of how the training data issue ultimately gets resolved.

For people who have died, any legal claims would need to be pursued by their estates. The situation involving David Abulafia is a particularly clear-cut example because the sequence of events is so straightforward: Grammarly created an AI simulation of a living scholar, that scholar died, and the simulation continued running without any acknowledgment of what had happened.

The company has not put out any detailed public statement dealing with the legal aspects of the feature, and that silence is probably not sustainable over the long term.

Grammarly, Operating Now as Superhuman, Is Making a Large Bet on AI Identity

Some context about where Grammarly is as a company helps explain how this feature came to exist.

Grammarly rebranded itself as Superhuman in October 2025, as part of an effort to reposition itself as a full AI productivity platform rather than a tool primarily known for catching grammar errors. The rebrand generated skepticism for a couple of reasons: one is that Superhuman is already the name of a well-established email app, and another is that walking away from the Grammarly brand, which had strong recognition built over many years, struck a number of observers as a decision that created more confusion than it resolved.

Expert Review fits into that broader ambition. The company is trying to be something more substantial than a grammar checker, and it wants to present itself as a serious editorial collaborator. From a pure product marketing standpoint, attaching well-known names to an AI feature is an understandable move, because it makes the feature feel more credible, more human, and more worth paying for.

The problem is that the credibility being used to sell the feature belongs to people who never agreed to let it be used that way.

Why Creators and Writers Need to Be Paying Attention to This

The issues raised by this controversy are not limited to Grammarly as a company.

Grammarly has more than 30 million daily users, and many of them are writers, marketers, students, and professionals who rely on it regularly as part of how they work. If those users are receiving writing guidance that is presented under a real person's name, and that person was never consulted about it, then the entire feedback chain rests on a false premise.

Beyond that, other AI companies are watching what Grammarly is doing and thinking about whether to follow a similar path. Building AI features around the voices and professional judgment of well-known real people is a product direction that will appeal to a lot of companies. Other writing tools, including QuillBot and Wordtune, are now being asked by implication whether they would do something comparable. The push to make AI output feel more human and more authoritative is only picking up speed, and Grammarly is essentially testing how far a company can go with this approach before it runs into legal or regulatory limits.

Right now, Grammarly is operating on the assumption that having access to publicly available writing is enough justification for building commercial persona features around it, and that assumption may not hold up well over time.

For journalists, academics, and independent writers specifically, the question of what happens to your voice and reputation when an AI company trains a model on your work and sells access to a simulation of your judgment is no longer a hypothetical. It is something that is happening right now, to people who are publicly named, in a tool used by tens of millions of people.

The Larger Question of Who Gets to Say No in the AI Era

What is happening with Expert Review is part of a much wider debate that the AI industry is working through right now.

Across the country and across different creative industries, people are pushing back against the use of their work, voice, and identity to build AI products without their knowledge or agreement. Authors have filed lawsuits against major AI companies over training data practices. Actors and musicians have gone to court over synthetic replicas of their voices. Negotiators for Hollywood's guilds worked AI-related protections into their contracts. Artists have challenged image generation tools built on artwork that was scraped from the web without permission.

In nearly all of these situations, the basic dynamic looks the same: a company finds material that is publicly accessible, treats that accessibility as equivalent to permission, and builds something commercial out of it. The people whose work went into the product typically find out about it only after the product has become successful enough to attract attention.

What sets the Grammarly situation apart from many of those cases is how directly and specifically the company is using people's identities. This is not a situation where a model absorbed the work of thousands of unnamed contributors and no single person can trace their specific influence on the output. Grammarly is putting individual, recognizable names on the feedback its tool produces, and it is doing that inside the interface of a paid product, presenting those names as the source of the suggestions the user is receiving.

That level of specificity makes it a more straightforward case for anyone considering legal action, and it also makes it a more uncomfortable experience for the people who find their names being used this way.

Whatever Grammarly ultimately decides to do with Expert Review, whether it pulls the feature, revises how it works, or continues defending it, that decision will reflect something real about where the AI industry currently thinks the line is between innovation and overreach. Based on what has been reported so far, the answer seems to be that companies are willing to push as far as they think they can before the legal and regulatory environment forces them to stop.

FAQ

What is Grammarly's Expert Review feature?

Expert Review is an AI-powered feature that Grammarly launched in August 2025. It provides writing suggestions framed as coming from the perspective of named subject-matter experts, including journalists, academics, and authors. The feature appears in the sidebar of Grammarly's main writing assistant.

Did the experts named in Grammarly's Expert Review give their consent?

No. None of the named experts appear to have consented to being included or to have any formal relationship with Grammarly. The company's own support documentation states that references to experts are "for informational purposes only" and do not indicate affiliation or endorsement.

Why is the David Abulafia case significant?

Cambridge historian David Abulafia was included in Grammarly's expert list and died on January 24, 2026. The feature launched in August 2025 while he was alive, but no consent was obtained, and his AI persona remained active after his death, raising serious questions about posthumous identity use and the rights of his estate.

Is Grammarly's use of public figures' identities legal?

That question is currently disputed. Right of publicity laws in many U.S. states, including California and New York, protect individuals from unauthorized commercial use of their name and likeness. Because Expert Review is part of a paid product, legal experts have flagged significant potential liability, though no formal litigation has been publicly confirmed yet.

How does Expert Review actually generate its feedback?

According to Grammarly's parent company Superhuman, the feature draws on the publicly available published work of named experts. An AI model trained on that writing generates stylistic and analytical suggestions framed as representing that expert's perspective. No direct input from the actual individuals is involved.

Does Expert Review produce accurate or useful feedback?

Results have been mixed. Testing by The Verge found inaccurate expert descriptions, outdated job titles, and source links pointing to mismatched or unreliable pages. AI-generated comments in Google Docs appeared visually similar to real human feedback, which raises its own concerns about transparency.

What does this mean for other AI writing tools?

The controversy has put pressure on the entire AI writing assistant category to clarify its policies around persona use and consent. Other tools, including QuillBot and Wordtune, are now implicitly being asked whether they would adopt similar approaches. The broader question of whether AI companies can commercially use real people's identities as product features is likely to be tested in court.

Why did Grammarly rebrand to Superhuman?

Grammarly rebranded to Superhuman in October 2025 as part of a strategic push to expand beyond writing assistance into a broader AI productivity platform covering research, scheduling, email, and workflow automation. The rebrand drew skepticism in part because Superhuman is already the name of a separate, well-known email product.
