Google spent most of 2025 proving that AI image generation could be fast, and then that it could be genuinely capable. With Nano Banana 2, it's arguing that users no longer have to choose between the two.

Released on February 26, 2026, Nano Banana 2 — built on the Gemini 3.1 Flash Image architecture — is Google's latest image generation model. It replaces Nano Banana Pro as the default across most of Google's consumer products, including the Gemini app, Google Search, Google Ads, and the AI filmmaking tool Flow. The company is positioning it as the sweet spot between the breakneck generation speed of the original Nano Banana and the high-fidelity, reasoning-heavy output of Nano Banana Pro.

This isn't just a minor version bump. The model ships with capabilities that were previously gated behind paid tiers or only accessible in Pro, and it's rolling out to users broadly — including free-tier Gemini users — starting today.

A Quick Recap: How Nano Banana Got Here

To understand why Nano Banana 2 matters, it helps to remember how fast this product line has moved.

The original Nano Banana launched in August 2025 and became what Google itself describes as a "viral sensation," redefining expectations for conversational image generation and editing. The model was fast and surprisingly capable, but it had real limitations in photorealism, complex instruction following, and advanced subject consistency.

Google answered those gaps in November 2025 with Nano Banana Pro — a model that delivered studio-quality output and deeper reasoning, but at the cost of the snappy, iterative generation speed that made the original feel fun and accessible.

Nano Banana 2 is Google's attempt to have both. Built on Gemini 3.1 Flash Image, the model brings Pro-class features down to Flash speed, while adding new capabilities neither predecessor offered.

What's Actually New in Nano Banana 2

Speed Meets Pro-Level Intelligence

The headline improvement is straightforward: Nano Banana 2 generates images faster than Nano Banana Pro while delivering comparable quality. For users who were living in the Pro tier for serious work, that means shorter wait times during iteration-heavy workflows. For users on the free tier who were previously limited to the original Nano Banana, it means a meaningful jump in output quality without any additional cost.

Speed matters more than it might sound for image generation. Creative workflows — building a product campaign, storyboarding a video, iterating on marketing assets — depend heavily on the ability to generate, evaluate, and refine quickly. A model that makes you wait breaks that rhythm. Nano Banana 2 is designed to keep it moving.

Real-World Knowledge Baked Into Visual Generation

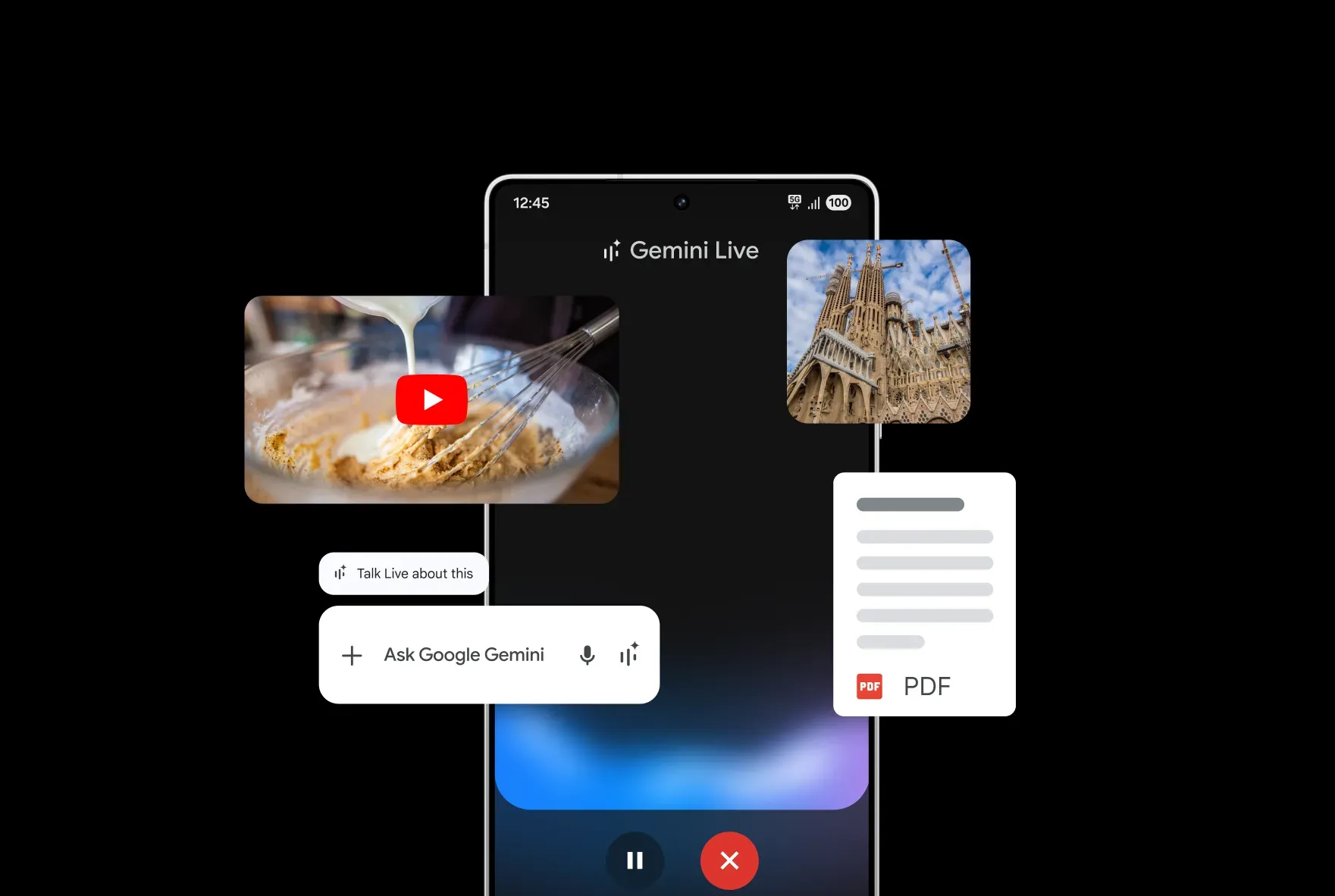

One of the most significant additions is what Google calls "advanced world knowledge" — the model now pulls from Gemini's real-world knowledge base and, critically, from real-time information and images via web search integration.

In practice, this means that if you ask Nano Banana 2 to generate an image of a specific building, a particular species of animal, a real product, or a documented historical scene, the model can draw on actual visual references rather than inventing what the subject might look like. It also means the model can be grounded in current context, not just in training data with a fixed cutoff.

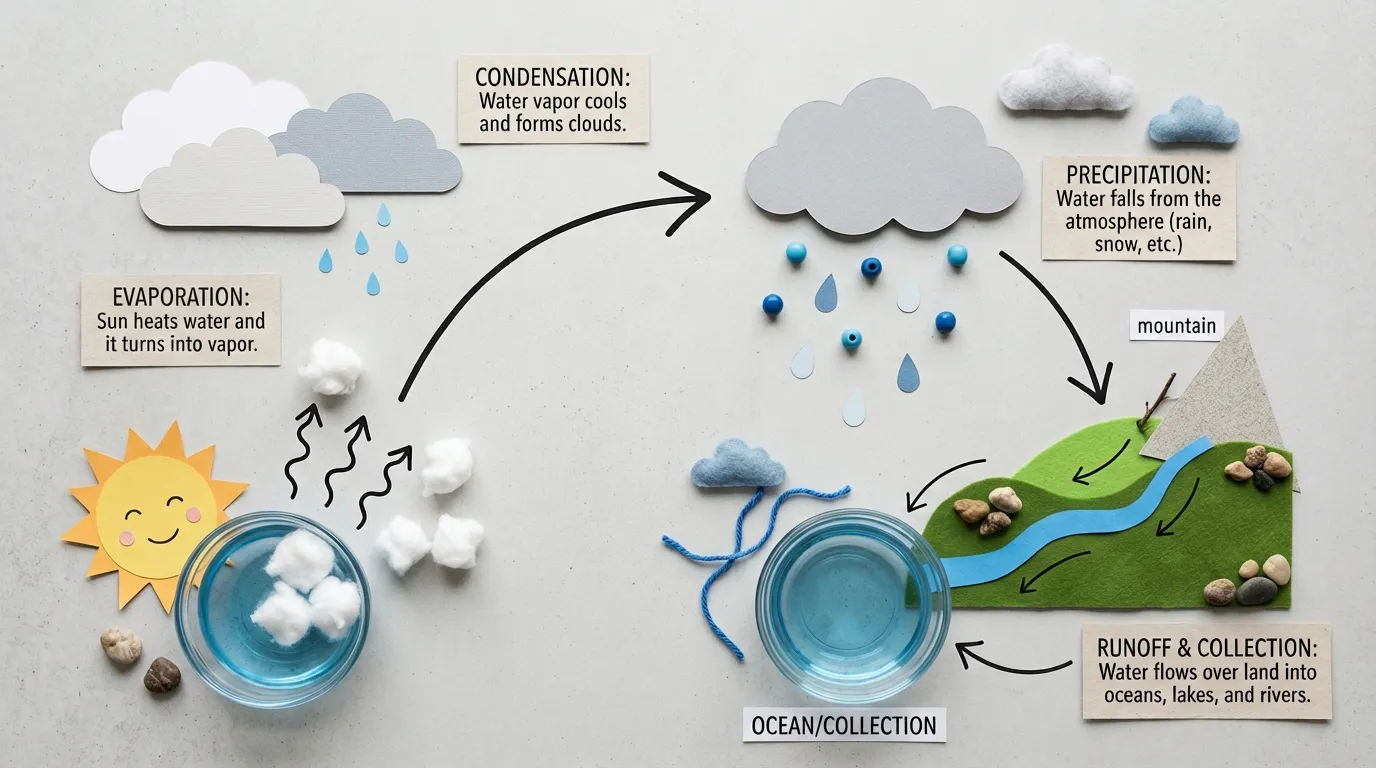

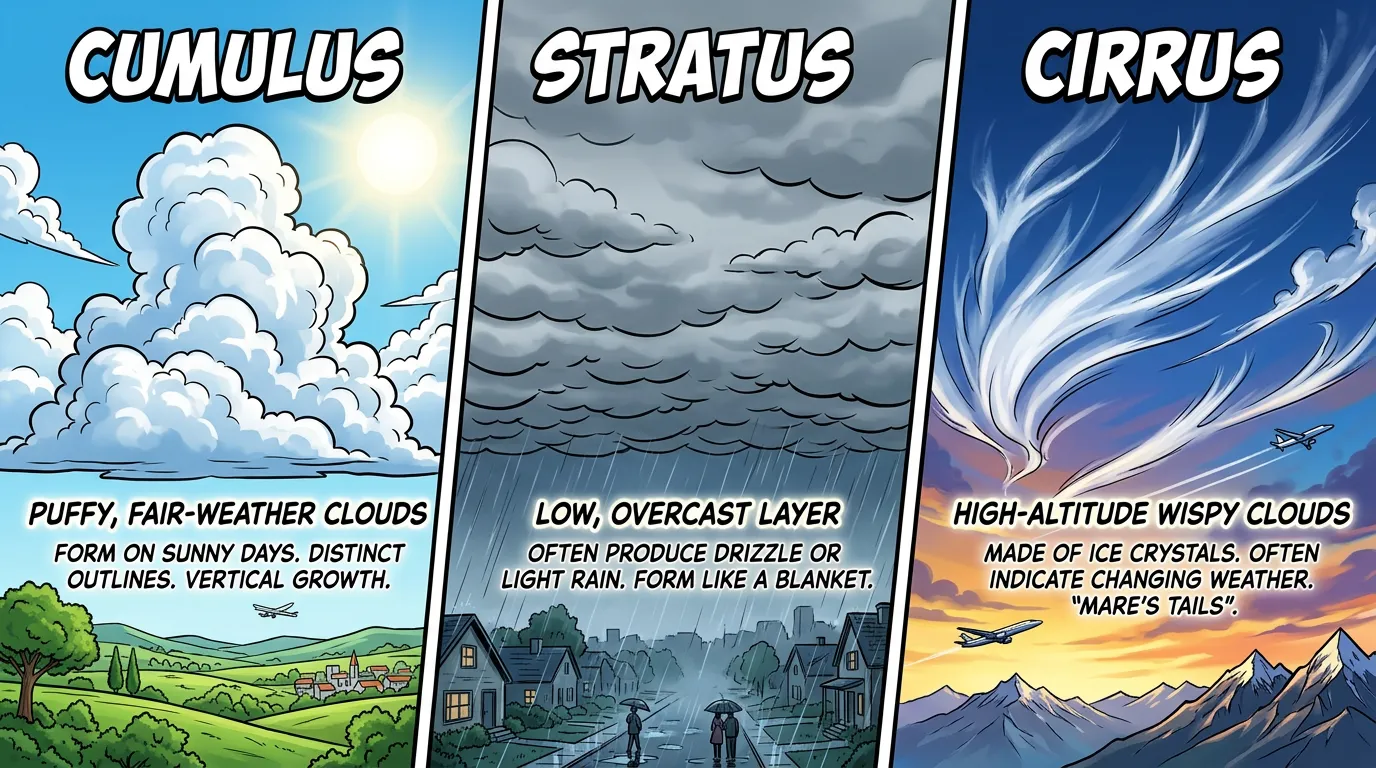

This capability directly unlocks more reliable generation for infographics, data visualizations, and diagrams. Asking the model to turn a set of notes into a visual flowchart or render a chart that accurately reflects real-world data is now a legitimate use case rather than an exercise in frustration.

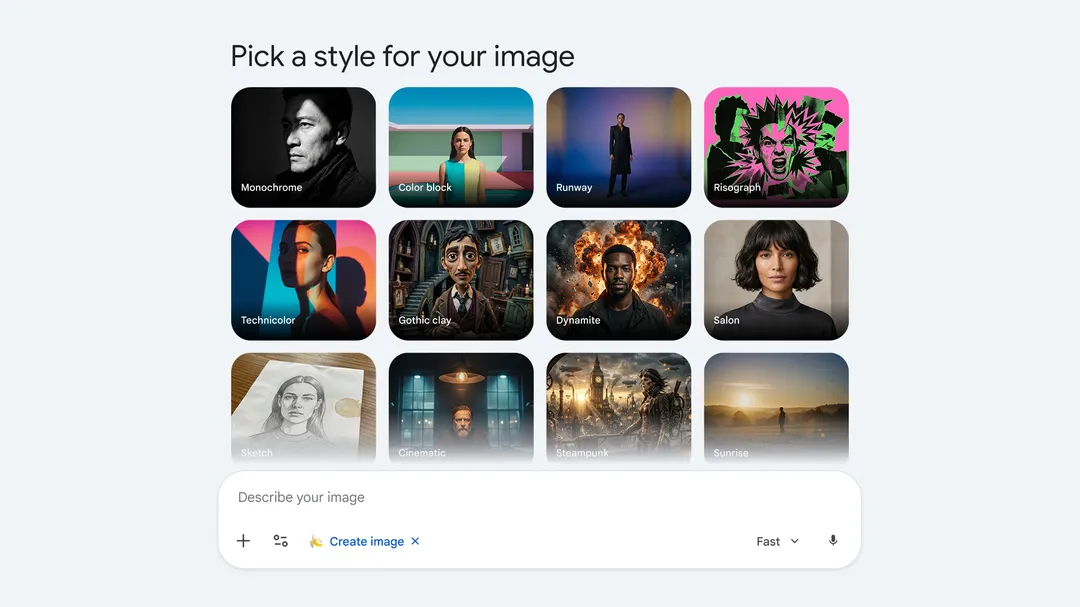

Precision Text Rendering — And Translation

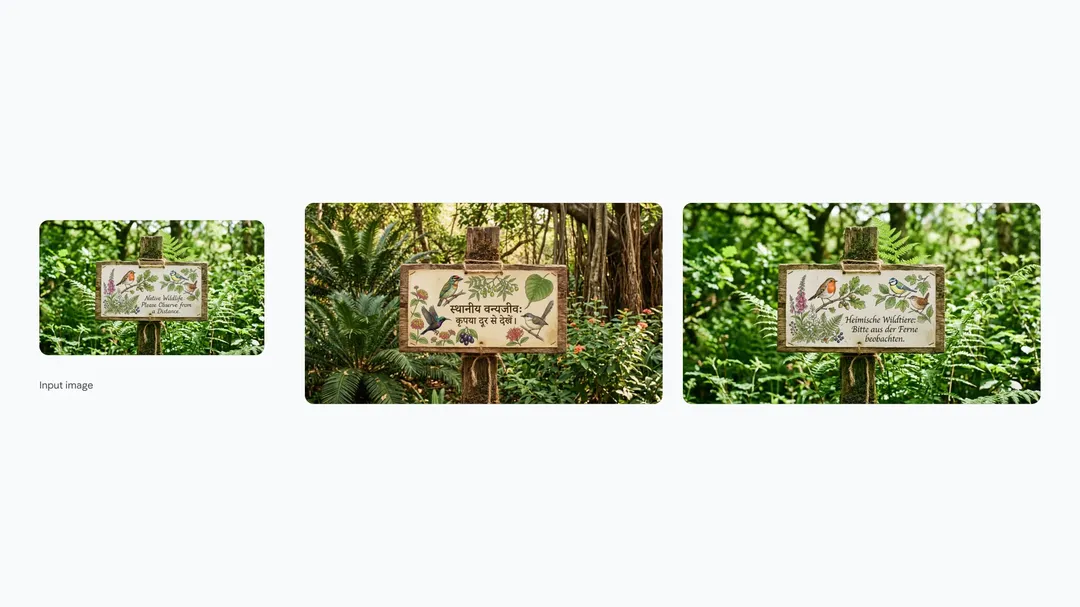

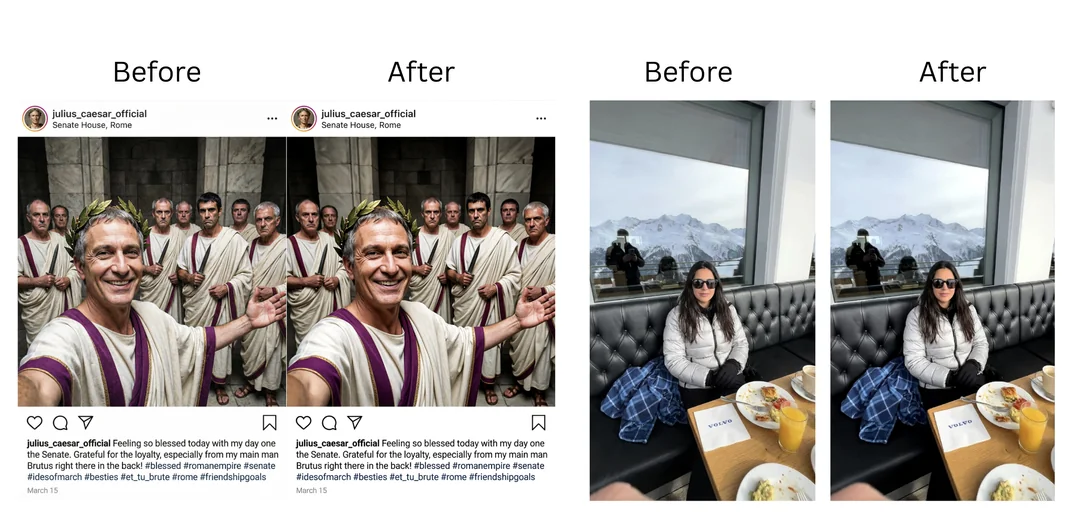

Getting readable, accurate text into AI-generated images has been a persistent weakness across the industry. Midjourney, DALL-E 3, and earlier Nano Banana models all struggled with it to varying degrees. Nano Banana 2 specifically addresses this with what Google describes as precision text rendering — the ability to generate legible, properly styled text in marketing mockups, greeting cards, signage, and other formats.

More interesting is the translation and localization capability. Users can now take a generated image containing text and ask the model to translate that text into another language, with the translated text rendered back into the image. For anyone doing multilingual marketing, global campaigns, or content localization, this is a genuinely practical feature.
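For developers, the same localization workflow could in principle be scripted through the Gemini API. The sketch below assumes the `google-genai` Python SDK and a hypothetical model ID (`gemini-3.1-flash-image-preview`); check the API documentation for the actual Nano Banana 2 identifier. It sends an existing image plus a translation instruction once per target language. The prompt wording and the `localize_variants` helper are illustrative assumptions, not Google's recommended approach.

```python
from pathlib import Path

# Hypothetical model ID: confirm the real Nano Banana 2 identifier in the Gemini API docs.
MODEL_ID = "gemini-3.1-flash-image-preview"


def localization_prompt(language: str) -> str:
    """Build an illustrative instruction asking the model to translate in-image text."""
    return (
        f"Translate all visible text in this image into {language}. "
        "Keep the layout, typography style, colors, and imagery unchanged."
    )


def localize_variants(image_path: str, languages: list[str], out_dir: str = ".") -> list[Path]:
    """Request one localized variant per language and save each returned image."""
    # Imported here so the prompt helper above works without the SDK installed.
    from google import genai
    from google.genai import types

    client = genai.Client()  # reads GEMINI_API_KEY or GOOGLE_API_KEY from the environment
    source = types.Part.from_bytes(
        data=Path(image_path).read_bytes(), mime_type="image/png"  # assumes a PNG source
    )
    saved = []
    for language in languages:
        response = client.models.generate_content(
            model=MODEL_ID,
            contents=[source, localization_prompt(language)],
            config=types.GenerateContentConfig(response_modalities=["TEXT", "IMAGE"]),
        )
        for part in response.candidates[0].content.parts:
            if part.inline_data is not None:  # image parts carry raw bytes in inline_data
                # Assumes PNG output; inspect part.inline_data.mime_type to be sure.
                out = Path(out_dir) / f"{Path(image_path).stem}_{language.lower()}.png"
                out.write_bytes(part.inline_data.data)
                saved.append(out)
                break
    return saved
```

A call such as `localize_variants("banner.png", ["Spanish", "Japanese"])` would, under these assumptions, write one file per language. Looping per language keeps each request simple and makes it easy to retry a single market if one translation comes back wrong.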

Subject Consistency Across Complex Workflows

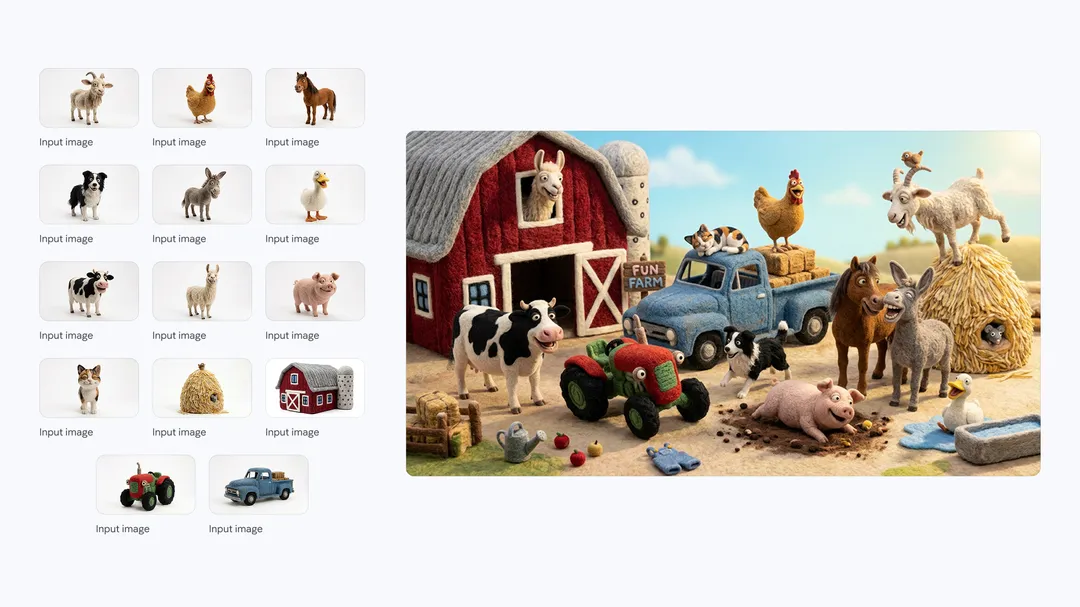

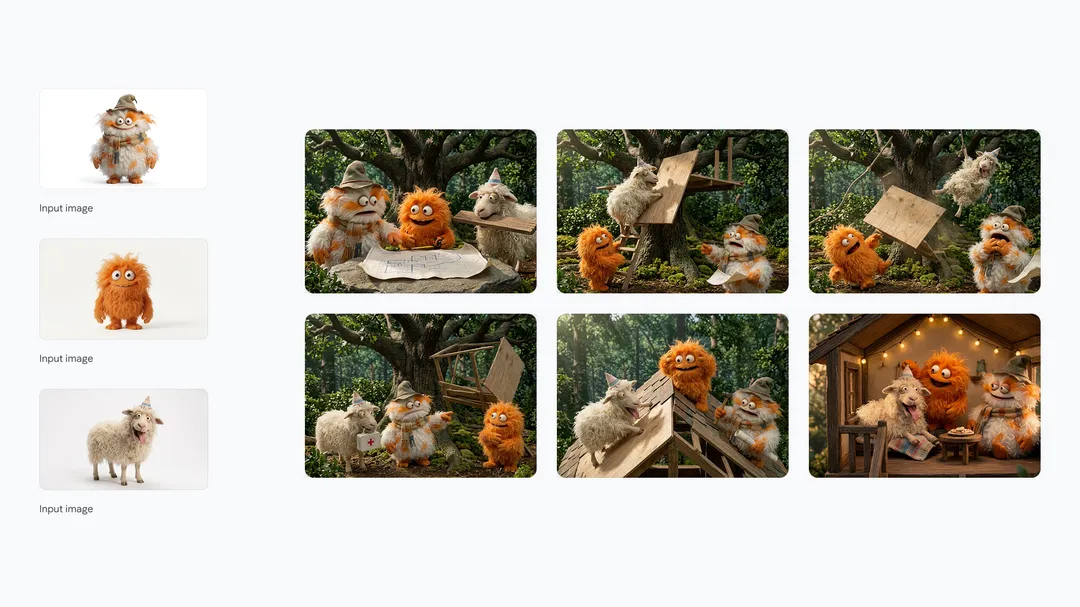

For creators building narratives — storyboards, sequential illustrations, comic-style storytelling — subject consistency has been a major pain point. Keeping a character looking like themselves across multiple generated frames, especially when the angle, lighting, and composition change, typically required significant manual effort or purpose-built fine-tuning workflows.

Nano Banana 2 handles up to five characters and 14 objects with consistent appearance across a single workflow. That's a significant number for most creative projects, and it opens up genuinely new production possibilities for independent creators, game developers, marketers building branded visual systems, and anyone telling stories with AI-generated imagery.

Production-Ready Output Specifications

The model supports a range of aspect ratios at resolutions from 512px up to 4K. That range matters because output is no longer confined to social-media-sized squares. A 4K widescreen render is usable for professional presentations, video backdrops, print-ready marketing materials, and billboard-scale applications. Combined with the text rendering improvements, this pushes Nano Banana 2 into territory where output can go directly into production workflows without extensive post-processing.
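Whether a render is print-ready comes down to pixel density at the intended output size, so a quick sanity check is to divide pixel dimensions by a target dots-per-inch value. The sketch below assumes "4K" means a 3840-pixel long edge (3840×2160 for 16:9) and uses 300 DPI, a common target for close-viewed print; the exact pixel sizes Nano Banana 2 returns for each resolution tier may differ.

```python
from fractions import Fraction


def dimensions(aspect: str, long_edge: int) -> tuple[int, int]:
    """Pixel width and height for an aspect ratio like '16:9' at a given long-edge size."""
    w, h = (int(x) for x in aspect.split(":"))
    ratio = Fraction(w, h)
    if ratio >= 1:  # landscape or square: width is the long edge
        return long_edge, round(long_edge / ratio)
    return round(long_edge * ratio), long_edge  # portrait: height is the long edge


def print_size_inches(width_px: int, height_px: int, dpi: int = 300) -> tuple[float, float]:
    """Physical print size in inches at a given density (dots per inch)."""
    return width_px / dpi, height_px / dpi


if __name__ == "__main__":
    for aspect, edge in [("1:1", 512), ("16:9", 3840), ("9:16", 3840)]:
        w, h = dimensions(aspect, edge)
        pw, ph = print_size_inches(w, h)
        print(f"{aspect} at {edge}px -> {w}x{h}px -> {pw:.1f} x {ph:.1f} in at 300 DPI")
```

At 300 DPI a 3840×2160 frame prints at 12.8 × 7.2 inches. Large-format pieces such as billboards are viewed from a distance and are typically printed at far lower densities, which is why the same pixel count can stretch much further there.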

Where Nano Banana 2 Is Rolling Out

Google is pushing Nano Banana 2 broadly across its ecosystem from launch day.

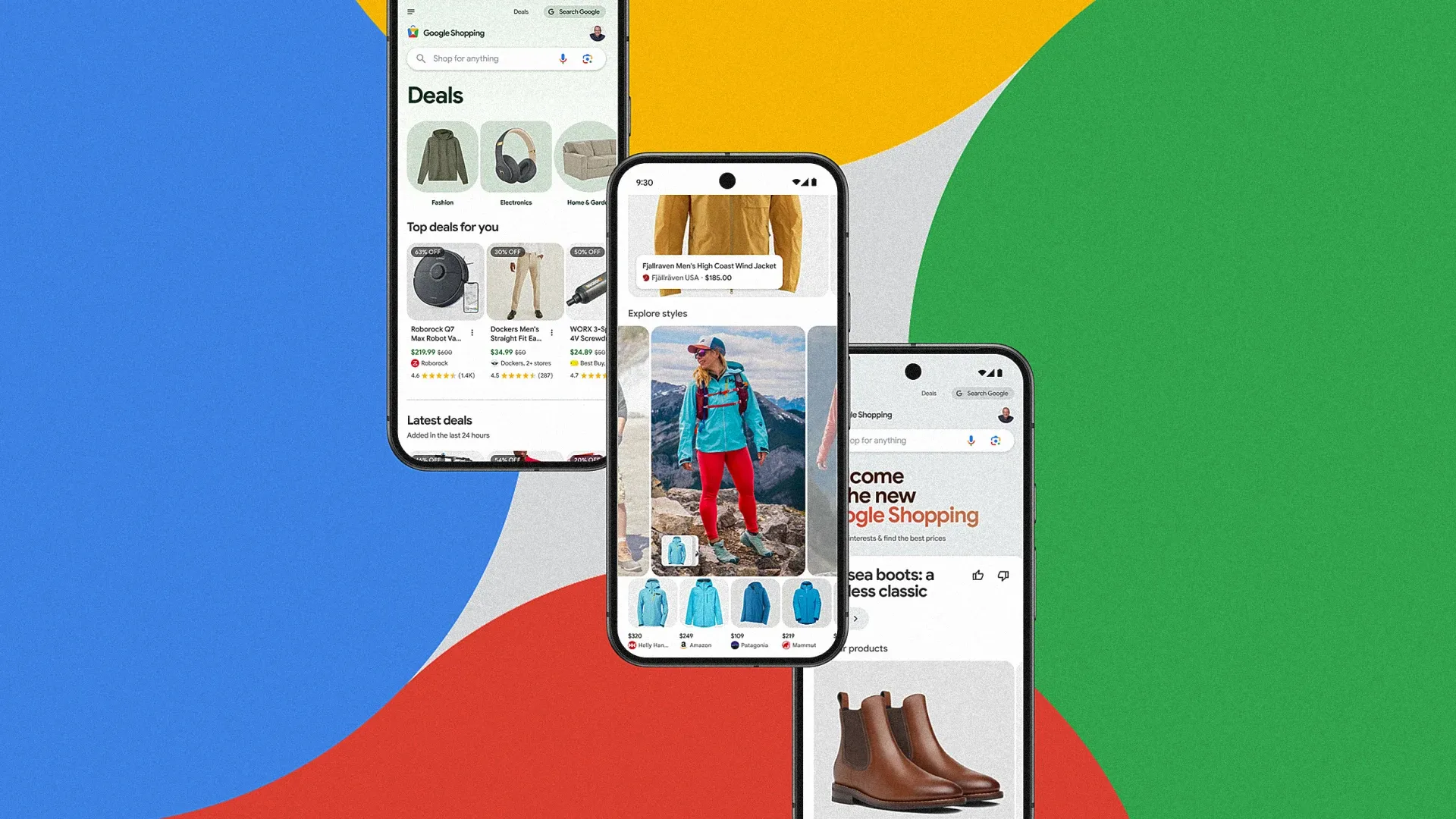

- Gemini app: Nano Banana 2 becomes the default image model across Fast, Thinking, and Pro model tiers. Paid subscribers (Google AI Pro and Ultra) retain access to Nano Banana Pro for tasks that genuinely need maximum fidelity, accessible via the three-dot regeneration menu.

- Google Search: Rolling out in AI Mode and Google Lens, across the Google app and both mobile and desktop browsers. Google is expanding availability to 141 new countries and territories and eight additional languages — a scale that underscores how seriously the company is treating this as a global infrastructure update, not just a feature launch.

- Google Ads: Available now for ad creative generation. This is arguably the most commercially significant deployment. Advertisers generating campaign assets at scale stand to benefit immediately from both the speed improvements and the text rendering accuracy.

- Flow: Nano Banana 2 is now the default image generation model in Google's AI filmmaking tool, available to all Flow users at zero credits. For creators building with Flow, this is a direct upgrade to the visual quality of their outputs at no additional cost.

- AI Studio and Gemini API: Available in preview, with pricing published in the API documentation. This opens Nano Banana 2 to developers building applications on top of Google's image generation infrastructure.

- Google Cloud / Vertex AI: Also available in preview via the Gemini API in Vertex AI, extending access to enterprise teams building on Google Cloud.

The Provenance Story: SynthID and C2PA

One aspect of the Nano Banana 2 launch that deserves attention beyond the generation capabilities is Google's continued push on AI content identification.

Every image generated by Nano Banana 2 carries a SynthID watermark: an imperceptible digital signature embedded directly into the pixel data. SynthID has been Google DeepMind's answer to the growing need for reliable identification of AI-generated content, and it is designed to remain detectable even after images are cropped, filtered, screenshotted, or otherwise modified.

With Nano Banana 2, Google is pairing SynthID with C2PA Content Credentials — an interoperable industry standard for content provenance developed by the Coalition for Content Provenance and Authenticity. Where SynthID tells you whether an image was AI-generated, C2PA credentials provide a fuller picture: not just if AI was involved, but how and when.

Since launching its SynthID verification feature in the Gemini app in November 2025, Google reports the tool has been used more than 20 million times across various languages. That's a meaningful adoption signal for a feature few users were asking for, suggesting that provenance tools are becoming a standard part of how people interact with AI-generated media rather than a niche concern.

C2PA verification is coming to the Gemini app soon, Google says, which would give users an integrated way to inspect the provenance of images they encounter — not just those they generate.
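Unlike SynthID, which lives in the pixels, C2PA Content Credentials travel as metadata attached to the file, which is also why they can be lost when an image is screenshotted or re-encoded. In JPEG files, the C2PA specification stores the manifest in APP11 marker segments as JUMBF boxes. The sketch below is only a heuristic presence check built on that assumption: it scans a JPEG's metadata segments for an APP11 payload containing the `c2pa` label. It does not parse or validate the manifest's signatures, handles JPEG only (PNG and other formats embed manifests differently), and cannot detect SynthID at all; real verification needs a full C2PA SDK or tool.

```python
import struct
import sys
from pathlib import Path

APP11 = 0xEB  # JPEG APPn marker that carries JUMBF boxes (used for C2PA manifests)


def has_c2pa_segment(data: bytes) -> bool:
    """Heuristic: True if a JPEG has an APP11 segment mentioning the 'c2pa' label.

    Detects only that a manifest appears to be present; it does not
    parse it or cryptographically verify it.
    """
    if data[:2] != b"\xff\xd8":  # SOI marker missing: not a JPEG
        return False
    i = 2
    while i + 4 <= len(data):
        if data[i] != 0xFF:
            return False  # lost marker sync; treat the file as malformed
        marker = data[i + 1]
        if marker in (0xD9, 0xDA):  # EOI or start-of-scan: no more metadata segments
            return False
        (length,) = struct.unpack(">H", data[i + 2 : i + 4])  # length includes its own 2 bytes
        payload = data[i + 4 : i + 2 + length]
        if marker == APP11 and b"c2pa" in payload:
            return True
        i += 2 + length  # skip marker bytes plus the segment body
    return False


if __name__ == "__main__" and len(sys.argv) > 1:
    print(has_c2pa_segment(Path(sys.argv[1]).read_bytes()))
```

Because this only inspects file metadata, a `False` result says nothing about whether an image is AI-generated; it may simply mean the credentials were stripped along the way, which is the gap SynthID's pixel-level watermark is meant to cover.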

How Nano Banana 2 Fits Into the Competitive Picture

The AI image generation market has become genuinely competitive in the past twelve months. Midjourney V7 raised the bar for artistic quality. OpenAI's GPT-4o image generation integrated visual creation directly into a conversational interface. Adobe Firefly has been steadily improving its commercially safe generation with deep integration into the Creative Cloud ecosystem. Stability AI has continued releasing open-weight models that give developers maximum flexibility.

Google's strategy with Nano Banana 2 is distinct from most of these. Rather than competing purely on raw output quality or artistic style, Google is betting on ecosystem integration and practical utility. The web search grounding that feeds real-world knowledge into generation, the text rendering and translation that enables localization workflows, the tight distribution across Search, Ads, Gemini, and Flow — these are capabilities that only Google can realistically deliver at this scale.

For a marketing team that uses Google Ads and needs fast, accurate, localized visual assets, Nano Banana 2 is already sitting inside the tools it uses every day. That distribution advantage is harder to replicate than any individual model improvement.

The more direct comparison point is OpenAI's image generation, which is also tightly integrated into a widely used AI assistant and can pull from real-world context via web search. Google's advantage here is the scale of its search infrastructure and the depth of its visual index, the same assets that power Google Images and Lens. That's a genuinely differentiated data moat for grounded image generation.

What This Means for Creators and Businesses

For individual creators

If you've been using Gemini for image generation and hitting the limits of the original Nano Banana — particularly around complex prompts, character consistency, or text in images — Nano Banana 2 is a direct answer to those frustrations. The upgrade is automatic and doesn't require any change in workflow.

The subject consistency feature, in particular, opens up new use cases. Building a branded mascot or recurring character for a content series, maintaining visual consistency across a product line's marketing materials, or storyboarding a short film — these are now feasible without investing in fine-tuning or elaborate prompt engineering.

For marketing and advertising teams

The combination of fast generation, precision text rendering, localization support, and direct integration into Google Ads makes Nano Banana 2 the most practical AI image tool for performance marketing that Google has shipped. The ability to generate localized ad creative variants — same visual concept, different language, different market context — at the speed of Flash opens up campaign localization workflows that previously required either human designers or painful workarounds.

The 4K output ceiling means generated assets are usable in high-resolution formats including display advertising, out-of-home placements, and print.

For developers

The Gemini API preview gives developers access to Nano Banana 2's capabilities programmatically. The web search grounding feature is particularly interesting for applications where visual accuracy to real-world subjects matters — travel apps, e-commerce product visualization, educational tools, and anything that needs to render specific real-world objects reliably.
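A minimal sketch of programmatic generation, assuming the `google-genai` Python SDK. The model ID is a hypothetical placeholder (confirm the real one in the API documentation), and the assumption that Nano Banana 2 accepts the Google Search tool for grounding is inferred from the web-search capability Google describes, not from confirmed API behavior. `aspect_ratio` is passed through the SDK's `ImageConfig`; which ratios and sizes the preview model accepts should also be checked against the docs.

```python
from pathlib import Path

# Hypothetical model ID: confirm the exact Nano Banana 2 identifier in the Gemini API docs.
MODEL_ID = "gemini-3.1-flash-image-preview"


def extract_images(parts) -> list[bytes]:
    """Collect raw image bytes from response parts that carry inline data."""
    return [p.inline_data.data for p in parts if getattr(p, "inline_data", None) is not None]


def generate_image(prompt: str, out_path: str = "out.png",
                   aspect_ratio: str = "16:9", use_search: bool = False) -> Path:
    """Generate one image and write the first returned image part to disk."""
    # Imported lazily so extract_images can be used without the SDK installed.
    from google import genai
    from google.genai import types

    client = genai.Client()  # reads GEMINI_API_KEY or GOOGLE_API_KEY from the environment
    # Assumption: the image model accepts the Google Search grounding tool.
    tools = [types.Tool(google_search=types.GoogleSearch())] if use_search else None
    response = client.models.generate_content(
        model=MODEL_ID,
        contents=[prompt],
        config=types.GenerateContentConfig(
            response_modalities=["TEXT", "IMAGE"],
            image_config=types.ImageConfig(aspect_ratio=aspect_ratio),
            tools=tools,
        ),
    )
    images = extract_images(response.candidates[0].content.parts)
    if not images:
        raise RuntimeError("response contained no image data")
    path = Path(out_path)
    path.write_bytes(images[0])
    return path
```

Under these assumptions, `generate_image("A labeled cutaway diagram of the Eiffel Tower", use_search=True)` would request a grounded render of a real-world subject. Keeping response parsing in `extract_images` separates it from the network call, so it can be tested without an API key.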

The Honest Assessment

Nano Banana 2 is a legitimate product improvement with real utility across several use cases that were previously underserved by the Nano Banana lineup. The web search grounding, text rendering, and subject consistency capabilities are not incremental polish — they represent new capabilities that change what the model is actually useful for.

That said, a few things are worth watching. Google's claim of subject consistency across "up to 14 objects" invites skepticism until independent testing establishes how reliably it performs at that ceiling and under what conditions it breaks down. The gap between benchmark performance and real-world results for character consistency has been significant across the industry.

The text rendering improvements also warrant independent testing. Getting accurate, styled text into generated images is genuinely hard, and models frequently perform well on clean demo prompts while struggling with the messier edge cases that show up in actual production workflows.

What isn't in question is Google's distribution. With Nano Banana 2 as the default across Gemini, Search, Ads, and Flow from day one, this model will have more users testing its real-world limits within the next week than most competing models will see in months.

FAQ

What is Google Nano Banana 2?

Nano Banana 2 is Google's latest AI image generation model, built on the Gemini 3.1 Flash Image architecture. It was released on February 26, 2026, and combines the Pro-level features of Nano Banana Pro with the faster generation speed of Gemini Flash. It is now the default image generation model across most Google products.

How is Nano Banana 2 different from Nano Banana Pro?

Nano Banana Pro prioritized maximum fidelity and factual accuracy at the cost of slower generation. Nano Banana 2 delivers comparable quality at significantly faster speeds, making it better suited for iterative workflows. Nano Banana Pro remains available to paid Google AI Pro and Ultra subscribers for specialized high-fidelity tasks.

Is Nano Banana 2 free to use?

Yes. Nano Banana 2 is available to free-tier Gemini users and rolls out as the default model across the Gemini app, Google Search, and Google Flow at no additional cost. Developer access via the Gemini API is available in preview with separate API pricing.

What products include Nano Banana 2?

Nano Banana 2 is available in the Gemini app, Google Search (including AI Mode and Google Lens), Google Ads, Google Flow, AI Studio, the Gemini API, and Google Cloud's Vertex AI.

Can Nano Banana 2 generate text in images?

Yes. Nano Banana 2 includes precision text rendering, allowing accurate, legible text to be generated in images for use cases like marketing mockups, greeting cards, and signage. It also supports translation and localization of text within generated images.

What is subject consistency in Nano Banana 2?

Subject consistency allows the model to maintain the visual appearance of up to five characters and 14 objects across multiple generated images in a single workflow. This is particularly useful for storyboarding, sequential illustration, branded character development, and any use case that requires visual continuity across multiple outputs.

How does Nano Banana 2 use web search?

The model integrates real-time information and images from Google Search to ground its generation in accurate visual references. This improves the accuracy of generating specific real-world subjects — buildings, animals, products, people — and enables more reliable data visualization and infographic creation.

What is SynthID and how does it apply to Nano Banana 2?

SynthID is Google DeepMind's AI watermarking technology, which embeds an imperceptible digital signature into every AI-generated image. All images generated by Nano Banana 2 carry a SynthID watermark. Google is also adding support for C2PA Content Credentials, an industry-standard provenance format that describes how and when AI was used in content creation.
