On March 17, 2026, Google removed the paywall from one of the most significant AI features it has built in years. Gemini Personal Intelligence, which connects Google's AI assistant to your Gmail, Google Photos, YouTube history, and Search data, is now available at no cost to every US user on a personal Google account. Until that point, the feature had been restricted to paying subscribers on the AI Pro and AI Ultra tiers since its beta launch in January 2026.

The rollout covers three surfaces: AI Mode in Google Search, the Gemini app, and Gemini inside Chrome. Google framed the expansion in a blog post as moving toward "a deeper, more personal Google experience." The more direct description is that the AI you've been using to answer general questions can now answer personal ones, provided you give it permission to access your data.

What Personal Intelligence Actually Does

The practical pitch for Personal Intelligence centers on context. Most AI assistants know things about the world but nothing about you specifically. Personal Intelligence changes that for users who opt in, by giving Gemini access to a connected body of personal data to reference when answering questions.

Google's VP of the Gemini app, Josh Woodward, described the feature's two core strengths in the January launch post as "reasoning across complex sources and retrieving specific details from, say, an email or photo to answer your question."

The examples Google has used to illustrate the feature give a useful picture of what it can do in practice:

- A user standing in a tire shop who can't remember their car's tire size asks Gemini, which retrieves the vehicle specs and then recommends all-weather tires after noticing family road trip photos in Google Photos

- A user planning a trip gets tailored recommendations based on past hotel bookings in Gmail and previous travel photos, with the AI skipping tourist traps based on apparent preferences

- A user who needs their license plate number can ask Gemini, which locates it from a photo of the car in their Photos library

- A user shopping for a coat gets suggestions that account for their preferred brands, upcoming travel from flight confirmations in Gmail, and expected weather

The common thread is that Gemini replaces the manual process of switching between apps to gather context. Instead of locating a confirmation email, cross-referencing a photo, and then asking a separate question, Gemini handles all of that in a single query.

Where the Feature Lives and Who Can Use It

Personal Intelligence is available on three surfaces.

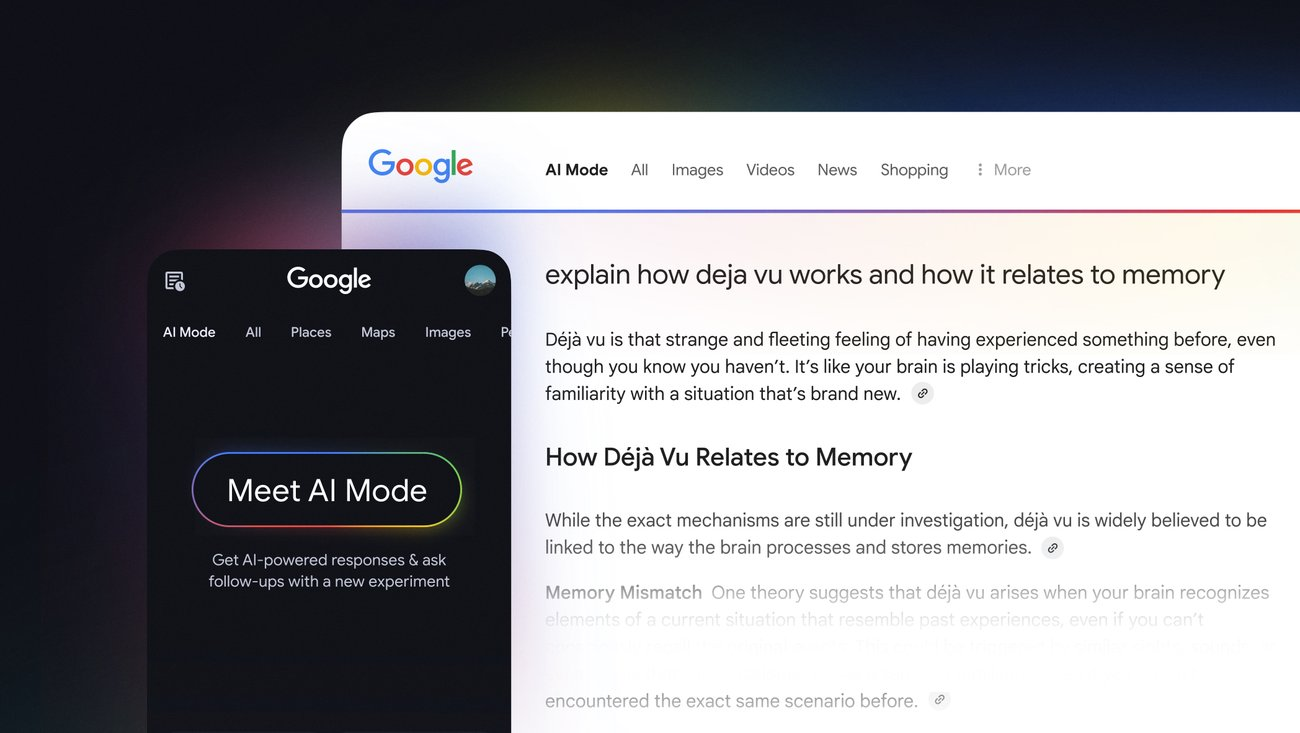

AI Mode in Google Search is the most significant distribution point. AI Mode had reached 75 million daily active users by January 2026, roughly comparable to Bing's entire user base. With Personal Intelligence now active in that context, searches can draw on a user's personal data to produce responses tailored to their specific circumstances.

The Gemini app is the original home of Personal Intelligence, where the beta launched in January 2026. The app version is designed for longer, more conversational use, making it the natural interface for open-ended personal planning tasks.

Gemini in Chrome brings the feature into the browser itself, accessible from the address bar. The initial rollout of this surface focused on Mac and Windows desktop users.

The feature is limited to US users on personal Google accounts in English. Google Workspace accounts for business, enterprise, and education are excluded, and no international rollout timeline has been confirmed. Personal Intelligence uses Gemini 3 for processing.

The Privacy Picture: What Google Says and What It Doesn't

Google has been explicit about one thing: Gemini does not train directly on your Gmail inbox or Google Photos library. The company's official position, stated in the feature's launch post and repeated in its privacy documentation, is that the raw content of your emails and photos is used only to generate a response, not to train the underlying model.

What Google does train on is narrower but worth understanding. The company trains on specific prompts you send to Gemini, and on the model's responses to those prompts. Google says it takes steps to filter or obfuscate personal data from those training inputs, but the distinction matters more in practice than it might appear.

When you ask Gemini to help plan a trip based on your previous travel, the prompt sent to Google's servers may include flight confirmation details pulled from Gmail, location data from Photos, and preferences inferred from your YouTube history. That prompt, along with Gemini's response, can be used for model training purposes. You have not handed Google your inbox wholesale, but you have handed them a curated stream of your most sensitive questions, framed against your most sensitive documents.

Google has acknowledged this in its privacy documentation, framing it as intentional design: "We train the model with things like my specific prompts and responses, only after taking steps to filter or obfuscate personal data from the conversation I have with Gemini."

Several things remain publicly undefined: what specifically constitutes "limited prompt and response data," how long that data is retained, and whether any derivatives inform future model updates. The feature is still labeled as experimental.

A separate concern applies specifically to the free-tier expansion. A Malwarebytes report cited in coverage of the rollout found that nine in ten survey respondents expressed concern about AI using their data without consent. When a feature this intimate carries no price tag, adoption patterns shift considerably, and the volume of personal queries flowing through Personal Intelligence is likely to be substantially larger in the free tier than it was behind a paywall.

What the Feature Can't Do Yet

Personal Intelligence is currently read-oriented. Gemini can find, summarize, and reason about information in your Gmail and Calendar, but through the standard interface it does not take actions such as sending emails, creating calendar events, or making purchases.

Google has been expanding its agentic capabilities over time, and some Workspace plans include more active actions through Gemini in Gmail and Calendar directly, but those capabilities are separate from what Personal Intelligence delivers in its current form.

Google also acknowledges limitations in the feature's current state. It identifies two known issues: inaccurate responses and what it calls "over-personalization," where the model makes connections between unrelated topics. Timing and nuance present specific challenges, particularly around relationship changes or contextual misreads.

Google's own example is illustrative: if a user's Photos library contains hundreds of images from a golf course, the model might conclude they love golf when in fact they attended because of a family member who plays. The fix is telling Gemini directly that the assumption is wrong.

How to Check Your Settings

If you have a personal US Google account, Personal Intelligence may already be available to you across AI Mode, the Gemini app, and Gemini in Chrome. The feature is off by default and requires explicit opt-in to connect any apps.

To review your current settings and see which apps are already connected, go to myaccount.google.com, then Data and Privacy, then "More Google features." From there you can see connected apps and revoke any connections you didn't intend to authorize. You can also review and delete your Gemini Apps Activity from the same account settings area.

Google's Temporary Chat option provides an alternative for users who want to query Gemini without generating activity that could feed into personalization or training. Chats conducted in temporary mode do not appear in activity history and are not used to train or personalize Gemini.

Wrap-Up

The free rollout of Gemini Personal Intelligence marks the most significant expansion of Google's AI product into personal data since the company launched AI Overviews in Search. Moving a feature that allows an AI assistant to read your email, search your photos, and cross-reference your search and watch history from a paid tier to a free one changes who uses it and at what scale.

The practical utility of the feature is genuine. For users who have historically managed context manually, switching between Gmail, Photos, and a search interface to gather what they need for a question, Personal Intelligence offers a meaningful reduction in that friction.

Whether the trade-off is worth enabling depends on how comfortable a given user is with the data handling model: specifically, the fact that the prompts they send, which may contain personal details drawn from connected apps, can be used to improve Google's models over time. For anyone who uses Gmail for sensitive personal or financial conversations, the question is not simply whether Google reads your emails. It is whether you want the questions you ask about those emails, and the AI's answers to those questions, to become part of how the model learns.

Frequently Asked Questions

What is Google Gemini Personal Intelligence?

Personal Intelligence is a feature that connects Gemini to a user's Google apps, including Gmail, Google Photos, YouTube history, and Search history, so the AI can answer personal questions using a user's actual data rather than only general knowledge. It became free for all US users with personal Google accounts on March 17, 2026. The feature is opt-in and off by default.

What can Gemini do with access to your Gmail and Photos?

With Gmail access, Gemini can find specific emails, summarize threads, retrieve flight confirmations, and pull details from purchase receipts or booking confirmations. With Photos access, it can locate images from specific trips or events, identify objects in photos like license plates or vehicle details, and use visual context to inform recommendations. With YouTube history and Search history connected, it can infer interests and preferences to personalize suggestions for travel, shopping, books, and content.

Does Google train its AI on your personal emails and photos?

Google says Gemini does not train directly on the raw content of your Gmail inbox or Google Photos library. However, the prompts you send to Gemini, which may include personal details drawn from connected apps, and the model's responses to those prompts, can be used to improve the model over time. Google says it takes steps to filter or obfuscate personal data from those training inputs, but the specific retention periods and processes are not publicly defined.

Is Personal Intelligence available outside the US?

No. As of the March 2026 rollout, Personal Intelligence is available only to US users on personal Google accounts using English. No international rollout timeline has been publicly confirmed. The feature is also not available on Google Workspace business, enterprise, or education accounts.

How is this different from how Google already uses your data?

Google has long used Gmail and Photos data to power features within those apps, such as email search, smart compose suggestions, and photo organization. Personal Intelligence is different because it actively combines data from multiple apps and surfaces it within a conversational AI interface in response to your direct questions. The questions you ask, and what you ask them about, become a new category of data that Google processes and potentially uses to improve its models.

Who can use this feature and how do you enable it?

Any US user with a personal Google account can enable Personal Intelligence in the Gemini app settings, in AI Mode in Google Search, or through Gemini in Chrome. The feature is off by default. You enable it by choosing which apps to connect in the Gemini settings interface, where you can also revoke access at any time. To review existing connections, go to myaccount.google.com, then Data and Privacy, then "More Google features."

How does this compare to what other AI companies are doing?

OpenAI's memory features in ChatGPT allow the assistant to retain and reference personal context across conversations. Anthropic's Projects feature in Claude maintains context within defined workspaces. Microsoft's Copilot has been expanding with long-term memory and integrations with third-party services. Apple Intelligence takes a different architectural approach: Apple emphasizes on-device processing that keeps personal data on the device, while Google's implementation involves cloud processing.

What are the known limitations of the feature?

Personal Intelligence can produce inaccurate responses and "over-personalization," where the model incorrectly connects unrelated topics. The feature currently cannot take actions such as sending emails or creating calendar events, only read and summarize information. Performance on large inboxes may be imperfect, the feature is labeled as experimental, and it is limited to US users on personal accounts with no confirmed timeline for broader availability.
