When GitHub released its annual Octoverse report in late 2025, the headline figure was nearly impossible to contextualize: close to one billion commits pushed in a single year, representing a 25 percent increase over 2024. For a platform that once measured its trajectory in thousands of repositories and millions of developers, the absolute scale of activity has become abstracted from the actual work it is supposed to represent.

Behind the raw volume, however, a more consequential transformation is underway. The developers using GitHub in 2025 are not simply doing what their predecessors did, but faster. They are doing something structurally different: delegating cognitive tasks to AI systems, adopting entirely new programming languages based on how well machines can reason about them, and watching automated agents open pull requests, resolve bugs, and push code without a human ever touching a keyboard. The data does not simply describe platform growth. It describes a profession in the middle of redefining itself.

Understanding what that redefinition means – for individual developers, for engineering teams, for open source communities, and for the security of software at scale – requires looking beneath the headline numbers and into the structural shifts that produced them.

The Scale of What Changed in 2025

A Platform Operating at a Fundamentally Different Speed

The figures from GitHub's Octoverse 2025 report are not simply impressive in the aggregate. They are significant for what they reveal about the velocity of change unfolding beneath the surface activity.

| Activity Metric | 2025 Result | Year-over-Year Change |
|---|---|---|
| Total commits pushed | Nearly 1 billion | +25.1% |
| Single-month commit record | ~100 million (August) | New record |
| Pull requests merged (monthly avg.) | 43.2 million | +23% |
| New repositories created | 230+ per minute | Record pace |
| Open source contributions (public repos) | 1.12 billion | +13% |
| Merged pull requests (total) | 518.7 million | Record |
| New developers joining platform | 36+ million | One per second, all year |
| Total active developer population | 180+ million | Fastest absolute growth rate in platform history |

Growth in the developer population was equally significant. March 2025 marked the largest single month of new open source contributors in GitHub's recorded history, with 255,000 first-timers joining the platform. Over the full year, the rate of new arrivals exceeded one developer per second, and that pace was sustained for twelve consecutive months.
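The "one per second" figure can be checked with quick arithmetic. The following is a minimal sketch that uses only the rounded figures quoted above (36 million new developers, 43.2 million merged pull requests per month); the constant names are illustrative, and the report's exact values may differ slightly.

```typescript
// Rounded figures quoted in the text; the report's exact values may differ.
const newDevelopersPerYear = 36_000_000;
const mergedPullRequestsPerMonth = 43_200_000;

// Seconds in a non-leap year: 365 days * 24 h * 60 min * 60 s = 31,536,000.
const secondsPerYear = 365 * 24 * 60 * 60;

// Average arrival rate of new developers, in developers per second.
const developersPerSecond = newDevelopersPerYear / secondsPerYear;

// Annualizing the monthly merge average lands close to the
// 518.7 million total quoted for the year.
const mergedPullRequestsPerYear = mergedPullRequestsPerMonth * 12;

console.log(developersPerSecond.toFixed(2)); // 1.14
console.log(mergedPullRequestsPerYear);      // 518400000
```

With the rounded inputs, the arrival rate comes out slightly above one developer per second, consistent with the claim.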

Those figures do not reflect a simple linear extension of prior growth trends. The release of GitHub Copilot Free in late 2024 coincided with a step-change in developer sign-ups that exceeded prior projections. The causal relationship is difficult to establish with precision, but the correlation is structurally meaningful: when AI assistance became free, a materially larger number of people concluded that they could plausibly become developers. The barrier to entry did not disappear, but it shifted – from the ability to write code from scratch toward the ability to specify, evaluate, and iterate on code that an AI system produces.

Not All Growth Represents the Same Activity

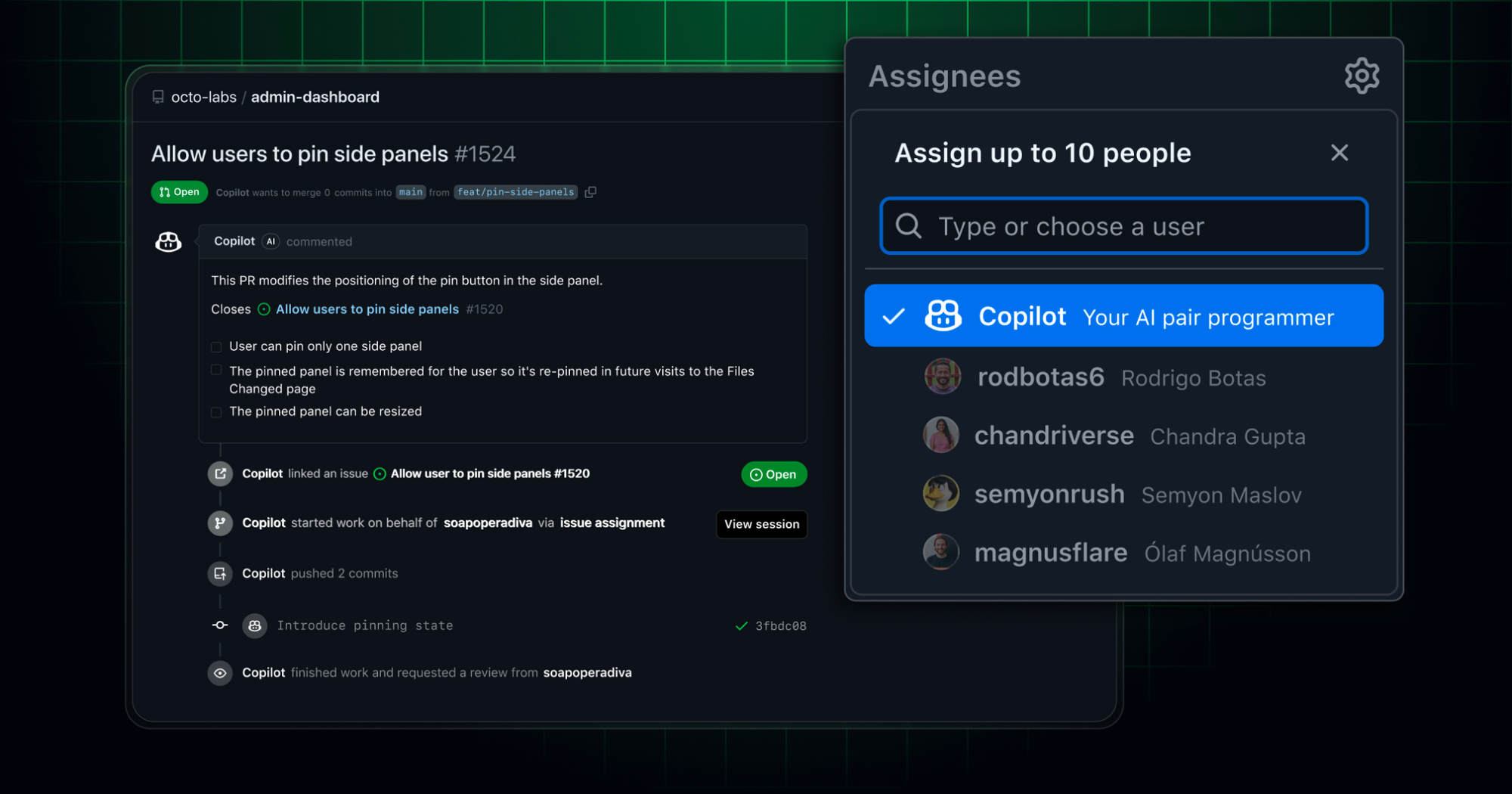

Volume alone does not tell the complete story of what GitHub's 2025 data represents, and the distinction is analytically important. The same period that produced record commit counts also produced record automation. Over one million pull requests were authored by the Copilot coding agent between May and September 2025 alone – a figure representing AI-generated contributions logged on the platform in exactly the same format as human-authored ones.

A meaningful and growing share of the activity registered on GitHub is therefore not human-initiated in the traditional sense. It is the output of AI agents completing tasks that developers assigned them, then reviewed and approved before merging. When a developer assigns an issue to the Copilot coding agent, the agent clones the repository, analyzes the relevant codebase, opens a draft pull request, implements a proposed solution, and requests human review. The commit that results from that workflow is logged like any other commit.

The one billion figure, understood in this context, is not a count of one billion discrete human creative acts. It is a count of one billion outputs from a workflow in which the human role has become increasingly supervisory. That is not a criticism of the technology – it is a clarification of what the metric now measures, and what that reclassification implies for how organizations interpret developer productivity data going forward.
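One way to keep that distinction visible in productivity reporting is to split activity counts by who authored them. The sketch below assumes a hypothetical `CommitRecord` shape with an `authorKind` field; GitHub's data does not necessarily expose authorship this way, so the types and sample values are illustrative only.

```typescript
// Hypothetical record shape for illustration; not a GitHub API type.
type AuthorKind = "human" | "agent";

interface CommitRecord {
  sha: string;
  authorKind: AuthorKind;
}

// Returns the fraction of commits (0 to 1) attributed to AI agents.
function agentShare(commits: readonly CommitRecord[]): number {
  if (commits.length === 0) {
    return 0;
  }
  const agentCount = commits.filter((c) => c.authorKind === "agent").length;
  return agentCount / commits.length;
}

// Made-up sample data.
const sampleCommits: CommitRecord[] = [
  { sha: "a1", authorKind: "human" },
  { sha: "b2", authorKind: "agent" },
  { sha: "c3", authorKind: "human" },
  { sha: "d4", authorKind: "agent" },
];

console.log(agentShare(sampleCommits)); // 0.5
```

Reporting the share alongside the raw count makes it harder to mistake a rise in agent output for a rise in human effort.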

How Copilot Became the Default Starting Point

From Suggestion Engine to Autonomous Agent

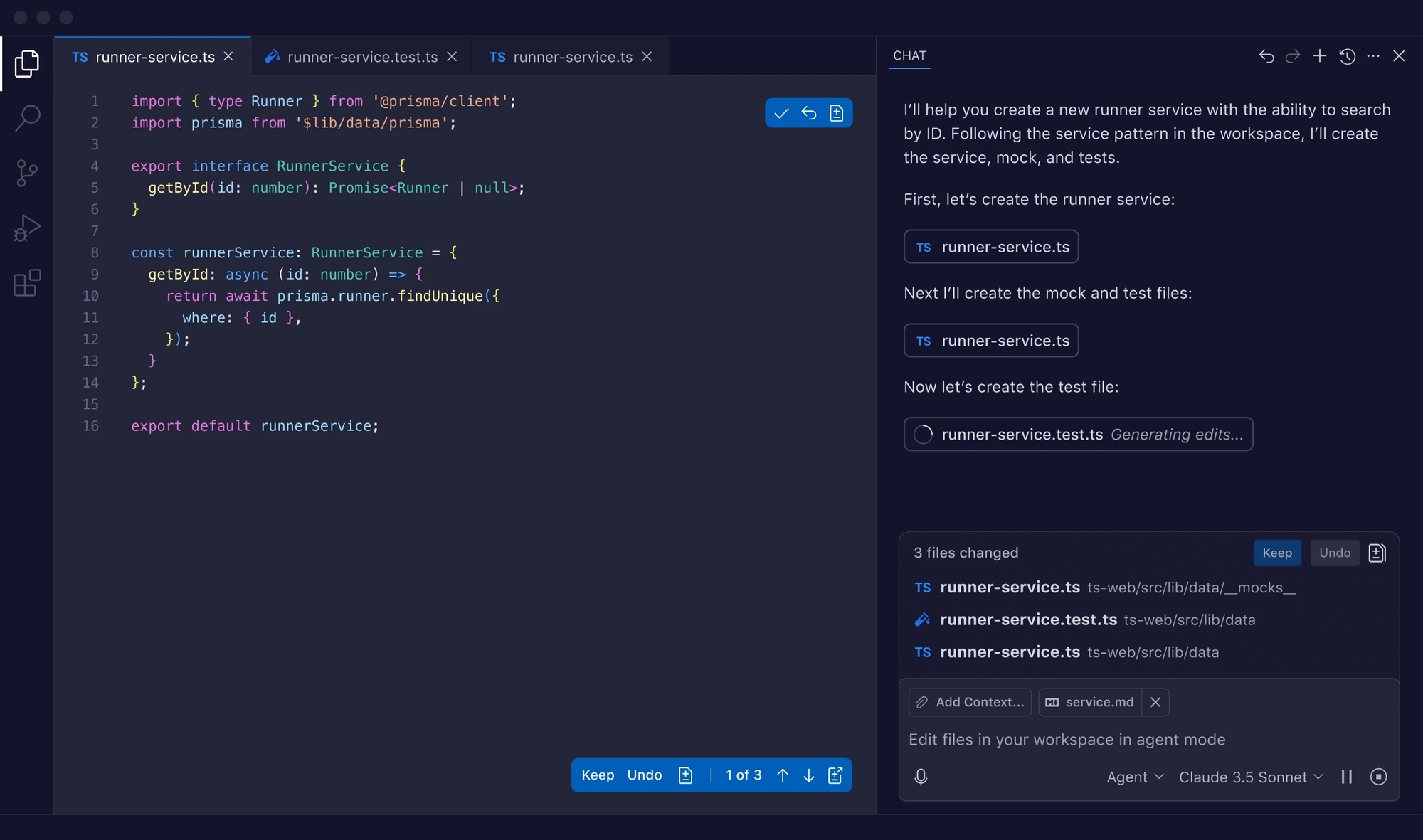

GitHub Copilot launched in 2021 as an inline code completion tool – a sophisticated autocomplete capable of suggesting entire functions based on comments and surrounding context. By 2025, the product had been substantially redesigned around a fundamentally different operational premise: that developers should be able to delegate entire tasks to an AI system, step away from the keyboard, and return to evaluate the results.

At Microsoft Build 2025, GitHub announced that Copilot would include an asynchronous coding agent embedded directly into the platform and accessible from VS Code, creating what the company described as an "Agentic DevOps loop" across its primary development environments. Kate Holterhoff, a senior analyst at RedMonk, described the shift as moving Copilot from an in-editor assistant to a genuine collaborator in the development process – one capable of being assigned implementation tasks, freeing developers to concentrate on higher-order architectural and strategic work.

The adoption data from that transition carries significant implications that extend well beyond market share. Eighty percent of new developers on GitHub now use Copilot within their first week on the platform. For an entire incoming cohort of developers, AI assistance is not an add-on to an established workflow. It is the starting condition from which all other development habits and mental models form. The question of what it means to "know how to code" is already shifting – not because anyone has formally declared a new standard, but because the practical definition is being renegotiated at the point of professional entry.

The Market Structure Forming Around GitHub's Advantage

The AI coding assistant market reached $7.37 billion in 2025, growing from $4.91 billion in 2024, with projections indicating the market will reach $30.1 billion by 2032 at a 27.1 percent compound annual growth rate. GitHub Copilot holds 42 percent market share, while Cursor captured 18 percent within 18 months of its launch – demonstrating that credible alternatives can emerge rapidly, but also that the leader's position is reinforced by structural factors that model quality alone cannot overcome.
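For readers who want to sanity-check projections like these, a compound annual growth rate follows from the two endpoints and the number of years between them. The sketch below applies the standard formula to the market sizes quoted above; the function name is illustrative. With these particular endpoints the implied rate comes out at roughly 22 percent from the 2025 base and roughly 25 percent from the 2024 base, which suggests the quoted 27.1 percent was computed over a different base value or forecast window.

```typescript
// CAGR = (end / start)^(1 / years) - 1
function compoundAnnualGrowthRate(start: number, end: number, years: number): number {
  if (start <= 0 || years <= 0) {
    throw new RangeError("start and years must be positive");
  }
  return Math.pow(end / start, 1 / years) - 1;
}

// Market sizes in billions of US dollars, as quoted in the text.
const fromBase2025 = compoundAnnualGrowthRate(7.37, 30.1, 2032 - 2025);
const fromBase2024 = compoundAnnualGrowthRate(4.91, 30.1, 2032 - 2024);
const growth2024To2025 = compoundAnnualGrowthRate(4.91, 7.37, 1);

console.log((fromBase2025 * 100).toFixed(1) + "% per year from the 2025 base");
console.log((fromBase2024 * 100).toFixed(1) + "% per year from the 2024 base");
console.log((growth2024To2025 * 100).toFixed(1) + "% growth from 2024 to 2025");
```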

GitHub's competitive advantage in this market derives from a position that no standalone AI coding tool can easily replicate: it controls the repository layer where code lives and the pull request layer where code is reviewed and approved. Any AI coding assistant seeking to integrate seamlessly into the software development lifecycle must operate through or alongside infrastructure that GitHub owns. The Copilot coding agent, by contrast, operates with direct awareness of a codebase's structure, branch protection rules, and review policies, a level of integration that external tools can only approximate through API access.

The aggregate trajectory reflects these structural dynamics. Copilot surpassed 20 million users in July 2025, up from 15 million in April 2025 – adding 5 million users in three months. Gartner forecasts that 90 percent of enterprise software engineers will use AI coding assistants by 2028, compared with less than 14 percent in early 2024, indicating that the industry is approaching a threshold at which AI assistance becomes a baseline professional expectation rather than a differentiating capability.

The Language Shift No One Predicted

TypeScript Dethrones Python and JavaScript

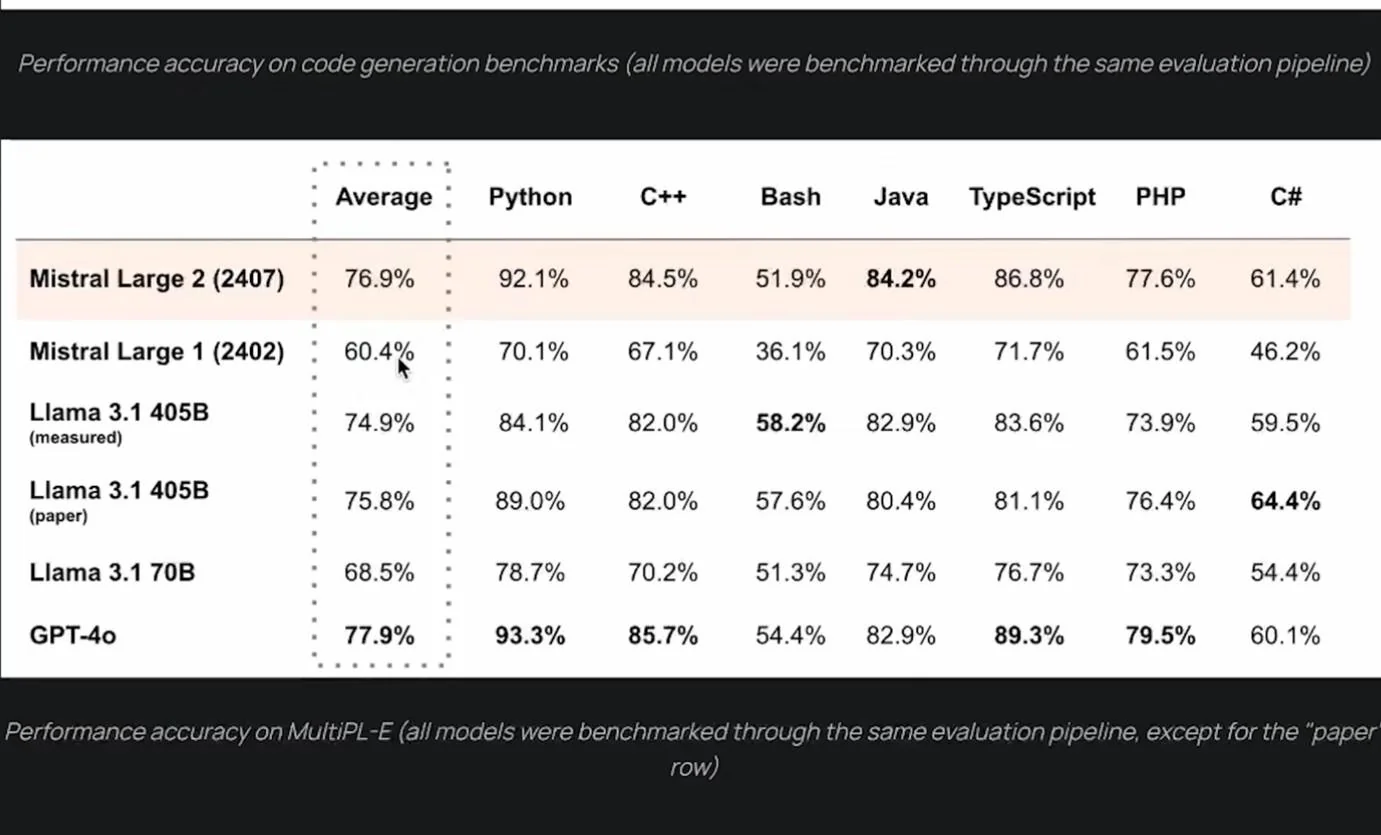

Among the most analytically striking findings in the Octoverse 2025 data is one that does not concern AI tools directly, but rather what AI tools have done to programming language preferences at a systemic level. For the first time in its history, GitHub saw TypeScript overtake both Python and JavaScript in August 2025 to become the most-used language on the platform – a shift that GitHub itself characterized as the most significant language movement in more than a decade.

TypeScript reached 2,636,006 monthly contributors in August 2025, a year-over-year increase of 66.63 percent, representing approximately 1.05 million new contributors acquired in a single year. The scale of that shift is notable not merely as a competitive achievement for Microsoft's typed superset of JavaScript, but for what the underlying mechanism of the shift reveals about how AI is reshaping technology decision-making at a structural level.
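The "approximately 1.05 million" figure follows directly from the contributor count and the growth rate. A short worked check, using only the two numbers quoted above (the constant names are illustrative):

```typescript
// Figures quoted in the text.
const contributorsAugust2025 = 2_636_006;
const yearOverYearGrowth = 0.6663; // 66.63 percent

// current = previous * (1 + growth), so previous = current / (1 + growth).
const contributorsAugust2024 = contributorsAugust2025 / (1 + yearOverYearGrowth);
const netNewContributors = contributorsAugust2025 - contributorsAugust2024;

console.log(Math.round(contributorsAugust2024)); // roughly 1.58 million
console.log(Math.round(netNewContributors));     // roughly 1.05 million
```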

The Convenience Loop: How AI Reselects the Winners

GitHub developer advocate Andrea Griffiths coined the term "convenience loop" to describe the mechanism driving these shifts, and it merits careful unpacking because its implications extend well beyond the TypeScript story.

The mechanism operates through a specific technical advantage that statically typed languages hold in AI-assisted development workflows:

- When a developer declares a variable as a string in TypeScript, the AI immediately eliminates all non-string operations from its consideration, producing more contextually correct and reliable suggestions

- In untyped JavaScript, where any variable could contain any value, the AI must reason through substantially more possibility space, producing correspondingly less reliable output

- A 2025 academic study found that 94 percent of LLM-generated compilation errors were type-check failures – meaning TypeScript's strict type system catches AI mistakes automatically, before they become production problems

- Developers experience this reliability improvement as TypeScript simply feeling easier to work with under AI assistance

The self-reinforcing cycle that results is the convenience loop itself: developer preference for TypeScript generates more TypeScript training data, which makes AI models better at TypeScript, which makes TypeScript feel even more natural to work with under AI assistance, which further concentrates developer preference. The aggregate effect over 2025 is what appears in GitHub's data as a dramatic language shift – but is in structural terms something more significant: AI reselecting the winning technologies, not through deliberate design, but through the cumulative weight of millions of frictionless developer interactions.
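The type-narrowing mechanism behind this loop can be seen in a few lines. The sketch below is illustrative rather than drawn from the report: the function names are hypothetical, and it shows only how a declared type rules out invalid operations at compile time, which is the constraint the argument says AI assistants benefit from.

```typescript
// With a declared parameter type, only string operations are valid here,
// so the compiler (and any tool reading the signature) can rule out the rest.
function shout(message: string): string {
  return message.trim().toUpperCase();
}

// Without a declared type, the value could be anything, and the check that
// TypeScript performs at compile time has to happen at runtime instead.
function shoutUntyped(message: unknown): string {
  if (typeof message !== "string") {
    throw new TypeError("expected a string");
  }
  return message.trim().toUpperCase();
}

console.log(shout("  ship it  ")); // SHIP IT

// Uncommenting the call below fails type checking before the code ever runs:
//   shout(42);
//   error TS2345: Argument of type 'number' is not assignable to
//   parameter of type 'string'.
```

The commented-out call is the kind of mistake the cited study classifies as a type-check failure: it is rejected by the compiler rather than discovered in production.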

The trend extends beyond TypeScript, reinforcing the interpretation that it reflects a broader dynamic rather than TypeScript-specific factors:

| Language | YoY Growth | Driver |
|---|---|---|
| TypeScript | +66% (contributors) | Static typing optimizes AI suggestion reliability |
| Luau (Roblox) | +194% | Gradual typing reduces AI output error rates |
| Typst | +108% | Strong typing in LaTeX alternative |
| LLM SDK usage (public repos) | +178% | Mainstream AI integration across stacks |

The strategic framing that GitHub offered is worth taking seriously: the relevant optimization criterion for technology selection is no longer language aesthetics, ecosystem familiarity, or even raw capability – it is the shared leverage that a language provides to both developers and AI systems working in combination. The languages that survive the next decade will not necessarily be the ones developers love most. They will be the ones that give humans and machines the most productive common ground.

The Security Reckoning Concealed Within the Growth Data

Speed Has Structurally Outpaced Scrutiny

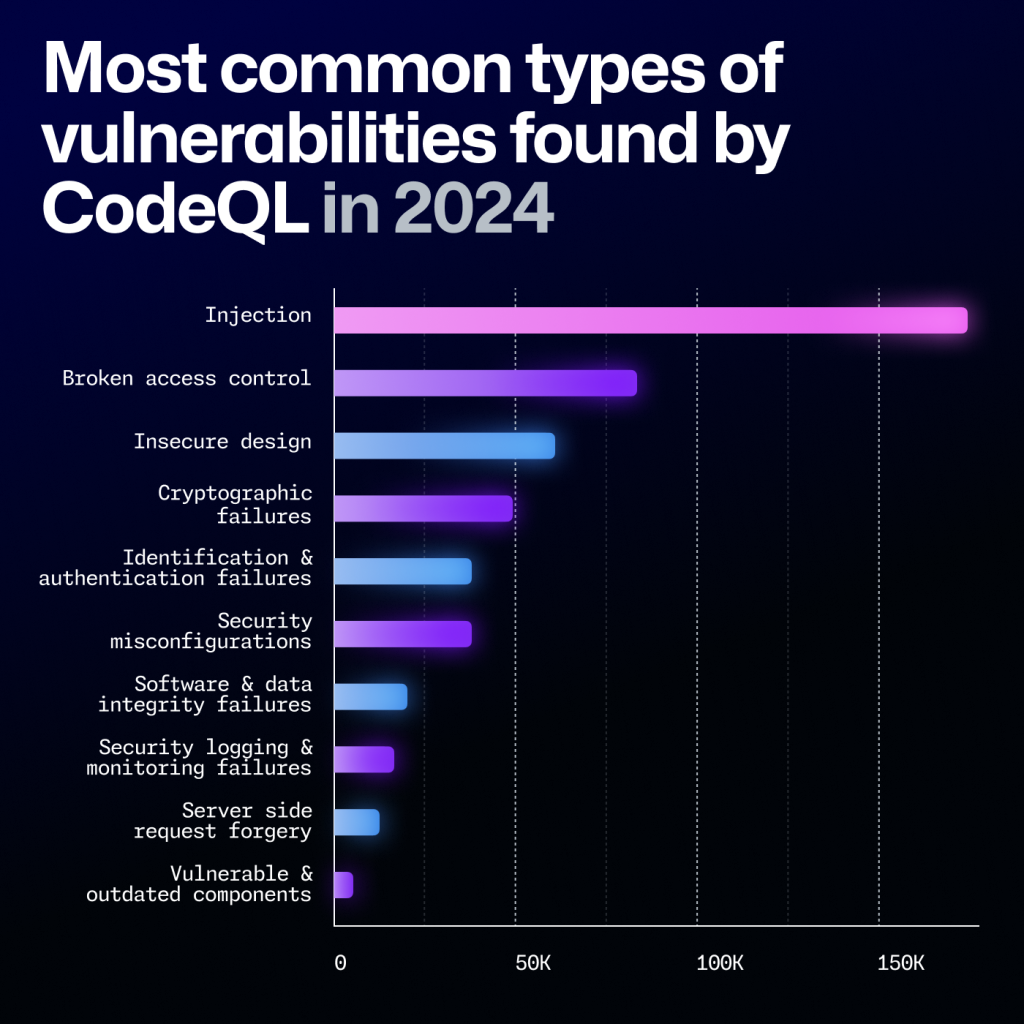

The same Octoverse report that documented record commit volumes also registered a security signal that demands analytical attention proportional to its severity. Broken Access Control overtook Injection as the most common CodeQL security alert in 2025, flagged in over 151,000 repositories, representing a 172 percent year-over-year increase. Much of this growth traces directly to misconfigured permissions in CI/CD pipelines and AI-generated scaffolds that omit critical authentication checks – a pattern consistent with what research on AI code generation would predict.

The research confirms the connection with measurable specificity. Veracode analyzed code produced by over 100 LLMs across 80 real-world coding tasks and found that while generative AI excels at producing syntactically correct, functionally plausible code, it introduces security vulnerabilities in 45 percent of cases. The underlying explanation, as the company's CTO framed it, is structural rather than incidental: developers using AI assistance do not need to specify security constraints in order to receive functional code – which means they often do not, effectively delegating those decisions to models that make the wrong choice nearly half the time.
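An omitted authorization check is easy to miss precisely because the code still works. The sketch below contrasts a lookup that returns data to any caller with one that verifies ownership first. All types, names, and data are hypothetical and are not taken from any real framework or from the studies cited.

```typescript
// Hypothetical types and data for illustration; not from any real framework.
interface Account {
  id: string;
  isAdmin: boolean;
}

interface Invoice {
  id: string;
  ownerId: string;
  totalCents: number;
}

const invoicesById: Record<string, Invoice> = {
  "inv-1": { id: "inv-1", ownerId: "alice", totalCents: 1200 },
};

// Typical scaffold: functional, but returns any invoice to any caller.
function getInvoiceUnchecked(invoiceId: string): Invoice | undefined {
  return invoicesById[invoiceId];
}

// Same lookup with an explicit ownership check before data is returned.
function getInvoice(requester: Account, invoiceId: string): Invoice {
  const invoice: Invoice | undefined = invoicesById[invoiceId];
  if (invoice === undefined) {
    throw new Error("not found");
  }
  if (invoice.ownerId !== requester.id && !requester.isAdmin) {
    throw new Error("forbidden");
  }
  return invoice;
}

const bob: Account = { id: "bob", isAdmin: false };
console.log(getInvoiceUnchecked("inv-1")?.ownerId); // alice (readable by anyone)
try {
  getInvoice(bob, "inv-1");
} catch (err) {
  console.log((err as Error).message); // forbidden
}
```

Both functions pass a simple "does it return the invoice" test for the owner, which is why a prompt that never mentions authorization can yield the first version without anyone noticing.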

The vulnerability profile of AI-generated code is specific and measurable:

| Vulnerability Category | AI-Generated Code vs. Human-Written |
|---|---|
| Improper password handling | 1.88x more likely |
| Insecure object references | 1.91x more likely |
| Cross-site scripting (XSS) | 2.74x more likely |
| Insecure deserialization | 1.82x more likely |
| Spelling and naming errors | 1.76x less likely (AI advantage) |
| Test coverage issues | 1.32x less likely (AI advantage) |

The pattern that emerges is a technology that reliably improves certain lower-stakes dimensions of code quality while introducing elevated risk in higher-stakes security dimensions – a tradeoff that organizations adopting AI coding tools at scale need to understand and plan for explicitly rather than discovering through production incidents.
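Cross-site scripting, the category with the largest multiplier above, typically comes down to interpolating untrusted input into markup without escaping it. The following minimal sketch shows the difference; the function names are illustrative, and a production application would normally rely on its templating library's built-in escaping rather than a hand-written helper.

```typescript
// Escapes the five characters that are significant in HTML text and attributes.
// The ampersand is replaced first so later replacements are not double-escaped.
function escapeHtml(untrusted: string): string {
  return untrusted
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;")
    .replace(/'/g, "&#39;");
}

// Unsafe: user input is interpolated into markup as-is.
function renderCommentUnsafe(comment: string): string {
  return "<p>" + comment + "</p>";
}

// Safer: user input is escaped before it reaches the markup.
function renderComment(comment: string): string {
  return "<p>" + escapeHtml(comment) + "</p>";
}

const payload = "<img src=x onerror=alert(1)>";
console.log(renderCommentUnsafe(payload)); // <p><img src=x onerror=alert(1)></p>
console.log(renderComment(payload));       // <p>&lt;img src=x onerror=alert(1)&gt;</p>
```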

The Process Problem: Output Velocity Exceeding Review Capacity

OX Security's analysis of over 300 software repositories, 50 of which used AI coding tools such as Copilot, Cursor, and Claude, provides a more nuanced diagnosis of where the risk actually originates. AI-generated code is not, in aggregate, more vulnerable per line than human-written code. The risk is a function of velocity. The bottlenecks that historically slowed code from initial implementation to production deployment – code review, debugging sessions, team-based quality assessment – have been substantially compressed or eliminated. Software that previously took months to build can now be completed and deployed in days, meaning vulnerable code reaches production environments before anyone has had adequate opportunity to examine or harden it.

As Eyal Paz, VP of Research at OX Security, stated: "Functional applications can now be built faster than humans can properly evaluate them. Vulnerable systems now reach production at unprecedented speed, and proper code review simply cannot scale to match the new output velocity."
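The scaling problem in that quote can be illustrated with a toy queue model: if pull requests arrive faster than reviewers can process them, the unreviewed backlog grows without bound. The sketch below uses made-up numbers and a deliberately simple model (fixed daily arrivals and fixed daily review capacity); it is not derived from OX Security's data.

```typescript
// Simulates a review queue: each day `arrivalsPerDay` pull requests arrive and
// at most `reviewCapacityPerDay` are reviewed. Returns the backlog after each day.
function simulateReviewBacklog(
  arrivalsPerDay: number,
  reviewCapacityPerDay: number,
  days: number
): number[] {
  const backlogByDay: number[] = [];
  let backlog = 0;
  for (let day = 0; day < days; day++) {
    backlog += arrivalsPerDay;
    backlog -= Math.min(backlog, reviewCapacityPerDay);
    backlogByDay.push(backlog);
  }
  return backlogByDay;
}

// Hypothetical numbers: review capacity is fixed at 10 pull requests per day.
console.log(simulateReviewBacklog(8, 10, 5));  // [0, 0, 0, 0, 0]
console.log(simulateReviewBacklog(15, 10, 5)); // [5, 10, 15, 20, 25]
```

Below capacity the queue stays empty; above it, the backlog grows by the difference every day, which is the point at which teams either skip review or slow delivery.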

A counterintuitive finding from a multi-institutional research collaboration adds a further dimension to the security analysis, with significant implications for organizations attempting to use AI tools to improve their security posture. Researchers from the University of San Francisco, the Vector Institute for Artificial Intelligence, and the University of Massachusetts Boston analyzed 400 code samples across 40 iterative improvement rounds using prompting strategies that explicitly instructed LLMs to fix vulnerabilities and improve security. The result was a 37.6 percent increase in critical vulnerabilities after just five iterations. The assumption that AI systems can remediate their own security weaknesses through successive refinement appears to be empirically unsupported – and the act of attempting that remediation may actively worsen the underlying exposure.

The implication is not that AI coding tools should be abandoned. It is that the organizational security infrastructure surrounding these tools – the review processes, the security testing pipelines, the architectural governance frameworks – has not kept pace with their deployment, and the gap is manifesting as measurable and increasing vulnerability exposure at scale.

What Agentic Development Actually Looks Like in Practice

The Structural Shift From Assistance to Delegation

The Copilot coding agent represents a categorically different product from the suggestion-based tool that developers adopted over the preceding three years, and the distinction has substantial implications for how software teams are organized, how quality is maintained, and how risk is managed.

The contrast between the two interaction models is illuminating:

| Dimension | Inline Suggestion Tool | Autonomous Coding Agent |
|---|---|---|
| Developer role | Active author, evaluating suggestions in real time | Task specifier and output reviewer |
| AI role | Sophisticated autocomplete, offering options | Autonomous implementer, producing pull requests |
| Control pattern | Continuous -- developer accepts or rejects each suggestion | Deferred -- developer evaluates completed implementation |
| Cognitive demand | Sustained engagement throughout coding session | Front-loaded specification, back-loaded review |
| Failure mode | Isolated bad suggestion accepted without review | Architectural or security flaw embedded in complete implementation |
| Analogous management model | Pair programming with a capable junior developer | Delegating a task to an independent contractor |

When a developer assigns an issue to the Copilot coding agent, the interaction structure fundamentally inverts relative to prior AI coding experiences. The agent works autonomously in a GitHub Actions-powered environment, completes development tasks assigned through GitHub issues or Copilot Chat prompts, and creates pull requests with the results for human review and approval. The developer's primary cognitive contribution shifts from writing code to writing issues that accurately describe what needs to be accomplished – and then evaluating whether the agent's implementation actually satisfies those requirements.
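The delegation workflow described here can be sketched as a small state machine in which every step is automatic except the last, which requires a human decision. The stage names follow the sequence given in this article; the type and function names are hypothetical and do not correspond to any GitHub API.

```typescript
// Stage names follow the workflow described in the text; the types and
// functions are hypothetical, not part of any GitHub API.
type AgentStage =
  | "issue_assigned"
  | "repository_cloned"
  | "codebase_analyzed"
  | "draft_pr_opened"
  | "solution_implemented"
  | "review_requested"
  | "merged"
  | "changes_requested";

type ReviewDecision = "approve" | "request_changes";

// Stages the agent moves through on its own, in order.
const autonomousStages: AgentStage[] = [
  "issue_assigned",
  "repository_cloned",
  "codebase_analyzed",
  "draft_pr_opened",
  "solution_implemented",
  "review_requested",
];

// Advances the workflow by one step. Leaving "review_requested"
// requires an explicit human decision.
function nextStage(current: AgentStage, decision?: ReviewDecision): AgentStage {
  if (current === "review_requested") {
    if (decision === undefined) {
      throw new Error("human review decision required");
    }
    return decision === "approve" ? "merged" : "changes_requested";
  }
  const next = autonomousStages[autonomousStages.indexOf(current) + 1];
  if (autonomousStages.indexOf(current) === -1 || next === undefined) {
    throw new Error("no automatic transition from " + current);
  }
  return next;
}

// Runs the autonomous part of the workflow up to the human review gate.
function runToReview(start: AgentStage): AgentStage {
  let stage = start;
  while (stage !== "review_requested") {
    stage = nextStage(stage);
  }
  return stage;
}

const atReview = runToReview("issue_assigned");
console.log(atReview);                       // review_requested
console.log(nextStage(atReview, "approve")); // merged
```

Modeling the review gate as the only transition that takes a `decision` argument makes the supervisory role explicit: nothing reaches "merged" without a human choosing to approve it.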

The practical experience, for teams that have adopted agentic workflows, is closer to managing a capable but context-limited junior developer than to using a software tool. That analogy carries important implications: the attendant responsibilities around clear specification, thorough review, and quality oversight that junior developer management implies do not disappear because the "developer" in question is an AI system. They become more important, because the rate of output from the AI agent substantially exceeds what any human team member could produce.

Open Source Governance Under Pressure

The same velocity dynamics that accelerate individual developer productivity are creating structural strain in open source communities that the GitHub data illuminates with some clarity. The platform's record contribution volumes – 1.12 billion contributions to public repositories in 2025, with 43.2 million pull requests merged monthly – become difficult to sustain when a significant and growing share of that volume requires extensive rework or outright rejection before it can be incorporated.

Some maintainers have already begun deploying AI tools defensively, using them to triage incoming issues, detect duplicate submissions, and handle routine labeling tasks in response to contribution volumes that exceed what human review capacity can absorb. The approach is a reasonable adaptation, but it is also a stopgap measure that addresses the symptom without resolving the underlying structural tension.

The phenomenon has been described, borrowing a phrase from the early history of internet communities, as an "Eternal September" problem: when the friction of creating and submitting a pull request approaches zero, the social contract embedded in open source contribution – the implicit commitment to quality, responsiveness, and community engagement that makes open source projects sustainable – comes under pressure from volume alone. That is a governance and cultural challenge without an obvious technical resolution, and the 2025 data suggests it is intensifying rather than stabilizing.

The Productivity Paradox: What the Numbers Actually Prove

The Individual-Level Case Is Compelling

The productivity argument for AI coding tools is supported by evidence that is genuinely compelling at the level of individual task performance, and simultaneously complicated by evidence that is equally credible at the level of team and organizational outcomes.

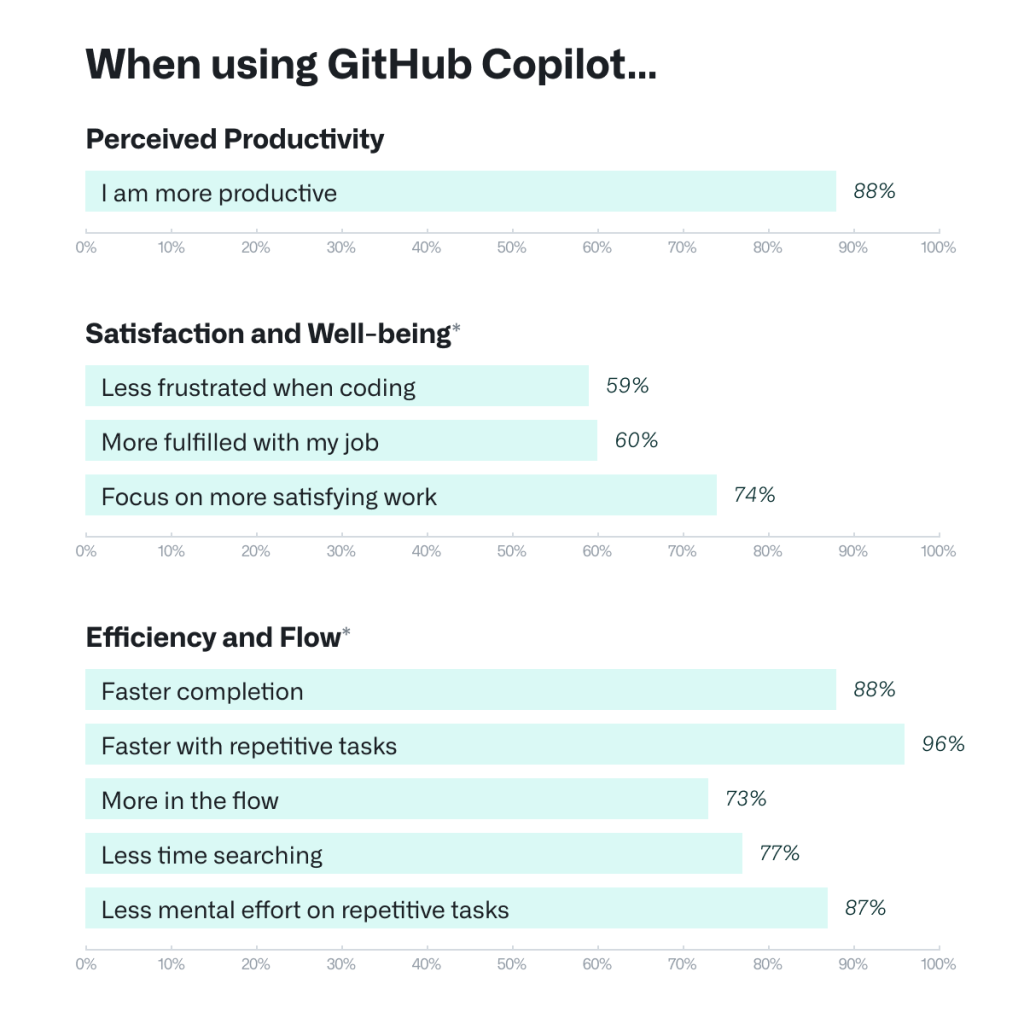

At the individual level, the data is favorable. Research involving 4,800 developers found that tasks are completed 55 percent faster with GitHub Copilot. Pull request cycle time decreased from 9.6 days to 2.4 days among Copilot users, and successful build rates increased 84 percent. Those figures, evaluated in isolation, represent productivity gains of a kind that would historically have required years of process optimization or significant headcount increases to achieve.
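The cycle-time figures translate into a relative reduction and a speed-up factor with simple arithmetic. A short worked check using only the two values quoted above (the constant names are illustrative):

```typescript
// Pull request cycle times in days, as quoted in the text.
const cycleTimeBeforeDays = 9.6;
const cycleTimeAfterDays = 2.4;

// Relative reduction in cycle time: (before - after) / before.
const cycleTimeReduction = (cycleTimeBeforeDays - cycleTimeAfterDays) / cycleTimeBeforeDays;

// Equivalent speed-up factor: before / after.
const speedUpFactor = cycleTimeBeforeDays / cycleTimeAfterDays;

console.log((cycleTimeReduction * 100).toFixed(0) + "%"); // 75%
console.log(speedUpFactor.toFixed(1) + "x");              // 4.0x
```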

The System-Level Picture Is More Ambiguous

The system-level picture, however, introduces significant complications that the more optimistic narratives around AI-assisted development tend to elide. Google's DORA report found that developers who adopted AI coding tools estimated their individual effectiveness improved by approximately 17 percent – while software delivery instability increased by nearly 10 percent over the same period. Perhaps more significantly, 60 percent of developers work in teams that suffer from either lower development speeds, greater delivery instability, or both – suggesting that individual-level gains frequently do not translate into organizational-level improvements.

The explanation for this apparent contradiction lies in the misalignment between what AI tools optimize for and what software organizations fundamentally need:

| Optimization Dimension | What AI Tools Deliver | What Organizations Need |
|---|---|---|
| Code output velocity | High -- dramatic acceleration | Moderate -- balanced with review capacity |
| Syntactic correctness | High -- reliable and improving | High -- necessary but not sufficient |
| Functional plausibility | High -- code that runs | High -- but must be maintainable, not just functional |
| Security consideration | Low -- frequently omitted | Critical -- non-negotiable in production |
| Architectural coherence | Low -- locally correct, globally inconsistent | Essential for long-term maintainability |
| Test coverage | Moderate -- AI can generate tests | Must match the complexity of what is being tested |

The consequence of this misalignment is measurable in the GitHub data itself. The average developer checked in 75 percent more code in 2025 than in 2022. More code is not, in itself, better software – it represents more surface area for bugs, greater maintenance burden, and an accumulated base of technical debt that will require remediation over years rather than weeks. As OX Security framed it, the development of organizational security practices and review infrastructure has not kept pace with the deployment velocity that AI tools enable, and the gap is growing.

The most honest assessment of the current evidence is that AI coding tools provide genuine, measurable acceleration for well-specified individual development tasks, while creating measurable systemic risk when the organizational infrastructure surrounding those tasks – review processes, security testing, architectural governance, quality standards – does not scale proportionally to accommodate the increased output velocity they generate.

What This Means for the Developer Profession

A Question the Industry Has Not Formally Answered

The volume and trend data from GitHub's Octoverse 2025 report collectively raise a question that no major institution has formally resolved, but that individual developers, engineering managers, and computer science educators are already navigating in practice: what does it mean to be a software developer in a professional environment where AI systems can write, review, test, and deploy code?

The answer is not that developers become irrelevant. Demand for software continues to grow faster than the supply of people capable of specifying, evaluating, and taking organizational responsibility for what gets built. The expanded developer population enabled by AI assistance reflects the barrier to entry shifting, not the underlying need for human judgment disappearing.

The skills that remain distinctly human and are correspondingly increasing in professional value include:

- Systems thinking: the ability to understand how individual components interact at scale, where architectural decisions will create friction in production over years rather than weeks, and how to design for long-term maintainability rather than immediate functional correctness

- Security judgment: the capacity to understand not merely whether a piece of code works as specified, but what threat model it operates under, what assumptions it encodes, and where those assumptions may fail under adversarial conditions

- Specification quality: the ability to translate ambiguous business requirements into precise technical tasks that an AI agent can execute faithfully, and to evaluate whether the resulting implementation actually satisfies the underlying intent

- Architectural governance: the organizational capability to identify when AI-generated code is locally correct but globally problematic – when it solves the immediate problem in a way that will complicate the next ten problems

The developers whose value is increasing under the current trajectory are those who understand that AI-generated code that passes tests and functions as specified may nonetheless be insecure, architecturally incoherent, or unmaintainable at scale. The developers whose contributions AI tools most directly commoditize are those whose primary value was producing syntactically correct implementations of clearly specified tasks – precisely the dimension of software development where AI performs most reliably.

The Road Ahead: What 2026 Signals for the Next Phase

Productive Imbalance, and the Infrastructure Still Being Built

The transition from AI as a suggestion tool to AI as an autonomous agent is not a future development that organizations can plan toward at their own pace. It is already underway, documented in the Octoverse data, and proceeding at a pace that is outrunning the security, governance, and quality assurance infrastructure necessary to sustain it responsibly.

The current state is one of productive imbalance: the tools are ahead of the practices. Organizations are experiencing the genuine benefits of dramatically accelerated development output while the costs of that acceleration – security debt, architectural inconsistency, open source governance strain, and the unresolved challenge of review processes that cannot scale to match AI output velocity – are only beginning to accumulate at a scale demanding systematic organizational response.

Gartner's projection that 90 percent of enterprise software engineers will use AI coding assistants by 2028 implies that the industry has approximately two years to build the organizational and governance infrastructure necessary to manage agentic development responsibly. If that infrastructure does not materialize proportionally, the result will be a substantially larger, more distributed, and more difficult-to-remediate vulnerability surface than exists today – one built at record pace precisely because the tools that built it were optimized for speed rather than security.

GitHub's Octoverse 2025 concluded with a framing that is accurate and worth preserving: the story of 2025 is not AI versus developers. It is about the evolution of developers in the AI era – as orchestrators of agents, as architects of language ecosystems, and as stewards of the open source infrastructure that the rest of the software economy depends upon.

That framing is forward-looking and fundamentally correct. It is also incomplete without acknowledging that orchestrating agents, governing language ecosystems, and ensuring the quality of machine-generated code require skills, organizational disciplines, and institutional structures that the profession is still actively developing. The nearly one billion commits of 2025 represent both an extraordinary achievement in scale and an unresolved question about quality – and the answer to that question will shape the reliability and trustworthiness of the systems that the next generation of AI-assisted developers builds.

Frequently Asked Questions

How many commits did GitHub record in 2025, and what drove the growth?

GitHub's Octoverse 2025 report recorded nearly one billion commits in 2025, a 25.1 percent increase over the prior year. The growth reflects both a significant expansion in the developer population – over 36 million new developers joined the platform – and a measurable increase in per-developer activity attributable to AI coding tools. The release of GitHub Copilot Free in late 2024 coincided with a step-change in sign-ups that exceeded prior projections. A meaningful and growing share of commit activity reflects agentic AI workflows, in which autonomous agents complete development tasks and create pull requests without direct human code authorship – meaning the one billion figure measures outputs from an increasingly supervisory human role rather than one billion discrete human creative acts.

What is the GitHub Copilot coding agent, and how does it differ from earlier AI coding tools?

The Copilot coding agent, announced at Microsoft Build 2025 and made generally available to paid subscribers by late 2025, operates asynchronously and autonomously rather than providing real-time inline suggestions. A developer assigns an issue to the agent, which then clones the relevant repository, analyzes the codebase, implements a proposed solution, and opens a draft pull request for human review and approval. The agent cannot push directly to main or master branches without human authorization. This architecture represents a structural shift from AI as a suggestion-based productivity enhancement to AI as a task-completion system – fundamentally changing the developer's primary role from authoring code to specifying requirements and evaluating AI-generated implementations.

Why did TypeScript overtake Python and JavaScript on GitHub in 2025?

TypeScript surpassed both Python and JavaScript to become the most-used language on GitHub by monthly contributors in August 2025, reflecting a 66 percent year-over-year increase. The shift is attributable in significant part to what GitHub developer advocate Andrea Griffiths calls the "convenience loop": AI coding assistants perform substantially more reliably with statically typed languages, because type declarations give models explicit constraints that reduce suggestion error rates. A 2025 academic study found that 94 percent of compilation errors generated by LLMs were type-check failures, meaning TypeScript's strict type system catches AI mistakes automatically before they become production problems. Developer preference for TypeScript accordingly increases, generating more TypeScript training data, making AI better at TypeScript, and reinforcing the cycle. Python remains dominant for AI and machine learning research workloads, where model training rather than AI-assisted application development is the primary activity.

What are the security risks of AI-generated code, and how severe are they?

Research from Veracode, analyzing code produced by over 100 LLMs across 80 real-world coding tasks, found that AI-generated code introduced security vulnerabilities in 45 percent of cases. Specific vulnerability categories – including broken access control, cross-site scripting (XSS), improper password handling, and insecure object references – appear at significantly elevated rates in AI-generated code compared with human-authored equivalents. GitHub's Octoverse data is consistent with that pattern: Broken Access Control became the most common CodeQL security alert in 2025, flagged in over 151,000 repositories, a 172 percent year-over-year increase. A multi-institutional research study found that asking LLMs to iteratively improve the security of existing code actually increased critical vulnerabilities by 37.6 percent after five rounds of refinement. That counterintuitive result suggests AI self-correction cannot substitute for rigorous human security review.
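Percentage increases above 100 are easy to misread, so a quick arithmetic check may help: a 172 percent increase means the new value is 2.72 times the old one, not 1.72 times. The helper below uses a hypothetical name and treats the reported "over 151,000" as exactly 151,000, so its result is only a rough estimate of the implied prior-year count, not a figure from the report.

```typescript
// Back out the prior-period value from a current value and a percent increase:
//   current = prior * (1 + pct / 100)   =>   prior = current / (1 + pct / 100)
function impliedPriorValue(current: number, percentIncrease: number): number {
  return current / (1 + percentIncrease / 100);
}

// Assumption: 151000 stands in for the reported "over 151,000" repositories.
const impliedPriorRepos = impliedPriorValue(151000, 172);
console.log(Math.round(impliedPriorRepos)); // roughly 55,500 repositories
```

Under these assumptions, the alert went from roughly 55,500 flagged repositories to over 151,000 in a single year.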

How is AI affecting open source development and the sustainability of open source communities?

AI tools have sharply reduced the friction of creating and submitting pull requests, and that reduction is placing significant structural strain on open source maintainers. With record contribution volumes – over 1.12 billion contributions to public repositories in 2025 – maintainers of many projects receive more submissions than human reviewers can meaningfully evaluate. Some projects have adopted AI tools defensively, using them to triage incoming issues, identify duplicate submissions, and handle routine labeling as stopgap measures. The broader concern, framed as an "Eternal September" problem, is that high-volume, low-friction contribution erodes the community norms, governance standards, and implicit social contracts that keep open source projects sustainable over time. That challenge has no clear technical resolution.

What do GitHub's 2025 statistics mean for the future of software developer roles?

The Octoverse 2025 data does not support the conclusion that AI tools are eliminating the need for software developers. Demand for software continues to grow faster than the supply of engineers capable of specifying, evaluating, and taking responsibility for what gets built. What the data does indicate is a meaningful shift in which skills carry the most professional value. Systems thinking, security judgment, architectural governance, the ability to translate ambiguous requirements into precise specifications, and the ability to evaluate AI-generated output critically are all becoming stronger differentiators and more economically valuable, while routine implementation tasks are increasingly delegated to AI agents. Developer compensation is already reflecting this shift, with AI-fluent roles commanding substantially higher salaries than positions defined primarily by code authorship.

How is the global geography of software development changing, and what does it imply for AI tools?

India emerged as the largest contributor base to public and open source projects on GitHub in 2025, having overtaken the United States. More than 5 million new developers in India joined the platform during the year, accounting for over 14 percent of all new accounts globally. GitHub projects that India will have 57.5 million developers by 2030, representing approximately one in three new developers worldwide. Significant growth is also occurring across Africa, Southeast Asia, and Latin America. This geographic redistribution carries direct implications for AI tool design, because the current generation of tools was developed primarily by and for English-language developer communities. As the center of global development activity shifts toward populations for whom English is a second language, making AI coding tools culturally appropriate and contextually reliable will require deliberate investment that has not yet materialized at the necessary scale.

Related Articles