By the time YouTube noticed the pattern, the damage was already spreading.

AI-generated videos showing Doctor Mike, a physician with 14 million subscribers, apparently recommending a "miracle supplement" were circulating on TikTok and bleeding onto YouTube. The clips looked real. The voice sounded right. The face tracked convincingly. None of it was him.

"I've spent over a decade investing in garnering the audience's trust and telling them the truth. It obviously freaked me out," Mikhail Varshavski (Doctor Mike) told CNBC in December 2025.

His case is not unusual. It is now a baseline risk for anyone who has been on YouTube long enough to have a recognizable face and voice in a substantial amount of publicly accessible footage. That footage is the raw material that AI video tools require to build a convincing replica, and those tools have become significantly easier to use.

YouTube has spent the past two years building a layered response: mandatory disclosure rules, automated detection systems, likeness protection tools for creators, and a policy framework that is still evolving quickly enough that even experienced creators struggle to keep up with it.

Understanding that framework matters whether you are a viewer trying to evaluate what you are watching, a creator concerned about your own likeness, or someone using AI tools in your production process.

The Three Problems YouTube Is Actually Trying to Solve

Deepfakes on YouTube are not a single category of harm. The platform is dealing with several distinct problems simultaneously, each driven by different actors with different motivations.

1. Scam content using creator likenesses

The most commercially motivated form involves AI-generated videos using a creator's face and voice to promote fraudulent products, supplements, investment schemes, or giveaways. These clips are designed to be convincing enough that a viewer who trusts the real creator will follow through. A CBS News investigation found that complaints about deepfake misuse of creator likenesses more than doubled in 2025.

2. Political manipulation

During Indonesia's 2024 election, fabricated videos of presidential candidates circulated widely across platforms including YouTube, documented by CNN and the BBC. YouTube responded by tightening its rules around synthetic political content, establishing that any AI-altered portrayal of a real individual requires disclosure and may still face reduced distribution even when labeled.

3. High-volume AI spam content

From around 2023 onward, YouTube was flooded with AI-generated content that was not deepfaking any specific individual but was entirely synthetic: AI narration over stock footage, algorithmically assembled true crime stories presented as real, AI-voiced fake news with the visual language of journalism.

One channel, True Crime Case Files, had over 83,000 subscribers and was removed entirely after posting more than 150 videos narrating AI-generated murder stories as fact. One falsely described a crime in Colorado and generated actual local media inquiries.

These three problems require different solutions. The policy and enforcement infrastructure YouTube has built since 2024 addresses all three, with varying degrees of effectiveness.

What YouTube's Policies Actually Say

YouTube does not ban AI-generated content. The platform treats AI as a legitimate creative tool. The relevant question, as YouTube frames it, is not whether AI was used, but whether the content could reasonably mislead viewers into thinking a real person said or did something they did not.

What requires disclosure

The mandatory disclosure rule, introduced in March 2024 and enforced more aggressively from 2025 onward, applies to:

- AI voice clones of real people

- Deepfake face swaps or realistic facial manipulation

- Synthetic performances where a person appears to say or do something they never recorded

- AI-generated scenes depicting real-world events that did not occur

The rule applies to videos, Shorts, and livestreams.

When disclosure is required, creators activate a toggle in YouTube Studio during upload. The platform then automatically adds an "Altered or synthetic content" banner beneath the video player. Viewers can click through for an explanation of what synthetic elements are present.

What does not require disclosure

- AI used for scriptwriting or brainstorming

- Thumbnail generation

- Audio cleanup or color correction

- Background removal

- Standard editing enhancements

- Obviously animated or stylized AI art

- Clearly fantastical scenarios

The test is always whether the content realistically depicts real people in situations they did not participate in.
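As a rough illustration, the disclosure test can be written as a small checklist function. This is a minimal sketch that only restates the two lists above. The `VideoTraits` class, its field names, and `needs_disclosure` are hypothetical and do not correspond to any YouTube API or to the actual YouTube Studio toggle.

```python
from dataclasses import dataclass


@dataclass
class VideoTraits:
    """Hypothetical self-assessment of a video (illustrative field names)."""
    clones_real_voice: bool = False
    realistic_face_manipulation: bool = False
    synthetic_performance_of_real_person: bool = False
    fabricated_real_world_event: bool = False
    clearly_stylized_or_fantastical: bool = False


def needs_disclosure(video: VideoTraits) -> bool:
    """Return True if the video matches a category listed as requiring disclosure."""
    # Obviously animated, stylized, or fantastical content is listed as exempt.
    if video.clearly_stylized_or_fantastical:
        return False
    # Any one realistic synthetic element is enough to require the label.
    return any([
        video.clones_real_voice,
        video.realistic_face_manipulation,
        video.synthetic_performance_of_real_person,
        video.fabricated_real_world_event,
    ])


# AI used only for scripting or audio cleanup sets none of the flags.
print(needs_disclosure(VideoTraits()))
print(needs_disclosure(VideoTraits(clones_real_voice=True)))
```

Production-assistance uses such as scriptwriting or thumbnails leave every flag unset, so the function returns `False` for them. Borderline cases still need human judgment about what a viewer would reasonably take to be real.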

The July 2025 inauthentic content update

Alongside disclosure, YouTube updated its inauthentic content policy in July 2025, tightening what counts as spam or low-quality content. The update specifically targeted channels uploading high volumes of AI-generated content with no meaningful human contribution:

- Identical-sounding narration across dozens of videos

- Recycled scripts with minimal variation

- AI-generated imagery without editorial context

Content that fails the "significantly original and authentic" test is ineligible for monetization, and channels exhibiting spam-farm patterns face suspension.
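YouTube has not published how it measures script recycling. Purely to illustrate what "recycled scripts with minimal variation" can look like in measurable terms, the sketch below compares two transcripts using Jaccard similarity over three-word shingles. The choice of technique, the shingle size, and the function names are all assumptions made for illustration.

```python
def shingles(text: str, n: int = 3) -> set:
    """Split text into overlapping n-word sequences (lowercased)."""
    words = text.lower().split()
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def jaccard_similarity(a: str, b: str, n: int = 3) -> float:
    """Share of n-word shingles two texts have in common, from 0.0 to 1.0."""
    set_a, set_b = shingles(a, n), shingles(b, n)
    if not set_a and not set_b:
        # Both texts are shorter than n words, so there is nothing to compare.
        return 0.0
    return len(set_a & set_b) / len(set_a | set_b)


script_one = "the suspect was last seen leaving the diner at midnight"
script_two = "the suspect was last seen leaving the motel at midnight"
print(round(jaccard_similarity(script_one, script_two), 2))
```

A score close to 1.0 across dozens of uploads would suggest near-identical scripts, while independently written scripts usually share few three-word sequences. Any real enforcement system would combine many more signals than this one.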

The Likeness Detection System

The most technically ambitious piece of YouTube's response is its likeness detection system, built on the same infrastructure as Content ID but applied to faces and voices rather than audio fingerprints.

How it works

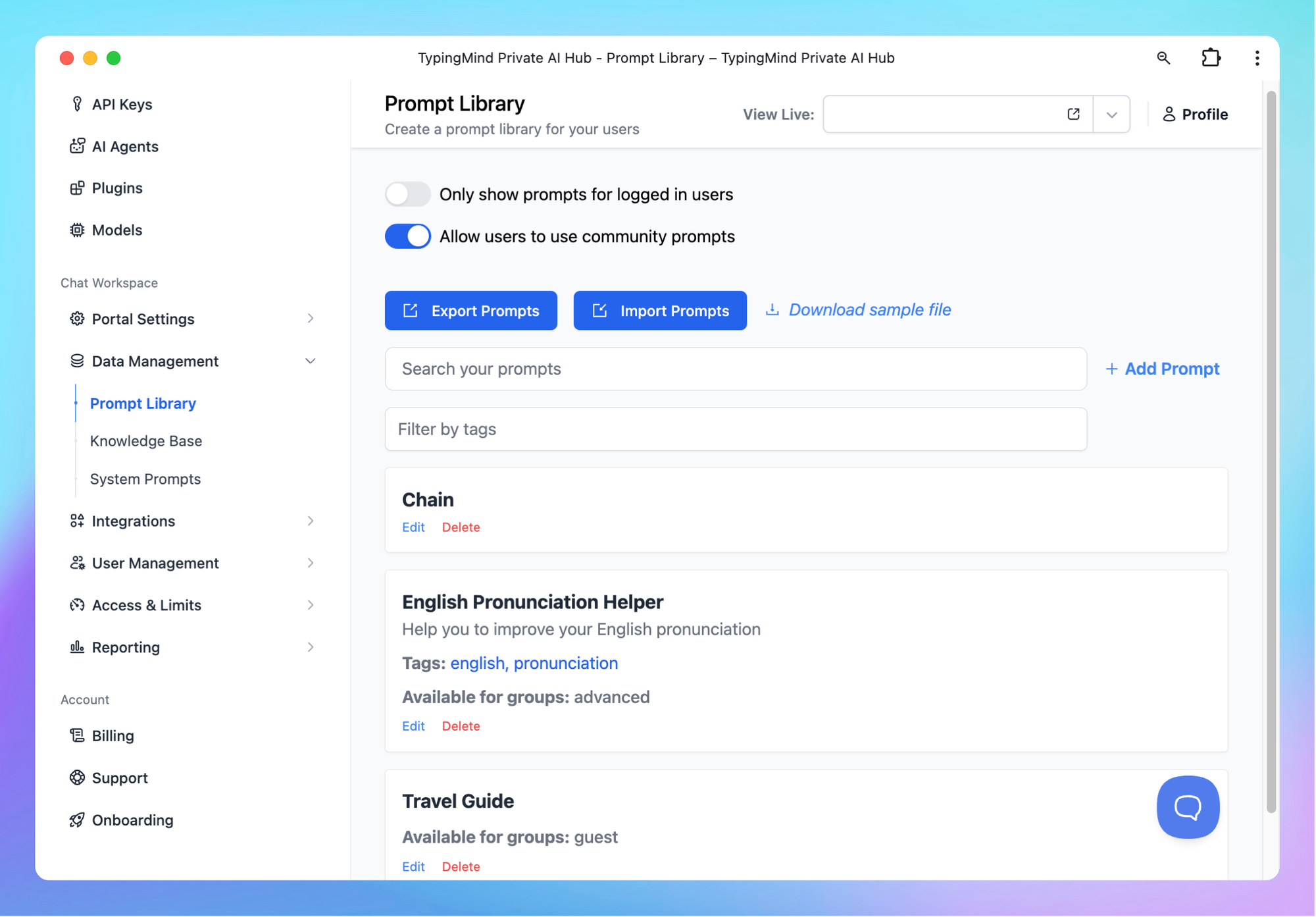

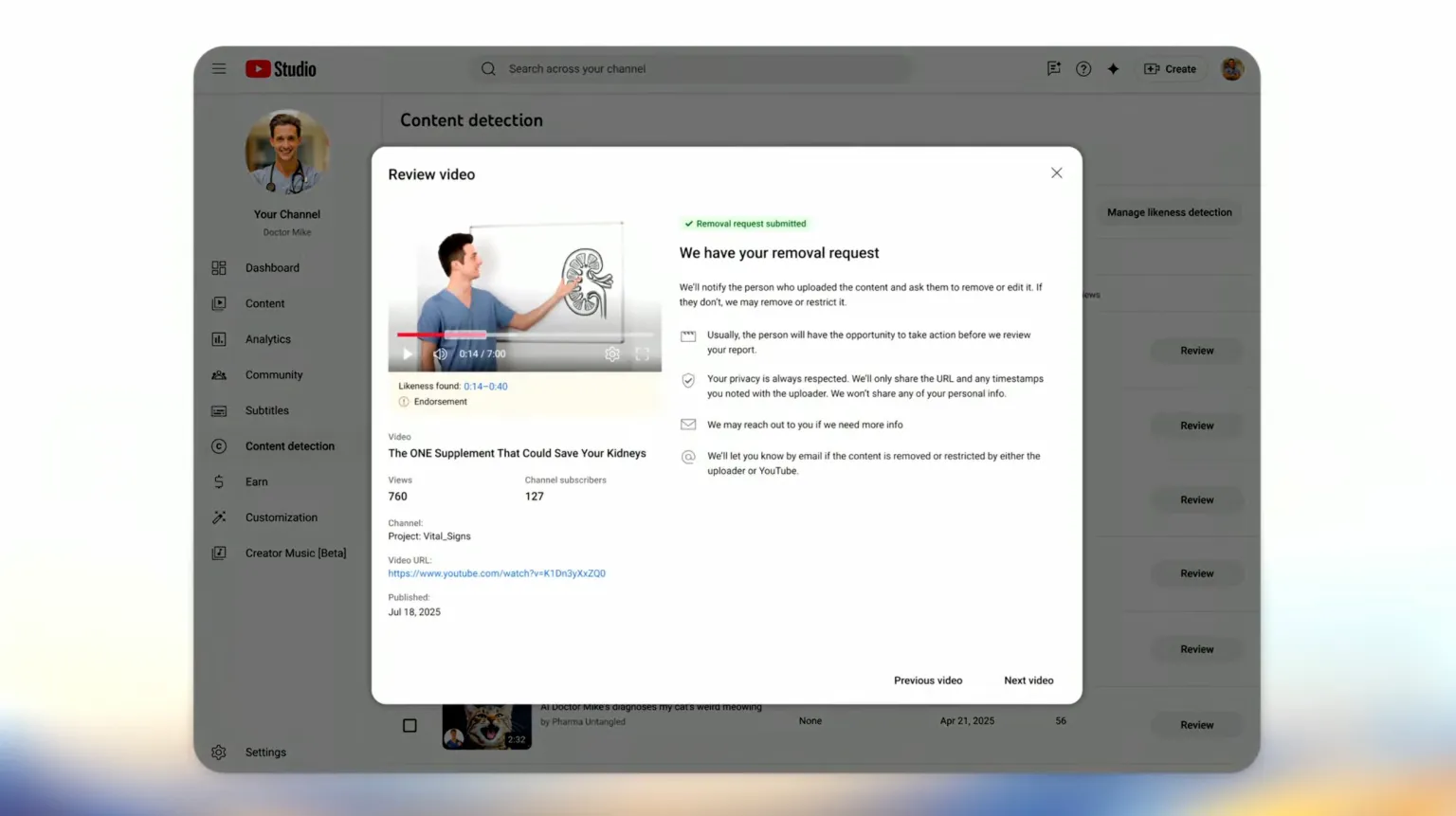

YouTube launched the likeness detection tool in October 2025 for YouTube Partner Program creators, expanding it to journalists, government officials, and political candidates in March 2026.

Enrollment process:

- Go to YouTube Studio, "Likeness" tab

- Complete Google's identity verification using a government-issued ID and a short selfie video

- YouTube builds a face template from the reference data

- The system automatically scans all uploaded content across the platform

- Frames, thumbnails, voice patterns, and on-screen metadata are compared against the verified reference

- When a potential match is found, the creator is notified and can request removal

Detection does not guarantee removal. YouTube has stated it will preserve content in the public interest, including parody and satire, even when it uses a real person's likeness. The distinction between a scam deepfake and a legitimate satirical video is a judgment call the platform makes case by case.
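YouTube has not disclosed how its matching works internally. As a general illustration of comparing uploads against a verified reference, the sketch below assumes that faces or voices have already been converted into numeric vectors (embeddings) and flags uploads whose vector lies close to the reference. The embeddings, the 0.9 threshold, and the function names are hypothetical.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def flag_potential_matches(reference, uploads, threshold=0.9):
    """Return ids of uploads whose embedding is close to the reference template.

    A flag is only a potential match for the enrolled creator to review;
    it is not a removal decision.
    """
    return [
        video_id
        for video_id, embedding in uploads.items()
        if cosine_similarity(reference, embedding) >= threshold
    ]


reference_template = [1.0, 0.0, 0.0]   # made-up reference embedding
uploads = {
    "video_a": [0.9, 0.1, 0.0],        # similar to the reference
    "video_b": [0.0, 1.0, 0.0],        # unrelated
}
print(flag_potential_matches(reference_template, uploads))
```

The threshold captures the trade-off described above: a lower value catches more impersonations but also flags more legitimate videos, which is one reason a flagged match leads to creator review and a case-by-case decision instead of automatic removal.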

The privacy controversy

Because enrollment requires submitting biometric data to Google, critics including intellectual property experts raised concerns about how that data may be used beyond detection. YouTube told CNBC that Google has never used creators' biometric data to train AI models, though it declined to change the underlying policy language. The terms of service as written leave the door open for future uses not explicitly prohibited.

Participation is voluntary. Creators who opt out are not scanned, and the system removes their data within 24 hours of withdrawal. However, non-participation means non-protection: a creator who does not enroll cannot use the tool to identify or request removal of deepfakes of themselves.

What This Means for Creators

If you use AI in your production

The practical implications are manageable, provided disclosure is treated as a standard part of the workflow.

Does not require disclosure:

- Using ChatGPT to draft a script

- Generating thumbnails with Midjourney

- Cleaning up audio with AI processing tools

- AI translation for a dubbed version of your own voice (when not mimicking another person)

Requires the disclosure label:

- A video in which a public figure appears to make statements they never made

- An AI voice clone of yourself that sounds indistinguishable from your natural voice, used for localization

- Realistic footage of events that did not occur

Penalties for non-disclosure:

- Limited ad serving

- Demonetization

- Age gates

- Reduced distribution

- Removal from the YouTube Partner Program

Promotional content faces additional scrutiny. If a creator appears to endorse a product via AI-generated likeness without consent and disclosure, the consequences escalate beyond standard penalties.

If your likeness is being used without your permission

The likeness detection system is the most direct protective tool available on the platform. The privacy trade-off of submitting a government ID and biometric video to Google is real and worth weighing individually. For creators in health, finance, or other high-trust niches where impersonation is directly used to harm their audience, the calculation often favors enrollment.

What This Means for Viewers

YouTube's disclosure labels and inauthentic content enforcement have created a more visible, if imperfect, transparency layer. The "Altered or synthetic content" label appears at the watch page level when creators have disclosed AI use, without requiring the viewer to seek it out.

The honest limitation: the system depends substantially on creator compliance. If a creator uses AI to fabricate statements by a public figure and simply does not disclose it, catching the violation falls to YouTube's automated detection. That detection is improving but not yet comprehensive. Sophisticated deepfakes designed to evade automated systems will still circulate until they are reported or otherwise surfaced.

The practical guidance for viewers has not changed fundamentally:

- Content making extraordinary claims about what a public figure said or did should be verified through other sources before being shared

- The presence of an "Altered or synthetic content" label is useful but not authoritative

- The absence of a label does not confirm the content is authentic

The Broader Policy Picture

YouTube has been explicit that technology alone is not sufficient. The platform publicly supports the NO FAKES Act, reintroduced in 2025 by Senators Chris Coons and Marsha Blackburn, which would establish a federal right of publicity covering AI-generated digital replicas of individuals.

As YouTube's VP of creator products, Amjad Hanif, told Axios: "A responsible AI future needs two things: clear legal frameworks like NO FAKES, and the scalable technology to actually enforce those principles on our platform."

The current enforcement system is the technology side of that equation. The legal framework remains incomplete. Without federal legislation establishing individual rights over AI-generated likenesses, platform policies operate in a space where the rules are set entirely by the platform and can change as its commercial interests evolve. That is a structural limitation that no improvement to YouTube's detection system fully addresses.

For now, YouTube's approach represents the most developed response to AI-generated content of any major video platform: the most visible disclosure labeling, the most technically sophisticated detection system, and the most active enforcement of inauthentic content rules. It is also a system still catching up to the pace at which the tools it is trying to regulate are improving.

Frequently Asked Questions

Does YouTube ban AI-generated content?

No. YouTube treats AI as a legitimate creative tool and allows AI-generated content on the platform. What YouTube prohibits is undisclosed synthetic content that could realistically mislead viewers, high-volume automated spam with no meaningful human contribution, and AI-generated content that simulates real people in ways that violate privacy or harassment policies.

What content requires an AI disclosure label on YouTube?

Disclosure is required for realistic content involving AI voice clones of real people, deepfake face swaps or facial manipulation, synthetic performances depicting real people saying or doing things they never did, or AI-generated scenes of real-world events that did not occur. It is not required for AI used in scriptwriting, thumbnails, audio cleanup, color correction, or other production assistance that does not involve simulating real people.

How does YouTube's likeness detection system work?

Creators in the YouTube Partner Program, and since March 2026 journalists, government officials, and political candidates, can enroll by submitting a government ID and a selfie video through YouTube Studio's "Likeness" tab. YouTube builds a face template and scans uploaded content for matches. When a potential match is found, the enrolled person is notified and can request removal. Detection does not guarantee removal, as YouTube has said it will preserve content in the public interest, including parody and satire.

What are the risks for creators who do not disclose AI use?

Penalties for undisclosed synthetic content include limited ad serving, demonetization, age-gating, reduced distribution, or removal from the YouTube Partner Program. Promotional content involving AI-generated likeness without disclosure and consent faces additional scrutiny. Channels showing patterns of high-volume, low-effort AI content generation risk suspension.

Can viewers tell if YouTube content is AI-generated?

When creators have properly disclosed AI use, YouTube adds an "Altered or synthetic content" label visible on the watch page. However, the system relies substantially on creator compliance, and sophisticated deepfakes may circulate before automated detection flags them. The absence of a label does not confirm authenticity, and any extraordinary or surprising claim about a public figure should be verified through additional sources.

Is submitting biometric data to YouTube's likeness detection system safe?

YouTube has stated that Google has not used creators' biometric data to train AI models. However, intellectual property experts raised concerns about the policy language, which leaves open potential future uses of the data. YouTube declined to change its underlying policy. Participation is voluntary and data can be removed by opting out. Each creator must weigh the protective value of enrollment against the privacy trade-off of submitting biometric information to the platform.
