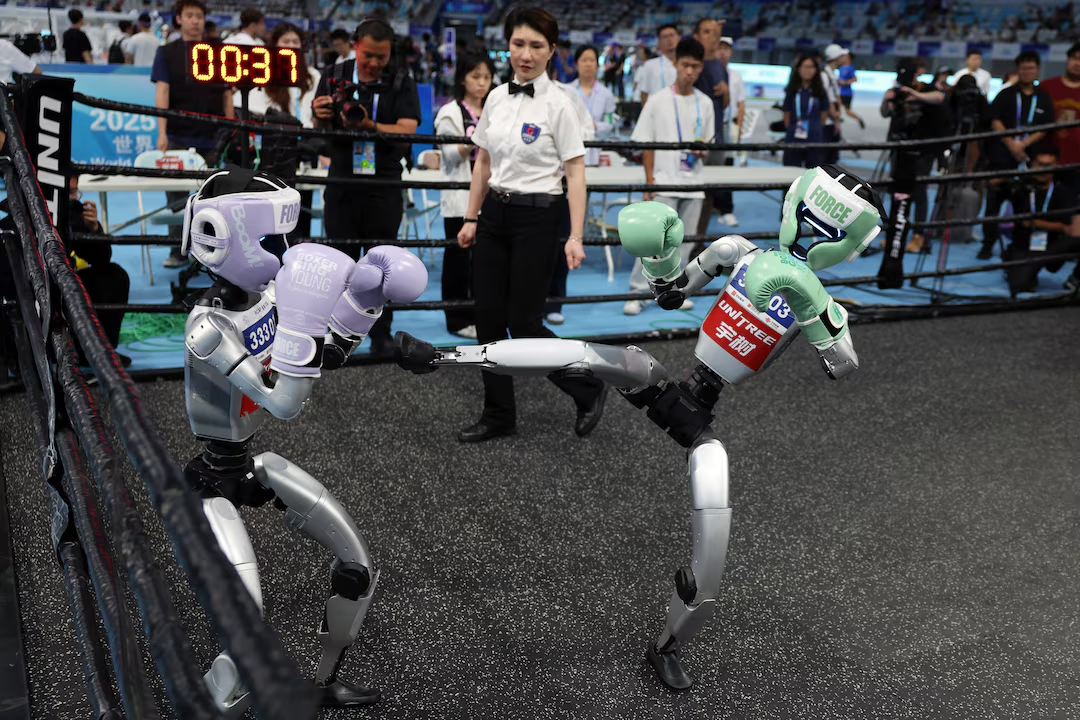

Nearly a decade after Google sold Boston Dynamics to SoftBank for a fraction of what the robotics lab was worth, the two companies are working together again.

And this time, the stakes are much higher.

At CES 2026 in Las Vegas, Boston Dynamics and Google DeepMind announced a formal AI partnership built around one goal: making the new Atlas humanoid robot genuinely intelligent. The deal pairs Boston Dynamics' thirty-plus years of mechanical expertise with DeepMind's Gemini Robotics foundation models — a combination that, on paper at least, represents the most credible attempt yet to close the gap between what robots can do in a lab and what they can do on a factory floor.

Atlas won Best Robot at CES 2026 before the week was out. But the award was almost beside the point.

The partnership signals something larger about where the humanoid robot industry is headed, which companies are positioned to lead it, and how the race for physical AI is reshaping the competitive landscape across manufacturing, logistics, and beyond.

Why This Reunion Matters

Google originally acquired Boston Dynamics in 2013, drawn by the engineering capability of a team that had built BigDog, Petman, and the early Atlas hydraulic prototypes.

But Google never quite figured out what to do with a robotics lab that made extraordinary machines no one had commercialized. In 2017, it sold the company to SoftBank. Hyundai Motor Group then acquired Boston Dynamics in 2020 for $880 million.

Since then, Boston Dynamics has been methodically building a real commercial business:

- Spot, the quadruped robot, is now deployed in more than 40 countries

- Stretch, the warehouse robot, has unloaded over 20 million boxes globally since its 2023 launch

- Atlas, unveiled at CES 2026, is the company's first true commercial humanoid — and all 2026 production is already committed

The company has learned, slowly and sometimes painfully, that making a robot go viral on YouTube is easy. Making it useful enough that industrial customers will pay for it, train their staff around it, and stake operational outcomes on it — that is the hard part.

The new Atlas is the company's most ambitious step yet. And Boston Dynamics made clear it needed an AI partner that could match the ambition of the hardware.

"We are building the world's most capable humanoid, and we knew we needed a partner that could help us establish new kinds of visual-language-action models for these complex robots. Nobody in the world is better suited than DeepMind to build reliable, scalable models that can be deployed safely and efficiently across a wide variety of tasks and industries."

— Alberto Rodriguez, Director of Robot Behavior for Atlas, Boston Dynamics

What Gemini Robotics Actually Does

To understand why this partnership is significant, it helps to understand what Gemini Robotics is — and what problem it solves.

The Limits of Traditional Industrial Robots

Traditional industrial robots are programmed. They execute the same movement, in the same sequence, with the same tolerances, thousands of times per shift. That works beautifully in controlled environments where nothing changes — the kind of precision welding and stamping operations that define traditional automotive manufacturing.

But it breaks down the moment variability enters the picture:

- A part placed slightly differently on a conveyor

- An object the robot has never seen before

- An instruction given in plain language by a human worker

The robotics industry's central challenge for the past decade has been teaching robots to generalize — to handle the variability of the real world without requiring every possible scenario to be hand-coded in advance.

Gemini Robotics is Google DeepMind's attempt to solve that problem at scale.

How VLA Models Work

Gemini Robotics is built on a vision-language-action (VLA) architecture — three capabilities that were previously handled separately, now unified:

| Component | Function |
|---|---|
| Vision | Perceives the environment through cameras and sensors |
| Language | Understands natural language instructions from humans |
| Action | Translates perception and reasoning into physical motor commands |

In plain terms: the robot can look at a scene, understand a natural language instruction, and figure out how to accomplish the task — even if it has never encountered that specific combination of objects, environment, and instruction before.
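
To make the "vision and language in, action out" contract concrete, here is a minimal Python sketch of the interface. It is not Gemini Robotics' API, which this article does not describe. The action decoding assumes an RT-2-style scheme in which each action dimension is emitted as one of 256 discrete tokens; whether Gemini Robotics uses this representation is an assumption. `Observation`, `VLAPolicy`, `HoldPositionPolicy`, and `decode_action_tokens` are hypothetical names, and the 56-dimensional action vector simply mirrors Atlas's published degree-of-freedom count.

```python
from dataclasses import dataclass
from typing import List, Protocol

NUM_JOINTS = 56  # mirrors Atlas's published degree-of-freedom count
NUM_BINS = 256   # assumption: RT-2-style discretization, one token per action dimension


@dataclass
class Observation:
    """Hypothetical input bundle: what the robot sees plus what it was told."""
    image: List[List[float]]  # placeholder for camera pixels
    instruction: str          # natural-language command from a worker


class VLAPolicy(Protocol):
    """One model call maps (vision, language) to discrete action tokens."""
    def __call__(self, obs: Observation) -> List[int]: ...


class HoldPositionPolicy:
    """Hypothetical stand-in for a trained network: always emits the middle bin."""
    def __call__(self, obs: Observation) -> List[int]:
        return [NUM_BINS // 2] * NUM_JOINTS


def decode_action_tokens(tokens: List[int], low: float = -1.0, high: float = 1.0) -> List[float]:
    """Map each token (0..NUM_BINS-1) to the center of its bin in [low, high]."""
    if len(tokens) != NUM_JOINTS:
        raise ValueError(f"expected {NUM_JOINTS} tokens, got {len(tokens)}")
    if any(t < 0 or t >= NUM_BINS for t in tokens):
        raise ValueError("token outside the action vocabulary")
    width = (high - low) / NUM_BINS
    return [low + (t + 0.5) * width for t in tokens]


obs = Observation(image=[[0.0]], instruction="Move the bracket to the left bin")
commands = decode_action_tokens(HoldPositionPolicy()(obs))
print(len(commands))  # 56
```

The point of the design is that a single model call consumes both the image and the instruction; there is no separate hand-written perception or parsing stage between them.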

What makes Gemini Robotics different from previous attempts is the scale of training data and the quality of the underlying model. It was trained on thousands of hours of real-world teleoperated robot demonstrations, combined with the broader knowledge built into Gemini 2.0 through web-scale training. The goal is a model that can draw on general knowledge of how objects behave and how the physical world works when deciding how to move.

The Layered Architecture

DeepMind has built out a full stack for robotics deployment:

Gemini Robotics-ER 1.5 — The high-level reasoning layer. Handles task planning, progress estimation, and visual-spatial understanding. Can call Google Search for information and invoke other models or functions to execute tasks.

Gemini Robotics On-Device — A lighter-weight version that runs locally on robot hardware. Designed for applications where low latency or limited connectivity makes cloud inference impractical. Can be fine-tuned for specific tasks with as few as 50 demonstrations.

This layered architecture — cloud reasoning, on-device action, fine-tuned specialization — is what Boston Dynamics gains access to through the partnership.
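
A minimal Python sketch can show how such a split might be organized, under stated assumptions. A planning layer walks an ordered list of subtasks and decides whether to retry or stop. An execution layer, standing in for an on-device skill policy, attempts each step. A router decides where a model call runs based on a latency budget and connectivity. `route_inference`, `run_plan`, and the millisecond figures are hypothetical and not part of any DeepMind API.

```python
from typing import Callable, List, Optional


def route_inference(latency_budget_ms: float, cloud_rtt_ms: Optional[float]) -> str:
    """Choose where a model call runs; cloud_rtt_ms=None means no connectivity."""
    if cloud_rtt_ms is None or cloud_rtt_ms > latency_budget_ms:
        return "on_device"  # cloud is unreachable or too slow for this call
    return "cloud"


def run_plan(subtasks: List[str], execute: Callable[[str], bool], max_retries: int = 2) -> List[str]:
    """Planning layer: walk the plan, retrying failed steps; returns completed subtasks."""
    completed: List[str] = []
    for task in subtasks:
        for _ in range(max_retries + 1):
            if execute(task):  # execution layer attempts the step
                completed.append(task)
                break
        else:
            break  # step kept failing: stop and escalate instead of pressing on
    return completed


# Hypothetical usage: high-level reasoning tolerates a slow cloud round trip,
# while a motor-control step does not.
print(route_inference(latency_budget_ms=2000, cloud_rtt_ms=150))  # cloud
print(route_inference(latency_budget_ms=20, cloud_rtt_ms=150))    # on_device

attempts = {"grip": 0}


def flaky_execute(task: str) -> bool:
    """Hypothetical skill that fails its first grip attempt, then succeeds."""
    if task == "grip":
        attempts["grip"] += 1
        return attempts["grip"] > 1
    return True


print(run_plan(["locate part", "grip", "place in bin"], flaky_execute))
# ['locate part', 'grip', 'place in bin']
```

Keeping the retry-or-stop decision in the planning layer means the fast execution layer never has to reason about the overall task. It only reports success or failure for the step it was given.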

The Hardware: What the New Atlas Actually Is

The partnership only makes sense if the hardware underneath it is real. The new production Atlas is, by any reasonable measure, a serious machine.

Atlas at a Glance

| Spec | Value |
|---|---|
| Height | 6.2 feet (1.9m) |
| Weight | 198 lbs (90kg) |
| Degrees of freedom | 56, most fully rotational |
| Reach | 7.5 feet (2.3m) |
| Lift capacity | 110 lbs (50kg) |
| Battery life | ~4 hours (autonomous swap in 3 min) |
| Operating temp range | -4°F to 104°F (-20°C to 40°C) |
| Vision | 360-degree cameras |

What Those Specs Actually Mean

56 degrees of freedom means 56 independently controllable joints. Most industrial robot arms have six. A human body has roughly 200. Atlas sits meaningfully between those extremes — human enough to navigate environments built for humans, engineered enough to exceed human physical capabilities in specific dimensions like reach and sustained lifting without fatigue.

Fully rotational joints are particularly significant for industrial work. A human worker picking parts from a shelf has to reposition their feet to reach around an obstacle. Atlas simply rotates the relevant joint — reducing the time and physical footprint needed to complete tasks in tight spaces.

Autonomous battery swapping is more than a convenience. When the battery runs low, Atlas navigates to a charging station, swaps its own batteries, and returns to work without human intervention. For a robot operating in a continuous manufacturing shift, that is the difference between a machine that runs like an appliance and one that requires constant babysitting.
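
The arithmetic behind that claim is short. The sketch below uses the spec table's figures of roughly four hours of runtime and a three-minute swap. It also assumes, hypothetically, that the three minutes cover the whole interruption, including walking to and from the station. Under that assumption, a robot running around the clock stays available for almost the entire day:

```python
RUN_MIN = 4 * 60  # ~4 hours of operation per battery (from the spec table)
SWAP_MIN = 3      # autonomous swap time; assumed to be the whole interruption


def availability(run_min: float, swap_min: float) -> float:
    """Fraction of wall-clock time spent working in a repeating run/swap cycle."""
    return run_min / (run_min + swap_min)


a = availability(RUN_MIN, SWAP_MIN)
day_min = 24 * 60
print(f"availability: {a:.1%}")                                   # availability: 98.8%
print(f"downtime per 24h: {day_min * (1 - a):.1f} min")           # downtime per 24h: 17.8 min
print(f"run/swap cycles per 24h: {day_min / (RUN_MIN + SWAP_MIN):.1f}")  # run/swap cycles per 24h: 5.9
```

For comparison, a hypothetical robot that had to sit on a charger for an hour after every four-hour run would reach `availability(240, 60)`, or 80%.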

Built for the Supply Chain It Lives In

Boston Dynamics made a deliberate design choice: every component in the production Atlas is compatible with automotive supply chains. Hyundai Mobis supplies the actuators, giving Atlas a reliable component supply chain and the competitive pricing that comes with automotive-scale production.

Hyundai has committed to deploying tens of thousands of Boston Dynamics robots across its manufacturing facilities globally, and recently announced a $26 billion investment in U.S. operations that includes plans for a robotics factory capable of producing 30,000 units per year.

The Deployment Plan

The partnership is not purely a research arrangement. All Atlas deployments are already fully committed for 2026, with fleets scheduled to ship to Hyundai's Robotics Metaplant Application Center (RMAC) and Google DeepMind in the coming months.

That last part deserves a close read.

Google DeepMind is not just providing the AI model — it is receiving its own fleet of Atlas robots. That fleet will be used to train and test new foundation models, creating a feedback loop where real-world performance data from operating robots improves the AI that runs them, which improves the next generation of robots.

This is the flywheel the entire physical AI industry is trying to build. Google and Boston Dynamics are building it together.

The RMAC and the Data Advantage

Hyundai's Robotics Metaplant Application Center, scheduled to open in 2026 in the U.S., sits at the center of the deployment strategy.

The cycle works like this:

- Data collected from Hyundai factories flows into the RMAC

- That data trains AI models on specific manufacturing tasks

- Trained models are deployed back into live factory environments

- New real-world data feeds back into the next training cycle
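
The cycle can be illustrated with a deliberately crude Python toy. Here the "model" handles only situations already present in its training data. Real foundation models are designed to generalize beyond that, so the numbers show the shape of the feedback loop, not anything about Gemini Robotics' actual learning curve. The situation labels and the `run_flywheel` function are hypothetical.

```python
from typing import List, Set


def run_flywheel(cycles: List[List[str]]) -> List[float]:
    """Each inner list holds the situations encountered during one deployment cycle."""
    training_data: Set[str] = set()  # what the model has learned from so far
    success_rates: List[float] = []
    for situations in cycles:
        # Deploy: the toy model succeeds only on situations already in its training data.
        successes = sum(1 for s in situations if s in training_data)
        success_rates.append(successes / len(situations))
        # Collect and retrain: this cycle's real-world data joins the training set.
        training_data.update(situations)
    return success_rates


cycles = [
    ["bin_upright", "bin_tilted"],
    ["bin_upright", "bin_tilted", "low_light"],
    ["bin_upright", "low_light", "new_part", "bin_tilted"],
]
print(run_flywheel(cycles))  # [0.0, 0.6666666666666666, 0.75]
```

Each pass through the loop turns the previous cycle's failures into training data. That is why the volume and diversity of the deployment fleet matter as much as the model itself.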

The Hyundai Motor Group — which includes Hyundai Motor, Kia, Hyundai Mobis, and Hyundai Glovis — operates manufacturing facilities on multiple continents. The volume and diversity of operational data available is a genuine competitive advantage that few robotics companies can replicate.

The Phased Rollout

| Timeline | Milestone |
|---|---|
| 2026 | Atlas production begins in Boston; fleets ship to RMAC and DeepMind |
| 2026 | RMAC opens in the U.S. |
| 2028 | Atlas deployed at Hyundai Metaplant America (Savannah, GA) for parts sequencing |
| 2030 | Expansion to component assembly and more complex operations |

Where This Fits in the Humanoid Robot Race

The Boston Dynamics and DeepMind partnership is the latest move in an industry that has accelerated dramatically over the past eighteen months.

The Current Competitive Landscape

| Company | Robot | AI Model | Key Deployment |
|---|---|---|---|
| Boston Dynamics + DeepMind | Atlas | Gemini Robotics (VLA) | Hyundai factories |
| Figure AI | Figure 02 | Helix VLA | BMW factory |
| Agility Robotics | Digit | Custom | Amazon warehouses |
| Physical Intelligence | Various | pi-zero | Commercial availability |
| Tesla | Optimus | Custom | Tesla factories (limited) |
| Nvidia | Infrastructure | Cosmos / Isaac | Cross-platform |

What Boston Dynamics Brings

Most humanoid robot startups are building both hardware and AI from scratch simultaneously. That is extremely hard. Hardware development is slow, expensive, and unforgiving. Software development is fast, flexible, and iterative. Doing both at the same time means neither gets the full attention it deserves.

Boston Dynamics has thirty years of hardware expertise. Its robots move differently from competitors — with a fluidity and physical confidence built from decades of research into dynamic locomotion, balance, and manipulation. The original hydraulic Atlas was considered the most capable bipedal robot in the world for years before it was retired in 2024. The electric successor inherits that biomechanical DNA.

What Boston Dynamics did not have, until now, was a frontier AI foundation model purpose-built for robotics at the scale and capability that Gemini Robotics represents.

What DeepMind Brings

Google DeepMind is one of the few organizations that combines frontier AI research capabilities with the infrastructure to train models at massive scale. The Gemini Robotics model was trained on thousands of hours of real-world robot demonstrations — a dataset that took twelve months to collect across a fleet of dual-arm robots.

"We developed our Gemini Robotics models to bring AI into the physical world. We are excited to begin working with the Boston Dynamics team to explore what's possible with their new Atlas robot as we develop new models to expand the impact of robotics, and to scale robots safely and efficiently."

— Carolina Parada, Senior Director of Robotics, Google DeepMind

DeepMind also brings something harder to quantify: scientific credibility. Its publications on Gemini Robotics have set benchmarks that competing labs measure themselves against — and that matters for attracting the talent needed to push the field forward.

A Note on Exclusivity

The deal is not exclusive for either side.

Google DeepMind has also worked with Apptronik and has used its Apollo humanoid robots in public-facing tests. Boston Dynamics has research relationships with Toyota Research Institute and the Hyundai-funded Robotics and AI Institute.

This is rational behavior in a market where no dominant platform has emerged yet. The company that builds the best VLA model wins the brain market. The company that builds the best hardware platform wins the body market. The company that successfully combines both — at scale, in real industrial deployments — wins the market.

The Safety Question Nobody Is Ignoring

Putting a 200-pound robot with 56 independently controllable joints and the ability to lift 110 pounds into an environment where human workers are present is not trivial from a safety standpoint. The failure modes are serious, and the industry knows it.

Hardware-level safety features in Atlas:

- 360-degree cameras for full situational awareness

- Human detection and automatic proximity response

- Fenceless guarding — operates in shared spaces without physical barriers

- Tactile sensing in hands to modulate grip force
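
Boston Dynamics has not published how Atlas's proximity response works. A common pattern in collaborative robotics, often called speed and separation monitoring, slows a machine as a person approaches and stops it inside a protective distance. The Python sketch below shows that idea with a linear ramp. The 0.5 m and 2.0 m thresholds and the function names are hypothetical placeholders, not Atlas parameters.

```python
from typing import Iterable


def nearest_person(distances_m: Iterable[float]) -> float:
    """Closest detected person across all cameras; infinity if nobody is in view."""
    return min(distances_m, default=float("inf"))


def speed_scale(distance_m: float, stop_m: float = 0.5, full_speed_m: float = 2.0) -> float:
    """Fraction of normal speed allowed given the distance to the nearest person."""
    if distance_m <= stop_m:
        return 0.0  # protective stop
    if distance_m >= full_speed_m:
        return 1.0  # nobody close: normal operation
    return (distance_m - stop_m) / (full_speed_m - stop_m)  # linear slow-down zone


# Hypothetical detections from a 360-degree camera sweep, in meters.
print(speed_scale(nearest_person([3.4, 1.25, 5.0])))  # 0.5
print(speed_scale(nearest_person([])))                # 1.0
```

Taking the minimum over every detection is what 360-degree coverage buys: a person approaching from behind is treated the same as one in front.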

But the deeper safety challenge is behavioral. A robot that can act on natural language instructions and generalize across environments needs to know when not to act, how to interpret ambiguous instructions safely, and how to handle situations its training data never anticipated. That is a machine learning problem — and it is one Google DeepMind has been working on through its broader AI safety research.
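
One simple way to express "knowing when not to act" is an abstention rule. The robot acts only when the model's top interpretation of an instruction is both confident and clearly ahead of the alternatives; otherwise it asks a person. The Python sketch below assumes the model exposes scores for candidate interpretations. That is an assumption, not a documented Gemini Robotics feature, and the thresholds and names are hypothetical.

```python
from typing import Dict, Tuple


def decide(interpretations: Dict[str, float],
           min_confidence: float = 0.8,
           min_margin: float = 0.2) -> Tuple[str, str]:
    """Return ("act", plan) or ("ask_human", best_guess) for scored interpretations."""
    if not interpretations:
        return ("ask_human", "")  # nothing understood at all
    ranked = sorted(interpretations.items(), key=lambda kv: kv[1], reverse=True)
    best, best_score = ranked[0]
    runner_up = ranked[1][1] if len(ranked) > 1 else 0.0
    if best_score < min_confidence or best_score - runner_up < min_margin:
        return ("ask_human", best)  # ambiguous or unfamiliar: don't guess
    return ("act", best)


print(decide({"move crate to dock 2": 0.92, "move crate to dock 3": 0.05}))
# ('act', 'move crate to dock 2')
print(decide({"stack the red bins": 0.55, "unstack the red bins": 0.40}))
# ('ask_human', 'stack the red bins')
```

The margin check matters as much as the confidence check. Two nearly tied readings of an instruction are a sign of ambiguity even when both scores are high.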

The Hyundai RMAC deployment strategy reflects appropriate caution: it starts with low-risk tasks like parts sequencing and moves to more complex work only after reliability has been validated at each stage.

What This Means for Manufacturing and the Economy

The implications of this partnership extend well beyond Hyundai's Georgia factory.

The Labor Calculus

Humanoid robots are entering serious commercial deployment at a moment when manufacturing labor markets in the U.S. and Europe are structurally strained. Automotive plants in particular have long faced challenges recruiting for physically demanding, repetitive work — the exact tasks Atlas is designed to handle first.

This is not simply a story about robots replacing jobs. The more accurate near-term framing is robots doing jobs that are increasingly difficult to staff reliably: high injury rates, extreme temperatures, heavy repetitive lifting, and continuous shift requirements that humans find hard to sustain.

"The work left to automate is difficult because the tasks vary so much. That's where AI comes in."

— Robert Playter, CEO, Boston Dynamics

Fully repetitive, fully predictable manufacturing tasks were largely automated decades ago. What remains is work that requires judgment, adaptability, and the ability to operate in environments designed for humans — not machines.

Robotics as a Service

Hyundai has signaled that its long-term commercial strategy includes Robotics-as-a-Service (RaaS) offerings, allowing customers outside automotive to access Atlas capabilities without owning the hardware outright. If that model develops, the addressable market expands substantially into logistics, construction, energy, and facility management.

The economics of RaaS in robotics are still being worked out. But the subscription model that transformed enterprise software over the past two decades suggests that recurring service revenue from a large installed base of robots could ultimately be worth more than one-time hardware sales.

Google DeepMind's position as the intelligence provider in that stack deserves attention. If Gemini Robotics becomes the dominant foundation model for humanoid robots, Google stands to capture recurring value every time a robot running it performs a task, regardless of who owns the hardware.

The Honest Assessment: What Still Has to Work

The partnership is genuinely significant. It is also still early, and several things have to go right before the vision becomes operational reality.

Model generalization. Gemini Robotics has demonstrated impressive results in controlled environments. Operating reliably in a real Hyundai factory — where parts arrive in unexpected orientations, lighting changes, equipment moves, and human workers behave unpredictably — is a fundamentally different challenge. The proof will come from production data flowing out of RMAC deployments later this year.

The sim-to-real gap. Much of the training for robotics foundation models happens in simulation, where generating diverse experience is fast and cheap. But simulated physics is not real physics, and skills learned in simulation do not always transfer cleanly to physical hardware. Bridging that gap reliably, at scale, remains one of the central technical challenges in the field.

Cost economics. No pricing has been disclosed for Atlas, but enterprise-grade humanoid robots are not cheap. The economics only work if productivity gains exceed the hardware, software, maintenance, and integration costs. The Hyundai deployments will generate the first real data on that question.
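
That condition can be written as a simple payback calculation. Because no Atlas pricing has been disclosed, every figure in this Python sketch is a hypothetical placeholder chosen only to make the arithmetic visible. None of them is an estimate of real costs.

```python
from typing import Optional


def payback_years(upfront_cost: float,
                  annual_value: float,
                  annual_operating_cost: float) -> Optional[float]:
    """Years until cumulative net gains cover the upfront spend; None if they never do."""
    net_per_year = annual_value - annual_operating_cost
    if net_per_year <= 0:
        return None  # the robot never pays for itself
    return upfront_cost / net_per_year


# Hypothetical inputs: hardware plus integration up front, the value of the work
# delivered per year, and software, maintenance, and support per year.
print(payback_years(upfront_cost=200_000, annual_value=120_000, annual_operating_cost=40_000))  # 2.5
print(payback_years(upfront_cost=200_000, annual_value=50_000, annual_operating_cost=60_000))   # None
```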

Competition. Tesla, Figure AI, Physical Intelligence, Agility Robotics, and a growing number of well-funded Chinese humanoid robotics companies are all working on variants of this same problem. The race is not over — it is just getting started.

What sets this partnership apart from most announcements in the humanoid robot space is specificity: hardware already in production, a deployment timeline measured in months rather than years, and an AI foundation model that has already been published, peer-reviewed, and independently evaluated. The combination of credible hardware, credible AI, and a major industrial customer committed to real-world deployment is not something most competitors can currently match.

FAQ

What did Boston Dynamics and Google DeepMind announce at CES 2026?

At CES 2026 in January, Boston Dynamics and Google DeepMind announced a formal AI partnership to integrate Google DeepMind's Gemini Robotics foundation models with the new Atlas humanoid robot. The partnership focuses on developing advanced vision-language-action models for industrial humanoid applications, beginning in automotive manufacturing.

What is the Gemini Robotics model?

Gemini Robotics is a vision-language-action (VLA) AI model developed by Google DeepMind, built on the Gemini 2.0 multimodal foundation model. It allows robots to perceive their environment through cameras, understand natural language instructions, and translate that understanding into physical motor commands. A lighter on-device version runs locally on robot hardware with low latency and can be fine-tuned for specific tasks with as few as 50 demonstrations.

What are the specs of the new Boston Dynamics Atlas robot?

The production Atlas has 56 degrees of freedom (most in fully rotational joints) and a 2.3-meter (7.5-foot) reach, and it can lift up to 50 kilograms (110 pounds). It stands 6.2 feet tall, weighs 198 pounds, has 360-degree camera vision, tactile sensing in its hands, and runs on dual battery packs with approximately four hours of operating life. Battery swaps are autonomous and take about three minutes.

When will Atlas robots be deployed in Hyundai factories?

All 2026 Atlas production is committed to Hyundai's Robotics Metaplant Application Center (RMAC) and Google DeepMind. Commercial deployment at Hyundai Motor Group Metaplant America in Savannah, Georgia, begins in 2028, starting with parts-sequencing tasks. More complex assembly work is planned from 2030.

Is the Boston Dynamics and Google DeepMind partnership exclusive?

No. Google DeepMind has also worked with other robotics hardware companies including Apptronik. Boston Dynamics has maintained research relationships with Toyota Research Institute and the Hyundai-funded Robotics and AI Institute. Both parties are working across multiple partners as the humanoid robot market develops.

How does this partnership fit into the broader humanoid robot race?

The partnership is one of several major moves in humanoid robotics in 2025-2026. Competitors include Tesla's Optimus, Figure AI's Figure 02 with its Helix VLA model, Agility Robotics' Digit in Amazon warehouses, and Physical Intelligence's pi-zero model. Nvidia is building infrastructure through its Cosmos and Isaac simulation platforms. The Boston Dynamics and DeepMind partnership stands out because the hardware is already in production and deployment timelines are measured in months, not years.

What is a vision-language-action model and why does it matter for robots?

A VLA model combines computer vision, natural language understanding, and physical action control in a single AI system. It allows a robot to perceive its environment, understand a plain language instruction, and determine how to physically accomplish the task — even in situations the robot has never encountered before. This is the core technology needed to move robots beyond rigid preprogrammed routines toward genuine adaptability in real-world environments.

What are the main risks and challenges for this partnership?

The key challenges include demonstrating that Gemini Robotics generalizes reliably from controlled test environments to real factory floor variability, closing the simulation-to-real transfer gap in model training, making the economics of large-scale deployment work, and maintaining a competitive lead as Tesla, Figure AI, Physical Intelligence, and Chinese humanoid robotics companies continue developing competing platforms.
