Two independent surveys published in early 2026 tell a consistent story about how people are using AI for health decisions, and the numbers are significant enough to warrant serious attention from anyone building or evaluating healthcare AI tools.

A January 2026 survey by Confused.com Life Insurance found that 59% of UK adults now use AI to self-diagnose health conditions. A separate KFF Tracking Poll, conducted in late February and early March 2026 with a nationally representative sample of 1,343 US adults, found that roughly one in three Americans (32%) has turned to an AI chatbot for health information in the past year. That includes 29% who used AI for physical health questions and 16% who used it for mental health questions.
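For readers evaluating how much weight the KFF percentages can bear, a rough margin of error can be estimated from the sample size. The sketch below is a textbook approximation, not the poll's published figure: it assumes simple random sampling and a 95% confidence level, and it ignores the survey weighting that typically widens the real margin. The function name `margin_of_error` is illustrative.

```python
import math


def margin_of_error(share: float, sample_size: int, z: float = 1.96) -> float:
    """Approximate margin of error for a proportion.

    Assumes simple random sampling; z = 1.96 corresponds to 95% confidence.
    """
    return z * math.sqrt(share * (1.0 - share) / sample_size)


KFF_SAMPLE_SIZE = 1343  # sample size stated in the poll description

# Shares reported for the full sample of US adults.
reported_shares = {
    "physical health questions": 0.29,
    "mental health questions": 0.16,
}

for label, share in reported_shares.items():
    moe_points = margin_of_error(share, KFF_SAMPLE_SIZE) * 100
    print(f"{label}: {share:.0%} +/- {moe_points:.1f} percentage points")
```

Figures reported for subgroups, such as AI health users only or adults aged 18 to 29, rest on smaller samples, so their margins would be wider than the full-sample estimate.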

These are not fringe behaviors. They reflect a structural shift in how people access health information, driven by two converging forces: the accessibility of AI tools and the difficulty of accessing healthcare professionals in a timely way.

Why People Are Turning to AI Instead of Doctors

The motivation behind AI health queries varies by demographic, but the research identifies several consistent drivers.

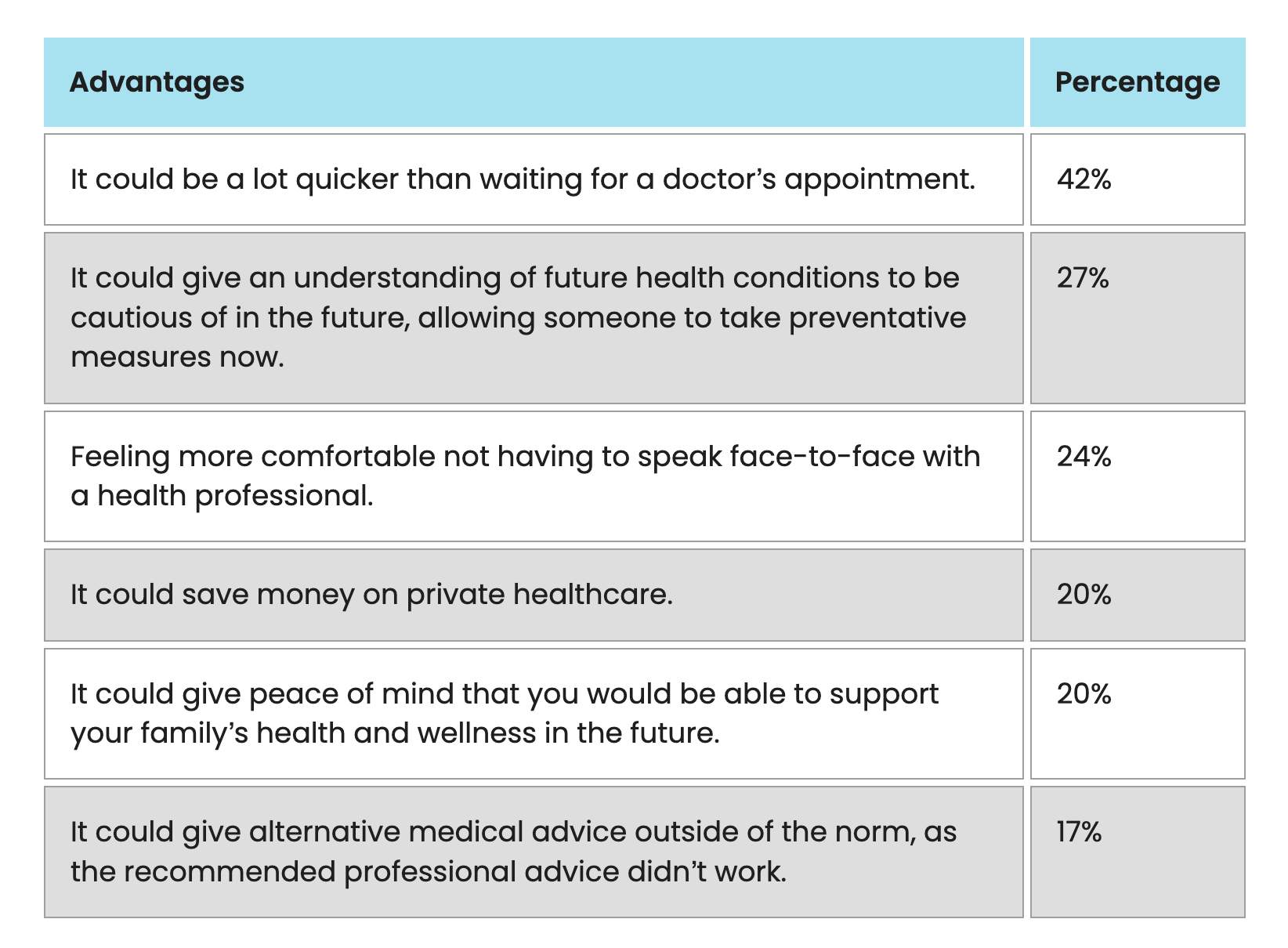

- Speed is the dominant reason. The average wait time for a GP appointment in the UK was 19 days at the time of the Confused.com survey. Among those who turned to AI for health advice, 42% said the primary reason was that AI is faster than waiting for a doctor's appointment. For people in their 30s and 40s, the figure was even higher, with 50 to 51% citing speed as the deciding factor.

- Cost and access matter for lower-income users. The KFF poll found that one in five adults who used AI for health information cited difficulties affording or accessing healthcare as a reason they turned to chatbots. That share was higher among uninsured individuals, with 30% citing access barriers versus 14% of insured individuals. Black and Hispanic adults were also more likely than White adults to report using AI for health information.

- Comfort with AI versus discomfort with doctors. Among UK respondents, 24% said they feel more comfortable using AI than speaking with a healthcare professional face to face. That figure rose to 39% among adults aged 18 to 24. Around 20% reported using AI to identify the best ways to support a family member's health.

The picture that emerges is not one of recklessness. It is one of rational adaptation to a healthcare system with access barriers, combined with the natural appeal of an always-available, non-judgmental conversational interface.

What People Are Actually Asking AI

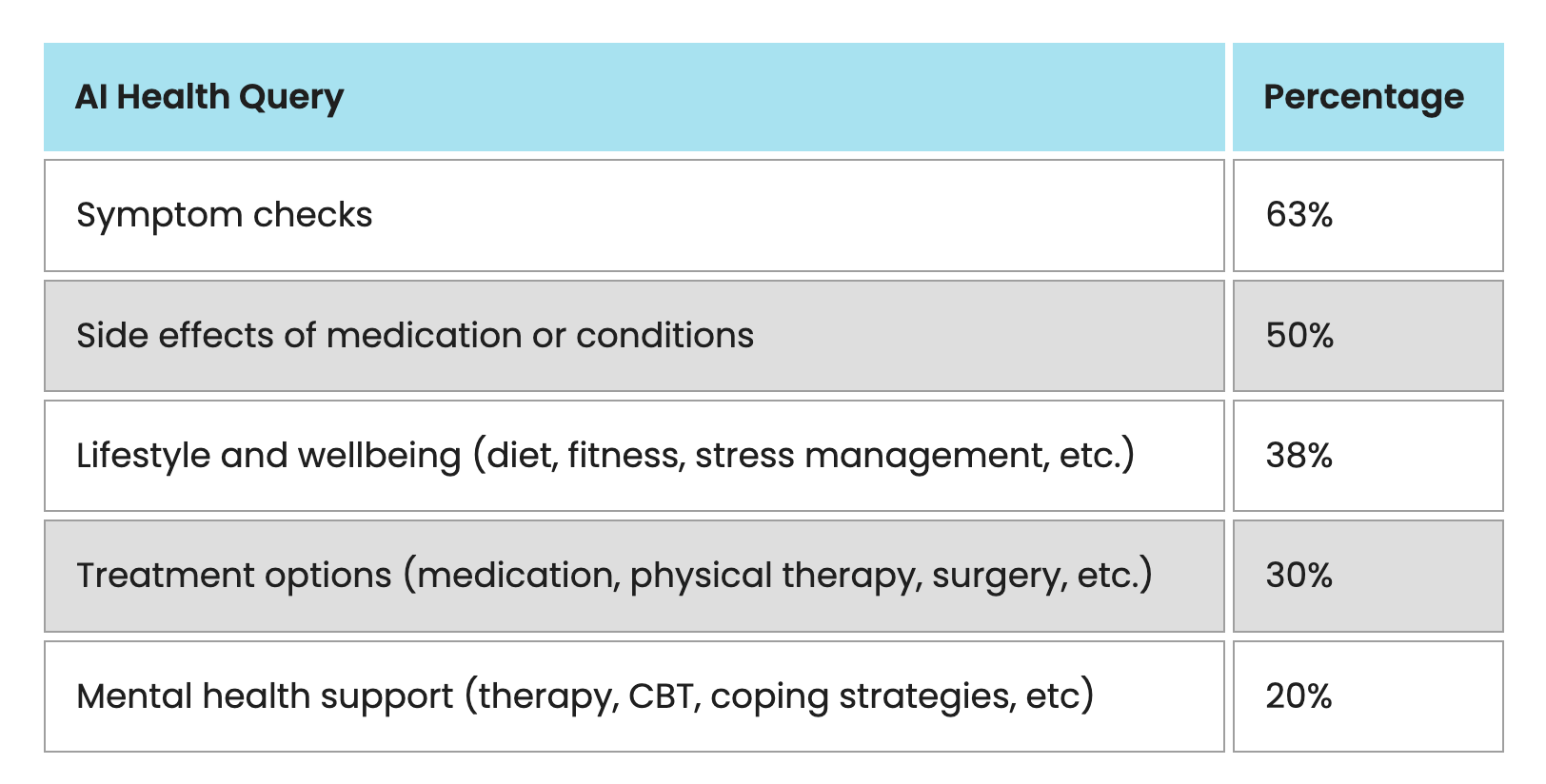

The Confused.com data breaks down the types of health queries that UK adults are directing to AI tools.

Symptom checks are the most common use, with 63% of AI health users searching for information about physical or mental symptoms they are experiencing. Medication side effects follow at 50%. Wellbeing and lifestyle questions, covering diet and fitness, are asked by 38% of users, and treatment options, including medication information, by 30%. Mental health support, including therapy-adjacent queries and coping strategies, is sought by 20%. The shares add up to more than 100% because one user can ask more than one kind of question.

The KFF US data shows a similar pattern. Most users who turned to AI for health advice said they were looking for general information about health conditions or symptoms. The poll also found that 41% of people who used AI for health information uploaded personal medical data, including test results or doctor's notes, to receive more tailored responses. That represents approximately 13% of the general public who have shared personal medical records with an AI chatbot.
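The step from 41% of AI health users to roughly 13% of the general public is a simple multiplication, sketched below. It assumes the base is the 32% of US adults who used AI for health information, the figure this article cites for the poll's "one in three". The function name is illustrative.

```python
def share_of_public(share_of_users: float, users_share_of_public: float) -> float:
    """Convert a share of a subgroup into a share of the whole population."""
    return share_of_users * users_share_of_public


uploaded_among_ai_health_users = 0.41  # KFF: AI health users who uploaded personal medical data
ai_health_users_among_adults = 0.32    # KFF: adults who used AI for health information

result = share_of_public(uploaded_among_ai_health_users, ai_health_users_among_adults)
print(f"Share of all adults who uploaded medical data: {result:.1%}")
```

The same conversion applies to any statistic in this article that is reported "among AI health users" when a population-level figure is needed.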

The Follow-Up Problem

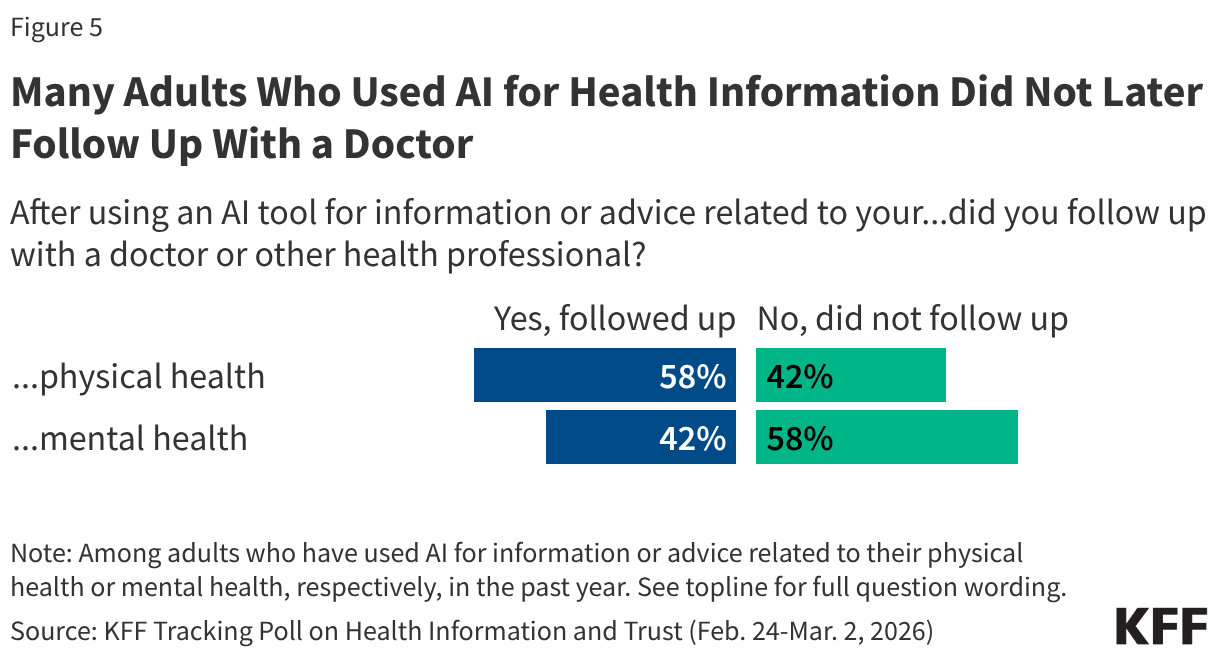

The most clinically significant finding from the KFF poll is not how many people are turning to AI for health questions, but how many of them never follow up with a healthcare provider afterward.

Among those who asked AI about physical health symptoms, 42% did not subsequently consult a doctor or healthcare professional. For mental health queries, the figure was substantially higher: 58% of people who asked AI about mental health concerns did not follow up with a mental health professional.

Younger adults, specifically those aged 18 to 29, were less likely to seek follow-up care across both categories. They were also approximately three times more likely than adults aged 50 and older to use AI chatbots for mental health advice. Together, these findings concentrate the follow-up gap in a population that may be more likely to be dealing with serious mental health issues and less likely to recognize the limits of AI support.

What AI Can and Cannot Do for Health

AI tools have a meaningful role in health information access. The research supports several genuine benefits:

- AI makes complex medical information more readable and accessible

- Pattern recognition across large symptom databases can surface possibilities a user might not have considered

- AI can help users prepare better questions for their actual healthcare appointments

- For populations with limited healthcare access, AI provides a low-barrier first point of contact

The clinical limitations are equally well-documented.

AI cannot conduct a physical examination, which is essential to a significant proportion of diagnoses. A headache described in text may point toward dozens of possibilities; the same headache examined in person, with visual assessment, neurological testing, and blood pressure measurement, narrows those possibilities considerably.

AI does not have access to a patient's full medical history. Symptom checkers may ask for some health background, but they cannot replicate the longitudinal understanding a physician develops over multiple interactions with the same patient. Family history, prior conditions, medication interactions, and previous test results are all clinically relevant and routinely unavailable to an AI responding to a symptom query.

AI systems hallucinate. A 2025 study in Communications Medicine documented that large language models generate false medical facts, sometimes in response to incorrect information provided in user prompts, and sometimes independently. The outputs can sound authoritative even when they are wrong.

AI carries documented biases in mental health contexts. A 2025 preprint in arXiv's Computation and Language category found that large language models expressed stigma toward people with mental health conditions and provided inappropriate advice in mental health scenarios. This is particularly significant given the share of users who are turning to AI specifically for mental health support.

The Privacy Gap

The KFF poll surfaces a striking disconnect between behavior and concern. While 41% of AI health users have uploaded personal medical information to a chatbot, 77% of adults say they are concerned about the privacy of personal health information shared with AI tools. Importantly, that concern extends to 65% of the people who have already shared personal medical data with an AI.

People are sharing medical information with AI while simultaneously expressing significant concern about doing exactly that. This suggests that the desire for immediate answers is overriding the privacy caution that users themselves report.

A 2025 preprint in arXiv's Computers and Society category found that six leading American AI developers use user chat data to train their AI models. For users sharing test results or physician notes to get personalized health analysis, the data handling implications are not always clearly communicated or understood.

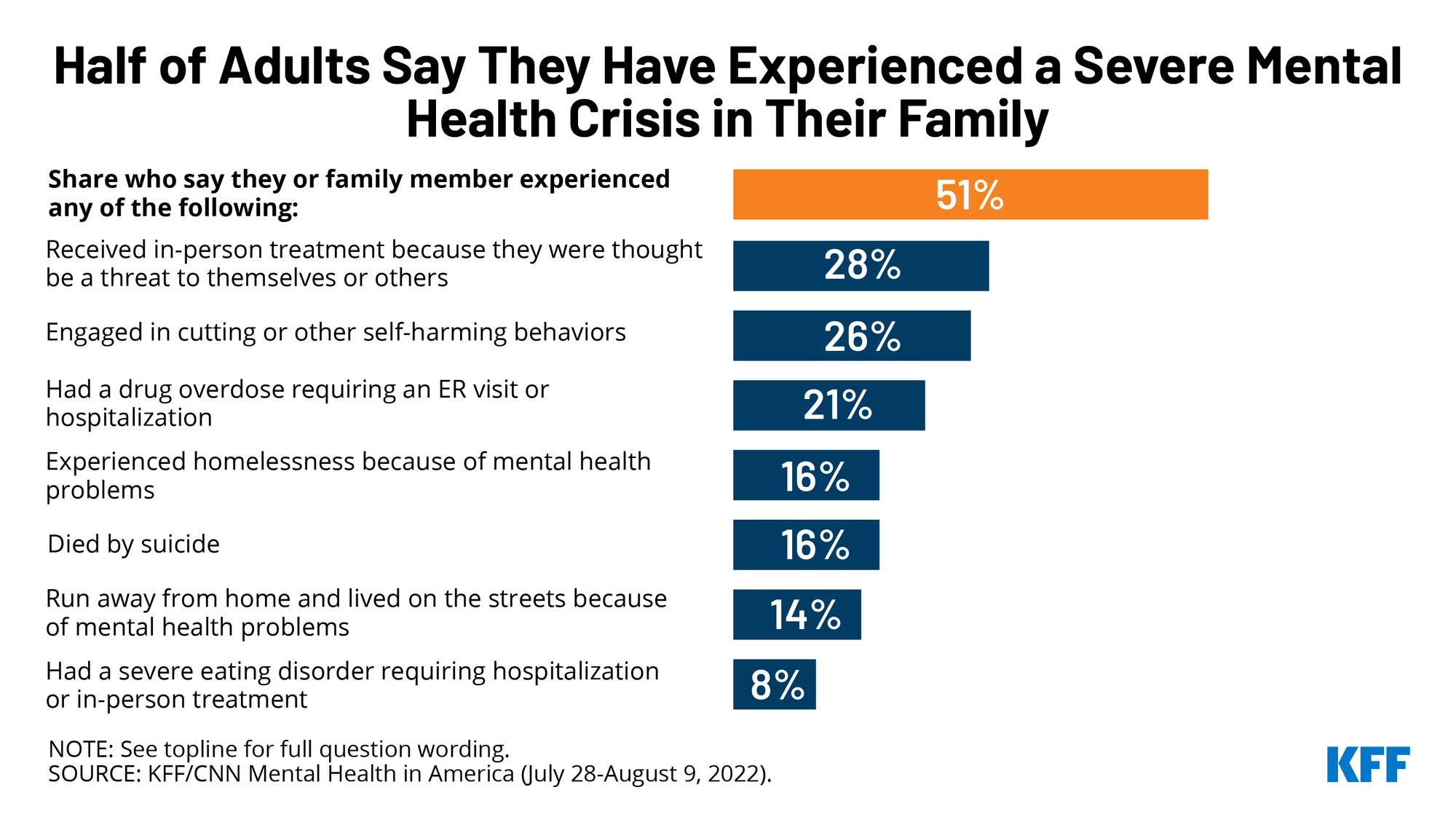

The Mental Health Risk Deserves Specific Attention

The mental health use case carries the highest risk profile among AI health queries, for reasons that go beyond accuracy. Mental health conditions often involve impaired judgment, crisis states, and situations where the quality of support and the timing of professional referral are genuinely consequential.

The Texas Attorney General opened an investigation in August 2025 into AI chatbot platforms for "potentially engaging in deceptive trade practices and misleadingly marketing themselves as mental health tools." The investigation focused on platforms that, according to the AG's office, "impersonate licensed mental health professionals" and "fabricate qualifications" despite "lacking proper medical credentials or oversight."

Two KFF findings combine here: 58% of mental health AI users do not follow up with a professional, and adults under 30 are three times more likely than adults aged 50 and older to use AI for mental health advice. The result is a profile of concentrated risk in a demographic that may be both the most likely to need professional mental health support and the least likely to seek it.

What Researchers and Clinicians Recommend

The research consensus across multiple studies and clinical perspectives points toward a consistent framework for how AI health tools can be used responsibly.

AI is most appropriate as a first information layer rather than a final arbiter. It can help users understand what symptoms might mean, generate questions to ask a healthcare provider, and navigate medical terminology in reports or diagnoses they have already received. These are high-value, low-risk functions.

AI is least appropriate as a substitute for clinical judgment in situations involving physical symptoms that require examination, mental health crises, symptoms that are acute or worsening, or conditions where misdiagnosis would delay treatment with meaningful consequences.
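For teams building health assistants, the two paragraphs above can be read as a simple escalation rule. The sketch below is one hypothetical encoding, not a clinical protocol or any vendor's implementation. The class `QueryContext`, its flag names, and the response strings are all assumptions made for illustration, and how those flags would be set reliably is deliberately left out of scope.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class QueryContext:
    """Hypothetical flags describing a health query (illustrative names only)."""
    needs_physical_exam: bool = False
    mental_health_crisis: bool = False
    acute_or_worsening: bool = False
    misdiagnosis_would_delay_treatment: bool = False


def escalation_reasons(ctx: QueryContext) -> list[str]:
    """Return the reasons, if any, that a query should go to a clinician."""
    checks = [
        (ctx.needs_physical_exam, "physical symptoms that require examination"),
        (ctx.mental_health_crisis, "mental health crisis"),
        (ctx.acute_or_worsening, "acute or worsening symptoms"),
        (ctx.misdiagnosis_would_delay_treatment,
         "misdiagnosis would delay treatment with meaningful consequences"),
    ]
    return [reason for flagged, reason in checks if flagged]


def respond(ctx: QueryContext) -> str:
    """Choose between the information layer and escalation to a professional."""
    reasons = escalation_reasons(ctx)
    if reasons:
        return "escalate: refer to a clinician (" + "; ".join(reasons) + ")"
    return "inform: explain terms and suggest questions for a healthcare provider"


print(respond(QueryContext()))
print(respond(QueryContext(acute_or_worsening=True)))
```

Keeping the rule as an explicit list of named conditions makes the escalation behavior easy to audit, which matters more here than sophistication.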

Bryan Janeczko, CEO of AI health platform ResetRX, offered a useful distinction: "While governed, non-diagnostic AI with clear guardrails and escalation protocols can help people understand their data and improve their health behaviors, diagnosis and treatment decisions should always be deferred to human clinicians."

Nate MacLeitch, CEO of QuickBlox, identified provenance as the key accountability mechanism: "When users seek guidance on decisions that can affect their wellbeing, and the assistant presents information without showing where it came from, or without reminding users that a qualified professional should make the final call, it risks misinformation and potential harm."

What the Data Means for Healthcare and Technology

At a population level, the 59% UK figure and the 32% US figure are not aberrations. They represent the early stages of a fundamental shift in where people go first when they have health questions, a shift that is not going to reverse as AI tools become more capable and healthcare access problems remain structural.

The question for healthcare systems, technology companies, and regulators is not whether to accept this shift, but how to shape it. The KFF findings suggest that one in five users who turn to AI for health information are doing so in part because they cannot access or afford conventional care. AI is filling a gap that the healthcare system is not currently able to fill for that population.

The public health implication is that AI health tools need clearer escalation protocols, more transparent provenance for the information they provide, and more explicit guidance about when professional follow-up is not optional. The 42% of physical health AI users who do not consult a doctor, and the 58% of mental health AI users who do not consult a professional, represent a population whose only input on that concern may have come from a tool that cannot examine them, cannot order tests, and cannot be held accountable for a misdiagnosis.

Wrap-Up

The data from 2026 confirms that AI self-diagnosis is not a fringe behavior but a mainstream health information strategy, particularly among younger adults, lower-income users, and those with limited healthcare access. The motivations are rational: AI is fast, accessible, free, and non-judgmental, whereas seeing a doctor can mean a 19-day wait for an appointment.

The risks are also well-documented and specific: AI cannot perform physical examinations, does not have access to full medical histories, can hallucinate incorrect information with authority, carries documented mental health biases, and is associated with high rates of non-follow-up with professionals. The follow-up gap is the clearest clinical concern in the current research, and it is sharpest in the mental health context, where the stakes are highest.

The most useful mental model for using AI in a health context is to treat it like a friend with medical knowledge: they can help you understand what you're dealing with and what questions to ask, but they are not your doctor, they cannot examine you, and their limitations are real even when they sound confident.

Frequently Asked Questions

How many people are using AI to self-diagnose?

A January 2026 survey by Confused.com Life Insurance found that 59% of UK adults use AI to self-diagnose health conditions. A KFF Tracking Poll conducted February to March 2026 found that approximately one in three US adults has turned to an AI chatbot for health information in the past year, including 29% who used AI for physical health questions and 16% for mental health questions.

What are people actually asking AI about their health?

Among UK adults using AI for health queries, symptom checks are most common at 63%, followed by medication side effects at 50%, wellbeing and lifestyle questions at 38%, treatment options at 30%, and mental health support at 20%. In the US, most AI health users report seeking general information about health conditions or symptoms, with a significant share uploading personal medical information including test results for more personalized responses.

Do people follow up with a doctor after asking AI?

Many do not. The KFF poll found that 42% of people who asked AI about physical health symptoms did not subsequently consult a doctor. For mental health, the figure was higher: 58% of people who asked AI about mental health concerns did not follow up with a mental health professional. Younger adults, particularly those aged 18 to 29, were less likely to seek follow-up care across both categories.

What are the clinical limitations of AI health diagnosis?

AI cannot conduct physical examinations, which are essential to a large proportion of diagnoses. It does not have access to a patient's full medical history, including family history, prior conditions, or previous test results. AI systems can hallucinate, producing false medical information that sounds authoritative. Research has also documented that AI expresses stigma toward people with mental health conditions and provides inappropriate advice in mental health scenarios. These are structural limitations, not fixable with better prompting.

Is it safe to upload medical records to an AI chatbot?

The KFF poll found that 77% of adults are concerned about the privacy of personal health information shared with AI tools, including 65% of people who have already done so. A 2025 study found that six leading American AI developers use user chat data to train their models. Users sharing personal medical information should review each platform's specific data handling policies before uploading sensitive health documents.

Why are people turning to AI instead of doctors?

Speed and access are the primary drivers. The average wait for a GP appointment in the UK was 19 days at the time of the Confused.com survey, and 42% of AI health users cited AI's speed as the reason they turned to it. The KFF US poll found that one in five AI health users cited difficulty affording or accessing healthcare as a contributing factor. Among younger adults, feeling more comfortable with AI than with a face-to-face conversation with a healthcare professional also plays a role.

Is AI better for some health questions than others?

Research indicates that AI is most useful as a first information layer, for understanding what symptoms might indicate, generating questions for healthcare appointments, and navigating medical terminology in existing reports. It is least appropriate for acute physical symptoms requiring examination, mental health crises, worsening or severe symptoms, and situations where misdiagnosis would meaningfully delay appropriate treatment.
