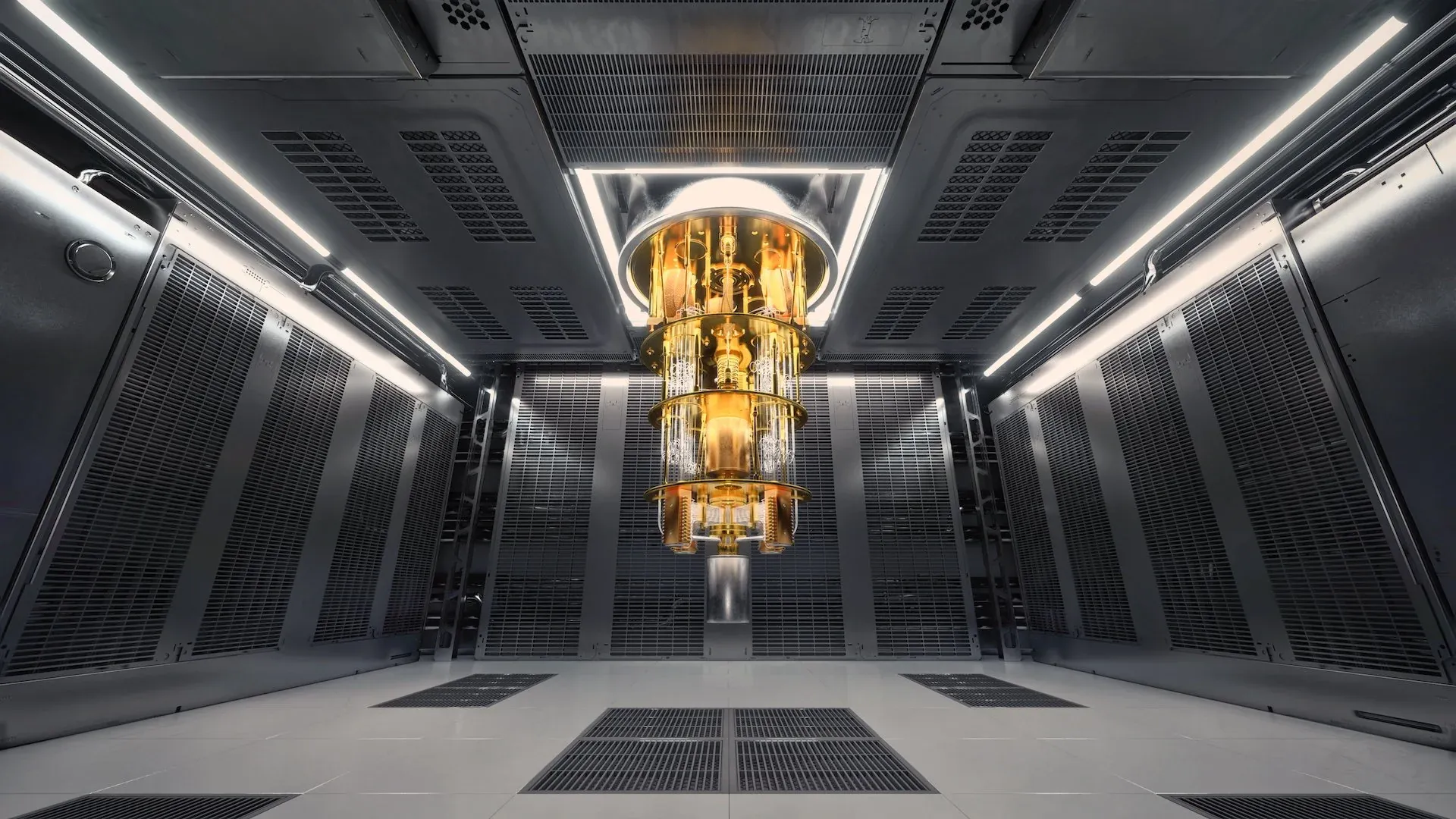

For thirty years, quantum computing's most important promise has lived almost entirely on paper.

Theorists could prove, mathematically, that a quantum computer running the right algorithm would solve certain problems exponentially faster than any classical machine. But proving it mathematically and demonstrating it on real, physical hardware are two entirely different things. Real hardware is noisy. Qubits decohere. Errors compound. And every previous claim of quantum outperforming classical rested on an assumption: that there was no classical algorithm clever enough to catch up. In June 2025, that changed.

A research team led by Dr. Daniel Lidar at the University of Southern California, working with collaborators at Johns Hopkins, published a paper in Physical Review X demonstrating an exponential quantum speedup that required no such assumption. The result was unconditional: the performance separation cannot be reversed because it does not depend on any unproven hypothesis about what classical algorithms can or cannot do.

This is the milestone the field has been working toward since Daniel Simon proved in 1994 that it should theoretically be possible. The gap between theoretical possibility and experimental fact has now, for one specific class of problem, been closed.

What "Unconditional" Actually Means

When researchers demonstrate that a quantum computer solves a problem faster than a classical one, the standard counterargument is: you have not proven there is no classical algorithm that could match the quantum performance. Maybe no one has found that algorithm yet. Maybe it exists and is simply undiscovered. Prior quantum speedup claims depended on the assumption that classical methods could not be significantly improved.

An unconditional speedup removes that escape hatch.

For Simon's problem, there is a rigorous mathematical proof that no classical algorithm, regardless of how clever, can match the quantum performance as the problem scales. The speedup does not rest on an assumption. It is provable from first principles.

"The performance separation cannot be reversed because the exponential speedup we've demonstrated is, for the first time, unconditional." — Dr. Daniel Lidar, USC

What is Simon's Problem?

Simon's problem involves finding a hidden repeating pattern in a mathematical function. It can be framed as a guessing game: players try to identify a secret number known only to an oracle (a black box) by asking as few questions as possible.

| Solver | Queries required as problem scales |
|---|---|
| Classical computer | Exponentially many |
| Ideal quantum computer | Logarithmic only |

The gap widens without limit as the problem scales. That is precisely what makes it an exponential speedup.
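To make the query gap concrete, here is a minimal classical simulation of the textbook version of Simon's problem (the experiment used a modified version, which this sketch does not reproduce). The function names, the seed, and the 12-bit secret are all hypothetical. The quantum side is only imitated: each "run" returns a random bitstring `y` whose bitwise inner product with the secret is zero, which is what an ideal Simon circuit would yield on measurement.

```python
import random

def dot2(a: int, b: int) -> int:
    """Bitwise inner product of two bitstrings, modulo 2."""
    return bin(a & b).count("1") % 2

def make_oracle(n: int, s: int):
    """Build a 2-to-1 function on n-bit integers with f(x) == f(x ^ s)."""
    table = {}
    for x in range(2 ** n):
        if x not in table:
            table[x] = table[x ^ s] = len(table) // 2
    return table.__getitem__

def classical_collision_search(f, n: int, rng: random.Random):
    """Query inputs in random order until two outputs collide."""
    order = list(range(2 ** n))
    rng.shuffle(order)
    seen = {}
    for queries, x in enumerate(order, start=1):
        y = f(x)
        if y in seen:
            return seen[y] ^ x, queries  # colliding inputs differ by s
        seen[y] = x
    raise ValueError("oracle is not 2-to-1")

def sample_quantum(n: int, s: int, rng: random.Random) -> int:
    """Stand-in for one ideal circuit run: uniform y with y.s = 0 (mod 2)."""
    while True:
        y = rng.randrange(2 ** n)
        if dot2(y, s) == 0:
            return y

def insert_into_basis(basis: dict, y: int) -> bool:
    """Reduce y against a GF(2) basis keyed by leading bit; keep it if new."""
    while y:
        lead = y.bit_length() - 1
        if lead not in basis:
            basis[lead] = y
            return True
        y ^= basis[lead]
    return False

def simulated_simon_sampling(n: int, s: int, rng: random.Random):
    """Collect samples until n-1 are independent, then solve for s."""
    basis, samples = {}, 0
    while len(basis) < n - 1:
        insert_into_basis(basis, sample_quantum(n, s, rng))
        samples += 1
    # Brute-force post-processing for brevity (no oracle queries involved);
    # Gaussian elimination would do this step in polynomial time.
    for cand in range(1, 2 ** n):
        if all(dot2(cand, b) == 0 for b in basis.values()):
            return cand, samples
    raise ValueError("no nonzero solution found")

if __name__ == "__main__":
    n, secret = 12, 0b101100111010  # hypothetical problem size and secret
    rng = random.Random(7)
    _, classical = classical_collision_search(make_oracle(n, secret), n, rng)
    _, quantum = simulated_simon_sampling(n, secret, rng)
    print(f"classical queries: {classical}, simulated quantum samples: {quantum}")
```

For this textbook version, the classical search typically needs on the order of 2^(n/2) queries before a collision appears, while the sampling route needs only slightly more than n samples. Increasing `n` in the demo makes the difference grow quickly.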

Why it matters beyond the lab: Simon's problem is the direct precursor to Shor's algorithm, the quantum algorithm capable of breaking RSA encryption. Both belong to the same mathematical family: the abelian hidden subgroup problem. The USC result does not mean encryption is broken today, but it establishes, on physical hardware, the algorithmic mechanism that underlies that long-term concern.

How the Team Actually Did It

The theoretical proof existed. What was missing was a way to run the circuits on real hardware without noise destroying the advantage.

Noise is the central challenge of practical quantum computing. Qubits are extraordinarily sensitive: interactions with the environment, manufacturing imperfections, and the physical act of measuring a qubit all introduce errors. Past a certain circuit depth, errors accumulate and the quantum advantage disappears.

The team showed quantum speedup for circuits involving up to 58 qubits at Hamming weights (the number of 1s in the hidden bitstring) up to seven. At Hamming weights eight and nine, the deeper circuits became too noisy and the classical approach caught up again.
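As a small illustration of what restricting the Hamming weight does to the problem, the sketch below counts how many hidden bitstrings are possible at a given weight cap. The helper names and the 20-bit example are hypothetical and are not taken from the paper.

```python
from math import comb

def hamming_weight(bits: int) -> int:
    """Number of 1s in the binary representation of a bitstring."""
    return bin(bits).count("1")

def candidates_up_to_weight(n: int, w: int) -> int:
    """Count nonzero n-bit strings whose Hamming weight is at most w."""
    return sum(comb(n, k) for k in range(1, w + 1))

if __name__ == "__main__":
    n = 20  # hypothetical bitstring length, for illustration only
    for w in (1, 3, 5, 7):
        print(f"weight <= {w}: {candidates_up_to_weight(n, w)} candidates")
```

A lower weight cap means fewer possible secrets and, in the experiment described above, shallower circuits. That is the trade-off the weight restriction exploits.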

Four techniques made the demonstration possible, working together:

1. Circuit optimization: The researchers compressed circuits to minimize depth, reducing the number of operations and limiting noise accumulation before measurement.

2. Restricted input complexity: By limiting the Hamming weight of the hidden bitstrings, they kept computation within the range where today's hardware performs reliably.

3. Dynamical decoupling: This error suppression technique applies carefully timed pulse sequences to idle qubits, preventing them from drifting due to environmental interference. It had the most dramatic impact on maintaining the speedup.

4. Measurement error mitigation: Even after dynamical decoupling, measuring qubits introduces its own errors. The team applied post-processing corrections to filter out readout noise from final results.
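The last technique can be illustrated with a generic single-qubit readout correction. This is a sketch of the standard confusion-matrix inversion idea, not necessarily the procedure the team used. The function name and the flip probabilities are assumptions, and in practice the flip rates would come from calibration runs.

```python
def mitigate_readout(p0_measured: float, flip_0_to_1: float, flip_1_to_0: float) -> float:
    """Estimate the true probability of outcome 0 from a noisy measurement.

    Model (assumed): p0_measured = (1 - flip_0_to_1) * p0_true
                                   + flip_1_to_0 * (1 - p0_true)
    Solving for p0_true inverts the 2x2 readout confusion matrix.
    """
    det = 1.0 - flip_0_to_1 - flip_1_to_0
    if det <= 0.0:
        raise ValueError("readout is too noisy to invert")
    p0_true = (p0_measured - flip_1_to_0) / det
    # Statistical noise can push the estimate slightly outside [0, 1].
    return min(1.0, max(0.0, p0_true))

if __name__ == "__main__":
    # Hypothetical calibration values, for illustration only.
    flip_0_to_1, flip_1_to_0 = 0.02, 0.05
    true_p0 = 0.80
    noisy_p0 = (1 - flip_0_to_1) * true_p0 + flip_1_to_0 * (1 - true_p0)
    print(f"measured: {noisy_p0:.3f}, mitigated: "
          f"{mitigate_readout(noisy_p0, flip_0_to_1, flip_1_to_0):.3f}")
```

Applying the same idea to many qubits at once requires larger confusion matrices or approximations, which is part of why this counts as statistical post-processing rather than true error correction.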

"The key was squeezing every ounce of performance from the hardware: shorter circuits, smarter pulse sequences, and statistical error mitigation." — Phattharaporn Singkanipa, lead author, USC

The result is not that quantum computers can now solve any problem faster than classical. It is that on a specific, rigorously defined problem, the quantum advantage is real, measurable, and provably irreversible as the problem scales.

IBM's Concurrent Hardware Push: Nighthawk and Loon

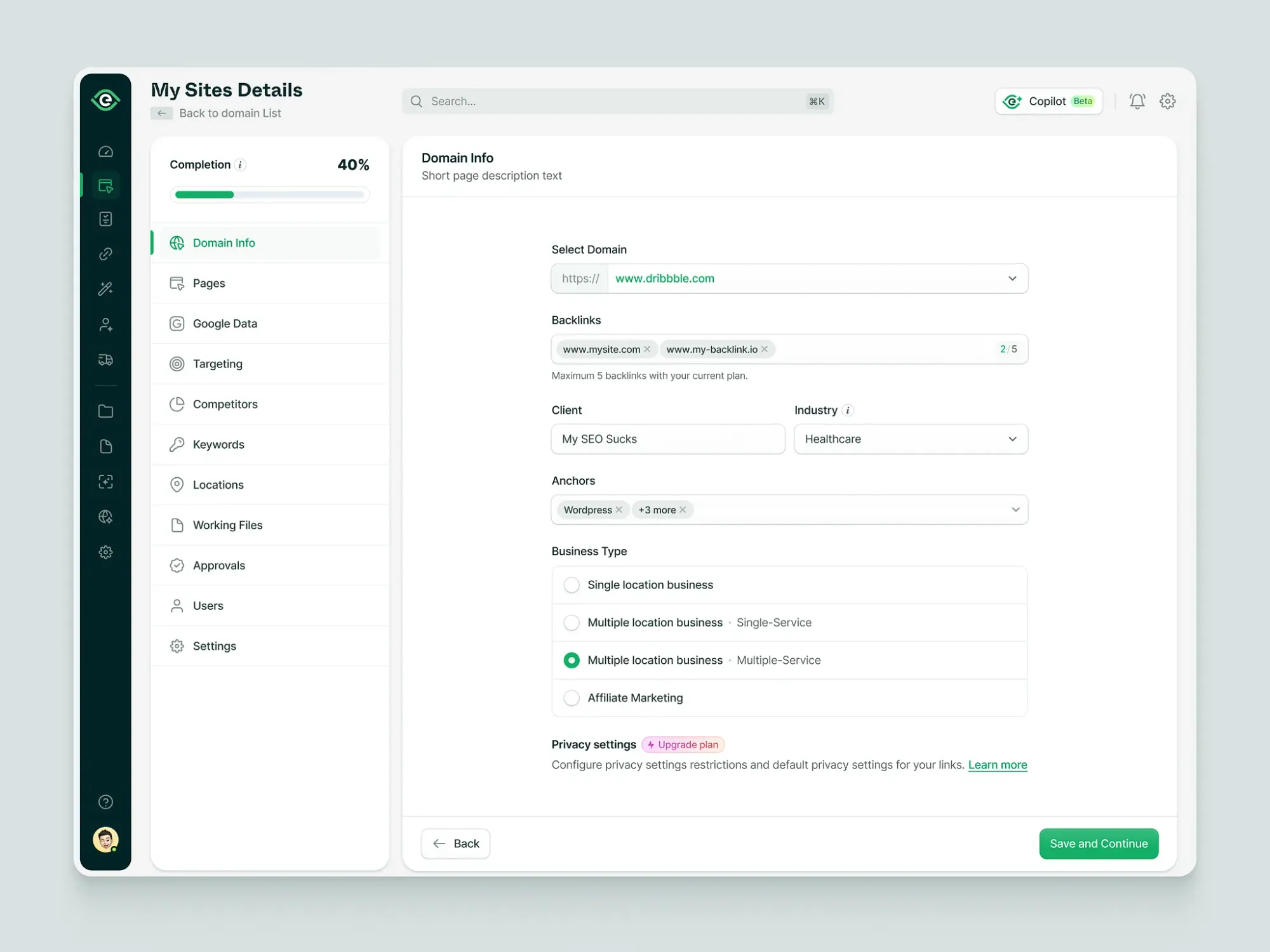

The USC result used IBM's existing Eagle processors. At IBM's Quantum Developer Conference in November 2025, the company unveiled two new processors representing the next phases of its strategy.

IBM Quantum Nighthawk: The Advantage Machine

Purpose: Designed specifically to deliver verified quantum advantage by end of 2026

| Spec | Detail |
|---|---|
| Qubits | 120 |
| Couplers | 218 next-generation tunable couplers |
| Topology | Square lattice |
| Connectivity vs. Heron | 20% greater |
| Circuit complexity vs. Heron | 30% more complex |
| Max gates (2025) | 5,000 two-qubit gates |

Gate depth roadmap:

- End of 2026: up to 7,500 gates

- 2027: up to 10,000 gates

- 2028: up to 15,000 gates with 1,000+ connected qubits

Gate depth targets matter more than qubit count. The number of operations a quantum computer can execute before noise overwhelms the result is what determines practical usefulness. IBM expects the first verified quantum advantage cases on Nighthawk to be confirmed by the research community by end of 2026.
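A rough way to see why the gate budget matters: if every gate failed independently with the same probability, the chance that a circuit runs with no error at all would shrink geometrically with the gate count. The sketch below uses that deliberately crude model. The error rate is a hypothetical placeholder, not an IBM specification, and real devices combine error suppression and mitigation in ways this model ignores.

```python
import math

def circuit_success_estimate(num_gates: int, error_per_gate: float) -> float:
    """Probability that no gate fails, assuming independent, uniform errors."""
    return (1.0 - error_per_gate) ** num_gates

def max_gates_for_target(error_per_gate: float, target_success: float) -> int:
    """Largest gate count whose error-free probability stays above the target."""
    return int(math.log(target_success) / math.log(1.0 - error_per_gate))

if __name__ == "__main__":
    p = 1e-3  # hypothetical error per two-qubit gate
    for gates in (5_000, 7_500, 10_000, 15_000):
        print(f"{gates:>6} gates -> error-free probability "
              f"{circuit_success_estimate(gates, p):.2e}")
    print("gates allowed at 50% success:", max_gates_for_target(p, 0.5))
```

Under this model, raising the gate budget only helps if per-gate error rates fall alongside it, which is why depth targets say more about practical usefulness than qubit counts do.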

IBM Quantum Loon: The Fault-Tolerance Testbed

Purpose: Designed not to beat classical benchmarks but to test fault-tolerant quantum computing components together for the first time

| Spec | Detail |
|---|---|
| Qubits | 112 |
| Role | Experimental fault-tolerance architecture testbed |
| Key new features | C-couplers, multi-layer routing, qubit reset circuits |

Fault tolerance is the field's ultimate goal. A fault-tolerant quantum computer detects and fixes errors in real time, meaning it can run circuits of arbitrary depth. Loon demonstrates all required hardware components integrated together on a single chip for the first time.

The path from Loon leads to Kookaburra (2026), then Cockatoo (2027), and ultimately to Starling in 2029: IBM's first fault-tolerant quantum computer targeting 100 million operations on 200 logical qubits.

The 300mm Manufacturing Shift

Alongside the new chips, IBM announced a transition to a 300mm semiconductor fabrication facility at the Albany NanoTech Complex, the same scale used for leading classical chips.

Impact so far:

- Development speed doubled, cutting processor build time by at least half

- Physical complexity of quantum chips increased tenfold

- Multiple chip designs can now be explored in parallel

Faster iteration is one of the most undervalued advantages in hardware development. IBM is now applying it directly to quantum processors.

IBM's Open Verification Strategy: Why It Matters

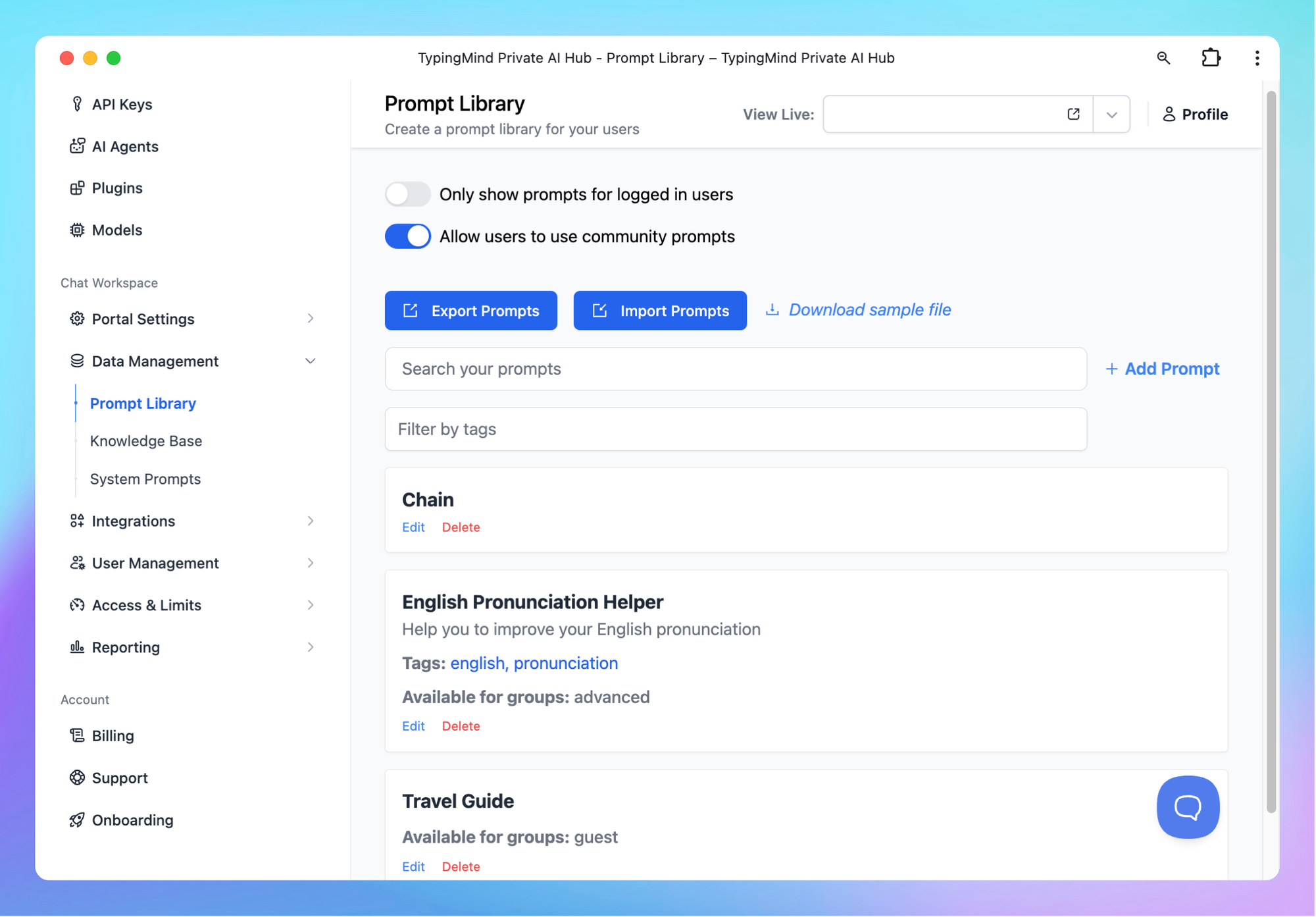

One of the most consequential aspects of IBM's approach is not a chip specification. It is the decision to set up an open, community-led quantum advantage tracker, with results contributed by IBM, Algorithmiq, the Flatiron Institute, and BlueQubit.

The tracker covers three experiment categories:

- Observable estimation

- Variational problems

- Classically verifiable problems

Any researcher can submit results. Any researcher can attempt to beat quantum experiments with improved classical methods. IBM's position is explicit: advantage is not claimed until the broader community validates the separation.

This approach serves two functions:

- It guards against the reputation damage of a quantum advantage claim that later gets reversed

- It forces the quantum field to engage seriously with the best classical methods, rather than benchmarking against deliberately weak baselines, which has been a recurring criticism of prior milestone claims

"We argue that the community hasn't achieved advantage yet, because we have not yet met key criteria: rigorous validation of the quantum computation and a demonstrable quantum separation measured in terms of efficiency, cost-effectiveness, accuracy, or some combination of the three." — IBM Quantum team, QDC 2025

Naming exactly why the current state of the art falls short of full advantage, while mapping the specific criteria needed to get there, is a mark of scientific integrity, and it is what gives IBM's 2026 timeline credibility.

The Competitive Landscape

IBM's roadmap does not exist in isolation. Several organizations are pursuing quantum advantage on parallel timelines.

| Company | Approach | Key milestone | Status |
|---|---|---|---|
| IBM | Superconducting transmon qubits | Quantum advantage by end of 2026 | Nighthawk delivered, tracker live |
| Google | Superconducting transmon qubits | Willow: below-threshold error correction | Delivered Dec 2024 |
| Microsoft | Topological qubits (Majorana fermions) | Fault-tolerance hardware | Early stage, 2025 unveil |
| Quantinuum | Trapped-ion qubits | High gate fidelity at smaller scale | Competitive on accuracy |
| China | Optical quantum chip | AI workload speedup claims | Independent verification limited |

Google's Willow chip demonstrated that adding more physical qubits to an error correction code actually improved logical qubit performance rather than making it worse: a significant fault-tolerance milestone.
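"Below threshold" is often summarized with an error-suppression factor: each time the code distance grows by two (which requires more physical qubits), the logical error rate is divided by that factor. The sketch below shows the scaling relation under that assumption. The base error rate and the suppression values are hypothetical and are not Willow's measured figures.

```python
def logical_error_rate(distance: int, base_rate: float, suppression: float) -> float:
    """Logical error rate at an odd code distance, anchored at distance 3.

    Assumed scaling: rate(d) = base_rate / suppression ** ((d - 3) / 2).
    suppression > 1 means the device operates below threshold.
    """
    if distance < 3 or distance % 2 == 0:
        raise ValueError("distance must be an odd integer >= 3")
    return base_rate / suppression ** ((distance - 3) / 2)

if __name__ == "__main__":
    base = 3e-3  # hypothetical logical error rate at distance 3
    for factor in (2.0, 0.8):  # below threshold vs. above threshold
        rates = [logical_error_rate(d, base, factor) for d in (3, 5, 7)]
        print(f"suppression {factor}: " + ", ".join(f"{r:.2e}" for r in rates))
```

With a factor above one, bigger codes make the logical qubit better. With a factor below one, adding qubits makes it worse, which was the situation error correction experiments had to get past.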

Microsoft's topological qubits are theoretically far more noise-resistant than conventional qubit designs and could offer a faster path to fault tolerance, though the technology is earlier in maturity than IBM's or Google's approaches.

The honest competitive picture: IBM has the most public, rigorously tracked roadmap with the most transparent verification methodology. That does not mean IBM will win, but it means IBM's progress is the most interpretable from the outside.

What Quantum Advantage Actually Changes (and What It Does Not)

The USC result, and the verified advantage IBM expects to demonstrate on Nighthawk by end of 2026, will be real milestones. They will also be narrow ones.

What It Means

Quantum computers can now demonstrate, on specific problem types, performance that classical machines cannot match as problems scale. This is not a theoretical prediction. It is a measured, published, peer-reviewed result. The step from "could theoretically do this" to "has experimentally done this" has been taken.

Industry applications with the same underlying mathematical structures:

- Molecular simulation for drug discovery

- Materials science optimization

- Certain financial portfolio problems

- Specific categories of machine learning

IBM's partners, including RIKEN, Boeing, Cleveland Clinic, Algorithmiq, and Oak Ridge National Laboratory, are already running quantum algorithms on Nighthawk-class systems. One demonstration by Algorithmiq on a 65-qubit IBM Heron processor simulated a biomolecule's binding site to a cancer-related target, achieving accuracy far beyond previous methods and enabling discrimination between two drug-candidate conformations.

What It Does Not Mean

The advantage is oracle-bound, for now. Simon's problem requires an oracle (a black box that knows the answer in advance). Most real-world problems are not oracle-based. Demonstrating advantage on oracle problems is necessary but not sufficient for advantage on the computational problems industries care about most.

Noise still limits the ceiling. The exponential advantage exists up to 58 qubits before noise wins. That ceiling will rise as hardware improves, but translating it into broad practical utility requires the fault-tolerant systems IBM targets for 2029, not 2026.

Quantum is not replacing classical. IBM's framing is explicit: quantum will form the core of a quantum-centric supercomputing architecture, where quantum processing units handle specific circuit-based computations while classical GPUs and CPUs handle the supporting work. These machines are complementary, not competitive in general.

The Roadmap to 2029 and What to Watch

IBM has a specific, public, timestamped roadmap that it has hit consistently since debuting it in 2019.

| Year | Milestone | Status |
|---|---|---|
| 2025 | Nighthawk (120 qubits, 5,000 gates) | Delivered Nov 2025 |
| 2025 | Loon (fault-tolerance component testbed) | Delivered Nov 2025 |
| 2026 | Verified quantum advantage | Targeted, in progress |
| 2026 | Kookaburra (first logical qubit module) | On roadmap |
| 2027 | Cockatoo (entanglement between modules) | On roadmap |
| 2028 | Magic state injection with multiple modules | On roadmap |
| 2029 | Starling (200 logical qubits, 100M gates) | Targeted |

The near-term milestone to watch: Verified quantum advantage on a practical problem type by end of 2026, confirmed through the open tracker rather than declared unilaterally. If that happens under IBM's community validation framework, it will represent the field's most credible milestone to date.

The long-term milestone to watch: Starling in 2029. That system, if realized as described, would represent a qualitative shift in what quantum computers can do and how widely applicable quantum advantage becomes, not an incremental improvement in specific benchmarks.

From Simon's problem as a thought experiment in 1994 to two 127-qubit processors demonstrating the same exponential speedup on physical hardware accessible over the cloud in 2025: the distance already traveled is remarkable. The distance remaining is also substantial. Neither of those things makes the other less true.

Frequently Asked Questions

What is quantum advantage and why does it matter?

Quantum advantage is the point at which a quantum computer solves a specific problem more efficiently, accurately, or cheaply than any classical computer can. It matters because quantum computers operate on fundamentally different physical principles, allowing them to explore vast computational spaces simultaneously in ways classical machines cannot. Achieving verified quantum advantage proves that the theoretical promise of quantum computing translates into real, measurable performance in practice.

What exactly did the USC team demonstrate with IBM's quantum processors?

The team demonstrated an unconditional exponential speedup for a modified version of Simon's problem, using two IBM Quantum Eagle processors with 127 qubits each. The speedup is unconditional because it does not depend on assumptions about classical algorithm limitations; the advantage is mathematically proven to be irreversible as the problem scales. The result was published in Physical Review X in June 2025.

What is Simon's problem and why is it significant?

Simon's problem involves finding a hidden repeating pattern in a mathematical function. Its significance is that it is the direct precursor to Shor's algorithm, the quantum algorithm that can factor large numbers exponentially faster than classical methods and could eventually break widely used encryption schemes. Simon's problem belongs to the same mathematical family (the abelian hidden subgroup problem), so demonstrating exponential speedup on Simon's problem validates the core mechanism that makes Shor's algorithm theoretically powerful.

Does this mean encryption is now at risk?

Not imminently. The USC result demonstrated advantage on circuits up to 58 qubits before noise limited performance. Breaking current encryption with Shor's algorithm would require a fault-tolerant quantum computer running circuits with millions of operations on thousands of logical qubits, which IBM's own roadmap targets for post-2029 timelines. The result establishes the theoretical mechanism on physical hardware; the scale needed for practical cryptographic implications is substantially larger.

What is IBM's quantum computing roadmap through 2029?

IBM is targeting verified quantum advantage on practical problems by the end of 2026, using the Nighthawk processor in combination with its open quantum advantage tracker for community validation. The Kookaburra processor in 2026 will demonstrate the first modular logical qubit storage. The Cockatoo processor in 2027 will demonstrate entanglement between separate processor modules. In 2029, IBM targets Starling: a fault-tolerant quantum computer with 200 logical qubits capable of running 100 million quantum operations per job.

How does IBM's approach compare to Google, Microsoft, and other quantum competitors?

IBM uses superconducting transmon qubits and has the most public and transparently tracked roadmap in the field. Google's Willow chip demonstrated below-threshold error correction in 2024. Microsoft is pursuing topological qubits based on Majorana fermions, a different hardware approach that is theoretically more noise-resistant but earlier in maturity. Quantinuum uses trapped-ion qubits with high gate fidelity at smaller scales. IBM's differentiation is its insistence on community-verified advantage rather than self-declared milestones, its open quantum advantage tracker, and its consistent record of hitting roadmap targets on schedule since 2019.
