Uber's Chief Technology Officer Praveen Neppalli Naga delivered a candid admission to The Information this week: the company has already spent its entire annual AI budget, and it is only April.

"I'm back to the drawing board because the budget I thought I would need is blown away already," Naga said.

The culprit is not an infrastructure overrun or a failed contract. It is a coding assistant: Anthropic's Claude Code, which spread through Uber's engineering organization faster than anyone in finance anticipated, at a cost no one had modeled.

What Actually Happened at Uber

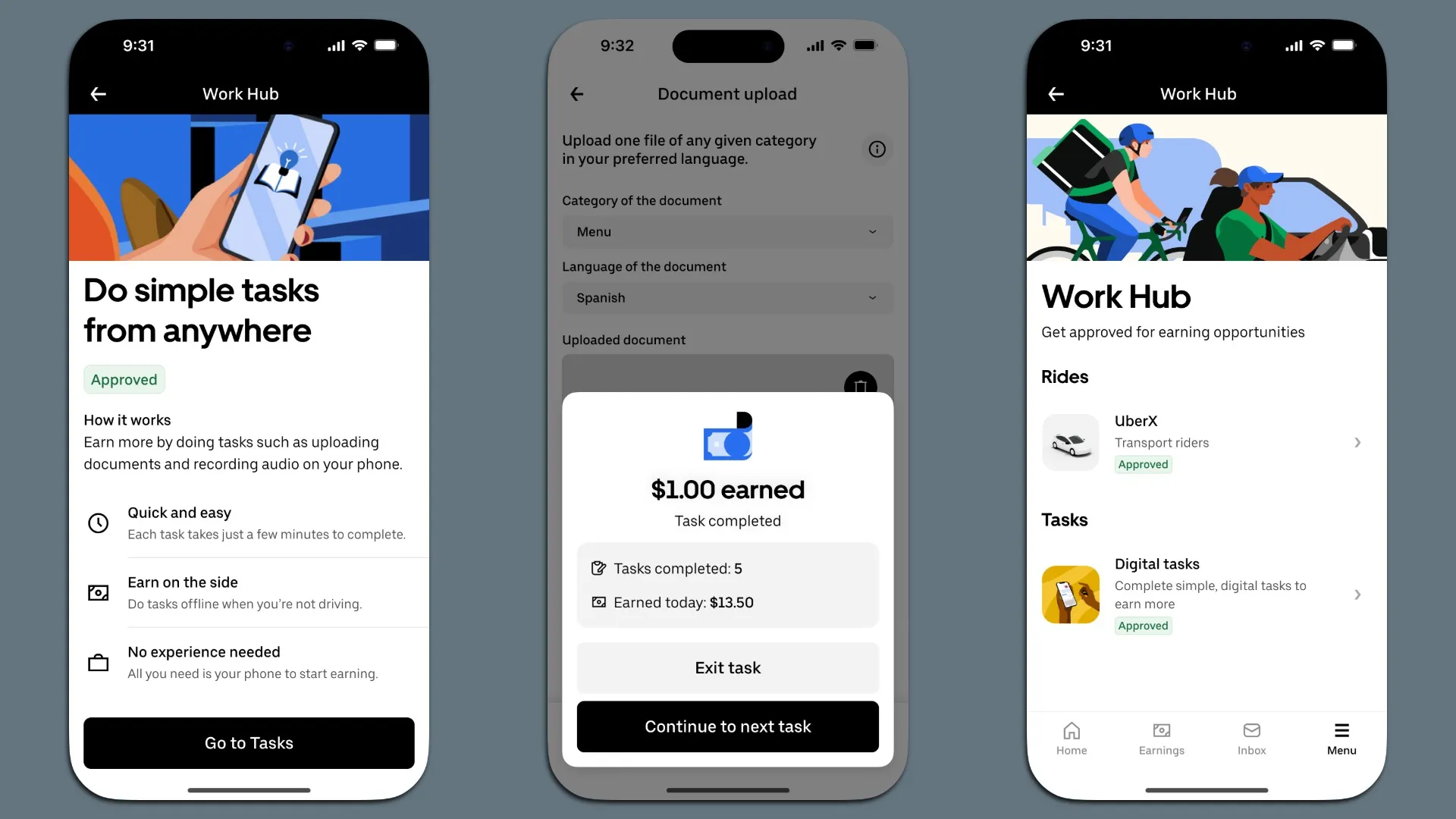

Uber did not stumble into AI tool adoption; it actively encouraged it.

Engineers were given access to Claude Code, Cursor, and other AI coding tools, and the company built internal leaderboards ranking teams by usage to drive adoption. The strategy worked. Within a few months, AI tool usage at Uber went from measured to pervasive.

By March 2026, the Pragmatic Engineer newsletter reported that 84% of Uber's developers were classified as agentic coding users. Between 65% and 72% of code produced inside IDE-based tools was AI-generated. Claude Code usage specifically nearly doubled in three months, climbing from 32% of developers using it in December to 63% in February. Cursor and IntelliJ-based tools plateaued over the same period.

The productivity numbers were real: approximately 11% of all pull requests at Uber are now opened by AI agents, and around 11% of live backend code updates are written by AI systems, which handle everything from ride-matching algorithms to dynamic pricing and bug fixes. AI-related costs, however, have risen roughly 6x since 2024, and the full-year budget Naga had set aside for AI tooling was gone before the second quarter started.

Why Claude Code Is Different from What Finance Expected

Enterprise budgeting for software tools typically assumes a per-seat model with predictable monthly costs. Claude Code does not work that way at scale.

The tool is usage-based. Engineers running agentic workflows, where Claude Code reads entire codebases, plans changes across dozens of files, runs tests, and opens pull requests autonomously, consume tokens at rates that bear no resemblance to a flat per-seat subscription. A developer using Claude Code to orchestrate parallel agents on a complex refactor consumes far more compute than one asking it an occasional one-off question.
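To see why consumption diverges from a per-seat figure, here is a minimal sketch of a per-engineer monthly cost estimate under token-based billing. The session counts, tokens per session, parallel-agent count, and the blended price per million tokens are all hypothetical placeholders, not Anthropic's published rates or Uber's actual usage; the point is the shape of the calculation, not the numbers.

```python
from dataclasses import dataclass

# Hypothetical blended price per million tokens (input and output combined).
# Replace with the rates from your actual contract or the vendor's pricing page.
PRICE_PER_MILLION_TOKENS = 10.0


@dataclass
class UsageProfile:
    """Assumed usage pattern for one engineer; every number here is illustrative."""
    name: str
    sessions_per_day: int
    tokens_per_session: int    # tokens read and written in one session
    parallel_agents: int = 1   # concurrent agents, each consuming a full session's tokens
    workdays_per_month: int = 21


def monthly_cost(profile: UsageProfile,
                 price_per_million: float = PRICE_PER_MILLION_TOKENS) -> float:
    """Estimate monthly spend in dollars for one engineer under token-based billing."""
    tokens = (
        profile.sessions_per_day
        * profile.tokens_per_session
        * profile.parallel_agents
        * profile.workdays_per_month
    )
    return tokens / 1_000_000 * price_per_million


light = UsageProfile("occasional questions", sessions_per_day=5, tokens_per_session=20_000)
agentic = UsageProfile("parallel refactor agents", sessions_per_day=10,
                       tokens_per_session=500_000, parallel_agents=4)

for p in (light, agentic):
    print(f"{p.name}: ${monthly_cost(p):,.2f}/month")
```

With these made-up inputs the light profile comes to about $21 a month and the agentic one to about $4,200, a 200x gap for the same single seat. The multiplier comes entirely from session size and parallelism, which is exactly what a per-seat spreadsheet leaves out.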

Uber's CTO confirmed that Claude Code has become the dominant tool inside the company, with Cursor having plateaued. The company is now testing OpenAI's Codex as it continues to expand its AI coding stack.

The financial dynamic is not unique to Claude Code. Naga described a company-wide shift toward what he calls "agentic software engineering," in which AI systems do not just assist but handle coding, testing, and deployment, with other AI tools supervising the process. That vision is genuinely productive, and it is computationally expensive in ways that traditional IT budgeting models were not designed to capture.

Why the Budget Model Failed

Uber does not disclose its specific AI tooling budget, but the broader R&D context provides a frame. The company spent $3.4 billion on research and development in 2025, a 9% increase from the prior year, and securities filings indicate the company expects that figure to keep climbing.

The mechanism that broke the budget model is specific. Claude Code at $200 per month per engineer sounds manageable at the individual level. Multiply by 1,000 engineers running agentic workflows at scale, and you have $2.4 million per year before any additional enterprise or API usage. When those same engineers are running parallel agents, orchestrating background tasks through Uber's internal Minion platform, and using Claude Code for full-codebase refactors rather than isolated queries, the token consumption multiplies further.
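The per-seat arithmetic in the paragraph above can be written out explicitly. In the second line, N is the number of engineers, s is the $200 monthly subscription, and u is a hypothetical average of additional usage-based spend per engineer per month; u is an illustrative variable, not a figure Uber or Anthropic has disclosed.

```latex
\begin{aligned}
C_{\text{seat}}  &= \$200 \times 12 \times 1{,}000 = \$2{,}400{,}000 \text{ per year} \\
C_{\text{total}} &= 12N(s + u) = 12 \times 1{,}000 \times (\$200 + u) = \$2{,}400{,}000 + 12{,}000\,u
\end{aligned}
```

Every extra dollar of average monthly usage per engineer adds $12,000 a year at 1,000 engineers, so an assumed $500 of extra usage per engineer-month would add $6 million on top of the $2.4 million seat baseline.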

No approval gate was in place to catch subscriptions compounding as usage spread virally across the engineering organization.

The enterprise AI implementation data supports this pattern. The Zylo 2026 SaaS Management Index found that organizations spent an average of $1.2 million on AI-native apps in 2026, a 108% year-over-year increase. Coding assistants represent a disproportionate share because of their usage-based pricing and high token consumption per session. Only 21% of organizations deploying AI agents have mature governance models, according to Deloitte survey data cited by SSNTPL, leaving the majority running autonomous systems without adequate financial oversight.

Uber encouraged adoption explicitly, which is arguably better governance than letting shadow AI spread uncontrolled. The budget model still failed to anticipate the consumption curve.

The Productivity Case and Why It Complicates the Story

Framing Uber's situation purely as a cost overrun misses the actual picture. The productivity gains are documented and specific: 11% of pull requests opened by AI agents, 65% to 72% of IDE-produced code AI-generated, agentic systems handling ride-matching, pricing, and bug fixes at operational scale. These are not pilot metrics.

Naga's longer-term vision, which he describes as "agent engineers," is a model where AI systems handle coding, testing, and deployment end-to-end. The human engineer becomes an orchestrator rather than an implementer. Whether that vision reduces headcount or amplifies the output of a stable team is a question Uber has not yet answered publicly.

The cost overrun and the productivity gains are the same phenomenon: the tool is being used heavily because it works, at a scale nobody budgeted for.

What This Means for Other Enterprises

Uber's situation is a preview for any organization actively encouraging AI coding tool adoption across engineering teams. Four things to act on now:

- Model token consumption, not seats. Claude Code charges based on usage, not per-seat. Agentic workflows consume orders of magnitude more tokens than simple prompt-and-response. Finance teams need consumption models built on actual agentic patterns before deployment begins.

- Assume adoption will outpace forecasts. Uber built leaderboards to drive adoption and succeeded faster than the budget could handle. Any organization actively incentivizing AI tool use should build significant headroom into its financial model.

- Build governance before rollout, not after. Uber is rebuilding its budget model reactively. Organizations that establish usage caps, centralized procurement, and real-time cost monitoring before scaling will avoid the same situation.

- The overrun is not a failure signal. Uber's CTO is not walking anything back. He is expanding the stack. A budget that got blown through because the tool worked is a different conversation than a budget that got blown through because the tool failed.
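The consumption-model, headroom, and usage-cap recommendations above can be combined into a simple monitoring check. The sketch below is hypothetical: the team names, spend figures, per-team caps, the 30% headroom factor, and the linear run-rate projection are assumptions for illustration, and it does not call any vendor billing API. In practice the month-to-date figures would come from whatever usage reporting your provider exposes.

```python
from dataclasses import dataclass


@dataclass
class TeamSpend:
    """Month-to-date AI tooling spend for one team (hypothetical data)."""
    team: str
    spend_to_date: float   # dollars spent so far this month
    days_elapsed: int      # days of the month that have passed
    monthly_cap: float     # dollars allowed per month for this team


def projected_month_end(t: TeamSpend, days_in_month: int = 30) -> float:
    """Linear run-rate projection: assume the rest of the month looks like the part so far."""
    return t.spend_to_date / t.days_elapsed * days_in_month


def check_budget(teams: list[TeamSpend], annual_budget: float,
                 headroom: float = 0.3) -> list[str]:
    """Return alerts for teams projected past their cap and for the org-wide annual plan.

    `headroom` reserves a fraction of the annual budget for adoption that outpaces forecasts.
    """
    alerts = []
    for t in teams:
        projected = projected_month_end(t)
        if projected > t.monthly_cap:
            alerts.append(f"{t.team}: projected ${projected:,.0f} vs cap ${t.monthly_cap:,.0f}")

    # Annualize the combined monthly run rate and compare it with the budget minus headroom.
    annual_projection = sum(projected_month_end(t) for t in teams) * 12
    usable_budget = annual_budget * (1 - headroom)
    if annual_projection > usable_budget:
        alerts.append(
            f"org: annualized ${annual_projection:,.0f} exceeds usable budget ${usable_budget:,.0f}"
        )
    return alerts


teams = [
    TeamSpend("payments", spend_to_date=9_000, days_elapsed=10, monthly_cap=20_000),
    TeamSpend("maps", spend_to_date=8_000, days_elapsed=10, monthly_cap=30_000),
]
for alert in check_budget(teams, annual_budget=600_000):
    print(alert)
```

With these inputs the sketch flags the payments team (projected $27,000 against a $20,000 cap) and the organization as a whole (an annualized $612,000 against $420,000 of usable budget after headroom), while maps stays under its cap. A linear run rate is the crudest possible projection; given that adoption in Uber's case accelerated month over month, a real model would likely weight recent days more heavily.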

Conclusion

Uber's story is a documented case of what happens when a large engineering organization adopts agentic AI tools at genuine scale with a budget model built for a different era. Claude Code at 63% developer penetration, running parallel agents across a monorepo, does not cost what a per-seat spreadsheet predicts. The headline is that Uber spent its AI budget in four months. The real story is that dozens of other finance teams will have the same conversation with their CTOs before the year is out, and most of them are not prepared for it.

Frequently Asked Questions

Why did Uber's AI budget run out so quickly?

Uber's CTO Praveen Neppalli Naga attributed the budget overrun to the rapid, company-wide adoption of AI coding tools, primarily Anthropic's Claude Code. The company actively incentivized adoption through internal leaderboards, and uptake far exceeded budget forecasts. By March 2026, 84% of Uber's developers were classified as agentic coding users, and Claude Code's usage nearly doubled in three months. AI-related costs at Uber rose approximately 6x since 2024.

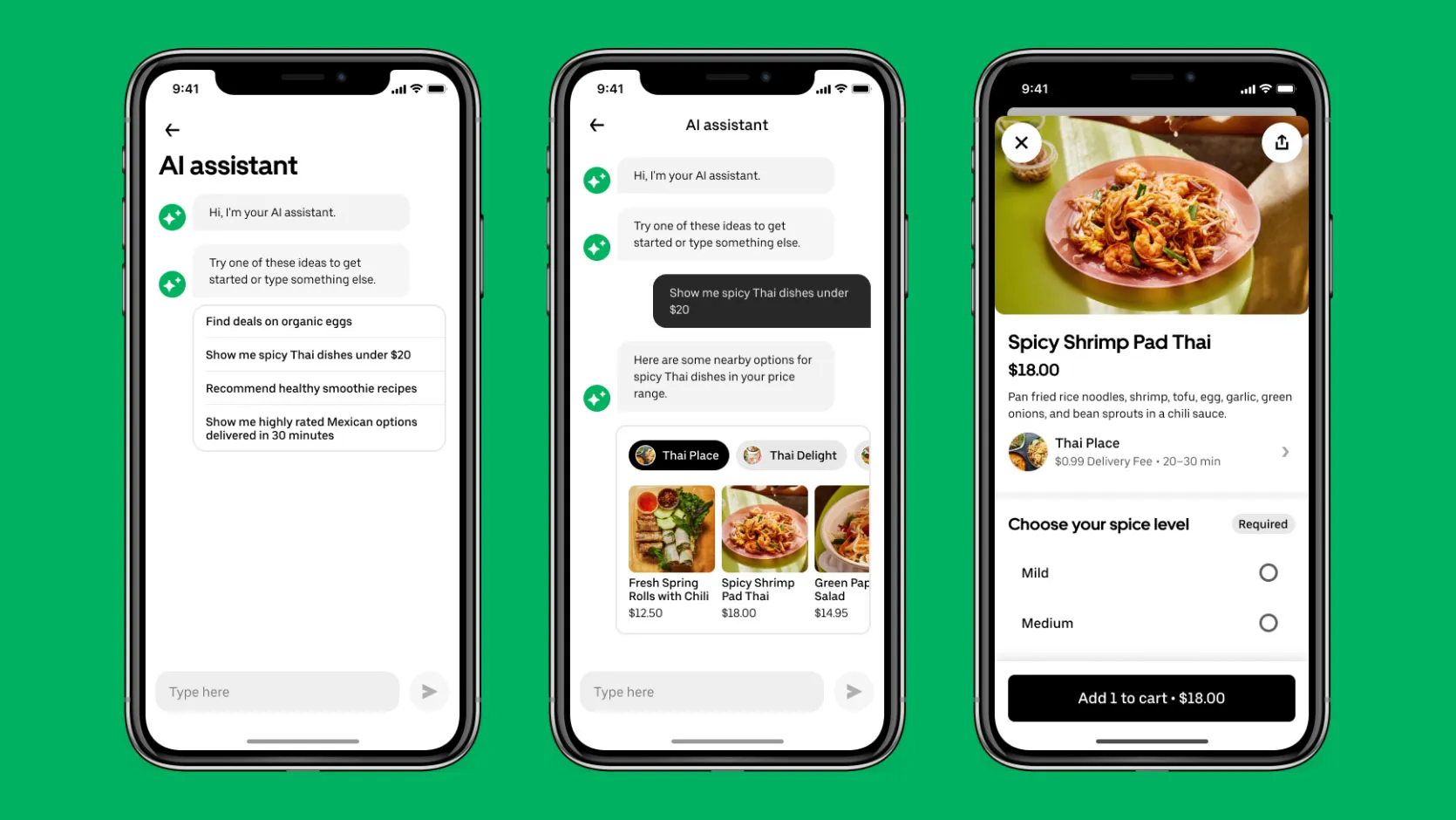

What is Claude Code and why is it expensive at scale?

Claude Code is Anthropic's terminal-native agentic coding tool. Unlike simple autocomplete tools, it reads entire codebases, plans multi-step changes across dozens of files, runs tests, and opens pull requests autonomously. It is priced on token consumption rather than a flat per-seat rate, which means costs track how intensively each engineer uses it rather than headcount alone, and they climb steeply as engineers run parallel agents and more complex agentic workflows. At 1,000 engineers using it heavily, the cost multiplies well beyond what a per-seat model would suggest.

What productivity gains is Uber seeing from AI coding tools?

By early 2026, approximately 11% of all pull requests at Uber were opened by AI agents, and 65% to 72% of code produced inside IDE-based tools was AI-generated. Around 11% of live backend code updates are written by AI systems. Uber's CTO has described a long-term vision of "agent engineers" that handle coding, testing, and deployment end-to-end, with human engineers functioning as orchestrators rather than implementers.

What should enterprise IT and finance teams do differently?

Enterprises planning agentic AI tool deployments at scale should build consumption models based on actual agentic usage patterns rather than per-seat estimates. Governance frameworks including usage caps, centralized procurement, and real-time cost monitoring should be established before deployment rather than after a budget overrun. Organizations that actively incentivize adoption should model adoption rates significantly higher than their initial forecasts.

Is Uber planning to stop using Claude Code?

No. Uber is expanding its AI stack, not contracting it. Naga stated the company is now testing OpenAI's Codex as an additional tool. The budget overrun has put Uber back to the planning stage on its AI spending model, but the direction of travel is toward more AI in engineering workflows, not less.
