OpenAI has a new model in the pipeline, and the company is not hiding it.

On March 24, 2026, The Information reported that OpenAI had completed pre-training on its next frontier model, codenamed "Spud" internally. CEO Sam Altman told employees it is "a very strong model that could really accelerate the economy." Greg Brockman, OpenAI's president, went further in an appearance on the Big Technology Podcast, describing it as the product of "two years of research" with a "big model feel" and framing it explicitly as something other than an incremental update.

As of April 9, 2026, Spud is in safety evaluation and has not been released. No official launch date has been confirmed by OpenAI.

What Is Confirmed

The confirmed facts come from a small number of attributed sources and are worth separating from the substantially larger body of speculation that has accumulated around them.

- Pre-training completed March 24, 2026. The Information first reported this, and OpenAI leadership has not contradicted it. Pre-training completion marks the end of the initial training phase. Post-training alignment, safety evaluation, red-teaming, and staged rollout preparation typically follow, adding weeks to months to the timeline before a public release.

- Altman's framing: economic acceleration. In statements to employees reported by The Information, Altman described Spud as a model that could "really accelerate the economy." That language is notable for what it emphasizes: economic function rather than benchmark position.

- Brockman's framing: generational, not incremental. On the Big Technology Podcast, Brockman said: "There are two years of research inside this model. It has a big model feel. It's not an incremental improvement. It's a significant change in the way we think about model development." He also said Spud will better understand the context of requests without users having to over-explain, making interactions more intuitive.

- Sora was shut down to fund it. OpenAI discontinued Sora, its AI video generation app, on the same day pre-training on Spud concluded. Internal reporting and multiple sources describe compute freed from Sora being redirected toward Spud. Sora was consuming an estimated $1 million per day in compute costs at peak while generating minimal revenue. The $1 billion Disney licensing deal built around Sora was absorbed as a loss.

- The commercial name is not decided. OpenAI has not confirmed whether Spud will ship as GPT-5.5 or GPT-6. The decision, according to internal discussions reported across multiple outlets, depends on how large the performance gap is relative to GPT-5.4.

The Competitive Context That Makes Spud Urgent

To understand why Spud matters to OpenAI's internal timeline, it helps to look at where the leaderboard stood before it.

GPT-5.4, released March 5, 2026, was a strong model. It introduced native computer use, a 1 million token context window, and a 33% reduction in hallucination rates compared to GPT-5.2. It scored 57.70% on SWE-bench Pro, the benchmark used to measure real-world software engineering capability.

That number looked competitive until March 26, when a data leak from Anthropic's infrastructure revealed the existence of Claude Mythos, a model Anthropic described in internal communications as "the most powerful AI model ever developed." Mythos Preview posted 77.80% on SWE-bench Pro, placing it in an entirely different tier than everything else currently available. Anthropic subsequently announced on April 7 that Mythos would not receive a general public release due to cybersecurity concerns, and would instead be made available only to select partners under its Project Glasswing initiative.

The gap between GPT-5.4 at 57.70% and Mythos at 77.80% is the benchmark context in which Spud is being discussed. Multiple sources with access to OpenAI's internal framing say the company has indicated Spud is targeting that range.

Where Things Stand on the Leaderboard (Pre-Spud)

| Model | SWE-bench Pro | Context Window | Notable |
|---|---|---|---|
| Claude Mythos Preview | 77.80% | Not disclosed | Not publicly available |
| GPT-5.4 | 57.70% | 1M tokens | Current OpenAI flagship |
| Claude Opus 4.5 | 45.89% | 200K tokens | Previous Anthropic flagship |
| Gemini 3.1 Pro Preview | 43.30% | 2M tokens | Leads 13 of 16 major benchmarks |
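
For readers who want the gaps in the table as numbers, the short sketch below computes each model's deficit to the top SWE-bench Pro score in percentage points. The scores are the ones quoted in this article. The `gap_to_leader` helper is a name introduced here purely for illustration.

```python
# SWE-bench Pro scores (percent) as quoted in this article.
scores = {
    "Claude Mythos Preview": 77.80,
    "GPT-5.4": 57.70,
    "Claude Opus 4.5": 45.89,
    "Gemini 3.1 Pro Preview": 43.30,
}


def gap_to_leader(scores):
    """Return each model's deficit to the top score, in percentage points.

    Illustrative helper, not part of any benchmark tooling.
    """
    leader = max(scores.values())
    return {name: round(leader - score, 2) for name, score in scores.items()}


gaps = gap_to_leader(scores)

# Print models from smallest to largest deficit.
for name, gap in sorted(gaps.items(), key=lambda item: item[1]):
    print(f"{name}: {gap:.2f} points behind the leader")
```

On these figures, GPT-5.4 trails Mythos Preview by 20.10 percentage points, which is the distance Spud would need to cover to reach the same tier.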

Gemini 3.1 Pro's position is worth noting. Despite a lower SWE-bench Pro score, it leads on 13 of 16 major benchmarks and matches GPT-5.4 on the Artificial Analysis Intelligence Index at roughly one third of the API cost.

The Naming Question

Whether Spud ships as GPT-5.5 or GPT-6 matters more than it might seem, because the naming itself carries market signal.

The rule OpenAI appears to be following, based on its track record with previous releases, is that a generational performance leap justifies a major version increment. An improvement that is strong but does not cross a threshold into a new capability tier stays in the minor version family.

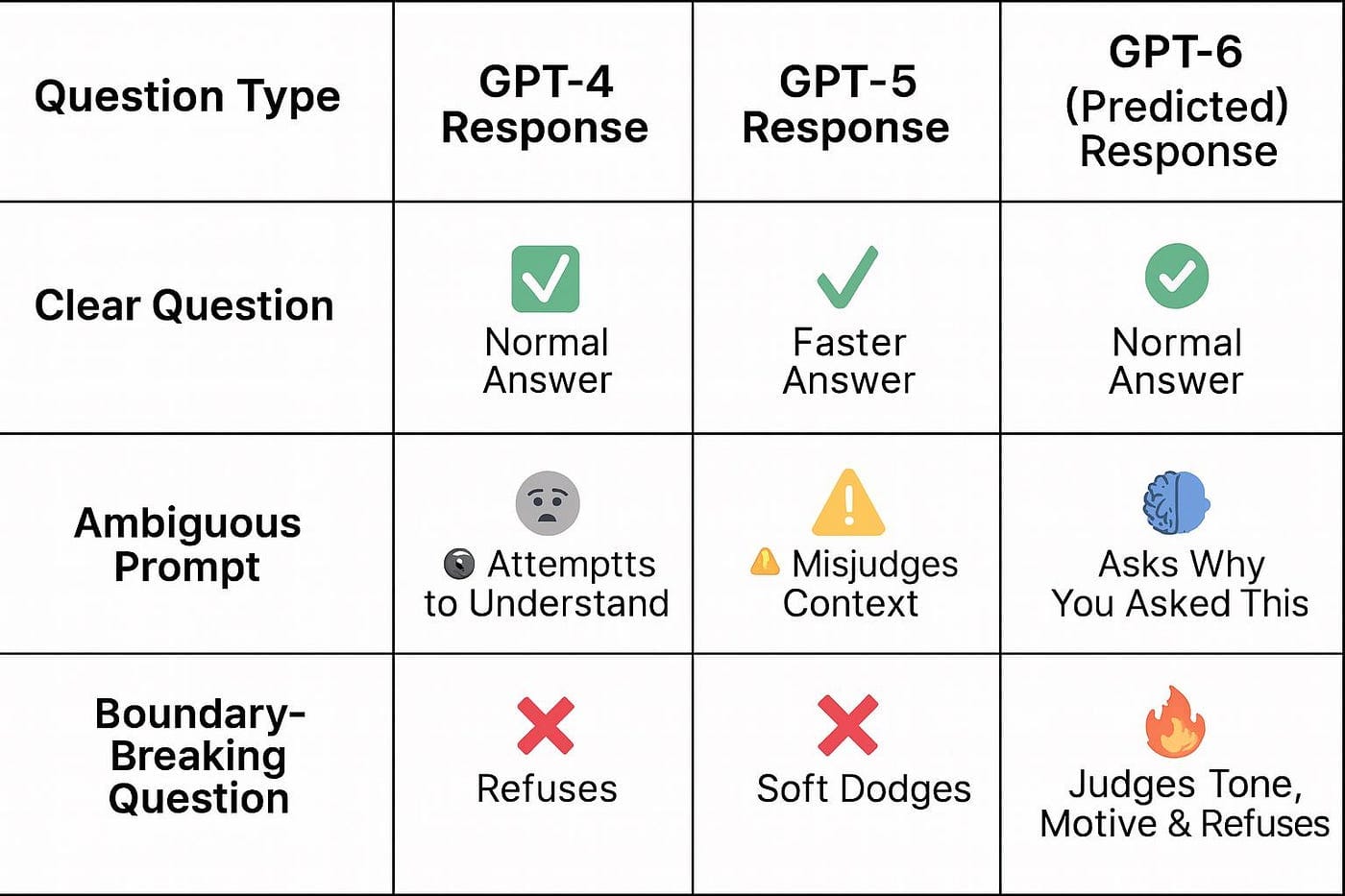

If Spud scores in the high 70s on SWE-bench Pro, matching or approaching Mythos territory, GPT-6 is the name that would fit. If it lands closer to the low 60s, GPT-5.5 is more appropriate. The "two years of research" framing Brockman used is not language anyone reaches for when describing a minor update. That said, OpenAI's model naming has been inconsistent throughout the GPT-5.x series, cycling through GPT-5.1 Codex, GPT-5.1 Codex Mini, GPT-5.2, GPT-5.3-Codex, and GPT-5.3-Codex-Spark in rapid succession, which makes the historical precedent less than reliable as a guide.

Pre-Launch Signals

Beyond the confirmed statements from Altman and Brockman, several signals from the days before this article was published suggest a near-term launch window.

On April 14, OpenAI retired GPT-5.2-Codex and GPT-5.1-Codex-Mini from Codex. Model retirements are a standard pre-launch pattern: OpenAI clears older versions before releasing the next generation. A Codex team member posted on social media that "the next few weeks will be intense and fun," which drew significant engagement without disclosing anything specific.

An unverified source posted a specific leaked release date of April 16. That date has not been confirmed by OpenAI. Prediction markets on Polymarket assign approximately 78% probability to a release by April 30 and over 95% probability by June 30.
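
The two market figures also imply a probability for the window between the two dates. The sketch below works that out, assuming both quoted numbers refer to the same release event and treating "over 95%" as a lower bound of 0.95. The `interval_probs` function is a hypothetical helper written for this illustration and does not query Polymarket.

```python
def interval_probs(p_early, p_late):
    """Split two cumulative probabilities into three disjoint outcomes.

    p_early: probability of release by the earlier date.
    p_late:  probability of release by the later date.
    Assumes both figures describe the same event (illustrative only).
    """
    if not 0.0 <= p_early <= p_late <= 1.0:
        raise ValueError("expected 0 <= p_early <= p_late <= 1")
    return {
        "by_early": p_early,
        "between": p_late - p_early,
        "after_late": 1.0 - p_late,
    }


# Figures as quoted in this article: ~78% by April 30, over 95% by June 30.
probs = interval_probs(0.78, 0.95)

for outcome, p in probs.items():
    print(f"{outcome}: {p:.0%}")
```

Read this way, the markets put roughly 17% or more on a release between May 1 and June 30, and less than 5% on a release after June 30.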

The expected rollout order, based on OpenAI's pattern with previous releases, is ChatGPT Plus and Pro subscribers first, followed by the free tier with access through the Thinking feature two to four weeks later, then the enterprise API two to four weeks after that. No pricing has been confirmed.

What Spud Is Being Built to Do

The language Altman and Brockman have used to describe Spud consistently emphasizes function over benchmark: economic acceleration, agent behavior, and more intuitive context understanding without over-prompting.

That framing aligns with OpenAI's broader product direction. The company has renamed its product division "AGI Deployment" as part of the same internal restructuring that elevated Fidji Simo to oversee product. It has been building toward a unified superapp that consolidates ChatGPT, Codex, and the Atlas browser into a single product. Spud is described internally as the model that powers that vision.

The contrast with how frontier models were discussed eighteen months ago is noticeable. The conversation has moved from capability benchmarks as the primary frame toward economic impact and workflow integration. Whether that reflects a genuine capability threshold or represents IPO-adjacent narrative management is a question the benchmarks will eventually answer.

What Remains Unknown

To be precise about what this article can and cannot confirm:

OpenAI has not disclosed Spud's architecture, parameter count, or specific capability claims beyond executive statements. All technical analysis published elsewhere on this topic is speculative inference from prior OpenAI release patterns, not information from the company. Brockman's "big model feel" description and the two-years-of-research framing are real statements. Any architecture details or Spud benchmark numbers in circulation are not confirmed.

The release window of April to May 2026 is a community consensus based on the pre-training completion date and Altman's "few weeks" statement. It is not a confirmed date. April 16 specifically comes from an unverified source and should be treated as a signal to watch rather than confirmed information.

The model could deliver the generational jump Altman and Brockman are describing. It could also be a well-marketed capable model that closes the gap with Mythos partially rather than fully. Both are possible. The benchmarks are what will settle it.

Conclusion

Spud is the most anticipated unreleased model in the AI industry right now, and the context around it is unusually rich. Pre-training completion on March 24 is confirmed. Two years of research and a significant change in model development philosophy are confirmed in Brockman's own words. The shutdown of Sora to redirect compute is confirmed. The April to May release window is a well-supported inference. Everything else is speculation.

What is not speculative is the competitive pressure. Anthropic's Mythos changed the benchmark ceiling. Google's Gemini 3.1 Pro leads most general benchmarks. DeepSeek V4 is expected in Q2. OpenAI's response is Spud, and based on what the company's own leadership has said about it, they believe it is worth the weight they are putting on it.

Frequently Asked Questions

What is OpenAI Spud?

Spud is the internal codename for OpenAI's next frontier model, currently in safety evaluation after completing pre-training on March 24, 2026, at the Stargate data center in Abilene, Texas. CEO Sam Altman called it "a very strong model that could really accelerate the economy." Greg Brockman described it as the product of two years of research with a "big model feel" that represents a significant change in how OpenAI thinks about model development.

Will Spud be called GPT-5.5 or GPT-6?

OpenAI has not confirmed the commercial name. The decision reportedly depends on how significant the performance leap is relative to GPT-5.4. If the improvement qualifies as generational, GPT-6 is expected. If it is strong but not a full generational shift, GPT-5.5 is more likely. Brockman's language about two years of research and a significant change in model development philosophy suggests OpenAI views this as more than a minor update.

When will Spud be released?

No official release date has been confirmed. Sam Altman said the model was "a few weeks" away from release on March 24, 2026. Prediction markets assign approximately 78% probability to a release by April 30 and over 95% by June 30. An unverified source has cited April 16 as a specific leaked date, but this has not been confirmed by OpenAI.

How does Spud relate to Claude Mythos and the current benchmark landscape?

GPT-5.4, OpenAI's current flagship, sits at 57.70% on SWE-bench Pro. Claude Mythos Preview posted 77.80% on the same benchmark before Anthropic announced it would not receive a public release due to cybersecurity concerns. OpenAI has indicated internally that Spud is designed to close that gap. Whether it matches Mythos territory or narrows the gap partially is the central open question about the release.

Why did OpenAI shut down Sora before releasing Spud?

Sora was consuming approximately $1 million per day in compute costs at its peak while generating minimal revenue. Downloads had declined roughly 66% between November 2025 and February 2026. OpenAI shut down the app and redirected the freed GPU resources toward completing Spud, absorbing the loss of a $1 billion Disney licensing deal built around Sora in the process.

What does "pre-training complete" mean for a release timeline?

Pre-training is the initial phase where a model learns from vast data. After pre-training, models typically go through post-training alignment, safety evaluation, red-teaming, and staged rollout preparation before public release. This process typically adds weeks to months to the timeline. Pre-training completion on March 24 means the model exists and the core development is done, but it does not mean the model is ready to deploy to users.
