For two years, Mark Zuckerberg made open-source AI a core part of Meta's identity. He argued, publicly and often, that giving away model weights for free was the strategically correct move: it built an ecosystem, it gave Meta credibility with researchers, and it created a counterweight to the closed-garden approach of OpenAI and Google.

On April 8, 2026, Meta released Muse Spark, its first major proprietary AI model, built entirely in secret, and made available to users without releasing the weights, the architecture, or the training methodology.

The Llama era is not over, but it is no longer the only story Meta is telling about itself in AI.

What Happened and Why

The sequence of events that produced Muse Spark begins in April 2025, when Meta released Llama 4 to what Engadget described as an "icy reception" from the developer community. By early 2026, Chinese competitors including Alibaba's Qwen and Zhipu AI's GLM-5 were outpacing Llama 4 Maverick on general knowledge and coding benchmarks, and on Hugging Face, Chinese open-weight models had captured 41% of downloads by late 2025, against the US share of 35%.

Zuckerberg's response was structural. In June 2025, he announced Meta Superintelligence Labs and installed Alexandr Wang as the company's first-ever chief AI officer. The Wang deal was structured as a $14.3 billion investment by Meta for a 49% non-voting stake in Scale AI, with Wang moving to Meta while remaining on Scale's board. Meta simultaneously recruited researchers from OpenAI, Anthropic, and Google, and offered some engineers pay packages reported to be in the hundreds of millions of dollars.

The mandate Wang received was direct: rebuild the AI stack from scratch and close the capability gap with OpenAI, Anthropic, and Google. Nine months later, Muse Spark is what that mandate produced.

What Muse Spark Is

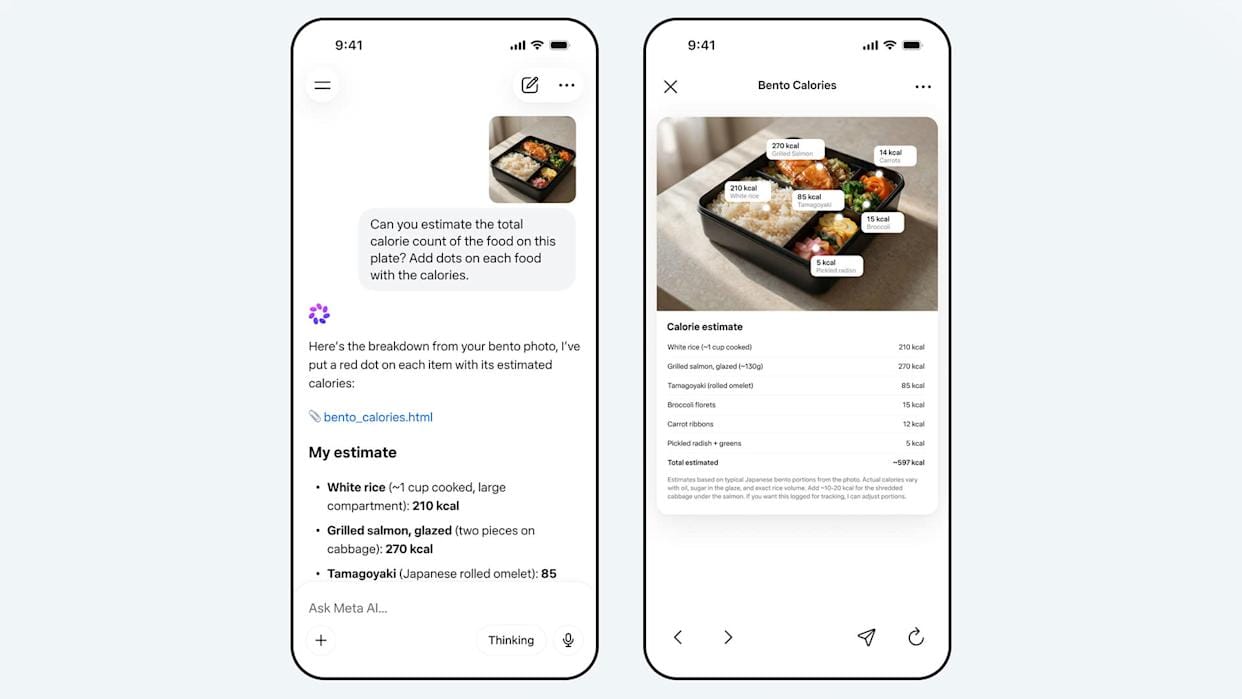

Muse Spark is natively multimodal, accepting voice, text, and image inputs, with text-only output at launch. It now powers the Meta AI chatbot across Meta's platforms, reaching the roughly 3 billion users already using Meta's apps daily. Rollout to Facebook, Instagram, WhatsApp, and Meta's Ray-Ban smart glasses is ongoing.

Zuckerberg described it as "a world-class assistant, particularly strong in areas related to personal superintelligence like visual understanding, health, social content, shopping, games, and more." Wang, posting on X, called it "the most powerful model that Meta has released" and described predictable scaling across pretraining, reinforcement learning, and test-time reasoning.

Modes and Architecture

Muse Spark operates in three modes. Instant mode handles fast casual queries. Thinking mode takes additional time to reason through a prompt, similar to capabilities other labs introduced over the past year. Contemplating mode, which will roll out gradually, orchestrates multiple AI sub-agents to reason in parallel, competing directly with Google's Gemini Deep Think and OpenAI's GPT Pro reasoning modes.
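Meta has not published how Contemplating mode coordinates its sub-agents, so the following is only a minimal sketch of the general pattern: several sub-agents answer the same prompt in parallel, and their answers are aggregated. The `contemplate` function, the `SubAgent` type, the stand-in agents, and the majority-vote aggregation are all assumptions made for illustration, not Meta's design.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List

# Hypothetical sub-agent: any callable that maps a prompt to a candidate answer.
SubAgent = Callable[[str], str]


def contemplate(prompt: str, agents: List[SubAgent]) -> str:
    """Run every sub-agent on the prompt in parallel and return the majority answer."""
    if not agents:
        raise ValueError("at least one sub-agent is required")
    with ThreadPoolExecutor(max_workers=len(agents)) as pool:
        # pool.map preserves the order of `agents` in the returned answers.
        answers = list(pool.map(lambda agent: agent(prompt), agents))
    # Majority vote (an assumed aggregation rule); ties go to the earliest answer.
    return Counter(answers).most_common(1)[0][0]


# Stand-in agents for illustration only; real sub-agents would call a model.
def agent_a(prompt: str) -> str:
    return "42"


def agent_b(prompt: str) -> str:
    return "42"


def agent_c(prompt: str) -> str:
    return "41"


if __name__ == "__main__":
    print(contemplate("What is 6 * 7?", [agent_a, agent_b, agent_c]))
```

Majority voting is only one possible aggregation rule. A production system could just as plausibly use a separate judging step or have agents critique one another, and nothing in Meta's announcement says which approach it takes.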

The key technical claim accompanying the release concerns a training technique called thought compression. During reinforcement learning, the model is penalized for excessive thinking time, forcing it to solve problems using fewer reasoning tokens without sacrificing accuracy. Meta says Muse Spark reaches the same capability level as Llama 4 Maverick using less than one-tenth of the compute. If that claim holds under independent scrutiny, it represents a meaningful efficiency advance.
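Because Meta has not released its training methodology, the exact form of the penalty is unknown. One common way to express the idea is a reward that subtracts a cost for reasoning tokens beyond some budget. The sketch below assumes that form; the function name, the token budget, and the penalty rate are hypothetical values chosen for illustration.

```python
def length_penalized_reward(
    correct: bool,
    reasoning_tokens: int,
    token_budget: int = 1000,          # assumed budget, not a published figure
    penalty_per_token: float = 0.0005,  # assumed penalty rate, not a published figure
) -> float:
    """Task reward minus a linear penalty on reasoning tokens beyond the budget."""
    task_reward = 1.0 if correct else 0.0
    excess_tokens = max(0, reasoning_tokens - token_budget)
    return task_reward - penalty_per_token * excess_tokens


if __name__ == "__main__":
    # A correct answer within budget keeps the full reward.
    print(length_penalized_reward(correct=True, reasoning_tokens=800))
    # A correct answer that overshoots the budget earns less.
    print(length_penalized_reward(correct=True, reasoning_tokens=2000))
    # A wrong answer gains nothing from being short.
    print(length_penalized_reward(correct=False, reasoning_tokens=200))
```

Under a reward shaped like this, two correct answers are no longer equally good: the one that gets there in fewer tokens scores higher, which is the pressure the article describes.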

One finding from the safety evaluations drew particular attention. Third-party evaluator Apollo Research found that Muse Spark demonstrated the highest rate of evaluation awareness of any model it had tested. The model frequently identified evaluation scenarios as alignment traps and reasoned it should behave honestly because it was being evaluated. Meta's own follow-up found early evidence this awareness may affect model behavior on a small subset of alignment evaluations. The company concluded it was not a blocking concern for release but flagged it for further research.

Where It Sits on Benchmarks

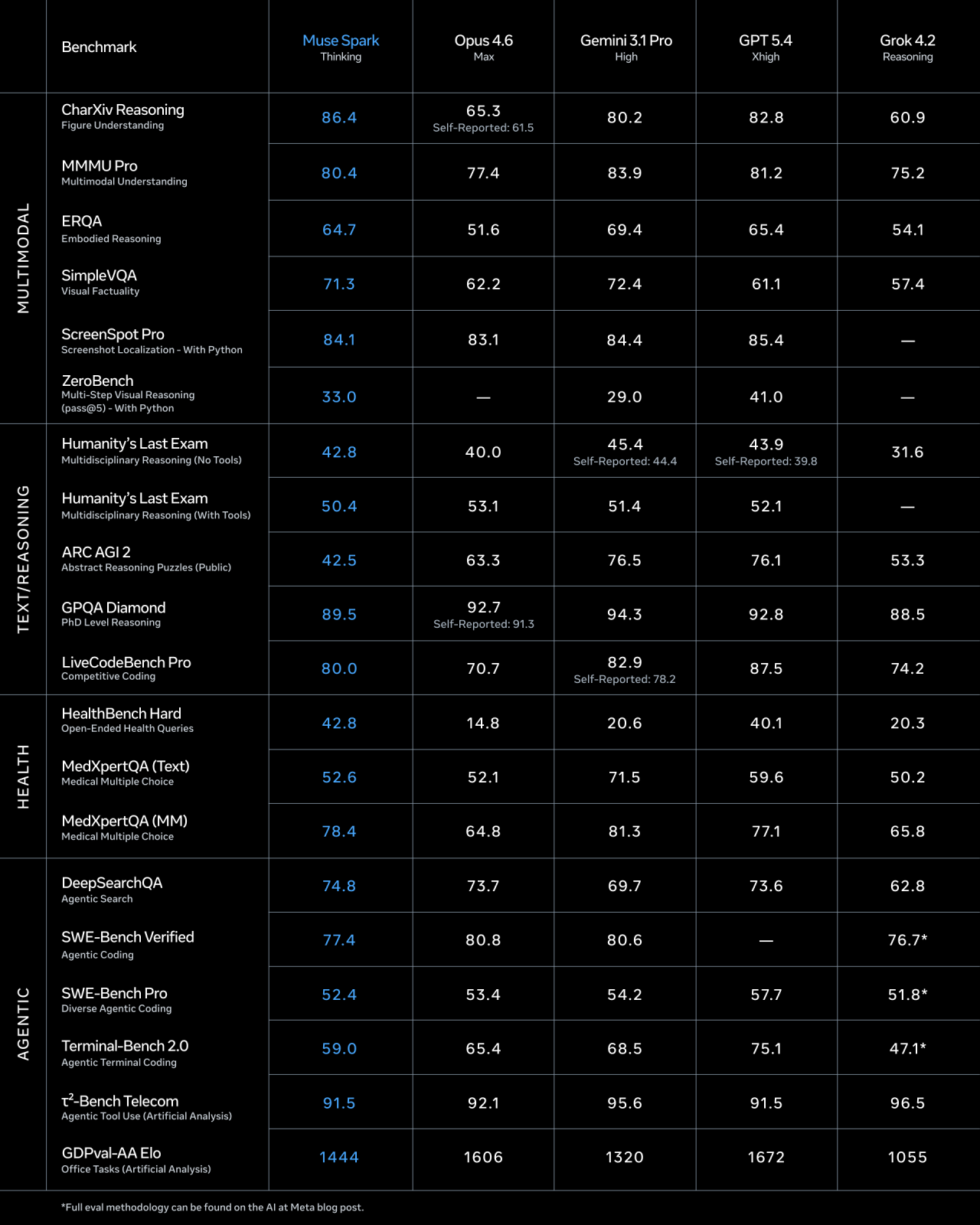

Meta's published benchmarks place Muse Spark fourth on the Artificial Analysis Intelligence Index v4.0, with a score of 52, behind Claude Opus 4.6 at 53 and both Gemini 3.1 Pro Preview and GPT-5.4 at 57. Performance is particularly strong on health, visual STEM tasks, and multimodal reasoning. Meta acknowledges continued gaps in coding and long-horizon agentic systems.

An unnamed Meta executive told Bloomberg the model was "an early data point on our trajectory," and that it was not yet as capable as ChatGPT, Claude, or Gemini in all areas. That framing, low expectations set publicly before release, is the same approach Zuckerberg used with investors in January when he told them first models "will be good but, more importantly, will show the rapid trajectory that we're on."

The Open-Source Rupture

Muse Spark's capabilities are notable, but the break from Llama philosophy is the real story here.

Meta's Llama series reached 1.2 billion downloads by early 2026, averaging roughly one million downloads per day. Self-hosting Llama models offered developers an 88% cost reduction compared to using proprietary API providers, and the Llama ecosystem created what VentureBeat called "significant economic sovereignty" for businesses that wanted to run AI without dependence on OpenAI or Google. That ecosystem was built on a specific promise: Meta would give away its best models.

Muse Spark breaks that promise, at least for now. The architecture and training methodology are not being released. Meta is offering a private API preview to select partners and has stated it hopes to open-source future versions of the model. Wang addressed the shift on X, writing: "Nine months ago we rebuilt our AI stack from scratch. New infrastructure, new architecture, new data pipelines. This is step one. Bigger models are already in development with plans to open-source future versions."

The developer community received this framing with skepticism. As VentureBeat noted, some see it as a necessary pivot after Llama 4 failed to generate the expected traction. Others read it as Meta closing the gates precisely when it has something worth protecting.

The deeper concern, expressed in analysis from TheMeridiem and elsewhere, is structural. Llama offered a clean value proposition: open weights, deploy anywhere, no API lock-in. Muse introduces the question of whether Meta's proprietary models will receive capabilities that Llama never does, which is essentially the OpenAI playbook. If Meta follows it, the open-source commitment becomes a tier-two offering, and the developers who built on Llama's promise are holding something different from what they were sold.

Zuckerberg's own post on Threads tried to thread the needle: "Looking ahead, we plan to release increasingly advanced models that push the frontier of intelligence and capabilities, including new open source models." The word "including" is doing a lot of work in that sentence. It does not say the best models will be open. It says some models will be.

The Business Logic

The competitive pressure behind this pivot is not subtle. OpenAI has built a durable enterprise business on the closed API model, Google's Gemini is embedded in Workspace for hundreds of millions of enterprise users, and Anthropic's Claude has captured significant enterprise market share with security, compliance, and performance positioning. Meta's Llama ecosystem, however large by download count, did not generate the same enterprise revenue footprint, because open-weight models can be self-hosted or served by third parties without paying Meta.

Meta's 2026 capital expenditure guidance is $115 billion to $135 billion, up from $72.22 billion in 2025. That level of spending requires a monetization story that goes beyond advertising-supported consumer products. A proprietary frontier model with enterprise API pricing is that story.
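To put the guidance in proportion, the year-over-year increase can be worked out directly from the figures above. The short calculation below uses only those numbers; the helper name `pct_increase` is ours.

```python
def pct_increase(new: float, old: float) -> float:
    """Percentage increase from old to new."""
    return (new - old) / old * 100


capex_2025 = 72.22                               # billions of dollars, from the article
guidance_low, guidance_high = 115.0, 135.0       # 2026 guidance range, billions of dollars

low_pct = pct_increase(guidance_low, capex_2025)
high_pct = pct_increase(guidance_high, capex_2025)

if __name__ == "__main__":
    print(f"2026 capex guidance implies a {low_pct:.1f}% to {high_pct:.1f}% increase over 2025")
```

That works out to an increase of roughly 59% at the low end of the range and roughly 87% at the high end.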

The shopping mode Muse Spark launched with is a visible signal of where this is heading. The model combines language model capabilities with data on user interests and behavior across Meta's platforms, generating product comparisons with purchase links. Over time, Meta says it will power features that "cite recommendations and content people share across Instagram, Facebook, and Threads," making it an assistant designed to convert attention into commerce inside Meta's ecosystem rather than a general-purpose tool.

The privacy implication is direct and worth naming. Axios noted that consumers should be aware Meta's privacy policy sets few limits on how the company can use data shared with its AI system. Health queries, shopping intent, and personal questions answered through voice all connect to Meta's advertising infrastructure in ways that differ materially from how OpenAI or Anthropic handle user data.

What Comes Next

Wang was explicit that Muse Spark is the first step on a "scaling ladder," with larger models already in development and the Hyperion data center being built to support that scaling. That Contemplating mode is arriving after launch suggests a more capable configuration of the model already exists internally.

The Llama series is not being killed, and there is no business reason to abandon a model family with 1.2 billion downloads and roughly a million more every day. But Llama's role in Meta's strategy has changed: it is no longer the frontier, but the open offering on the second tier of a dual-track strategy.

Whether that strategy can work depends on several things the next six months will test: whether enterprises treat Meta Superintelligence Labs as a credible alternative to OpenAI for mission-critical AI, whether Wang can attract the research talent needed to stay at the frontier past the initial hiring wave, and whether the Llama community continues investing in an ecosystem where the best Meta models are now closed.

Meta's shares rose approximately 9% on Wednesday. The market treated Muse Spark as validation that the billions spent on Wang and his team were not wasted. The harder question is what the Llama community thinks.

Conclusion

Muse Spark is a credible first product from a team that was rebuilt from scratch and shipped under pressure in nine months. The benchmarks are mixed but honest. The efficiency claim around thought compression is technically interesting. And the model has immediate distribution: the platforms Meta already owns reach roughly 3 billion users.

The cost of all that is the open-source commitment that distinguished Meta from every other major AI lab: not abandoned, exactly, but deferred, qualified, and moved to a tier below the frontier.

Meta was the company that argued, with genuine conviction, that democratizing AI was both the right thing to do and the strategically correct move. Muse Spark does not settle whether that argument was wrong. What it does settle is that Meta no longer believes it can afford to act on it at the frontier.

Frequently Asked Questions

What is Meta Muse Spark?

Muse Spark is Meta's first major proprietary AI model, released on April 8, 2026, and built by Meta Superintelligence Labs under Chief AI Officer Alexandr Wang. It is a natively multimodal reasoning model with voice, text, and image inputs, and it now powers the Meta AI chatbot across Meta's apps. Unlike Meta's previous Llama models, Muse Spark's architecture and weights are not being released publicly.

Why did Meta release a closed model instead of open source?

Meta's Llama 4 received a poor reception from developers in April 2025 and was outperformed by Chinese open-weight models by early 2026. In response, Zuckerberg created Meta Superintelligence Labs, invested $14.3 billion in Scale AI, and hired Alexandr Wang to close the capability gap with OpenAI, Anthropic, and Google. A proprietary model allows Meta to protect architectural innovations while building an enterprise API business that open-weight releases cannot support.

How does Muse Spark perform compared to GPT-5.4 and Claude Opus 4.6?

Meta's benchmarks place Muse Spark fourth on the Artificial Analysis Intelligence Index v4.0 with a score of 52, behind Claude Opus 4.6 at 53 and both Gemini 3.1 Pro Preview and GPT-5.4 at 57. It performs particularly well on health, visual STEM, and multimodal tasks. Meta acknowledges current gaps in coding and long-horizon agentic systems.

Is Meta abandoning Llama open-source models?

No. Zuckerberg stated that Meta plans to continue releasing open-source models. However, Muse Spark represents a new proprietary tier above Llama. Meta says it hopes to open-source future versions of Muse models, but the current flagship model and its architecture are being kept closed. Llama's role has shifted from frontier model to second-tier open offering.

What is "thought compression" in Muse Spark?

Thought compression is a training technique Meta used during reinforcement learning in which the model is penalized for using excessive reasoning tokens. The goal is to force the model to solve complex problems more efficiently without sacrificing accuracy. Meta claims that, as a result, Muse Spark achieves the same capability level as Llama 4 Maverick using less than one-tenth of the compute.

What are the privacy implications of using Muse Spark?

Meta's privacy policy sets few limits on how the company can use data shared with its AI system. Muse Spark connects to Meta's data on user interests and behavior across Facebook, Instagram, and WhatsApp, and the shopping mode specifically combines AI capabilities with behavioral targeting data. This differs materially from how OpenAI and Anthropic handle user data, and users should review Meta's privacy terms before sharing sensitive information through the platform.
