On Friday, April 24, a Hangzhou startup with about 200 employees released an AI model that scores 80.6 percent on SWE-bench Verified. Anthropic's Claude Opus 4.7 scores 80.8 percent. The Anthropic model costs $25 per million output tokens. The Hangzhou model costs $3.48.

That is the entire story of DeepSeek V4, and it is not really a story about a model. It is a story about what happens when the price of a thing collapses by 86 percent and the buyers notice.

OpenAI is currently raising at an $852 billion valuation. Anthropic closed its last round at $800 billion. Both numbers depend on a single assumption: that frontier AI is rare, expensive to produce, and capturable through API margins. DeepSeek V4 just made all three claims look softer than they did on Thursday.

The Numbers

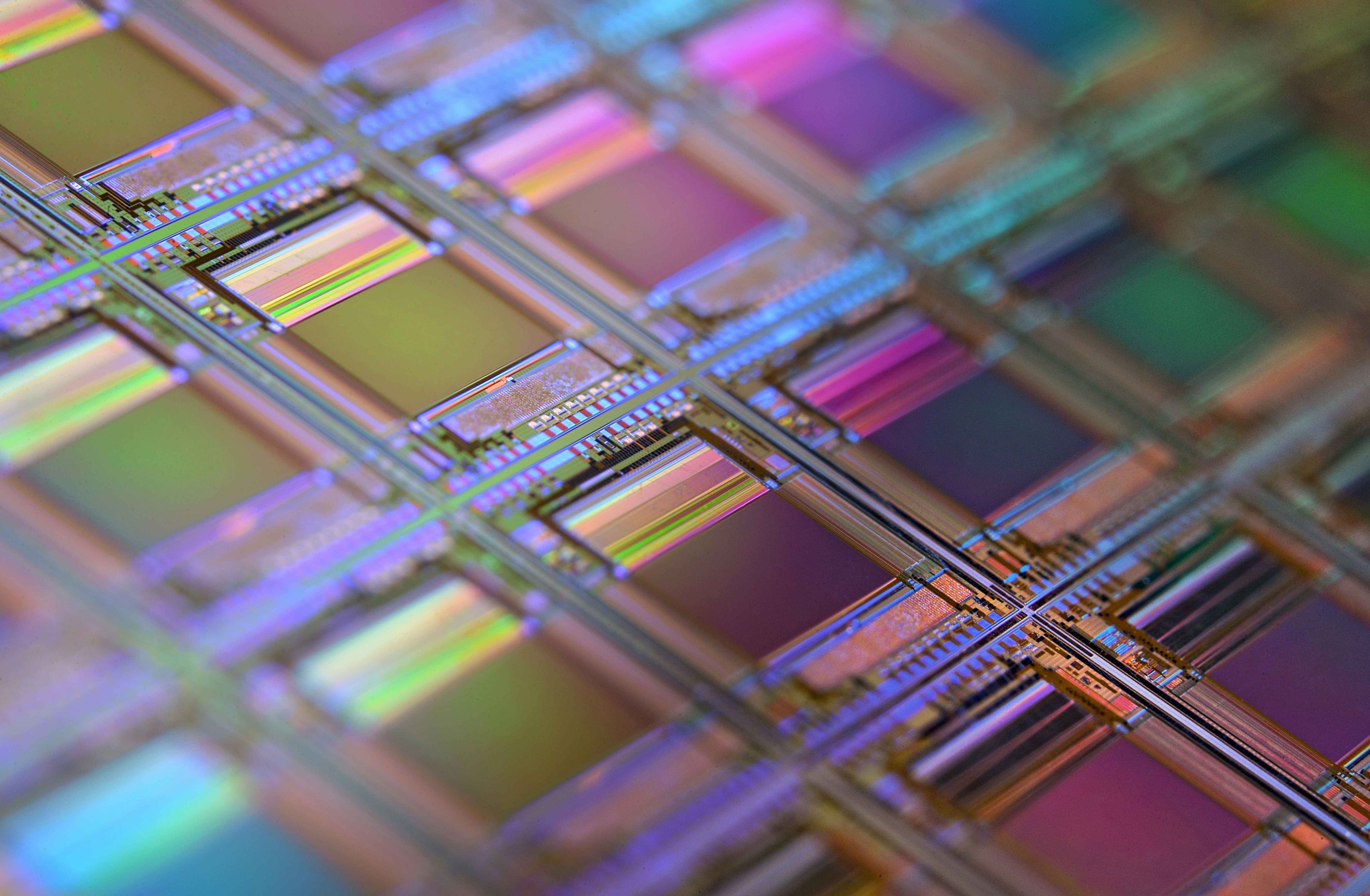

DeepSeek released two models in preview on Friday. V4-Pro is a 1.6-trillion-parameter mixture-of-experts model with 49 billion active parameters per token. V4-Flash is the smaller sibling at 284 billion total and 13 billion active. Both ship with a 1-million-token context window, both are open-weight under the MIT license, and both can be downloaded and run locally by anyone with the hardware.
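
To put "anyone with the hardware" in rough numbers, here is a back-of-envelope sketch. It uses only the parameter counts above. The storage precisions (BF16, FP8, 4-bit) are illustrative assumptions, not DeepSeek's published serving format, and the estimate counts weights alone, ignoring the KV cache that a 1-million-token context would add on top.

```python
"""Rough weight-memory estimate for the two DeepSeek V4 checkpoints.

Assumptions (not from DeepSeek): every parameter is stored at the same
precision, and only the weights are counted (no KV cache or activations).
"""

# Bytes per parameter at a few common storage precisions (illustrative choices).
BYTES_PER_PARAM = {"bf16": 2.0, "fp8": 1.0, "int4": 0.5}

# Parameter counts quoted in the article: (total, active per token).
MODELS = {
    "V4-Pro": (1.6e12, 49e9),
    "V4-Flash": (284e9, 13e9),
}


def weight_memory_gb(total_params: float, precision: str) -> float:
    """Memory needed just to hold the weights, in gigabytes (10**9 bytes)."""
    return total_params * BYTES_PER_PARAM[precision] / 1e9


def active_fraction(total_params: float, active_params: float) -> float:
    """Share of all parameters a mixture-of-experts model uses for each token."""
    return active_params / total_params


if __name__ == "__main__":
    for name, (total, active) in MODELS.items():
        sizes = ", ".join(
            f"{p}: {weight_memory_gb(total, p):,.0f} GB" for p in BYTES_PER_PARAM
        )
        print(f"{name}: {active_fraction(total, active):.1%} of weights active per token; {sizes}")
```

Even at 4-bit, V4-Pro's weights alone come to about 800 GB, which means a multi-GPU server; V4-Flash at FP8 is roughly 284 GB. The mixture-of-experts design cuts per-token compute (only about 3 percent of V4-Pro's weights fire for each token), but every expert still has to sit in memory.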

The pricing is the part that should make American AI executives look at their cap tables. V4-Flash sells for $0.14 per million input tokens and $0.28 per million output tokens. V4-Pro sells for $1.74 input and $3.48 output. According to VentureBeat, that puts V4-Pro at roughly one-seventh the cost of GPT-5.5 and one-sixth the cost of Claude Opus 4.7.

On benchmarks, V4-Pro lands in the gap between "good enough" and "as good as." It scores 52 on the Artificial Analysis Intelligence Index, up from 42 for V3.2, making it the second-strongest open-weights reasoning model in the world. On BrowseComp it hits 83.4 percent, behind GPT-5.5 at 84.4 and ahead of Claude Opus 4.7 at 79.3. On GPQA Diamond it scores 90.1, behind GPT-5.5 at 93.6 and Claude Opus 4.7 at 94.2.

That is a 3- to 4-point gap on academic reasoning and a coin flip on real-world coding, where 0.2 points separate V4-Pro from Claude Opus 4.7 on SWE-bench Verified. For an enterprise picking a model to wire into a customer support agent or an internal coding assistant, the gap is much smaller than the price difference. The math is brutal. A team running 10 billion output tokens a month pays $250,000 with Anthropic and $34,800 with DeepSeek. Same workload, same accuracy on most tasks, $215,200 saved every month.
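
The trade-off in that paragraph, points given up against dollars saved, can be reproduced in a few lines. The scores and per-million-token output prices are the ones quoted above, and the 10-billion-token monthly volume is the article's own example. The sketch assumes output tokens dominate the bill and ignores input-token charges.

```python
# Scores (percent) and output prices ($ per million tokens) quoted in the article.
SCORES = {
    "SWE-bench Verified": {"V4-Pro": 80.6, "Claude Opus 4.7": 80.8},
    "GPQA Diamond": {"V4-Pro": 90.1, "GPT-5.5": 93.6, "Claude Opus 4.7": 94.2},
}
OUTPUT_PRICE_PER_M = {"Claude Opus 4.7": 25.00, "V4-Pro": 3.48}


def score_gaps(scores: dict, baseline: str = "V4-Pro") -> dict:
    """Points each rival leads the baseline by, per benchmark (negative means baseline ahead)."""
    return {
        bench: {m: round(s - row[baseline], 1) for m, s in row.items() if m != baseline}
        for bench, row in scores.items()
    }


def monthly_output_cost(tokens_per_month: float, price_per_million: float) -> float:
    """Dollar cost of a month's output tokens at a flat per-million-token price."""
    return tokens_per_month / 1e6 * price_per_million


if __name__ == "__main__":
    print(score_gaps(SCORES))
    volume = 10e9  # 10 billion output tokens per month, the article's example
    claude = monthly_output_cost(volume, OUTPUT_PRICE_PER_M["Claude Opus 4.7"])
    deepseek = monthly_output_cost(volume, OUTPUT_PRICE_PER_M["V4-Pro"])
    print(f"Claude: ${claude:,.0f}  DeepSeek: ${deepseek:,.0f}  saved: ${claude - deepseek:,.0f}")
```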

The hardware story is the second hammer. Fortune reported that Huawei's newest Ascend 950PR and 950DT chips received "day zero" support for V4. Huawei's chips powered parts of the training run, and the model now runs natively on Ascend supernode clusters. DeepSeek deliberately gave Chinese silicon early optimization access. Nvidia got nothing.

Pressure Points

The premium API is now a feature, not a moat

For two years the bull case for OpenAI and Anthropic has been the same: they sit on a frontier capability that takes a few billion dollars and a few thousand H200s to reproduce. As long as that gap holds, they can charge $25 per million output tokens and pour the gross margin into the next training run. That gap was already closing. DeepSeek V4 closed it on the dimension that matters to most paying customers.

Look at where the closed labs still win. GPT-5.5 leads on multi-step browsing. Claude leads on adversarial reasoning and instruction following at long horizons. Gemini 3.1 Pro leads on world knowledge. These are real advantages, but they are advantages on the hardest 5 percent of tasks. The other 95 percent is coding, summarization, classification, extraction, RAG question answering, internal search. On those, V4-Pro is within a point or two and costs 14 percent of the price.
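
The "14 percent" figure follows directly from the output prices quoted earlier, and the same two numbers show what the closed labs' edge on the hardest 5 percent is worth if a team keeps paying for it. The sketch below assumes a hypothetical router that can spot the hardest tasks up front and send only those to Claude Opus 4.7, and that both models emit the same number of tokens per task; neither assumption comes from the article.

```python
OPUS_OUTPUT = 25.00   # $ per million output tokens, Claude Opus 4.7
V4_PRO_OUTPUT = 3.48  # $ per million output tokens, DeepSeek V4-Pro


def price_share(cheap: float, premium: float) -> float:
    """Cheap model's price as a fraction of the premium model's price."""
    return cheap / premium


def blended_price(hard_share: float, premium: float, cheap: float) -> float:
    """Average $/M output tokens when `hard_share` of traffic goes to the premium model.

    Hypothetical routing: assumes hard tasks are identifiable in advance and
    that token counts per task are equal across the two models.
    """
    if not 0.0 <= hard_share <= 1.0:
        raise ValueError("hard_share must be between 0 and 1")
    return hard_share * premium + (1.0 - hard_share) * cheap


if __name__ == "__main__":
    share = price_share(V4_PRO_OUTPUT, OPUS_OUTPUT)
    print(f"V4-Pro costs {share:.1%} of Opus, a {1 - share:.1%} cut")
    mixed = blended_price(0.05, OPUS_OUTPUT, V4_PRO_OUTPUT)
    print(f"5% routed to Opus: ${mixed:.3f}/M, {mixed / OPUS_OUTPUT:.1%} of an all-Opus bill")
```

Under those assumptions, keeping the closed model for the hardest 5 percent still cuts the bill by roughly four-fifths. That is what it looks like when premium capability becomes a feature rather than a moat.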

An API business charges premium prices because customers cannot get the same thing cheaper. When they can, the API business becomes a commodity business with a wrapper. OpenAI and Anthropic are about to find out which parts of their revenue are wrapper and which are real product.

Open weights changed who carries the cost

The MIT license is not a footnote. It means an enterprise can download V4-Pro, run it on its own infrastructure, fine-tune it on private data, and never send a token to Hangzhou. Cloudflare can serve it. Together AI can serve it. AWS Bedrock will almost certainly serve it within weeks. Every company that was paying OpenAI for inference now has a credible alternative it fully controls.

That structurally changes who eats the cost of compute. When you call GPT-5.5, OpenAI rents the H200, runs the inference, pays Microsoft Azure for the slot, and charges you a margin on top. When you self-host V4-Pro, you rent the GPU directly from Lambda or CoreWeave and skip the model maker. The model maker captures zero. Simon Willison's analysis framed it bluntly: "almost on the frontier, a fraction of the price."
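
When you self-host, the whole bill is GPU rent, so the per-token price depends only on what you pay for the hardware and how many tokens it pushes through. Here is a minimal sketch of that arithmetic. The hourly rate, GPU count, throughput, and utilization in the example are placeholders to swap for real quotes and measurements; they are not figures for Lambda, CoreWeave, or V4-Pro.

```python
def self_hosted_cost_per_million(
    gpu_hourly_usd: float, num_gpus: int, tokens_per_second: float, utilization: float = 1.0
) -> float:
    """$ per million generated tokens when the only cost is rented GPU time."""
    if tokens_per_second <= 0 or not 0.0 < utilization <= 1.0:
        raise ValueError("throughput must be positive and utilization in (0, 1]")
    hourly_cost = gpu_hourly_usd * num_gpus
    millions_of_tokens_per_hour = tokens_per_second * 3600 * utilization / 1e6
    return hourly_cost / millions_of_tokens_per_hour


def break_even_tokens_per_second(hourly_cost_usd: float, api_price_per_million: float) -> float:
    """Sustained throughput at which self-hosting matches a per-million-token API price."""
    return hourly_cost_usd / api_price_per_million * 1e6 / 3600


if __name__ == "__main__":
    # Placeholder inputs, not measured or quoted figures.
    cost = self_hosted_cost_per_million(
        gpu_hourly_usd=2.50, num_gpus=16, tokens_per_second=2_000, utilization=0.5
    )
    print(f"self-hosted: ${cost:.2f} per million tokens")
    needed = break_even_tokens_per_second(hourly_cost_usd=2.50 * 16, api_price_per_million=3.48)
    print(f"throughput needed to match $3.48/M: {needed:,.0f} tokens/s")
```

Whichever side of the break-even line a deployment lands on, the money in this formula goes to whoever rents out the GPUs. No term in it pays the model maker.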

The first DeepSeek moment, in January 2025, wiped roughly $600 billion off Nvidia in a single day on the theory that AI compute demand was about to collapse. That theory was wrong. Demand kept rising. But the second DeepSeek moment is different. It is not about demand. It is about who collects the rent on it.

The chip story is the real geopolitical signal

U.S. export controls were designed to do one thing: prevent China from training frontier models on American hardware. Two years later, China has trained a near-frontier model on Chinese hardware and released it for free. Al Jazeera reported that Huawei's Ascend chips ran parts of V4's training and now offer full support across Ascend supernode clusters.

This is the outcome the export control architects were trying to prevent, and it has arrived faster than even the pessimistic forecasts. The mechanism that produced it is the one economists predicted. Cut off the supply of advanced chips, and Chinese labs are forced to invent efficiency techniques that get more out of less. Then those techniques run on domestic hardware that, freed from the constraint of competing with Nvidia head-to-head, has time to mature.

The political response will be loud. Expect new bills in Congress within weeks demanding restrictions on running open Chinese models on U.S. cloud infrastructure, demanding licensing requirements for companies that integrate DeepSeek, demanding the Treasury investigate any U.S. firm that contributes to DeepSeek's open-source repositories. Some of those bills may pass. None of them will reverse what already exists on Hugging Face.

What Happens Next

Most likely scenario. Within 30 days, OpenAI cuts API prices on GPT-5 and GPT-5.5 by 30 to 50 percent. Anthropic follows within 60 days on Claude Sonnet, holds the line on Opus, and emphasizes safety, government certifications, and enterprise compliance as the differentiators. Both labs accelerate work on a smaller, cheaper tier explicitly designed to undercut DeepSeek on the long tail of tasks where its accuracy is good enough. Revenue growth slows. Gross margins compress. The next OpenAI funding round, if it closes at $852 billion, includes structural protections that look more like debt than equity.

Bull case for the closed labs. The next Claude or GPT release opens a real gap on agentic tasks, the kind where a model has to use tools, browse for hours, and recover from its own mistakes. If GPT-6 or Claude Opus 5 lands a 15-point lead on long-horizon agent benchmarks, premium pricing gets a new lease on life for 18 months. The bet is that frontier capability resets the moat faster than DeepSeek catches up.

Bear case. DeepSeek releases V4.5 in three months matching GPT-5.5 across the board. A second Chinese lab, Moonshot or Zhipu, releases something comparable. The Trump administration responds with a ban on running Chinese open-source models on U.S. cloud, which gets challenged in court and creates 18 months of regulatory uncertainty for every enterprise. OpenAI's IPO gets pushed to 2027. Anthropic's $800 billion valuation gets repriced in the secondary market at $400 billion. Nvidia takes a second hit, this time bigger than the first, as customers actually do the math on whether they need H200s for inference at this price point.

What To Watch

OpenAI API pricing page. If GPT-5 drops to under $5 per million output tokens within 30 days, that is the closed labs admitting the floor moved.

Hugging Face download counts on V4-Pro. If it crosses 500,000 weekly downloads inside a month, that is enterprise adoption, not just hobbyists testing it.

AWS Bedrock and Azure model catalogs. The day either hyperscaler lists V4-Pro for self-serve deployment, the moat conversation is over.

Anthropic's next funding round timeline. The company was reportedly targeting another raise this summer. Any delay past Q3 is the market telling Anthropic's CFO, the counterpart to OpenAI's Sarah Friar, that the $800 billion mark needs new evidence.

Congressional response. A bill restricting U.S. cloud hosting of open Chinese models would be a tell. It would mean the incumbent labs' lobby had panicked enough to ask for political help. Track Senator Warner, Senator Cotton, and the House Select Committee on the CCP.

My Opinion

The American AI industry has been operating on a single shared assumption since GPT-4 shipped: that the cost of building a frontier model is a moat, that the moat translates into pricing power, and that pricing power justifies hundred-billion-dollar valuations. DeepSeek V4 just demonstrated that the chain breaks at the moat. The cost is still high. The moat does not exist.

I do not think this kills OpenAI or Anthropic. Both have real customers, real revenue, and real expertise that is hard to replicate. What it kills is the idea that the next $100 billion of value in AI accrues to the model layer. It does not. It accrues to whoever sits closest to the customer, with the best data and the cheapest serving infrastructure. That is hyperscalers, that is application builders, and increasingly that is enterprises themselves. The model is becoming the database: critical, undifferentiated, and never the place where the margin lives.

The American policy response will be the wrong one. Congress will try to ban or restrict DeepSeek on U.S. infrastructure. That will fail technically because the weights are already mirrored on dozens of servers worldwide, and it will fail strategically because it concedes the argument that American models cannot compete on merit. The right response is brutal honesty about what was just lost and an immediate pivot toward the parts of the stack where American companies still have a real advantage: enterprise integration, safety guarantees that governments will pay for, and the very specific capabilities at the long-horizon agentic frontier where the closed labs still lead. Pretending the moat exists does not bring it back.